Data Mining: The LDA Topic Model Explained, a Hands-On Python Walkthrough

LDA Topic Modeling in Python: Hands-On

- 1. Reading the Text Data

- 2. Text Preprocessing

- 3. Word Segmentation

- 4. Text Vectorization

- 5. LDA Topic Model

- 5.1 Building the Model

- 5.2 Top Words per Topic

- 6. Assigning Topics with LDA

- 7. Visualizing the Model

- 8. Possible Improvements

1. Reading the Text Data

import pandas as pd

import warnings

warnings.filterwarnings("ignore")

data = pd.read_table('data.txt', sep=',')

data.head()
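As a quick check of what this step produces, here is a minimal sketch that parses a small in-memory sample instead of data.txt. The column name `content` and the sample rows are assumptions made for illustration; the real file's layout is not shown in this post.

```python
import io

import pandas as pd

# Hypothetical stand-in for data.txt: a header line, then one document per line.
sample = io.StringIO("content\n今天天气不错\n今天天气不错\n股市大涨\n")

df = pd.read_table(sample, sep=",")  # same call as above, on in-memory text
print(type(df).__name__, df.shape)   # read_table returns a DataFrame

# The later steps apply string functions element by element,
# so the text column is picked out as a Series.
texts = df["content"]
print(texts.tolist())
```

The point to take away is that the result is a DataFrame even when the file has a single column, so a text column has to be selected before string functions are applied to each document.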

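2. Text Preprocessing

The call re.findall('[\u4e00-\u9fa5]+', x, re.S) returns every run of consecutive Chinese characters (code points U+4E00 to U+9FA5) in the string x, which discards digits, Latin letters, and punctuation. The re.S flag has no effect on this pattern because the pattern contains no dot. For more on its usage, see the detailed explanation of this call.

import re

# Assumption: the text sits in the first column; select it so that data is a Series of strings

data = data.iloc[:, 0]

# Drop duplicates and missing values

data = data.drop_duplicates()

data = data.dropna()

# Remove non-Chinese characters

data = data.apply(lambda x: re.findall('[\u4e00-\u9fa5]+', x, re.S))

data = data.apply(lambda x: ' '.join(x))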

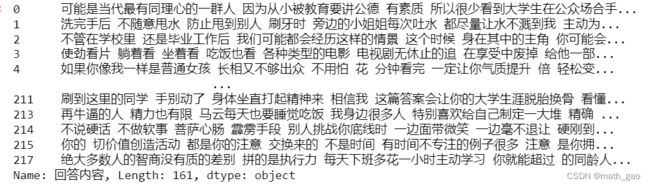

data
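To make the effect of the character filter concrete, the following minimal sketch applies the same pattern to a single made-up string. The helper name `keep_chinese` and the sample text are illustrative only.

```python
import re

# One or more consecutive characters in the range U+4E00 to U+9FA5.
CHINESE_RUN = re.compile(r"[\u4e00-\u9fa5]+")


def keep_chinese(text):
    """Return only the Chinese runs of `text`, joined by single spaces."""
    return " ".join(CHINESE_RUN.findall(text))


raw = "今天天气不错,very good! 123明天见"
cleaned = keep_chinese(raw)
print(cleaned)  # 今天天气不错 明天见
```

Each run of Chinese characters survives as one space-separated chunk, and a string with no Chinese characters collapses to an empty string, which is worth keeping in mind when counting documents later.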

3. Word Segmentation

import jieba

# Segment each document into words

data_cut = data.apply(lambda x:jieba.lcut(x))

data_cut = data_cut.apply(lambda x:' '.join(x))

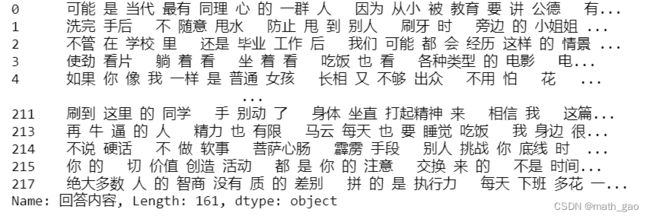

data_cut

4. Text Vectorization

from sklearn.feature_extraction.text import TfidfVectorizer

tf_idf_vectorizer = TfidfVectorizer()

tf_idf = tf_idf_vectorizer.fit_transform(data_cut)
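One detail worth checking with Chinese text: the default token pattern of TfidfVectorizer only keeps tokens of two or more characters, so single-character words are silently dropped. The sketch below shows this on a made-up two-document corpus and one possible way to keep them; whether single-character words help the topic model is a judgment call for your own data.

```python
from sklearn.feature_extraction.text import TfidfVectorizer

# Toy documents, already segmented and space-joined like data_cut.
docs = ["我 喜欢 机器 学习", "我 喜欢 看 电影"]

default_vec = TfidfVectorizer()
default_vec.fit(docs)
print(sorted(default_vec.vocabulary_))   # single-character tokens are missing

# A token pattern that also accepts one-character tokens.
keep_all_vec = TfidfVectorizer(token_pattern=r"(?u)\b\w+\b")
keep_all_vec.fit(docs)
print(sorted(keep_all_vec.vocabulary_))  # single-character tokens are kept
```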

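5. LDA Topic Model

5.1 Building the Model

For details on using the LatentDirichletAllocation topic model, see the scikit-learn documentation for that class.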

from sklearn.decomposition import LatentDirichletAllocation

n_topics = 5  # examine 5 topics

lda = LatentDirichletAllocation(

    n_components=n_topics, max_iter=50,

    learning_method='online',

    learning_offset=50.,

    random_state=0)

lda.fit(tf_idf)

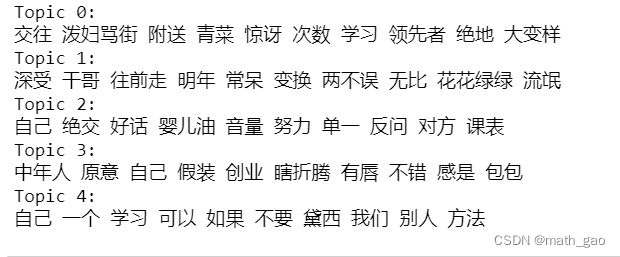
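To see what a fitted model exposes, here is a small sketch on a toy corpus (the four documents are invented for illustration and stand in for data_cut). It checks the shape of components_ and shows that each row can be normalized into a word distribution for one topic.

```python
import numpy as np
from sklearn.decomposition import LatentDirichletAllocation
from sklearn.feature_extraction.text import TfidfVectorizer

# Toy corpus, already segmented and space-joined.
docs = [
    "股市 上涨 投资 基金",
    "基金 投资 收益 股市",
    "球队 比赛 冠军 球迷",
    "球迷 比赛 进球 球队",
]
X = TfidfVectorizer().fit_transform(docs)

model = LatentDirichletAllocation(
    n_components=2, max_iter=20,
    learning_method="online", learning_offset=50.0,
    random_state=0)
model.fit(X)

# One row per topic, one column per vocabulary term.
print(model.components_.shape)

# components_ holds unnormalized topic-word weights;
# dividing each row by its sum gives a probability distribution over words.
topic_word = model.components_ / model.components_.sum(axis=1, keepdims=True)
print(topic_word.sum(axis=1))

# transform() returns one topic distribution per document.
doc_topic = model.transform(X)
print(doc_topic.shape)
```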

5.2 Top Words per Topic

n_top_words = 10  # number of top words to show for each topic

# All terms in the fitted vocabulary (get_feature_names() was removed in scikit-learn 1.2)

tf_idf_feature_names = tf_idf_vectorizer.get_feature_names_out()

top_words = []

for idx, topic in enumerate(lda.components_):

    print(f'Topic {idx}:')

    topic_words = ' '.join([tf_idf_feature_names[i] for i in topic.argsort()[:-n_top_words-1:-1]])

    top_words.append(topic_words)

    print(topic_words)

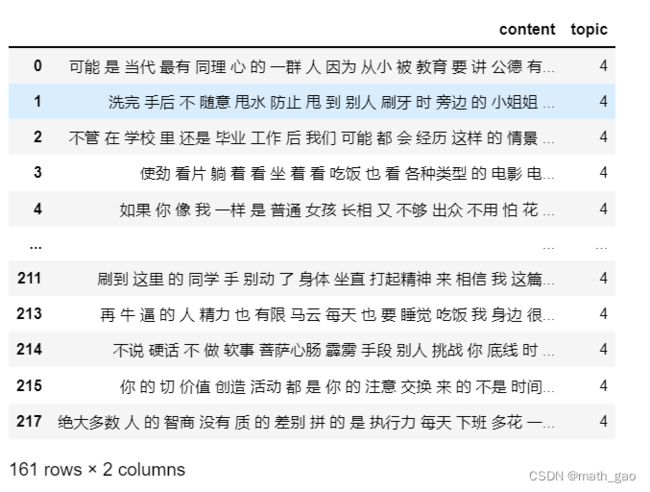
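The slice topic.argsort()[:-n_top_words-1:-1] is compact but easy to misread. The sketch below walks through it on a made-up weight vector; the numbers are arbitrary and only serve to show the indexing.

```python
import numpy as np

# Hypothetical weights of 5 vocabulary terms within one topic.
weights = np.array([0.1, 0.5, 0.3, 0.9, 0.2])
n_top = 3

order = weights.argsort()    # indices sorted from smallest to largest weight
top = order[:-n_top - 1:-1]  # walk backwards from the end, taking n_top indices
print(order.tolist())        # [0, 4, 2, 1, 3]
print(top.tolist())          # [3, 1, 2]

# An equivalent spelling that some readers find clearer.
same = order[::-1][:n_top]
print(same.tolist())         # [3, 1, 2]
```

Both forms return the indices of the n_top largest weights, largest first, which are then looked up in the feature-name array.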

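6. Assigning Topics with LDA

import numpy as np

# Shape (n_documents, n_topics), here (161, 5): each document's probability under each topic

topics = lda.transform(tf_idf)

topic = []

for tcs in topics:

    topic.append(tcs.argsort()[-1])  # index of the most probable topic for this document

data_final = pd.DataFrame()

data_final['content'] = data_cut

data_final['topic'] = topic

data_final  # documents and their assigned topics as a DataFrame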
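Picking only the most probable topic hides how confident that assignment is. As one possible refinement, the sketch below also keeps the winning probability and flags weak assignments. The matrix values and the 0.5 threshold are invented for illustration, not recommended settings.

```python
import numpy as np

# Hypothetical document-topic matrix: 3 documents, 3 topics, each row sums to 1.
doc_topics = np.array([
    [0.10, 0.70, 0.20],
    [0.50, 0.30, 0.20],
    [0.34, 0.33, 0.33],
])

best_topic = doc_topics.argmax(axis=1)  # most probable topic per document
best_prob = doc_topics.max(axis=1)      # probability of that topic

threshold = 0.5  # illustrative cut-off only
for i, (t, p) in enumerate(zip(best_topic, best_prob)):
    flag = "" if p >= threshold else "  (ambiguous)"
    print(f"doc {i}: topic {t}, p={p:.2f}{flag}")
```

Storing the probability alongside the topic label makes it easy to review documents that sit between topics instead of treating every label as equally reliable.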

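7. Visualizing the Model

import os

import webbrowser

from pathlib import Path

import pyLDAvis

try:

    import pyLDAvis.lda_model as lda_vis  # newer pyLDAvis releases (3.4+)

except ImportError:

    import pyLDAvis.sklearn as lda_vis  # older pyLDAvis releases

html_data = lda_vis.prepare(lda, tf_idf, tf_idf_vectorizer)

html_path = 'document-lda-visualization.html'

pyLDAvis.save_html(html_data, html_path)

# Clear the terminal (cls on Windows, clear elsewhere)

os.system('cls' if os.name == 'nt' else 'clear')

# Open the saved HTML file in the default browser to view the visualization

webbrowser.open(Path(html_path).resolve().as_uri())

# Alternatively, serve it from a local web server; this call blocks until interrupted

pyLDAvis.show(html_data, local=False)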

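8. Possible Improvements

- Segmentation mode: jieba's full mode and search-engine mode are also worth trying.

- Text vectorization: option 1, stop-word removal plus term counts; option 2, TF-IDF.

- Tune the LDA model parameters.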
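The second and third suggestions can be sketched together: vectorize with raw counts after removing stop words, then compare a few topic counts by perplexity. The toy corpus, the stop-word list, and the candidate values of n_components below are all assumptions made for illustration. Perplexity is measured here on the training data, so treat it as a rough signal rather than a selection rule.

```python
import math

from sklearn.decomposition import LatentDirichletAllocation
from sklearn.feature_extraction.text import CountVectorizer

# Toy corpus, already segmented and space-joined like data_cut.
docs = [
    "股市 今天 大涨 的 投资者 乐观",
    "央行 降息 的 股市 反应 积极",
    "球队 昨晚 赢得 了 比赛 的 冠军",
    "球迷 庆祝 球队 夺冠 的 比赛",
]
stop_words = ["的", "了", "今天", "昨晚"]  # illustrative list, not a real stop-word file

# Count-based features; the token pattern keeps one-character tokens
# so that one-character stop words are matched and removed explicitly.
vectorizer = CountVectorizer(stop_words=stop_words, token_pattern=r"(?u)\b\w+\b")
counts = vectorizer.fit_transform(docs)

perplexity = {}
for k in (2, 3):
    model = LatentDirichletAllocation(
        n_components=k, learning_method="batch",
        max_iter=20, random_state=0)
    model.fit(counts)
    perplexity[k] = model.perplexity(counts)

for k, p in perplexity.items():
    print(f"n_components={k}: perplexity={p:.1f}")
```

On a real corpus it is usually more informative to compute perplexity on held-out documents and to read the top words of each candidate model before settling on a topic count.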