Install Scala

Run the spark-shell command to enter the interactive shell and check the Spark and Scala versions:

Download Scala 2.10.5

wget https://downloads.lightbend.com/scala/2.10.5/scala-2.10.5.tgz

Extract the archive

tar -xzvf scala-2.10.5.tgz

Create the installation directory and copy Scala into it

mkdir -p /usr/local/scala

cp -r scala-2.10.5 /usr/local/scala

Configure the environment

vim /etc/profile

Add the following lines

export SCALA_HOME=/usr/local/scala/scala-2.10.5
export PATH=$PATH:$JAVA_HOME/bin:$PHOENIX_PATH/bin:$M2_HOME/bin:$SCALA_HOME/bin

Apply the changes

source /etc/profile
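If you would rather not edit the profile by hand, the same two settings can be appended from a script. This is only a sketch: the helper append_once, the variable PROFILE_FILE, and the sample file name profile.sample are my own illustrative names, and the PATH line is reduced to the Scala entry. It writes to a sample file by default so nothing system-wide is touched until you point it at /etc/profile yourself.

```shell
# append_once FILE LINE: add LINE to FILE unless an identical line is already there.
append_once() {
  file=$1
  line=$2
  grep -qxF -- "$line" "$file" 2>/dev/null || printf '%s\n' "$line" >> "$file"
}

# Hypothetical target file; set PROFILE_FILE=/etc/profile (as root) to match the steps above.
profile=${PROFILE_FILE:-./profile.sample}

# Single quotes keep $PATH and $SCALA_HOME literal so they expand at login time.
append_once "$profile" 'export SCALA_HOME=/usr/local/scala/scala-2.10.5'
append_once "$profile" 'export PATH=$PATH:$SCALA_HOME/bin'
```

Running it twice leaves the file unchanged the second time, which avoids the duplicated export lines that tend to pile up after repeated manual edits.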

Verify that the installation succeeded
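One way to do this check from the command line is sketched below. The helper name check_tool is my own; it only confirms that the launcher resolves on PATH before asking it for its version, so a missing PATH entry shows up as a clear message instead of "command not found".

```shell
# check_tool NAME: report where NAME resolves on PATH, or fail if it does not.
check_tool() {
  if tool_path=$(command -v "$1" 2>/dev/null); then
    echo "$1 -> $tool_path"
  else
    echo "$1 not found on PATH" >&2
    return 1
  fi
}

# Only ask for the version if the launcher is actually reachable.
if check_tool scala; then
  scala -version
fi
```

With the profile settings above in effect, the reported path should sit under /usr/local/scala/scala-2.10.5/bin.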

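Install Maven

Reference: https://www.cnblogs.com/ratels/p/10874379.html

The only difference is that I use the default Maven Central repository and do not add a mirror of it. Central is slow but complete, whereas mirrors are fast but may be missing some artifacts.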

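Install HiBench

Get the source code

wget https://codeload.github.com/Intel-bigdata/HiBench/zip/master

Unzip the downloaded archive, enter the resulting HiBench-master directory, and run the following command to build

(Reference: https://github.com/Intel-bigdata/HiBench ; https://github.com/Intel-bigdata/HiBench/blob/master/docs/build-hibench.md)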

mvn -Phadoopbench -Psparkbench -Dspark=1.6 -Dscala=2.10 clean package

The build fails with:

Plugin org.apache.maven.plugins:maven-clean-plugin:2.5 or one of its dependencies could not be

The POM for org.apache.maven.plugins:maven-clean-plugin:jar:2.5 is invalid, transitive dependencies (if any) will not be available

Solution (reference: https://blog.csdn.net/expect521/article/details/75663221):

(1) Delete the folders under the plugin directory so that they are regenerated;

(2) Set Maven Central as the repository source;

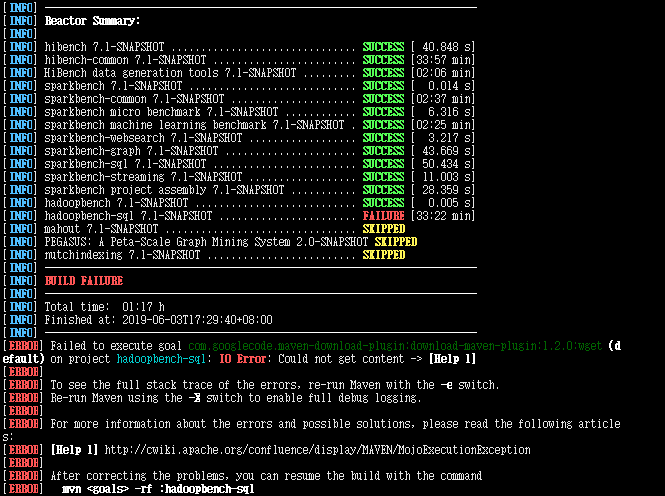

After compiling, the build returns the following output:

[INFO] ------------------------------------------------------------------------
[INFO] Reactor Summary:
[INFO]
[INFO] hibench 7.1-SNAPSHOT ............................... SUCCESS [ 40.848 s]
[INFO] hibench-common 7.1-SNAPSHOT ........................ SUCCESS [33:57 min]
[INFO] HiBench data generation tools 7.1-SNAPSHOT ......... SUCCESS [02:06 min]
[INFO] sparkbench 7.1-SNAPSHOT ............................ SUCCESS [ 0.014 s]
[INFO] sparkbench-common 7.1-SNAPSHOT ..................... SUCCESS [02:37 min]
[INFO] sparkbench micro benchmark 7.1-SNAPSHOT ............ SUCCESS [ 6.316 s]
[INFO] sparkbench machine learning benchmark 7.1-SNAPSHOT . SUCCESS [02:25 min]
[INFO] sparkbench-websearch 7.1-SNAPSHOT .................. SUCCESS [ 3.217 s]
[INFO] sparkbench-graph 7.1-SNAPSHOT ...................... SUCCESS [ 43.669 s]
[INFO] sparkbench-sql 7.1-SNAPSHOT ........................ SUCCESS [ 50.434 s]
[INFO] sparkbench-streaming 7.1-SNAPSHOT .................. SUCCESS [ 11.003 s]
[INFO] sparkbench project assembly 7.1-SNAPSHOT ........... SUCCESS [ 28.359 s]
[INFO] hadoopbench 7.1-SNAPSHOT ........................... SUCCESS [ 0.005 s]
[INFO] hadoopbench-sql 7.1-SNAPSHOT ....................... FAILURE [33:22 min]
[INFO] mahout 7.1-SNAPSHOT ................................ SKIPPED
[INFO] PEGASUS: A Peta-Scale Graph Mining System 2.0-SNAPSHOT SKIPPED
[INFO] nutchindexing 7.1-SNAPSHOT ......................... SKIPPED
[INFO] ------------------------------------------------------------------------
[INFO] BUILD FAILURE
[INFO] ------------------------------------------------------------------------
[INFO] Total time: 01:17 h
[INFO] Finished at: 2019-06-03T17:29:40+08:00
[INFO] ------------------------------------------------------------------------
[ERROR] Failed to execute goal com.googlecode.maven-download-plugin:download-maven-plugin:1.2.0:wget (default) on project hadoopbench-sql: IO Error: Could not get content -> [Help 1]
[ERROR]
[ERROR] To see the full stack trace of the errors, re-run Maven with the -e switch.
[ERROR] Re-run Maven using the -X switch to enable full debug logging.
[ERROR]
[ERROR] For more information about the errors and possible solutions, please read the following articles:
[ERROR] [Help 1] http://cwiki.apache.org/confluence/display/MAVEN/MojoExecutionException
[ERROR]
[ERROR] After correcting the problems, you can resume the build with the command
[ERROR]   mvn <goals> -rf :hadoopbench-sql

The cause of the error is:

[WARNING] Could not get content
org.apache.maven.wagon.TransferFailedException: Connect to archive.apache.org:80 [archive.apache.org/163.172.17.199] failed: Connection timed out (Connection timed out)
Caused by: java.net.ConnectException: Connection timed out (Connection timed out)
[WARNING] Retrying (1 more)
Downloading: http://archive.apache.org/dist/hive/hive-0.14.0//apache-hive-0.14.0-bin.tar.gz
java.net.SocketTimeoutException: Read timed out

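I tried downloading the file manually from http://archive.apache.org/dist/hive/hive-0.14.0//apache-hive-0.14.0-bin.tar.gz and that failed as well, which indicates the problem is with the download address (archive.apache.org timed out from this machine) rather than with the Maven build itself!

The modules already built are enough for my needs for now, so I am skipping this problem for the time being.

Create and edit the configuration file hadoop.conf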

cp conf/hadoop.conf.template conf/hadoop.conf

Then edit the configuration file: vim conf/hadoop.conf

Reference: https://github.com/Intel-bigdata/HiBench/blob/master/docs/run-hadoopbench.md ; https://www.cnblogs.com/PJQOOO/p/6899988.html ; https://blog.csdn.net/xiaoxiaojavacsdn/article/details/80235078

# Hadoop home
hibench.hadoop.home /opt/cloudera/parcels/CDH-5.14.2-1.cdh5.14.2.p0.3/lib/hadoop

# The path of hadoop executable
hibench.hadoop.executable /opt/cloudera/parcels/CDH-5.14.2-1.cdh5.14.2.p0.3/bin/hadoop

# Hadoop configuration directory
hibench.hadoop.configure.dir /etc/hadoop/conf.cloudera.yarn

# The root HDFS path to store HiBench data
hibench.hdfs.master hdfs://node1:8020

#hdfs://localhost:8020
#hdfs://localhost:9000

# Hadoop release provider. Supported value: apache, cdh5, hdp
hibench.hadoop.release cdh5

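Notes:

1. hibench.hadoop.home is the Hadoop installation path on your own machine.

2. When configuring hibench.hdfs.master I naively wrote hdfs://localhost:8020, and as a result the scripts kept failing later on.

First, localhost has to be replaced with your machine's IP address or hostname (node1 here). The port may be 8020 or 9000 depending on your setup. To find out, run vim /etc/hadoop/conf.cloudera.yarn/core-site.xml on the command line, where you will see: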

<?xml version="1.0" encoding="UTF-8"?>
<configuration>
  <property>
    <name>fs.defaultFS</name>
    <value>hdfs://node1:8020</value>
  </property>
</configuration>
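Instead of reading the file in an editor, the value can be pulled out with a few lines of shell. This is a rough sketch, not a real XML parser: the function name default_fs is my own, and it assumes the name and value elements each sit on a single line, as they do in the file above.

```shell
# default_fs FILE: print the <value> that follows <name>fs.defaultFS</name> in FILE.
default_fs() {
  awk '
    /<name>fs\.defaultFS<\/name>/ { found = 1 }
    found && /<value>/ {
      sub(/.*<value>/, "")      # drop everything up to the opening tag
      sub(/<\/value>.*/, "")    # drop the closing tag and anything after it
      print
      exit
    }
  ' "$1"
}

# Path taken from the configuration above; skipped quietly if the file is not readable.
conf=/etc/hadoop/conf.cloudera.yarn/core-site.xml
if [ -r "$conf" ]; then
  default_fs "$conf"
fi
```

On a node where the Hadoop client is installed, hdfs getconf -confKey fs.defaultFS should report the same value, and whatever it prints is what belongs in hibench.hdfs.master.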

The next step is to run the scripts from the HiBench directory, for example:

bin/workloads/micro/wordcount/prepare/prepare.sh

Create the directories in HDFS first

su hdfs
hadoop dfs -mkdir -p /HiBench/Wordcount
hadoop dfs -mkdir -p /HiBench/Wordcount/Input

With the directories in place, running the script reports an error:

rm: Permission denied: user=root, access=WRITE, inode="/HiBench/Wordcount":hdfs:supergroup:drwxr-xr-x

Cause:

root has no write permission on the directories created by the hdfs user!

bash-4.2$ hadoop fs -ls /
Found 5 items
drwxr-xr-x   - hdfs  supergroup          0 2019-06-04 16:07 /HiBench
drwxr-xr-x   - hdfs  supergroup          0 2019-04-03 16:57 /benchmarks
drwxr-xr-x   - hbase hbase               0 2019-05-16 14:20 /hbase
drwxrwxrwt   - hdfs  supergroup          0 2019-05-16 15:50 /tmp
drwxr-xr-x   - hdfs  supergroup          0 2019-04-28 21:04 /user

Solution:

(1, optional) Reference: https://blog.csdn.net/dingding_ting/article/details/84955325

hadoop dfsadmin -safemode leave

(2) Reference: https://blog.csdn.net/xianjie0318/article/details/75453758

hdfs dfs -chown -R root /HiBench

Ownership after the fix:

bash-4.2$ hadoop fs -ls /
Found 5 items
drwxr-xr-x   - root  supergroup          0 2019-06-04 16:07 /HiBench
drwxr-xr-x   - hdfs  supergroup          0 2019-04-03 16:57 /benchmarks
drwxr-xr-x   - hbase hbase               0 2019-05-16 14:20 /hbase
drwxrwxrwt   - hdfs  supergroup          0 2019-05-16 15:50 /tmp
drwxr-xr-x   - hdfs  supergroup          0 2019-04-28 21:04 /user

Run the script again. It returns:

[root@node1 prepare]# ./prepare.sh
patching args=
Parsing conf: /home/cf/app/HiBench-master/conf/hadoop.conf
Parsing conf: /home/cf/app/HiBench-master/conf/hibench.conf
Parsing conf: /home/cf/app/HiBench-master/conf/workloads/micro/wordcount.conf
probe sleep jar: /opt/cloudera/parcels/CDH-5.14.2-1.cdh5.14.2.p0.3/lib/hadoop/../../jars/hadoop-mapreduce-client-jobclient-2.6.0-cdh5.14.2-tests.jar
start HadoopPrepareWordcount bench
hdfs rm -r: /opt/cloudera/parcels/CDH-5.14.2-1.cdh5.14.2.p0.3/bin/hadoop --config /etc/hadoop/conf.cloudera.yarn fs -rm -r -skipTrash hdfs://node1:8020/HiBench/Wordcount/Input
Deleted hdfs://node1:8020/HiBench/Wordcount/Input
Submit MapReduce Job: /opt/cloudera/parcels/CDH-5.14.2-1.cdh5.14.2.p0.3/bin/hadoop --config /etc/hadoop/conf.cloudera.yarn jar /opt/cloudera/parcels/CDH-5.14.2-1.cdh5.14.2.p0.3/lib/hadoop/../../jars/hadoop-mapreduce-examples-2.6.0-cdh5.14.2.jar randomtextwriter -D mapreduce.randomtextwriter.totalbytes=32000 -D mapreduce.randomtextwriter.bytespermap=4000 -D mapreduce.job.maps=8 -D mapreduce.job.reduces=8 hdfs://node1:8020/HiBench/Wordcount/Input
The job took 11 seconds.
finish HadoopPrepareWordcount bench

In the /home/cf/app/HiBench-master directory, run the script

bin/workloads/micro/wordcount/hadoop/run.sh

It returns:

[root@node1 hadoop]# ./run.sh
patching args=
Parsing conf: /home/cf/app/HiBench-master/conf/hadoop.conf
Parsing conf: /home/cf/app/HiBench-master/conf/hibench.conf
Parsing conf: /home/cf/app/HiBench-master/conf/workloads/micro/wordcount.conf
probe sleep jar: /opt/cloudera/parcels/CDH-5.14.2-1.cdh5.14.2.p0.3/lib/hadoop/../../jars/hadoop-mapreduce-client-jobclient-2.6.0-cdh5.14.2-tests.jar
start HadoopWordcount bench
hdfs rm -r: /opt/cloudera/parcels/CDH-5.14.2-1.cdh5.14.2.p0.3/bin/hadoop --config /etc/hadoop/conf.cloudera.yarn fs -rm -r -skipTrash hdfs://node1:8020/HiBench/Wordcount/Output
rm: `hdfs://node1:8020/HiBench/Wordcount/Output': No such file or directory
hdfs du -s: /opt/cloudera/parcels/CDH-5.14.2-1.cdh5.14.2.p0.3/bin/hadoop --config /etc/hadoop/conf.cloudera.yarn fs -du -s hdfs://node1:8020/HiBench/Wordcount/Input
Submit MapReduce Job: /opt/cloudera/parcels/CDH-5.14.2-1.cdh5.14.2.p0.3/bin/hadoop --config /etc/hadoop/conf.cloudera.yarn jar /opt/cloudera/parcels/CDH-5.14.2-1.cdh5.14.2.p0.3/lib/hadoop/../../jars/hadoop-mapreduce-examples-2.6.0-cdh5.14.2.jar wordcount -D mapreduce.job.maps=8 -D mapreduce.job.reduces=8 -D mapreduce.inputformat.class=org.apache.hadoop.mapreduce.lib.input.SequenceFileInputFormat -D mapreduce.outputformat.class=org.apache.hadoop.mapreduce.lib.output.SequenceFileOutputFormat -D mapreduce.job.inputformat.class=org.apache.hadoop.mapreduce.lib.input.SequenceFileInputFormat -D mapreduce.job.outputformat.class=org.apache.hadoop.mapreduce.lib.output.SequenceFileOutputFormat hdfs://node1:8020/HiBench/Wordcount/Input hdfs://node1:8020/HiBench/Wordcount/Output
Bytes Written=22308
finish HadoopWordcount bench

Once the run has finished, the results can be found in the report file under the HiBench directory

report/hibench.report

Type             Date        Time      Input_data_size  Duration(s)  Throughput(bytes/s)  Throughput/node
HadoopWordcount  2019-06-04  16:59:04  37055            20.226       1832                 610
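The two throughput columns can be cross-checked against the size and duration columns. The sketch below assumes throughput is simply input size divided by duration, truncated to an integer, and that the per-node figure divides that by the node count. The node count of 3 is my inference from 1832 / 610, not something the report states, and the function name throughput is my own.

```shell
# throughput BYTES SECONDS NODES: print bytes/s and bytes/s per node, both truncated.
throughput() {
  awk -v b="$1" -v t="$2" -v n="$3" 'BEGIN {
    total = int(b / t)
    printf "%d %d\n", total, int(total / n)
  }'
}

# Input size and duration come from the report row; the node count (3) is an assumption.
throughput 37055 20.226 3   # prints "1832 610"
```

Both numbers match the report row, so the assumed relationship is at least consistent with this run.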

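The report/wordcount path contains two folders, holding the information produced by the prepare/prepare.sh and hadoop/run.sh scripts respectively

report/wordcount/prepare contains several files: monitor.log is the raw log, bench.log holds the MapReduce job information, monitor.html visualizes the system performance metrics, and conf/wordcount.conf records the environment variables for this run

report/wordcount/hadoop contains several files: monitor.log is the raw log, bench.log holds the MapReduce job information, monitor.html visualizes the system performance metrics, and conf/wordcount.conf records the environment variables for this run

monitor.html contains charts such as the Memory usage heatmap:

According to the official documentation https://github.com/Intel-bigdata/HiBench/blob/master/docs/run-hadoopbench.md , you can also change hibench.scale.profile to adjust the data size of the test, and change hibench.default.map.parallelism and hibench.default.shuffle.parallelism to adjust the parallelism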
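These three properties live in conf/hibench.conf as whitespace-separated key/value lines. A small helper for changing them from a script might look like the sketch below. The function name set_conf and the variable HIBENCH_CONF are my own, and the values small and 16 are only examples, not recommendations.

```shell
# set_conf FILE KEY VALUE: replace the line whose first field is KEY, or append it if absent.
set_conf() {
  sc_file=$1
  sc_key=$2
  sc_value=$3
  sc_tmp=$(mktemp)
  awk -v k="$sc_key" -v v="$sc_value" '
    $1 == k { print k, v; done = 1; next }   # rewrite the matching line
    { print }                                # keep comments and other keys untouched
    END { if (!done) print k, v }            # key was missing: append it
  ' "$sc_file" > "$sc_tmp" && cat "$sc_tmp" > "$sc_file"
  rm -f "$sc_tmp"
}

# Run from the HiBench directory; skipped if the file is not writable.
conf=${HIBENCH_CONF:-conf/hibench.conf}
if [ -w "$conf" ]; then
  set_conf "$conf" hibench.scale.profile small
  set_conf "$conf" hibench.default.map.parallelism 16
  set_conf "$conf" hibench.default.shuffle.parallelism 16
fi
```

The run logged earlier used 8 maps and 8 reduces with 32000 bytes of input, so rerunning prepare.sh and run.sh after a change like this should show different values for mapreduce.job.maps, mapreduce.job.reduces and the generated data size.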