Face Recognition with PyTorch + OpenCV (with a Code Walkthrough)

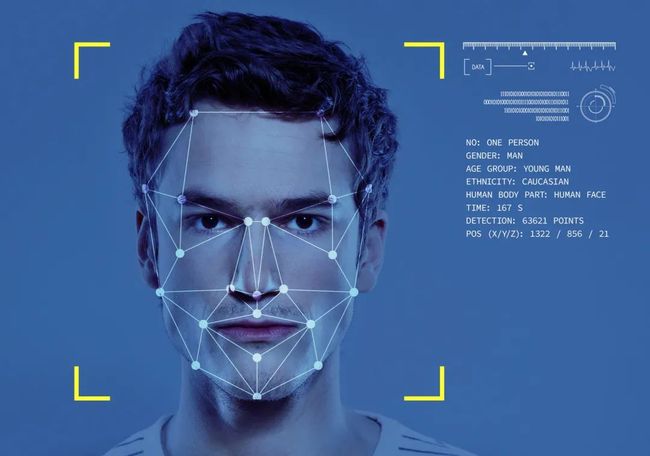

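Face recognition is a technology for identifying people from their faces in images or video. It is useful across many applications and industry verticals. Today it helps news organizations identify celebrities in coverage of major events, provides secondary authentication for mobile apps, automatically indexes image and video files for media and entertainment companies, and helps humanitarian groups identify and rescue victims of human trafficking.

In this blog post, I try to build a face recognition system that matches an image of a person against the passport-size photos in a dataset and reports whether the image matches.

The system has two parts: face detection and a face classifier.

Face detection

First, we load a dataset containing passport-size photos and selfies. It is then split into training and validation data.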

pip install split-folders

This library helps split a dataset into training, test, and validation data.

import splitfolders

splitfolders.ratio('dataset', output="/data", seed=1337, ratio=(.8, 0.2))

This creates a data directory with train and val subfolders, splitting the dataset into 80% training data and 20% validation data.
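To make the 80/20 split concrete, here is a minimal pure-Python sketch of a seeded, reproducible split. It is not how split-folders is implemented (the library works per class folder and copies files); the function name `split_train_val` and the file names are hypothetical and only illustrate the idea.

```python
import random

def split_train_val(items, train_ratio=0.8, seed=1337):
    """Shuffle items reproducibly and split them into (train, val) lists."""
    shuffled = sorted(items)               # sort so the result does not depend on input order
    random.Random(seed).shuffle(shuffled)  # seeded shuffle gives the same split every run
    cut = int(len(shuffled) * train_ratio)
    return shuffled[:cut], shuffled[cut:]

files = [f"person_{i:03d}.jpg" for i in range(10)]  # hypothetical file names
train, val = split_train_val(files)
print(len(train), len(val))  # 8 2
```

Fixing the seed matters here: rerunning the split must not move images between the training and validation sets.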

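Now we extract the faces from the images. For this I use OpenCV's pretrained Haar Cascade classifier for faces.

First we load the haarcascade_frontalface_default XML classifier. Then we load the input image (or video) and convert it to grayscale. If faces are found, their positions are returned as Rect(x, y, w, h). These positions are then used to create an ROI for each face.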

import fnmatch
import os

import cv2

# Load the pretrained Haar cascade for frontal faces
face_cascade = cv2.CascadeClassifier('/haarcascade_frontalface_default.xml')

paths = "/data/"

for root, _, files in os.walk(paths):
    for filename in files:
        file = os.path.join(root, filename)
        if fnmatch.fnmatch(file, '*.jpg'):
            img = cv2.imread(file)
            if img is None:
                continue  # skip files OpenCV cannot read
            gray = cv2.cvtColor(img, cv2.COLOR_BGR2GRAY)
            # Detect faces (scaleFactor=1.1, minNeighbors=4)
            faces = face_cascade.detectMultiScale(gray, 1.1, 4)
            # Crop each detected face and overwrite the original file;
            # if several faces are found, the last one written wins
            for (x, y, w, h) in faces:
                crop_face = img[y:y+h, x:x+w]
                cv2.imwrite(file, crop_face)

This replaces every image in the directory with the face detected in it. Data preparation for the classifier is now complete.

Now we load this dataset.
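When an image yields several detections, the loop above keeps whichever crop is written last. One possible refinement, sketched here as an assumption rather than as part of the original pipeline, is to keep only the largest box, since the subject of a passport photo or selfie is usually the biggest face. The helper name `largest_face` is hypothetical.

```python
def largest_face(faces):
    """Return the (x, y, w, h) box with the largest area, or None if there are no boxes."""
    if len(faces) == 0:
        return None
    return max(faces, key=lambda box: box[2] * box[3])  # area = w * h

# Hypothetical detections in the (x, y, w, h) layout returned by detectMultiScale
faces = [(10, 10, 40, 40), (60, 20, 120, 120), (5, 5, 20, 20)]
print(largest_face(faces))  # (60, 20, 120, 120)
```

The `len(faces) == 0` check also covers the case where no face is detected at all, so the caller can skip the image instead of writing an empty crop.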

import torch  # needed below for torch.max, torch.set_grad_enabled, torch.save
from torch import nn, optim, as_tensor
from torch.utils.data import Dataset, DataLoader
import torch.nn.functional as F
from torch.optim import lr_scheduler
from torch.nn.init import *
from torchvision import transforms, utils, datasets, models
import cv2
from PIL import Image
from pdb import set_trace
import time
import copy
from pathlib import Path
import os
import sys
import matplotlib.pyplot as plt
import matplotlib.animation as animation
from skimage import io, transform
from tqdm import trange, tqdm
import csv
import glob
import dlib
import pandas as pd
import numpy as np

This imports all the required libraries. Now we load the dataset; to increase its effective size, several data augmentations are applied.

data_transforms = {
    'train': transforms.Compose([
        # transforms.Scale was deprecated in favor of Resize; resize the PIL image first
        transforms.Resize((224, 224)),
        transforms.RandomHorizontalFlip(),
        transforms.ColorJitter(brightness=0.4, contrast=0.4, saturation=0.4, hue=0.4),
        transforms.RandomRotation(5),
        transforms.ToTensor(),
        transforms.Normalize([0.485, 0.456, 0.406], [0.229, 0.224, 0.225])
    ]),
    'val': transforms.Compose([
        # Validation uses deterministic preprocessing only, no random augmentation
        transforms.Resize((224, 224)),
        transforms.ToTensor(),
        transforms.Normalize([0.485, 0.456, 0.406], [0.229, 0.224, 0.225]),
    ]),
}

data_dir = '/content/drive/MyDrive/AttendanceCapturingSystem/data/'

image_datasets = {x: datasets.ImageFolder(os.path.join(data_dir, x), data_transforms[x])
                  for x in ['train', 'val']}

dataloaders = {x: DataLoader(image_datasets[x], batch_size=8, shuffle=True)
               for x in ['train', 'val']}

dataset_sizes = {x: len(image_datasets[x]) for x in ['train', 'val']}

class_names = image_datasets['train'].classes
class_names

Now let's visualize the dataset.

def imshow(inp, title=None):
    """Imshow for Tensor."""
    inp = inp.numpy().transpose((1, 2, 0))  # (C, H, W) -> (H, W, C)
    mean = np.array([0.485, 0.456, 0.406])
    std = np.array([0.229, 0.224, 0.225])
    inp = std * inp + mean  # undo the Normalize transform
    inp = np.clip(inp, 0, 1)
    plt.imshow(inp)
    if title is not None:
        plt.title(title)
    plt.pause(0.001)  # pause a bit so that plots are updated

# Get a batch of training data
inputs, classes = next(iter(dataloaders['train']))

# Make a grid from batch
out = utils.make_grid(inputs)

imshow(out, title=[class_names[x] for x in classes])
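The `std * inp + mean` line in `imshow` is the inverse of the `Normalize` transform, which computes `(x - mean) / std` per channel. A small pure-Python sketch of that round trip on a single RGB pixel, with hypothetical helper names, shows why the plotted colors come out right:

```python
MEAN = [0.485, 0.456, 0.406]  # per-channel mean used in the transforms above
STD = [0.229, 0.224, 0.225]   # per-channel standard deviation used above

def normalize_pixel(pixel):
    """Apply (x - mean) / std to each channel, as transforms.Normalize does."""
    return [(v - m) / s for v, m, s in zip(pixel, MEAN, STD)]

def denormalize_pixel(pixel):
    """Invert the normalization: x * std + mean, as done in imshow."""
    return [v * s + m for v, m, s in zip(pixel, MEAN, STD)]

pixel = [0.5, 0.5, 0.5]  # a mid-gray pixel in [0, 1]
restored = denormalize_pixel(normalize_pixel(pixel))
print(all(abs(a - b) < 1e-9 for a, b in zip(pixel, restored)))  # True
```

Without the inverse step, matplotlib would receive values outside [0, 1] and the images would look washed out or clipped.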

Now let's build the classifier model. Here we use InceptionResnetV1, pretrained on the VGGFace2 dataset, as the base model.

import torch
from models.inception_resnet_v1 import InceptionResnetV1

# Use the GPU when one is available
device = torch.device('cuda:0' if torch.cuda.is_available() else 'cpu')
print('Running on device: {}'.format(device))

model_ft = InceptionResnetV1(pretrained='vggface2', classify=False, num_classes=len(class_names))

list(model_ft.children())[-6:]

layer_list = list(model_ft.children())[-5:]  # all final layers

# Keep the convolutional trunk and freeze its weights
model_ft = nn.Sequential(*list(model_ft.children())[:-5])
for param in model_ft.parameters():
    param.requires_grad = False

class Flatten(nn.Module):
    def __init__(self):
        super(Flatten, self).__init__()

    def forward(self, x):
        x = x.view(x.size(0), -1)
        return x

class normalize(nn.Module):
    def __init__(self):
        super(normalize, self).__init__()

    def forward(self, x):
        x = F.normalize(x, p=2, dim=1)  # L2-normalize each embedding
        return x

# New trainable head, appended to the frozen trunk
model_ft.avgpool_1a = nn.AdaptiveAvgPool2d(output_size=1)
model_ft.last_linear = nn.Sequential(
    Flatten(),
    nn.Linear(in_features=1792, out_features=512, bias=False),
    normalize()
)
model_ft.logits = nn.Linear(layer_list[2].out_features, len(class_names))
# No Softmax layer is added: nn.CrossEntropyLoss expects raw logits
# and applies log-softmax itself

model_ft = model_ft.to(device)

criterion = nn.CrossEntropyLoss()

# Only the newly added layers have requires_grad=True, so only they are updated
optimizer_ft = optim.SGD(model_ft.parameters(), lr=1e-2, momentum=0.9)

# Decay LR by a factor of *gamma* every *step_size* epochs
exp_lr_scheduler = lr_scheduler.StepLR(optimizer_ft, step_size=7, gamma=0.1)

model_ft
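The `normalize` layer above rescales each 512-dimensional embedding to unit length, so that distances between embeddings depend on direction rather than magnitude. As a rough illustration of what `F.normalize(x, p=2, dim=1)` computes for one row, here is a pure-Python sketch; the function name `l2_normalize` is hypothetical.

```python
import math

def l2_normalize(vec, eps=1e-12):
    """Divide a vector by its Euclidean norm (clamped to eps to avoid division by zero)."""
    norm = math.sqrt(sum(v * v for v in vec))
    return [v / max(norm, eps) for v in vec]

emb = l2_normalize([3.0, 4.0])
print(emb)  # [0.6, 0.8]
```

After normalization every embedding lies on the unit sphere, which keeps the Euclidean distance between any two embeddings in the range 0 to 2.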

Now we train the model.

def train_model(model, criterion, optimizer, scheduler, num_epochs=25):
    since = time.time()
    FT_losses = []

    best_model_wts = copy.deepcopy(model.state_dict())
    best_acc = 0.0

    for epoch in range(num_epochs):
        print('Epoch {}/{}'.format(epoch, num_epochs - 1))
        print('-' * 10)

        # Each epoch has a training and validation phase
        for phase in ['train', 'val']:
            if phase == 'train':
                model.train()  # Set model to training mode
            else:
                model.eval()   # Set model to evaluate mode

            running_loss = 0.0
            running_corrects = 0

            # Iterate over data.
            for inputs, labels in dataloaders[phase]:
                inputs = inputs.to(device)
                labels = labels.to(device)

                # zero the parameter gradients
                optimizer.zero_grad()

                # forward
                # track history if only in train
                with torch.set_grad_enabled(phase == 'train'):
                    outputs = model(inputs)
                    _, preds = torch.max(outputs, 1)
                    loss = criterion(outputs, labels)

                    # backward + optimize only if in training phase
                    if phase == 'train':
                        loss.backward()
                        optimizer.step()
                        FT_losses.append(loss.item())

                # statistics
                running_loss += loss.item() * inputs.size(0)
                running_corrects += torch.sum(preds == labels.data)

            # StepLR counts epochs, so step the scheduler once per training epoch
            if phase == 'train':
                scheduler.step()

            epoch_loss = running_loss / dataset_sizes[phase]
            epoch_acc = running_corrects.double() / dataset_sizes[phase]

            print('{} Loss: {:.4f} Acc: {:.4f}'.format(
                phase, epoch_loss, epoch_acc))

            # deep copy the model
            if phase == 'val' and epoch_acc > best_acc:
                best_acc = epoch_acc
                best_model_wts = copy.deepcopy(model.state_dict())

    time_elapsed = time.time() - since
    print('Training complete in {:.0f}m {:.0f}s'.format(
        time_elapsed // 60, time_elapsed % 60))
    print('Best val Acc: {:4f}'.format(best_acc))

    # load best model weights
    model.load_state_dict(best_model_wts)
    return model, FT_losses
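The scheduler configured earlier, `StepLR(step_size=7, gamma=0.1)`, multiplies the learning rate by 0.1 every 7 scheduler steps. Assuming the scheduler is stepped once per epoch, the rate at a given epoch follows a simple closed form, sketched below with a hypothetical helper `step_lr`:

```python
def step_lr(base_lr, epoch, step_size=7, gamma=0.1):
    """Learning rate after `epoch` scheduler steps under a StepLR-style schedule."""
    return base_lr * gamma ** (epoch // step_size)

# Starting from lr=1e-2 as in the optimizer above
for epoch in (0, 6, 7, 14):
    print(epoch, step_lr(1e-2, epoch))
```

With these settings the rate stays at 1e-2 for epochs 0 to 6, drops to about 1e-3 at epoch 7, and to about 1e-4 at epoch 14. If the scheduler were stepped once per batch instead, the same decay would happen every 7 batches and the rate would shrink to almost nothing within the first epoch.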

Finally, we run the training, plot the training loss, and save the model.

model_ft, FT_losses = train_model(model_ft, criterion, optimizer_ft, exp_lr_scheduler, num_epochs=200)

plt.figure(figsize=(10, 5))
plt.title("FRT Loss During Training")
plt.plot(FT_losses, label="FT loss")
plt.xlabel("iterations")
plt.ylabel("Loss")
plt.legend()
plt.show()

# Save the trained model (the variable is model_ft; `model` is not defined at this level)
torch.save(model_ft, "/model.pt")

Now we feed an input image to the saved model and check for a match.

import cv2
import torch
from facenet_pytorch import MTCNN, InceptionResnetV1

resnet = InceptionResnetV1(pretrained='vggface2').eval()

# Load the cascade
face_cascade = cv2.CascadeClassifier('/haarcascade_frontalface_default.xml')

def face_match(img_path, data_path):  # img_path = location of photo, data_path = location of data.pt
    # Detect and crop the face in the given image
    img = cv2.imread(img_path)
    gray = cv2.cvtColor(img, cv2.COLOR_BGR2GRAY)
    faces = face_cascade.detectMultiScale(gray, 1.1, 4)
    if len(faces) == 0:
        raise ValueError('No face detected in {}'.format(img_path))
    x, y, w, h = faces[-1]  # as in the original loop, the last detection is used
    crop_face = img[y:y+h, x:x+w]

    # Convert the BGR crop to a 160x160 RGB tensor of shape (3, H, W),
    # scaled roughly to [-1, 1] with facenet-pytorch's fixed standardization
    face = cv2.resize(cv2.cvtColor(crop_face, cv2.COLOR_BGR2RGB), (160, 160))
    face = (torch.from_numpy(face).permute(2, 0, 1).float() - 127.5) / 128.0

    # Embedding of the query face; no gradients are needed at inference time
    with torch.no_grad():
        emb = resnet(face.unsqueeze(0))

    # data_path must hold [embedding_list, name_list]; the model written by
    # torch.save above is not in this format
    saved_data = torch.load(data_path)  # loading data.pt file
    embedding_list = saved_data[0]  # getting embedding data
    name_list = saved_data[1]  # getting list of names

    dist_list = []  # list of matched distances, minimum distance is used to identify the person
    for emb_db in embedding_list:
        dist_list.append(torch.dist(emb, emb_db).item())

    idx_min = dist_list.index(min(dist_list))
    return (name_list[idx_min], min(dist_list))

# data.pt (named in the comments above) is assumed to exist already;
# the code in this post does not create it
result = face_match('trainset/0006/0006_0000546/0006_0000546_script.jpg', 'data.pt')
print('Face matched with: ', result[0], 'With distance: ', result[1])
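The function above always returns the nearest name, even when the query face belongs to nobody in the dataset. To report whether the image actually matches, a distance threshold is needed. The sketch below shows the idea in pure Python on toy 2-D vectors; the names, the helper functions, and the threshold of 1.0 are all hypothetical, and a real threshold would have to be tuned on validation pairs.

```python
import math

def euclidean(a, b):
    """Euclidean distance between two equal-length vectors."""
    return math.sqrt(sum((x - y) ** 2 for x, y in zip(a, b)))

def match(query, embeddings, names, threshold=1.0):
    """Return (name, distance, is_match) for the nearest stored embedding."""
    distances = [euclidean(query, e) for e in embeddings]
    idx = min(range(len(distances)), key=distances.__getitem__)
    return names[idx], distances[idx], distances[idx] <= threshold

# Toy database of two enrolled identities (hypothetical values)
embeddings = [[0.0, 1.0], [1.0, 0.0]]
names = ["alice", "bob"]

name, dist, ok = match([0.9, 0.1], embeddings, names)
print(name, ok)  # bob True
```

A query far from every stored embedding, such as `[5.0, 5.0]` here, still has a nearest neighbor but fails the threshold test, so it can be reported as "no match".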
