badgerdb 压缩合并

压缩合并原因

- badgerdb是lsm tree派系的数据库,put,delete接口都是通过追加写日志的方式来保存的,日志如果一直不清理,会导致读性能越来越差,占用的存储空间也越来越大,badgerdb为了解决这些问题,就有了日志合并,日志的合并的实现方式主要有参考rockdb和pebbledb这两个数据库。

压缩合并的协程启动

-

加快压缩

在open的时候,默认启动4个协程来进行压缩,启动4个协程进行压缩的目的是为了加快压缩,每个协程启动的时候还特意让协程启动的时间不一致,经可能的避开多个压缩写成同时操作同一层的同一个table。同一层的同一个table只能是一个压缩协程占用。levelsController.cstatus结构体会记录各层的table.

func (s *levelsController) startCompact(lc *z.Closer) {

n := s.kv.opt.NumCompactors//默认是4

lc.AddRunning(n - 1)

for i := 0; i < n; i++ {

go s.runCompactor(i, lc)//启动4个协程

}

}-

优先压缩第0层

其中编号为0的压缩协程会把L0层的的的优先级提高,编号为0的压缩协程不检查得分是否高于1,直接进行压缩。

runOnce := func() bool {

prios := s.pickCompactLevels()//选择压缩的sst

if id == 0 {

// Worker ID zero prefers to compact L0 always.

prios = moveL0toFront(prios)

}

for _, p := range prios {

if id == 0 && p.level == 0 {

// Allow worker zero to run level 0, irrespective of its adjusted score.

} else if p.adjusted < 1.0 {

break

}

if run(p) {

return true

}

}

return false

}选择合并的层数

第0层的得分的计算方式是第0层的table表的总数除以配置的第0层的大小;

非0层的得分的计算方式是该层所有的table表的总大小除以目标大小;

addPriority := func(level int, score float64) {

pri := compactionPriority{

level: level,

score: score,

adjusted: score,

t: t,

}

prios = append(prios, pri)

}

// Add L0 priority based on the number of tables.

addPriority(0, float64(s.levels[0].numTables())/float64(s.kv.opt.NumLevelZeroTables))//opt.NumLevelZeroTables默认是5

// All other levels use size to calculate priority.

for i := 1; i < len(s.levels); i++ {

// Don't consider those tables that are already being compacted right now.

delSize := s.cstatus.delSize(i)

l := s.levels[i]

sz := l.getTotalSize() - delSize

addPriority(i, float64(sz)/float64(t.targetSz[i]))//L1->L6层剩余的size

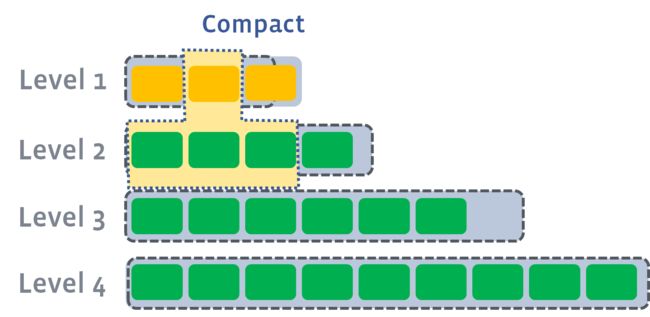

}目标层的大小有一个比例系数LevelSizeMultiplier,默认是10,最后构成的目标大小如下图

非0层选择合并层的table表

先按提交的时间把合并层的tbale表排序,提交时间早的优先被挑选到,挑选出来的table有最大和最小的key,在待合并的下一层找到和这个范围有交集的table表。在挑选的时候会放弃其他压缩协程正在处理的压缩table,重新进行选择

// We pick tables, so we compact older tables first. This is similar to

// kOldestLargestSeqFirst in RocksDB.

s.sortByHeuristic(tables, cd)

for _, t := range tables {

cd.thisSize = t.Size()

cd.thisRange = getKeyRange(t)

// If we're already compacting this range, don't do anything.

if s.cstatus.overlapsWith(cd.thisLevel.level, cd.thisRange) {

continue

}

cd.top = []*table.Table{t}

left, right := cd.nextLevel.overlappingTables(levelHandlerRLocked{}, cd.thisRange)

cd.bot = make([]*table.Table, right-left)

copy(cd.bot, cd.nextLevel.tables[left:right])

if len(cd.bot) == 0 {

cd.bot = []*table.Table{}

cd.nextRange = cd.thisRange

if !s.cstatus.compareAndAdd(thisAndNextLevelRLocked{}, *cd) {

continue

}

return true

}

cd.nextRange = getKeyRange(cd.bot...)

if s.cstatus.overlapsWith(cd.nextLevel.level, cd.nextRange) {

continue

}

if !s.cstatus.compareAndAdd(thisAndNextLevelRLocked{}, *cd) {

continue

}

return true

}

return false每一次压缩过程,都是上层只有一个table表,下层至少有0个table表,最多可能是所有的table表

选择合并第0层table表

第0层的table表和table之间的排列不是按照sst的范围来排的,只是按照写入的时间顺序来排,非0层table表之间按照sst的范围来排,非0层选择待压缩的table就直接从第0个开始,即写入时间最旧的,如果和下一个table表有交集就也需要加入,下一个table表的范围没有交集就退出。

也就是会造成第0层待合入的table的个数会至少是一个,下一层也是至少是0个,最大是整层。

top := cd.thisLevel.tables

if len(top) == 0 {

return false

}

var out []*table.Table

if len(cd.dropPrefixes) > 0 {//正常业务这里就是0

// Use all tables if drop prefix is set. We don't want to compact only a

// sub-range. We want to compact all the tables.

out = top

} else {

var kr keyRange

// cd.top[0] is the oldest file. So we start from the oldest file first.

for _, t := range top {

dkr := getKeyRange(t)

if kr.overlapsWith(dkr) {

out = append(out, t)

kr.extend(dkr)

} else {

break

}

}

}

cd.thisRange = getKeyRange(out...)//L0 层的tables[0]起到没有交集的tables[i]

cd.top = out

left, right := cd.nextLevel.overlappingTables(levelHandlerRLocked{}, cd.thisRange)//找出cd.thisRange.left,cd.thisRange.right在cd.nextLevel.tables中的索引

cd.bot = make([]*table.Table, right-left)

copy(cd.bot, cd.nextLevel.tables[left:right])

if len(cd.bot) == 0 {//cd.thisRange范围在cd.nextLevel的一个Table中

cd.nextRange = cd.thisRange

} else {

cd.nextRange = getKeyRange(cd.bot...)

}合并过程

把一次压缩过程,拆成多个片段,方便起多个迭代器进行并发的合并。创建新的table,并top和bot的表做为一个迭代器打开,从迭代器里面读出所有的kv对,过滤掉过期的,再插入到新table里面去。

newIterator := func() []y.Iterator {

// Create iterators across all the tables involved first.

var iters []y.Iterator

switch {

case lev == 0:

iters = append(iters, iteratorsReversed(topTables, table.NOCACHE)...)

case len(topTables) > 0:

y.AssertTrue(len(topTables) == 1)

iters = []y.Iterator{topTables[0].NewIterator(table.NOCACHE)}

}

// Next level has level>=1 and we can use ConcatIterator as key ranges do not overlap.

return append(iters, table.NewConcatIterator(valid, table.NOCACHE))

} addKeys := func(builder *table.Builder) {

timeStart := time.Now()

var numKeys, numSkips uint64

var rangeCheck int

var tableKr keyRange

for ; it.Valid(); it.Next() {

// See if we need to skip the prefix.

if len(cd.dropPrefixes) > 0 && hasAnyPrefixes(it.Key(), cd.dropPrefixes) {

numSkips++

updateStats(it.Value())

continue

}

// See if we need to skip this key.

if len(skipKey) > 0 {

if y.SameKey(it.Key(), skipKey) {

numSkips++

updateStats(it.Value())

continue

} else {

skipKey = skipKey[:0]

}

}

if !y.SameKey(it.Key(), lastKey) {

firstKeyHasDiscardSet = false

if len(kr.right) > 0 && y.CompareKeys(it.Key(), kr.right) >= 0 {

break

}

if builder.ReachedCapacity() {

// Only break if we are on a different key, and have reached capacity. We want

// to ensure that all versions of the key are stored in the same sstable, and

// not divided across multiple tables at the same level.

break

}

lastKey = y.SafeCopy(lastKey, it.Key())

numVersions = 0

firstKeyHasDiscardSet = it.Value().Meta&BitDiscardEarlierVersions > 0

if len(tableKr.left) == 0 {

tableKr.left = y.SafeCopy(tableKr.left, it.Key())

}

tableKr.right = lastKey

rangeCheck++

if rangeCheck%5000 == 0 {

// This table's range exceeds the allowed range overlap with the level after

// next. So, we stop writing to this table. If we don't do this, then we end up

// doing very expensive compactions involving too many tables. To amortize the

// cost of this check, we do it only every N keys.

if exceedsAllowedOverlap(tableKr) {

// s.kv.opt.Debugf("L%d -> L%d Breaking due to exceedsAllowedOverlap with

// kr: %s\n", cd.thisLevel.level, cd.nextLevel.level, tableKr)

break

}

}

}

vs := it.Value()

version := y.ParseTs(it.Key())

isExpired := isDeletedOrExpired(vs.Meta, vs.ExpiresAt)

// Do not discard entries inserted by merge operator. These entries will be

// discarded once they're merged

if version <= discardTs && vs.Meta&bitMergeEntry == 0 {

// Keep track of the number of versions encountered for this key. Only consider the

// versions which are below the minReadTs, otherwise, we might end up discarding the

// only valid version for a running transaction.

numVersions++

// Keep the current version and discard all the next versions if

// - The `discardEarlierVersions` bit is set OR

// - We've already processed `NumVersionsToKeep` number of versions

// (including the current item being processed)

lastValidVersion := vs.Meta&BitDiscardEarlierVersions > 0 ||

numVersions == s.kv.opt.NumVersionsToKeep

if isExpired || lastValidVersion {

// If this version of the key is deleted or expired, skip all the rest of the

// versions. Ensure that we're only removing versions below readTs.

skipKey = y.SafeCopy(skipKey, it.Key())

switch {

// Add the key to the table only if it has not expired.

// We don't want to add the deleted/expired keys.

case !isExpired && lastValidVersion:

// Add this key. We have set skipKey, so the following key versions

// would be skipped.

case hasOverlap:

// If this key range has overlap with lower levels, then keep the deletion

// marker with the latest version, discarding the rest. We have set skipKey,

// so the following key versions would be skipped.

default:

// If no overlap, we can skip all the versions, by continuing here.

numSkips++

updateStats(vs)

continue // Skip adding this key.

}

}

}

numKeys++

var vp valuePointer

if vs.Meta&bitValuePointer > 0 {

vp.Decode(vs.Value)

}

switch {

case firstKeyHasDiscardSet:

// This key is same as the last key which had "DiscardEarlierVersions" set. The

// the next compactions will drop this key if its ts >

// discardTs (of the next compaction).

builder.AddStaleKey(it.Key(), vs, vp.Len)

case isExpired:

// If the key is expired, the next compaction will drop it if

// its ts > discardTs (of the next compaction).

builder.AddStaleKey(it.Key(), vs, vp.Len)

default:

builder.Add(it.Key(), vs, vp.Len)

}

}合并完成后

合并后需要删除sst文件和创建新的sst,删除和创建需要原子性操作,要记录到mainfest文件里面。删除上层top的sst和下层bot的sst,再加上nextlevel层的sst 文件

func buildChangeSet(cd *compactDef, newTables []*table.Table) pb.ManifestChangeSet {

changes := []*pb.ManifestChange{}

for _, table := range newTables {

changes = append(changes,

newCreateChange(table.ID(), cd.nextLevel.level, table.KeyID(), table.CompressionType()))

}

for _, table := range cd.top {

// Add a delete change only if the table is not in memory.

if !table.IsInmemory {

changes = append(changes, newDeleteChange(table.ID()))

}

}

for _, table := range cd.bot {

changes = append(changes, newDeleteChange(table.ID()))

}

return pb.ManifestChangeSet{Changes: changes}

}https://github.com/facebook/rocksdb/wiki/Leveled-CompactionLSM Tree-Based存储引擎的compaction策略(feat. RocksDB)_LittleMagics的博客-CSDN博客