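- Pybind11 Tutorial: Building a C++ Helper for Python from Scratch

Yc9801

c++, programming languages

Reference: the official documentation at https://pybind11.readthedocs.io/en/stable/index.html. 1. What is Pybind11? Imagine you have written a calculator in Python, but it runs too slowly; you want to speed it up with C++ without abandoning Python altogether. Pybind11 acts as a bridge that grafts high-performance C++ code onto Python. You can call C++ functions from Python, much like hiring a fast helper to do the work. Main features: binding functions: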

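- Python user-defined function parameters come in several types: four forms of parameters in user-defined Python functions

weixin_39860755

(1) def a(x, y): print x, y. This is the most common form of definition. Calling a(1, 2) sets x to 1 and y to 2, with arguments matched to parameters by position; calling a(1) or a(1, 2, 3) raises an error. (2) def a(x, y=3): print x, y. This supplies a default value. Calling a(1, 2) still sets x to 1 and y to 2, but a(1) no longer raises an error. With both of these forms the arguments can also be passed by name in a different order, for example a(y=4, x=3). If it is def a(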
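The two forms described above can be tried out with a minimal sketch. It assumes Python 3 (so it returns a tuple rather than using the Python 2 `print x, y` statement from the teaser), and the name `describe` is hypothetical, not taken from the original article.

```python
def describe(x, y=3):
    """Return the received arguments as a tuple; y has a default value."""
    return (x, y)


# Positional call: x takes 1, y takes 2.
print(describe(1, 2))

# y is omitted, so the default value 3 is used.
print(describe(1))

# Keyword arguments may be given in any order.
print(describe(y=4, x=3))

# Too many positional arguments raises TypeError.
try:
    describe(1, 2, 3)
except TypeError as exc:
    print("TypeError:", exc)
```

Without the default on `y`, the call `describe(1)` would raise `TypeError` as well, which matches form (1) in the teaser.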

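- Python File Operations

红虾程序员

Python, python

File operations are a basic and important feature in Python, covering mainly opening, reading, writing, and closing files. 1. Opening a file: use the open() function, whose basic syntax is f = open(file_path, mode, encoding=None). f: the file object returned by open(), which has attributes and methods. file_path: the path to the file, either relative or absolute. mode: the mode in which the file is opened; common modes include: r: opens the file read-only; the file pointer is placed at the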
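As a minimal sketch of the open, write, read, and close cycle described above: the helper name `write_then_read` and the file name `demo.txt` are hypothetical, and a temporary directory is used so the example leaves nothing behind.

```python
import os
import tempfile


def write_then_read(text):
    """Write text to a temporary file, then read it back and return it."""
    with tempfile.TemporaryDirectory() as tmp_dir:
        file_path = os.path.join(tmp_dir, "demo.txt")

        # "w" opens for writing and creates the file if it does not exist.
        with open(file_path, "w", encoding="utf-8") as f:
            f.write(text)

        # "r" opens read-only; the with block closes the file automatically.
        with open(file_path, "r", encoding="utf-8") as f:
            return f.read()


print(write_then_read("hello, file\n"))
```

Using `with` instead of calling `f.close()` by hand means the file is closed even if an exception is raised inside the block.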

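- Notes on Using Browser Use on Windows

artificial intelligence, ai, development

Related documentation: https://docs.browser-use.com/quickstart. First install the uv command-line tool from cmd: powershell -ExecutionPolicy ByPass -c "irm https://astral.sh/uv/install.ps1 | iex". Set the environment variable: set Path=C:\xx\.local\bin;%Path%. Check the version: uv -V. List the available and installed Python versions: uv pytho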

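- Checking the CUDA and cuDNN Versions; Checking the Navicat GPU Version

FergusJ

backup, python, programming languages

Check the graphics card model: lspci | grep VGA (lspci is the Linux command for viewing hardware information); this prints the host's integrated and discrete graphics information. Check the graphics card model from Python: from tensorflow.python.client import device_lib; device_lib.list_local_devices()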

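- The Various Forms of Function Parameters in Python

红虾程序员

Python, python, programming languages, pycharm

Contents: 1. Positional Arguments 2. Keyword Arguments 3. Default Arguments 4. Variable Positional Arguments 5. Variable Keyword Arguments 6. Special usage: passing a list or dictionary as arguments. Function parameters in Python can be used in very flexible ways, mainly including the following types: positional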

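- [With JS, Python, and C++ Solutions] LeetCode Top Interview 150 (7)

moz与京

leetcode roundup, javascript, python, c++

1. Problem: 167. Two Sum II - Input Array Is Sorted. Given a 1-indexed integer array numbers that is already sorted in non-decreasing order, find two numbers in the array that add up to the target number target. Let these two numbers be numbers[index1] and numbers[index2], then 1 targetIndex(vector nums, int target) { int length = nums.size(); if (length < 2) {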
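Because the article's own solution is cut off, the following is only a sketch of one common approach to this problem, the two-pointer scan over a sorted array. The function name `two_sum_sorted` is hypothetical, and returning an empty list when no pair exists is an assumption made here for illustration.

```python
def two_sum_sorted(numbers, target):
    """Return 1-based indices of two entries summing to target, or []."""
    left, right = 0, len(numbers) - 1
    while left < right:
        total = numbers[left] + numbers[right]
        if total == target:
            # The problem uses 1-based indices.
            return [left + 1, right + 1]
        if total < target:
            left += 1   # need a larger sum: move the left pointer right
        else:
            right -= 1  # need a smaller sum: move the right pointer left
    return []


print(two_sum_sorted([2, 7, 11, 15], 9))
```

The scan relies on the array being sorted in non-decreasing order; each step discards one candidate, so it runs in linear time with constant extra space.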

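- What types of quantitative trading APIs are there, and how do you choose the right one?

股票程序化交易接口

quantitative trading, stock API, Python stock quantitative trading, blockchain, quantitative trading API, choosing a type, data acquisition, stock quant interface, stock API

Python stock interface for querying accounts, submitting orders, and automated trading (1). Python stock programmatic trading interface for checking accounts, submitting orders, and automated trading (2). Stock quant, stock trading with Python, CSDN community >>> The main types of quantitative trading API: quantitative trading depends on large amounts of data, so data-acquisition APIs are especially important. This type of API can connect to various data sources, such as stock market data and futures data, and can provide traders with real-time prices, historical data, and more. Some APIs can obtain the latest trades for stocks from the major stock exchanges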

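- Reading Excel Data and Extracting Images with Python

我就是全世界

python, excel, programming languages

1. Introduction. 1.1 Excel in everyday work. In the modern office, Excel (spreadsheet software) is one of the key tools for data management and analysis. Whether for financial statements, sales data, project management, or routine reports, Excel plays an indispensable role. Its powerful data processing, flexible formatting, and rich charting features make it the tool of choice for professionals in every industry. Excel's main features include: data entry and management: users can easily enter, edit, and manage large amounts of data. Data analysis: through built-in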

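- Building a Cross-Platform Mobile App with Cursor from Scratch

沐怡旸

reactnative

1. Install the necessary tools and environment. Before starting, make sure the following tools are installed in your development environment: a. Install Node.js and npm. React Native depends on Node.js and npm (Node Package Manager). You can download and install the latest version from the Node.js website. b. Install Python. Android development with React Native requires Python. Make sure you have Python 2.7 or Python 3.x installed. c. Install the Java environment. Rea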

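- 2020 11th Lanqiao Cup Provincial Contest, Python Group

Ruoki~

Lanqiao Cup, python, past exam problems, Lanqiao Cup, career and development

Preface: this is the easiest Python problem set, suitable for beginners to practice on. Contents: Fill-in-the-blank problems: House Number Making, Finding 2020, Running Exercise, Snake Number Filling, Sorting. Programming problems: Score Statistics, Word Analysis, Number Triangle, Plane Partition, Decorative Beads. Fill-in-the-blank problems. House Number Making. Problem: Xiao Lan is making house numbers for the residents of a street. The street has 2020 households, numbered from 1 to 2020. Xiao Lan makes a house number by first producing the digit characters 0 through 9 and then pasting the required characters onto the plate; for example, house number 1017 requires pasting the characters 1, 0, 1, 7 in order, that is, 1 character 0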

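- A detailed look at helping users plan travel routes with a Python BeautifulSoup crawler, NLP tag extraction, Dijkstra path planning, and KMeans clustering

mosquito_lover1

python, beautifulsoup, web crawler, kmeans, natural language processing

System modules: Data collection module (crawler): scrapes place data (name, latitude and longitude, description, etc.) from target websites. Data preprocessing module (tagging algorithm): cleans and classifies the scraped place data, and tags places according to their features (latitude and longitude, description text), for example "family-friendly" or "adventure-friendly". Geographic data processing module (map API): uses a map API to obtain detailed information about places (address, distance, route, etc.) and computes distances or routes between places. Path planning module: plans the optimal route from the start and end points entered by the user. Supports multiple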

- Python problem: ModuleNotFoundError: No module named 'matplotlib'

我命由我12345

Python problem list, python, matplotlib, programming languages, c++, c#, backend

Problem and resolution. 1. Problem description: import matplotlib.pyplot as plt; fig, ax = plt.subplots(); ax.plot([1, 2, 3, 4], [1, 4, 2, 3]); plt.show(). Running the code above raises the following error: ModuleNotFoundError: No module named 'matplotlib' (that is, no module named matplotlib was found). 2

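- Python Functions in Focus: Passing Arguments by Reference

圣逸

Python from beginner to expert, python, programming languages, Python beginner to expert, python, data structures

In Python programming, functions are a very important concept. Functions not only improve code reusability but also make code structure clearer. In designing and using functions, how arguments are passed is a key factor. Argument passing is commonly described in two main forms: pass by value and pass by reference. Although Python's mechanism is sometimes called "pass by reference", it is actually closer to "pass by object reference". This article explores passing by reference in Python and related concepts in depth. 1. Basic concepts. Before discussing passing by reference, one must first understand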
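The distinction drawn above between "pass by reference" and "pass by object reference" can be seen in a small sketch. The function names `append_item` and `rebind` are hypothetical and chosen only for illustration.

```python
def append_item(items):
    """Mutate the list object that the caller passed in."""
    items.append(4)


def rebind(items):
    """Rebind the local name only; the caller's list is untouched."""
    items = [0]
    return items


data = [1, 2, 3]

append_item(data)
print(data)  # the caller sees the mutation

result = rebind(data)
print(data)    # unchanged by the rebinding inside the function
print(result)  # the new list created inside the function
```

The function receives a reference to the same object, so in-place mutation is visible to the caller, while assigning a new object to the parameter name affects only the local variable.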

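- Which parameter types do Python functions support: the several kinds of Python function parameters

weixin_39965283

The code below is all based on Python 3. A first look at Python functions: most common languages, such as C, Java, PHP, C#, and JavaScript, belong to the C family; Python is not one of them (nor is Ruby). Among these languages Python is something of a novelty. As far as functions go, it has no braces; a function is defined with the def keyword, followed by a colon and then an indented block on the following lines that marks the function's scope, and of course there are no semicolons. Being a function, it naturally has parameters; Pyth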

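- Python user-defined function parameters come in several types: an analysis of 5 common forms of user-defined functions in Python

weixin_39632728

A Python user-defined function starts with def, followed by a space and the function's name; after the name comes a pair of parentheses containing the parameter list; the closing parenthesis must be followed by a colon ":", and the function body must be properly indented. The general syntax of a Python user-defined function is: def function_name(parameter_list): body. Five common forms of Python user-defined functions: 1. Standard user-defined function: the parameter list is a standard tuple data type >>> def abvedu_add(x, y): pr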

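- A Closer Look at Python's shutil Module

上官美丽

tech sharing, python

Working with files and directories is a common need in Python programming, and the shutil module is a capable assistant dedicated to file and directory operations. This article explores the module's features in depth so that you can manage files with ease. What is the shutil module? shutil is part of the Python standard library and is mainly used for high-level handling of files and directories. It provides many useful functions, such as copying, moving, and deleting files, and can even create and extract archives. Whether you want to organize documents, back up data, or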

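- Practical Examples of Custom Ordering with the Django ORM

上官美丽

tech sharing, django, database, sqlite

When developing with Django, the ORM (object-relational mapping) is a very powerful tool. It lets us operate on the database directly from Python code without writing SQL. When we need to sort data, the Django ORM likewise offers rich functionality. Today we look at how to implement custom ordering in Django to help you manage and present data better. Understanding ordering in the Django ORM: the Django ORM provides the order_by() method, which lets us sort a queryset.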

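- Python for Loops Explained

红虾程序员

Python, programming languages, ide, python, pycharm

Contents: 1. Basic syntax. 2. Usage examples: 1. Iterating over a string 2. Iterating over a list 3. Iterating over a tuple 4. Iterating over a dictionary 5. Using the range() function 6. Using the enumerate() function 7. Nested loops 8. The break and continue statements 9. The else clause. 3. Advantages. 4. Disadvantages. In Python, the for loop is a statement for iterating over an iterable (such as a list, tuple, dictionary, set, string, or any object that implements the iteration protocol); it visits each element of the iterable in order and executes a group of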

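- Python: A Technical Guide to Getting Started with Blockchain

拾荒的小海螺

Python, python, blockchain, programming languages

1. Overview. Blockchain is a decentralized, tamper-resistant distributed ledger technology that first became widely known through Bitcoin. Today it has grown into a technology applicable to finance, supply chain management, smart contracts, and many other fields. This article briefly introduces the basic concepts and principles of blockchain and implements a simplified blockchain prototype in Python to help you get hands-on quickly. 2. Basic principles. A blockchain is a chained structure made up of multiple "blocks" linked together. Each block contains a number of transactions and points to the previous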
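The chained structure described above, where each block points to its predecessor through a cryptographic hash, can be sketched as follows. This is not the article's prototype (which is cut off); the block fields, the all-zero genesis hash, and the names `block_hash`, `add_block`, and `is_valid` are assumptions made for illustration.

```python
import hashlib
import json


def block_hash(block):
    """SHA-256 hex digest of a block's canonical JSON form."""
    payload = json.dumps(block, sort_keys=True).encode("utf-8")
    return hashlib.sha256(payload).hexdigest()


def add_block(chain, transactions):
    """Append a block that stores the hash of the previous block."""
    previous_hash = block_hash(chain[-1]) if chain else "0" * 64
    block = {
        "index": len(chain),
        "transactions": transactions,
        "previous_hash": previous_hash,
    }
    chain.append(block)
    return block


def is_valid(chain):
    """Check that every block still matches its predecessor's hash."""
    return all(
        chain[i]["previous_hash"] == block_hash(chain[i - 1])
        for i in range(1, len(chain))
    )


chain = []
add_block(chain, ["genesis"])
add_block(chain, ["alice pays bob 5"])
add_block(chain, ["bob pays carol 2"])
print(is_valid(chain))

# Tampering with an earlier block breaks the link to the next block.
chain[1]["transactions"] = ["alice pays bob 500"]
print(is_valid(chain))
```

The sketch omits proof of work, signatures, and networking; it only shows why editing an old block is detectable.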

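- An address book in Python with add contact, delete contact, edit contact, look up contact, view address book, and exit features

新手懒羊哥

python, programming languages

print('-'*25) # print 25 hyphens print('-'*25) print("Welcome to the address book") print("1. Add contact") print("2. View address book") print("3. Delete contact") print("4. Edit contact") print("5. Find contact") print("6. Exit") print('-'*25) list1=[0]*10 all_user=[] while True: choose=inpu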

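- Business News Trend Analysis with a Python Crawler: Data Scraping and In-Depth Analysis in Practice

Python爬虫项目

2025 hands-on crawler projects, python, web crawler, programming languages, media, games

As information technology and digitalization advance, business news has become an important source of information on industry developments, market changes, the competitive landscape, and more. For companies and investors, keeping up with business news not only supports strategic decisions but also gives insight into market trends and risks. Against this background, demand for business news analysis keeps growing. Collecting and analyzing business news data with a crawler saves time and cost and supports efficient, accurate trend forecasting and decision making. This post explains in detail how to scrape business news data with a Python crawler and carry out trend anal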

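- Scraping AI Training Data for the Financial Domain with Python in Practice (Complete Technical Walkthrough)

海拥✘

python, finance, artificial intelligence

Project background and requirements analysis. Scenario: to train a multimodal large language model (LLM) covering global financial markets, the following data must be collected in real time: listed-company announcements from 30+ major stock exchanges worldwide (NYSE, NASDAQ, LSE, TSE, etc.); corporate financial report PDFs and structured data; social media sentiment data (Twitter, StockTwits); news media analysis (Reuters, Bloomberg). Technical challenges: geo-blocking: some exchanges (such as Japan's TSE) allow only domestic IP addresses to access historical data. Dynamic anti-scraping: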

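- Video to Audio, Audio to Text

言之。

python, audio and video

Ubuntu 24 environment setup. # System-level dependencies: sudo apt update && sudo apt install -y ffmpeg python3-venv git build-essential python3-dev. # Python virtual environment: python3 -m venv ~/ai_summary; source ~/ai_summary/bin/activate. Core toolchain (tool, purpose, install command): Whisper, speech recognition, pip install ope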

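- Achieving Millions of Requests per Second with Python

weixin_33719619

python, networking, backend

This article is about achieving millions of requests per second with Python. Can Python issue a million requests per second? The question finally has a positive answer. Many companies abandon Python for other languages just to improve performance and cut server costs, but that is not necessary: Python is up to the job. The Python community has recently done a lot of performance work. The new dictionary implementation in CPython 3.6 improved the interpreter's overall performance. Thanks to a faster calling convention and dictionary lookup caching, CPython 3.7 will be faster still. For compute-intensive work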

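- Installing Python Libraries Offline, Explained

爱编程的喵喵

Python basics course, python, offline installation, requirements

Hello everyone, I am 爱编程的喵喵. I hold a master's degree, with both of my degrees from Project 985 universities, and currently work as a full-stack engineer who enjoys applying data thinking to work and life. I work on machine learning and the related front-end and back-end development, and have placed near the top several times in competitions run by Alibaba Cloud, iFLYTEK, CCF, and others. I am a CSDN blog expert and a featured creator in the artificial intelligence field. I like to summarize what I have learned by writing blog posts, which both deepens my own understanding and helps beginners get started quickly. This article explains how to install Python libraries offline, and I hope it will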

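- Argos Translate Open-Source Project Tutorial

经优英

Argos Translate open-source project tutorial. argos-translate: Open-source offline translation library written in Python. Project URL: https://gitcode.com/gh_mirrors/ar/argos-translate. Project introduction: Argos Translate is an open-source offline translation library written in Python. It uses OpenNMT for translation, Sent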

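- pytesseract, a Remarkably Powerful Python Library

大模型开发

python, programming languages

Hello everyone. Today I am sharing a remarkably powerful Python library: pytesseract. In today's digital era, text recognition plays an increasingly important role. The Python pytesseract library is a powerful tool that helps developers recognize text in images with little effort. This article looks closely at how pytesseract works, its features, how to use it, and practical application scenarios, with plenty of sample code to give readers a fuller picture of the library. What is the Python pytesseract library? Pyt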

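- A Scenic-Spot Ticketing Data System Based on Collaborative Filtering Recommendation (Python, Computer Science Graduation Project)

计算机程序设计(接毕设)

recommendation algorithms, machine learning, graduation project, python, artificial intelligence

Abstract. ABSTRACT. Chapter 1 Introduction: Research background and significance (Research background; Research significance); Current research at home and abroad (Smart tourism; Tourism big data); Research content; Chapter summary. Chapter 2 Overview of related technologies: Content-based recommendation algorithms (Principles; Implementation); Collaborative filtering recommendation algorithms (Principles; Implementation); The Spring Boot framework (Introduction to Spring Boot; Spring Boot features; How Spring Boot works); Vue.js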

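- March TIOBE Programming Language Ranking: Python Holds First Place, C++ and Java Gain Market Share Steadily

朱公子的Note

programming languages, python, c++, java, TIOBE programming language ranking

The TIOBE programming language ranking is an indicator based on the number of programmers, courses, and third-party vendors worldwide, intended to reflect the popularity of programming languages. According to the TIOBE Index, it is updated once a month and computed from search engine results (Google, Bing, Wikipedia, and others), reflecting the interests and needs of professional developers. Note that the TIOBE index does not identify the "best" programming language or the language with the most code written; it reflects how popular a language is in the developer community. The March 2025 ranking specifically mentions Py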

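- Several balls are moving on a surface at once: how do you keep them from overlapping, i.e. make them collide?

换个号韩国红果果

html, ball collision

I thought about it for a bit, fixed a lot of bugs, and finally got it working. The principle is actually simple. Each time a ball's x and y coordinates change, iterate over all the other balls in the DOM tree and check whether the distance between each of them and the current ball is less than twice the ball radius. If it is, then before the current ball (call it a) is next drawn, its direction must be reversed (call the ball it is about to hit b); in other words, as soon as the distance is less than twice the radius, reverse the current ball's direction immediately. The next draw then also draws b first and then a, and since a's direction has already changed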
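The rule described above (after moving a ball, compare its distance to every other ball against twice the radius and reverse its direction on contact) can be sketched outside the DOM as follows. The original works with HTML elements; here each ball is a plain dictionary with hypothetical keys `x`, `y`, `vx`, `vy`, and all balls are assumed to share one radius.

```python
import math


def is_colliding(ball_a, ball_b, radius):
    """Two equal-radius balls touch when their centers are closer than 2r."""
    distance = math.hypot(ball_a["x"] - ball_b["x"], ball_a["y"] - ball_b["y"])
    return distance < 2 * radius


def step(balls, radius):
    """Move every ball once; reverse a ball that now overlaps another."""
    for ball in balls:
        ball["x"] += ball["vx"]
        ball["y"] += ball["vy"]
        for other in balls:
            if other is not ball and is_colliding(ball, other, radius):
                # Reverse the current ball's direction immediately.
                ball["vx"], ball["vy"] = -ball["vx"], -ball["vy"]
                break


balls = [
    {"x": 0, "y": 0, "vx": 10, "vy": 0},
    {"x": 25, "y": 0, "vx": 0, "vy": 0},
]
step(balls, radius=10)
print(balls[0])
```

Simply reversing the velocity is the article's simplification; it is not a physically accurate elastic collision.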

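- Web Performance Optimization Notes Compiled after Reading "High Performance HTML5"

白糖_

html5

Impressions

First, my impressions of the book "High Performance HTML5". I feel that its first two chapters have nothing to do with the title, or rather that they cover only the "high performance" part, with HTML5 nowhere in sight. I think the author should have served up HTML5 as the main course first and then analyzed performance optimization; that would have been more engaging. Since I only read the online sample and had no chance to see the rest, I will not pass judgment on it.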

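- [JShop] Pitfalls in Using Spring MVC's RequestContextHolder

dinguangx

jeeshop, online store system, jshop, e-commerce system

In Spring MVC, to be able to obtain the current request object at any time, you can call RequestContextHolder's static method getRequestAttributes() to get request-related variables such as request and response. In jshop, RequestContextHolder's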

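- Algorithms: Time Complexity

周凡杨

java, algorithms, time complexity, efficiency

In computer science, the time complexity of an algorithm is a function that quantitatively describes the algorithm's running time. It is a function of the length of the string representing the algorithm's input. Time complexity is commonly expressed in big O notation, which excludes the function's lower-order terms and leading coefficient. Expressed this way, the time complexity is said to be asymptotic: it considers the behavior as the input size approaches infinity.

This notation, which uses a capital O() to express an algorithm's time complexity,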

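- Java Transaction Handling

g21121

java

1. What is a Java transaction? The common view is that transactions concern only databases. Transactions must obey the ACID principles defined by ISO/IEC. ACID stands for atomicity, consistency, isolation, and durability. Atomicity means that any failure during a transaction invalidates every modification the transaction made. Consistency means that when a transaction fails, all data affected by it should be restored to the state before the transaction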

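- The Linux awk Command Explained

510888780

linux

1. About AWK

awk is a programming language for processing text and data on Linux/Unix. The data can come from standard input, from one or more files, or from the output of other commands. It supports advanced features such as user-defined functions and dynamic regular expressions, and is a powerful programming tool on Linux/Unix. It can be used on the command line but is more often used in scripts.

How awk processes text and data: it scans the file line by line, from the first line to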

- android permission

布衣凌宇

Permission

<uses-permission android:name="android.permission.ACCESS_CHECKIN_PROPERTIES" ></uses-permission> Allows read/write access to the "properties" table in the checkin database; values that get uploaded can be changed

<uses-permission android:na

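- Oracle and Google's Java/Android Lawsuit to Be Postponed

aijuans

java, oracle

Beijing time, October 7: according to foreign media reports, the long-awaited trial between Oracle and Google may be postponed until after October 17. The case concerns the alleged Java patent dispute over the Android operating system. Judge William Alsup, who presides over the case, said that according to patent expert Florian Mueller's prediction, the Google-Oracle case is likely to be postponed. The second round of arguments in the case was scheduled for court on October 17; as things stand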

- Common Linux shell commands

antlove

linux, shell, command

grep [options] [regex] [files]

/var/root # grep -n "o" *

hello.c:1:/* This C source can be compiled with:

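- Configuring a Database Connection by Parsing XML in Java (DOM and SAX)

百合不是茶

SAX, parsing XML documents in Java, DOM, configuring database connections with XML

Configuring a database connection through an XML file is really a simple problem. The reason I am only writing it up now is that yesterday I read someone else's version online and got so lost in it that I could not find my way out, so today I decided to finish it myself. The code and the approach are posted here for everyone to learn from.

Blog posts on the main techniques for configuring a database connection with XML:

JDBC programming: connecting to a database with JDBC

Parsing XML with DOM: parsing XML files with DOM

SA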

- Learning underscore.js (2)

bijian1013

JavaScript, underscore

Array Functions: all array functions also work on the arguments object. 1. first: _.first(array, [n]). Aliases: head, take. Returns the first element of array; if the parameter n is set,

- An Introduction to PL/SQL

bijian1013

oracle, database, plsql

/*

* PL/SQL programming study notes

* Studying "plSql介绍.pdf"

* Date: 2010-10-05

*/

-- Create the DEPT table

create table DEPT

(

DEPTNO NUMBER(10),

DNAME NVARCHAR2(255),

LOC NVARCHAR2(255)

)

delete dept;

select

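- [Nginx 1] Nginx Installation and General Overview

bit1129

nginx

Starting, stopping, and reloading Nginx

nginx starts the Nginx server; no arguments are needed

nginx -s stop shuts the Nginx server down quickly (forcibly)

nginx -s quit shuts the Nginx server down gracefully

nginx -s reload reloads the Nginx server's configuration file

nginx -s reopen reopens the Nginx log files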

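- A Strange Browser Compatibility Problem in Spring MVC Development

bitray

jquery, Ajax, spring MVC, browser, file upload

Recently I have been building a small OA project on my own, mostly as a refresher. The main technologies are Spring MVC as the front-end framework and MyBatis for database persistence. The front end uses jQuery and some jQuery plugins.

Partway through development I realized I had overlooked a small problem: the whole project had been tested only in Firefox and never in IE, so I was not sure whether compatibility problems would appear. Because jquer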

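- List of Functions in Lua's io Library

ronin47

lua io

1. Calling through the io table: with the io table, io.open returns a descriptor for the specified file, and all operations then revolve around that file descriptor

The io table also provides three predefined file descriptors: io.stdin, io.stdout, and io.stderr

2. Calling directly on a file handle, that is, operating through functions of the form file:XXX(), where file is the file handle returned by io.open()

Most I/O functions return nil plus an error message on failure; some functions return nil on success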

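- java-26: Left-Rotating a String

bylijinnan

java

public class LeftRotateString {

/**

* Q 26 Left-rotating a string

* Problem: define the left rotation of a string as moving a number of characters from the front of the string to its end.

* For example, left-rotating the string abcdef by 2 positions gives the string cdefab.

* Implement a function that left-rotates a string. For a string of length n, the time complexity must be O(n) and the auxiliary memory O(1).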

*/

pu
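Since the Java solution is cut off, here is a sketch of one well-known way to meet the stated O(n) time bound, the three-reversal technique, written in Python. The function names are hypothetical. One caveat is stated loudly: Python strings are immutable, so this version copies the text into a list first; the rotation on that list is in place, but the copy itself takes O(n) memory, unlike a char array in Java.

```python
def reverse_range(chars, start, end):
    """Reverse chars[start..end] in place (both ends inclusive)."""
    while start < end:
        chars[start], chars[end] = chars[end], chars[start]
        start += 1
        end -= 1


def left_rotate(text, k):
    """Move the first k characters of text to its end."""
    chars = list(text)  # copy needed because str is immutable
    n = len(chars)
    if n == 0:
        return text
    k %= n
    reverse_range(chars, 0, k - 1)   # reverse the first k characters
    reverse_range(chars, k, n - 1)   # reverse the remaining characters
    reverse_range(chars, 0, n - 1)   # reverse the whole sequence
    return "".join(chars)


print(left_rotate("abcdef", 2))
```

Each character is swapped at most twice, so the running time is linear in the length of the string.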

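- "The Art of Substitution in vi": Linux Commands in Five Minutes, Part 11

cfyme

linux commands

I was not sure where to file vi material, so it goes into the "Linux Commands in Five Minutes" series.

While programming today on a small stack example, I needed to replace "S." with "S->" (the replacement does not include the double quotes).

This is not hard, but I felt it was time to summarize substitution techniques in vi for future reference.

1. All substitution commands are typed in colon (":") command mode.

2. To replace abc with xyz, do this:

:s/abc/xyz/

However, pay special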

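- [Orbits and Computation] A New Parallel Computing Architecture

comsci

parallel computing

While running loop-feedback experiments on a workflow engine, I noticed something interesting. If we embed a bidirectional loop code segment in every node of a flowchart, and the whole flow is full of parallel routes, each of which contains some parallel nodes, then once the flowchart begins its loop-feedback process, does its execution become a parallel computing architecture?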

- Running a Piece of Code Repeatedly

dai_lm

android

A Handler is all you need

private Handler handler = new Handler();

private Runnable runnable = new Runnable() {

public void run() {

update();

handler.postDelayed(this, 5000);

}

};

Start the timer

h

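- Implementing a Stack in Java (List-Based)

datageek

data structures: stack

public interface IStack<T> {

// Pop the top element and return it

public T pop();

// Push an element onto the stack

public void push(T element);

// Get the top element without removing it

public T peek();

// Check whether the stack is empty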

public boolean isEmpty
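The interface above is cut off before any implementation appears. As a rough illustration only, here is a list-backed stack in Python that offers the same four operations; the class name `ListStack` is hypothetical, and raising `IndexError` on an empty stack is an assumption, since the original does not say how that case is handled.

```python
class ListStack:
    """A stack backed by a Python list; the end of the list is the top."""

    def __init__(self):
        self._items = []

    def push(self, element):
        """Push an element onto the stack."""
        self._items.append(element)

    def pop(self):
        """Remove and return the top element."""
        if not self._items:
            raise IndexError("pop from empty stack")
        return self._items.pop()

    def peek(self):
        """Return the top element without removing it."""
        if not self._items:
            raise IndexError("peek at empty stack")
        return self._items[-1]

    def is_empty(self):
        """Return True when the stack holds no elements."""
        return not self._items


stack = ListStack()
stack.push("a")
stack.push("b")
print(stack.peek(), stack.pop(), stack.pop(), stack.is_empty())
```

Appending to and popping from the end of a list are both amortized constant time, which is why the end of the list serves as the top of the stack.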

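- Four Ways to Back Up a MySQL Database and the Problems You May Run Into

dcj3sjt126com

DB, backup

1. The front end is unreachable after backing up with software such as 备份王?

Backing up with software such as 备份王 is the choice of most veteran webmasters. The method is quick and convenient: upload the backup software to your hosting space and follow the steps. Many users new to 备份王, however, hit a problem after restoring: the database user names and passwords on the old and new hosts differ, and the connection file was not updated after the site files were packed and moved. The database restores fine, but the front end reports a database connection error and the site will not open.

Solution: learn to edit the site's configuration file, which is mostly co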

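- GitHub Webhooks: [1] Testing Whether a Hook Fired Successfully

dcj3sjt126com

github, git, webhook

Reposted from: http://jingyan.baidu.com/article/5d6edee228c88899ebdeec47.html

Like svn, GitHub has hooks, and they are more powerful. For example, I set up the most common kind, a hook triggered by a push; the record of each hook run after every update can be seen in the GitHub dashboard.

Tools/Materials

github

Method/Steps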

- The Role of <base href="<%=basePath%>"> in JSP

蕃薯耀

The role of <base href="<%=basePath%>"> in JSP

- Installing and Configuring the SAMBA Service on Linux

hanqunfeng

linux

A file sharing service for use on a local area network.

1. Installed packages:

rpm -qa | grep samba

samba-3.6.9-151.el6.x86_64

samba-common-3.6.9-151.el6.x86_64

samba-winbind-3.6.9-151.el6.x86_64

samba-client-3.6.9-151.el6.x86_64

samba-winbind-clients

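- guava cache

IXHONG

cache

Caching is an indispensable way of solving performance problems in everyday development. Put simply, a cache is a block of memory set aside to improve system performance.

The main job of a cache is to hold the results of the business system's data processing in memory temporarily, ready for the next access. In many everyday situations, whether because of limited disk I/O performance or because our own system's data processing and retrieval can be very slow, when we find that the system receives a large volume of requests for such data, frequent I/O and frequent processing cause disk and CPU resources to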

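- Where jQuery Begins: Global Variables, noConflict, and Initialization Compatible with Various JS Environments

kvhur

JavaScript, jquery, css

This is the very beginning of the jQuery code. It contains the handling for different JS environments, such as the plain environment, Node.js, and RequireJS, plus a detailed look at the code that creates the $ and jQuery global variables and at the noConflict code. Full resource:

http://www.gbtags.com/gb/share/5640.htm jQuery source: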

(

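- American Welfare and Chinese Savings

nannan408

Today I read an article about welfare in the United States that left a deep impression on me.

Free hospital admission in American hospitals really is a good measure. A small improvement gives society a great deal of confidence. For children, taxes, and so on, the government pays out rather than collects, which truly reflects humanist values.

Will such a high level of social security in the United States make people lazy? The answer is no. Precisely because the government has removed these worries, people can devote all their energy to more creative work that benefits society more. This has even become American society's thought, people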

- Computing an Nth-Order Determinant (Java)

qiuwanchi

Nth-order determinant computation

package gaodai;

import java.util.List;

/**

* Nth-order determinant computation

* @author 邱万迟

*

*/

public class DeterminantCalculation {

public DeterminantCalculation(List<List<Double>> determina

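- C Algorithms: The Fishing and Net-Drying Problem

qiufeihu

c, algorithms

Starting on January 1, 2011, a fisherman fishes for three days and then dries his nets for two days, over and over. Write a program that, given any date after January 1, 2011, outputs whether the fisherman is fishing or drying his nets on that day.

The code is as follows:

#include <stdio.h>

int leap(int a) /* user-defined function leap() determines whether the given year is a leap year */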

{

if((a%4 == 0 && a%100 != 0
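The original C solution counts days by hand with a leap-year helper and is cut off here. As an alternative sketch in Python, the standard `datetime` module can do the day counting. The function name `fishing_or_drying` is hypothetical, and the reading that each 5-day cycle is three days of fishing followed by two days of drying nets, starting on 2011-01-01, is an interpretation of the problem statement.

```python
from datetime import date

START = date(2011, 1, 1)  # first day of fishing, per the problem statement


def fishing_or_drying(day):
    """Return what the fisherman does on the given date."""
    if day < START:
        raise ValueError("date must not be before 2011-01-01")
    # Days 0-2 of each 5-day cycle are fishing, days 3-4 are drying nets.
    offset = (day - START).days % 5
    return "fishing" if offset < 3 else "drying nets"


print(fishing_or_drying(date(2011, 1, 4)))
```

Subtracting two `date` objects accounts for leap years automatically, which replaces the hand-written `leap()` check in the C version.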

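- Parsing the DOCTYPE Field in XML

wyzuomumu

xml

A DTD declaration always begins with !DOCTYPE, followed by a space and the name of the document's root element. For an internal DTD, another space is followed by [], and the brackets contain the document type definition. External DTDs are divided into private and public DTDs. A private DTD is marked with SYSTEM, followed by the URL of the external DTD. A public DTD uses PUBLIC, followed by the DTD's public name and then the DTD's URL.

Private DTD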

<!DOCTYPErootSYST

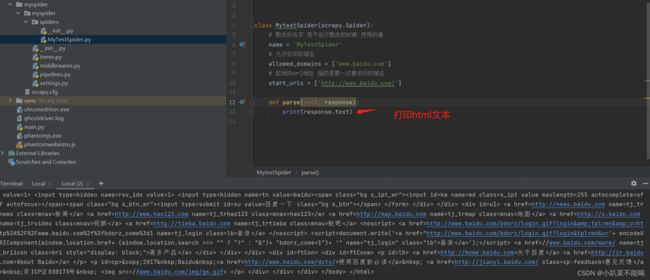

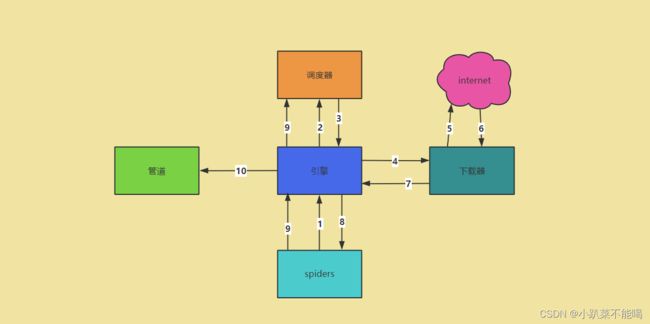

scrapy爬虫案例

scrapy爬虫案例