code

#include "ggml.h"

#include <cassert>

#include <cstdint>

#include <cstdio>

#include <cstdlib>

#include <cstring>

#include <random>

#include <vector>

#define MAX_NARGS 2

#if defined(__GNUC__)

#pragma GCC diagnostic ignored "-Wdouble-promotion"

#endif

//

// logging

//

#define GGML_DEBUG 0

#if (GGML_DEBUG >= 1)

#define GGML_PRINT_DEBUG(...) printf(__VA_ARGS__)

#else

#define GGML_PRINT_DEBUG(...)

#endif

#if (GGML_DEBUG >= 5)

#define GGML_PRINT_DEBUG_5(...) printf(__VA_ARGS__)

#else

#define GGML_PRINT_DEBUG_5(...)

#endif

#if (GGML_DEBUG >= 10)

#define GGML_PRINT_DEBUG_10(...) printf(__VA_ARGS__)

#else

#define GGML_PRINT_DEBUG_10(...)

#endif

#define GGML_PRINT(...) printf(__VA_ARGS__)

float frand(void) {

return (float)rand()/(float)RAND_MAX;

}

int irand(int n) {

return rand()%n;

}

void get_random_dims(int64_t * dims, int ndims) {

dims[0] = dims[1] = dims[2] = dims[3] = 1;

for (int i = 0; i < ndims; i++) {

dims[i] = 1 + irand(4);

}

}

void get_random_dims_minmax(int64_t * dims, int ndims, int min, int max) {

dims[0] = dims[1] = dims[2] = dims[3] = 1;

for (int i = 0; i < ndims; i++) {

dims[i] = min + irand(max-min);

}

}

struct ggml_tensor * get_random_tensor(

struct ggml_context * ctx0,

int ndims,

int64_t ne[],

float fmin,

float fmax) {

struct ggml_tensor * result = ggml_new_tensor(ctx0, GGML_TYPE_F32, ndims, ne);

switch (ndims) {

case 1:

for (int i0 = 0; i0 < ne[0]; i0++) {

((float *)result->data)[i0] = frand()*(fmax - fmin) + fmin;

}

break;

case 2:

for (int i1 = 0; i1 < ne[1]; i1++) {

for (int i0 = 0; i0 < ne[0]; i0++) {

((float *)result->data)[i1*ne[0] + i0] = frand()*(fmax - fmin) + fmin;

}

}

break;

case 3:

for (int i2 = 0; i2 < ne[2]; i2++) {

for (int i1 = 0; i1 < ne[1]; i1++) {

for (int i0 = 0; i0 < ne[0]; i0++) {

((float *)result->data)[i2*ne[1]*ne[0] + i1*ne[0] + i0] = frand()*(fmax - fmin) + fmin;

}

}

}

break;

case 4:

for (int i3 = 0; i3 < ne[3]; i3++) {

for (int i2 = 0; i2 < ne[2]; i2++) {

for (int i1 = 0; i1 < ne[1]; i1++) {

for (int i0 = 0; i0 < ne[0]; i0++) {

((float *)result->data)[i3*ne[2]*ne[1]*ne[0] + i2*ne[1]*ne[0] + i1*ne[0] + i0] = frand()*(fmax - fmin) + fmin;

}

}

}

}

break;

default:

assert(false);

};

return result;

}

float get_element(const struct ggml_tensor * t, int idx) {

return ((float *)t->data)[idx];

}

void set_element(struct ggml_tensor * t, int idx, float value) {

((float *)t->data)[idx] = value;

}

float run(int val){

return 0.1f;

}

struct ggml_init_params params = {

/* .mem_size = */ 1024*1024*10, // memory pool size: 10 MB

/* .mem_buffer = */ NULL, // no caller-provided buffer; ggml allocates the pool itself

/* .no_alloc = */ false, // allow tensor data to be allocated

};

struct ggml_context * ctx = ggml_init(params); // create the ggml_context

int64_t ne1[4] = {2, 512, 1, 1};

int64_t ne2[4] = {512, 256, 1, 1};

int64_t ne3[4] = {1, 256, 1, 1};

int64_t ne4[4] = {256, 3, 1, 1};

int64_t ne5[4] = {3, 1, 1, 1};

int64_t mid_w[4] = {256, 256, 1, 1};

int64_t mid_b[4] = {256, 1, 1, 1};

struct res {ggml_cgraph ge;ggml_tensor * e;ggml_tensor * output;ggml_tensor * intput;ggml_tensor * target;};

res hf_getcompute_graph(){

struct ggml_tensor * X = ggml_new_tensor_1d(ctx, GGML_TYPE_F32, 2);// struct ggml_tensor * X = get_random_tensor(ctx, 2, ne1, -1, +1); // create a random 2-D tensor

struct ggml_tensor * target = ggml_new_tensor_1d(ctx, GGML_TYPE_F32, 3);

struct ggml_tensor * proj = get_random_tensor(ctx, 2, ne1, -1, +1);

struct ggml_tensor * fc1_weight = get_random_tensor(ctx, 2, ne2, -1, +1);

struct ggml_tensor * fc1_bias = get_random_tensor(ctx, 2, ne3, -1, +1);

struct ggml_tensor * fcout_weight = get_random_tensor(ctx, 2, ne4, -1, +1);

struct ggml_tensor * fcout_bias = get_random_tensor(ctx, 2, ne5, -1, +1);

struct ggml_tensor * fc3_weight = get_random_tensor(ctx, 2, mid_w, -1, +1);

struct ggml_tensor * fc3_bias = get_random_tensor(ctx, 2, mid_b, -1, +1);

struct ggml_tensor * fc4_weight = get_random_tensor(ctx, 2, mid_w, -1, +1);

struct ggml_tensor * fc4_bias = get_random_tensor(ctx, 2, mid_b, -1, +1);

struct ggml_tensor * fc5_weight = get_random_tensor(ctx, 2, mid_w, -1, +1);

struct ggml_tensor * fc5_bias = get_random_tensor(ctx, 2, mid_b, -1, +1);

ggml_set_param(ctx, fcout_weight); ggml_set_param(ctx, fcout_bias); ggml_set_param(ctx, fc3_weight); ggml_set_param(ctx, fc3_bias);

ggml_set_param(ctx, fc4_weight); ggml_set_param(ctx, fc4_bias); ggml_set_param(ctx, fc5_weight); ggml_set_param(ctx, fc5_bias);

ggml_tensor * proj_of_x = ggml_mul_mat(ctx, proj,X); // 512*1

ggml_tensor * fc1 = ggml_add(ctx, ggml_mul_mat(ctx, proj_of_x,fc1_weight), fc1_bias); // 1*256

ggml_tensor * fc1_ = ggml_view_2d(ctx,fc1,256,1,4*256,0);//ggml_transpose(ctx,fc1); // 256*1

// ggml_tensor * fc2 = ggml_mul_mat(ctx,fc3_weight,fc1_); // 256*1 ggml_tensor * fc2 = ggml_mul_mat(ctx,fc1_,fc3_weight); // 256*1 & 256*256 =>1*256

ggml_tensor * fc3 = ggml_add(ctx, ggml_mul_mat(ctx, fc3_weight, ggml_relu(ctx, fc1_)), fc3_bias);

ggml_tensor * fc4 = ggml_add(ctx, ggml_mul_mat(ctx, fc4_weight, ggml_relu(ctx, fc3)), fc4_bias);

ggml_tensor * fc5 = ggml_add(ctx, ggml_mul_mat(ctx, fc5_weight, ggml_relu(ctx, fc4)), fc5_bias);

ggml_tensor * fcout = ggml_add(ctx, ggml_mul_mat(ctx, fcout_weight, ggml_relu(ctx, fc5)), fcout_bias);// 3*1

ggml_tensor * fcout_ = ggml_sub(ctx,fcout,target);

struct ggml_tensor * e = ggml_sum(ctx, ggml_sqr(ctx, fcout_) ); // e = sum of squares of fcout_ (squared-error loss)

struct ggml_cgraph ge = ggml_build_forward(e); // build the forward compute graph ge

return {ge, e, fcout, X,target};

}

void hf_out(ggml_tensor * output){

printf("f = %f\n", ggml_get_f32_1d(output, 0));// cout<< "res "<<((float *)(fc2->data))[0];

printf("f = %f\n", ggml_get_f32_1d(output, 1));

printf("f = %f\n", ggml_get_f32_1d(output, 2));

}

void hf_free(){

ggml_free(ctx); // free the context memory

}

void hf_set_data_random(ggml_tensor * input,ggml_tensor * target){

std::random_device rd; // seed source

std::mt19937 gen(rd()); // seed the random number generator

std::uniform_real_distribution<float> dis(0.0f, 1.0f); // uniform distribution over [0.0, 1.0)

std::vector<float> digit(512); // vector of 512 elements

for (int i = 0; i < digit.size(); i++) {

digit[i] = dis(gen); // fill each element with a random sample

}

memcpy(input->data, digit.data(), ggml_nbytes(input));

digit = {100.3f,200.5f,100.1f};

memcpy(target->data, digit.data(), ggml_nbytes(target));

}

void hf_set_data(ggml_tensor * input,ggml_tensor * target,std::vector<float>& v_in ,std::vector<float>& v_out){

memcpy(input->data, v_in.data(), ggml_nbytes(input));

memcpy(target->data, v_out.data(), ggml_nbytes(target));

}

res a = hf_getcompute_graph();

#ifdef __cplusplus

extern "C" {

#endif

struct rgb{ float r; float g; float b;};

float hf_play_test(int istrain) {

ggml_cgraph ge = a.ge;

ggml_tensor * e = a.e;

ggml_tensor * output = a.output;

ggml_tensor * input = a.intput;

ggml_tensor * target = a.target;

hf_set_data_random(input,target);

if(istrain == 1){

struct ggml_opt_params opt_params = ggml_opt_default_params(GGML_OPT_ADAM); // default optimizer parameters (Adam)

ggml_opt(ctx, opt_params, e); // minimize e with the given optimizer parameters

ggml_graph_reset(&ge); // reset the compute graph

ggml_graph_compute_with_ctx(ctx, &ge, /*n_threads*/ 1); // evaluate the compute graph using ctx

}else{

ggml_graph_reset(&ge); // reset the compute graph

ggml_graph_compute_with_ctx(ctx, &ge, /*n_threads*/ 1); // evaluate the compute graph using ctx

}

hf_out(output);

float fe = ggml_get_f32_1d(e, 0); // read the scalar value of e

printf("%s: e = %.4f\n", __func__, fe); // print e

return fe;

}

rgb hf_play_set_val(int istrain,std::vector<float>& v_in ,std::vector<float>& v_out) {

//res a = hf_getcompute_graph();

ggml_cgraph ge = a.ge;

ggml_tensor * e = a.e;

ggml_tensor * output = a.output;

ggml_tensor * input = a.intput;

ggml_tensor * target = a.target;

hf_set_data(input,target,v_in,v_out);

if(istrain == 1){

struct ggml_opt_params opt_params = ggml_opt_default_params(GGML_OPT_ADAM); // default optimizer parameters (Adam)

ggml_opt(ctx, opt_params, e); // minimize e with the given optimizer parameters

ggml_graph_reset(&ge); // reset the compute graph

ggml_graph_compute_with_ctx(ctx, &ge, /*n_threads*/ 1); // evaluate the compute graph using ctx

}else{

ggml_graph_reset(&ge); // reset the compute graph

ggml_graph_compute_with_ctx(ctx, &ge, /*n_threads*/ 1); // evaluate the compute graph using ctx

}

hf_out(output);

float fe = ggml_get_f32_1d(e, 0); // read the scalar value of e

printf("%s: e = %.4f\n", __func__, fe); // print e

return {ggml_get_f32_1d(output, 0),ggml_get_f32_1d(output, 1),ggml_get_f32_1d(output, 2)};

}

#ifdef __cplusplus

}

#endif

int main(void) {

// hf_play_test(0);

// hf_play_test(1);

std::vector<float> v_in; std::vector<float> v_out;

v_in={1.0f,1.0f}; v_out = {100.3f,200.5f,100.1f};

hf_play_set_val(0,v_in,v_out);

hf_play_set_val(1,v_in,v_out);

hf_play_set_val(0,v_in,v_out);

return 0;

}
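For readers who do not know ggml, the arithmetic that each `ggml_add(ggml_mul_mat(W, ggml_relu(x)), b)` step and the final `ggml_sum(ggml_sqr(...))` express can be sketched in plain C++. This is only an illustration and not the ggml implementation. The helper names `dense`, `relu` and `squared_error` are made up for this sketch, and the row-major weight layout is an assumption.

```cpp
#include <cstddef>
#include <vector>

// Hypothetical helper: y = W * x + b, with W stored row-major (b.size() rows, x.size() columns).
std::vector<float> dense(const std::vector<float>& W,
                         const std::vector<float>& b,
                         const std::vector<float>& x) {
    const std::size_t rows = b.size();
    const std::size_t cols = x.size();
    std::vector<float> y(rows);
    for (std::size_t r = 0; r < rows; r++) {
        float acc = b[r];
        for (std::size_t c = 0; c < cols; c++) {
            acc += W[r * cols + c] * x[c];
        }
        y[r] = acc;
    }
    return y;
}

// Hypothetical helper: element-wise max(0, v).
std::vector<float> relu(std::vector<float> v) {
    for (float& e : v) {
        if (e < 0.0f) e = 0.0f;
    }
    return v;
}

// Hypothetical helper: sum of squared differences, the quantity stored in tensor e.
float squared_error(const std::vector<float>& out, const std::vector<float>& target) {
    float e = 0.0f;
    for (std::size_t i = 0; i < out.size(); i++) {
        const float d = out[i] - target[i];
        e += d * d;
    }
    return e;
}
```

Chaining `dense` and `relu` once per layer and ending with `squared_error` mirrors the structure of the graph built in `hf_getcompute_graph`, at toy sizes.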

Training a neural network in the browser

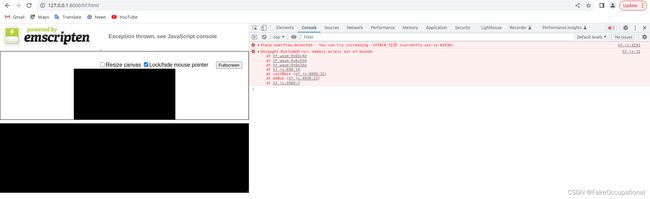

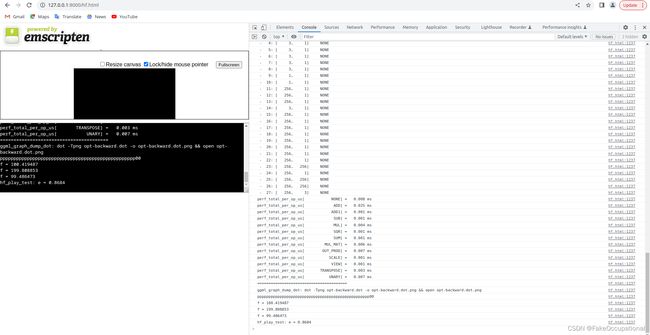

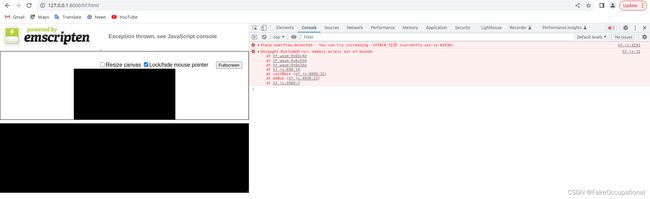

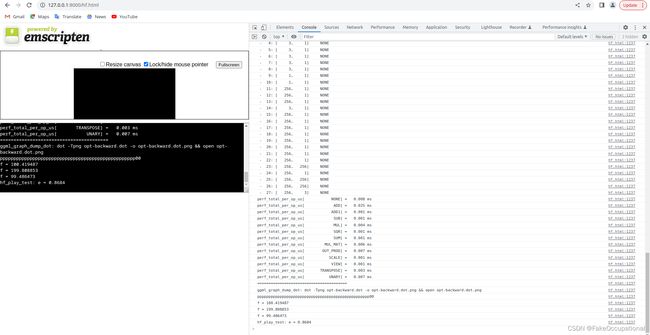

emcc -I~/***/76/ ~/***/76/ggml.c ~/leetcode/76/main.cpp -o ~/leetcode/76/hf.js -s EXPORTED_FUNCTIONS='["_hf_play_test","_hf_play_set_val","_malloc","_free"]' -s EXPORTED_RUNTIME_METHODS='["ccall"]' -s ALLOW_MEMORY_GROWTH=1

- Fix

emcc -I~/***/76/ ~/***/76/ggml.c ~/leetcode/76/main.cpp -o ~/leetcode/76/hf.html -s EXPORTED_FUNCTIONS='["_hf_play_set_val","_hf_play_test","_malloc","_free"]' -s EXPORTED_RUNTIME_METHODS='["ccall"]' -s STACK_SIZE=955360
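The exported `hf_play_set_val` takes `std::vector` references, which JavaScript cannot construct directly through `ccall`. One possible workaround is a thin wrapper whose signature uses only pointers and integers. The sketch below is an assumption, not part of the original code: `hf_predict_raw` is a hypothetical name, and `fake_forward` is a placeholder for the real graph evaluation.

```cpp
#include <cstddef>
#include <vector>

// Hypothetical stand-in for the real ggml forward pass: it returns three
// values derived from the two inputs so the sketch runs on its own.
static std::vector<float> fake_forward(const std::vector<float>& in) {
    return {in[0] + in[1], in[0] - in[1], in[0] * in[1]};
}

extern "C" {

// Hypothetical C-compatible entry point: only pointers and ints cross the
// boundary, so a caller can pass buffers obtained from malloc.
// Returns 0 on success, -1 on invalid arguments.
int hf_predict_raw(const float* in, int n_in, float* out, int n_out) {
    if (in == nullptr || out == nullptr || n_in != 2 || n_out != 3) {
        return -1;
    }
    std::vector<float> v_in(in, in + n_in);
    std::vector<float> v_out = fake_forward(v_in);
    for (int i = 0; i < n_out; i++) {
        out[i] = v_out[i];
    }
    return 0;
}

} // extern "C"
```

To call such a wrapper from the page, its name would need to be added to `EXPORTED_FUNCTIONS` as `_hf_predict_raw`, next to the `_malloc` and `_free` entries the commands above already export.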

CG

- More ways to train networks: an extreme training technique for neural networks: gradient checkpointing

ggml_tensor * fc2 = ggml_mul_mat(ctx,fc1_,fc3_weight); // 256*1 & 256*256 => 1*256

- ‘hf_play_set_val’ has C-linkage specified, but returns user-defined type ‘vector’ which is incompatible with C [-Wreturn-type-c-linkage]

- https://github.com/cython/cython/issues/1839

- https://stackoverflow.com/questions/22901697/error-in-c-code-linkage-warning-c4190-type-has-c-linkage-specified-but-retu
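The warning above appears when a function declared `extern "C"` returns a C++ class type such as `std::vector`. A common way around it, and the one the `rgb` struct in the listing follows, is to return a plain struct of floats and keep the vector inside an ordinary C++ helper. The names `rgb_result`, `compute_rgb` and `hf_rgb_sketch` below are hypothetical, and the arithmetic is a placeholder for the real network.

```cpp
#include <vector>

// Plain-old-data struct: its layout is valid in C, so returning it from an
// extern "C" function does not trigger -Wreturn-type-c-linkage.
struct rgb_result { float r; float g; float b; };

// Ordinary C++ helper (C++ linkage), free to use std::vector internally.
static std::vector<float> compute_rgb(float x, float y) {
    return {x, y, x + y};  // placeholder arithmetic, not the real network
}

// Hypothetical exported function: only the POD struct crosses the C boundary.
extern "C" rgb_result hf_rgb_sketch(float x, float y) {
    std::vector<float> v = compute_rgb(x, y);
    return rgb_result{v[0], v[1], v[2]};
}
```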