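Step 62 Deep Learning Image Recognition: Multi-class Modeling (PyTorch)

Demonstrated on 64-bit Windows 10

I. Introduction

In the previous installment, we built a multi-class image recognition model in a TensorFlow environment.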

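This time we use a dataset of four groups (healthy, pulmonary tuberculosis, COVID-19, and bacterial/viral pneumonia) and build a multi-class SqueezeNet model in PyTorch. SqueezeNet was chosen because it is a lightweight network that trains quickly.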
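SqueezeNet is fast mainly because it is small: each Fire module first squeezes the channels with 1×1 convolutions and then expands them with a mix of 1×1 and 3×3 filters. The arithmetic below is a sketch comparing one Fire module with 64 input channels, 16 squeeze filters and 64 + 64 expand filters (assumed here to match the first Fire module of torchvision's SqueezeNet 1.1) against a plain 3×3 convolution with the same 64 input and 128 output channels. The helpers `conv_params` and `fire_params` are hypothetical.

```python
def conv_params(in_ch, out_ch, k):
    """Weights plus biases of a 2-D convolution with a k×k kernel (hypothetical helper)."""
    return in_ch * out_ch * k * k + out_ch


def fire_params(in_ch, squeeze, expand1x1, expand3x3):
    """Parameters of a Fire module: 1×1 squeeze, then parallel 1×1 and 3×3 expands."""
    return (conv_params(in_ch, squeeze, 1)
            + conv_params(squeeze, expand1x1, 1)
            + conv_params(squeeze, expand3x3, 3))


fire = fire_params(64, 16, 64, 64)   # outputs 64 + 64 = 128 channels
plain = conv_params(64, 128, 3)      # plain 3×3 conv producing the same 128 channels
print(f"Fire module: {fire} parameters, plain 3x3 conv: {plain} parameters, "
      f"ratio {plain / fire:.1f}x")
```

This gives 11,408 versus 73,856 parameters, roughly 6.5 times fewer for the same output width.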

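As before, the code was written with the help of GPT-4; the rewriting process is not shown this time.

II. Multi-class Modeling in Practice

The dataset consists of chest X-rays, and the task is to distinguish four classes: 900 images of healthy people, 700 of pulmonary tuberculosis patients, 549 of COVID-19 patients, and 900 of bacterial/viral pneumonia patients, with each class stored in its own folder.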
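These class counts also determine the split sizes used in the code, which keeps 70% of the images for training. The sketch below works the numbers out; the dictionary keys are descriptive labels only, since the actual sub-folder names in `./MTB-1` are not given here.

```python
# Image counts per class, as listed above (keys are descriptive, not the real folder names)
counts = {"Healthy": 900, "Tuberculosis": 700, "COVID-19": 549, "Pneumonia": 900}

total = sum(counts.values())
train_size = int(0.7 * total)     # same rule as the loading code: 70% for training
val_size = total - train_size     # the rest for validation

print(f"total={total}, train={train_size}, val={val_size}")
for name, n in counts.items():
    # Share of each class; a random (non-stratified) split keeps these only approximately
    print(f"{name:>12}: {n:4d} images ({n / total:.1%})")
```

So 3,049 images give 2,134 for training and 915 for validation, and COVID-19 is the smallest class at about 18% of the data.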

(a) The code

```python
###################################### Import packages ###################################
import copy
import os
import warnings

import matplotlib.pyplot as plt
import numpy as np
import torch
from torch import nn, optim
from torch.optim import lr_scheduler
from torch.utils.data import DataLoader
from torchvision import datasets, models, transforms

warnings.filterwarnings("ignore")

# SimHei lets matplotlib render Chinese labels; unicode_minus keeps minus signs readable with it
plt.rcParams['font.sans-serif'] = ['SimHei']
plt.rcParams['axes.unicode_minus'] = False

# Use the GPU if one is available
device = torch.device("cuda:0" if torch.cuda.is_available() else "cpu")
```

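```python
################################ Load the dataset #####################################
import torch
from torch.utils.data import DataLoader, Subset
from torchvision import datasets, transforms

# Dataset path: one sub-folder per class
data_dir = "./MTB-1"

# Image size
img_height = 100
img_width = 100

# Preprocessing: random augmentation for training, deterministic resizing for validation
data_transforms = {
    'train': transforms.Compose([
        transforms.RandomResizedCrop(img_height),
        transforms.RandomHorizontalFlip(),
        transforms.RandomVerticalFlip(),
        # torchvision's RandomRotation takes degrees, so RandomRotation(0.2) would rotate by at most 0.2°.
        # The value 0.2 looks carried over from Keras' RandomRotation(0.2), i.e. ±0.2 × 360° = ±72°.
        transforms.RandomRotation(72),
        transforms.ToTensor(),
        transforms.Normalize([0.485, 0.456, 0.406], [0.229, 0.224, 0.225])
    ]),
    'val': transforms.Compose([
        transforms.Resize((img_height, img_width)),
        transforms.ToTensor(),
        transforms.Normalize([0.485, 0.456, 0.406], [0.229, 0.224, 0.225])
    ]),
}

# Read the file list once to get the size and the class names
full_dataset = datasets.ImageFolder(data_dir)
full_size = len(full_dataset)
train_size = int(0.7 * full_size)  # 70% for training
val_size = full_size - train_size  # the remaining 30% for validation

# Random split with a fixed seed so the result is reproducible
generator = torch.Generator().manual_seed(0)
indices = torch.randperm(full_size, generator=generator).tolist()
train_indices, val_indices = indices[:train_size], indices[train_size:]

# Two ImageFolder views of the same files, each with its own transform. Subsets from
# random_split share one underlying dataset, so setting train_dataset.dataset.transform
# would also augment the validation images.
train_dataset = Subset(datasets.ImageFolder(data_dir, transform=data_transforms['train']), train_indices)
val_dataset = Subset(datasets.ImageFolder(data_dir, transform=data_transforms['val']), val_indices)

# Data loaders. On Windows, num_workers > 0 needs the script's entry point guarded by
# `if __name__ == "__main__":`; set num_workers=0 if the workers fail to start.
batch_size = 32
train_dataloader = DataLoader(train_dataset, batch_size=batch_size, shuffle=True, num_workers=4)
val_dataloader = DataLoader(val_dataset, batch_size=batch_size, shuffle=False, num_workers=4)

dataloaders = {'train': train_dataloader, 'val': val_dataloader}
dataset_sizes = {'train': len(train_dataset), 'val': len(val_dataset)}
class_names = full_dataset.classes
```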
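A random split draws indices without regard to class, so the class proportions in the validation set only approximate those of the full dataset. If an exactly proportional split is wanted, a per-class (stratified) split of indices can be sketched with NumPy as below. `stratified_split` is a hypothetical helper; with `ImageFolder` the integer labels are available as `full_dataset.targets`, and the two returned index lists can be passed to `torch.utils.data.Subset`.

```python
import numpy as np


def stratified_split(targets, train_frac=0.7, seed=0):
    """Split sample indices so every class keeps about train_frac of its samples in training."""
    targets = np.asarray(targets)
    rng = np.random.default_rng(seed)
    train_idx, val_idx = [], []
    for cls in np.unique(targets):
        idx = np.flatnonzero(targets == cls)     # indices of this class
        rng.shuffle(idx)
        n_train = round(train_frac * len(idx))   # round() so float error cannot drop a sample
        train_idx.extend(idx[:n_train].tolist())
        val_idx.extend(idx[n_train:].tolist())
    return sorted(train_idx), sorted(val_idx)


# Hypothetical labels with the class sizes listed above; which index belongs to
# which class depends on the (alphabetical) folder names
targets = [0] * 900 + [1] * 700 + [2] * 549 + [3] * 900
train_idx, val_idx = stratified_split(targets)
print(len(train_idx), len(val_idx))
```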

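```python
############################### Define the SqueezeNet model ################################
# SqueezeNet 1.1 pretrained on ImageNet.
# torchvision >= 0.13 uses `weights=`; older versions use `pretrained=True` instead.
model = models.squeezenet1_1(weights=models.SqueezeNet1_1_Weights.DEFAULT)

# The classifier is Dropout -> Conv2d(512, 1000, 1x1) -> ReLU -> AdaptiveAvgPool2d;
# replace the 1x1 convolution so it outputs one channel per class
num_ftrs = model.classifier[1].in_channels
model.classifier[1] = nn.Conv2d(num_ftrs, len(class_names), kernel_size=(1, 1))
# Keep the model's num_classes attribute consistent with the new output layer
model.num_classes = len(class_names)

model = model.to(device)

# Print the model structure
print(model)
```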
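Replacing `classifier[1]` is enough because, in torchvision's SqueezeNet, the classifier is a 1×1 convolution followed by ReLU and global average pooling, so the number of output channels of that convolution becomes the number of class scores. The NumPy sketch below mimics that head on a hypothetical 512×6×6 feature map with random weights; it only illustrates the shapes and is not the trained model.

```python
import numpy as np

rng = np.random.default_rng(0)
num_classes = 4
features = rng.standard_normal((512, 6, 6))               # hypothetical (channels, H, W) feature map
weight = rng.standard_normal((num_classes, 512)) * 0.01   # a 1×1 conv kernel is an (out, in) matrix
bias = np.zeros(num_classes)

# 1×1 convolution: the same matrix multiply applied independently at every pixel
conv_out = np.einsum('oc,chw->ohw', weight, features) + bias[:, None, None]
relu_out = np.maximum(conv_out, 0.0)   # ReLU, as in SqueezeNet's classifier
scores = relu_out.mean(axis=(1, 2))    # global average pooling -> one score per class

print(scores.shape)
```

Because the ReLU comes before the pooling, the class scores passed to `CrossEntropyLoss` are non-negative.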

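```python
############################# Compile and train the model #########################################
# Loss function
criterion = nn.CrossEntropyLoss()
# Optimizer (Adam with its default learning rate)
optimizer = optim.Adam(model.parameters())
# Learning-rate scheduler: multiply the learning rate by 0.1 every 7 epochs
exp_lr_scheduler = lr_scheduler.StepLR(optimizer, step_size=7, gamma=0.1)

num_epochs = 50

# History recorders
train_loss_history = []
train_acc_history = []
val_loss_history = []
val_acc_history = []

# Track the best validation accuracy and the matching weights
best_acc = 0.0
best_model_wts = copy.deepcopy(model.state_dict())

for epoch in range(num_epochs):
    print('Epoch {}/{}'.format(epoch, num_epochs - 1))
    print('-' * 10)

    # Each epoch has a training phase and a validation phase
    for phase in ['train', 'val']:
        if phase == 'train':
            model.train()  # training mode
        else:
            model.eval()   # evaluation mode

        running_loss = 0.0
        running_corrects = 0

        # Iterate over the data
        for inputs, labels in dataloaders[phase]:
            inputs = inputs.to(device)
            labels = labels.to(device)

            # Zero the parameter gradients
            optimizer.zero_grad()

            # Forward pass; gradients are tracked only in the training phase
            with torch.set_grad_enabled(phase == 'train'):
                outputs = model(inputs)
                _, preds = torch.max(outputs, 1)
                loss = criterion(outputs, labels)

                # Backward pass and optimization only in the training phase
                if phase == 'train':
                    loss.backward()
                    optimizer.step()

            # Statistics: loss.item() is a batch mean, so weight it by the batch size
            running_loss += loss.item() * inputs.size(0)
            running_corrects += torch.sum(preds == labels.data)

        if phase == 'train':
            # Advance the learning-rate schedule once per epoch
            exp_lr_scheduler.step()

        epoch_loss = running_loss / dataset_sizes[phase]
        epoch_acc = (running_corrects.double() / dataset_sizes[phase]).item()

        # Record this epoch's loss and accuracy
        if phase == 'train':
            train_loss_history.append(epoch_loss)
            train_acc_history.append(epoch_acc)
        else:
            val_loss_history.append(epoch_loss)
            val_acc_history.append(epoch_acc)
            if epoch_acc > best_acc:
                best_acc = epoch_acc
                best_model_wts = copy.deepcopy(model.state_dict())

        print('{} Loss: {:.4f} Acc: {:.4f}'.format(phase, epoch_loss, epoch_acc))

    print()

# Save the weights from the final epoch
torch.save(model.state_dict(), 'model.pth')

# Optionally load and save the best weights (highest validation accuracy) instead
# model.load_state_dict(best_model_wts)
# torch.save(model, 'squeezenet_best_model.pth')
# print("The trained model has been saved.")
```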
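`StepLR` only changes the learning rate when `exp_lr_scheduler.step()` is called. Assuming it is called once per epoch, the learning rate during 0-based epoch `e` is `base_lr * gamma ** (e // step_size)`. The pure-Python sketch below (with the hypothetical helper `step_lr`) tabulates this for Adam's default learning rate of 1e-3 and the settings `step_size=7, gamma=0.1` used above.

```python
def step_lr(base_lr, epoch, step_size=7, gamma=0.1):
    """Learning rate during 0-based `epoch` if StepLR.step() runs once per epoch (hypothetical helper)."""
    return base_lr * gamma ** (epoch // step_size)


base_lr = 1e-3  # torch.optim.Adam's default learning rate
schedule = [step_lr(base_lr, e) for e in range(50)]

for e in (0, 6, 7, 14, 21, 49):
    print(f"epoch {e:2d}: lr = {schedule[e]:.0e}")
```

By epoch 21 the rate is already 1e-6, so in a 50-epoch run most of the later epochs barely move the weights; a larger `step_size` may suit a run of this length.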

```python
########################### Accuracy and loss visualization #################################
epochs = range(1, len(train_loss_history) + 1)
fig, ax = plt.subplots(1, 2, figsize=(10, 4))

ax[0].plot(epochs, train_loss_history, label='Train loss')
ax[0].plot(epochs, val_loss_history, label='Validation loss')
ax[0].set_xlabel('Epochs')
ax[0].set_ylabel('Loss')
ax[0].legend()

ax[1].plot(epochs, train_acc_history, label='Train acc')
ax[1].plot(epochs, val_acc_history, label='Validation acc')
ax[1].set_xlabel('Epochs')
ax[1].set_ylabel('Accuracy')
ax[1].legend()

plt.tight_layout()
# plt.savefig("loss-acc.pdf", dpi=300, format="pdf")
plt.show()
```

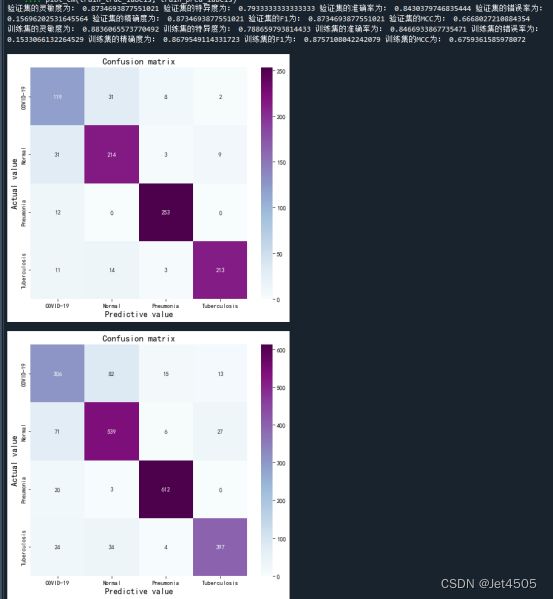
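The recorded histories can also be read numerically, for example to find the epoch with the highest validation accuracy (the weights that the commented-out `model.load_state_dict(best_model_wts)` line is meant to restore). A minimal sketch with made-up accuracy values; `best_epoch` is a hypothetical helper.

```python
def best_epoch(val_acc_history):
    """Return (1-based epoch, accuracy) of the highest validation accuracy; ties go to the earliest epoch."""
    best_idx = max(range(len(val_acc_history)), key=lambda i: val_acc_history[i])
    return best_idx + 1, val_acc_history[best_idx]


# Made-up values, only to show the call
history = [0.61, 0.74, 0.79, 0.77, 0.79]
best_ep, best_val_acc = best_epoch(history)
print(f"best validation accuracy {best_val_acc:.2f} at epoch {best_ep}")
```

The epoch is returned 1-based so it matches the x-axis of the learning curves.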

```python
#################################### Confusion matrix visualization #############################
import numpy as np
import pandas as pd
import seaborn as sns
from sklearn.metrics import confusion_matrix, matthews_corrcoef

# Plot a confusion matrix as a heatmap
def plot_cm(labels, predictions):
    # Rows: actual class, columns: predicted class
    conf_numpy = confusion_matrix(labels, predictions, labels=list(range(len(class_names))))
    # Wrap it in a DataFrame so the axes show the class names
    conf_df = pd.DataFrame(conf_numpy, index=class_names, columns=class_names)
    plt.figure(figsize=(8, 7))
    sns.heatmap(conf_df, annot=True, fmt="d", cmap="BuPu")
    plt.title('Confusion matrix', fontsize=15)
    plt.ylabel('Actual value', fontsize=14)
    plt.xlabel('Predicted value', fontsize=14)
    plt.show()

def evaluate_model(model, dataloader, device):
    model.eval()  # evaluation mode
    true_labels = []
    pred_labels = []
    with torch.no_grad():
        for inputs, labels in dataloader:
            outputs = model(inputs.to(device))
            _, preds = torch.max(outputs, 1)
            true_labels.extend(labels.numpy())
            pred_labels.extend(preds.cpu().numpy())
    return true_labels, pred_labels

def print_metrics(split_name, true_labels, pred_labels):
    # With 4 classes the confusion matrix is 4x4, so each metric is computed
    # one-vs-rest for every class instead of from a single 2x2 block
    cm = confusion_matrix(true_labels, pred_labels,
                          labels=list(range(len(class_names)))).astype(float)
    tp = np.diag(cm)
    fn = cm.sum(axis=1) - tp
    fp = cm.sum(axis=0) - tp
    tn = cm.sum() - tp - fn - fp
    with np.errstate(divide='ignore', invalid='ignore'):
        sen = tp / (tp + fn)   # sensitivity (recall)
        spe = tn / (tn + fp)   # specificity
        pre = tp / (tp + fp)   # precision
        f1 = 2 * pre * sen / (pre + sen)
    acc = tp.sum() / cm.sum()                       # accuracy
    mcc = matthews_corrcoef(true_labels, pred_labels)  # multi-class Matthews correlation coefficient
    table = pd.DataFrame({'Sensitivity': sen, 'Specificity': spe,
                          'Precision': pre, 'F1': f1}, index=class_names)
    print(f"{split_name} accuracy: {acc:.4f}, error rate: {1 - acc:.4f}, MCC: {mcc:.4f}")
    print(table.round(4))
    print("Macro average:")
    print(table.mean().round(4))

# Validation set: predictions, metrics and confusion matrix
true_labels, pred_labels = evaluate_model(model, dataloaders['val'], device)
print_metrics("Validation set", true_labels, pred_labels)
plot_cm(true_labels, pred_labels)

# Training set (these images pass through the training augmentations)
train_true_labels, train_pred_labels = evaluate_model(model, dataloaders['train'], device)
print_metrics("Training set", train_true_labels, train_pred_labels)
plot_cm(train_true_labels, train_pred_labels)
```

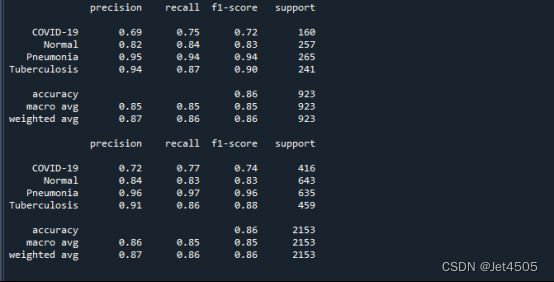
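With four classes the confusion matrix is 4×4, so the cells `cm[0,0]`, `cm[0,1]`, `cm[1,0]` and `cm[1,1]` only describe the first two classes. The usual generalization is one-vs-rest: for class k, TP is the diagonal cell, FN the rest of row k, FP the rest of column k, and TN everything else. The NumPy sketch below computes per-class sensitivity, specificity and precision this way on a small made-up 3-class matrix; `one_vs_rest_metrics` is a hypothetical helper.

```python
import numpy as np


def one_vs_rest_metrics(cm):
    """Per-class metrics from a confusion matrix with rows = actual, columns = predicted."""
    cm = np.asarray(cm, dtype=float)
    tp = np.diag(cm)
    fn = cm.sum(axis=1) - tp   # actually class k, predicted as something else
    fp = cm.sum(axis=0) - tp   # predicted as class k, actually something else
    tn = cm.sum() - tp - fn - fp
    return {
        "sensitivity": tp / (tp + fn),
        "specificity": tn / (tn + fp),
        "precision": tp / (tp + fp),
        "accuracy": tp.sum() / cm.sum(),
    }


# Made-up 3-class confusion matrix
cm = [[5, 1, 0],
      [2, 6, 2],
      [0, 1, 8]]
m = one_vs_rest_metrics(cm)
for key, value in m.items():
    print(key, np.round(value, 3))
```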

```python
################################ Model performance metrics ################################
from sklearn import metrics

def accuracy_report(model, dataloader, device):
    """Print per-class precision, recall and F1, plus macro and weighted averages."""
    true_labels, pred_labels = evaluate_model(model, dataloader, device)
    print(metrics.classification_report(true_labels, pred_labels,
                                        labels=list(range(len(class_names))),
                                        target_names=class_names))

# Validation set
accuracy_report(model, dataloaders['val'], device)
# Training set
accuracy_report(model, dataloaders['train'], device)
```

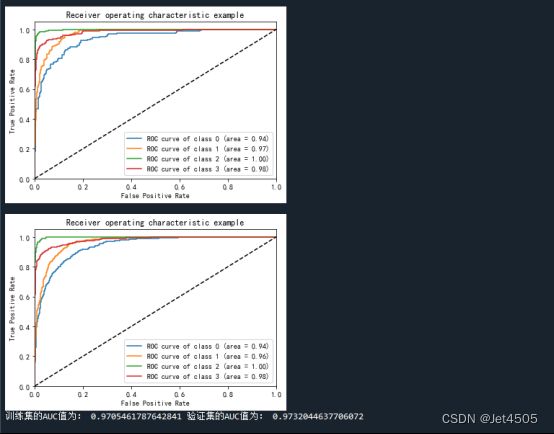
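`classification_report` ends with two summary rows: `macro avg`, the plain mean over classes, and `weighted avg`, the mean weighted by each class's support (its number of true samples). For recall, the weighted average always equals overall accuracy, which the small NumPy check below illustrates on a made-up confusion matrix.

```python
import numpy as np

# Made-up confusion matrix: rows = actual class, columns = predicted class
cm = np.array([[5, 1, 0],
               [2, 6, 2],
               [0, 1, 8]], dtype=float)

support = cm.sum(axis=1)           # true samples per class
recall = np.diag(cm) / support     # per-class recall (sensitivity)

macro_recall = recall.mean()                                  # every class counts equally
weighted_recall = (recall * support).sum() / support.sum()    # classes weighted by support
accuracy = np.trace(cm) / cm.sum()

print(f"macro recall {macro_recall:.4f}, weighted recall {weighted_recall:.4f}, accuracy {accuracy:.4f}")
```

Here macro recall is about 0.774 while weighted recall equals the accuracy of 0.76; the two averages drift further apart when a small class (such as COVID-19 in this dataset) is recognized noticeably better or worse than the large ones.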

```python
################################ ROC curves and AUC ####################################
import numpy as np
from sklearn import metrics
from sklearn.preprocessing import label_binarize

def multiclass_roc_auc_score(y_true, y_score, average="macro"):
    # One-vs-rest AUC: binarize the integer labels into one column per class
    y_true = label_binarize(y_true, classes=list(range(len(class_names))))
    return metrics.roc_auc_score(y_true, y_score, average=average)

def plot_roc(name, labels, predictions):
    # One-hot encode the labels; predictions are already per-class probabilities
    labels = label_binarize(labels, classes=list(range(len(class_names))))
    plt.figure(figsize=(7, 6))
    for i, class_name in enumerate(class_names):
        # One-vs-rest ROC curve and AUC for class i
        fpr, tpr, _ = metrics.roc_curve(labels[:, i], predictions[:, i])
        roc_auc = metrics.auc(fpr, tpr)
        plt.plot(fpr, tpr, label='ROC curve of {0} (area = {1:0.2f})'.format(class_name, roc_auc))
    plt.plot([0, 1], [0, 1], 'k--')
    plt.xlim([0.0, 1.0])
    plt.ylim([0.0, 1.05])
    plt.xlabel('False Positive Rate')
    plt.ylabel('True Positive Rate')
    plt.title(name)
    plt.legend(loc="lower right")
    plt.show()

def evaluate_model_pre(model, data_loader, device):
    """Return softmax probabilities (N x num_classes) and the true labels (N,)."""
    model.eval()
    predictions = []
    labels = []
    with torch.no_grad():
        for inputs, targets in data_loader:
            outputs = model(inputs.to(device))
            # Softmax turns the logits into class probabilities
            prob_outputs = torch.nn.functional.softmax(outputs, dim=1)
            predictions.append(prob_outputs.cpu().numpy())
            labels.append(targets.numpy())
    return np.concatenate(predictions, axis=0), np.concatenate(labels, axis=0)

val_pre_auc, val_label_auc = evaluate_model_pre(model, dataloaders['val'], device)
train_pre_auc, train_label_auc = evaluate_model_pre(model, dataloaders['train'], device)

auc_score_val = multiclass_roc_auc_score(val_label_auc, val_pre_auc)
auc_score_train = multiclass_roc_auc_score(train_label_auc, train_pre_auc)

plot_roc('Validation ROC (macro AUC: {0:.4f})'.format(auc_score_val), val_label_auc, val_pre_auc)
plot_roc('Training ROC (macro AUC: {0:.4f})'.format(auc_score_train), train_label_auc, train_pre_auc)

print("Training AUC:", auc_score_train, "Validation AUC:", auc_score_val)
```

(b) Output: learning curves

(c) Output: confusion matrices

(d) Output: performance metrics

(e) Output: ROC curves
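The per-class curves are one-vs-rest: class k is treated as positive, all other classes as negative, and the softmax probability of class k is the score. Each AUC equals the probability that a randomly chosen positive scores higher than a randomly chosen negative (ties counting one half), and the macro AUC printed by the code is the unweighted mean over classes. The NumPy sketch below computes this directly from that definition on made-up probabilities; `binary_auc` and `macro_ovr_auc` are hypothetical helpers meant to explain the number, not to replace `sklearn.metrics.roc_auc_score`.

```python
import numpy as np


def binary_auc(y_true, scores):
    """AUC as P(score of a random positive > score of a random negative), ties counting 1/2."""
    y_true = np.asarray(y_true)
    scores = np.asarray(scores, dtype=float)
    pos = scores[y_true == 1]
    neg = scores[y_true == 0]
    greater = (pos[:, None] > neg[None, :]).sum()
    ties = (pos[:, None] == neg[None, :]).sum()
    return (greater + 0.5 * ties) / (len(pos) * len(neg))


def macro_ovr_auc(labels, probs):
    """One-vs-rest AUC for each class, and their unweighted (macro) mean."""
    labels = np.asarray(labels)
    probs = np.asarray(probs, dtype=float)
    per_class = [binary_auc((labels == k).astype(int), probs[:, k]) for k in range(probs.shape[1])]
    return float(np.mean(per_class)), per_class


# Made-up softmax outputs for 6 samples and 3 classes
labels = np.array([0, 0, 1, 1, 2, 2])
probs = np.array([[0.8, 0.1, 0.1],
                  [0.6, 0.3, 0.1],
                  [0.2, 0.7, 0.1],
                  [0.1, 0.5, 0.4],
                  [0.1, 0.2, 0.7],
                  [0.2, 0.1, 0.7]])
macro, per_class = macro_ovr_auc(labels, probs)
print(macro, per_class)
```

In this toy example every positive outranks every negative for its class, so each AUC and the macro mean are 1.0.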

III. Data

Link: https://pan.baidu.com/s/1rqu15KAUxjNBaWYfEmPwgQ?pwd=xfyn

Extraction code: xfyn