K8S Study Notes 1: Basic Concepts and Environment Setup


These notes follow the Kubernetes video course from 尚硅谷 (Atguigu).

Course link: https://www.bilibili.com/video/BV1GT4y1A756?p=3

1. Kubernetes Basic Concepts

Official documentation: https://kubernetes.io/docs/home/

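1.1 Overview and Features

Kubernetes, abbreviated K8s (the 8 stands for the eight letters "ubernete"), is an open-source system for managing containerized applications across multiple hosts on a cloud platform. Its goal is to make deploying containerized applications simple and efficient, and it provides mechanisms for application deployment, scheduling, updating, and maintenance.

Kubernetes is a container orchestration engine open-sourced by Google. It supports automated deployment, large-scale scaling, and management of containerized applications. When an application is deployed in production, several instances of it are usually run so that requests can be load-balanced across them.

In Kubernetes we can create multiple containers, each running one application instance, and then use the built-in load-balancing strategy to manage, discover, and access this group of instances. None of these details require complex manual configuration by operations staff.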

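1.2 Kubernetes Features and Architecture

1.2.1 Introduction

Kubernetes is a portable, extensible open-source platform for managing containerized applications and services. It automates application deployment and scaling. Kubernetes groups the containers that make up an application into logical units so that they are easier to manage and discover. It builds on 15 years of Google's experience running production workloads and incorporates the best ideas and practices from the community.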

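1.2.2 K8S Features

(1) Automatic bin packing

Application containers are placed automatically according to the resources their runtime environment requires.

(2) Self-healing

When a container fails, it is restarted.

When the Node it runs on has a problem, the container is redeployed and rescheduled.

When a container fails its health check, it is taken out of service and serves traffic again only once it is running normally.

(3) Horizontal scaling

Application containers can be scaled up or down with a simple command, through a UI, or automatically based on resource usage such as CPU.

(4) Service discovery

No extra service-discovery mechanism is needed; Kubernetes provides service discovery and load balancing itself.

(5) Rolling updates

As the application changes, the applications running in containers can be updated all at once or in batches.

(6) Rollback

Depending on how a deployment goes, the application can be rolled back immediately to a previous version.

(7) Secret and configuration management

Secrets and application configuration can be deployed and updated without rebuilding images, similar to hot deployment.

(8) Storage orchestration

Storage systems are mounted and made available to applications automatically, which matters most for stateful applications that need persistent data.

Storage can come from local directories, network storage (NFS, Gluster, Ceph, etc.), or public cloud storage services.

(9) Batch processing

One-off jobs and scheduled jobs are provided for batch data processing and analysis.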

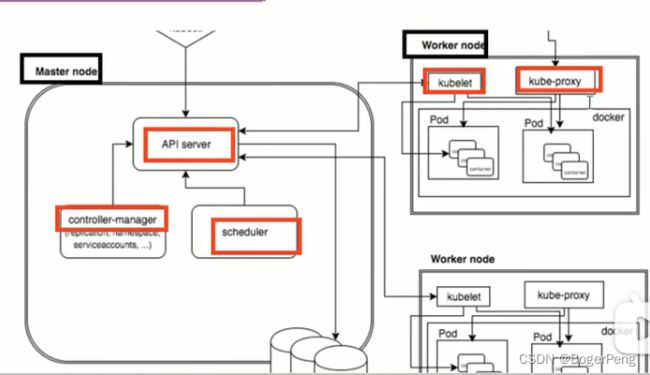

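1.2.3 K8S Cluster Architecture Components

Master Node: the control node

The control node of the cluster. It schedules and manages the cluster and accepts operation requests from users outside the cluster. The Master Node consists of the API Server, the Scheduler, the Cluster State Store (the etcd database), and the Controller Manager Server.

Worker Node: the work node

The work node of the cluster, which runs the users' application containers.

A Worker Node contains kubelet, kube-proxy, and a container runtime.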

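1.2.3.1 MasterNode - API server

The single entry point to the cluster. It exposes a RESTful API and hands cluster state to etcd for storage.

1.2.3.2 MasterNode - Scheduler

Handles node scheduling: selects the node on which an application is deployed.

1.2.3.3 MasterNode - Controller-Manager

Handles the cluster's routine background tasks; each resource type has its own controller.

1.2.3.4 MasterNode - etcd

The storage system that holds cluster data.

1.2.3.5 WorkerNode - Kubelet

The Master Node's agent on each worker node; it manages the containers on that machine.

1.2.3.6 WorkerNode - Kube-proxy

Provides the network proxy, including load balancing.

1.2.3.7 Docker (the containerization tool usually installed on each node)

Runs the containers.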

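1.3 K8S Core Concepts

1.3.1 Pod

1.3.1.1 Pod Overview

A Pod is the smallest unit that can be created and managed in k8s. It is the smallest resource object a user creates or deploys, and it is the resource object in which containerized applications run on k8s. Other resource objects exist to support or extend Pods: controllers manage Pods, Service and Ingress resources expose Pods, PersistentVolume resources provide storage to Pods, and so on. k8s does not handle containers directly; it handles Pods, and a Pod consists of one or more containers.

The Pod is the most important concept in Kubernetes. Every Pod has a special Pause container, known as the "root container". The image for the Pause container is part of the Kubernetes platform. Besides the Pause container, each Pod contains one or more closely related application containers.

1.3.1.2 Pod Characteristics

The smallest deployable unit in K8S

A collection of containers

Containers in a Pod share a network

A Pod's lifecycle is short-lived

1.3.2 controller

Capabilities:

Ensures the expected number of pod replicas

Deploys stateful applications

Deploys stateless applications

Ensures every node runs a copy of the same pod

Supports one-off tasks and scheduled tasks

1.3.3 service

Service is one of the core concepts of Kubernetes. Creating a Service gives a group of containers with the same function a single entry address and load-balances requests across the backend containers.

It defines the access rules for a group of pods.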
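As a rough illustration of how the three concepts fit together, the sketch below prints a manifest in which a Deployment (one kind of controller) keeps a chosen number of Pod replicas running and a Service gives them one entry point. The function name `render_demo_manifest`, the label scheme, and the `nginx` image are illustrative choices, not part of the course material.

```shell
#!/usr/bin/env bash
# Print a minimal Deployment + Service manifest (hypothetical names).
# $1 = application name, $2 = desired number of Pod replicas.
render_demo_manifest() {
  local name="$1" replicas="$2"
  cat <<EOF
apiVersion: apps/v1
kind: Deployment
metadata:
  name: ${name}
spec:
  replicas: ${replicas}
  selector:
    matchLabels:
      app: ${name}
  template:
    metadata:
      labels:
        app: ${name}
    spec:
      containers:
      - name: ${name}
        image: nginx
        ports:
        - containerPort: 80
---
apiVersion: v1
kind: Service
metadata:
  name: ${name}
spec:
  selector:
    app: ${name}
  ports:
  - port: 80
    targetPort: 80
EOF
}

# Only prints the manifest; nothing is sent to a cluster here.
render_demo_manifest demo 3
```

On a working cluster the output could be piped into `kubectl apply -f -`. The Service finds its Pods purely through the `app` label, which is why the same label appears in the Deployment's Pod template.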

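2. Building a Cluster with a Single Master

2.1 Diagram

2.2 Hardware Requirements

Test environment:

- master: 2 CPU cores, 4 GB RAM, 20 GB disk

- worker: 4 CPU cores, 8 GB RAM, 40 GB disk

Production environment:

- higher specifications are required

2.3 Prerequisites

2.3.1 Deployment Methods

There are currently two main ways to deploy a production Kubernetes cluster:

(1) kubeadm

kubeadm is a K8s deployment tool that provides kubeadm init and kubeadm join for quickly deploying a Kubernetes cluster.

Official documentation: https://kubernetes.io/docs/reference/setup-tools/kubeadm/kubeadm/

(2) Binary packages

Download the release binaries from GitHub and deploy each component by hand to assemble a Kubernetes cluster.

kubeadm lowers the barrier to deployment but hides many details, which makes problems hard to troubleshoot. If you want more control, deploy from binary packages: manual deployment is more work, but you learn how the components work along the way, which also helps later maintenance.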

2.3.2 Dependency Between Docker and K8s

See:

http://t.zoukankan.com/sylvia-liu-p-14884920.html

https://blog.csdn.net/yucaifu1989/article/details/104651902

2.4 Single-Master Cluster Setup with kubeadm

2.4.1 Introduction to kubeadm Deployment

kubeadm is a tool from the official community for quickly deploying a Kubernetes cluster. It can bring up a cluster with two commands:

First, create a Master node: kubeadm init

Second, join a Node to the current cluster: kubeadm join

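2.4.2 Preparation

- 3 virtual machines (141, 142, 143) with CentOS 7 installed

- Hardware: 2 GB RAM or more, 2 CPUs or more, 30 GB disk or more

- Network connectivity between all machines in the cluster

- Internet access, needed to pull images

- Swap disabled

For VM setup, see: https://blog.csdn.net/BogerPeng/article/details/124908685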

2.4.3 System Initialization on the Three Servers

- 1). Disable the firewall:

systemctl stop firewalld

systemctl disable firewalld

For why the firewall is disabled, see: https://www.q578.com/s-5-2615546-0/

- 2). Disable SELinux:

sed -i 's/enforcing/disabled/' /etc/selinux/config # permanent

setenforce 0 # temporary

- 3). Disable swap (required by K8s)

# (1) Turn swap off temporarily; this is lost on reboot

swapoff -a

# (2) Turn swap off permanently

sed -ri 's/.*swap.*/#&/' /etc/fstab

# (3) Run free -mh to check the swap status:

[root@k8shost admin]# sed -ri 's/.*swap.*/#&/' /etc/fstab

[root@k8shost admin]# free -mh

total used free shared buff/cache available

Mem: 1.8G 777M 331M 35M 710M 859M

Swap: 0B 0B 0B
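The same check can be scripted. The sketch below assumes a Linux host where /proc/swaps holds one header line followed by one line per active swap device; the function name `swap_is_off` and its optional file argument are illustrative.

```shell
#!/usr/bin/env bash
# Return 0 when no swap device is active, 1 when at least one is,
# and 2 when the file cannot be read.
# $1 = file in /proc/swaps format (defaults to /proc/swaps); the argument
# exists only so the function can be tried against a sample file.
swap_is_off() {
  local swaps_file="${1:-/proc/swaps}"
  [ -r "$swaps_file" ] || return 2
  local active
  # Skip the header line and count the remaining non-empty lines.
  active="$(awk 'NR > 1 && NF > 0 { n++ } END { print n + 0 }' "$swaps_file")"
  [ "$active" -eq 0 ]
}

if swap_is_off; then
  echo "swap is off"
else
  echo "swap is on, or /proc/swaps is not readable"
fi
```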

- 4). Set the hostname:

hostnamectl set-hostname <hostname>

## on the 141 machine

hostnamectl set-hostname k8smaster

## on the 142 machine

hostnamectl set-hostname k8sworker1

## on the 143 machine

hostnamectl set-hostname k8sworker2

- 5). Add hosts entries on the master:

cat >> /etc/hosts << EOF

192.168.226.141 k8smaster

192.168.226.142 k8sworker1

192.168.226.143 k8sworker2

EOF
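Because `>>` appends every time it runs, repeating the command above duplicates the entries. A small guard, sketched below, avoids that. The function name `add_host_entry` and its parameters are illustrative, and the demo writes to a temporary file rather than /etc/hosts.

```shell
#!/usr/bin/env bash
# Append "ip name" to a hosts-format file only if that name is not mapped yet.
# $1 = hosts file, $2 = IP address, $3 = hostname (all illustrative parameters).
add_host_entry() {
  local file="$1" ip="$2" name="$3"
  # Look for the hostname as a whole field on any non-comment line.
  if ! awk -v n="$name" '$1 !~ /^#/ { for (i = 2; i <= NF; i++) if ($i == n) found = 1 } END { exit !found }' "$file"; then
    printf '%s %s\n' "$ip" "$name" >> "$file"
  fi
}

# Demo against a scratch file; on a real node the first argument would be /etc/hosts.
demo_hosts="$(mktemp)"
add_host_entry "$demo_hosts" 192.168.226.141 k8smaster
add_host_entry "$demo_hosts" 192.168.226.141 k8smaster   # second call is a no-op
cat "$demo_hosts"
```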

- 6). Pass bridged IPv4 traffic to the iptables chains:

Set this on all three servers

cat > /etc/sysctl.d/k8s.conf << EOF

net.bridge.bridge-nf-call-ip6tables = 1

net.bridge.bridge-nf-call-iptables = 1

EOF

# apply

sysctl --system

- 7). Time synchronization:

yum install ntpdate -y

ntpdate time.windows.com

2.4.4 Install Docker, kubeadm, and kubelet on All Nodes

2.4.4.1 Installing Docker

For the Kubernetes version used in this walkthrough (v1.18.0), the default container runtime is Docker, so install Docker first.

1.1 Update the yum packages

yum update

1.2 Install the required packages

yum install -y yum-utils device-mapper-persistent-data lvm2

1.3 Configure the package repository, using Aliyun as the yum source

Use a mirror inside China

yum-config-manager --add-repo http://mirrors.aliyun.com/docker-ce/linux/centos/docker-ce.repo

1.4 Install Docker Engine

wget https://mirrors.aliyun.com/docker-ce/linux/centos/docker-ce.repo -O /etc/yum.repos.d/docker-ce.repo

Because of the version dependency between Docker and Kubernetes, do not install the latest Docker here; install the version used in the course:

yum -y install docker-ce-18.06.1.ce-3.el7

## yum -y install docker-ce docker-ce-cli containerd.io docker-compose-plugin

1.5 Start Docker

systemctl start docker

# Check the version to confirm that Docker started

docker version

# Start Docker on boot

systemctl enable docker

2.4.4.2 Adding the Aliyun YUM Repository

## Set the registry mirror address

## > overwrites the file, >> appends to it

cat > /etc/docker/daemon.json << EOF

{

"registry-mirrors": ["https://xxxxxxxx.mirror.aliyuncs.com"]

}

EOF

## Reload and restart

systemctl daemon-reload

systemctl restart docker

## Add the yum repository

cat > /etc/yum.repos.d/kubernetes.repo << EOF

[kubernetes]

name=Kubernetes

baseurl=https://mirrors.aliyun.com/kubernetes/yum/repos/kubernetes-el7-x86_64

enabled=1

gpgcheck=0

repo_gpgcheck=0

gpgkey=https://mirrors.aliyun.com/kubernetes/yum/doc/yum-key.gpg https://mirrors.aliyun.com/kubernetes/yum/doc/rpm-package-key.gpg

EOF

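2.4.4.3 Installing kubeadm, kubelet, and kubectl

The packages are downloaded from the yum repository configured above. Run only one of the two install commands below.

## Option 1: the latest version

yum install -y kubelet kubeadm kubectl

## Option 2: a pinned version. If the download fails, check which versions the yum repository offers, or use the default yum repository.

# yum install -y kubelet-1.21.3 kubeadm-1.21.3 kubectl-1.21.3

yum install -y kubelet-1.18.0 kubeadm-1.18.0 kubectl-1.18.0

## Start kubelet on boot

systemctl enable kubelet

Check the version: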

[root@k8smaster admin]# kubectl version

WARNING: This version information is deprecated and will be replaced with the output from kubectl version --short. Use --output=yaml|json to get the full version.

Client Version: version.Info{Major:"1", Minor:"24", GitVersion:"v1.24.0", GitCommit:"4ce5a8954017644c5420bae81d72b09b735c21f0", GitTreeState:"clean", BuildDate:"2022-05-03T13:46:05Z", GoVersion:"go1.18.1", Compiler:"gc", Platform:"linux/amd64"}

Kustomize Version: v4.5.4

The connection to the server localhost:8080 was refused - did you specify the right host or port?

2.4.4.3.1 Aside: Uninstalling K8s

Reference: https://blog.csdn.net/qq_41489540/article/details/114151340

kubeadm reset -f

yum remove -y kubelet kubeadm kubectl

modprobe -r ipip

lsmod

rm -rf ~/.kube/

rm -rf /etc/kubernetes/

rm -rf /etc/systemd/system/kubelet.service.d

rm -rf /etc/systemd/system/kubelet.service

rm -rf /usr/bin/kube*

rm -rf /etc/cni

rm -rf /opt/cni

rm -rf /var/lib/etcd

rm -rf /var/etcd

2.4.4.4 Deploying the Kubernetes Master

Reference: https://blog.csdn.net/nerdsu/article/details/123359523

Initializing the cluster pulls all required images automatically; they can also be pulled before init:

kubeadm config images pull --image-repository registry.aliyuncs.com/google_containers

2.4.4.4.1 Initializing on 192.168.226.141 (Master)

kubeadm init \

--apiserver-advertise-address=192.168.226.141 \

--image-repository registry.aliyuncs.com/google_containers \

--kubernetes-version v1.18.0 \

--service-cidr=10.96.0.0/12 \

--pod-network-cidr=10.244.0.0/16

## --apiserver-advertise-address: address of this machine

## --image-repository: image registry address

## --kubernetes-version: Kubernetes version

## --service-cidr: address range for Services

## --pod-network-cidr: address range for Pods

## The default image registry k8s.gcr.io is not reachable from mainland China, so the Aliyun registry is specified with --image-repository registry.aliyuncs.com/google_containers

# Change the kubelet arguments

gedit /etc/sysconfig/kubelet

# Set the following argument

KUBELET_EXTRA_ARGS=--cgroup-driver=systemd

------------------------------

gedit /etc/containerd/config.toml

# Configure the following two lines

[plugins."io.containerd.grpc.v1.cri"]

systemd_cgroup = true

------------------------------

Then run:

systemctl restart containerd

Startup sometimes fails; try restarting a few more times.

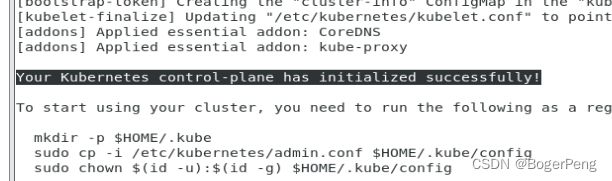

[kubelet-finalize] Updating "/etc/kubernetes/kubelet.conf" to point to a rotatable kubelet client certificate and key

[addons] Applied essential addon: CoreDNS

[addons] Applied essential addon: kube-proxy

Your Kubernetes control-plane has initialized successfully!

To start using your cluster, you need to run the following as a regular user:

mkdir -p $HOME/.kube

sudo cp -i /etc/kubernetes/admin.conf $HOME/.kube/config

sudo chown $(id -u):$(id -g) $HOME/.kube/config

You should now deploy a pod network to the cluster.

Run "kubectl apply -f [podnetwork].yaml" with one of the options listed at:

https://kubernetes.io/docs/concepts/cluster-administration/addons/

Then you can join any number of worker nodes by running the following on each as root:

kubeadm join 192.168.226.141:6443 --token e3g98q.om32v4wq5ikurrnk \

--discovery-token-ca-cert-hash sha256:02c1f32c0053a14ecaa3c6dbe969c2e008bd05e6fac38c1221737f9f24eed18b

Following the hints in the success log above, run these three commands:

mkdir -p $HOME/.kube

sudo cp -i /etc/kubernetes/admin.conf $HOME/.kube/config

sudo chown $(id -u):$(id -g) $HOME/.kube/config

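2.4.4.4.2 Aside: Resetting kubeadm

kubeadm reset -f

2.4.4.4.3 Troubleshooting

A problem occurred while running kubeadm init.

Check the log:

journalctl -u kubelet -f

Unknown desc = failed to get sandbox image \\\"k8s.gcr.io/pause:3.6\\\": failed to pull image \\\"k8s.gcr.io/pause:3.6

Analysis and fix:

### k8s.gcr.io can only be reached through an external network, so the pause base image that k8s needs often cannot be pulled. With Docker, pull the image from a mirror and then retag it with docker tag.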

docker pull registry.cn-hangzhou.aliyuncs.com/google_containers/pause:3.6

docker tag registry.cn-hangzhou.aliyuncs.com/google_containers/pause:3.6 k8s.gcr.io/pause:3.6

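However, the CRI used in this cluster is containerd, so after retagging the image with docker tag it also has to be exported and imported with ctr.

docker save k8s.gcr.io/pause -o pause.tar

ctr -n k8s.io images import pause.tar

Restart docker and kubelet

Run the kubeadm init command above again

Then run the three commands as the log instructs: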

[root@k8smaster etc]# mkdir -p $HOME/.kube

[root@k8smaster etc]# sudo cp -i /etc/kubernetes/admin.conf $HOME/.kube/config

[root@k8smaster etc]# sudo chown $(id -u):$(id -g) $HOME/.kube/config

[root@k8smaster etc]# kubectl get nodes

NAME STATUS ROLES AGE VERSION

k8smaster NotReady control-plane 16m v1.24.0

2.4.4.4.4 Troubleshooting 2: Unable to register node

## Error:

kubelet_node_status.go:92] Unable to register node "k8smaster" with API server: Unauthorized

## Fix and result:

[root@k8smaster admin]# kubeadm init phase kubeconfig kubelet

I0525 01:34:47.029263 5701 version.go:252] remote version is much newer: v1.24.0; falling back to: stable-1.18

W0525 01:34:48.332562 5701 configset.go:202] WARNING: kubeadm cannot validate component configs for API groups [kubelet.config.k8s.io kubeproxy.config.k8s.io]

[kubeconfig] Using existing kubeconfig file: "/etc/kubernetes/kubelet.conf"

2.4.4.4.5 Troubleshooting 3: Docker Driver Problem

Reference: https://www.jianshu.com/p/b9d43465a09c
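One common form of this problem is the kubeadm warning that Docker uses the cgroupfs cgroup driver while systemd is recommended. A possible fix, sketched here under the assumption that /etc/docker/daemon.json only holds the registry mirror configured in 2.4.4.2, is to add Docker's `native.cgroupdriver=systemd` exec option. The function name `render_daemon_json` is illustrative, and the default mirror URL is the placeholder from 2.4.4.2.

```shell
#!/usr/bin/env bash
# Print a daemon.json that keeps a registry mirror and switches Docker's
# cgroup driver to systemd. $1 = registry mirror URL (placeholder by default).
render_daemon_json() {
  local mirror="${1:-https://xxxxxxxx.mirror.aliyuncs.com}"
  cat <<EOF
{
  "registry-mirrors": ["${mirror}"],
  "exec-opts": ["native.cgroupdriver=systemd"]
}
EOF
}

# Preview only. To apply it on a node (as root), one would redirect the
# output to /etc/docker/daemon.json, restart Docker, and then check the
# result with: docker info --format '{{.CgroupDriver}}'
render_daemon_json
```

Whatever driver Docker ends up with, the kubelet has to use the same one, otherwise the kubelet will not start its Pods correctly.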

2.4.4.5 Deploying the Kubernetes Workers

2.4.4.5.1 Run on 192.168.226.142/143 (workers)

Following the hints in the log printed after the successful init in 2.4.4.4.1, run the command below on each worker node:

kubeadm join 192.168.226.141:6443 --token e3g98q.om32v4wq5ikurrnk \

--discovery-token-ca-cert-hash sha256:02c1f32c0053a14ecaa3c6dbe969c2e008bd05e6fac38c1221737f9f24eed18b

Result:

[root@k8sworker1 admin]# kubeadm join 192.168.226.141:6443 --token e3g98q.om32v4wq5ikurrnk \

> --discovery-token-ca-cert-hash sha256:02c1f32c0053a14ecaa3c6dbe969c2e008bd05e6fac38c1221737f9f24eed18b

W0525 17:42:07.312091 4644 join.go:346] [preflight] WARNING: JoinControlPane.controlPlane settings will be ignored when control-plane flag is not set.

[preflight] Running pre-flight checks

[WARNING IsDockerSystemdCheck]: detected "cgroupfs" as the Docker cgroup driver. The recommended driver is "systemd". Please follow the guide at https://kubernetes.io/docs/setup/cri/

[WARNING Hostname]: hostname "k8sworker1" could not be reached

[WARNING Hostname]: hostname "k8sworker1": lookup k8sworker1 on 119.29.29.29:53: no such host

[preflight] Reading configuration from the cluster...

[preflight] FYI: You can look at this config file with 'kubectl -n kube-system get cm kubeadm-config -oyaml'

[kubelet-start] Downloading configuration for the kubelet from the "kubelet-config-1.18" ConfigMap in the kube-system namespace

[kubelet-start] Writing kubelet configuration to file "/var/lib/kubelet/config.yaml"

[kubelet-start] Writing kubelet environment file with flags to file "/var/lib/kubelet/kubeadm-flags.env"

[kubelet-start] Starting the kubelet

[kubelet-start] Waiting for the kubelet to perform the TLS Bootstrap...

This node has joined the cluster:

* Certificate signing request was sent to apiserver and a response was received.

* The Kubelet was informed of the new secure connection details.

Run 'kubectl get nodes' on the control-plane to see this node join the cluster.

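2.4.4.5.2 Token Validity (Expired Tokens)

Reference: https://www.hangge.com/blog/cache/detail_2418.html

# 1. List the tokens

kubeadm token list

# 2. Generate a new token

# A token is valid for 24 hours by default. Once it expires it can no longer be used,

# and a new one can be generated with the following command

kubeadm token create

# 3. Get the hash of the CA certificate

# Besides the token, joining the cluster also needs the SHA-256 hash of the Master node's CA certificate,

# which can be obtained with the following command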

openssl x509 -pubkey -in /etc/kubernetes/pki/ca.crt | openssl rsa -pubin -outform der 2>/dev/null | openssl dgst -sha256 -hex | sed 's/^.* //'
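The two values then go back into the join command. As a small sketch, the helper below only assembles that command line from an endpoint, a token, and a hash; the function name `build_join_command` is illustrative, and the sample values are the ones from the init log earlier in these notes. kubeadm can also print a ready-made command with `kubeadm token create --print-join-command`.

```shell
#!/usr/bin/env bash
# Assemble a kubeadm join command line.
# $1 = API server endpoint (host:port), $2 = bootstrap token,
# $3 = hex SHA-256 hash of the CA public key (without the "sha256:" prefix).
build_join_command() {
  local endpoint="$1" token="$2" ca_hash="$3"
  printf 'kubeadm join %s --token %s --discovery-token-ca-cert-hash sha256:%s\n' \
    "$endpoint" "$token" "$ca_hash"
}

# Prints the command; it is not executed here.
build_join_command 192.168.226.141:6443 e3g98q.om32v4wq5ikurrnk \
  02c1f32c0053a14ecaa3c6dbe969c2e008bd05e6fac38c1221737f9f24eed18b
```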

2.4.4.5.3 Checking Node Status on the Master

[root@k8smaster admin]# kubectl get nodes

NAME STATUS ROLES AGE VERSION

k8smaster NotReady master 14h v1.18.0

k8sworker1 NotReady <none> 27s v1.18.0

k8sworker2 NotReady <none> 11s v1.18.0

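2.4.4.6 Deploying the CNI Network Plugin

In 2.4.4.5.3 the nodes are NotReady because the cluster does not have a network plugin yet.

The Chinese K8s community documentation may also help: http://docs.kubernetes.org.cn/

## Downloading kube-flannel.yml from GitHub sometimes fails; retry a few times.

## If it is still unreachable, switch the images to a Docker Hub registry

kubectl apply -f https://raw.githubusercontent.com/coreos/flannel/master/Documentation/kube-flannel.yml

## kubectl get pods -n kube-system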

[root@k8smaster admin]# kubectl get pods -n kube-system

NAME READY STATUS RESTARTS AGE

coredns-7ff77c879f-dqqtz 1/1 Running 0 14h

coredns-7ff77c879f-gzn6z 1/1 Running 0 14h

etcd-k8smaster 1/1 Running 1 14h

kube-apiserver-k8smaster 1/1 Running 1 14h

kube-controller-manager-k8smaster 1/1 Running 1 14h

kube-flannel-ds-ldt25 1/1 Running 0 5m39s

kube-flannel-ds-mnh5s 1/1 Running 0 5m39s

kube-flannel-ds-tqpzp 1/1 Running 0 5m39s

kube-proxy-55frw 1/1 Running 0 21m

kube-proxy-l4bll 1/1 Running 0 22m

kube-proxy-rshj2 1/1 Running 1 14h

kube-scheduler-k8smaster 1/1 Running 1 14h

2.4.4.7 Testing

[root@k8smaster admin]# kubectl get nodes

NAME STATUS ROLES AGE VERSION

k8smaster Ready master 14h v1.18.0

k8sworker1 Ready <none> 23m v1.18.0

k8sworker2 Ready <none> 22m v1.18.0
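The same check can be automated, for example while waiting for the network plugin to come up. The sketch below counts nodes whose STATUS column is not exactly Ready in text shaped like the kubectl get nodes output; the function name `count_not_ready` is illustrative and it reads from standard input.

```shell
#!/usr/bin/env bash
# Count nodes whose STATUS (2nd column) is not exactly "Ready".
# Reads `kubectl get nodes` style text from stdin; the header line is skipped.
count_not_ready() {
  awk 'NR > 1 && NF > 0 && $2 != "Ready" { n++ } END { print n + 0 }'
}

# Demo with the sample output from 2.4.4.5.3; on the master one would run:
#   kubectl get nodes | count_not_ready
count_not_ready <<'EOF'
NAME STATUS ROLES AGE VERSION
k8smaster NotReady master 14h v1.18.0
k8sworker1 NotReady <none> 27s v1.18.0
k8sworker2 NotReady <none> 11s v1.18.0
EOF
```

A result of 0 means every listed node reports Ready.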

Create a pod in the Kubernetes cluster to verify that it runs normally:

[root@k8smaster admin]# kubectl create deployment nginx --image=nginx

deployment.apps/nginx created

## List pods, the smallest unit in k8s

[root@k8smaster admin]# kubectl get pod

NAME READY STATUS RESTARTS AGE

nginx-f89759699-jqtb8 1/1 Running 0 40s

## Expose port 80 of the nginx container externally

kubectl expose deployment nginx --port=80 --type=NodePort

## Check the status of the pod and the svc; note that the command below uses a comma

kubectl get pod,svc

[root@k8smaster admin]# kubectl get pod,svc

NAME READY STATUS RESTARTS AGE

pod/nginx-f89759699-jqtb8 1/1 Running 0 3m32s

NAME TYPE CLUSTER-IP EXTERNAL-IP PORT(S) AGE

service/kubernetes ClusterIP 10.96.0.1 <none> 443/TCP 14h

service/nginx NodePort 10.96.115.205 <none> 80:30315/TCP 62s
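In the PORT(S) column, 80:30315/TCP means that Service port 80 is published on port 30315 of every node, so nginx should answer at http://<any-node-IP>:30315. A sketch of pulling that port out of the column value follows; the function name `node_port_of` is illustrative.

```shell
#!/usr/bin/env bash
# Extract the node port from a PORT(S) value such as "80:30315/TCP".
node_port_of() {
  local ports="$1"
  local rest="${ports#*:}"      # drop "80:"  -> "30315/TCP"
  printf '%s\n' "${rest%%/*}"   # drop "/TCP" -> "30315"
}

node_port="$(node_port_of "80:30315/TCP")"
# Only prints the command to try; 192.168.226.142 is one of the worker nodes.
echo "try: curl http://192.168.226.142:${node_port}"
```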

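2.4.4.8 Summary

1. Create 3 virtual machines and install CentOS 7.x

2. Initialize the operating system on all three machines

3. Install docker, kubelet, kubeadm, and kubectl on all three nodes

4. Run kubeadm init on the master node

5. Run kubeadm join on the worker nodes to add them to the cluster

6. Configure the network plugin

2.5 Kubernetes Cluster Setup (Binary Method): a read-through is enough

---------- A note up front

Note: a binary setup means doing by hand everything that kubeadm automates. Having followed it through once and been worn out by it, I now appreciate how kind kubeadm is. My personal suggestion is to watch the videos for the binary method a few times rather than follow along.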

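2.5.1 Installation Requirements

Before starting, the machines for the Kubernetes cluster must meet the following conditions:

(1) One or more machines running CentOS 7.x x86_64

(2) Hardware: 2 GB RAM or more, 2 CPUs or more, 30 GB disk or more

(3) Network connectivity between all machines in the cluster

(4) Internet access to pull images; if the servers cannot reach the internet, download the images in advance and import them on the nodes

(5) Swap disabled

2.5.2 Preparation

- 3 virtual machines (151, 152, 153) with CentOS 7 installed

- Hardware: 2 GB RAM or more, 2 CPUs or more, 30 GB disk or more

- Network connectivity between all machines in the cluster

- Internet access, needed to pull images

- Swap disabled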

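2.5.3 System Initialization on the Three Servers

2.5.3.1 System Initialization

Same as 2.4.3: steps 1, 2, 3, 6, and 7 are carried over unchanged; only steps 4 and 5 differ.

- 4). Set the hostname:

hostnamectl set-hostname <hostname>

## on the 151 machine

hostnamectl set-hostname k8smaster151

## on the 152 machine

hostnamectl set-hostname k8sworker152

## on the 153 machine

hostnamectl set-hostname k8sworker153

- 5). Add hosts entries on the master: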

cat >> /etc/hosts << EOF

192.168.226.151 k8smaster151

192.168.226.152 k8sworker152

192.168.226.153 k8sworker153

EOF

2.5.3.2 Adding the Aliyun YUM Repository

See 2.4.4.2 Adding the Aliyun YUM Repository.

2.5.3.3 Installing kubeadm, kubelet, and kubectl

Install on all three machines:

yum install -y kubelet-1.19.16 kubectl-1.19.16 kubeadm-1.19.16

systemctl daemon-reload

systemctl start kubelet

systemctl enable kubelet

2.5.3.4 Server Plan:

| Role | IP | Components |
|---|---|---|
| k8s-master | 192.168.226.151 | kube-apiserver, kube-controller-manager, kube-scheduler, etcd |
| k8s-worker1 | 192.168.226.152 | kubelet, kube-proxy, docker, etcd |
| k8s-worker2 | 192.168.226.153 | kubelet, kube-proxy, docker, etcd |

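2.5.4 Self-Signed Certificates for Etcd

Etcd is a distributed key-value store that Kubernetes uses for its data, so an Etcd database is prepared first. To avoid a single point of failure, Etcd should be deployed as a cluster. Three machines are used here, which tolerates the failure of 1 machine; a cluster of 5 machines would tolerate 2 failures.

| Node name | IP |
|---|---|
| etcd-1 | 192.168.226.151 |
| etcd-2 | 192.168.226.152 |
| etcd-3 | 192.168.226.153 |

Note: to save machines, etcd shares the K8s node machines here.

It can also be deployed outside the k8s cluster, as long as the apiserver can connect to it.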
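The fault-tolerance figures follow from etcd's majority rule: a cluster of n members needs floor(n/2) + 1 of them to agree, and it survives the loss of the remainder. A quick sketch of the arithmetic (the function names are illustrative):

```shell
#!/usr/bin/env bash
# Quorum size for an etcd cluster of $1 members: floor(n/2) + 1.
etcd_quorum() { echo $(( $1 / 2 + 1 )); }

# Number of members that can fail while a quorum still remains.
etcd_fault_tolerance() { echo $(( $1 - ($1 / 2 + 1) )); }

for n in 1 3 4 5; do
  echo "members=$n quorum=$(etcd_quorum "$n") tolerated_failures=$(etcd_fault_tolerance "$n")"
done
```

An even member count adds no tolerance (4 members still tolerate only 1 failure), which is why odd cluster sizes such as 3 or 5 are the usual choice.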

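2.5.4.1 Preparing the cfssl Certificate Tools

cfssl is an open-source certificate management tool that generates certificates from JSON files and is more convenient to use than openssl.

Any one server will do; the Master node is used here.

Run the commands below on the master node; the same tools are also copied to the other machines (151/152/153).

## Download the files into the current directory (download once, then copy to the other machines remotely)

wget --no-check-certificate https://pkg.cfssl.org/R1.2/cfssl_linux-amd64

wget --no-check-certificate https://pkg.cfssl.org/R1.2/cfssljson_linux-amd64

wget --no-check-certificate https://pkg.cfssl.org/R1.2/cfssl-certinfo_linux-amd64

## Make them executable and move them into place

chmod +x cfssl_linux-amd64 cfssljson_linux-amd64 cfssl-certinfo_linux-amd64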

scp cfssl_linux-amd64 [email protected]:/usr/local/bin/cfssl

scp cfssljson_linux-amd64 [email protected]:/usr/local/bin/cfssljson

scp cfssl-certinfo_linux-amd64 [email protected]:/usr/local/bin/cfssl-certinfo

mv cfssl_linux-amd64 /usr/local/bin/cfssl

mv cfssljson_linux-amd64 /usr/local/bin/cfssljson

mv cfssl-certinfo_linux-amd64 /usr/local/bin/cfssl-certinfo

Note: if the error below appears, add --no-check-certificate to skip certificate verification.

[root@k8smaster147 admin]# wget https://pkg.cfssl.org/R1.2/cfssl_linux-amd64

--2022-05-28 00:36:14-- https://pkg.cfssl.org/R1.2/cfssl_linux-amd64

Resolving pkg.cfssl.org (pkg.cfssl.org)... 172.64.151.245, 104.18.36.11, 2606:4700:4400::ac40:97f5, ...

Connecting to pkg.cfssl.org (pkg.cfssl.org)|172.64.151.245|:443... connected.

Unable to establish SSL connection.

Downloads from inside China occasionally fail; retry a few times.

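2.5.4.2 Generating the Etcd Certificates

- 1). Self-signed certificate authority (CA)

## Create the working directories

# under the current user's home directory (/root)

mkdir -p ~/TLS/{etcd,k8s}

cd ~/TLS/etcd

## ~ is the current user's home directory (/root)

[root@k8smaster147 local]# cd ~

[root@k8smaster147 ~]# pwd

/root

- 2). Self-sign the CA

Create two files: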

cat > ca-config.json<< EOF

{

"signing": {

"default": {

"expiry": "87600h"

},

"profiles": {

"www": {

"expiry": "87600h",

"usages": [

"signing",

"key encipherment",

"server auth",

"client auth"

]

}

}

}

}

EOF

cat > ca-csr.json<< EOF

{

"CN": "etcd CA",

"key": {

"algo": "rsa",

"size": 2048

},

"names": [

{

"C": "CN",

"L": "Beijing",

"ST": "Beijing"

}

]

}

EOF

- 3). Generate the etcd CA certificate

cfssl gencert -initca ca-csr.json | cfssljson -bare ca -

ls *pem

## the two generated files

ca-key.pem ca.pem

2.5.4.3 Issuing the Etcd HTTPS Certificate with the Self-Signed CA

- 1). Create the certificate signing request file:

cat > server-csr.json<< EOF

{

"CN": "etcd",

"hosts": [

"192.168.226.151",

"192.168.226.152",

"192.168.226.153"

],

"key": {

"algo": "rsa",

"size": 2048

},

"names": [

{

"C": "CN",

"L": "BeiJing",

"ST": "BeiJing"

}

]

}

EOF

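Note: the IPs in the hosts field of the file above are the internal cluster communication IPs of all etcd nodes, and not one of them may be missing. To make later expansion easier, a few reserved IPs can be added as well. JSON does not allow comments, so such notes must stay outside the file.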
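Since a single missing IP breaks TLS between the etcd peers, it can be worth checking the request file before signing. The sketch below greps a CSR JSON file for each expected node IP; the function name `csr_has_hosts` is illustrative, and the demo writes a sample file to a temporary path instead of touching ~/TLS/etcd.

```shell
#!/usr/bin/env bash
# Report whether every given IP appears as a quoted string in a CSR JSON file.
# $1 = path to the JSON file, remaining args = IPs that must be present.
csr_has_hosts() {
  local file="$1" ip missing=0
  shift
  for ip in "$@"; do
    # -F: fixed string, so the dots in the IP are not treated as wildcards.
    if ! grep -qF "\"${ip}\"" "$file"; then
      echo "missing from hosts: ${ip}" >&2
      missing=1
    fi
  done
  return "$missing"
}

sample_csr="$(mktemp)"
cat > "$sample_csr" <<'EOF'
{ "CN": "etcd", "hosts": ["192.168.226.151", "192.168.226.152", "192.168.226.153"] }
EOF

if csr_has_hosts "$sample_csr" 192.168.226.151 192.168.226.152 192.168.226.153; then
  echo "all etcd node IPs are listed"
fi
```

This is only a text check; it does not confirm that the IPs sit inside the hosts array, so it complements rather than replaces inspecting the signed certificate.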

- 2). Generate the certificate

## The command is as follows

## cfssl gencert -ca=ca.pem -ca-key=ca-key.pem -config=ca-config.json -profile=www server-csr.json | cfssljson -bare server

[root@k8smaster151 etcd]# cfssl gencert -ca=ca.pem -ca-key=ca-key.pem -config=ca-config.json -profile=www server-csr.json | cfssljson -bare server

2022/05/29 11:50:37 [INFO] generate received request

2022/05/29 11:50:37 [INFO] received CSR

2022/05/29 11:50:37 [INFO] generating key: rsa-2048

2022/05/29 11:50:37 [INFO] encoded CSR

2022/05/29 11:50:37 [INFO] signed certificate with serial number 590971995686843080879961781238516631227185575525

2022/05/29 11:50:37 [WARNING] This certificate lacks a "hosts" field. This makes it unsuitable for

websites. For more information see the Baseline Requirements for the Issuance and Management

of Publicly-Trusted Certificates, v.1.1.6, from the CA/Browser Forum (https://cabforum.org);

specifically, section 10.2.3 ("Information Requirements").

## List the certificates

[root@k8smaster151 etcd]# ls server*pem

server-key.pem server.pem

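2.5.4.4 Downloading the etcd Binaries from GitHub

If GitHub is unreachable, see:

https://www.jianshu.com/p/eeb984057fb9

The etcd website links to the GitHub releases; alternatively, download version 3.4.9 directly from the address below.

Download: https://github.com/etcd-io/etcd/releases/download/v3.4.9/etcd-v3.4.9-linux-amd64.tar.gz

2.5.4.5 Deploying the Etcd Cluster

The following is done on node 151. To keep things simple, all files generated on node 151 are later copied to node 152 and node 153.

- 1). Create the working directories and unpack the binary package

mkdir /opt/etcd/{bin,cfg,ssl} -p

tar zxvf etcd-v3.4.9-linux-amd64.tar.gz

mv etcd-v3.4.9-linux-amd64/{etcd,etcdctl} /opt/etcd/bin/

- 2). Create the etcd configuration file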

cat > /opt/etcd/cfg/etcd.conf << EOF

#[Member]

ETCD_NAME="etcd-1"

ETCD_DATA_DIR="/var/lib/etcd/default.etcd"

ETCD_LISTEN_PEER_URLS="https://192.168.226.151:2380"

ETCD_LISTEN_CLIENT_URLS="https://192.168.226.151:2379"

#[Clustering]

ETCD_INITIAL_ADVERTISE_PEER_URLS="https://192.168.226.151:2380"

ETCD_ADVERTISE_CLIENT_URLS="https://192.168.226.151:2379"

ETCD_INITIAL_CLUSTER="etcd-1=https://192.168.226.151:2380,etcd-2=https://192.168.226.152:2380,etcd-3=https://192.168.226.153:2380"

ETCD_INITIAL_CLUSTER_TOKEN="etcd-cluster"

ETCD_INITIAL_CLUSTER_STATE="new"

EOF

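Parameters:

ETCD_NAME: node name, unique within the cluster

ETCD_DATA_DIR: data directory

ETCD_LISTEN_PEER_URLS: listen address for cluster (peer) communication

ETCD_LISTEN_CLIENT_URLS: listen address for client access

ETCD_INITIAL_ADVERTISE_PEER_URLS: peer address advertised to the cluster

ETCD_ADVERTISE_CLIENT_URLS: client address advertised to clients

ETCD_INITIAL_CLUSTER: addresses of the cluster members

ETCD_INITIAL_CLUSTER_TOKEN: cluster token

ETCD_INITIAL_CLUSTER_STATE: state when joining; new means a new cluster, existing means joining an existing cluster

- 3). Manage etcd with systemd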

cat > /usr/lib/systemd/system/etcd.service << EOF

[Unit]

Description=Etcd Server

After=network.target

After=network-online.target

Wants=network-online.target

[Service]

Type=notify

EnvironmentFile=/opt/etcd/cfg/etcd.conf

ExecStart=/opt/etcd/bin/etcd \

--cert-file=/opt/etcd/ssl/server.pem \

--key-file=/opt/etcd/ssl/server-key.pem \

--peer-cert-file=/opt/etcd/ssl/server.pem \

--peer-key-file=/opt/etcd/ssl/server-key.pem \

--trusted-ca-file=/opt/etcd/ssl/ca.pem \

--peer-trusted-ca-file=/opt/etcd/ssl/ca.pem \

--logger=zap

Restart=on-failure

LimitNOFILE=65536

[Install]

WantedBy=multi-user.target

EOF

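- 4). Copy the certificates generated earlier

Copy the certificates into the path referenced by the unit file:

cp ~/TLS/etcd/ca*pem ~/TLS/etcd/server*pem /opt/etcd/ssl/

- 5). Start etcd and enable it on boot

On the first node, systemctl start etcd may appear to hang until a second member has started, because a new three-member cluster needs a majority before it reports ready.

systemctl daemon-reload

systemctl start etcd

systemctl enable etcd

- 6). Copy all files generated on node 1 to node 2 and node 3

Node 2 - slave152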

scp -r /opt/etcd/ root@192.168.226.152:/opt/

scp /usr/lib/systemd/system/etcd.service root@192.168.226.152:/usr/lib/systemd/system/

Node 3 - slave153

scp -r /opt/etcd/ root@192.168.226.153:/opt/

scp /usr/lib/systemd/system/etcd.service root@192.168.226.153:/usr/lib/systemd/system/

- 7). On node 2 and node 3, edit etcd.conf and change the node name and the server IP to match the current machine

----------- Node 2 - slave152

vi /opt/etcd/cfg/etcd.conf

#[Member]

ETCD_NAME="etcd-2"

ETCD_DATA_DIR="/var/lib/etcd/default.etcd"

ETCD_LISTEN_PEER_URLS="https://192.168.226.152:2380"

ETCD_LISTEN_CLIENT_URLS="https://192.168.226.152:2379"

#[Clustering]

ETCD_INITIAL_ADVERTISE_PEER_URLS="https://192.168.226.152:2380"

ETCD_ADVERTISE_CLIENT_URLS="https://192.168.226.152:2379"

ETCD_INITIAL_CLUSTER="etcd-1=https://192.168.226.151:2380,etcd-2=https://192.168.226.152:2380,etcd-3=https://192.168.226.153:2380"

ETCD_INITIAL_CLUSTER_TOKEN="etcd-cluster"

ETCD_INITIAL_CLUSTER_STATE="new"

----------- Node 3 - slave153

vi /opt/etcd/cfg/etcd.conf

#[Member]

ETCD_NAME="etcd-3"

ETCD_DATA_DIR="/var/lib/etcd/default.etcd"

ETCD_LISTEN_PEER_URLS="https://192.168.226.153:2380"

ETCD_LISTEN_CLIENT_URLS="https://192.168.226.153:2379"

#[Clustering]

ETCD_INITIAL_ADVERTISE_PEER_URLS="https://192.168.226.153:2380"

ETCD_ADVERTISE_CLIENT_URLS="https://192.168.226.153:2379"

ETCD_INITIAL_CLUSTER="etcd-1=https://192.168.226.151:2380,etcd-2=https://192.168.226.152:2380,etcd-3=https://192.168.226.153:2380"

ETCD_INITIAL_CLUSTER_TOKEN="etcd-cluster"

ETCD_INITIAL_CLUSTER_STATE="new"
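The three etcd.conf files differ only in the node name and the local IP, so they can also be generated instead of edited by hand. The following sketch assumes the same names, ports, and member list as above; the function name `render_etcd_conf` and the variable `ETCD_MEMBERS` are illustrative, and the output goes to standard output rather than to /opt/etcd/cfg/etcd.conf.

```shell
#!/usr/bin/env bash
# Member list shared by all nodes (values taken from the configuration above).
ETCD_MEMBERS="etcd-1=https://192.168.226.151:2380,etcd-2=https://192.168.226.152:2380,etcd-3=https://192.168.226.153:2380"

# Print an etcd.conf for one member. $1 = node name, $2 = that node's IP.
render_etcd_conf() {
  local name="$1" ip="$2"
  cat <<EOF
#[Member]
ETCD_NAME="${name}"
ETCD_DATA_DIR="/var/lib/etcd/default.etcd"
ETCD_LISTEN_PEER_URLS="https://${ip}:2380"
ETCD_LISTEN_CLIENT_URLS="https://${ip}:2379"
#[Clustering]
ETCD_INITIAL_ADVERTISE_PEER_URLS="https://${ip}:2380"
ETCD_ADVERTISE_CLIENT_URLS="https://${ip}:2379"
ETCD_INITIAL_CLUSTER="${ETCD_MEMBERS}"
ETCD_INITIAL_CLUSTER_TOKEN="etcd-cluster"
ETCD_INITIAL_CLUSTER_STATE="new"
EOF
}

# Preview the file for node 2; on that node the output would be redirected
# to /opt/etcd/cfg/etcd.conf.
render_etcd_conf etcd-2 192.168.226.152
```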

- 8). Finally, start etcd on node 2 and node 3 and enable it on boot, as in step 5

- 9). Test

## Command

ETCDCTL_API=3 /opt/etcd/bin/etcdctl --cacert=/opt/etcd/ssl/ca.pem \

--cert=/opt/etcd/ssl/server.pem --key=/opt/etcd/ssl/server-key.pem \

--endpoints="https://192.168.226.151:2379,https://192.168.226.152:2379,https://192.168.226.153:2379" \

endpoint health

## Successful result

https://192.168.226.151:2379 is healthy: successfully committed proposal: took = 9.400731ms

https://192.168.226.153:2379 is healthy: successfully committed proposal: took = 18.032509ms

https://192.168.226.152:2379 is healthy: successfully committed proposal: took = 28.848643ms

2.5.5 Managing Docker with systemd on All Nodes

2.5.5.1 Downloading Docker

Download Docker on all nodes.

Reference: https://blog.csdn.net/BogerPeng/article/details/124286266

2.5.5.2 Managing Docker with systemd

Do the following on all nodes:

cat > /usr/lib/systemd/system/docker.service << EOF

[Unit]

Description=Docker Application Container Engine

Documentation=https://docs.docker.com

After=network-online.target firewalld.service

Wants=network-online.target

[Service]

Type=notify

ExecStart=/usr/bin/dockerd

ExecReload=/bin/kill -s HUP \$MAINPID

LimitNOFILE=infinity

LimitNPROC=infinity

LimitCORE=infinity

TimeoutStartSec=0

Delegate=yes

KillMode=process

Restart=on-failure

StartLimitBurst=3

StartLimitInterval=60s

[Install]

WantedBy=multi-user.target

EOF

2.5.6 Deploying the Master Node

2.5.6.1 Generating the kube-apiserver Certificates

2.5.6.1.1 Self-Signed Certificate Authority (CA)

## Go to the k8s directory

[root@k8smaster147 k8s]# pwd

/root/TLS/k8s

cat > ca-config.json<< EOF

{

"signing": {

"default": {

"expiry": "87600h"

},

"profiles": {

"kubernetes": {

"expiry": "87600h",

"usages": [

"signing",

"key encipherment",

"server auth",

"client auth"

]

}

}

}

}

EOF

cat > ca-csr.json<< EOF

{

"CN": "kubernetes",

"key": {

"algo": "rsa",

"size": 2048

},

"names": [

{

"C": "CN",

"L": "Beijing",

"ST": "Beijing",

"O": "k8s",

"OU": "System"

}

]

}

EOF

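2.5.6.1.2 Generating the Certificate

cfssl gencert -initca ca-csr.json | cfssljson -bare ca -

ls *pem

## the two generated files

ca-key.pem ca.pem

2.5.6.1.3 Issuing the kube-apiserver HTTPS Certificate with the Self-Signed CA

Create the certificate signing request file

## under the current user's home directory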

cd /root/TLS/k8s

cat > server-csr.json<< EOF

{

"CN": "kubernetes",

"hosts": [

"10.0.0.1",

"127.0.0.1",

"192.168.226.151",

"192.168.226.152",

"192.168.226.153",

"kubernetes",

"kubernetes.default",

"kubernetes.default.svc",

"kubernetes.default.svc.cluster",

"kubernetes.default.svc.cluster.local"

],

"key": {

"algo": "rsa",

"size": 2048

},

"names": [

{

"C": "CN",

"L": "BeiJing",

"ST": "BeiJing",

"O": "k8s",

"OU": "System"

}

]

}

EOF

Generate the certificate:

## Command

cfssl gencert -ca=ca.pem -ca-key=ca-key.pem -config=ca-config.json -profile=kubernetes server-csr.json | cfssljson -bare server

[root@k8smaster151 k8s]# ls server*pem

server-key.pem server.pem

2.5.6.2 Downloading the Binaries from GitHub

Download pages:

https://github.com/kubernetes/kubernetes/blob/master/CHANGELOG

https://github.com/kubernetes/kubernetes/blob/master/CHANGELOG/CHANGELOG-1.19.md#server-binaries

The server package alone is enough.

After downloading, copy it to the /root directory on the master node.

2.5.6.3 Unpacking the Binary Package

[root@k8smaster151 ~]# pwd

/root

[root@k8smaster151 ~]# ls

anaconda-ks.cfg initial-setup-ks.cfg kubernetes-server-linux-amd64.tar.gz TLS

## Unpack the k8s server package

tar zxvf kubernetes-server-linux-amd64.tar.gz

mkdir -p /opt/kubernetes/{bin,cfg,ssl,logs}

cd kubernetes/server/bin

cp kube-apiserver kube-scheduler kube-controller-manager /opt/kubernetes/bin

cp kubectl /usr/bin

2.5.6.4 Deploying kube-apiserver on All Machines

Otherwise the following error appears:

The connection to the server localhost:8080 was refused - did you specify the right host or port?

Reference: https://www.e-learn.cn/topic/2103718

2.5.6.4.1 Creating the Configuration File

cat > /opt/kubernetes/cfg/kube-apiserver.conf << EOF

KUBE_APISERVER_OPTS="--logtostderr=false \\

--v=2 \\

--log-dir=/opt/kubernetes/logs \\

--etcd-servers=https://192.168.226.151:2379,https://192.168.226.152:2379,https://192.168.226.153:2379 \\

--bind-address=192.168.226.151 \\

--secure-port=6443 \\

--advertise-address=192.168.226.151 \\

--allow-privileged=true \\

--service-cluster-ip-range=10.0.0.0/24 \\

--enable-admission-plugins=NamespaceLifecycle,LimitRanger,ServiceAccount,ResourceQuota,NodeRestriction \\

--authorization-mode=RBAC,Node \\

--enable-bootstrap-token-auth=true \\

--token-auth-file=/opt/kubernetes/cfg/token.csv \\

--service-node-port-range=30000-32767 \\

--kubelet-client-certificate=/opt/kubernetes/ssl/server.pem \\

--kubelet-client-key=/opt/kubernetes/ssl/server-key.pem \\

--tls-cert-file=/opt/kubernetes/ssl/server.pem \\

--tls-private-key-file=/opt/kubernetes/ssl/server-key.pem \\

--client-ca-file=/opt/kubernetes/ssl/ca.pem \\

--service-account-key-file=/opt/kubernetes/ssl/ca-key.pem \\

--etcd-cafile=/opt/etcd/ssl/ca.pem \\

--etcd-certfile=/opt/etcd/ssl/server.pem \\

--etcd-keyfile=/opt/etcd/ssl/server-key.pem \\

--audit-log-maxage=30 \\

--audit-log-maxbackup=3 \\

--audit-log-maxsize=100 \\

--audit-log-path=/opt/kubernetes/logs/k8s-audit.log"

EOF

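Note: of the two backslashes at the end of each line above, the first escapes the second. The unquoted heredoc (<< EOF) therefore writes one literal backslash, the line-continuation character, into the file and keeps the line breaks instead of joining the lines.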
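The escaping can be checked without touching the real configuration. The sketch below writes three small files through an unquoted heredoc and counts the resulting lines; the scratch directory and the DEMO_OPTS name are made up for illustration and are not part of the deployment.

```shell
#!/usr/bin/env bash
# Show how an unquoted heredoc (<< EOF) treats backslashes.
# demo_dir and DEMO_OPTS are illustrative names only.
demo_dir="$(mktemp -d)"

# "\\" is written as one literal backslash, so the file keeps its line breaks.
cat > "${demo_dir}/escaped.conf" << EOF
DEMO_OPTS="--v=2 \\
--log-dir=/tmp/logs"
EOF

# A single backslash before the newline is consumed by the shell, joining the lines.
cat > "${demo_dir}/joined.conf" << EOF
DEMO_OPTS="--v=2 \
--log-dir=/tmp/logs"
EOF

# "\$" keeps a literal dollar sign instead of expanding the variable now.
cat > "${demo_dir}/dollar.conf" << EOF
ExecStart=/bin/echo \$DEMO_OPTS
EOF

escaped_lines="$(wc -l < "${demo_dir}/escaped.conf" | tr -d ' ')"
joined_lines="$(wc -l < "${demo_dir}/joined.conf" | tr -d ' ')"
dollar_line="$(cat "${demo_dir}/dollar.conf")"
echo "escaped.conf lines: ${escaped_lines}, joined.conf lines: ${joined_lines}"
```

The same rule explains the \$KUBE_APISERVER_OPTS form in the systemd unit files: the variable has to reach the unit file unexpanded so that systemd, not the shell writing the file, resolves it.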

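############ Parameter meanings ############

--logtostderr: whether to log to stderr; false here, so logs go to files under --log-dir

--v: log verbosity level

--log-dir: log directory

--etcd-servers: etcd cluster addresses

--bind-address: listen address

--secure-port: HTTPS secure port

--advertise-address: address advertised to the rest of the cluster

--allow-privileged: allow privileged containers

--service-cluster-ip-range: virtual IP range for Services

--enable-admission-plugins: admission control plugins

--authorization-mode: authorization modes; enables RBAC and the Node authorizer

--enable-bootstrap-token-auth: enable bootstrap token authentication, used by TLS bootstrapping

--token-auth-file: bootstrap token file

--service-node-port-range: default port range allocated to NodePort Services

--kubelet-client-xxx: client certificate the apiserver uses to access kubelet

--tls-xxx-file: apiserver HTTPS certificate

--etcd-xxxfile: certificates for connecting to the etcd cluster

--audit-log-xxx: audit log settings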

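2.5.6.4.2 Copy the certificates generated earlier

Copy the certificates generated earlier to the paths referenced in the configuration file: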

cp ~/TLS/k8s/ca*pem ~/TLS/k8s/server*pem /opt/kubernetes/ssl/

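2.5.6.4.3 Enable the TLS Bootstrapping mechanism

TLS Bootstrapping: once the Master apiserver enables TLS authentication, kubelet and kube-proxy on each Node must present a valid CA-signed certificate to communicate with kube-apiserver. With many Nodes, issuing these client certificates by hand is a lot of work and makes scaling the cluster harder. To simplify this, Kubernetes introduced the TLS bootstrapping mechanism to issue client certificates automatically: kubelet connects as a low-privilege user and requests a certificate from the apiserver, and the kubelet certificate is signed dynamically through the apiserver's certificate API.

This approach is therefore strongly recommended on Nodes. At present it is used mainly for kubelet; kube-proxy still uses a single certificate that we issue ourselves.

[Figure: TLS bootstrapping workflow]

Create the token file referenced in the configuration above.

The token can be replaced with one you generate yourself: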

head -c 16 /dev/urandom | od -An -t x | tr -d ' '

[root@k8smaster147 ssl]# head -c 16 /dev/urandom | od -An -t x | tr -d ' '

e4ab4214db9a15193216d2007f1f8dad

## Format: token,user name,UID,user group

cat > /opt/kubernetes/cfg/token.csv << EOF

e4ab4214db9a15193216d2007f1f8dad,kubelet-bootstrap,10001,"system:node-bootstrapper"

EOF
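The two steps above (generate a token, write token.csv) can be combined and sanity-checked in one go. This is a minimal sketch that writes to a scratch directory rather than /opt/kubernetes/cfg; out_dir and the checks are illustrative additions, while the user name, UID, and group are the ones used in this section.

```shell
#!/usr/bin/env bash
# Generate a random bootstrap token and write a token.csv in the
# "token,user name,UID,user group" format described above.
# out_dir is a scratch directory for illustration only.
out_dir="$(mktemp -d)"

# 16 random bytes rendered as 32 lowercase hex characters.
token="$(head -c 16 /dev/urandom | od -An -t x | tr -d ' \n')"

cat > "${out_dir}/token.csv" << EOF
${token},kubelet-bootstrap,10001,"system:node-bootstrapper"
EOF

# Sanity checks: token shape and number of comma-separated fields.
if ! printf '%s' "${token}" | grep -Eq '^[0-9a-f]{32}$'; then
  echo "unexpected token format: ${token}" >&2
  exit 1
fi
field_count="$(awk -F',' '{ print NF }' "${out_dir}/token.csv")"
echo "token.csv written to ${out_dir} with ${field_count} fields"
```

Whatever token ends up in token.csv must later be used unchanged as TOKEN when bootstrap.kubeconfig is generated.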

2.5.6.4.4 Manage kube-apiserver with systemd

cat > /usr/lib/systemd/system/kube-apiserver.service << EOF

[Unit]

Description=Kubernetes API Server

Documentation=https://github.com/kubernetes/kubernetes

[Service]

EnvironmentFile=/opt/kubernetes/cfg/kube-apiserver.conf

ExecStart=/opt/kubernetes/bin/kube-apiserver \$KUBE_APISERVER_OPTS

Restart=on-failure

[Install]

WantedBy=multi-user.target

EOF

2.5.6.4.5 Start the service and enable it at boot

systemctl daemon-reload

systemctl start kube-apiserver

systemctl enable kube-apiserver

Check:

[root@k8smaster147 k8s]# ps -ef | grep kube

2.5.6.4.6 Authorize the kubelet-bootstrap user to request certificates

kubectl create clusterrolebinding kubelet-bootstrap \

--clusterrole=system:node-bootstrapper \

--user=kubelet-bootstrap

2.5.6.5 Deploy kube-controller-manager

2.5.6.5.1 Create the configuration file

cat > /opt/kubernetes/cfg/kube-controller-manager.conf << EOF

KUBE_CONTROLLER_MANAGER_OPTS="--logtostderr=false \\

--v=2 \\

--log-dir=/opt/kubernetes/logs \\

--leader-elect=true \\

--master=127.0.0.1:8080 \\

--bind-address=127.0.0.1 \\

--allocate-node-cidrs=true \\

--cluster-cidr=10.244.0.0/16 \\

--service-cluster-ip-range=10.0.0.0/24 \\

--cluster-signing-cert-file=/opt/kubernetes/ssl/ca.pem \\

--cluster-signing-key-file=/opt/kubernetes/ssl/ca-key.pem \\

--root-ca-file=/opt/kubernetes/ssl/ca.pem \\

--service-account-private-key-file=/opt/kubernetes/ssl/ca-key.pem \\

--experimental-cluster-signing-duration=87600h0m0s"

EOF

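Parameter notes:

--master: connect to the apiserver through the local insecure port 8080.

--leader-elect: when several instances of this component run, elect a leader automatically (HA).

--cluster-signing-cert-file/--cluster-signing-key-file: the CA that automatically issues kubelet certificates; it must be the same CA the apiserver uses.

2.5.6.5.2 Manage kube-controller-manager with systemd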

cat > /usr/lib/systemd/system/kube-controller-manager.service << EOF

[Unit]

Description=Kubernetes Controller Manager

Documentation=https://github.com/kubernetes/kubernetes

[Service]

EnvironmentFile=/opt/kubernetes/cfg/kube-controller-manager.conf

ExecStart=/opt/kubernetes/bin/kube-controller-manager \$KUBE_CONTROLLER_MANAGER_OPTS

Restart=on-failure

[Install]

WantedBy=multi-user.target

EOF

2.5.6.5.3 Start the service and enable it at boot

systemctl daemon-reload

systemctl start kube-controller-manager

systemctl enable kube-controller-manager

2.5.6.6 Deploy kube-scheduler

2.5.6.6.1 Create the configuration file

cat > /opt/kubernetes/cfg/kube-scheduler.conf << EOF

KUBE_SCHEDULER_OPTS="--logtostderr=false \\

--v=2 \\

--log-dir=/opt/kubernetes/logs \\

--leader-elect \\

--master=127.0.0.1:8080 \\

--bind-address=127.0.0.1"

EOF

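## --master: connect to the apiserver through the local insecure port 8080.

## --leader-elect: when several instances of this component run, elect a leader automatically (HA).

2.5.6.6.2 Manage kube-scheduler with systemd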

cat > /usr/lib/systemd/system/kube-scheduler.service << EOF

[Unit]

Description=Kubernetes Scheduler

Documentation=https://github.com/kubernetes/kubernetes

[Service]

EnvironmentFile=/opt/kubernetes/cfg/kube-scheduler.conf

ExecStart=/opt/kubernetes/bin/kube-scheduler \$KUBE_SCHEDULER_OPTS

Restart=on-failure

[Install]

WantedBy=multi-user.target

EOF

2.5.6.6.3 Start the service and enable it at boot

systemctl daemon-reload

systemctl start kube-scheduler

systemctl enable kube-scheduler

2.5.6.6.4 Check the cluster status

All components have now been started. Use kubectl to check the current status of the cluster components:

[root@k8smaster147 admin]# kubectl get cs

Warning: v1 ComponentStatus is deprecated in v1.19+

NAME                 STATUS    MESSAGE             ERROR
scheduler            Healthy   ok
controller-manager   Healthy   ok
etcd-0               Healthy   {"health":"true"}
etcd-1               Healthy   {"health":"true"}
etcd-2               Healthy   {"health":"true"}

The output above shows that the Master node components are running normally.

## To follow the scheduler logs:

journalctl -u kube-scheduler -f

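2.5.7 Deploy the worker node components

The steps below are still carried out on the Master Node, which therefore also serves as a Worker Node.

Note: once deployment is finished, the configuration is copied to the other two worker nodes, adjusted, and started there.

2.5.7.1 Create the working directory and copy the binaries

Create the working directory on all worker nodes (151/152/153):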

mkdir -p /opt/kubernetes/{bin,cfg,ssl,logs}

On the master (151), copy the binaries locally and then to 152 and 153:

cd /root/kubernetes/server/bin

cp kubelet kube-proxy /opt/kubernetes/bin # local copy

# On worker nodes 152/153 (harmless if the directories already exist)

mkdir -p /opt/kubernetes/bin

# On master node 151, copy to the workers

cd /root/kubernetes/server/bin

scp kubectl root@192.168.226.152:/usr/bin

scp kubectl root@192.168.226.153:/usr/bin

scp kubelet kube-proxy root@192.168.226.152:/opt/kubernetes/bin

scp kubelet kube-proxy root@192.168.226.153:/opt/kubernetes/bin
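With more than a couple of workers, typing one scp line per node gets error-prone. The following is a sketch of a dry-run loop that only prints the copy commands for the two workers named in this section; WORKER_IPS, SRC_DIR, and print_copy_commands are illustrative names, and the root login is an assumption.

```shell
#!/usr/bin/env bash
# Print (dry run) the scp commands that copy the worker binaries to each node.
# The IPs are the workers 152/153 from this section; the root login is an assumption.
WORKER_IPS="192.168.226.152 192.168.226.153"
SRC_DIR="/root/kubernetes/server/bin"

print_copy_commands() {
  for ip in ${WORKER_IPS}; do
    echo "scp ${SRC_DIR}/kubectl root@${ip}:/usr/bin"
    echo "scp ${SRC_DIR}/kubelet ${SRC_DIR}/kube-proxy root@${ip}:/opt/kubernetes/bin"
  done
}

print_copy_commands
```

Once the printed commands look right, they can be executed with `print_copy_commands | sh`.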

2.5.7.2 Deploy kubelet

2.5.7.2.1 Create the configuration file

On machines 152/153:

mkdir -p /opt/kubernetes/cfg

cat > /opt/kubernetes/cfg/kubelet.conf << EOF

KUBELET_OPTS="--logtostderr=false \\

--v=2 \\

--log-dir=/opt/kubernetes/logs \\

--hostname-override=k8sworker152 \\

--network-plugin=cni \\

--kubeconfig=/opt/kubernetes/cfg/kubelet.kubeconfig \\

--bootstrap-kubeconfig=/opt/kubernetes/cfg/bootstrap.kubeconfig \\

--config=/opt/kubernetes/cfg/kubelet-config.yml \\

--cert-dir=/opt/kubernetes/ssl \\

--pod-infra-container-image=lizhenliang/pause-amd64:3.0"

EOF

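## On machine 153, change this to

--hostname-override=k8sworker153

## Parameter meanings

--hostname-override: display name of the node, unique within the cluster

--network-plugin: enable CNI

--kubeconfig: a path that does not exist yet; the file is generated automatically and later used to connect to the apiserver

--bootstrap-kubeconfig: used on first start to request a certificate from the apiserver

--config: configuration parameter file

--cert-dir: directory where kubelet certificates are generated

--pod-infra-container-image: base image of the container that manages the Pod network; look up the image name on Docker Hub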
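Since --hostname-override is the only value in kubelet.conf that differs between nodes, the per-node change can be scripted. This is a minimal sketch using GNU sed on a throwaway copy; set_hostname_override is a hypothetical helper, not a kubelet or kubectl feature.

```shell
#!/usr/bin/env bash
# Rewrite the --hostname-override value in a kubelet.conf-style file.
# set_hostname_override is a hypothetical helper (requires GNU sed for -i).
set_hostname_override() {
  local conf_file="$1" node_name="$2"
  # Replace the non-space characters that follow "--hostname-override=".
  sed -i -E "s#(--hostname-override=)[^[:space:]]+#\\1${node_name}#" "${conf_file}"
}

# Throwaway file shaped like the configuration above (quoted heredoc: no escaping needed).
demo_conf="$(mktemp)"
cat > "${demo_conf}" << 'EOF'
KUBELET_OPTS="--logtostderr=false \
--hostname-override=k8sworker152 \
--network-plugin=cni"
EOF

set_hostname_override "${demo_conf}" "k8sworker153"
grep -- '--hostname-override' "${demo_conf}"
```

On a real node the first argument would be /opt/kubernetes/cfg/kubelet.conf.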

2.5.7.2.2 Configuration parameter file

# On machines 152/153

cat > /opt/kubernetes/cfg/kubelet-config.yml << EOF
kind: KubeletConfiguration
apiVersion: kubelet.config.k8s.io/v1beta1
address: 0.0.0.0
port: 10250
readOnlyPort: 10255
cgroupDriver: cgroupfs
clusterDNS:
- 10.0.0.2
clusterDomain: cluster.local
failSwapOn: false
authentication:
  anonymous:
    enabled: false
  webhook:
    cacheTTL: 2m0s
    enabled: true
  x509:
    clientCAFile: /opt/kubernetes/ssl/ca.pem
authorization:
  mode: Webhook
  webhook:
    cacheAuthorizedTTL: 5m0s
    cacheUnauthorizedTTL: 30s
evictionHard:
  imagefs.available: 15%
  memory.available: 100Mi
  nodefs.available: 10%
  nodefs.inodesFree: 5%
maxOpenFiles: 1000000
maxPods: 110
EOF
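The clusterDNS address (10.0.0.2) has to fall inside the apiserver's --service-cluster-ip-range (10.0.0.0/24), while the Pod range given to kube-controller-manager (10.244.0.0/16) is a separate network. A small sketch for checking such relationships with plain shell arithmetic follows; ip_to_int and ip_in_cidr are hypothetical helpers written for this note and handle IPv4 only.

```shell
#!/usr/bin/env bash
# Check whether an IPv4 address falls inside a CIDR range using shell arithmetic.
# ip_to_int and ip_in_cidr are hypothetical helpers for illustration only.
ip_to_int() {
  local IFS=.
  # Split the dotted address into four positional parameters.
  set -- $1
  echo $(( ($1 << 24) + ($2 << 16) + ($3 << 8) + $4 ))
}

ip_in_cidr() {
  local ip_int net_int prefix mask
  ip_int="$(ip_to_int "$1")"
  net_int="$(ip_to_int "${2%/*}")"
  prefix="${2#*/}"
  # Build the netmask from the prefix length, limited to 32 bits.
  mask=$(( (0xFFFFFFFF << (32 - prefix)) & 0xFFFFFFFF ))
  [ $(( ip_int & mask )) -eq $(( net_int & mask )) ]
}

if ip_in_cidr "10.0.0.2" "10.0.0.0/24"; then
  echo "clusterDNS 10.0.0.2 is inside the Service range 10.0.0.0/24"
else
  echo "clusterDNS 10.0.0.2 is outside the Service range 10.0.0.0/24"
fi
```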

2.5.7.2.3 Generate the bootstrap.kubeconfig file

## Run the following in order

KUBE_APISERVER="https://192.168.226.151:6443" # apiserver IP:PORT

TOKEN="e4ab4214db9a15193216d2007f1f8dad" # must match token.csv

# Generate the kubelet bootstrap kubeconfig file

kubectl config set-cluster kubernetes \

--certificate-authority=/opt/kubernetes/ssl/ca.pem \

--embed-certs=true \

--server=${KUBE_APISERVER} \

--kubeconfig=bootstrap.kubeconfig

kubectl config set-credentials "kubelet-bootstrap" \

--token=${TOKEN} \

--kubeconfig=bootstrap.kubeconfig

kubectl config set-context default \

--cluster=kubernetes \

--user="kubelet-bootstrap" \

--kubeconfig=bootstrap.kubeconfig

kubectl config use-context default --kubeconfig=bootstrap.kubeconfig

After bootstrap.kubeconfig is generated, you can optionally edit it: remove the embedded CA data (certificate-authority-data) and point to the CA file instead:

certificate-authority: /opt/kubernetes/ssl/ca.pem

Copy it to the configuration directory:

cp bootstrap.kubeconfig /opt/kubernetes/cfg

After that change, bootstrap.kubeconfig looks like this:

apiVersion: v1
clusters:
- cluster:
    certificate-authority: /opt/kubernetes/ssl/ca.pem
    server: https://192.168.226.151:6443
  name: kubernetes
contexts:
- context:
    cluster: kubernetes
    user: kubelet-bootstrap
  name: default
current-context: default
kind: Config
preferences: {}
users:
- name: kubelet-bootstrap
  user:
    token: e4ab4214db9a15193216d2007f1f8dad

2.5.7.2.4 Manage kubelet with systemd

cat > /usr/lib/systemd/system/kubelet.service << EOF

[Unit]

Description=Kubernetes Kubelet

After=docker.service

[Service]

EnvironmentFile=/opt/kubernetes/cfg/kubelet.conf

ExecStart=/opt/kubernetes/bin/kubelet \$KUBELET_OPTS

Restart=on-failure

LimitNOFILE=65536

[Install]

WantedBy=multi-user.target

EOF

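If kubectl get csr reports "No resources found." after kubelet has been started, see the troubleshooting note at the end of 2.5.7.7.3.

## Reference: https://blog.csdn.net/GDKbb/article/details/123231226

## To follow the kubelet logs:

journalctl -u kubelet -f

2.5.7.2.5 Start the service and enable it at boot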

systemctl daemon-reload

systemctl start kubelet

systemctl enable kubelet

2.5.7.3 Approve the kubelet certificate request and join the cluster

2.5.7.3.1 View the kubelet certificate request

Command: kubectl get csr

## Only the local machine so far

[root@k8smaster147 system]# kubectl get csr

NAME AGE SIGNERNAME REQUESTOR CONDITION

node-csr-G_1AdBhKfguFFsEr9or0dz_u5NoVRwAnChvE8j7wMns 3m46s kubernetes.io/kube-apiserver-client-kubelet kubelet-bootstrap Pending

2.5.7.3.2 Approve the request

kubectl certificate approve node-csr-G_1AdBhKfguFFsEr9or0dz_u5NoVRwAnChvE8j7wMns
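Copying the long CSR name by hand is easy to get wrong. Below is a sketch of a filter that pulls the names of pending requests out of `kubectl get csr` output; pending_csr_names is a hypothetical helper, and the sample text is the output shown above so the snippet runs without a cluster.

```shell
#!/usr/bin/env bash
# Print the names of CSRs whose last column (CONDITION) is exactly "Pending".
# pending_csr_names is a hypothetical helper that reads `kubectl get csr` output on stdin.
pending_csr_names() {
  awk 'NR > 1 && $NF == "Pending" { print $1 }'
}

# Sample input copied from the output shown above; on a live cluster use:
#   kubectl get csr | pending_csr_names
sample_output='NAME AGE SIGNERNAME REQUESTOR CONDITION
node-csr-G_1AdBhKfguFFsEr9or0dz_u5NoVRwAnChvE8j7wMns 3m46s kubernetes.io/kube-apiserver-client-kubelet kubelet-bootstrap Pending'

pending="$(printf '%s\n' "${sample_output}" | pending_csr_names)"
echo "pending CSRs: ${pending}"
```

Each printed name can then be passed to kubectl certificate approve; review the list first, because approving a request admits that node to the cluster.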

2.5.7.3.3 View the nodes

kubectl get node

[root@k8smaster147 system]# kubectl get node

NAME STATUS ROLES AGE VERSION

k8smaster147 NotReady <none> 23s v1.19.16

Note: the node stays NotReady because the network plugin has not been deployed yet.

2.5.7.4 Deploy kube-proxy

2.5.7.4.1 Create the configuration file

cat > /opt/kubernetes/cfg/kube-proxy.conf << EOF

KUBE_PROXY_OPTS="--logtostderr=false \\

--v=2 \\

--log-dir=/opt/kubernetes/logs \\

--config=/opt/kubernetes/cfg/kube-proxy-config.yml"

EOF

2.5.7.4.2 Configuration parameter file

cat > /opt/kubernetes/cfg/kube-proxy-config.yml << EOF
kind: KubeProxyConfiguration
apiVersion: kubeproxy.config.k8s.io/v1alpha1
bindAddress: 0.0.0.0
metricsBindAddress: 0.0.0.0:10249
clientConnection:
  kubeconfig: /opt/kubernetes/cfg/kube-proxy.kubeconfig
hostnameOverride: k8sworker152
# Pod network CIDR; matches --cluster-cidr in kube-controller-manager.conf
clusterCIDR: 10.244.0.0/16
mode: ipvs
ipvs:
  scheduler: "rr"
iptables:
  masqueradeAll: true
EOF

2.5.7.4.3 Generate the kube-proxy.kubeconfig file

Generate the kube-proxy certificate:

# Switch to the working directory

cd /root/TLS/k8s

# Create the certificate signing request file

cat > kube-proxy-csr.json<< EOF

{

"CN": "system:kube-proxy",

"hosts": [],

"key": {

"algo": "rsa",

"size": 2048

},

"names": [

{

"C": "CN",

"L": "BeiJing",

"ST": "BeiJing",

"O": "k8s",

"OU": "System"

}

]

}

EOF

# Generate the certificate

cfssl gencert -ca=ca.pem -ca-key=ca-key.pem -config=ca-config.json -profile=kubernetes kube-proxy-csr.json | cfssljson -bare kube-proxy

# List the certificates

[root@k8smaster147 k8s]# ls kube-proxy*pem

kube-proxy-key.pem kube-proxy.pem

# Copy the certificates

cp /root/TLS/k8s/kube-proxy*pem /opt/kubernetes/ssl/

Generate the kubeconfig file:

cd /root/TLS/k8s

KUBE_APISERVER="https://192.168.226.151:6443"

kubectl config set-cluster kubernetes \

--certificate-authority=/opt/kubernetes/ssl/ca.pem \

--embed-certs=true \

--server=${KUBE_APISERVER} \

--kubeconfig=kube-proxy.kubeconfig

kubectl config set-credentials kube-proxy \

--client-certificate=./kube-proxy.pem \

--client-key=./kube-proxy-key.pem \

--embed-certs=true \

--kubeconfig=kube-proxy.kubeconfig

kubectl config set-context default \

--cluster=kubernetes \

--user=kube-proxy \

--kubeconfig=kube-proxy.kubeconfig

kubectl config use-context default --kubeconfig=kube-proxy.kubeconfig

After generation, the contents of kube-proxy.kubeconfig can be changed to reference the certificate files by path:

apiVersion: v1
clusters:
- cluster:
    certificate-authority: /opt/kubernetes/ssl/ca.pem
    server: https://192.168.226.151:6443
  name: kubernetes
contexts:
- context:
    cluster: kubernetes
    user: kube-proxy
  name: default
current-context: default
kind: Config
preferences: {}
users:
- name: kube-proxy
  user:
    client-certificate: /opt/kubernetes/ssl/kube-proxy.pem
    client-key: /opt/kubernetes/ssl/kube-proxy-key.pem

Copy the files to the paths named in the configuration:

cp kube-proxy-key.pem kube-proxy.pem /opt/kubernetes/ssl/

cp kube-proxy.kubeconfig /opt/kubernetes/cfg/

2.5.7.4.4 Manage kube-proxy with systemd

cat > /usr/lib/systemd/system/kube-proxy.service << EOF

[Unit]

Description=Kubernetes Proxy

After=network.target

[Service]

EnvironmentFile=/opt/kubernetes/cfg/kube-proxy.conf

ExecStart=/opt/kubernetes/bin/kube-proxy \$KUBE_PROXY_OPTS

Restart=on-failure

LimitNOFILE=65536

[Install]

WantedBy=multi-user.target

EOF

2.5.7.4.5 Start the service and enable it at boot

systemctl daemon-reload

systemctl start kube-proxy

systemctl enable kube-proxy

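2.5.7.5 Deploy the CNI network

First prepare the CNI binaries.

Download URL (amd64 build, matching the kubernetes-server-linux-amd64 package used above):

https://github.com/containernetworking/plugins/releases/download/v1.1.0/cni-plugins-linux-amd64-v1.1.0.tgz

You can also pick the version you need on GitHub.

Unpack the archive into the default working directory:

mkdir -p /opt/cni/bin

tar zxvf cni-plugins-linux-amd64-v1.1.0.tgz -C /opt/cni/bin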
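The CNI archive has to match the node's CPU architecture, otherwise the plugins in /opt/cni/bin cannot be executed. The sketch below derives the archive name from `uname -m`; cni_arch_for is a hypothetical helper that covers only the two architectures listed, and v1.1.0 is simply the version used in this section.

```shell
#!/usr/bin/env bash
# Map a `uname -m` machine name to the architecture suffix used in release file names.
# cni_arch_for is a hypothetical helper; only the architectures below are handled.
cni_arch_for() {
  case "$1" in
    x86_64) echo "amd64" ;;
    aarch64|arm64) echo "arm64" ;;
    *) echo "unsupported machine type: $1" >&2; return 1 ;;
  esac
}

if arch="$(cni_arch_for "$(uname -m)")"; then
  echo "archive for this host: cni-plugins-linux-${arch}-v1.1.0.tgz"
fi
```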

Deploy the CNI network:

There are two methods; which one to use depends on your network conditions.

## Method 1: download kube-flannel.yml to the current directory (/root here)

wget --no-check-certificate https://raw.githubusercontent.com/coreos/flannel/master/Documentation/kube-flannel.yml

# Optional: replace the quay.io image with a mirror image

# sed -i -r "s#quay.io/coreos/flannel:.*-amd64#lizhenliang/flannel:v0.12.0-amd64#g" kube-flannel.yml

kubectl apply -f /root/kube-flannel.yml

## Method 2: apply kube-flannel.yml directly from GitHub

kubectl apply -f https://raw.githubusercontent.com/coreos/flannel/master/Documentation/kube-flannel.yml

View the logs:

journalctl -u kubelet -f

Test:

[root@k8smaster147 admin]# kubectl get nodes

NAME STATUS ROLES AGE VERSION

k8smaster147 Ready <none> 44h v1.19.16

[root@k8smaster147 admin]# kubectl get pods -n kube-system

NAME READY STATUS RESTARTS AGE

kube-flannel-ds-hkp4w 1/1 Running 8 22h

2.5.7.6 Authorize the apiserver to access kubelet

## Put the file in the /root/ directory

cat > apiserver-to-kubelet-rbac.yaml << EOF
apiVersion: rbac.authorization.k8s.io/v1
kind: ClusterRole
metadata:
  annotations:
    rbac.authorization.kubernetes.io/autoupdate: "true"
  labels:
    kubernetes.io/bootstrapping: rbac-defaults
  name: system:kube-apiserver-to-kubelet
rules:
  - apiGroups:
      - ""
    resources:
      - nodes/proxy
      - nodes/stats
      - nodes/log
      - nodes/spec
      - nodes/metrics
      - pods/log
    verbs:
      - "*"
---
apiVersion: rbac.authorization.k8s.io/v1
kind: ClusterRoleBinding
metadata:
  name: system:kube-apiserver
  namespace: ""
roleRef:
  apiGroup: rbac.authorization.k8s.io
  kind: ClusterRole
  name: system:kube-apiserver-to-kubelet
subjects:
  - apiGroup: rbac.authorization.k8s.io
    kind: User
    name: kubernetes
EOF

## kubectl apply -f /root/apiserver-to-kubelet-rbac.yaml

[root@k8smaster147 ~]# kubectl apply -f /root/apiserver-to-kubelet-rbac.yaml

clusterrole.rbac.authorization.k8s.io/system:kube-apiserver-to-kubelet created

clusterrolebinding.rbac.authorization.k8s.io/system:kube-apiserver created

2.5.7.7 Add a new worker node

2.5.7.7.1 Copy the deployed Node files to the new node

On the master node, copy the Worker Node files to the new nodes 192.168.226.152/153.

## Shown for 152; repeat for 153

scp -r /opt/kubernetes root@192.168.226.152:/opt/

scp -r /usr/lib/systemd/system/{kubelet,kube-proxy}.service root@192.168.226.152:/usr/lib/systemd/system

scp -r /opt/cni/ root@192.168.226.152:/opt/

scp /opt/kubernetes/ssl/ca.pem root@192.168.226.152:/opt/kubernetes/ssl

scp /root/kube-flannel.yml root@192.168.226.152:/root/kube-flannel.yml

# On the worker node

kubectl apply -f /root/kube-flannel.yml

On the new node, delete the kubelet certificates and kubeconfig file:

rm /opt/kubernetes/cfg/kubelet.kubeconfig

rm -f /opt/kubernetes/ssl/kubelet*

Note: these files are generated automatically after the certificate request is approved and differ for every Node, so they must be deleted and regenerated.

2.5.7.7.2 Change the host name

vi /opt/kubernetes/cfg/kubelet.conf

--hostname-override=k8sworker152

vi /opt/kubernetes/cfg/kube-proxy-config.yml

hostnameOverride: k8sworker152

vi /opt/kubernetes/cfg/bootstrap.kubeconfig

## Change to the master node's IP

server: https://192.168.226.151:6443

vi /opt/kubernetes/cfg/kube-proxy.kubeconfig

## Change to the master node's IP

server: https://192.168.226.151:6443

2.5.7.7.3 Start the services and enable them at boot

systemctl daemon-reload

systemctl start kubelet

systemctl enable kubelet

systemctl start kube-proxy

systemctl enable kube-proxy

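If you run into this problem:

kubectl get csr

No resources found.

## Reference: https://blog.csdn.net/GDKbb/article/details/123231226

Solution:

Go to the /usr/lib/systemd/system directory and check whether a kubelet.service.d folder exists. If it does, delete it or rename it, then run daemon-reload again and stop and restart kubelet.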
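A leftover kubelet.service.d directory (for example from an earlier kubeadm package install) holds drop-in files that can override the ExecStart line of the unit written above. The check can be sketched as follows; has_kubelet_dropins is a hypothetical helper, and only *.conf files are treated as drop-ins.

```shell
#!/usr/bin/env bash
# Report whether a systemd unit directory contains kubelet drop-in overrides.
# has_kubelet_dropins is a hypothetical helper; pass the unit directory to inspect.
has_kubelet_dropins() {
  local unit_dir="$1"
  # Any *.conf file under kubelet.service.d can override ExecStart or the environment.
  [ -d "${unit_dir}/kubelet.service.d" ] &&
    [ -n "$(find "${unit_dir}/kubelet.service.d" -maxdepth 1 -name '*.conf' 2>/dev/null)" ]
}

if has_kubelet_dropins /usr/lib/systemd/system; then
  echo "kubelet.service.d drop-ins found; review them before restarting kubelet"
else
  echo "no kubelet drop-ins under /usr/lib/systemd/system"
fi
```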

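2.5.7.555 Pitfalls

Major pitfall:

Cause: not enough CPU cores and memory were allocated.

Following the tutorial, I also installed docker, kubelet, and kube-proxy on the k8smaster147 node, that is, the master node doubles as a Worker Node.

Because the VM initially had only two cores and 2G of memory, the pod never started successfully when I tested after deploying the CNI network in 2.5.7.5.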

[root@k8smaster147 admin]# kubectl get pods -n kube-system

NAME READY STATUS RESTARTS AGE

kube-flannel-ds-hkp4w 0/1 CrashLoopBackOff 8 20h

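At first I assumed it was a configuration problem; I searched for a long time and even redid the configuration once, but the same error came back.

Finally I looked at the node's log, kubelet.k8smaster147.root.log.ERROR.xxxx

## Errors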

## Image garbage collection failed once. Stats initialization may not have completed yet: failed to get imageFs info: unable to find data in memory cache

E0602 22:35:04.870942 21006 aws_credentials.go:77] while getting AWS credentials NoCredentialProviders: no valid providers in chain. Deprecated.

For verbose messaging see aws.Config.CredentialsChainVerboseErrors

E0602 22:35:04.880979 21006 kubelet.go:1238] Image garbage collection failed once. Stats initialization may not have completed yet: failed to get imageFs info: unable to find data in memory cache

E0602 22:35:04.931131 21006 kubelet.go:1794] skipping pod synchronization - [container runtime status check may not have completed yet, PLEG is not healthy: pleg has yet to be successful]

E0602 22:35:10.349490 21006 pod_workers.go:191] Error syncing pod 46b0affc-7fb3-4d99-b3c8-7aa6b031660a ("kube-flannel-ds-hkp4w_kube-system(46b0affc-7fb3-4d99-b3c8-7aa6b031660a)"), skipping: failed to "StartContainer" for "kube-flannel" with CrashLoopBackOff: "back-off 10s restarting failed container=kube-flannel pod=kube-flannel-ds-hkp4w_kube-system(46b0affc-7fb3-4d99-b3c8-7aa6b031660a)"

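## remainder omitted

Not even garbage collection had the resources to run.

So I raised the machine's memory to 5G (the main cause) and the CPU to 4 cores, then rebooted. The problem was solved.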

docker system prune

systemctl stop kubelet

systemctl stop docker

systemctl start docker

systemctl start kubelet

Alternatively, another solution:

## Stop running this host as a worker node

systemctl disable kube-proxy kubelet docker

systemctl stop kube-proxy kubelet docker

References:

https://blog.csdn.net/qq_36783142/article/details/103443750

https://blog.csdn.net/qq_24210767/article/details/109187314

https://stackoverflow.com/questions/62020493/kubernetes-1-18-warning-imagegcfailed-error-failed-to-get-imagefs-info-unable-t

2.5.7.555 Pitfalls 2

Error:

can't set sysctl net/ipv4/vs/conn_reuse_mode, kernel version must be at least 4.1

The CentOS 7 kernel needs to be upgraded to at least 4.1.
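Whether a node is affected can be checked before starting kube-proxy. The following is a minimal sketch that compares the running kernel against 4.1 using GNU `sort -V`; version_ge is a hypothetical helper, and the distribution suffix is stripped from `uname -r` before comparing.

```shell
#!/usr/bin/env bash
# Return success when version $1 is greater than or equal to version $2.
# version_ge is a hypothetical helper built on GNU `sort -V` (version sort).
version_ge() {
  [ "$(printf '%s\n%s\n' "$1" "$2" | sort -V | head -n 1)" = "$2" ]
}

# Strip the distribution suffix, e.g. "3.10.0-1160.el7.x86_64" -> "3.10.0".
kernel="$(uname -r | cut -d- -f1)"
if version_ge "${kernel}" "4.1"; then
  echo "kernel ${kernel} meets the 4.1 minimum"
else
  echo "kernel ${kernel} is older than 4.1 and needs an upgrade"
fi
```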

If /boot runs out of space during the upgrade, see:

https://blog.csdn.net/qq_46319397/article/details/122736105

Kernel upgrade reference:

https://www.jianshu.com/p/7aa31da955ac