Installing Kubernetes (k8s) with kubeadm

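Contents

- I. Installing with kubeadm
  - 1. Preparing the environment
    - 1) Software and system requirements
    - 2) Clone three machines
    - 3) Node planning
- II. Deploying k8s
  - 1. System tuning (on all nodes)
    - 1) Disable the swap partition
    - 2) Disable SELinux and firewalld
    - 3) Set hostnames and name resolution
    - 4) Set up passwordless SSH and distribute the public key (master)
    - 5) Synchronize cluster time
    - 6) Configure package mirrors
    - 7) Update the system
    - 8) Install common base packages
    - 9) Upgrade the kernel (Docker is demanding about the kernel; 4.4+ is recommended)
    - 10) Install the kernel packages
    - 11) Install IPVS
    - 12) Tune kernel parameters
  - 2. Install Docker (all nodes)
  - 3. Install kubelet (all nodes)
  - 4. Initialize the master node (master only)
  - 5. Post-initialization (master only): user access and the cluster network plugin
    - 1) Set up user access to the cluster
    - 2) Enable command completion (optional)
    - 3) Install the cluster network plugin (flannel.yaml)
    - 4) Apply the network plugin
    - 5) Join the other nodes to the cluster
      - 1) Generate the join command on the master
      - 2) Run it on the worker nodes
      - 3) Listing tokens
  - 6. Check cluster status (master)
  - 7. Troubleshooting (skip if the checks succeeded)
  - 8. Add a Master node
  - 9. Let other nodes query status with kubectl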
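I. Installing with kubeadm

Kubernetes clusters broadly fall into two types: one master with multiple workers, and multiple masters with multiple workers.

1. One master, multiple workers:

One Master node and several Node (worker) nodes. Simple to set up, but the master is a single point of failure. Suitable for test environments.

2. Multiple masters, multiple workers:

Several Master nodes and several Node nodes. More work to set up, but more robust because no single master is a point of failure. Suitable for production.

kubeadm is a Kubernetes deployment tool. Its kubeadm init and kubeadm join commands are used to stand up a Kubernetes cluster quickly. Official documentation:

https://kubernetes.io/docs/reference/setup-tools/kubeadm/kubeadm/

Requirement: each server needs at least 2 CPU cores and 2 GB of memory. If a machine has fewer cores, append --ignore-preflight-errors=NumCPU to the kubeadm init command.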

1. Preparing the environment

1) Software and system requirements

| Software | Version |
|---|---|
| CentOS | CentOS Linux release 7.9 |
| Docker | 19.03.12 |
| Kubernetes | v1.20.2 |
| Flannel | v0.13.1-rc1 |
| kernel-lt | kernel-lt-5.4.140-1.el7.elrepo.x86_64.rpm |
| kernel-lt-devel | kernel-lt-devel-5.4.140-1.el7.elrepo.x86_64.rpm |

2) Clone three machines

192.168.15.31 k8s-master-01 m1
192.168.15.32 k8s-node-01 n1
192.168.15.33 k8s-node-02 n2

3) Node planning

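II. Deploying k8s

1. System tuning (on all nodes)

1) Disable the swap partition

# Once swap is in use, system performance drops sharply, so Kubernetes normally requires swap to be disabled.

vim /etc/fstab
# comment out the swap line (the "UUID=... swap" entry) with a leading #

swapoff -a

# Tell kubelet not to fail when swap is still enabled
echo 'KUBELET_EXTRA_ARGS="--fail-swap-on=false"' > /etc/sysconfig/kubelet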
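Editing /etc/fstab by hand on every node is easy to get wrong. The following is a minimal sketch of doing the same edit non-interactively. The helper name comment_out_swap is hypothetical, and it only prints the modified file so the result can be reviewed before it replaces the real one.

```shell
# Print an fstab with every active swap entry commented out.
# $1 is the fstab path; the file itself is not modified.
comment_out_swap() {
  # Prefix "#" to uncommented lines that contain a whitespace-delimited "swap" field.
  sed -E '/^[[:space:]]*[^#[:space:]].*[[:space:]]swap[[:space:]]/ s/^/#/' "$1"
}

# Example (review /tmp/fstab.new before copying it over /etc/fstab):
#   comment_out_swap /etc/fstab > /tmp/fstab.new
```

Lines that are already commented out are left untouched, so the helper can be run repeatedly.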

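2) Disable SELinux and firewalld

sed -i 's#SELINUX=enforcing#SELINUX=disabled#g' /etc/selinux/config   # disable SELinux permanently (takes effect after a reboot)
setenforce 0                                                          # disable SELinux for the current boot
systemctl disable --now firewalld                                     # stop firewalld and keep it off at boot

# Only needed if the iptables service is installed
# systemctl disable --now iptables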

3) Set hostnames and name resolution

# 1. Set the hostname on each machine
[root@k8s-master-01 ~]# hostnamectl set-hostname k8s-master-01
[root@k8s-node-01 ~]# hostnamectl set-hostname k8s-node-01
[root@k8s-node-02 ~]# hostnamectl set-hostname k8s-node-02

# 2. Edit the hosts file (on the master)
vim /etc/hosts
192.168.15.31 k8s-master-01 m1
192.168.15.32 k8s-node-01 n1
192.168.15.33 k8s-node-02 n2
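If the hosts entries are added by script rather than in an editor, it helps to make the step idempotent so that re-running it does not duplicate lines. A small sketch, where add_host_entry is a hypothetical helper and the hosts file path is passed in explicitly:

```shell
# Append "IP name alias" to a hosts file unless the hostname is already listed.
# Usage: add_host_entry <hosts-file> <ip> <hostname> <alias>
add_host_entry() {
  hosts_file=$1
  ip=$2
  name=$3
  short=$4
  # -w matches the hostname as a whole word, -q suppresses output.
  if ! grep -qw -- "$name" "$hosts_file"; then
    printf '%s %s %s\n' "$ip" "$name" "$short" >> "$hosts_file"
  fi
}

# Example:
#   add_host_entry /etc/hosts 192.168.15.31 k8s-master-01 m1
#   add_host_entry /etc/hosts 192.168.15.32 k8s-node-01 n1
#   add_host_entry /etc/hosts 192.168.15.33 k8s-node-02 n2
```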

4) Set up passwordless SSH and distribute the public key (master)

ssh-keygen -t rsa

for i in m1 n1 n2;do ssh-copy-id -i ~/.ssh/id_rsa.pub root@$i;done

5) Synchronize cluster time

Method 1: CentOS 7

Time matters in a cluster: if one machine's clock disagrees with the rest, the cluster can run into many problems. Synchronize the clocks of all machines before deploying.

yum install ntpdate -y
ln -sf /usr/share/zoneinfo/Asia/Shanghai /etc/localtime
echo 'Asia/Shanghai' > /etc/timezone
ntpdate time2.aliyun.com

# Add a cron job (crontab -e)
*/1 * * * * /usr/sbin/ntpdate time2.aliyun.com > /dev/null 2>&1

Method 2: CentOS 8

rpm -ivh http://mirrors.wlnmp.com/centos/wlnmp-release-centos.noarch.rpm
yum install wntp -y
ln -sf /usr/share/zoneinfo/Asia/Shanghai /etc/localtime
echo 'Asia/Shanghai' > /etc/timezone
ntpdate time2.aliyun.com

# Add a cron job (crontab -e)
*/1 * * * * /usr/sbin/ntpdate time2.aliyun.com > /dev/null 2>&1

6) Configure package mirrors

# By default CentOS uses the official yum repositories, which are usually slow from inside China. Replace them with a well-established domestic mirror such as Tsinghua University, NetEase, or (as used here) Aliyun.

rm -rf /etc/yum.repos.d/*
curl -o /etc/yum.repos.d/CentOS-Base.repo https://mirrors.aliyun.com/repo/Centos-7.repo
curl -o /etc/yum.repos.d/epel.repo http://mirrors.aliyun.com/repo/epel-7.repo
yum clean all
yum makecache

7) Update the system

# If the kernel already meets the requirements, exclude it from the update
yum update -y --exclude=kernel*

# Check the release
[root@k8s-master-01 ~]# cat /etc/redhat-release
CentOS Linux release 7.9.2009 (Core)

8) Install common base packages

yum install wget expect vim net-tools ntp bash-completion ipvsadm ipset jq iptables conntrack sysstat libseccomp -y

9) Upgrade the kernel (Docker is demanding about the kernel; 4.4+ is recommended)

# CentOS 7 is upgraded to the 5.4 kernel here. On CentOS 8 no kernel upgrade is needed.
# ELRepo site: https://elrepo.org/

wget https://elrepo.org/linux/kernel/el7/x86_64/RPMS/kernel-lt-5.4.140-1.el7.elrepo.x86_64.rpm
wget https://elrepo.org/linux/kernel/el7/x86_64/RPMS/kernel-lt-devel-5.4.140-1.el7.elrepo.x86_64.rpm

10) Install the kernel packages

yum localinstall -y kernel-lt*
grub2-set-default 0 && grub2-mkconfig -o /etc/grub2.cfg   # make the new kernel the default boot entry
grubby --default-kernel                                   # show the current default kernel
reboot                                                    # reboot into the new kernel

# Check the running kernel
[root@k8s-master-01 ~]# uname -r
5.4.140-1.el7.elrepo.x86_64

11) Install IPVS

# IPVS is a kernel module with very high forwarding performance, so it is usually the preferred kube-proxy backend.

yum install -y conntrack-tools ipvsadm ipset conntrack libseccomp

vim /etc/sysconfig/modules/ipvs.modules   # script that loads the IPVS modules

#!/bin/bash
ipvs_modules="ip_vs ip_vs_lc ip_vs_wlc ip_vs_rr ip_vs_wrr ip_vs_lblc ip_vs_lblcr ip_vs_dh ip_vs_sh ip_vs_fo ip_vs_nq ip_vs_sed ip_vs_ftp nf_conntrack"
for kernel_module in ${ipvs_modules}; do
  # Only load modules that exist for the running kernel
  /sbin/modinfo -F filename ${kernel_module} > /dev/null 2>&1
  if [ $? -eq 0 ]; then
    /sbin/modprobe ${kernel_module}
  fi
done

# Make the script executable, run it, and confirm the modules are loaded
chmod 755 /etc/sysconfig/modules/ipvs.modules && bash /etc/sysconfig/modules/ipvs.modules && lsmod | grep ip_vs

12) Tune kernel parameters

# These settings make the kernel better suited to running Kubernetes.
# The net.bridge.* keys only exist once the br_netfilter module is loaded:
modprobe br_netfilter

vim /etc/sysctl.d/k8s.conf

net.ipv4.ip_forward = 1
net.bridge.bridge-nf-call-iptables = 1
net.bridge.bridge-nf-call-ip6tables = 1
fs.may_detach_mounts = 1
vm.overcommit_memory=1
vm.panic_on_oom=0
fs.inotify.max_user_watches=89100
fs.file-max=52706963
fs.nr_open=52706963
net.ipv4.tcp_keepalive_time = 600
net.ipv4.tcp_keepalive_probes = 3
net.ipv4.tcp_keepalive_intvl = 15
net.ipv4.tcp_max_tw_buckets = 36000
net.ipv4.tcp_tw_reuse = 1
net.ipv4.tcp_max_orphans = 327680
net.ipv4.tcp_orphan_retries = 3
net.ipv4.tcp_syncookies = 1
net.ipv4.tcp_max_syn_backlog = 16384
net.netfilter.nf_conntrack_max = 65536
net.ipv4.tcp_timestamps = 0
net.core.somaxconn = 16384

# Apply immediately
sysctl --system
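A hand-maintained sysctl file can easily end up listing the same key twice, in which case the later value silently wins. A minimal sketch of a check for that, assuming the file uses the usual key = value layout. The helper name duplicate_sysctl_keys is hypothetical.

```shell
# Print every sysctl key that is assigned more than once in the file given as $1.
duplicate_sysctl_keys() {
  awk -F= '
    /^[[:space:]]*([#;]|$)/ { next }        # skip comments and blank lines
    {
      key = $1
      gsub(/[[:space:]]/, "", key)          # trim whitespace around the key
      if (++seen[key] == 2) print key       # report each duplicate once
    }
  ' "$1"
}

# Example:
#   duplicate_sysctl_keys /etc/sysctl.d/k8s.conf
```

An empty result means every key appears only once.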

Notes on the kernel parameters

# Enable IP forwarding
net.ipv4.ip_forward = 1
# Pass bridged IPv4 and IPv6 traffic through iptables/ip6tables; these two can be changed directly
net.bridge.bridge-nf-call-iptables = 1
net.bridge.bridge-nf-call-ip6tables = 1
fs.may_detach_mounts = 1
# Do not check whether enough physical memory is available
vm.overcommit_memory=1
# Avoid using swap; only fall back to it when the system is about to run out of memory
vm.swappiness=0
# Do not panic on OOM; let the OOM killer run
vm.panic_on_oom=0
# File and inotify limits
fs.inotify.max_user_watches=89100
fs.file-max=52706963
fs.nr_open=52706963
# TCP keepalive, TIME_WAIT, orphan and SYN queue tuning
net.ipv4.tcp_keepalive_time = 600
net.ipv4.tcp_keepalive_probes = 3
net.ipv4.tcp_keepalive_intvl = 15
net.ipv4.tcp_max_tw_buckets = 36000
net.ipv4.tcp_tw_reuse = 1
net.ipv4.tcp_max_orphans = 327680
net.ipv4.tcp_orphan_retries = 3
net.ipv4.tcp_syncookies = 1
net.ipv4.tcp_max_syn_backlog = 16384
net.netfilter.nf_conntrack_max = 65536
net.ipv4.tcp_timestamps = 0
net.core.somaxconn = 16384

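2. Install Docker (all nodes)

Docker is one of the container runtimes commonly managed by Kubernetes.

# 1. Remove any previously installed Docker
sudo yum remove docker docker-common docker-selinux docker-engine

# 2. Install the packages Docker depends on
sudo yum install -y yum-utils device-mapper-persistent-data lvm2

# 3. Add the Docker yum repository
wget -O /etc/yum.repos.d/docker-ce.repo https://repo.huaweicloud.com/docker-ce/linux/centos/docker-ce.repo

# 4. Install Docker
yum install docker-ce -y

# 5. Start Docker and enable it at boot
systemctl enable --now docker.service

# 6. Configure a registry mirror to speed up image pulls
sudo mkdir -p /etc/docker
sudo tee /etc/docker/daemon.json <<-'EOF'
{
  "registry-mirrors": ["https://k7eoap03.mirror.aliyuncs.com"]
}
EOF
sudo systemctl restart docker   # reload daemon.json

# Verify the installation
docker info

Note:

# The official repository enables only the latest stable packages by default. Other channels can be enabled by editing the repo file. For example, the test channel is disabled by default and can be turned on as follows; the same applies to the other channels.

vim /etc/yum.repos.d/docker-ce.repo
# under [docker-ce-test], change enabled=0 to enabled=1

Installing a specific Docker CE version:

# Step 1: list the available Docker CE versions
yum list docker-ce.x86_64 --showduplicates | sort -r

# Step 2: install the chosen version (VERSION is for example 19.03.0.ce.1-1.el7.centos from the list above)
sudo yum -y install docker-ce-[VERSION]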

3. Install kubelet (all nodes)

# 1. Add the Kubernetes yum repository (pick one of the three mirrors)

Option 1: Huawei Cloud mirror

cat <<EOF > /etc/yum.repos.d/kubernetes.repo
[kubernetes]
name=Kubernetes
baseurl=https://repo.huaweicloud.com/kubernetes/yum/repos/kubernetes-el7-\$basearch
enabled=1
gpgcheck=1
repo_gpgcheck=0
gpgkey=https://repo.huaweicloud.com/kubernetes/yum/doc/yum-key.gpg https://repo.huaweicloud.com/kubernetes/yum/doc/rpm-package-key.gpg
EOF

Option 2: Aliyun mirror

cat <<EOF > /etc/yum.repos.d/kubernetes.repo
[kubernetes]
name=Kubernetes
baseurl=https://mirrors.aliyun.com/kubernetes/yum/repos/kubernetes-el7-x86_64/
enabled=1
gpgcheck=1
repo_gpgcheck=1
gpgkey=https://mirrors.aliyun.com/kubernetes/yum/doc/yum-key.gpg https://mirrors.aliyun.com/kubernetes/yum/doc/rpm-package-key.gpg
EOF

# Note: the mirror's own instructions continue with "yum install -y kubelet kubeadm kubectl", which installs the latest release (kubelet-1.21.3 at the time of writing). This guide pins the version in step 3 below instead.

Option 3: Tsinghua mirror

cat <<EOF > /etc/yum.repos.d/kubernetes.repo
[kubernetes]
name=kubernetes
baseurl=https://mirrors.tuna.tsinghua.edu.cn/kubernetes/yum/repos/kubernetes-el7-\$basearch
enabled=1
gpgcheck=0
EOF

# 2. Rebuild the yum cache
yum clean all
yum makecache

# 3. Install a pinned version so that it matches the version used to initialize the cluster below
yum install kubectl-1.20.2 kubeadm-1.20.2 kubelet-1.20.2 -y

# 4. Only enable kubelet at boot; there is no need to start it yet because the node has not been initialized
systemctl enable kubelet.service

# 5. Check the version
[root@k8s-master-01 ~]# kubectl version
Client Version: version.Info{Major:"1", Minor:"20", GitVersion:"v1.20.2"
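Because every node must run the same version, it can be handy to pull just the version string out of that output and compare it across machines. A minimal sketch, assuming the version.Info{...} output format shown above. The helper name git_version is hypothetical.

```shell
# Read "kubectl version" style output on stdin and print the GitVersion value(s).
git_version() {
  sed -n 's/.*GitVersion:"\(v[^"]*\)".*/\1/p'
}

# Example:
#   kubectl version --client | git_version
```

Lines without a GitVersion field produce no output, so the helper can be fed the full command output.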

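4. Initialize the master node (master only)

# 1. List the required images
[root@k8s-master-01 ~]# kubeadm config images list
I0818 15:34:59.667298 63989 version.go:251] remote version is much newer: v1.22.0; falling back to: stable-1.20
k8s.gcr.io/kube-apiserver:v1.20.2
k8s.gcr.io/kube-controller-manager:v1.20.2
k8s.gcr.io/kube-scheduler:v1.20.2
k8s.gcr.io/kube-proxy:v1.20.2
k8s.gcr.io/pause:3.2
k8s.gcr.io/etcd:3.4.13-0
k8s.gcr.io/coredns:1.7.0

# 2. Initialize
kubeadm init \
  --image-repository=registry.cn-hangzhou.aliyuncs.com/k8sos \
  --kubernetes-version=v1.20.2 \
  --service-cidr=10.96.0.0/12 \
  --pod-network-cidr=10.244.0.0/16

Note: watch the versions when initializing. Without an explicit version, yum installs the latest release, so --kubernetes-version must name the version that is actually installed.

All nodes must run the same version. A worker whose version differs from the master's will fail to join the cluster.

Note: to avoid this, install a pinned version:

yum install kubectl-1.20.2 kubeadm-1.20.2 kubelet-1.20.2 -y

PS: the images can be pulled manually beforehand (pass the same --image-repository value that kubeadm init will use, otherwise the pre-pulled images are not the ones init looks for):

kubeadm config images pull --image-repository=registry.cn-hangzhou.aliyuncs.com/google_containers

# Parameter details:
--apiserver-advertise-address   # address the API server advertises to the cluster (not set above)
--image-repository              # the default registry k8s.gcr.io is not reachable from inside China, so an Aliyun registry is specified
--kubernetes-version            # Kubernetes version; must match the installed packages
--service-cidr                  # virtual network for Services, the unified entry point for reaching Pods
--pod-network-cidr              # Pod network; must match the network in the CNI plugin YAML deployed below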
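Since --pod-network-cidr has to agree with the network configured in the CNI manifest, a quick consistency check can catch a mismatch before the cluster is initialized. A minimal sketch, assuming the manifest carries the flannel net-conf.json with a "Network" field (as in /root/flannel.yaml later in this guide). Both helper names are hypothetical.

```shell
# Print the value of the "Network" field found in the manifest given as $1.
flannel_network() {
  sed -n 's/.*"Network"[[:space:]]*:[[:space:]]*"\([^"]*\)".*/\1/p' "$1"
}

# Succeed only if the CIDR in $1 equals the manifest's Network value.
# Usage: cidr_matches_flannel <cidr> <manifest>
cidr_matches_flannel() {
  [ "$(flannel_network "$2")" = "$1" ]
}

# Example:
#   cidr_matches_flannel 10.244.0.0/16 /root/flannel.yaml && echo "CIDR matches"
```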

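# The system log can be watched during initialization; look for "successfully". kubeadm init itself prints "Your Kubernetes control-plane has initialized successfully!" when it finishes.

tail -f /var/log/messages

[root@k8s-master1 ~]# grep successfully /var/log/messages
# Mar 24 21:02:07 k8s-master1 containerd: time="2021-03-24T21:02:07.063840628+08:00" level=info msg="containerd successfully booted in 0.181480s"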

5. Post-initialization (master only): user access and the cluster network plugin

1) Set up user access to the cluster

mkdir -p $HOME/.kube
sudo cp -i /etc/kubernetes/admin.conf $HOME/.kube/config
sudo chown $(id -u):$(id -g) $HOME/.kube/config

# As root, this is enough instead: export KUBECONFIG=/etc/kubernetes/admin.conf

2) Enable command completion (optional)

# Run on all nodes
yum install -y bash-completion
source /usr/share/bash-completion/bash_completion
source <(kubectl completion bash)
echo "source <(kubectl completion bash)" >> ~/.bashrc

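3) Install the cluster network plugin (flannel.yaml)

Kubernetes relies on a third-party network plugin for its networking, so installing one is a prerequisite for a working cluster.

Several plugins exist. Common choices are flannel, calico and canal (flannel + calico). They all provide the basic network functions, such as assigning Pod IP ranges to each Node.

Manifest file (option 1): download it

[root@m01 ~]# wget https://raw.githubusercontent.com/coreos/flannel/master/Documentation/kube-flannel.yml
[root@m01 ~]# kubectl apply -f kube-flannel.yml   # deploy the cluster network from the downloaded file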

Manifest file (option 2): write it locally

cat > /root/flannel.yaml <<'EOF'
---
apiVersion: policy/v1beta1
kind: PodSecurityPolicy
metadata:
  name: psp.flannel.unprivileged
  annotations:
    seccomp.security.alpha.kubernetes.io/allowedProfileNames: docker/default
    seccomp.security.alpha.kubernetes.io/defaultProfileName: docker/default
    apparmor.security.beta.kubernetes.io/allowedProfileNames: runtime/default
    apparmor.security.beta.kubernetes.io/defaultProfileName: runtime/default
spec:
  privileged: false
  volumes:
  - configMap
  - secret
  - emptyDir
  - hostPath
  allowedHostPaths:
  - pathPrefix: "/etc/cni/net.d"
  - pathPrefix: "/etc/kube-flannel"
  - pathPrefix: "/run/flannel"
  readOnlyRootFilesystem: false
  # Users and groups
  runAsUser:
    rule: RunAsAny
  supplementalGroups:
    rule: RunAsAny
  fsGroup:
    rule: RunAsAny
  # Privilege Escalation
  allowPrivilegeEscalation: false
  defaultAllowPrivilegeEscalation: false
  # Capabilities
  allowedCapabilities: ['NET_ADMIN', 'NET_RAW']
  defaultAddCapabilities: []
  requiredDropCapabilities: []
  # Host namespaces
  hostPID: false
  hostIPC: false
  hostNetwork: true
  hostPorts:
  - min: 0
    max: 65535
  # SELinux
  seLinux:
    # SELinux is unused in CaaSP
    rule: 'RunAsAny'
---
kind: ClusterRole
apiVersion: rbac.authorization.k8s.io/v1
metadata:
  name: flannel
rules:
- apiGroups: ['extensions']
  resources: ['podsecuritypolicies']
  verbs: ['use']
  resourceNames: ['psp.flannel.unprivileged']
- apiGroups:
  - ""
  resources:
  - pods
  verbs:
  - get
- apiGroups:
  - ""
  resources:
  - nodes
  verbs:
  - list
  - watch
- apiGroups:
  - ""
  resources:
  - nodes/status
  verbs:
  - patch
---
kind: ClusterRoleBinding
apiVersion: rbac.authorization.k8s.io/v1
metadata:
  name: flannel
roleRef:
  apiGroup: rbac.authorization.k8s.io
  kind: ClusterRole
  name: flannel
subjects:
- kind: ServiceAccount
  name: flannel
  namespace: kube-system
---
apiVersion: v1
kind: ServiceAccount
metadata:
  name: flannel
  namespace: kube-system
---
kind: ConfigMap
apiVersion: v1
metadata:
  name: kube-flannel-cfg
  namespace: kube-system
  labels:
    tier: node
    app: flannel
data:
  cni-conf.json: |
    {
      "name": "cbr0",
      "cniVersion": "0.3.1",
      "plugins": [
        {
          "type": "flannel",
          "delegate": {
            "hairpinMode": true,
            "isDefaultGateway": true
          }
        },
        {
          "type": "portmap",
          "capabilities": {
            "portMappings": true
          }
        }
      ]
    }
  net-conf.json: |
    {
      "Network": "10.244.0.0/16",
      "Backend": {
        "Type": "vxlan"
      }
    }
---
apiVersion: apps/v1
kind: DaemonSet
metadata:
  name: kube-flannel-ds
  namespace: kube-system
  labels:
    tier: node
    app: flannel
spec:
  selector:
    matchLabels:
      app: flannel
  template:
    metadata:
      labels:
        tier: node
        app: flannel
    spec:
      affinity:
        nodeAffinity:
          requiredDuringSchedulingIgnoredDuringExecution:
            nodeSelectorTerms:
            - matchExpressions:
              - key: kubernetes.io/os
                operator: In
                values:
                - linux
      hostNetwork: true
      priorityClassName: system-node-critical
      tolerations:
      - operator: Exists
        effect: NoSchedule
      serviceAccountName: flannel
      initContainers:
      - name: install-cni
        image: registry.cn-hangzhou.aliyuncs.com/alvinos/flanned:v0.13.1-rc1
        command:
        - cp
        args:
        - -f
        - /etc/kube-flannel/cni-conf.json
        - /etc/cni/net.d/10-flannel.conflist
        volumeMounts:
        - name: cni
          mountPath: /etc/cni/net.d
        - name: flannel-cfg
          mountPath: /etc/kube-flannel/
      containers:
      - name: kube-flannel
        image: registry.cn-hangzhou.aliyuncs.com/alvinos/flanned:v0.13.1-rc1
        command:
        - /opt/bin/flanneld
        args:
        - --ip-masq
        - --kube-subnet-mgr
        resources:
          requests:
            cpu: "100m"
            memory: "50Mi"
          limits:
            cpu: "100m"
            memory: "50Mi"
        securityContext:
          privileged: false
          capabilities:
            add: ["NET_ADMIN", "NET_RAW"]
        env:
        - name: POD_NAME
          valueFrom:
            fieldRef:
              fieldPath: metadata.name
        - name: POD_NAMESPACE
          valueFrom:
            fieldRef:
              fieldPath: metadata.namespace
        volumeMounts:
        - name: run
          mountPath: /run/flannel
        - name: flannel-cfg
          mountPath: /etc/kube-flannel/
      volumes:
      - name: run
        hostPath:
          path: /run/flannel
      - name: cni
        hostPath:
          path: /etc/cni/net.d
      - name: flannel-cfg
        configMap:
          name: kube-flannel-cfg
EOF

4) Apply the network plugin

kubectl apply -f /root/flannel.yaml

5) Join the other nodes to the cluster

1) Generate the join command on the master

# Worker nodes need a token to join. The command can be run repeatedly; each run creates a new token, and earlier tokens stay valid until they expire.
[root@k8s-master-01 ~]# kubeadm token create --print-join-command
kubeadm join 192.168.15.31:6443 --token s6svmh.lw88lchyl6m24tts --discovery-token-ca-cert-hash sha256:4d7e3e37e73176a97322e26fe501d2c27830a7bf3550df56f3a55b68395b507b

2) Run it on the worker nodes

[root@k8s-node-01 ~]# kubeadm join 192.168.15.31:6443 --token s6svmh.lw88lchyl6m24tts --discovery-token-ca-cert-hash sha256:4d7e3e37e73176a97322e26fe501d2c27830a7bf3550df56f3a55b68395b507b
[root@k8s-node-02 ~]# kubeadm join 192.168.15.31:6443 --token s6svmh.lw88lchyl6m24tts --discovery-token-ca-cert-hash sha256:4d7e3e37e73176a97322e26fe501d2c27830a7bf3550df56f3a55b68395b507b

3) Listing tokens

# Lists the existing tokens with their TTL and expiry time. It does not print a join command; use kubeadm token create --print-join-command for that.
[root@k8s-master-01 ~]# kubeadm token list

Note: every generated token is different, and a token is valid for 24 hours.
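Join failures are often caused by a token that was mistyped or truncated when it was copied between machines. kubeadm bootstrap tokens have a fixed shape (six lowercase alphanumeric characters, a dot, then sixteen more), so a quick format check is possible before running the join. A minimal sketch with a hypothetical helper name:

```shell
# Succeed if $1 has the shape of a kubeadm bootstrap token: [a-z0-9]{6}.[a-z0-9]{16}
is_bootstrap_token() {
  printf '%s' "$1" | grep -Eq '^[a-z0-9]{6}\.[a-z0-9]{16}$'
}

# Example:
#   is_bootstrap_token s6svmh.lw88lchyl6m24tts && echo "token format looks fine"
```

This only checks the format. Whether the token still exists and has not expired is something only the master can tell.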

# Extra: generate a token that never expires (used when nodes join later)
kubeadm token create --ttl 0 --print-join-command

# Sample output; run this on a node to join it to the cluster:
# kubeadm join 192.168.233.3:6443 --token rpi151.qx3660ytx2ixq8jk --discovery-token-ca-cert-hash sha256:5cf4e801c903257b50523af245f2af16a88e78dc00be3f2acc154491ad4f32a4

6. Check cluster status (master)

# Method 1
[root@k8s-master-01 opt]# kubectl get nodes        # or: kubectl get nodes -o wide
NAME            STATUS   ROLES                  AGE     VERSION
k8s-master-01   Ready    control-plane,master   3h13m   v1.20.2
k8s-node-01     Ready    <none>                 3h3m    v1.20.2
k8s-node-02     Ready    <none>                 3h3m    v1.20.2

Note: the cluster is up when every node shows Ready.
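When there are more than a handful of nodes, scanning the table by eye gets tedious. A minimal sketch that filters the kubectl get nodes output down to the nodes that are not plainly Ready. The helper name not_ready_nodes is hypothetical, and it assumes the default column layout shown above (NAME first, STATUS second).

```shell
# Read "kubectl get nodes" output on stdin and print the names of nodes
# whose STATUS is anything other than exactly "Ready".
# Cordoned nodes ("Ready,SchedulingDisabled") are therefore listed too.
not_ready_nodes() {
  awk 'NR > 1 && $2 != "Ready" { print $1 }'
}

# Example:
#   kubectl get nodes | not_ready_nodes
```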

# Method 2
[root@k8s-master-01 opt]# kubectl get pods -n kube-system
NAME                                    READY   STATUS    RESTARTS   AGE
coredns-f68b4c98f-527gt                 1/1     Running   0          3h15m
coredns-f68b4c98f-hmm8j                 1/1     Running   0          3h15m
etcd-k8s-master-01                      1/1     Running   0          3h15m
kube-apiserver-k8s-master-01            1/1     Running   0          3h15m
kube-controller-manager-k8s-master-01   1/1     Running   0          3h15m
kube-flannel-ds-44r9l                   1/1     Running   0          3h13m
kube-flannel-ds-h755r                   1/1     Running   0          3h5m
kube-flannel-ds-ntdz5                   1/1     Running   0          3h5m
kube-proxy-8k9nt                        1/1     Running   0          3h5m
kube-proxy-jbm5l                        1/1     Running   0          3h5m
kube-proxy-xkpxj                        1/1     Running   0          3h15m
kube-scheduler-k8s-master-01            1/1     Running   0          3h15m

# Method 3: verify cluster DNS directly
[root@k8s-master-01 opt]# kubectl run test -it --rm --image=busybox:1.28.3
If you don't see a command prompt, try pressing enter.
/ # nslookup kubernetes
Server:    10.96.0.10
Address 1: 10.96.0.10 kube-dns.kube-system.svc.cluster.local

Name:      kubernetes
Address 1: 10.96.0.1 kubernetes.default.svc.cluster.local
/ #

If a node does not become Ready:

# Without an explicit version yum installs the latest release, which easily leads to version mismatches. All nodes must install the same pinned version.

# 1. On the worker, remove the installed packages and reinstall the pinned version
yum remove kubectl kubeadm kubelet -y
yum install kubectl-1.20.2 kubeadm-1.20.2 kubelet-1.20.2 -y

# 2. Enable kubelet at boot
systemctl enable --now kubelet

# 3. Reset the node's configuration. The node has already joined once, so a new join reports that certificates, config files and ports already exist:
[ERROR FileAvailable--etc-kubernetes-kubelet.conf]: /etc/kubernetes/kubelet.conf already exists
[ERROR Port-10250]: Port 10250 is in use
[ERROR FileAvailable--etc-kubernetes-pki-ca.crt]: /etc/kubernetes/pki/ca.crt already exists

[root@gdx2 ~]# kubeadm reset   # run this on the worker when the errors above appear

# 4. On the master, remove the NotReady node from the cluster
[root@gdx1 ~]# kubectl delete node gdx3

# 5. Join the node again. Make sure the token is the right one; if several tokens were generated, use the most recent.

Note: it is best to generate a fresh token on the master for the node to join with.

[root@gdx1 ~]# kubeadm token create --print-join-command
kubeadm join 192.168.12.11:6443 --token fm0387.iqixomz5jmsukwsi --discovery-token-ca-cert-hash sha256:d8ff83ffed5967000034d07b3da738ae4f1f0254e8417bb30c21f3ed15ac5d18

Note: run the printed command on the worker node.

# 6. Rejoin the worker to the cluster
[root@gdx2 ~]# kubeadm join 192.168.12.11:6443 --token fm0387.iqixomz5jmsukwsi --discovery-token-ca-cert-hash sha256:d8ff83ffed5967000034d07b3da738ae4f1f0254e8417bb30c21f3ed15ac5d18

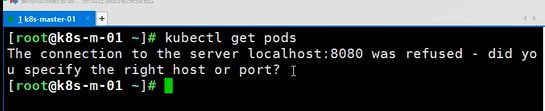

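kubectl get pods fails with: The connection to the server localhost:8080 was refused

Cause: the .kube directory in the user's home directory has been removed, so the user no longer has credentials to manage the cluster.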

7. Troubleshooting (skip this section if the checks above succeeded)

Fixes for nodes that fail to join the cluster or stay NotReady.

1> Error 1

# 1. The join fails with:
[ERROR FileContent--proc-sys-net-bridge-bridge-nf-call-iptables]: /proc/sys/net/bridge/bridge-nf-call-iptables contents are not set to 1

# Fix:
echo "1" > /proc/sys/net/bridge/bridge-nf-call-iptables

# 2. Generate a new join command on the master
[root@k8s-m-01 ~]# kubeadm token create --print-join-command
kubeadm join 192.168.15.111:6443 --token fm0387.iqixomz5jmsukwsi --discovery-token-ca-cert-hash sha256:d8ff83ffed5967000034d07b3da738ae4f1f0254e8417bb30c21f3ed15ac5d18

Note: run the printed command on the worker node.

# 3. Rejoin the worker to the cluster
[root@k8s-n-01 ~]# kubeadm join 192.168.15.111:6443 --token fm0387.iqixomz5jmsukwsi --discovery-token-ca-cert-hash sha256:d8ff83ffed5967000034d07b3da738ae4f1f0254e8417bb30c21f3ed15ac5d18

2> Error 2

A worker joining the cluster may report:

[ERROR FileContent--proc-sys-net-bridge-bridge-nf-call-iptables]: /proc/sys/net/bridge/bridge-nf-call-iptables contents are not set to 1
[preflight] If you know what you are doing, you can make a check non-fatal with `--ignore-preflight-errors=...`
To see the stack trace of this error execute with --v=5 or higher

PS: Docker must be installed and running before the join is retried.

# 1. Cause:
The bridge and forwarding settings are not in place, or swap is still on. Once swap is in use, system performance drops sharply, so Kubernetes normally requires swap to be disabled.

# 2. Fix:
1> Run the following three commands, then run the join command again:

modprobe br_netfilter
echo 1 > /proc/sys/net/bridge/bridge-nf-call-iptables
echo 1 > /proc/sys/net/ipv4/ip_forward

2> If the swap check still fails, append --ignore-preflight-errors=Swap and run the join again:

[root@k8s-n-1 ~]# kubeadm join 192.168.15.11:6443 --token iypm65.p5nmdzzw1zifxy6c --discovery-token-ca-cert-hash sha256:8bdbe324980e3350aaa3b9cea58edf576dc0a6d937da6b7bff6dbe6a01e0b525 --ignore-preflight-errors=Swap

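3> Error 3

# 1. Cause:
The kernel parameters were probably not tuned. Every node needs them.

# 2. Fix:
Go back to step 12) of the system tuning section, recreate /etc/sysctl.d/k8s.conf with the parameters listed there, and run sysctl --system.

4> Error 4

The node joins but stays NotReady.

Case 1: package versions differ

[root@k8s-m-01 ~]# kubectl get node
NAME       STATUS     ROLES                  AGE   VERSION
k8s-m-01   Ready      control-plane,master   16m   v1.21.2
k8s-n-01   NotReady   <none>                 22m   v1.20.2
k8s-n-02   NotReady   <none>                 22m   v1.20.2

# 1. Cause:
Without an explicit version yum installs the latest release, which easily leads to mismatched versions. All nodes must install the same pinned version.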
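Version skew like this can be spotted mechanically from the same kubectl get node output. A minimal sketch, assuming VERSION is the last column as in the default layout. The helper name nodes_not_at_version is hypothetical.

```shell
# Read "kubectl get node" output on stdin and print the names of nodes whose
# VERSION column (the last field) differs from the version given as $1.
nodes_not_at_version() {
  awk -v want="$1" 'NR > 1 && $NF != want { print $1 }'
}

# Example:
#   kubectl get node | nodes_not_at_version v1.21.2
```

An empty result means every node reports the expected version.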

# 2. Fix:
yum install kubectl-1.21.2 kubeadm-1.21.2 kubelet-1.21.2 -y   # install the same pinned version on master and workers
kubeadm reset                                                 # reset the worker
kubeadm join 192.168.15.111:6443 --token fm0387.iqixomz5jmsukwsi --discovery-token-ca-cert-hash sha256:d8ff83ffed5967000034d07b3da738ae4f1f0254e8417bb30c21f3ed15ac5d18   # rejoin the worker to the cluster

# PS: listing the existing tokens (this shows tokens and their expiry, not a join command)
[root@k8s-m-01 ~]# kubeadm token list

Case 2: versions match, but the token may be wrong

# The node is NotReady, and joining it again fails with:
[ERROR FileAvailable--etc-kubernetes-kubelet.conf]: /etc/kubernetes/kubelet.conf already exists
[ERROR Port-10250]: Port 10250 is in use
[ERROR FileAvailable--etc-kubernetes-pki-ca.crt]: /etc/kubernetes/pki/ca.crt already exists

# Cause: tokens were generated several times on the master, so the node joined with a stale or mistyped token.

# Fix:
# Remove the NotReady nodes from the cluster
[root@k8s-m-01 ~]# kubectl delete node k8s-n-01 k8s-n-02

# Reset the workers (clears the old token, certificates, config files and port usage)
[root@k8s-n-01 ~]# kubeadm reset
[root@k8s-n-02 ~]# kubeadm reset

# Create a new token on the master
[root@k8s-m-01 ~]# kubeadm token create --print-join-command
kubeadm join 192.168.15.111:6443 --token fm0387.iqixomz5jmsukwsi --discovery-token-ca-cert-hash sha256:d8ff83ffed5967000034d07b3da738ae4f1f0254e8417bb30c21f3ed15ac5d18

# Rejoin the workers to the cluster (run on each worker)
kubeadm join 192.168.15.111:6443 --token fm0387.iqixomz5jmsukwsi --discovery-token-ca-cert-hash sha256:d8ff83ffed5967000034d07b3da738ae4f1f0254e8417bb30c21f3ed15ac5d18

# Error 3: component STATUS is Unhealthy

[root@k8s-m-01 ~]# kubectl get cs
Warning: v1 ComponentStatus is deprecated in v1.19+
NAME                 STATUS      MESSAGE                                                                                       ERROR
scheduler            Unhealthy   Get "http://127.0.0.1:10251/healthz": dial tcp 127.0.0.1:10251: connect: connection refused
controller-manager   Unhealthy   Get "http://127.0.0.1:10252/healthz": dial tcp 127.0.0.1:10252: connect: connection refused
etcd-0               Healthy     {"health":"true"}

1. Fix: comment out the "- --port=0" line in both static Pod manifests, then restart kubelet

[root@k8s-m-01 ~]# vim /etc/kubernetes/manifests/kube-controller-manager.yaml
#- --port=0

[root@k8s-m-01 ~]# vim /etc/kubernetes/manifests/kube-scheduler.yaml
#- --port=0

[root@k8s-m-01 ~]# systemctl restart kubelet.service

2. Check the status again

[root@k8s-m-01 ~]# kubectl get cs
Warning: v1 ComponentStatus is deprecated in v1.19+
NAME                 STATUS    MESSAGE             ERROR
controller-manager   Healthy   ok
scheduler            Healthy   ok
etcd-0               Healthy   {"health":"true"}

Error 4:

# The node always fails to come up and stays NotReady. There is no real error, only a few warnings.

# Fix:
Check whether the /opt/cni/bin directory and its files are missing on the node. If so, copy the directory over from another node or from the master.

The simplest approach is to overwrite it with scp, then on the node run:

kubeadm reset
kubeadm join ···   # rejoin the cluster

[root@k8s-m1 code]# ls -l /opt/cni/bin/
total 56484
-rwxr-xr-x 1 root root 3254624 Sep 10 2020 bandwidth
-rwxr-xr-x 1 root root 3581192 Sep 10 2020 bridge
-rwxr-xr-x 1 root root 9837552 Sep 10 2020 dhcp
-rwxr-xr-x 1 root root 4699824 Sep 10 2020 firewall
-rwxr-xr-x 1 root root 2650368 Sep 10 2020 flannel
-rwxr-xr-x 1 root root 3274160 Sep 10 2020 host-device
-rwxr-xr-x 1 root root 2847152 Sep 10 2020 host-local
-rwxr-xr-x 1 root root 3377272 Sep 10 2020 ipvlan
-rwxr-xr-x 1 root root 2715600 Sep 10 2020 loopback
-rwxr-xr-x 1 root root 3440168 Sep 10 2020 macvlan
-rwxr-xr-x 1 root root 3048528 Sep 10 2020 portmap
-rwxr-xr-x 1 root root 3528800 Sep 10 2020 ptp
-rwxr-xr-x 1 root root 2849328 Sep 10 2020 sbr
-rwxr-xr-x 1 root root 2503512 Sep 10 2020 static
-rwxr-xr-x 1 root root 2820128 Sep 10 2020 tuning
-rwxr-xr-x 1 root root 3377120 Sep 10 2020 vlan

[root@k8s-m1 code]# scp -r /opt/cni/bin n2:/opt/cni/

8. Add a Master node

- Prepare the directories on the new node

rm -rf /etc/kubernetes
mkdir -p /etc/kubernetes/pki/etcd

- Copy the configuration files from the existing Master to the new node

scp /etc/kubernetes/pki/{ca.crt,ca.key,sa.key,sa.pub,front-proxy-ca.crt,front-proxy-ca.key} m2:/etc/kubernetes/pki/
scp /etc/kubernetes/pki/etcd/{ca.crt,ca.key} m2:/etc/kubernetes/pki/etcd/
scp /etc/kubernetes/admin.conf m2:/etc/kubernetes/

- Generate the join command on the existing Master and run it on the new node

kubeadm token create --print-join-command

# Run the printed command on the new node
kubeadm join 172.23.0.241:6443 --token lnvj7t.c7mc3254dnz3kp0u --discovery-token-ca-cert-hash sha256:2c0cdaae024d5668cece036cca6e2696eee92da5a92188b89da74c8364bb5251 --ignore-preflight-errors=Swap

Fixing join errors:

# Error 1:
[preflight] If you know what you are doing, you can make a check non-fatal with `--ignore-preflight-errors=...`

# Fix: swap is still enabled
Add the --ignore-preflight-errors=Swap option.

# Error 2:
[ERROR Port-10250]: Port 10250 is in use

The Error 1 hint may appear as well; it can be ignored once the option has been added.

# Fix: the port is in use, probably because an earlier failed join left kubelet running. Reset the node and join again.
kubeadm reset
kubeadm join 172.23.0.241:6443 --token lnvj7t.c7mc3254dnz3kp0u --discovery-token-ca-cert-hash sha256:2c0cdaae024d5668cece036cca6e2696eee92da5a92188b89da74c8364bb5251 --ignore-preflight-errors=Swap

The new node m2 has now joined the cluster:

[root@k8s-m1 ~]# kubectl get nodes
NAME     STATUS   ROLES                  AGE   VERSION
k8s-m1   Ready    control-plane,master   15d   v1.20.2
k8s-m2   Ready    <none>                 15m   v1.20.2
k8s-n1   Ready    <none>                 15d   v1.20.2
k8s-n2   Ready    <none>                 15d   v1.20.2

Note: ROLES is <none> for k8s-m2, which means it joined as a worker. For a node to join as a control-plane member, kubeadm join must be run with the --control-plane flag, and the cluster must have been initialized with a shared control-plane endpoint.

9. Let other nodes query status with kubectl

- Allow other nodes to use kubectl to read nodes, cs and other status information

# Copy admin.conf from master1 to the same directory on the other nodes
for i in m1 m2 n1 n2; do scp /etc/kubernetes/admin.conf $i:/etc/kubernetes/; done

# On each of the other nodes, add the environment variable and kubectl is ready to use
echo "export KUBECONFIG=/etc/kubernetes/admin.conf" >> ~/.bash_profile
source ~/.bash_profile

# Check
kubectl get nodes
NAME     STATUS   ROLES                  AGE    VERSION
k8s-m1   Ready    control-plane,master   18d    v1.20.2
k8s-m2   Ready    <none>                 3d4h   v1.20.2
k8s-n1   Ready    <none>                 18d    v1.20.2
k8s-n2   Ready    <none>                 18d    v1.20.2