SpringCloud (10): A Brief Look at Elasticsearch (3), Data Aggregation and Autocomplete

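Contents

- 1. Data aggregation

- 1.1 Introduction to aggregations

- 1.2 Bucket aggregation

- 1.3 Metrics aggregation

- 1.4 Aggregations with the RestClient

- 2. Autocomplete

- 2.1 Installing the pinyin analyzer plugin

- 2.2 Custom analyzers

- 2.3 Completion suggester queries

- 2.4 Pinyin autocomplete

- 2.5 Autocomplete with the RestClient

- 2.5.1 Creating the index

- 2.5.2 Updating the document class

- 2.5.3 Completion query

- 2.5.4 Parsing the result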

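1. Data aggregation

1.1 Introduction to aggregations

Aggregations let you compute statistics, analyses, and other calculations over document data.

There are three common kinds of aggregation:

- Bucket aggregations group documents:

  - Terms aggregation: groups by the value of a document field

  - Date histogram: groups by date interval, for example one bucket per week or per month

- Metric aggregations compute a value such as a maximum, minimum, or average:

  - Avg: the average

  - Max: the maximum

  - Min: the minimum

  - Stats: max, min, avg, sum, and so on, all at once

- Pipeline aggregations aggregate over the results of other aggregations

Aggregations can run on fields of the following types:

- keyword

- numeric

- date

- boolean

Note that a text field cannot be used: text fields are analyzed, and by default Elasticsearch rejects aggregations on them.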

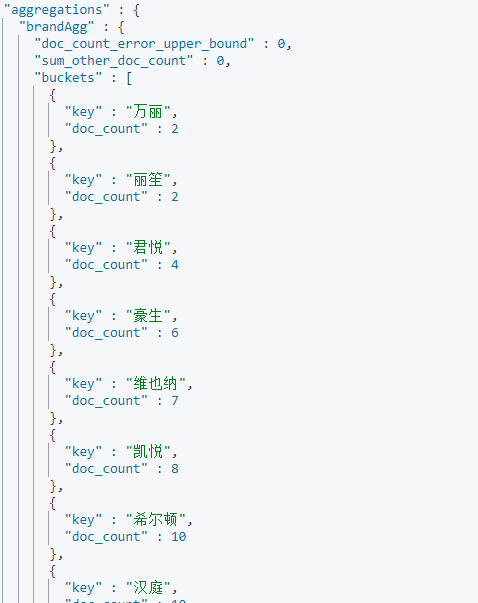

1.2 Bucket aggregation

Suppose we want to group the hotels by brand. A bucket aggregation does exactly that, for example:

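GET /hotel/_search

{

"size": 0, // size 0: return no documents, only the aggregation results

"aggs": { // define the aggregations

"brandAgg": { // a name for this aggregation

"terms": { // aggregation type: we group by brand value, so use terms

"field": "brand", // the field to aggregate on

"order": {

"_count": "asc" // sort buckets by document count, ascending (the default is descending)

},

"size": 20 // how many buckets to return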

}

}

}

}
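To make the request above concrete, here is a minimal in-memory sketch in plain Java of what a terms aggregation computes: group by a field value, count each group, order the buckets by count ascending, and keep at most size of them. It is only a model of the result, not how Elasticsearch implements it. The class name TermsAggSketch, the sample brands, and the tie-break by key are assumptions made for illustration.

```java
import java.util.List;
import java.util.Map;
import java.util.function.Function;
import java.util.stream.Collectors;

class TermsAggSketch {

    /**
     * Groups the values into buckets, counts each bucket, sorts the buckets by
     * count ascending (ties broken by key, an assumption of this sketch) and
     * keeps at most `size` buckets.
     */
    static List<Map.Entry<String, Long>> termsAgg(List<String> brands, int size) {
        Map<String, Long> counts = brands.stream()
                .collect(Collectors.groupingBy(Function.identity(), Collectors.counting()));
        return counts.entrySet().stream()
                .sorted(Map.Entry.<String, Long>comparingByValue()
                        .thenComparing(Map.Entry.<String, Long>comparingByKey()))
                .limit(size)
                .collect(Collectors.toList());
    }

    public static void main(String[] args) {
        // Hypothetical brand values, one per hotel document
        List<String> brands = List.of("7Days", "Hilton", "7Days", "Home Inn", "7Days", "Hilton");
        termsAgg(brands, 20).forEach(b -> System.out.println(b.getKey() + " -> " + b.getValue()));
    }
}
```

Each returned entry plays the role of one bucket: the key is the brand and the value is the document count.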

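To aggregate only over documents that match some condition, for example only hotels priced at 200 yuan or less, add a query. The aggregation then runs over the matching documents only:

GET /hotel/_search

{

"query": {

"range": {

"price": {

"lte": 200 // only aggregate documents priced at 200 or less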

}

}

},

"size": 0,

"aggs": {

"brandAgg": {

"terms": {

"field": "brand",

"size": 20

}

}

}

}

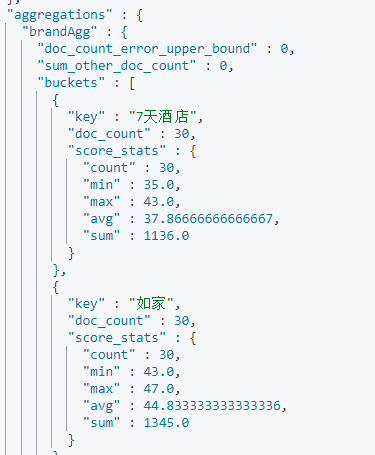

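1.3 Metrics aggregation

To compute the maximum, minimum, average, and so on of the user scores for each brand, we nest a metrics aggregation inside the bucket aggregation. Here we use stats, which returns all of these values at once: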

GET /hotel/_search

{

"size": 0,

"aggs": {

"brandAgg": {

"terms": {

"field": "brand",

"size": 20

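},

"aggs": { // sub-aggregation of brandAgg: computed separately for each bucket

"scoreAgg": { // aggregation name

"stats": { // aggregation type: stats computes min, max, avg, and so on

"field": "score" // the field to aggregate on, here score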

}

}

}

}

}

}

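To sort the buckets by the average score, reference the sub-aggregation's metric in order. The DSL is:

GET /hotel/_search

{

"size": 0,

"aggs": {

"brandAgg": {

"terms": {

"field": "brand",

"size": 20,

"order": {

"scoreAgg.avg": "asc" // sort buckets by average score, ascending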

}

},

"aggs": {

"scoreAgg": {

"stats": {

"field": "score"

}

}

}

}

}

}
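As a rough model of the nested request above, the following plain-Java sketch buckets hotels by brand, computes score statistics per bucket, and orders the buckets by average score ascending. It only mirrors the shape of the result. The Hotel class here is a two-field stand-in and the sample data is made up; neither comes from the project code.

```java
import java.util.Comparator;
import java.util.IntSummaryStatistics;
import java.util.LinkedHashMap;
import java.util.List;
import java.util.Map;
import java.util.stream.Collectors;

class StatsSubAggSketch {

    /** Minimal stand-in for a hotel document; only the two fields used here. */
    static final class Hotel {
        final String brand;
        final int score;
        Hotel(String brand, int score) { this.brand = brand; this.score = score; }
    }

    /**
     * Buckets hotels by brand, computes min/max/avg/sum/count of score per
     * bucket, and returns the buckets ordered by average score ascending.
     */
    static Map<String, IntSummaryStatistics> statsByBrand(List<Hotel> hotels) {
        Map<String, IntSummaryStatistics> stats = hotels.stream()
                .collect(Collectors.groupingBy(h -> h.brand, Collectors.summarizingInt(h -> h.score)));
        Map<String, IntSummaryStatistics> ordered = new LinkedHashMap<>();
        stats.entrySet().stream()
                .sorted(Comparator.comparingDouble(
                        (Map.Entry<String, IntSummaryStatistics> e) -> e.getValue().getAverage()))
                .forEach(e -> ordered.put(e.getKey(), e.getValue()));
        return ordered;
    }

    public static void main(String[] args) {
        List<Hotel> hotels = List.of(   // hypothetical sample data
                new Hotel("Hilton", 47), new Hotel("Hilton", 45),
                new Hotel("7Days", 38), new Hotel("7Days", 42));
        statsByBrand(hotels).forEach((brand, s) -> System.out.println(
                brand + ": min=" + s.getMin() + " max=" + s.getMax() + " avg=" + s.getAverage()));
    }
}
```

The LinkedHashMap preserves the sorted order, much as the buckets array in the response preserves the order requested in the terms aggregation.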

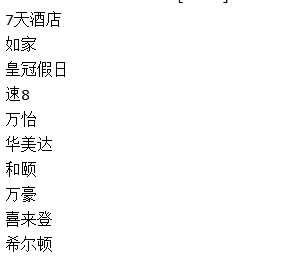

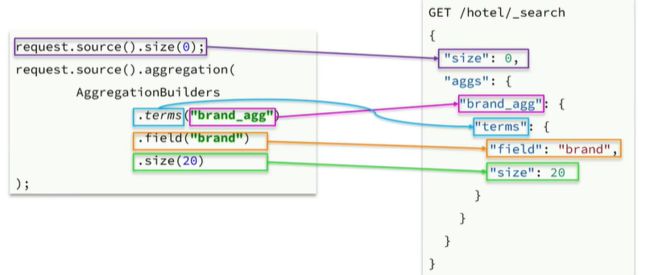

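1.4 Aggregations with the RestClient

Taking the per-brand hotel aggregation as an example, each part of the DSL maps directly onto a call in the Java API.

The code for running the aggregation through the RestClient is: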

@Test

void testAgg() throws IOException {

// 1. Prepare the request object

SearchRequest request = new SearchRequest("hotel");

// 2. Set size to 0 so that no documents are returned

request.source().size(0);

// 3. Add the aggregation

request.source().aggregation(AggregationBuilders

.terms("brandAgg")

.field("brand")

.size(10));

// 4. Send the request

SearchResponse response = restHighLevelClient.search(request, RequestOptions.DEFAULT);

// 5. Parse the aggregation results

Aggregations aggregations = response.getAggregations();

// Look up the aggregation result by name

Terms brandTerms = aggregations.get("brandAgg");

// Get the buckets

List<? extends Terms.Bucket> buckets = brandTerms.getBuckets();

// Iterate over the buckets

for (Terms.Bucket bucket : buckets) {

// The key is the brand name

String brandName = bucket.getKeyAsString();

System.out.println(brandName);

}

}
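To see the correspondence between the builder calls and the DSL more directly, the sketch below writes out the JSON body that size(0) plus terms("brandAgg").field("brand").size(10) stand for, and wraps it in a request using the JDK's own java.net.http.HttpClient (Java 11+). This is an alternative illustration, not the approach used in this article. The class name and the base URL of an unsecured local node are assumptions.

```java
import java.net.URI;
import java.net.http.HttpClient;
import java.net.http.HttpRequest;
import java.net.http.HttpResponse;

class RawAggRequestSketch {

    /** JSON body equivalent to size(0) plus a terms aggregation on one field. */
    static String aggBody(String aggName, String field, int size) {
        return "{\"size\":0,\"aggs\":{\"" + aggName + "\":{\"terms\":{\"field\":\""
                + field + "\",\"size\":" + size + "}}}}";
    }

    /** Builds (but does not send) POST {baseUrl}/hotel/_search. */
    static HttpRequest buildRequest(String baseUrl) {
        return HttpRequest.newBuilder(URI.create(baseUrl + "/hotel/_search"))
                .header("Content-Type", "application/json")
                .POST(HttpRequest.BodyPublishers.ofString(aggBody("brandAgg", "brand", 10)))
                .build();
    }

    public static void main(String[] args) throws Exception {
        System.out.println(aggBody("brandAgg", "brand", 10));
        // Only contact a server when a base URL is passed, e.g. http://localhost:9200
        // (an assumed address of a local node without authentication).
        if (args.length > 0) {
            HttpResponse<String> response = HttpClient.newHttpClient()
                    .send(buildRequest(args[0]), HttpResponse.BodyHandlers.ofString());
            System.out.println(response.body());
        }
    }
}
```

Run without arguments, it only prints the request body, which can be compared line by line with the DSL from section 1.2.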

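2. Autocomplete

2.1 Installing the pinyin analyzer plugin

The effect we want is that suggestions appear as the user types pinyin, which means the text has to be converted to pinyin when it is indexed. For that we install the pinyin analysis plugin. The procedure is much the same as for the IK analyzer installed earlier: enter the container, then install the plugin into the container's plugin directory. The plugin version must match the Elasticsearch version (7.12.1 here):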

# Enter the container

docker exec -it es /bin/bash

# Download and install the plugin online

/usr/share/elasticsearch/bin/elasticsearch-plugin install --batch \

https://github.com/medcl/elasticsearch-analysis-pinyin/releases/download/v7.12.1/elasticsearch-analysis-pinyin-7.12.1.zip

# Exit the container

exit

# Restart the container

docker restart es

After the restart, try out the pinyin analyzer:

POST /_analyze

{

"text": ["你干嘛哎哟"],

"analyzer": "pinyin"

}

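2.2 Custom analyzers

As the example above shows, the pinyin analyzer splits the sentence into single characters, emits the pinyin of each one, and adds a token that strings together the first letters of the whole sentence. That is not what we want. We want the sentence tokenized into words first, with the pinyin index built from those words, and that requires a custom analyzer.

First we need to understand how an analyzer works. An Elasticsearch analyzer is made up of three parts:

- character filters: process the text before the tokenizer, for example by removing or replacing characters

- tokenizer: cuts the text into terms according to some rule, for example keyword (no splitting at all) or ik_smart

- filter (token filter): further processes the terms emitted by the tokenizer, for example case conversion, synonym handling, or pinyin conversion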
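The three stages above can be sketched as three plain Java functions chained together. This is only a toy model of the pipeline order (character filter, then tokenizer, then token filter). The concrete rules used here (replacing "&", splitting on whitespace, lowercasing) are stand-ins chosen for the example and have nothing to do with IK or pinyin.

```java
import java.util.Arrays;
import java.util.List;
import java.util.Locale;
import java.util.stream.Collectors;

class AnalyzerPipelineSketch {

    /** Stage 1, character filter: rewrite the raw text before tokenizing (here "&" becomes " and "). */
    static String charFilter(String text) {
        return text.replace("&", " and ");
    }

    /** Stage 2, tokenizer: cut the text into terms (here: split on whitespace). */
    static List<String> tokenize(String text) {
        return Arrays.stream(text.trim().split("\\s+"))
                .filter(t -> !t.isEmpty())
                .collect(Collectors.toList());
    }

    /** Stage 3, token filter: post-process every term (here: lowercase). */
    static List<String> tokenFilter(List<String> terms) {
        return terms.stream()
                .map(t -> t.toLowerCase(Locale.ROOT))
                .collect(Collectors.toList());
    }

    /** Runs the three stages in the same order an analyzer does. */
    static List<String> analyze(String text) {
        return tokenFilter(tokenize(charFilter(text)));
    }

    public static void main(String[] args) {
        System.out.println(analyze("Tom & Jerry")); // prints [tom, and, jerry]
    }
}
```

In the custom analyzer defined next, ik_max_word takes the place of the tokenizer stage and the pinyin filter takes the place of the token filter stage.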

The DSL for defining a custom analyzer is:

PUT /test // targets the test index

{

"settings": {

"analysis": {

"analyzer": { // custom analyzers

"my_analyzer": { // analyzer name

"tokenizer": "ik_max_word",

"filter": "py" // name of the token filter defined below

}

},

"filter": {

"py": {

"type": "pinyin", // the pinyin token filter

"keep_full_pinyin": false,

"keep_joined_full_pinyin": true,

"keep_original": true,

"limit_first_letter_length": 16,

"remove_duplicated_term": true,

"none_chinese_pinyin_tokenize": false

}

}

}

},

"mappings": { // mappings of the index being created

"properties": {

"name": {

"type": "text",

"analyzer": "my_analyzer", // analyzer used at index time

"search_analyzer": "ik_smart" // analyzer used at search time

}

}

}

}

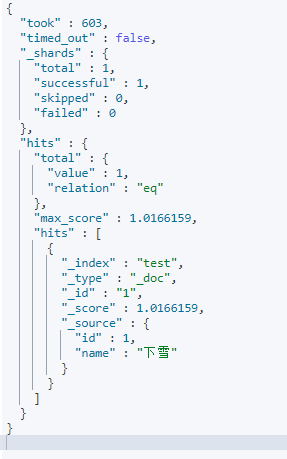

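To test it, index two homophones, 下雪 and 瞎学 (both xiaxue in pinyin), then search with a Chinese sentence. Because search_analyzer is ik_smart, the query text is not converted to pinyin, so only document 1 should match: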

POST /test/_doc/1

{

"id": 1,

"name": "下雪"

}

POST /test/_doc/2

{

"id": 2,

"name": "瞎学"

}

GET /test/_search

{

"query": {

"match": {

"name": "武汉在下雪嘛"

}

}

}

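2.3 Completion suggester queries

Elasticsearch provides the Completion Suggester query for autocomplete. It matches terms that start with what the user has typed and returns them. To keep these lookups fast, the fields involved are subject to some constraints:

- The field used in a completion query must be of type completion.

- The field's content is usually an array of the terms to complete against.

First create an index with the required mapping: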

PUT test2

{

"mappings": {

"properties": {

"title":{

"type": "completion"

}

}

}

}

Add some documents to the test2 index:

// sample data

POST test2/_doc

{

"title": ["Sony", "WH-1000XM3"]

}

POST test2/_doc

{

"title": ["SK-II", "PITERA"]

}

POST test2/_doc

{

"title": ["Nintendo", "switch"]

}

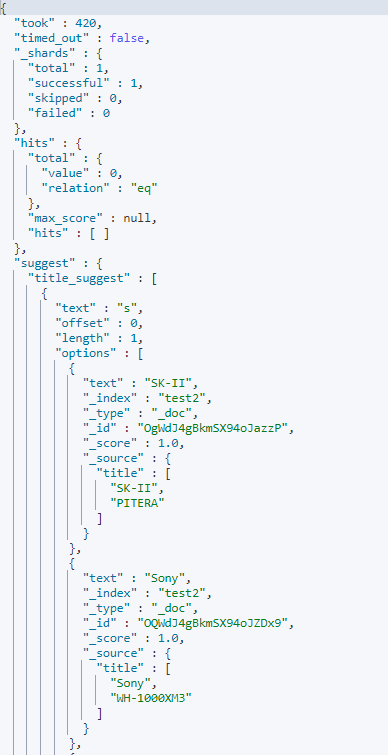

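Now run a completion query. Given the keyword s, the request is:

GET /test2/_search

{

"suggest": {

"title_suggest": {

"text": "s", // the keyword typed so far

"completion": {

"field": "title", // the completion field to query

"skip_duplicates": true, // skip duplicate suggestions

"size": 10 // return the top 10 results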

}

}

}

}
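The matching rule of the query above (return indexed inputs that start with the typed text) can be modelled with a sorted map in plain Java. This is a simplified sketch, not Elasticsearch's implementation: it ignores weights and per-document grouping, and it assumes case-insensitive matching and alphabetical ordering. The class name CompletionSketch is made up for the example.

```java
import java.util.ArrayList;
import java.util.List;
import java.util.Locale;
import java.util.Map;
import java.util.TreeMap;

class CompletionSketch {

    // lowercased input -> original input; a TreeMap keeps keys sorted for prefix scans
    private final TreeMap<String, String> index = new TreeMap<>();

    /** Indexes every input of one document's completion field. */
    void add(List<String> inputs) {
        for (String input : inputs) {
            index.putIfAbsent(input.toLowerCase(Locale.ROOT), input);
        }
    }

    /** Returns up to `size` distinct inputs that start with `prefix`, ignoring case. */
    List<String> suggest(String prefix, int size) {
        String p = prefix.toLowerCase(Locale.ROOT);
        List<String> options = new ArrayList<>();
        // tailMap jumps to the first key >= prefix; stop at the first non-matching key
        for (Map.Entry<String, String> e : index.tailMap(p, true).entrySet()) {
            if (!e.getKey().startsWith(p) || options.size() >= size) {
                break;
            }
            options.add(e.getValue());
        }
        return options;
    }

    public static void main(String[] args) {
        CompletionSketch sketch = new CompletionSketch();
        sketch.add(List.of("Sony", "WH-1000XM3"));
        sketch.add(List.of("SK-II", "PITERA"));
        sketch.add(List.of("Nintendo", "switch"));
        System.out.println(sketch.suggest("s", 10)); // prints [SK-II, Sony, switch]
    }
}
```

With the three sample documents, the prefix s matches Sony, SK-II, and switch, which are the same three inputs the real query should surface, although the real response may order them differently.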

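2.4 Pinyin autocomplete

To autocomplete on pinyin input, the completion field needs a custom analyzer. Create the index with the analyzers and the mapping together: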

PUT /test3

{

"settings": {

"analysis": {

"analyzer": {

"my_analyzer": {

"tokenizer": "ik_max_word",

"filter": "py"

},

"completion_analyzer": {

"tokenizer": "keyword",

"filter": "py"

}

},

"filter": {

"py": {

"type": "pinyin",

"keep_full_pinyin": false,

"keep_joined_full_pinyin": true,

"keep_original": true,

"limit_first_letter_length": 16,

"remove_duplicated_term": true,

"none_chinese_pinyin_tokenize": false

}

}

}

},

"mappings": {

"properties": {

"title": {

"type": "completion",

"analyzer": "completion_analyzer"

}

}

}

}

Then add some documents to the index:

POST test3/_doc

{

"title": ["上子", "熵字", "下雪"]

}

POST test3/_doc

{

"title": ["赏金", "秀色", "猎人"]

}

Now we can query by first-letter abbreviation or by pinyin. The DSL is:

GET /test3/_search

{

"suggest": {

"suggestions": {

"text": "xx",

"completion": {

"field": "title",

"skip_duplicates": true,

"size": 10

}

}

}

}

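Here the input xx is the first-letter abbreviation of 下雪 (xia xue), so the query should suggest 下雪.

Completion also works on the pinyin itself as it is typed letter by letter, for example xiax.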
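To show why both xx and a prefix of the full pinyin can match, here is a small plain-Java model of the terms that the py filter settings from this section keep for one input: the original text (keep_original), the joined full pinyin (keep_joined_full_pinyin), and the first-letter abbreviation. The four-character lookup table is a hypothetical stand-in for the plugin's dictionary, and the Chinese characters are written as Unicode escapes (下雪 and 上子) only to keep the source ASCII.

```java
import java.util.ArrayList;
import java.util.LinkedHashSet;
import java.util.List;
import java.util.Map;
import java.util.Set;

class PinyinTermsSketch {

    // Hypothetical hand-written table covering only the demo characters;
    // the real plugin ships a full dictionary.
    static final Map<Character, String> PINYIN = Map.of(
            '\u4e0b', "xia",
            '\u96ea', "xue",
            '\u4e0a', "shang",
            '\u5b50', "zi");

    /** Terms kept for one input: original text, joined full pinyin, first letters. */
    static Set<String> terms(String input) {
        StringBuilder joined = new StringBuilder();
        StringBuilder firstLetters = new StringBuilder();
        for (char c : input.toCharArray()) {
            String py = PINYIN.get(c);
            if (py == null) {
                continue; // characters outside the demo table are skipped
            }
            joined.append(py);
            firstLetters.append(py.charAt(0));
        }
        Set<String> out = new LinkedHashSet<>(); // a set, mirroring remove_duplicated_term
        out.add(input);                          // keep_original
        if (joined.length() > 0) {
            out.add(joined.toString());          // keep_joined_full_pinyin
            out.add(firstLetters.toString());    // first-letter abbreviation
        }
        return out;
    }

    /** Inputs for which at least one kept term starts with the typed prefix. */
    static List<String> suggest(List<String> inputs, String prefix) {
        List<String> hits = new ArrayList<>();
        for (String input : inputs) {
            if (terms(input).stream().anyMatch(t -> t.startsWith(prefix))) {
                hits.add(input);
            }
        }
        return hits;
    }

    public static void main(String[] args) {
        String xiaxue = "\u4e0b\u96ea";
        String shangzi = "\u4e0a\u5b50";
        System.out.println(terms(xiaxue));                             // original, xiaxue, xx
        System.out.println(suggest(List.of(shangzi, xiaxue), "xx"));   // only the xiaxue input
        System.out.println(suggest(List.of(shangzi, xiaxue), "xiax")); // only the xiaxue input
    }
}
```

Because keep_full_pinyin is false, no per-character tokens such as xia or xue are kept on their own; matching on xiax works only because it is a prefix of the joined term xiaxue.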

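2.5 Autocomplete with the RestClient

2.5.1 Creating the index

To autocomplete over the contents of the hotel index, we have to recreate the index, because it needs an additional field of type completion. Delete the existing index and create the new one as follows: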

DELETE /hotel

PUT /hotel

{

"settings": {

"analysis": {

"analyzer": {

"my_analyzer": {

"tokenizer": "ik_max_word",

"filter": "py"

},

"completion_analyzer": {

"tokenizer": "keyword",

"filter": "py"

}

},

"filter": {

"py": {

"type": "pinyin",

"keep_full_pinyin": false,

"keep_joined_full_pinyin": true,

"keep_original": true,

"limit_first_letter_length": 16,

"remove_duplicated_term": true,

"none_chinese_pinyin_tokenize": false

}

}

}

},

"mappings": {

"properties": {

"id": {

"type": "keyword"

},

"name": {

"type": "text",

"analyzer": "ik_max_word",

"copy_to": "all"

},

"address": {

"type": "keyword",

"index": false

},

"price": {

"type": "integer"

},

"score": {

"type": "integer"

},

"brand": {

"type": "keyword",

"copy_to": "all"

},

"city": {

"type": "keyword"

},

"starName": {

"type": "keyword"

},

"business": {

"type": "keyword",

"copy_to": "all"

},

"location": {

"type": "geo_point"

},

"pic": {

"type": "keyword",

"index": false

},

"all": {

"type": "text",

"analyzer": "ik_max_word"

},

"suggestion": {

"type": "completion",

"analyzer": "completion_analyzer"

}

}

}

}

2.5.2 Updating the document class

The HotelDoc class also has to change. Because the completion field is fed from more than one field, we declare it as a list:

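import lombok.Data;

import lombok.NoArgsConstructor;

import lombok.ToString;

import java.util.Arrays;

import java.util.List;

@Data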

@NoArgsConstructor

@ToString

public class HotelDoc {

private Long id;

private String name;

private String address;

private Integer price;

private Integer score;

private String brand;

private String city;

private String starName;

private String business;

private String location;

private String pic;

private List<String> suggestion;

public HotelDoc(Hotel hotel) {

this.id = hotel.getId();

this.name = hotel.getName();

this.address = hotel.getAddress();

this.price = hotel.getPrice();

this.score = hotel.getScore();

this.brand = hotel.getBrand();

this.city = hotel.getCity();

this.starName = hotel.getStarName();

this.business = hotel.getBusiness();

this.location = hotel.getLatitude() + ", " + hotel.getLongitude();

this.pic = hotel.getPic();

this.suggestion = Arrays.asList(this.brand, this.business);

}

}

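With this definition, both brand and business are offered as completion candidates.

After that, bulk-import the data from the database again, using the same bulk insert as before.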

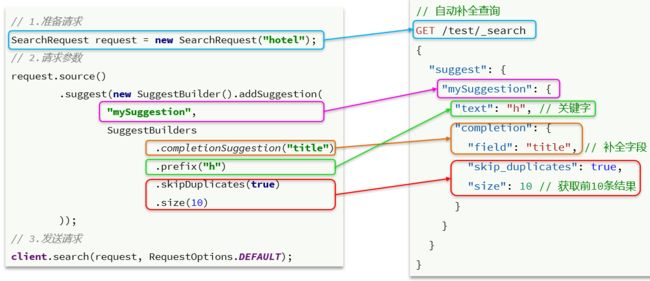

2.5.3 Completion query

As before, each part of the DSL maps onto a RestClient call.

The completion query is:

@Test

void testSuggest() throws IOException{

// 1. Prepare the request

SearchRequest request = new SearchRequest("hotel");

// 2. Build the DSL

request.source().suggest(new SuggestBuilder().addSuggestion(

"suggestions",

SuggestBuilders.completionSuggestion("suggestion")

.prefix("hu")

.skipDuplicates(true)

.size(10)

));

// 3. Send the request

SearchResponse response = restHighLevelClient.search(request, RequestOptions.DEFAULT);

// 4. Print the response

System.out.println(response);

}

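2.5.4 Parsing the result

The response carries a lot of information besides the suggestions themselves, so we have to parse it and pull out just the part we want. The parsing calls follow the structure of the JSON returned by the DSL query.

The parsing code is: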

@Test

void testSuggest() throws IOException{

// 1. Prepare the request

SearchRequest request = new SearchRequest("hotel");

// 2. Build the DSL

request.source().suggest(new SuggestBuilder().addSuggestion(

"suggestions",

SuggestBuilders.completionSuggestion("suggestion")

.prefix("hu")

.skipDuplicates(true)

.size(10)

));

// 3. Send the request

SearchResponse response = restHighLevelClient.search(request, RequestOptions.DEFAULT);

// 4. Parse the response

Suggest suggest = response.getSuggest();

// 4.1 Look up the suggestion result by the name used in the request

CompletionSuggestion suggestions = suggest.getSuggestion("suggestions");

// 4.2 Get the options

List<CompletionSuggestion.Entry.Option> options = suggestions.getOptions();

// 4.3 Iterate over the options

for (CompletionSuggestion.Entry.Option option : options) {

String text = option.getText().toString();

System.out.println(text);

}

}