k8s Notes 6--Quickly deploying a k8s cluster v1.19.4 with kubeadm


- 1 Introduction

- 2 Building the cluster

  - 2.1 Install the base software

  - 2.2 Common settings

  - 2.3 Start the cluster

- 3 Testing

- 4 Notes

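I recently started studying k8s for work. I went through several introductory tutorials and built a cluster several times, and I kept meaning to write a simple, beginner-friendly tutorial, partly for my own later reference and partly as a worked example for anyone who needs one. For various reasons I never got started. This Saturday night I finally had some free time: I began building the cluster at 11 pm, then tested, wrote everything up and fixed what did not work, and finished at 4 am. Once again, that feeling of relief!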

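1 Introduction

- Node overview

This build uses 4 nodes: 1 master + 3 nodes.

| Role | IP | CPU | Memory |
| --- | --- | --- | --- |
| master | 192.168.2.131 | 2 cores | 3Gi |
| node01 | 192.168.2.132 | 1 core | 2Gi |
| node02 | 192.168.2.133 | 1 core | 2Gi |
| node03 | 192.168.2.134 | 1 core | 2Gi |

- Deployment goals

  - Install Docker and kubeadm on all nodes

  - Deploy the Kubernetes master

  - Deploy the container network plugin

  - Deploy the Kubernetes nodes and join them to the Kubernetes cluster

  - Deploy the Dashboard web page to view Kubernetes resources visually

  - Install metrics-server to monitor node and pod CPU and memory usage

  - Install Lens, collect the data through Prometheus, and display it in Lens

- Resources

This post gives the complete steps for building k8s with a nearly latest kubeadm, for anyone who wants to learn it.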

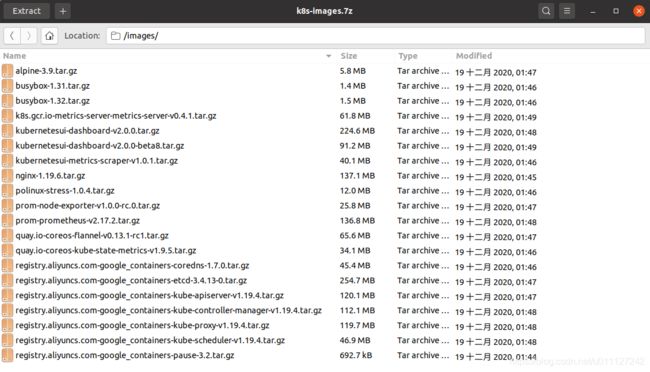

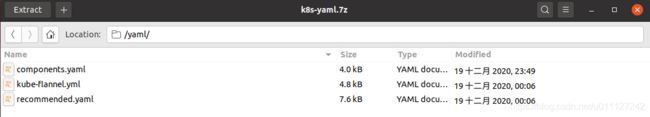

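In addition, the system image, container images, component yaml files and every other file used in this build are packaged and uploaded to Baidu Netdisk. If your network is slow, you can download the package, skip the installation of docker, kubeadm and the other software, and start the cluster directly from section 2.3.

To adjust the network of the virtual machines in the package, see the author's post "Windows Tips 8–VMware workstation virtual machine networking"; the current image uses NAT mode with fixed IPs.

Resource link: kubeadm companion resources https://pan.baidu.com/s/1_JGnMv83yO6mDXXmO9Y3ng extraction code: a3hd user: xg password: 111111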

2 Building the cluster

2.1 Install the base software

The base software consists of docker, kubelet, kubeadm and kubectl. All operations below are run as root, so sudo is not needed.

- Update the sources and install the base packages

    apt-get update && apt-get install -y apt-transport-https curl

Switching the system sources to the Tsinghua mirror is recommended, so that downloads do not crawl.

Reference: Tsinghua Ubuntu mirror https://mirror.tuna.tsinghua.edu.cn/help/ubuntu/

- Install docker

    apt-get -y install apt-transport-https ca-certificates curl software-properties-common
    curl -fsSL http://mirrors.aliyun.com/docker-ce/linux/ubuntu/gpg | apt-key add -
    add-apt-repository "deb [arch=amd64] http://mirrors.aliyun.com/docker-ce/linux/ubuntu $(lsb_release -cs) stable"
    apt-get update
    apt-get install docker-ce

The docker version should not be too far from the kubeadm version: avoid pairing an old docker with a recent kubeadm, or the latest docker with an old kubeadm. This build uses the latest docker with kubeadm 1.19.4 (nearly the latest kubeadm), with no conflicts.

For common docker installation and usage problems, see the author's post "docker Notes 7–Common Docker operations".

- Install kubeadm and related packages

    add-apt-repository "deb [arch=amd64] https://mirrors.aliyun.com/kubernetes/apt/ kubernetes-xenial main"
    apt-get update
    apt install -y kubelet=1.19.4-00 kubectl=1.19.4-00 kubeadm=1.19.4-00 --allow-unauthenticated

If the following error appears:

    GPG error: https://mirrors.aliyun.com/kubernetes/apt kubernetes-xenial InRelease: The following signatures couldn't be verified because the public key is not available: NO_PUBKEY 6A030B21BA07F4FB NO_PUBKEY 8B57C5C2836F4BEB

Fix (replace the key with the PUBKEY actually reported):

    apt-key adv --keyserver keyserver.ubuntu.com --recv-keys 6A030B21BA07F4FB

- Pin the package versions

The docker package installed above is docker-ce, so that is the name to hold.

    apt-mark hold kubelet kubeadm kubectl docker-ce

- Restart kubelet

    systemctl daemon-reload
    systemctl restart kubelet
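The NO_PUBKEY fix above has to be repeated for each key apt reports. A minimal sketch of automating that lookup is given here; the function names `missing_pubkeys` and `print_recv_commands` are made up for illustration, and the commands are only printed, never executed.

```shell
#!/usr/bin/env bash
# Sketch: pull the missing key IDs out of an apt "NO_PUBKEY" error message
# and print one apt-key command per key. Nothing is run against apt.

# missing_pubkeys: read apt output on stdin, print each unique key ID once.
missing_pubkeys() {
  grep -oE 'NO_PUBKEY [0-9A-F]+' | awk '{print $2}' | sort -u
}

# print_recv_commands: turn key IDs on stdin into apt-key commands (printed only).
print_recv_commands() {
  while read -r key; do
    echo "apt-key adv --keyserver keyserver.ubuntu.com --recv-keys ${key}"
  done
}

# Sample input: the error text quoted in this section.
sample="GPG error: https://mirrors.aliyun.com/kubernetes/apt kubernetes-xenial InRelease: The following signatures couldn't be verified because the public key is not available: NO_PUBKEY 6A030B21BA07F4FB NO_PUBKEY 8B57C5C2836F4BEB"

printf '%s\n' "$sample" | missing_pubkeys | print_recv_commands
```

On a real node the input would presumably come from something like `apt-get update 2>&1 | missing_pubkeys`, and the printed commands can be reviewed before running them.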

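2.2 Common settings

- Disable swap

Temporarily:

    swapoff -a

Permanently: edit /etc/fstab (vim /etc/fstab) and comment out the swap entry:

    # UUID=e9a6ffe0-5f53-4e23-99ab-3fedfb3399c1 none swap sw 0 0

- Disable the firewall

    ufw disable

- Set the network parameters

    cat <

- Load the images

To make the images quick to obtain, they are packaged with the other resources on Baidu Netdisk. Download and unpack the package, enter the images folder, and run the following command to load all images in one go:

    for i in *; do docker load -i "$i"; done

To back up all images, use the following command:

    docker images|tail -n +2|awk '{print $1":"$2, $1"-"$2".tar.gz"}'|awk '{print $1, gsub("/","-") $var}'|awk '{print "docker save -o " $3,$1}' > save_img.sh && bash save_img.sh

- Set hosts

Add the following entries to /etc/hosts on every node:

    192.168.2.131 kmaster
    192.168.2.132 knode01
    192.168.2.133 knode02
    192.168.2.134 knode03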
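Because the same preparation is often re-run on cloned machines, it helps if the hosts step is idempotent. The following is a rough sketch, not the author's script: `add_host_entry` is a hypothetical helper, and the demo writes to a temporary file unless `HOSTS_FILE` is set.

```shell
#!/usr/bin/env bash
# Sketch: append "IP NAME" to a hosts file only if NAME is not mapped yet.

# add_host_entry FILE IP NAME
add_host_entry() {
  local file="$1" ip="$2" name="$3"
  # Look for NAME as a whole field on any non-comment line.
  if awk -v n="$name" '$1 !~ /^#/ { for (i = 2; i <= NF; i++) if ($i == n) found = 1 } END { exit !found }' "$file"; then
    return 0   # already present, nothing to do
  fi
  printf '%s %s\n' "$ip" "$name" >> "$file"
}

# Demo on a scratch file; set HOSTS_FILE=/etc/hosts on a real node (assumption).
HOSTS_FILE="${HOSTS_FILE:-$(mktemp)}"
add_host_entry "$HOSTS_FILE" 192.168.2.131 kmaster
add_host_entry "$HOSTS_FILE" 192.168.2.132 knode01
add_host_entry "$HOSTS_FILE" 192.168.2.133 knode02
add_host_entry "$HOSTS_FILE" 192.168.2.134 knode03
cat "$HOSTS_FILE"
```

Running it a second time against the same file leaves the file unchanged, which is the point of the check.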

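Note: if you deploy k8s on virtual machines, once the preparation steps above are finished you can simply clone the virtual machine and then change the network configuration and hostname on each copy.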
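The permanent swap change in this section is a manual edit of /etc/fstab. Before cloning machines, that edit could be scripted roughly as follows; `comment_out_swap` is a hypothetical helper, the root filesystem line in the demo is invented sample data, and the demo works on a temporary copy rather than the real file.

```shell
#!/usr/bin/env bash
# Sketch: comment out every active swap entry in an fstab-style file.

# comment_out_swap FILE: keeps a FILE.bak backup, then rewrites FILE.
comment_out_swap() {
  local file="$1"
  cp "$file" "$file.bak"
  # Field 3 of an fstab entry is the filesystem type; skip lines already commented.
  awk '$1 !~ /^#/ && $3 == "swap" { print "# " $0; next } { print }' "$file.bak" > "$file"
}

# Demo on a scratch file (the first line is made-up sample data).
demo="$(mktemp)"
cat > "$demo" <<'EOF'
UUID=00000000-0000-0000-0000-000000000000 / ext4 errors=remount-ro 0 1
UUID=e9a6ffe0-5f53-4e23-99ab-3fedfb3399c1 none swap sw 0 0
EOF
comment_out_swap "$demo"
cat "$demo"
```

On a node this would be called as `comment_out_swap /etc/fstab` after `swapoff -a`; a second call changes nothing because commented lines are skipped.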

2.3 Start the cluster

- Start kubeadm on the master

    kubeadm init --apiserver-advertise-address=192.168.2.131 --image-repository registry.aliyuncs.com/google_containers --service-cidr=10.1.0.0/16 --pod-network-cidr=10.244.0.0/16

Normal output looks like this:

    I1220 01:16:58.210095 1831 version.go:252] remote version is much newer: v1.20.1; falling back to: stable-1.19
    W1220 01:16:58.878208 1831 configset.go:348] WARNING: kubeadm cannot validate component configs for API groups [kubelet.config.k8s.io kubeproxy.config.k8s.io]
    [init] Using Kubernetes version: v1.19.6
    [preflight] Running pre-flight checks
    [WARNING IsDockerSystemdCheck]: detected "cgroupfs" as the Docker cgroup driver. The recommended driver is "systemd". Please follow the guide at https://kubernetes.io/docs/setup/cri/
    [WARNING Hostname]: hostname "kmaster" could not be reached
    [WARNING Hostname]: hostname "kmaster": lookup kmaster on 8.8.8.8:53: no such host
    [preflight] Pulling images required for setting up a Kubernetes cluster
    [preflight] This might take a minute or two, depending on the speed of your internet connection
    [preflight] You can also perform this action in beforehand using 'kubeadm config images pull'
    [certs] Using certificateDir folder "/etc/kubernetes/pki"
    [certs] Generating "ca" certificate and key
    [certs] Generating "apiserver" certificate and key
    [certs] apiserver serving cert is signed for DNS names [kmaster kubernetes kubernetes.default kubernetes.default.svc kubernetes.default.svc.cluster.local] and IPs [10.1.0.1 192.168.2.131]
    [certs] Generating "apiserver-kubelet-client" certificate and key
    [certs] Generating "front-proxy-ca" certificate and key
    [certs] Generating "front-proxy-client" certificate and key
    [certs] Generating "etcd/ca" certificate and key
    [certs] Generating "etcd/server" certificate and key
    [certs] etcd/server serving cert is signed for DNS names [kmaster localhost] and IPs [192.168.2.131 127.0.0.1 ::1]
    [certs] Generating "etcd/peer" certificate and key
    [certs] etcd/peer serving cert is signed for DNS names [kmaster localhost] and IPs [192.168.2.131 127.0.0.1 ::1]
    [certs] Generating "etcd/healthcheck-client" certificate and key
    [certs] Generating "apiserver-etcd-client" certificate and key
    [certs] Generating "sa" key and public key
    [kubeconfig] Using kubeconfig folder "/etc/kubernetes"
    [kubeconfig] Writing "admin.conf" kubeconfig file
    [kubeconfig] Writing "kubelet.conf" kubeconfig file
    [kubeconfig] Writing "controller-manager.conf" kubeconfig file
    [kubeconfig] Writing "scheduler.conf" kubeconfig file
    [kubelet-start] Writing kubelet environment file with flags to file "/var/lib/kubelet/kubeadm-flags.env"
    [kubelet-start] Writing kubelet configuration to file "/var/lib/kubelet/config.yaml"
    [kubelet-start] Starting the kubelet
    [control-plane] Using manifest folder "/etc/kubernetes/manifests"
    [control-plane] Creating static Pod manifest for "kube-apiserver"
    [control-plane] Creating static Pod manifest for "kube-controller-manager"
    [control-plane] Creating static Pod manifest for "kube-scheduler"
    [etcd] Creating static Pod manifest for local etcd in "/etc/kubernetes/manifests"
    [wait-control-plane] Waiting for the kubelet to boot up the control plane as static Pods from directory "/etc/kubernetes/manifests". This can take up to 4m0s
    [apiclient] All control plane components are healthy after 34.505441 seconds
    [upload-config] Storing the configuration used in ConfigMap "kubeadm-config" in the "kube-system" Namespace
    [kubelet] Creating a ConfigMap "kubelet-config-1.19" in namespace kube-system with the configuration for the kubelets in the cluster
    [upload-certs] Skipping phase. Please see --upload-certs
    [mark-control-plane] Marking the node kmaster as control-plane by adding the label "node-role.kubernetes.io/master=''"
    [mark-control-plane] Marking the node kmaster as control-plane by adding the taints [node-role.kubernetes.io/master:NoSchedule]
    [bootstrap-token] Using token: xxpowp.b8zoas29foe15zuz
    [bootstrap-token] Configuring bootstrap tokens, cluster-info ConfigMap, RBAC Roles
    [bootstrap-token] configured RBAC rules to allow Node Bootstrap tokens to get nodes
    [bootstrap-token] configured RBAC rules to allow Node Bootstrap tokens to post CSRs in order for nodes to get long term certificate credentials
    [bootstrap-token] configured RBAC rules to allow the csrapprover controller automatically approve CSRs from a Node Bootstrap Token
    [bootstrap-token] configured RBAC rules to allow certificate rotation for all node client certificates in the cluster
    [bootstrap-token] Creating the "cluster-info" ConfigMap in the "kube-public" namespace
    [kubelet-finalize] Updating "/etc/kubernetes/kubelet.conf" to point to a rotatable kubelet client certificate and key
    [addons] Applied essential addon: CoreDNS
    [addons] Applied essential addon: kube-proxy

    Your Kubernetes control-plane has initialized successfully!

    To start using your cluster, you need to run the following as a regular user:

      mkdir -p $HOME/.kube
      sudo cp -i /etc/kubernetes/admin.conf $HOME/.kube/config
      sudo chown $(id -u):$(id -g) $HOME/.kube/config

    You should now deploy a pod network to the cluster.
    Run "kubectl apply -f [podnetwork].yaml" with one of the options listed at:
      https://kubernetes.io/docs/concepts/cluster-administration/addons/

    Then you can join any number of worker nodes by running the following on each as root:

    kubeadm join 192.168.2.131:6443 --token xxpowp.b8zoas29foe15zuz \
        --discovery-token-ca-cert-hash sha256:9025b306232c82bb8f5a572d0453247d6db95e5c70dea1e90c63a5e8b8309af5

- Deploy the CNI network on the master

    # wget https://github.com/flannel-io/flannel/releases/latest/download/kube-flannel.yml
    # kubectl apply -f kube-flannel.yml

Output:

    podsecuritypolicy.policy/psp.flannel.unprivileged created
    clusterrole.rbac.authorization.k8s.io/flannel created
    clusterrolebinding.rbac.authorization.k8s.io/flannel created
    serviceaccount/flannel created
    configmap/kube-flannel-cfg created
    daemonset.apps/kube-flannel-ds created

Once the network is deployed correctly, the master node reports the Ready status.

- Join the worker nodes to the cluster

Run the join command on each of node01-03:

    kubeadm join 192.168.2.131:6443 --token xxpowp.b8zoas29foe15zuz \
        --discovery-token-ca-cert-hash sha256:9025b306232c82bb8f5a572d0453247d6db95e5c70dea1e90c63a5e8b8309af5

Output:

    [preflight] Running pre-flight checks
    [WARNING IsDockerSystemdCheck]: detected "cgroupfs" as the Docker cgroup driver. The recommended driver is "systemd". Please follow the guide at https://kubernetes.io/docs/setup/cri/
    [preflight] Reading configuration from the cluster...
    [preflight] FYI: You can look at this config file with 'kubectl -n kube-system get cm kubeadm-config -oyaml'
    [kubelet-start] Writing kubelet configuration to file "/var/lib/kubelet/config.yaml"
    [kubelet-start] Writing kubelet environment file with flags to file "/var/lib/kubelet/kubeadm-flags.env"
    [kubelet-start] Starting the kubelet
    [kubelet-start] Waiting for the kubelet to perform the TLS Bootstrap...

    This node has joined the cluster:
    * Certificate signing request was sent to apiserver and a response was received.
    * The Kubelet was informed of the new secure connection details.

    Run 'kubectl get nodes' on the control-plane to see this node join the cluster.

After the 3 nodes have joined, wait 1-2 minutes and check with kubectl get nodes: all of them are Ready, and the cluster is up.

- Rejoining the cluster when the token and discovery-token-ca-cert-hash have been forgotten

Reference: Reference->Setup tools reference->Kubeadm->kubeadm join

4.1 On the master node

    # kubeadm token create

Output:

    W0122 22:39:46.556169 22712 configset.go:348] WARNING: kubeadm cannot validate component configs for API groups [kubelet.config.k8s.io kubeproxy.config.k8s.io]
    6jvgb0.7g0mdms1xllhr2pc

    # openssl x509 -pubkey \
        -in /etc/kubernetes/pki/ca.crt | openssl rsa \
        -pubin -outform der 2>/dev/null | openssl dgst \
        -sha256 -hex | sed 's/^.* //'

Output as captured by the author (when the sed expression matches, the "(stdin)= " prefix is stripped and only the hash is printed):

    (stdin)= d07b6263d56d9329fc9a313b0c64ddc83c1eb828eba350ad4b76d9fbd76a1e89

4.2 On the worker node

    # kubeadm join --token 6jvgb0.7g0mdms1xllhr2pc 10.120.75.102:6443 --discovery-token-ca-cert-hash sha256:d07b6263d56d9329fc9a313b0c64ddc83c1eb828eba350ad4b76d9fbd76a1e89

- Take a node offline

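5.1 On the master node, mark the node unschedulable and evict its pods

Mark the node unschedulable:

    # kubectl cordon gdcintern-test01-mj.i.nease.net

Evict the pods on the node:

    # kubectl drain gdcintern-test01-mj.i.nease.net --ignore-daemonsets

Check whether any pods are still running on the node; if none are, it can be deleted:

    # kubectl get pod -o wide|grep gdcintern-test01-mj.i.nease.net

Delete the node:

    # kubectl delete node gdcintern-test01-mj.i.nease.net

5.2 On the worker node, run reset

Run kubeadm reset, then delete the stateful directories as prompted: [/var/lib/kubelet /var/lib/dockershim /var/run/kubernetes /var/lib/cni]

    rm -fr /etc/cni/net.d

Note: by default, if you only run delete node on the master, the kubelet on the worker restarts the service after a while and the node joins the cluster again, so the reset has to be run on the worker as well.

5.3 To rejoin the cluster, regenerate the token and then the certificates

Regenerate the token:

    kubeadm token create --print-join-command

Regenerate the certificates:

    kubeadm init phase upload-certs --upload-certs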
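The rejoin steps in 4.1 and 4.2 boil down to combining three values: the API server endpoint, a token, and the CA certificate hash. A minimal sketch of that assembly follows; `ca_hash_from_digest` and `join_command` are illustrative names, and the command is only printed so the sketch runs without a cluster.

```shell
#!/usr/bin/env bash
# Sketch: build a kubeadm join command from a token and an openssl digest line.

# ca_hash_from_digest: read `openssl dgst -sha256 -hex` output on stdin and
# print only the hex digest, whether or not a "(stdin)= " prefix is present.
ca_hash_from_digest() {
  awk '{ print $NF }'
}

# join_command ENDPOINT TOKEN HASH: print (not run) the join command.
join_command() {
  printf 'kubeadm join %s --token %s --discovery-token-ca-cert-hash sha256:%s\n' "$1" "$2" "$3"
}

# Values taken from the example output in this section.
hash="$(printf '(stdin)= d07b6263d56d9329fc9a313b0c64ddc83c1eb828eba350ad4b76d9fbd76a1e89\n' | ca_hash_from_digest)"
join_command 10.120.75.102:6443 6jvgb0.7g0mdms1xllhr2pc "$hash"
```

The one-step alternative is `kubeadm token create --print-join-command` from 5.3, which prints the complete join command itself.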

3 Testing

- Deploy nginx and expose port 80

    kubectl create deployment nginx --image=nginx:1.19.6
    kubectl expose deployment nginx --port=80 --type=NodePort
    kubectl get pod,svc

- Install dashboard

    wget https://raw.githubusercontent.com/kubernetes/dashboard/v2.0.0-beta8/aio/deploy/recommended.yaml
    kubectl apply -f recommended.yaml

Output:

    namespace/kubernetes-dashboard created
    serviceaccount/kubernetes-dashboard created
    service/kubernetes-dashboard created
    secret/kubernetes-dashboard-certs created
    secret/kubernetes-dashboard-csrf created
    secret/kubernetes-dashboard-key-holder created
    configmap/kubernetes-dashboard-settings created
    role.rbac.authorization.k8s.io/kubernetes-dashboard created
    clusterrole.rbac.authorization.k8s.io/kubernetes-dashboard created
    rolebinding.rbac.authorization.k8s.io/kubernetes-dashboard created
    clusterrolebinding.rbac.authorization.k8s.io/kubernetes-dashboard created
    deployment.apps/kubernetes-dashboard created
    service/dashboard-metrics-scraper created
    deployment.apps/dashboard-metrics-scraper created

Note: the kubernetes-dashboard Service needs nodePort: 30001 added under its ports attribute (another port can be used if preferred) and type: NodePort set, as shown in the figure:

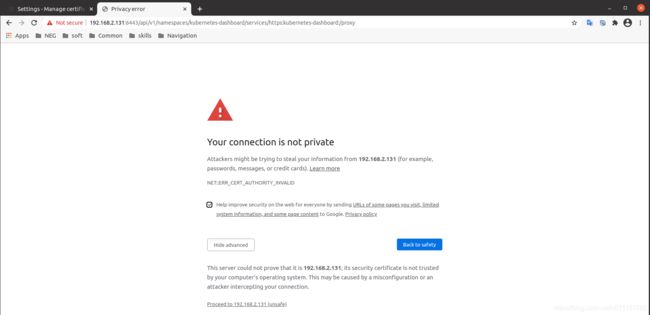
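For readers who would rather not edit the yaml by hand, the same Service change could be expressed as a patch. This is a sketch under assumptions: it assumes the Service in recommended.yaml exposes port 443 with targetPort 8443, `write_nodeport_patch` is a hypothetical helper, and the kubectl command is printed instead of executed.

```shell
#!/usr/bin/env bash
# Sketch: write a strategic-merge patch that turns the dashboard Service
# into a NodePort Service on a chosen port.

# write_nodeport_patch FILE NODEPORT
write_nodeport_patch() {
  cat > "$1" <<EOF
spec:
  type: NodePort
  ports:
    - port: 443
      targetPort: 8443
      nodePort: $2
EOF
}

PATCH_FILE="${PATCH_FILE:-$(mktemp)}"
write_nodeport_patch "$PATCH_FILE" 30001
cat "$PATCH_FILE"

# Printed rather than executed, so the sketch runs without a cluster:
echo "kubectl -n kubernetes-dashboard patch service kubernetes-dashboard --patch \"\$(cat $PATCH_FILE)\""
```

Check the port numbers against your copy of recommended.yaml before applying; if they differ, the patch adds a new port entry instead of updating the existing one.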

Generate credentials for the dashboard:

1) Create the service account

    root@kmaster:~# kubectl create serviceaccount dashboard-admin -n kube-system
    serviceaccount/dashboard-admin created

2) Bind it to the cluster-admin role

    root@kmaster:~# kubectl create clusterrolebinding dashboard-admin --clusterrole=cluster-admin --serviceaccount=kube-system:dashboard-admin
    clusterrolebinding.rbac.authorization.k8s.io/dashboard-admin created

3) Read the token

    root@kmaster:~# kubectl describe secrets -n kube-system $(kubectl -n kube-system get secret | awk '/dashboard-admin/{print $1}')
    Name:         dashboard-admin-token-6hl77
    Namespace:    kube-system
    Labels:       <none>
    Annotations:  kubernetes.io/service-account.name: dashboard-admin
                  kubernetes.io/service-account.uid: 3e37a5cd-8f54-4cde-8d6d-cefbc7d92516

    Type:  kubernetes.io/service-account-token

    Data
    ====
    ca.crt:     1066 bytes
    namespace:  11 bytes
    token:      eyJhbGciOiJSUzI1NiIsImtpZCI6IktpaWNYeW5DSVRLdWx6YmpoUFJsdHVXTzRQV0NGQnlKV1dmN29Xd21zX1UifQ.eyJpc3MiOiJrdWJlcm5ldGVzL3NlcnZpY2VhY2NvdW50Iiwia3ViZXJuZXRlcy5pby9zZXJ2aWNlYWNjb3VudC9uYW1lc3BhY2UiOiJrdWJlLXN5c3RlbSIsImt1YmVybmV0ZXMuaW8vc2VydmljZWFjY291bnQvc2VjcmV0Lm5hbWUiOiJkYXNoYm9hcmQtYWRtaW4tdG9rZW4tNmhsNzciLCJrdWJlcm5ldGVzLmlvL3NlcnZpY2VhY2NvdW50L3NlcnZpY2UtYWNjb3VudC5uYW1lIjoiZGFzaGJvYXJkLWFkbWluIiwia3ViZXJuZXRlcy5pby9zZXJ2aWNlYWNjb3VudC9zZXJ2aWNlLWFjY291bnQudWlkIjoiM2UzN2E1Y2QtOGY1NC00Y2RlLThkNmQtY2VmYmM3ZDkyNTE2Iiwic3ViIjoic3lzdGVtOnNlcnZpY2VhY2NvdW50Omt1YmUtc3lzdGVtOmRhc2hib2FyZC1hZG1pbiJ9.fTGH-2oHqGcc4yOcjEUgco4aDPF5OyojQWzVt2AnvQLiOWynFtaxIjWMXNqMcfH4fpTE7sT1PrDECFG2iV4J6ZIhtQUMfDD5YqjPSLU_w1qr528HcDFRtNbS6ik-OA-KjmfbNU6bdQ4QEYNPsXC40TBj1kpr9nr-ZZQIuQhD7zXQ5AEQR3S6A9B0TPwl8v1wRn86ge7YD2YZ76JY-knntlnd5wgsbfYpAeQECxZ6uOcN-mJYOWB11WtGmfVCtWC4-N63SlWyvcXEfzl8h5wnxI8yTGdH-LoEjHMx-B9-_yS0yRfZLPDowND9BgoqQvJF7lqyC1PR7M25Z20s2h7Log

The dashboard can be viewed at the addresses below. Because this https endpoint is not backed by a trusted certificate, most browsers will not open it directly, so a p12 certificate has to be generated and imported first; after that, log in with the token generated above.

https://192.168.2.131:30001 (https://nodeIp:NodePort)

https://192.168.2.131:6443/api/v1/namespaces/kubernetes-dashboard/services/https:kubernetes-dashboard:/proxy/#/overview?namespace=default (access through the proxy on port 6443 of the API server)

1) Generate kubecfg.crt

    grep 'client-certificate-data' ~/.kube/config | head -n 1 | awk '{print $2}' | base64 -d > kubecfg.crt

2) Generate kubecfg.key

    grep 'client-key-data' ~/.kube/config | head -n 1 | awk '{print $2}' | base64 -d > kubecfg.key

3) Generate kubecfg.p12

    openssl pkcs12 -export -clcerts -inkey kubecfg.key -in kubecfg.crt -out kubecfg.p12 -name "kubernetes-client"

The password is set to 111111 here; the browser asks for it when the p12 certificate is imported.

Import the certificate in the browser: in Google Chrome go to settings->privacy and security->Manage certificates->Your certificates->Import and import the kubecfg.p12 file just generated, then restart the browser and open the dashboard address.
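Steps 1) and 2) above differ only in the field name and the output file, so they can be folded into one helper. The sketch below is illustrative only: `extract_kubeconfig_field` is a made-up name, and the demo uses a fake kubeconfig with dummy data rather than a real ~/.kube/config. It writes with `>` so that re-running it overwrites the file instead of appending a second copy.

```shell
#!/usr/bin/env bash
# Sketch: decode one base64 field of a kubeconfig file into its own file.

# extract_kubeconfig_field KUBECONFIG KEY OUTFILE
extract_kubeconfig_field() {
  # First matching line only; field 2 is the base64 payload.
  grep "$2" "$1" | head -n 1 | awk '{print $2}' | base64 -d > "$3"
}

# Demo with a fake kubeconfig holding dummy data (not a real certificate).
cfg="$(mktemp)"
out="$(mktemp)"
printf '    client-certificate-data: %s\n' "$(printf 'dummy-cert' | base64)" > "$cfg"
extract_kubeconfig_field "$cfg" client-certificate-data "$out"
cat "$out"; echo
```

Against a real config the calls would be `extract_kubeconfig_field ~/.kube/config client-certificate-data kubecfg.crt` and the same with `client-key-data` and `kubecfg.key`.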

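Importing the p12 certificate file:

Normally, once the certificate has been added, the dashboard can be reached through "Proceed to 192.168.2.131 (unsafe)" (the setup is somewhat different on the latest macOS, so this covers only Chrome on Linux and Windows), as shown below: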

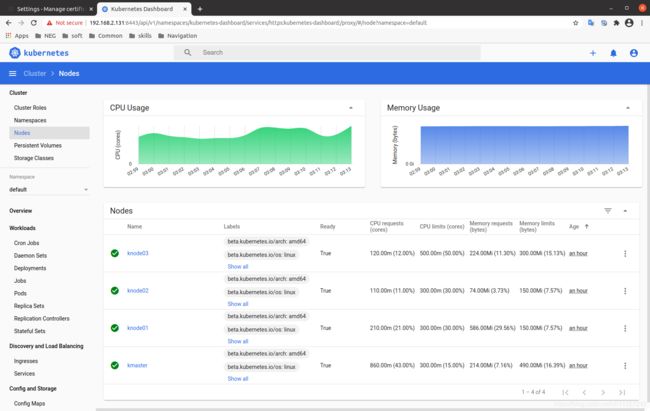

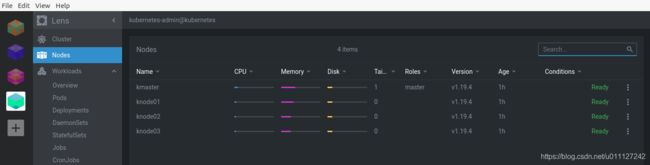

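Viewing node information:

At this point the dashboard can finally be reached from the browser. It is also possible to expose an http port for the dashboard through the relevant settings, which avoids this long detour.

Once the author has tested the http-port setup for the dashboard, the steps will be added here.

- Install metrics-server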

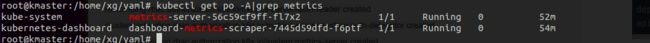

    wget https://github.com/kubernetes-sigs/metrics-server/releases/latest/download/components.yaml

Add --kubelet-insecure-tls to the args of the metrics-server container, otherwise it fails on startup. Then apply the file:

    kubectl apply -f components.yaml

Output:

    serviceaccount/metrics-server created
    clusterrole.rbac.authorization.k8s.io/system:aggregated-metrics-reader created
    clusterrole.rbac.authorization.k8s.io/system:metrics-server created
    rolebinding.rbac.authorization.k8s.io/metrics-server-auth-reader created
    clusterrolebinding.rbac.authorization.k8s.io/metrics-server:system:auth-delegator created
    clusterrolebinding.rbac.authorization.k8s.io/system:metrics-server created
    service/metrics-server created
    deployment.apps/metrics-server created
    apiservice.apiregistration.k8s.io/v1beta1.metrics.k8s.io created

All pods have now started normally; the pod information is shown in the figure:

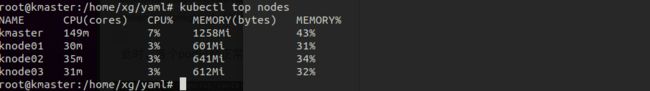
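The --kubelet-insecure-tls edit to components.yaml can be scripted instead of done in an editor. The sketch below rests on one assumption: that the args list of the metrics-server container contains a `- --cert-dir=...` entry, next to which the new flag is inserted. `add_insecure_tls_flag` is a hypothetical helper and the yaml fragment in the demo is a trimmed illustration, not the full manifest.

```shell
#!/usr/bin/env bash
# Sketch: insert "--kubelet-insecure-tls" into the metrics-server args list.

# add_insecure_tls_flag FILE: no-op if the flag is already present.
add_insecure_tls_flag() {
  local file="$1"
  if grep -q -- '--kubelet-insecure-tls' "$file"; then
    return 0
  fi
  cp "$file" "$file.bak"
  awk '
    { print }
    !done && /^[ \t]*- --cert-dir=/ {
      indent = $0
      sub(/-.*/, "", indent)          # keep only the leading whitespace
      print indent "- --kubelet-insecure-tls"
      done = 1
    }
  ' "$file.bak" > "$file"
}

# Demo on an illustrative fragment (assumed shape of the args list).
demo="$(mktemp)"
cat > "$demo" <<'EOF'
        args:
          - --cert-dir=/tmp
          - --secure-port=4443
EOF
add_insecure_tls_flag "$demo"
cat "$demo"
```

If the downloaded manifest has no `--cert-dir` argument the file is left unchanged, so it is worth grepping for the flag before running kubectl apply.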

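Once metrics-server is working, kubectl top nodes shows CPU and memory usage for each node (if metrics-server is not installed correctly, the command returns an error):

- Install Prometheus monitoring through the Lens plugin

Copy the config file to the host, import it into Lens, and configure the Prometheus settings; for the detailed steps see the author's post "k8s Notes 3–Kubernetes IDE Lens".

Monitoring information for the master node:

CPU, memory and disk information for each node: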

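4 Notes

- References

1 production-environment/tools/kubeadm/install-kubeadm

2 Quickly deploying a Kubernetes cluster with kubeadm (v1.18)

3 kubeadm companion resources https://pan.baidu.com/s/1_JGnMv83yO6mDXXmO9Y3ng extraction code: a3hd user: xg password: 111111

- Software versions

docker version: Docker version 20.10.1;

k8s cluster version: v1.19.4;

Test system: Ubuntu 16.04 server;

Test VMware Workstation: 16.0.0 Pro; a VMware version that is too new may not be supported (the base Userver.vmdk image was created by the author in 2018 with vm12.5);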