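A step-by-step guide to deploying a Kubernetes (K8s) cluster on virtual machines

In the previous article, "Deploying CentOS on a VM and configuring the network", we set up a server. For this guide we need three servers:

master node: master 192.168.171.7

worker node: node1 192.168.171.6

worker node: node2 192.168.171.4

Start all three virtual machines and connect to each of them with Xshell.

Use the terminal's option for sending input to multiple sessions so that the same command runs on every server at the same time.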

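Part 1: Pre-deployment preparation (run on all three servers)

Check the operating system version

# Installing a Kubernetes cluster this way requires CentOS 7.5 or later
cat /etc/redhat-release
# CentOS Linux release 7.9.2009 (Core)

Hostname resolution

To make it easy for cluster nodes to reach each other by name, configure hostname resolution here. In production an internal DNS server is recommended. Adjust the IP addresses to match your own machines. Edit /etc/hosts on all three servers and add the following:

192.168.171.7 master
192.168.171.6 node1
192.168.171.4 node2

Then, on each of the three servers, confirm that the names resolve:

ping -c 3 master
ping -c 3 node1
ping -c 3 node2

Time synchronization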

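Kubernetes requires the clocks of all cluster nodes to agree closely. Here the chronyd service is used to synchronize time from the network.

In production, an internal time server is recommended.

# Start the chronyd service
systemctl start chronyd

# Enable chronyd at boot
systemctl enable chronyd

# Give chronyd a few seconds after starting, then verify the time with date
date

Disable the iptables and firewalld services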

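Kubernetes and Docker generate a large number of iptables rules while running. To keep the system's own rules from getting mixed up with them, turn the system firewall services off.

# 1 Stop and disable firewalld
systemctl stop firewalld
systemctl disable firewalld

# 2 Stop and disable the iptables service
# (if the iptables service is not installed, systemctl only reports that the unit is not loaded)
systemctl stop iptables
systemctl disable iptables

Disable SELinux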

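SELinux is a security service on Linux. If it is left enabled, a variety of odd problems can show up while installing the cluster.

# Edit /etc/selinux/config and change the value of SELINUX to disabled
# Note: a reboot is required for the change to take effect

SELINUX=disabled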
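The edit to /etc/selinux/config can also be scripted. The following is a minimal sketch, assuming a `sed` that accepts `-i.bak` and a config file in the stock `KEY=value` layout. The helper name `set_selinux_disabled` and the scratch file are illustrative and not part of the original steps; the demo runs on a temporary file rather than the real config.

```shell
#!/usr/bin/env bash
# Sketch only: set_selinux_disabled is a hypothetical helper name.
# The file path is a parameter so the change can be rehearsed on a copy.
set_selinux_disabled() {
  cfg="$1"
  # -i.bak edits in place and keeps the original as <file>.bak.
  # The pattern "SELINUX=" does not match the separate SELINUXTYPE= line.
  sed -i.bak 's/^SELINUX=.*/SELINUX=disabled/' "$cfg"
}

# Rehearsal on a scratch file that mimics the stock two-key layout.
scratch="$(mktemp)"
printf 'SELINUX=enforcing\nSELINUXTYPE=targeted\n' > "$scratch"
set_selinux_disabled "$scratch"
cat "$scratch"
```

On a node this would be called as root with /etc/selinux/config as the argument. Running `setenforce 0` additionally switches the running system to permissive mode until the reboot.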

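Disable the swap partition

A swap partition is virtual memory: once physical memory is used up, disk space is used as if it were memory.

Having swap enabled can hurt system performance badly, so Kubernetes requires swap to be disabled on every node.

If swap really cannot be turned off for some reason, that has to be stated with explicit parameters during cluster installation.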
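The manual /etc/fstab edit described in this section can also be scripted. A minimal sketch, assuming an fstab whose swap entry has `swap` as its third field; the helper name `comment_out_swap` is illustrative, and the demo runs on a scratch file rather than the real /etc/fstab.

```shell
#!/usr/bin/env bash
# Sketch only: comment_out_swap is a hypothetical helper name.
comment_out_swap() {
  fstab="$1"
  # Skip lines that are already comments; prefix "# " to any line whose
  # third whitespace-separated field is "swap". A .bak copy is kept.
  sed -i.bak -E '/^[[:space:]]*#/!s/^([^[:space:]]+[[:space:]]+[^[:space:]]+[[:space:]]+swap[[:space:]].*)$/# \1/' "$fstab"
}

# Rehearsal on a scratch file with the two entries used in this guide.
scratch="$(mktemp)"
printf '%s\n' \
  'UUID=455cc753-7a60-4c17-a424-7741728c44a1 /boot xfs defaults 0 0' \
  '/dev/mapper/centos-swap swap swap defaults 0 0' > "$scratch"
comment_out_swap "$scratch"
cat "$scratch"
```

The fstab change only affects the next boot. `swapoff -a` turns swap off for the running system as well.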

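# Edit the partition configuration file /etc/fstab and comment out the swap line
# Note: a reboot is required for the change to take effect

UUID=455cc753-7a60-4c17-a424-7741728c44a1 /boot xfs defaults 0 0
# /dev/mapper/centos-swap swap swap defaults 0 0

Modify the Linux kernel parameters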

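# Add bridge filtering and IP forwarding
# Edit /etc/sysctl.d/kubernetes.conf and add the following:

net.bridge.bridge-nf-call-ip6tables = 1
net.bridge.bridge-nf-call-iptables = 1
net.ipv4.ip_forward = 1

# Load the bridge filter module first; the net.bridge.* keys only exist once it is loaded
modprobe br_netfilter

# Check that the bridge filter module loaded
lsmod | grep br_netfilter

# Reload the configuration (sysctl -p with no argument reads only /etc/sysctl.conf, so name the file)
sysctl -p /etc/sysctl.d/kubernetes.conf

Configure IPVS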

# 1 Install ipset and ipvsadm
yum install ipset ipvsadm -y

# 2 Write the modules that need to be loaded into a script file
cat <<EOF > /etc/sysconfig/modules/ipvs.modules
#!/bin/bash
modprobe -- ip_vs
modprobe -- ip_vs_rr
modprobe -- ip_vs_wrr
modprobe -- ip_vs_sh
modprobe -- nf_conntrack_ipv4
EOF

# 3 Make the script executable
chmod +x /etc/sysconfig/modules/ipvs.modules

# 4 Run the script
/bin/bash /etc/sysconfig/modules/ipvs.modules

# 5 Check that the modules loaded
lsmod | grep -e ip_vs -e nf_conntrack_ipv4

Reboot the servers

Once the steps above are done, reboot the Linux system.

reboot
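After the reboot it is worth confirming that the settings took effect before installing anything. A minimal sketch, assuming the standard Linux /proc/swaps layout (one header line, then one line per active swap device) and `lsmod`-style text on standard input. The helper names `swap_is_off` and `module_loaded` and the sample inputs are illustrative.

```shell
#!/usr/bin/env bash
# Sketch only: helper names and sample inputs are hypothetical.

# swap_is_off [FILE]: succeeds when FILE (default /proc/swaps) lists no device.
swap_is_off() {
  swaps="${1:-/proc/swaps}"
  # Line 1 is the column header; any further line is an active swap device.
  awk 'NR > 1 { n++ } END { exit (n > 0) }' "$swaps"
}

# module_loaded NAME: reads lsmod-style text on stdin and succeeds when
# NAME appears as the first column of some line.
module_loaded() {
  awk -v m="$1" '$1 == m { found = 1 } END { exit !found }'
}

# Rehearsal with sample inputs instead of the live system.
sample_swaps="$(mktemp)"
printf 'Filename\tType\tSize\tUsed\tPriority\n' > "$sample_swaps"
if swap_is_off "$sample_swaps"; then echo "swap: off"; fi

if printf 'br_netfilter 22256 0\nbridge 151336 1 br_netfilter\n' | module_loaded br_netfilter; then
  echo "br_netfilter: loaded"
fi
```

On a node, `swap_is_off` with no argument reads /proc/swaps, and `lsmod | module_loaded ip_vs` checks a module against the running kernel. `getenforce` should print Disabled once the SELinux change has taken effect.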

Part 2: Software to install before Kubernetes (run on all three servers)

1: Install Docker

https://o36meer8.mirror.aliyuncs.com can be replaced with your own Alibaba Cloud registry mirror address.

# 1 Switch the package source
wget https://mirrors.aliyun.com/docker-ce/linux/centos/docker-ce.repo -O /etc/yum.repos.d/docker-ce.repo

# 2 List the docker versions available from the current source
yum list docker-ce --showduplicates

# 3 Install a specific version of docker-ce
# --setopt=obsoletes=0 must be given, otherwise yum automatically installs a newer version
yum install --setopt=obsoletes=0 docker-ce-18.06.3.ce-3.el7 -y

# 4 Add a configuration file
# Docker uses cgroupfs as its cgroup driver by default, while Kubernetes recommends systemd instead
mkdir -p /etc/docker
cat <<EOF > /etc/docker/daemon.json
{
  "exec-opts": ["native.cgroupdriver=systemd"],
  "registry-mirrors": ["https://o36meer8.mirror.aliyuncs.com"]
}
EOF

# 5 Start docker
systemctl restart docker
systemctl enable docker

# 6 Check docker's status and version
docker version

2: Install the Kubernetes components

# The Kubernetes package source is hosted overseas and is slow, so switch to a domestic mirror
# Edit /etc/yum.repos.d/kubernetes.repo and add the following configuration

[kubernetes]
name=Kubernetes
baseurl=http://mirrors.aliyun.com/kubernetes/yum/repos/kubernetes-el7-x86_64
enabled=1
gpgcheck=0
repo_gpgcheck=0
gpgkey=http://mirrors.aliyun.com/kubernetes/yum/doc/yum-key.gpg
       http://mirrors.aliyun.com/kubernetes/yum/doc/rpm-package-key.gpg

# Install kubeadm, kubelet and kubectl
yum install --setopt=obsoletes=0 kubeadm-1.17.4-0 kubelet-1.17.4-0 kubectl-1.17.4-0 -y

# Configure the kubelet cgroup driver
# Edit /etc/sysconfig/kubelet and add the following configuration

KUBELET_CGROUP_ARGS="--cgroup-driver=systemd"
KUBE_PROXY_MODE="ipvs"

# Enable kubelet at boot
systemctl enable kubelet

3: Prepare the cluster images

其中images={.....}是直接在控制台输入,点击enter即可,for循环也是一样直接在控制台输入,

# 在安装kubernetes集群之前,必须要提前准备好集群需要的镜像,所需镜像可以通过下面命令查看

kubeadm config images list

# 下载镜像

# 此镜像在kubernetes的仓库中,由于网络原因,无法连接,下面提供了一种替代方案

images=(

kube-apiserver:v1.17.4

kube-controller-manager:v1.17.4

kube-scheduler:v1.17.4

kube-proxy:v1.17.4

pause:3.1

etcd:3.4.3-0

coredns:1.6.5

)

for imageName in ${images[@]} ; do

docker pull registry.cn-hangzhou.aliyuncs.com/google_containers/$imageName

docker tag registry.cn-hangzhou.aliyuncs.com/google_containers/$imageName k8s.gcr.io/$imageName

docker rmi registry.cn-hangzhou.aliyuncs.com/google_containers/$imageName

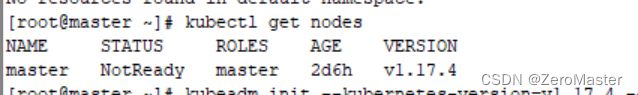

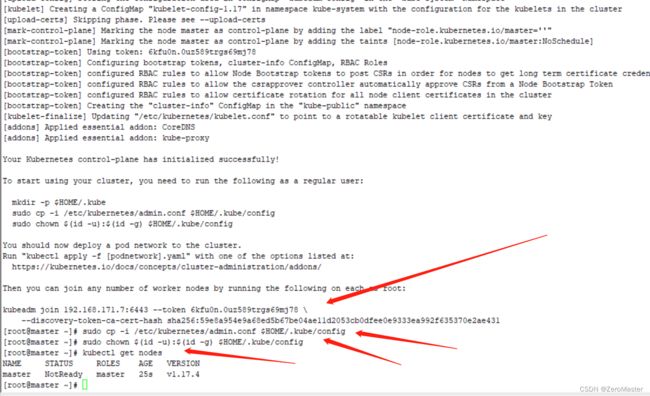

done三:集群初始化

Now initialize the cluster and add the worker nodes to it.

The following commands only need to be run on the master node.

apiserver-advertise-address is the IP address of the master node; change it to match your own master.

# Create the cluster
kubeadm init --kubernetes-version=v1.17.4 --pod-network-cidr=10.244.0.0/16 --service-cidr=10.96.0.0/12 --apiserver-advertise-address=192.168.171.7

# Create the files kubectl needs
mkdir -p $HOME/.kube
sudo cp -i /etc/kubernetes/admin.conf $HOME/.kube/config
sudo chown $(id -u):$(id -g) $HOME/.kube/config

Now run

kubectl get nodes

At this point only the master node is listed; node1 and node2 still have to be added.

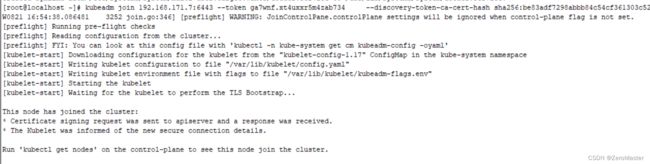

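When the cluster is created, the output includes a kubeadm join command. Copy it.

Run that command on the two worker nodes (the exact command comes from the output on your own machine).

Then run kubectl get nodes on the master node again and all three nodes are listed.

Their status is NotReady, however, because the network plugin has not been installed yet.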

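Part 4: Install the network plugin

Kubernetes supports several network plugins, such as flannel, calico and canal. Any one of them will do; this guide uses flannel.

The following steps are again run only on the master node. The plugin is deployed as a DaemonSet, so it runs on every node.

# Fetch the flannel manifest
wget https://raw.githubusercontent.com/coreos/flannel/master/Documentation/kube-flannel.yml

# Start flannel from the manifest
kubectl apply -f kube-flannel.yml

# Wait a moment, then check the node status again
kubectl get nodes

With that, the Kubernetes cluster environment is set up.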
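Rather than re-running kubectl get nodes by hand while flannel starts, the output can be checked by a small helper. A minimal sketch, assuming the usual column order of `kubectl get nodes --no-headers` (NAME, STATUS, ROLES, AGE, VERSION). The helper name `count_not_ready` and the sample node lines are illustrative.

```shell
#!/usr/bin/env bash
# Sketch only: count_not_ready is a hypothetical helper name.
# Reads `kubectl get nodes --no-headers` text on stdin and prints how many
# nodes have a STATUS column that does not start with "Ready".
count_not_ready() {
  awk 'NF >= 2 && $2 !~ /^Ready/ { n++ } END { print n + 0 }'
}

# Rehearsal with illustrative sample lines instead of a live cluster.
printf '%s\n' \
  'master Ready    master 10m v1.17.4' \
  'node1  NotReady <none> 5m  v1.17.4' \
  'node2  NotReady <none> 5m  v1.17.4' | count_not_ready

# Against a live cluster, a polling loop could look like this:
# until [ "$(kubectl get nodes --no-headers | count_not_ready)" -eq 0 ]; do sleep 5; done
```

The sample input makes the helper print 2, since two of the three lines are NotReady.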

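Note: if the downloaded kube-flannel.yml does not work, look for a version of kube-flannel.yml that matches your cluster.

The contents of the kube-flannel.yml used here are as follows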

---
apiVersion: policy/v1beta1
kind: PodSecurityPolicy
metadata:
  name: psp.flannel.unprivileged
  annotations:
    seccomp.security.alpha.kubernetes.io/allowedProfileNames: docker/default
    seccomp.security.alpha.kubernetes.io/defaultProfileName: docker/default
    apparmor.security.beta.kubernetes.io/allowedProfileNames: runtime/default
    apparmor.security.beta.kubernetes.io/defaultProfileName: runtime/default
spec:
  privileged: false
  volumes:
    - configMap
    - secret
    - emptyDir
    - hostPath
  allowedHostPaths:
    - pathPrefix: "/etc/cni/net.d"
    - pathPrefix: "/etc/kube-flannel"
    - pathPrefix: "/run/flannel"
  readOnlyRootFilesystem: false
  # Users and groups
  runAsUser:
    rule: RunAsAny
  supplementalGroups:
    rule: RunAsAny
  fsGroup:
    rule: RunAsAny
  # Privilege Escalation
  allowPrivilegeEscalation: false
  defaultAllowPrivilegeEscalation: false
  # Capabilities
  allowedCapabilities: ['NET_ADMIN']
  defaultAddCapabilities: []
  requiredDropCapabilities: []
  # Host namespaces
  hostPID: false
  hostIPC: false
  hostNetwork: true
  hostPorts:
    - min: 0
      max: 65535
  # SELinux
  seLinux:
    # SELinux is unused in CaaSP
    rule: 'RunAsAny'

---
kind: ClusterRole
apiVersion: rbac.authorization.k8s.io/v1beta1
metadata:
  name: flannel
rules:
  - apiGroups: ['extensions']
    resources: ['podsecuritypolicies']
    verbs: ['use']
    resourceNames: ['psp.flannel.unprivileged']
  - apiGroups:
      - ""
    resources:
      - pods
    verbs:
      - get
  - apiGroups:
      - ""
    resources:
      - nodes
    verbs:
      - list
      - watch
  - apiGroups:
      - ""
    resources:
      - nodes/status
    verbs:
      - patch

---
kind: ClusterRoleBinding
apiVersion: rbac.authorization.k8s.io/v1beta1
metadata:
  name: flannel
roleRef:
  apiGroup: rbac.authorization.k8s.io
  kind: ClusterRole
  name: flannel
subjects:
  - kind: ServiceAccount
    name: flannel
    namespace: kube-system
---
apiVersion: v1
kind: ServiceAccount
metadata:
  name: flannel
  namespace: kube-system

---
kind: ConfigMap
apiVersion: v1
metadata:
  name: kube-flannel-cfg
  namespace: kube-system
  labels:
    tier: node
    app: flannel
data:
  cni-conf.json: |
    {
      "name": "cbr0",
      "cniVersion": "0.3.1",
      "plugins": [
        {
          "type": "flannel",
          "delegate": {
            "hairpinMode": true,
            "isDefaultGateway": true
          }
        },
        {
          "type": "portmap",
          "capabilities": {
            "portMappings": true
          }
        }
      ]
    }
  net-conf.json: |
    {
      "Network": "10.244.0.0/16",
      "Backend": {
        "Type": "vxlan"
      }
    }

---
apiVersion: apps/v1
kind: DaemonSet
metadata:
  name: kube-flannel-ds-amd64
  namespace: kube-system
  labels:
    tier: node
    app: flannel
spec:
  selector:
    matchLabels:
      app: flannel
  template:
    metadata:
      labels:
        tier: node
        app: flannel
    spec:
      affinity:
        nodeAffinity:
          requiredDuringSchedulingIgnoredDuringExecution:
            nodeSelectorTerms:
              - matchExpressions:
                  - key: kubernetes.io/os
                    operator: In
                    values:
                      - linux
                  - key: kubernetes.io/arch
                    operator: In
                    values:
                      - amd64
      hostNetwork: true
      tolerations:
        - operator: Exists
          effect: NoSchedule
      serviceAccountName: flannel
      initContainers:
        - name: install-cni
          image: registry.cn-zhangjiakou.aliyuncs.com/test-lab/coreos-flannel:amd64
          command:
            - cp
          args:
            - -f
            - /etc/kube-flannel/cni-conf.json
            - /etc/cni/net.d/10-flannel.conflist
          volumeMounts:
            - name: cni
              mountPath: /etc/cni/net.d
            - name: flannel-cfg
              mountPath: /etc/kube-flannel/
      containers:
        - name: kube-flannel
          image: registry.cn-zhangjiakou.aliyuncs.com/test-lab/coreos-flannel:amd64
          command:
            - /opt/bin/flanneld
          args:
            - --ip-masq
            - --kube-subnet-mgr
          resources:
            requests:
              cpu: "100m"
              memory: "50Mi"
            limits:
              cpu: "100m"
              memory: "50Mi"
          securityContext:
            privileged: false
            capabilities:
              add: ["NET_ADMIN"]
          env:
            - name: POD_NAME
              valueFrom:
                fieldRef:
                  fieldPath: metadata.name
            - name: POD_NAMESPACE
              valueFrom:
                fieldRef:
                  fieldPath: metadata.namespace
          volumeMounts:
            - name: run
              mountPath: /run/flannel
            - name: flannel-cfg
              mountPath: /etc/kube-flannel/
      volumes:
        - name: run
          hostPath:
            path: /run/flannel
        - name: cni
          hostPath:
            path: /etc/cni/net.d
        - name: flannel-cfg
          configMap:
            name: kube-flannel-cfg

---
apiVersion: apps/v1
kind: DaemonSet
metadata:
  name: kube-flannel-ds-arm64
  namespace: kube-system
  labels:
    tier: node
    app: flannel
spec:
  selector:
    matchLabels:
      app: flannel
  template:
    metadata:
      labels:
        tier: node
        app: flannel
    spec:
      affinity:
        nodeAffinity:
          requiredDuringSchedulingIgnoredDuringExecution:
            nodeSelectorTerms:
              - matchExpressions:
                  - key: kubernetes.io/os
                    operator: In
                    values:
                      - linux
                  - key: kubernetes.io/arch
                    operator: In
                    values:
                      - arm64
      hostNetwork: true
      tolerations:
        - operator: Exists
          effect: NoSchedule
      serviceAccountName: flannel
      initContainers:
        - name: install-cni
          image: registry.cn-zhangjiakou.aliyuncs.com/test-lab/coreos-flannel:arm64
          command:
            - cp
          args:
            - -f
            - /etc/kube-flannel/cni-conf.json
            - /etc/cni/net.d/10-flannel.conflist
          volumeMounts:
            - name: cni
              mountPath: /etc/cni/net.d
            - name: flannel-cfg
              mountPath: /etc/kube-flannel/
      containers:
        - name: kube-flannel
          image: registry.cn-zhangjiakou.aliyuncs.com/test-lab/coreos-flannel:arm64
          command:
            - /opt/bin/flanneld
          args:
            - --ip-masq
            - --kube-subnet-mgr
          resources:
            requests:
              cpu: "100m"
              memory: "50Mi"
            limits:
              cpu: "100m"
              memory: "50Mi"
          securityContext:
            privileged: false
            capabilities:
              add: ["NET_ADMIN"]
          env:
            - name: POD_NAME
              valueFrom:
                fieldRef:
                  fieldPath: metadata.name
            - name: POD_NAMESPACE
              valueFrom:
                fieldRef:
                  fieldPath: metadata.namespace
          volumeMounts:
            - name: run
              mountPath: /run/flannel
            - name: flannel-cfg
              mountPath: /etc/kube-flannel/
      volumes:
        - name: run
          hostPath:
            path: /run/flannel
        - name: cni
          hostPath:
            path: /etc/cni/net.d
        - name: flannel-cfg
          configMap:
            name: kube-flannel-cfg

---
apiVersion: apps/v1
kind: DaemonSet
metadata:
  name: kube-flannel-ds-arm
  namespace: kube-system
  labels:
    tier: node
    app: flannel
spec:
  selector:
    matchLabels:
      app: flannel
  template:
    metadata:
      labels:
        tier: node
        app: flannel
    spec:
      affinity:
        nodeAffinity:
          requiredDuringSchedulingIgnoredDuringExecution:
            nodeSelectorTerms:
              - matchExpressions:
                  - key: kubernetes.io/os
                    operator: In
                    values:
                      - linux
                  - key: kubernetes.io/arch
                    operator: In
                    values:
                      - arm
      hostNetwork: true
      tolerations:
        - operator: Exists
          effect: NoSchedule
      serviceAccountName: flannel
      initContainers:
        - name: install-cni
          image: registry.cn-zhangjiakou.aliyuncs.com/test-lab/coreos-flannel:arm
          command:
            - cp
          args:
            - -f
            - /etc/kube-flannel/cni-conf.json
            - /etc/cni/net.d/10-flannel.conflist
          volumeMounts:
            - name: cni
              mountPath: /etc/cni/net.d
            - name: flannel-cfg
              mountPath: /etc/kube-flannel/
      containers:
        - name: kube-flannel
          image: registry.cn-zhangjiakou.aliyuncs.com/test-lab/coreos-flannel:arm
          command:
            - /opt/bin/flanneld
          args:
            - --ip-masq
            - --kube-subnet-mgr
          resources:
            requests:
              cpu: "100m"
              memory: "50Mi"
            limits:
              cpu: "100m"
              memory: "50Mi"
          securityContext:
            privileged: false
            capabilities:
              add: ["NET_ADMIN"]
          env:
            - name: POD_NAME
              valueFrom:
                fieldRef:
                  fieldPath: metadata.name
            - name: POD_NAMESPACE
              valueFrom:
                fieldRef:
                  fieldPath: metadata.namespace
          volumeMounts:
            - name: run
              mountPath: /run/flannel
            - name: flannel-cfg
              mountPath: /etc/kube-flannel/
      volumes:
        - name: run
          hostPath:
            path: /run/flannel
        - name: cni
          hostPath:
            path: /etc/cni/net.d
        - name: flannel-cfg
          configMap:
            name: kube-flannel-cfg

---
apiVersion: apps/v1
kind: DaemonSet
metadata:
  name: kube-flannel-ds-ppc64le
  namespace: kube-system
  labels:
    tier: node
    app: flannel
spec:
  selector:
    matchLabels:
      app: flannel
  template:
    metadata:
      labels:
        tier: node
        app: flannel
    spec:
      affinity:
        nodeAffinity:
          requiredDuringSchedulingIgnoredDuringExecution:
            nodeSelectorTerms:
              - matchExpressions:
                  - key: kubernetes.io/os
                    operator: In
                    values:
                      - linux
                  - key: kubernetes.io/arch
                    operator: In
                    values:
                      - ppc64le
      hostNetwork: true
      tolerations:
        - operator: Exists
          effect: NoSchedule
      serviceAccountName: flannel
      initContainers:
        - name: install-cni
          image: registry.cn-zhangjiakou.aliyuncs.com/test-lab/coreos-flannel:ppc64le
          command:
            - cp
          args:
            - -f
            - /etc/kube-flannel/cni-conf.json
            - /etc/cni/net.d/10-flannel.conflist
          volumeMounts:
            - name: cni
              mountPath: /etc/cni/net.d
            - name: flannel-cfg
              mountPath: /etc/kube-flannel/
      containers:
        - name: kube-flannel
          image: registry.cn-zhangjiakou.aliyuncs.com/test-lab/coreos-flannel:ppc64le
          command:
            - /opt/bin/flanneld
          args:
            - --ip-masq
            - --kube-subnet-mgr
          resources:
            requests:
              cpu: "100m"
              memory: "50Mi"
            limits:
              cpu: "100m"
              memory: "50Mi"
          securityContext:
            privileged: false
            capabilities:
              add: ["NET_ADMIN"]
          env:
            - name: POD_NAME
              valueFrom:
                fieldRef:
                  fieldPath: metadata.name
            - name: POD_NAMESPACE
              valueFrom:
                fieldRef:
                  fieldPath: metadata.namespace
          volumeMounts:
            - name: run
              mountPath: /run/flannel
            - name: flannel-cfg
              mountPath: /etc/kube-flannel/
      volumes:
        - name: run
          hostPath:
            path: /run/flannel
        - name: cni
          hostPath:
            path: /etc/cni/net.d
        - name: flannel-cfg
          configMap:
            name: kube-flannel-cfg

---
apiVersion: apps/v1
kind: DaemonSet
metadata:
  name: kube-flannel-ds-s390x
  namespace: kube-system
  labels:
    tier: node
    app: flannel
spec:
  selector:
    matchLabels:
      app: flannel
  template:
    metadata:
      labels:
        tier: node
        app: flannel
    spec:
      affinity:
        nodeAffinity:
          requiredDuringSchedulingIgnoredDuringExecution:
            nodeSelectorTerms:
              - matchExpressions:
                  - key: kubernetes.io/os
                    operator: In
                    values:
                      - linux
                  - key: kubernetes.io/arch
                    operator: In
                    values:
                      - s390x
      hostNetwork: true
      tolerations:
        - operator: Exists
          effect: NoSchedule
      serviceAccountName: flannel
      initContainers:
        - name: install-cni
          image: registry.cn-zhangjiakou.aliyuncs.com/test-lab/coreos-flannel:s390x
          command:
            - cp
          args:
            - -f
            - /etc/kube-flannel/cni-conf.json
            - /etc/cni/net.d/10-flannel.conflist
          volumeMounts:
            - name: cni
              mountPath: /etc/cni/net.d
            - name: flannel-cfg
              mountPath: /etc/kube-flannel/
      containers:
        - name: kube-flannel
          image: registry.cn-zhangjiakou.aliyuncs.com/test-lab/coreos-flannel:s390x
          command:
            - /opt/bin/flanneld
          args:
            - --ip-masq
            - --kube-subnet-mgr
          resources:
            requests:
              cpu: "100m"
              memory: "50Mi"
            limits:
              cpu: "100m"
              memory: "50Mi"
          securityContext:
            privileged: false
            capabilities:
              add: ["NET_ADMIN"]
          env:
            - name: POD_NAME
              valueFrom:
                fieldRef:
                  fieldPath: metadata.name
            - name: POD_NAMESPACE
              valueFrom:
                fieldRef:
                  fieldPath: metadata.namespace
          volumeMounts:
            - name: run
              mountPath: /run/flannel
            - name: flannel-cfg
              mountPath: /etc/kube-flannel/
      volumes:
        - name: run
          hostPath:
            path: /run/flannel
        - name: cni
          hostPath:
            path: /etc/cni/net.d
        - name: flannel-cfg
          configMap:
            name: kube-flannel-cfg
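One detail in this manifest is easy to miss: the "Network" value in net-conf.json (10.244.0.0/16) is the same range that was passed to kubeadm init as --pod-network-cidr. If a different pod CIDR is used, the manifest needs the same change. A minimal sketch of a check, assuming the "Network" key and its value sit on one line of the file. The helper name `flannel_network` and the scratch manifest are illustrative.

```shell
#!/usr/bin/env bash
# Sketch only: flannel_network is a hypothetical helper name.
# Prints the value of the first "Network": "..." entry found in a file.
flannel_network() {
  sed -n 's/.*"Network"[[:space:]]*:[[:space:]]*"\([^"]*\)".*/\1/p' "$1" | head -n 1
}

# Rehearsal on a scratch file holding only the net-conf.json fragment.
manifest="$(mktemp)"
printf '%s\n' \
  '  net-conf.json: |' \
  '    {' \
  '      "Network": "10.244.0.0/16",' \
  '      "Backend": {' \
  '        "Type": "vxlan"' \
  '      }' \
  '    }' > "$manifest"

pod_cidr="10.244.0.0/16"   # the value given to --pod-network-cidr
if [ "$(flannel_network "$manifest")" = "$pod_cidr" ]; then
  echo "pod CIDR matches"
else
  echo "pod CIDR mismatch"
fi
```

Pointing the helper at the real kube-flannel.yml before running kubectl apply gives the same comparison for the file actually being deployed.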

Part 5: Troubleshooting notes

1: I lost the original "kubeadm join" command from when I ran kubeadm init. What now?

Run this on the master node:

kubeadm token create --print-join-command

2: service docker start / systemctl start docker fails with: See "systemctl status docker.service" and "journalctl -xe" for details

The full error message may look like this:

Job for docker.service failed because the control process exited with error code. See "systemctl status docker.service" and "journalctl -xe" for details.

Solution (for the case where the latest rpm was downloaded from the Docker website and installed by hand with yum install **/**/**.rpm). The system log shows:

Apr 3 15:31:11 Docker kernel: bio: create slab at 2

Apr 3 15:31:11 Docker dockerd: time="2018-04-03T15:31:11.835565555+08:00" level=info msg="devmapper: Creating filesystem xfs on device docker-253:1-34265854-base, mkfs args: [-m crc=0,finobt=0 /dev/mapper/docker-253:1-34265854-base]"

Apr 3 15:31:11 Docker dockerd: time="2018-04-03T15:31:11.836336636+08:00" level=info msg="devmapper: Error while creating filesystem xfs on device docker-253:1-34265854-base: exit status 1"

Apr 3 15:31:11 Docker dockerd: time="2018-04-03T15:31:11.836350296+08:00" level=error msg="[graphdriver] prior storage driver devicemapper failed: exit status 1"

Apr 3 15:31:11 Docker dockerd: Error starting daemon: error initializing graphdriver: exit status 1

Apr 3 15:31:11 Docker systemd: docker.service: main process exited, code=exited, status=1/FAILURE

Apr 3 15:31:11 Docker systemd: Failed to start Docker Application Container Engine.

The log points to the cause: the installed mkfs.xfs is too old. Update it:

yum update xfsprogs

Restart the docker service and it starts normally.

3: Worker node node1 reports an error when running kubeadm join: error execution phase preflight: [preflight] Some fatal errors occurred:

W0821 17:29:08.265525 7407 join.go:346] [preflight] WARNING: JoinControlPane.controlPlane settings will be ignored when control-plane flag is not set.

[preflight] Running pre-flight checks

error execution phase preflight: [preflight] Some fatal errors occurred:

[ERROR FileAvailable--etc-kubernetes-kubelet.conf]: /etc/kubernetes/kubelet.conf already exists

[ERROR FileAvailable--etc-kubernetes-bootstrap-kubelet.conf]: /etc/kubernetes/bootstrap-kubelet.conf already exists

[ERROR FileAvailable--etc-kubernetes-pki-ca.crt]: /etc/kubernetes/pki/ca.crt already exists

[preflight] If you know what you are doing, you can make a check non-fatal with `--ignore-preflight-errors=...`

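To see the stack trace of this error execute with --v=5 or higher

Cause: configuration files and services from an earlier run already exist on the node.

Solution:

# Reset kubeadm
kubeadm reset

Then run the kubeadm join command again.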
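The three files named in the error are what the preflight check looks for, so their presence can be tested before attempting the join. A minimal sketch; the helper name `leftover_join_files` and its optional root-directory argument (used here so the demo can run against a scratch directory) are illustrative.

```shell
#!/usr/bin/env bash
# Sketch only: leftover_join_files is a hypothetical helper name.
# Prints each of the three files from the preflight error that exists
# under the given root (default: the real filesystem root).
leftover_join_files() {
  root="${1:-}"
  for f in /etc/kubernetes/kubelet.conf \
           /etc/kubernetes/bootstrap-kubelet.conf \
           /etc/kubernetes/pki/ca.crt; do
    if [ -e "$root$f" ]; then
      echo "$f"
    fi
  done
  return 0
}

# Rehearsal against a scratch directory with two of the three files present.
fake_root="$(mktemp -d)"
mkdir -p "$fake_root/etc/kubernetes/pki"
touch "$fake_root/etc/kubernetes/kubelet.conf" "$fake_root/etc/kubernetes/pki/ca.crt"
leftover_join_files "$fake_root"
```

If the helper prints anything on a worker node, kubeadm reset (add -f to skip the confirmation prompt) clears the old state, after which the kubeadm join command can be run again.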

4: After installing the network plugin, a worker node reports: Unknown desc = failed pulling image "k8s.gcr.io/pause:3.1"

Events:

Type Reason Age From Message

---- ------ ---- ---- -------

Normal Scheduled default-scheduler Successfully assigned kube-system/kube-flannel-ds-amd64-rkb6q to localhost.localdomain

Warning FailedCreatePodSandBox 4m1s (x68 over 39m) kubelet, localhost.localdomain Failed to create pod sandbox: rpc error: code = Unknown desc = failed pulling image "k8s.gcr.io/pause:3.1": Error response from daemon: Get https://k8s.gcr.io/v2/: net/http: TLS handshake timeout

The fix is as follows:

# Inspect the kubelet configuration
$ systemctl status -l kubelet
$ cd /var/lib/kubelet/
$ cp kubeadm-flags.env kubeadm-flags.env.ori

# Change k8s.gcr.io/pause:3.1 to registry.cn-hangzhou.aliyuncs.com/google_containers/pause:3.1, so that the file reads:
$ cat /var/lib/kubelet/kubeadm-flags.env
KUBELET_KUBEADM_ARGS="--cgroup-driver=systemd --network-plugin=cni --pod-infra-container-image=registry.cn-hangzhou.aliyuncs.com/google_containers/pause:3.1"

# Restart the kubelet service
$ systemctl daemon-reload
$ systemctl restart kubelet

5: The server's hostname is still localhost. How do I change it?

Temporarily: sudo hostname new_hostname

Permanently: sudo hostnamectl set-hostname new_hostname, which updates /etc/hostname on CentOS 7. Then edit /etc/hosts (vi /etc/hosts) and change localhost.localdomain to new_hostname where it refers to this machine.

6: The Kubernetes installation fails with Error: docker-ce-cli conflicts with 2:docker-1.13.1-103.git7f2769b.el7.centos.x86_64

Cause: docker was installed earlier and the versions conflict. To fix it:

List the installed docker packages: yum list installed | grep docker

containerd.io.x86_64 1.2.13-3.1.el7 @docker-ce-stable
docker-ce.x86_64 3:19.03.8-3.el7 @docker-ce-stable
docker-ce-cli.x86_64 1:19.03.7-3.el7 @docker-ce-stable

Remove the corresponding packages:

yum -y remove containerd.io.x86_64 docker-ce.x86_64 docker-ce-cli.x86_64
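The package names given to yum remove are the first column of the yum list installed output. A minimal sketch that extracts them, fed here with the three lines shown above; the helper name `installed_docker_packages` is illustrative.

```shell
#!/usr/bin/env bash
# Sketch only: installed_docker_packages is a hypothetical helper name.
# Reads `yum list installed` text on stdin and prints the first column of
# every line that mentions docker or containerd.
installed_docker_packages() {
  awk '/docker|containerd/ { print $1 }'
}

# Rehearsal with the listing from this section instead of a live yum call.
printf '%s\n' \
  'containerd.io.x86_64 1.2.13-3.1.el7 @docker-ce-stable' \
  'docker-ce.x86_64 3:19.03.8-3.el7 @docker-ce-stable' \
  'docker-ce-cli.x86_64 1:19.03.7-3.el7 @docker-ce-stable' | installed_docker_packages
```

On a server the input would come from yum list installed. It is worth reading the printed names before passing them to yum -y remove, since the match is a plain text search.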

Reference: https://www.cnblogs.com/liulj0713/p/12501957.html

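7: [ERROR DirAvailable--var-lib-etcd]: /var/lib/etcd is not empty

Cause: this error appears when initializing a cluster. The /var/lib/etcd directory is not empty because an earlier etcd instance left data there. Either empty the directory (or move it aside as a backup) and run the initialization again, or tell kubeadm to ignore this check and continue. For a more detailed stack trace, add --v=5 or higher to the command.

Solution: append --ignore-preflight-errors=DirAvailable--var-lib-etcd to the command.

For example: kubeadm init --config /root/kubeadm-config.yaml --upload-certs --ignore-preflight-errors=DirAvailable--var-lib-etcd

Reference: https://www.cnblogs.com/yuwen01/p/17438591.html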
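If the old etcd data may still be needed, moving the directory aside is an alternative to ignoring the check. A minimal sketch, assuming no etcd process is still using the directory; the helper name `backup_dir` and the timestamped `.bak-` suffix are illustrative choices, and the demo runs on a scratch directory rather than /var/lib/etcd.

```shell
#!/usr/bin/env bash
# Sketch only: backup_dir is a hypothetical helper name.
# Moves a non-empty directory to <dir>.bak-<timestamp> and prints the new path.
backup_dir() {
  dir="$1"
  # Nothing to do when the directory is absent or already empty.
  [ -d "$dir" ] || return 0
  [ -n "$(ls -A "$dir")" ] || return 0
  backup="$dir.bak-$(date +%Y%m%d%H%M%S)"
  mv "$dir" "$backup" && echo "$backup"
}

# Rehearsal on a scratch directory standing in for /var/lib/etcd.
tmp="$(mktemp -d)"
mkdir "$tmp/etcd"
touch "$tmp/etcd/member"
saved="$(backup_dir "$tmp/etcd")"
echo "moved to: $saved"
```

On the master node the argument would be /var/lib/etcd, run as root before repeating kubeadm init.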

Other errors: "K8S 故障处理经验积累(网络)" (牛牛Blog, CSDN blog)

References:

"K8s集群搭建教程" (ikemorebi, CSDN blog)

"虚拟机部署Kubernetes(K8S)" (生骨大头菜, CSDN blog)

"K8S集群安装与部署(Linux系统)" (Chensay., CSDN blog)