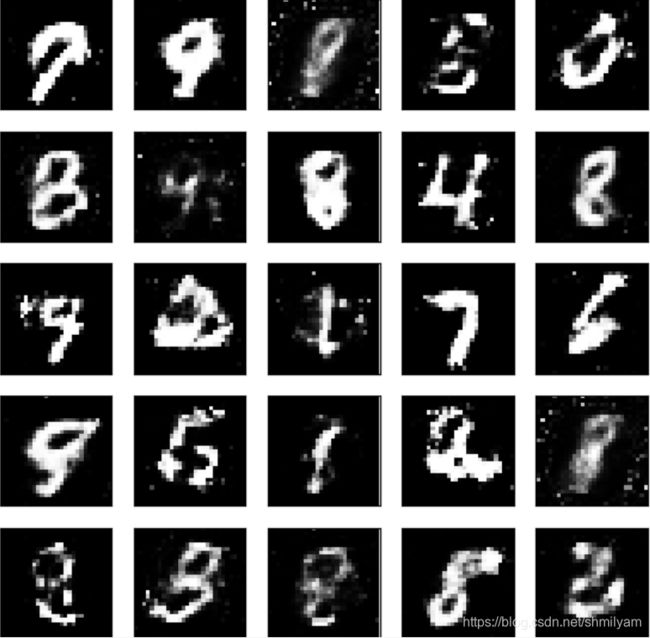

GAN (MNIST Generation)


I. Introduction to GANs

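A generative adversarial network (GAN) is a deep learning model and, in recent years, one of the most promising approaches to unsupervised learning on complex distributions. The framework contains (at least) two modules, a generative model (G) and a discriminative model (D), and it produces good output through the game the two play against each other: G learns to produce samples that look real, while D learns to tell real samples from generated ones. The original GAN theory does not require G and D to be neural networks; any functions that can fit the generation and discrimination mappings will do. In practice, however, deep neural networks are almost always used for both. A good GAN application also needs a sound training procedure, because neural networks are so flexible that a poorly trained GAN can easily produce unsatisfactory output.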
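For reference, the original GAN paper (Goodfellow et al., 2014) formalizes this game as a two-player minimax objective, where $p_{\text{data}}$ is the data distribution, $p_z$ is the noise prior, and $D(\cdot)$ is the probability D assigns to its input being real:

$$
\min_G \max_D V(D, G) = \mathbb{E}_{x \sim p_{\text{data}}}\left[\log D(x)\right] + \mathbb{E}_{z \sim p_z}\left[\log\left(1 - D(G(z))\right)\right]
$$

D maximizes $V$ by giving high probability to real samples and low probability to generated ones, while G minimizes it. In practice G is usually trained to maximize $\log D(G(z))$ instead of minimizing $\log(1 - D(G(z)))$, because the latter gives weak gradients early in training, when D rejects G's samples easily. The training code in this post follows that practice: its `G_loss` is the cross-entropy of D's output on generated images against a "real" target (smoothed to `1 - SMOOTH`).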

II. Problems encountered along the way

When running the code, you may get the following error:

ModuleNotFoundError: No module named 'tensorflow.examples.tutorials'

Solution:

1. Open My Computer, go to …\Python3\Lib\site-packages, and find the tensorflow, tensorflow_core, and tensorflow_estimator folders.

2. Open the tensorflow_core\examples folder and check whether it contains both saved_model and tutorials.

3. If it contains only saved_model and no tutorials, download the missing files from the TensorFlow repository on GitHub: https://github.com/tensorflow/tensorflow

4. In the downloaded tensorflow-master\tensorflow\examples\ folder, find the tutorials folder and copy the whole folder into the …\Python3\Lib\site-packages\tensorflow_core\examples\ directory from step 1.
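To confirm the fix took effect without launching the whole training script, a small check like the one below can help. It is a minimal sketch that uses only the standard library's `importlib.util.find_spec`; the helper name `has_module` is hypothetical. Run it with the same Python interpreter that runs the GAN code.

```python
import importlib.util


def has_module(name):
    """Return True if `name` can be found by the import system.

    find_spec does not execute the target module itself, but for a dotted name
    (e.g. tensorflow.examples.tutorials.mnist) it imports the parent packages
    first, so a missing parent raises ModuleNotFoundError, treated here as
    "not available".
    """
    try:
        return importlib.util.find_spec(name) is not None
    except ModuleNotFoundError:
        return False
```

Usage: once the tutorials folder is in place, `has_module("tensorflow.examples.tutorials.mnist")` should return `True`; if it still returns `False`, the folder was probably copied into a different Python installation's site-packages.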

III. MNIST Generation

The training script. Save it as mnist_GAN.py, because the generation script imports it under that name.

```python
# mnist_GAN.py: trains the GAN (TensorFlow 1.x API: tf.placeholder, tf.Session, tf.layers)
import os

import numpy as np
import tensorflow as tf
from tensorflow.examples.tutorials.mnist import input_data

BATCH_SIZE = 64
UNITS_SIZE = 128
LEARNING_RATE = 0.001
EPOCH = 300
SMOOTH = 0.1

mnist = input_data.read_data_sets('/mnist_data/', one_hot=True)


# Generator model
def generatorModel(noise_img, units_size, out_size, alpha=0.01):
    with tf.variable_scope('generator'):
        FC = tf.layers.dense(noise_img, units_size)
        reLu = tf.nn.leaky_relu(FC, alpha)
        # Note: tf.layers.dropout defaults to training=False, so as written this layer
        # passes its input through unchanged; pass training=True to actually apply dropout
        drop = tf.layers.dropout(reLu, rate=0.2)
        logits = tf.layers.dense(drop, out_size)
        outputs = tf.tanh(logits)  # pixel values in (-1, 1)
        return logits, outputs


# Discriminator model
def discriminatorModel(images, units_size, alpha=0.01, reuse=False):
    with tf.variable_scope('discriminator', reuse=reuse):
        FC = tf.layers.dense(images, units_size)
        reLu = tf.nn.leaky_relu(FC, alpha)
        logits = tf.layers.dense(reLu, 1)
        outputs = tf.sigmoid(logits)  # probability that the input is real
        return logits, outputs


# Loss functions
def loss_function(real_logits, fake_logits, smooth):
    # G wants D to classify its samples as real (target smoothed to 1 - smooth)
    G_loss = tf.reduce_mean(tf.nn.sigmoid_cross_entropy_with_logits(
        logits=fake_logits, labels=tf.ones_like(fake_logits) * (1 - smooth)))
    # D wants generated samples classified as fake (target 0) ...
    fake_loss = tf.reduce_mean(tf.nn.sigmoid_cross_entropy_with_logits(
        logits=fake_logits, labels=tf.zeros_like(fake_logits)))
    # ... and real samples classified as real (smoothed target 1 - smooth)
    real_loss = tf.reduce_mean(tf.nn.sigmoid_cross_entropy_with_logits(
        logits=real_logits, labels=tf.ones_like(real_logits) * (1 - smooth)))
    D_loss = tf.add(fake_loss, real_loss)
    return G_loss, fake_loss, real_loss, D_loss


# Optimizers
def optimizer(G_loss, D_loss, learning_rate):
    train_var = tf.trainable_variables()
    G_var = [var for var in train_var if var.name.startswith('generator')]
    D_var = [var for var in train_var if var.name.startswith('discriminator')]
    # A GAN trains two networks, so G and D each get an optimizer
    # that updates only their own variables
    G_optimizer = tf.train.AdamOptimizer(learning_rate).minimize(G_loss, var_list=G_var)
    D_optimizer = tf.train.AdamOptimizer(learning_rate).minimize(D_loss, var_list=D_var)
    return G_optimizer, D_optimizer


# Training
def train(mnist):
    image_size = mnist.train.images[0].shape[0]  # 784 = 28 * 28
    real_images = tf.placeholder(tf.float32, [None, image_size])
    fake_images = tf.placeholder(tf.float32, [None, image_size])  # generator input noise

    # The generator turns the noise into images G_output
    G_logits, G_output = generatorModel(fake_images, UNITS_SIZE, image_size)
    # D's judgement on real images
    real_logits, real_output = discriminatorModel(real_images, UNITS_SIZE)
    # D's judgement on generated images (reuses D's weights)
    fake_logits, fake_output = discriminatorModel(G_output, UNITS_SIZE, reuse=True)

    # Losses, unpacked in the same order loss_function returns them
    G_loss, fake_loss, real_loss, D_loss = loss_function(real_logits, fake_logits, SMOOTH)
    # Optimizers
    G_optimizer, D_optimizer = optimizer(G_loss, D_loss, LEARNING_RATE)

    saver = tf.train.Saver()
    step = 0
    with tf.Session() as session:
        session.run(tf.global_variables_initializer())
        for epoch in range(EPOCH):
            for batch_i in range(mnist.train.num_examples // BATCH_SIZE):
                batch_image, _ = mnist.train.next_batch(BATCH_SIZE)
                # Scale pixels from [0, 1] to [-1, 1] to match G's tanh output range
                batch_image = batch_image * 2 - 1
                # Input noise for the generator
                noise_image = np.random.uniform(-1, 1, size=(BATCH_SIZE, image_size))
                session.run(G_optimizer, feed_dict={fake_images: noise_image})
                session.run(D_optimizer, feed_dict={real_images: batch_image, fake_images: noise_image})
                step = step + 1

            # Losses on the epoch's last batch: D total, D on real images,
            # D on generated images, and G
            loss_D, loss_real, loss_fake, loss_G = session.run(
                [D_loss, real_loss, fake_loss, G_loss],
                feed_dict={real_images: batch_image, fake_images: noise_image})
            print('epoch:', epoch, 'loss_D:', loss_D, ' loss_real', loss_real,
                  ' loss_fake', loss_fake, ' loss_G', loss_G)

            # Save a checkpoint (mnist.model-<step>) in the current working directory
            model_path = os.getcwd() + os.sep + "mnist.model"
            saver.save(session, model_path, global_step=step)


def main(argv=None):
    train(mnist)


if __name__ == '__main__':
    tf.app.run()
```
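`loss_function` relies on `tf.nn.sigmoid_cross_entropy_with_logits` with smoothed "real" targets of `1 - SMOOTH = 0.9`. The plain-Python sketch below mirrors that arithmetic so loss values can be checked by hand; it is an illustration, not TensorFlow's implementation. It assumes the numerically stable form max(x, 0) - x·z + log(1 + e^(-|x|)) that the TensorFlow documentation gives for this op, and the helper names are hypothetical.

```python
import math


def sigmoid_ce_with_logits(logit, label):
    # Stable sigmoid cross-entropy for one logit x and target z:
    # max(x, 0) - x * z + log(1 + exp(-|x|)), which never overflows for large |x|
    return max(logit, 0.0) - logit * label + math.log1p(math.exp(-abs(logit)))


def mean(values):
    return sum(values) / len(values)


def gan_losses(real_logits, fake_logits, smooth=0.1):
    # Same structure as loss_function; returns (G_loss, fake_loss, real_loss, D_loss)
    g_loss = mean([sigmoid_ce_with_logits(x, 1.0 - smooth) for x in fake_logits])
    fake_loss = mean([sigmoid_ce_with_logits(x, 0.0) for x in fake_logits])
    real_loss = mean([sigmoid_ce_with_logits(x, 1.0 - smooth) for x in real_logits])
    return g_loss, fake_loss, real_loss, fake_loss + real_loss
```

With a target of 0.9, the per-sample loss is smallest at logit log(0.9 / 0.1) = log 9 ≈ 2.20 instead of shrinking forever as the logit grows, so D gains nothing by becoming overconfident on real images; this is the usual motivation for label smoothing.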
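To see the shapes `generatorModel` works with, here is a dependency-free sketch of its forward pass (dense, leaky ReLU, dense, tanh) for a single noise vector. The uniform weight initialization, its 0.05 scale, and the helper names are assumptions made purely for illustration (`tf.layers.dense` uses its own initializers), and dropout is left out because `tf.layers.dropout` defaults to `training=False`.

```python
import math
import random


def leaky_relu(x, alpha=0.01):
    # Same rule as tf.nn.leaky_relu for 0 < alpha < 1: keep x if x >= 0, else scale it by alpha
    return x if x >= 0 else alpha * x


def dense(inputs, weights, biases):
    # Fully connected layer: weights[j][i] connects input i to output unit j
    return [sum(w * x for w, x in zip(row, inputs)) + b for row, b in zip(weights, biases)]


def init_layer(rng, n_in, n_out, scale=0.05):
    # Hypothetical uniform initialization, for illustration only
    weights = [[rng.uniform(-scale, scale) for _ in range(n_in)] for _ in range(n_out)]
    return weights, [0.0] * n_out


def generator_forward(noise, params, alpha=0.01):
    # 784 noise values -> 128 hidden units (leaky ReLU) -> 784 pixels (tanh)
    (w1, b1), (w2, b2) = params
    hidden = [leaky_relu(h, alpha) for h in dense(noise, w1, b1)]
    logits = dense(hidden, w2, b2)
    return logits, [math.tanh(v) for v in logits]


rng = random.Random(0)
image_size, units_size = 784, 128  # 28 * 28 pixels, UNITS_SIZE hidden units
params = [init_layer(rng, image_size, units_size), init_layer(rng, units_size, image_size)]
noise = [rng.uniform(-1, 1) for _ in range(image_size)]  # like np.random.uniform(-1, 1, ...)
logits, image = generator_forward(noise, params)
print(len(image), min(image) >= -1.0, max(image) <= 1.0)  # prints: 784 True True
```

Because every output passes through tanh, generated pixels always lie in [-1, 1], which is why the training loop rescales real MNIST pixels from [0, 1] to the same range before showing them to D.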

The generation script restores the latest checkpoint written by the training script, feeds 25 noise vectors to the generator, and shows the results in a 5×5 grid. Run it from the same directory as the training script.

```python
# Generates 25 digits with the trained generator (TensorFlow 1.x API)
import pickle

import numpy as np
import tensorflow as tf
from matplotlib import pyplot as plt

import mnist_GAN  # the training script, saved as mnist_GAN.py

UNITS_SIZE = mnist_GAN.UNITS_SIZE


def generatorImage(image_size):
    sample_images = tf.placeholder(tf.float32, [None, image_size])
    # Rebuild the generator graph so its variables can be restored from the checkpoint
    G_logits, G_output = mnist_GAN.generatorModel(sample_images, UNITS_SIZE, image_size)
    saver = tf.train.Saver()
    with tf.Session() as session:
        session.run(tf.global_variables_initializer())
        # Load the most recent checkpoint in the current directory
        saver.restore(session, tf.train.latest_checkpoint('.'))
        sample_noise = np.random.uniform(-1, 1, size=(25, image_size))
        samples = session.run(G_output, feed_dict={sample_images: sample_noise})
        with open('samples.pkl', 'wb') as f:
            pickle.dump(samples, f)


def show():
    with open('samples.pkl', 'rb') as f:
        samples = pickle.load(f)
    fig, axes = plt.subplots(figsize=(7, 7), nrows=5, ncols=5, sharey=True, sharex=True)
    for ax, image in zip(axes.flatten(), samples):
        ax.xaxis.set_visible(False)
        ax.yaxis.set_visible(False)
        # Each sample is a flat 784-vector; reshape it to 28x28 for display
        ax.imshow(image.reshape((28, 28)), cmap='Greys_r')
    plt.show()


def main(argv=None):
    image_size = mnist_GAN.mnist.train.images[0].shape[0]
    generatorImage(image_size)
    show()


if __name__ == '__main__':
    tf.app.run()
```
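`show()` relies on `imshow`, which by default normalizes each sample to its own minimum and maximum for display. To write the 5×5 grid to an image file without matplotlib, one option is to map each tanh output back to an 8-bit gray level and tile the 25 flattened 28×28 samples yourself. This is a minimal stdlib-only sketch; the helper names and the choice of the plain-text PGM (P2) format are assumptions for illustration.

```python
def to_gray(value):
    # Map a tanh output in [-1, 1] to a gray level in [0, 255], clipping out-of-range values
    clipped = max(-1.0, min(1.0, float(value)))
    return int(round((clipped + 1.0) * 127.5))


def tile_samples(samples, side=28, rows=5, cols=5):
    # Place rows * cols flattened side * side images into one grid, row by row
    grid = [[0] * (cols * side) for _ in range(rows * side)]
    for index, flat in enumerate(samples[: rows * cols]):
        top, left = (index // cols) * side, (index % cols) * side
        for i, value in enumerate(flat):
            r, c = divmod(i, side)
            grid[top + r][left + c] = to_gray(value)
    return grid


def to_pgm(grid):
    # Serialize the grid as an ASCII (P2) PGM image with maximum gray value 255
    lines = ["P2", f"{len(grid[0])} {len(grid)}", "255"]
    lines += [" ".join(str(v) for v in row) for row in grid]
    return "\n".join(lines) + "\n"
```

Usage, assuming `samples` was loaded from samples.pkl as in `show()`: `with open('samples.pgm', 'w') as f: f.write(to_pgm(tile_samples(samples)))` writes a 140×140 grayscale image.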