Study Notes: Flink DataStream API

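Steps for Developing a Flink Program

Every Flink program is built from the same basic parts:

- Obtain an execution environment

- Create or load the initial data (Source)

- Specify the transformations on this data (Transformation)

- Specify where to put the results of the computation (Sink)

- Trigger program execution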

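Obtaining the Execution Environment

A Flink program first declares an execution environment, which is the context in which the streaming program runs.

// getExecutionEnvironment: creates a local or cluster execution environment, depending on how the program is launched, with the default parallelism

// Batch (DataSet API):

ExecutionEnvironment env = ExecutionEnvironment.getExecutionEnvironment();

// Streaming (DataStream API):

StreamExecutionEnvironment env = StreamExecutionEnvironment.getExecutionEnvironment();

// createLocalEnvironment: returns a local execution environment with the given parallelism

LocalStreamEnvironment env = StreamExecutionEnvironment.createLocalEnvironment(1);

// createRemoteEnvironment: returns a cluster execution environment; pass the JobManager's IP and port, plus the JAR to run on the cluster

StreamExecutionEnvironment env = StreamExecutionEnvironment.createRemoteEnvironment("IP", 6123, "YOURPATH//WordCount.jar");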

Triggering Program Execution

Flink programs are lazily evaluated: the job actually runs only when the execute method is called at the end.

// Execute the job

env.execute();
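To make the deferred-execution idea concrete, here is a toy sketch in plain Java. LazyPipelineSketch and its methods are hypothetical names invented for this illustration and are not part of Flink; the only point is that registering steps does no work until execute is called.

```java
import java.util.ArrayList;
import java.util.List;
import java.util.function.Function;

// Hypothetical toy pipeline (not a Flink class): steps are only recorded
// until execute() is called, mirroring the lazy evaluation described above.
class LazyPipelineSketch {
    private final List<Function<Integer, Integer>> steps = new ArrayList<>();
    private final List<String> log = new ArrayList<>();

    // Registering a step does no work; it only extends the plan.
    LazyPipelineSketch map(String name, Function<Integer, Integer> fn) {
        steps.add(value -> {
            log.add(name); // recorded only when the step really runs
            return fn.apply(value);
        });
        return this;
    }

    // Only here are the recorded steps run, in registration order.
    int execute(int input) {
        int value = input;
        for (Function<Integer, Integer> step : steps) {
            value = step.apply(value);
        }
        return value;
    }

    List<String> log() {
        return log;
    }

    public static void main(String[] args) {
        LazyPipelineSketch pipeline = new LazyPipelineSketch()
                .map("double", x -> x * 2)
                .map("plusOne", x -> x + 1);
        System.out.println("steps run before execute: " + pipeline.log().size());
        System.out.println("result: " + pipeline.execute(5));
        System.out.println("steps run after execute: " + pipeline.log());
    }
}
```

In the same way, calls such as map or print on a DataStream only describe the job; nothing is processed until env.execute() is reached.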

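Source: Data Input

The Sources module handles data ingestion: it brings data from external systems into Flink and converts it into the corresponding DataStream.

Reading Data from a Collection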

package api.source;

import api.pojo.SensorReading;

import org.apache.flink.streaming.api.datastream.DataStream;

import org.apache.flink.streaming.api.environment.StreamExecutionEnvironment;

import java.util.Arrays;

/**

* Read data from a collection

*/

public class Source1_Collection {

public static void main(String[] args) throws Exception {

// Create the execution environment

StreamExecutionEnvironment env = StreamExecutionEnvironment.getExecutionEnvironment();

// Set the parallelism to 1

env.setParallelism(1);

// fromCollection: creates a DataStream from the given collection

DataStream<SensorReading> sensorDataStream = env.fromCollection(

Arrays.asList(

// Fields: ID, timestamp, temperature

new SensorReading("sensor_1", 1547718199L, 35.8),

new SensorReading("sensor_6", 1547718201L, 15.4),

new SensorReading("sensor_7", 1547718202L, 6.7),

new SensorReading("sensor_10", 1547718205L, 38.1)

)

);

sensorDataStream.print("sensor");

// fromElements: creates a DataStream from the given sequence of objects

DataStream<Integer> integerDataStream = env.fromElements(1, 5, 9, 12, 34, 67, 122);

integerDataStream.print("integer");

// generateSequence: creates a DataStream from the numbers in the given range

DataStream<Long> longDataStream = env.generateSequence(1, 5);

longDataStream.print("long");

// Execute the job

env.execute();

}

}
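The example above imports api.pojo.SensorReading, which these notes never show. Below is a minimal sketch of what it might look like, assuming three fields (id, timestamp, temperature) with boxed types, since later examples call toString() on the getters. Flink treats a class as a POJO when it is public, has a public no-argument constructor, and exposes its fields publicly or through getters and setters, which is what lets later examples refer to fields by name, as in keyBy("id").

```java
// Assumed shape of api.pojo.SensorReading (not shown in these notes).
// In a real project it would be declared public in its own file under
// the api.pojo package; both are omitted so the sketch compiles standalone.
class SensorReading {
    private String id;
    private Long timestamp;
    private Double temperature;

    // Flink's POJO rules require a public no-argument constructor.
    public SensorReading() {
    }

    public SensorReading(String id, Long timestamp, Double temperature) {
        this.id = id;
        this.timestamp = timestamp;
        this.temperature = temperature;
    }

    public String getId() {
        return id;
    }

    public void setId(String id) {
        this.id = id;
    }

    public Long getTimestamp() {
        return timestamp;
    }

    public void setTimestamp(Long timestamp) {
        this.timestamp = timestamp;
    }

    public Double getTemperature() {
        return temperature;
    }

    public void setTemperature(Double temperature) {
        this.temperature = temperature;
    }

    @Override
    public String toString() {
        return "SensorReading{id='" + id + "', timestamp=" + timestamp
                + ", temperature=" + temperature + "}";
    }
}
```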

Reading Data from a File

package api.source;

import org.apache.flink.streaming.api.datastream.DataStream;

import org.apache.flink.streaming.api.environment.StreamExecutionEnvironment;

/**

* Read data from a file

*/

public class Source2_File {

public static void main(String[] args) throws Exception {

// Create the execution environment

StreamExecutionEnvironment env = StreamExecutionEnvironment.getExecutionEnvironment();

// Set the parallelism to 1

env.setParallelism(1);

// readTextFile: creates a DataStream by reading the given file line by line

String inputPath = "src/main/resources/sensor.txt";

DataStream<String> sensorDataStream = env.readTextFile(inputPath);

sensorDataStream.print("sensor");

// Execute the job

env.execute();

}

}

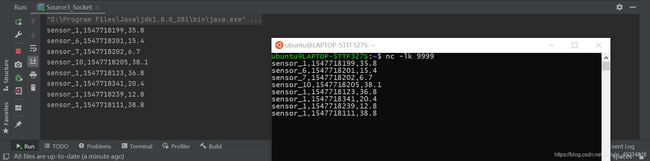

Reading Data from a Socket

package api.source;

import org.apache.flink.streaming.api.datastream.DataStream;

import org.apache.flink.streaming.api.environment.StreamExecutionEnvironment;

/**

* Read data from a socket

*/

public class Source3_Socket {

public static void main(String[] args) throws Exception {

// Create the execution environment

StreamExecutionEnvironment env = StreamExecutionEnvironment.getExecutionEnvironment();

// Set the parallelism to 1

env.setParallelism(1);

// socketTextStream: reads from the given socket host and port; records are delimited by a newline (\n) by default

DataStream<String> inputDataStream = env.socketTextStream("localhost",9999);

inputDataStream.print();

// Use ParameterTool to extract settings from the program's launch arguments

// ParameterTool parameterTool = ParameterTool.fromArgs(args);

// String host = parameterTool.get("host");

// int port = parameterTool.getInt("port");

// DataStream<String> inputDataStream = env.socketTextStream(host, port);

// Execute the job

env.execute();

}

}

Start netcat in a terminal to serve as the input stream:

nc -lk 9999

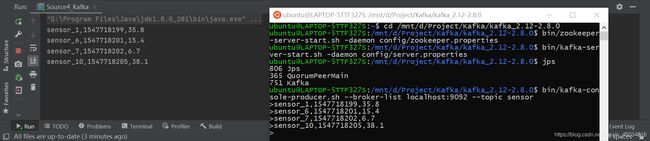

Reading Data from Kafka

Add the dependency:

<dependency>

<groupId>org.apache.flink</groupId>

<artifactId>flink-connector-kafka-0.11_2.12</artifactId>

<version>1.10.1</version>

</dependency>

package api.source;

import org.apache.flink.api.common.serialization.SimpleStringSchema;

import org.apache.flink.streaming.api.datastream.DataStream;

import org.apache.flink.streaming.api.environment.StreamExecutionEnvironment;

import org.apache.flink.streaming.connectors.kafka.FlinkKafkaConsumer011;

import java.util.Properties;

/**

* Read data from Kafka

*/

public class Source4_Kafka {

public static void main(String[] args) throws Exception {

// Create the execution environment

StreamExecutionEnvironment env = StreamExecutionEnvironment.getExecutionEnvironment();

// Set the parallelism to 1

env.setParallelism(1);

// Kafka configuration

Properties properties = new Properties();

// bootstrap.servers: required; a list of host:port pairs used to open the socket connections to the Kafka brokers

properties.setProperty("bootstrap.servers", "localhost:9092");

// The settings below are secondary

properties.setProperty("group.id", "consumer-group");

properties.setProperty("key.deserializer", "org.apache.kafka.common.serialization.StringDeserializer");

properties.setProperty("value.deserializer", "org.apache.kafka.common.serialization.StringDeserializer");

properties.setProperty("auto.offset.reset", "latest");

// addSource: adds an external data source

DataStream<String> inputDataStream = env.addSource(new FlinkKafkaConsumer011<String>("sensor", new SimpleStringSchema(), properties));

inputDataStream.print();

// Execute the job

env.execute();

}

}

Start ZooKeeper and Kafka in the background:

cd /mnt/d/Project/Kafka/kafka_2.12-2.8.0

bin/zookeeper-server-start.sh -daemon config/zookeeper.properties

bin/kafka-server-start.sh -daemon config/server.properties

Start a console producer:

bin/kafka-console-producer.sh --broker-list localhost:9092 --topic sensor

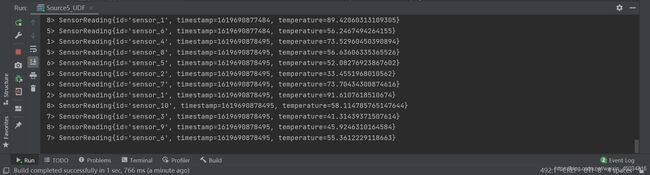

Custom Data Source

package api.source;

import api.pojo.SensorReading;

import org.apache.flink.streaming.api.datastream.DataStream;

import org.apache.flink.streaming.api.environment.StreamExecutionEnvironment;

import org.apache.flink.streaming.api.functions.source.SourceFunction;

import java.util.HashMap;

import java.util.Random;

/**

* Custom data source

*/

public class Source5_UDF {

public static void main(String[] args) throws Exception {

// Create the execution environment

StreamExecutionEnvironment env = StreamExecutionEnvironment.getExecutionEnvironment();

// addSource: adds an external data source

DataStream<SensorReading> inputDataStream = env.addSource(new MySourceFunction());

inputDataStream.print();

// Execute the job

env.execute();

}

// Custom SourceFunction implementation

public static class MySourceFunction implements SourceFunction<SensorReading> {

// Flag that controls data generation; volatile because cancel() is called from a different thread than run()

private volatile boolean running = true;

@Override

public void run(SourceContext<SensorReading> ctx) throws Exception {

// Random number generator

Random random = new Random();

// Set up the names and initial temperatures of 10 sensors

HashMap<String, Double> sensorTemMap = new HashMap<>();

for (int i = 0; i < 10; i++) {

// Draw the initial temperature from a Gaussian distribution with mean 60 and standard deviation 20 (mostly between 0 and 120)

sensorTemMap.put("sensor_" + (i+1), 60 + random.nextGaussian() * 20);

}

// Keep generating data while running is true

while (running) {

for (String sensorID: sensorTemMap.keySet()) {

// Let the temperature fluctuate randomly around its current value

Double newTemp = sensorTemMap.get(sensorID) + random.nextGaussian();

sensorTemMap.put(sensorID, newTemp);

ctx.collect(new SensorReading(sensorID, System.currentTimeMillis(), newTemp));

}

// Throttle the output: emit a new batch once per second

Thread.sleep(1000L);

}

}

@Override

public void cancel() {

// Ends the loop, which cancels the data source

running = false;

}

}

}
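The run/cancel pattern relies on one thread flipping a flag that another thread's loop reads. The following plain-Java sketch (no Flink dependency; CancellableLoopSketch is a hypothetical name) shows the same shape with a volatile flag, which guarantees that the write made by cancel() becomes visible to the looping thread.

```java
import java.util.concurrent.atomic.AtomicInteger;

// Hypothetical stand-in for a source's run()/cancel() pair, without Flink.
class CancellableLoopSketch implements Runnable {
    // volatile: the write in cancel() is guaranteed to be seen by run()
    private volatile boolean running = true;
    private final AtomicInteger emitted = new AtomicInteger();

    @Override
    public void run() {
        while (running) {
            emitted.incrementAndGet(); // stands in for ctx.collect(...)
            try {
                Thread.sleep(10L);
            } catch (InterruptedException e) {
                Thread.currentThread().interrupt();
                return;
            }
        }
    }

    public void cancel() {
        running = false;
    }

    // Starts the loop on a worker thread, cancels it from the caller's
    // thread, and reports whether the worker actually stopped.
    static boolean stopsAfterCancel() {
        CancellableLoopSketch source = new CancellableLoopSketch();
        Thread worker = new Thread(source);
        worker.setDaemon(true);
        worker.start();
        try {
            Thread.sleep(100L);   // let the loop run a few times
            source.cancel();      // called from a different thread
            worker.join(2000L);   // wait for run() to return
        } catch (InterruptedException e) {
            Thread.currentThread().interrupt();
            return false;
        }
        return !worker.isAlive();
    }

    public static void main(String[] args) {
        System.out.println("worker stopped: " + stopsAfterCancel());
    }
}
```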

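Transform: Transformation Operations

The core of data processing is applying transformations to the data; in Flink, a transformation turns one or more DataStreams into a new DataStream.

The examples read their data from the file src/main/resources/sensor.txt: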

sensor_1,1547718199,35.8

sensor_6,1547718201,15.4

sensor_7,1547718202,6.7

sensor_10,1547718205,38.1

sensor_1,1547718123,36.8

sensor_1,1547718341,20.4

sensor_1,1547718239,12.8

sensor_1,1547718111,38.8

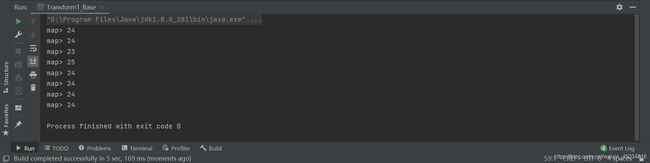
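Each line of this file carries three comma-separated fields: sensor ID, timestamp, and temperature. The map functions in the following sections split on the comma and index the fields directly, which fails with an exception on a blank or malformed line. As a hedged sketch, a slightly more defensive parser could look like this; SensorLineParser and its nested Reading class are hypothetical names used only here.

```java
// Hypothetical helper (not part of the notes' project): parses one line of
// sensor.txt and rejects lines that do not have exactly three fields.
class SensorLineParser {

    static final class Reading {
        final String id;
        final long timestamp;
        final double temperature;

        Reading(String id, long timestamp, double temperature) {
            this.id = id;
            this.timestamp = timestamp;
            this.temperature = temperature;
        }
    }

    static Reading parse(String line) {
        String[] fields = line.split(",");
        if (fields.length != 3) {
            throw new IllegalArgumentException(
                    "expected 3 comma-separated fields but got: " + line);
        }
        // parseLong/parseDouble throw NumberFormatException (a subclass of
        // IllegalArgumentException) when a field is not numeric.
        return new Reading(
                fields[0].trim(),
                Long.parseLong(fields[1].trim()),
                Double.parseDouble(fields[2].trim()));
    }

    public static void main(String[] args) {
        Reading r = parse("sensor_1,1547718199,35.8");
        System.out.println(r.id + " @ " + r.timestamp + " -> " + r.temperature);
    }
}
```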

Basic Transformations: map, flatMap, filter

package api.transform;

import org.apache.flink.api.common.functions.FilterFunction;

import org.apache.flink.api.common.functions.FlatMapFunction;

import org.apache.flink.api.common.functions.MapFunction;

import org.apache.flink.streaming.api.datastream.DataStream;

import org.apache.flink.streaming.api.environment.StreamExecutionEnvironment;

import org.apache.flink.util.Collector;

/**

* Basic transformations: map, flatMap, filter

*/

public class Transform1_Base {

public static void main(String[] args) throws Exception {

// Create the execution environment

StreamExecutionEnvironment env = StreamExecutionEnvironment.getExecutionEnvironment();

// Set the parallelism to 1

env.setParallelism(1);

// Read data from a file

String inputPath = "src/main/resources/sensor.txt";

DataStream<String> inputStream = env.readTextFile(inputPath);

// map: takes one element and returns exactly one transformed element

DataStream<Integer> mapStream = inputStream.map(new MapFunction<String, Integer>() {

@Override

public Integer map(String value) throws Exception {

// Input: a string; output: its length

return value.length();

}

});

mapStream.print("map");

// flatMap: takes one element and returns zero, one, or more transformed elements

DataStream<String> flatMapStream = inputStream.flatMap(new FlatMapFunction<String, String>() {

@Override

public void flatMap(String value, Collector<String> out) throws Exception {

// Input: a string; split it on commas and emit each field separately

String[] fields = value.split(",");

for (String field: fields) {

out.collect(field);

}

}

});

flatMapStream.print("flatMap");

// filter: evaluates a predicate on each element and keeps those for which it returns true

DataStream<String> filterStream = inputStream.filter(new FilterFunction<String>() {

@Override

public boolean filter(String value) throws Exception {

// Keep the records whose ID starts with sensor_1 (this also matches sensor_10)

return value.startsWith("sensor_1");

}

});

filterStream.print("filter");

// Execute the job

env.execute();

}

}

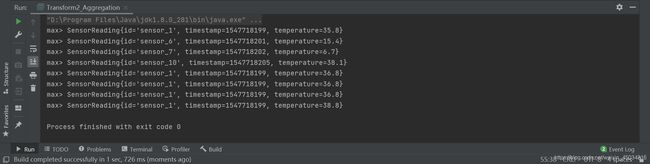

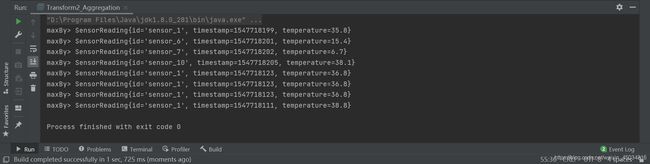

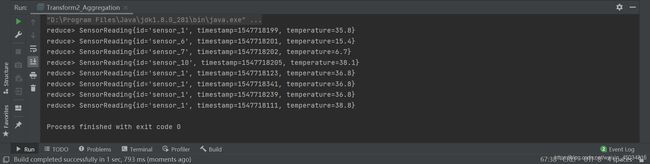
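For readers who know java.util.stream, the three operators behave much like their Stream namesakes. The sketch below applies the same three functions to a few sample lines using plain Java streams; it is an analogy only (BasicTransformSketch is a hypothetical name, and Flink runs these per record on an unbounded stream rather than on a finite list). It also shows how a prefix of "sensor_1," would exclude sensor_10.

```java
import java.util.Arrays;
import java.util.List;
import java.util.stream.Collectors;

// Plain-Java analogy for map / flatMap / filter; not Flink code.
class BasicTransformSketch {
    static final List<String> LINES = Arrays.asList(
            "sensor_1,1547718199,35.8",
            "sensor_7,1547718202,6.7",
            "sensor_10,1547718205,38.1");

    // map: exactly one output per input (here, the line length)
    static List<Integer> lengths(List<String> lines) {
        return lines.stream().map(String::length).collect(Collectors.toList());
    }

    // flatMap: zero or more outputs per input (here, one per field)
    static List<String> fields(List<String> lines) {
        return lines.stream()
                .flatMap(line -> Arrays.stream(line.split(",")))
                .collect(Collectors.toList());
    }

    // filter: keep only the inputs that satisfy the predicate
    static List<String> withPrefix(List<String> lines, String prefix) {
        return lines.stream()
                .filter(line -> line.startsWith(prefix))
                .collect(Collectors.toList());
    }

    public static void main(String[] args) {
        System.out.println("map:     " + lengths(LINES));
        System.out.println("flatMap: " + fields(LINES));
        System.out.println("filter sensor_1:  " + withPrefix(LINES, "sensor_1"));
        System.out.println("filter sensor_1,: " + withPrefix(LINES, "sensor_1,"));
    }
}
```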

Aggregations: keyBy, Rolling Aggregations, reduce

package api.transform;

import api.pojo.SensorReading;

import org.apache.flink.api.common.functions.MapFunction;

import org.apache.flink.api.common.functions.ReduceFunction;

import org.apache.flink.api.java.tuple.Tuple;

import org.apache.flink.streaming.api.datastream.DataStream;

import org.apache.flink.streaming.api.datastream.KeyedStream;

import org.apache.flink.streaming.api.environment.StreamExecutionEnvironment;

/**

* Aggregations: keyBy, rolling aggregations, reduce

*/

public class Transform2_Aggregation {

public static void main(String[] args) throws Exception {

// Create the execution environment

StreamExecutionEnvironment env = StreamExecutionEnvironment.getExecutionEnvironment();

// Set the parallelism to 1

env.setParallelism(1);

// Read data from a file

String inputPath = "src/main/resources/sensor.txt";

DataStream<String> inputStream = env.readTextFile(inputPath);

// Convert each line to a SensorReading

DataStream<SensorReading> dataStream = inputStream.map(new MapFunction<String, SensorReading>() {

@Override

public SensorReading map(String value) throws Exception {

String[] fields = value.split(",");

return new SensorReading(fields[0], Long.valueOf(fields[1]), Double.valueOf(fields[2]));

}

});

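// Lambda equivalent:

// DataStream<SensorReading> dataStream = inputStream.map(line -> {

// String[] fields = line.split(",");

// return new SensorReading(fields[0], Long.valueOf(fields[1]), Double.valueOf(fields[2]));

// });

// keyBy: logically splits the stream into disjoint partitions, each holding the elements that share a key; implemented internally with hash partitioning

KeyedStream<SensorReading, Tuple> keyedStream = dataStream.keyBy("id");

// Alternative forms:

// KeyedStream<SensorReading, String> keyedStream = dataStream.keyBy(data -> data.getId());

// KeyedStream<SensorReading, String> keyedStream = dataStream.keyBy(SensorReading::getId);

// Rolling aggregations: sum, min, max, minBy, maxBy

// max: emits the running maximum of the given field; the other fields keep the values from the first record received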

DataStream<SensorReading> maxStream = keyedStream.max("temperature");

maxStream.print("max");

// maxBy: emits the whole record that holds the running maximum of the given field, so the other fields come from that same record

DataStream<SensorReading> maxByStream = keyedStream.maxBy("temperature");

maxByStream.print("maxBy");

// reduce: combines the current element with the result of the previous reduce

DataStream<SensorReading> reduceStream = keyedStream.reduce(new ReduceFunction<SensorReading>() {

// curData is the previously reduced result, newData is the newest element

@Override

public SensorReading reduce(SensorReading curData, SensorReading newData) throws Exception {

// Take the newest timestamp and the maximum temperature so far

return new SensorReading(curData.getId(), newData.getTimestamp(), Math.max(curData.getTemperature(), newData.getTemperature()));

}

});

reduceStream.print("reduce");

// Execute the job

env.execute();

}

}

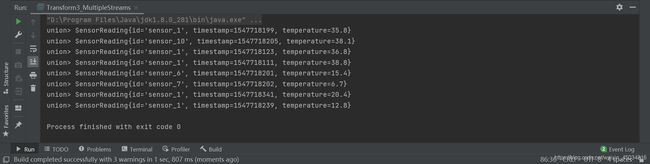
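The difference between max, maxBy, and the custom reduce is easiest to see on concrete records. The sketch below replays the first three sensor_1 lines of sensor.txt through three plain-Java functions that mimic the semantics described in the comments above. It is a simulation under those stated semantics, not Flink code, and RollingAggregationSketch is a hypothetical name.

```java
import java.util.ArrayList;
import java.util.Arrays;
import java.util.List;
import java.util.function.BinaryOperator;

// Plain-Java simulation of max / maxBy / reduce for one key; not Flink code.
class RollingAggregationSketch {

    static final class Reading {
        final String id;
        final long timestamp;
        final double temperature;

        Reading(String id, long timestamp, double temperature) {
            this.id = id;
            this.timestamp = timestamp;
            this.temperature = temperature;
        }

        @Override
        public String toString() {
            return id + "," + timestamp + "," + temperature;
        }
    }

    // First three sensor_1 records, in file order.
    static final List<Reading> SENSOR_1 = Arrays.asList(
            new Reading("sensor_1", 1547718199L, 35.8),
            new Reading("sensor_1", 1547718123L, 36.8),
            new Reading("sensor_1", 1547718341L, 20.4));

    // max: only the aggregated field changes; other fields stay as first seen.
    static Reading max(Reading acc, Reading next) {
        return new Reading(acc.id, acc.timestamp,
                Math.max(acc.temperature, next.temperature));
    }

    // maxBy: the whole record holding the maximum wins (ties keep the earlier).
    static Reading maxBy(Reading acc, Reading next) {
        return next.temperature > acc.temperature ? next : acc;
    }

    // The reduce from the notes: newest timestamp, maximum temperature.
    static Reading latestTimestampMaxTemp(Reading acc, Reading next) {
        return new Reading(acc.id, next.timestamp,
                Math.max(acc.temperature, next.temperature));
    }

    // Emits one result per input record, as a rolling aggregation does.
    static List<Reading> rolling(List<Reading> input, BinaryOperator<Reading> fn) {
        List<Reading> out = new ArrayList<>();
        Reading acc = null;
        for (Reading r : input) {
            acc = (acc == null) ? r : fn.apply(acc, r);
            out.add(acc);
        }
        return out;
    }

    // Convenience: the last emitted result over SENSOR_1.
    static Reading last(BinaryOperator<Reading> fn) {
        List<Reading> out = rolling(SENSOR_1, fn);
        return out.get(out.size() - 1);
    }

    public static void main(String[] args) {
        System.out.println("max:    " + rolling(SENSOR_1, RollingAggregationSketch::max));
        System.out.println("maxBy:  " + rolling(SENSOR_1, RollingAggregationSketch::maxBy));
        System.out.println("reduce: " + rolling(SENSOR_1, RollingAggregationSketch::latestTimestampMaxTemp));
    }
}
```

After the third record all three report a temperature of 36.8, but the timestamp differs: max still carries the first record's timestamp, maxBy carries the timestamp of the record that held the maximum, and the reduce carries the newest one.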

Multi-Stream Transformations: split/select, connect, union

package api.transform;

import api.pojo.SensorReading;

import org.apache.flink.api.common.functions.MapFunction;

import org.apache.flink.api.java.tuple.Tuple2;

import org.apache.flink.api.java.tuple.Tuple3;

import org.apache.flink.streaming.api.collector.selector.OutputSelector;

import org.apache.flink.streaming.api.datastream.ConnectedStreams;

import org.apache.flink.streaming.api.datastream.DataStream;

import org.apache.flink.streaming.api.datastream.SplitStream;

import org.apache.flink.streaming.api.environment.StreamExecutionEnvironment;

import org.apache.flink.streaming.api.functions.co.CoMapFunction;

import java.util.Collections;

/**

* Multi-stream transformations: split/select, connect, union

*/

public class Transform3_MultipleStreams {

public static void main(String[] args) throws Exception {

// Create the execution environment

StreamExecutionEnvironment env = StreamExecutionEnvironment.getExecutionEnvironment();

// Set the parallelism to 1

env.setParallelism(1);

// Read data from a file

String inputPath = "src/main/resources/sensor.txt";

DataStream<String> inputStream = env.readTextFile(inputPath);

// Convert each line to a SensorReading

DataStream<SensorReading> dataStream = inputStream.map(new MapFunction<String, SensorReading>() {

@Override

public SensorReading map(String value) throws Exception {

String[] fields = value.split(",");

return new SensorReading(fields[0], Long.valueOf(fields[1]), Double.valueOf(fields[2]));

}

});

// split: divides the stream into two or more streams by some criterion, tagging each element

SplitStream<SensorReading> splitStream = dataStream.split(new OutputSelector<SensorReading>() {

@Override

public Iterable<String> select(SensorReading value) {

// Split into two streams by temperature, with 30 degrees as the boundary

return (value.getTemperature() > 30) ? Collections.singletonList("high") : Collections.singletonList("low");

}

});

// select: retrieves the stream that carries a given tag

DataStream<SensorReading> highTempStream = splitStream.select("high");

DataStream<SensorReading> lowTempStream = splitStream.select("low");

highTempStream.print("high");

lowTempStream.print("low");

// Convert the high-temperature stream to 2-tuples

DataStream<Tuple2<String, Double>> warningStream = highTempStream.map(new MapFunction<SensorReading, Tuple2<String, Double>>() {

@Override

public Tuple2<String, Double> map(SensorReading value) throws Exception {

return new Tuple2<>(value.getId(), value.getTemperature());

}

});

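// connect: connects two data streams; after connecting, each stream keeps its own data and type unchanged

// Connect the high-temperature tuple stream (warningStream) with the low-temperature stream (lowTempStream)

ConnectedStreams<Tuple2<String, Double>, SensorReading> connectedStreams = warningStream.connect(lowTempStream);

// coMap/coFlatMap: a CoMapFunction passed to map (or a CoFlatMapFunction passed to flatMap) processes each of the two connected streams separately; because the two results have different types, their common supertype Object is used

DataStream<Object> coMapStream = connectedStreams.map(new CoMapFunction<Tuple2<String, Double>, SensorReading, Object>() {

@Override

public Object map1(Tuple2<String, Double> value) throws Exception {

// Handle the first connected stream (high temperature) and produce a 3-tuple: ID, temperature, warning message

return new Tuple3<>(value.f0, value.f1, "high temp warning");

}

@Override

public Object map2(SensorReading value) throws Exception {

// Handle the second connected stream (low temperature) and produce a 2-tuple: ID, normal message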

return new Tuple2<>(value.getId(), "normal");

}

});

coMapStream.print("connect");

// union: merges multiple streams, which must all have the same data type

DataStream<SensorReading> unionStream = highTempStream.union(lowTempStream);

unionStream.print("union");

// Execute the job

env.execute();

}

}

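- connect accepts streams of different data types, but it can only combine two streams.

- union can merge more than two streams, but they must all have the same data type.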

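Rich Functions

Rich functions are a family of function class interfaces provided by the DataStream API; every Flink function class has a Rich variant. Unlike a regular function, a rich function can access the runtime context and has lifecycle methods, so it can implement more complex behavior.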

package api.transform;

import api.pojo.SensorReading;

import org.apache.flink.api.common.functions.MapFunction;

import org.apache.flink.api.common.functions.RichMapFunction;

import org.apache.flink.api.java.tuple.Tuple2;

import org.apache.flink.configuration.Configuration;

import org.apache.flink.streaming.api.datastream.DataStream;

import org.apache.flink.streaming.api.environment.StreamExecutionEnvironment;

/**

* Rich functions (RichFunction)

*/

public class Transform4_RichFunction {

public static void main(String[] args) throws Exception {

// Create the execution environment

StreamExecutionEnvironment env = StreamExecutionEnvironment.getExecutionEnvironment();

// Set the parallelism to 3

env.setParallelism(3);

// Read data from a file

String inputPath = "src/main/resources/sensor.txt";

DataStream<String> inputStream = env.readTextFile(inputPath);

// Convert each line to a SensorReading

DataStream<SensorReading> dataStream = inputStream.map(new MapFunction<String, SensorReading>() {

@Override

public SensorReading map(String value) throws Exception {

String[] fields = value.split(",");

return new SensorReading(fields[0], Long.valueOf(fields[1]), Double.valueOf(fields[2]));

}

});

// RichFunction: access the runtime context

DataStream<Tuple2<String, Integer>> resultStream = dataStream.map(new MyRichMapFunction());

resultStream.print();

// Execute the job

env.execute();

}

// Custom RichMapFunction implementation

public static class MyRichMapFunction extends RichMapFunction<SensorReading, Tuple2<String, Integer>> {

@Override

public Tuple2<String, Integer> map(SensorReading value) throws Exception {

// getRuntimeContext(): exposes information from the function's RuntimeContext, such as the parallelism, the task name, and state; here it supplies the index of the current subtask

return new Tuple2<>(value.getId(), getRuntimeContext().getIndexOfThisSubtask());

}

@Override

public void open(Configuration parameters) throws Exception {

// open(): the initialization method of a RichFunction, typically used to define state or open a database connection

System.out.println("open");

}

@Override

public void close() throws Exception {

// close(): the last method called in the lifecycle, typically used for cleanup such as closing connections and clearing state

System.out.println("close");

}

}

}

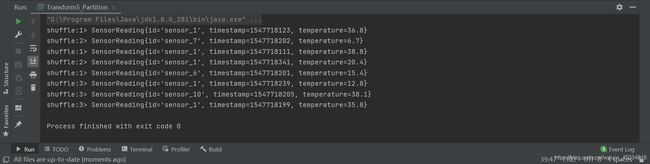

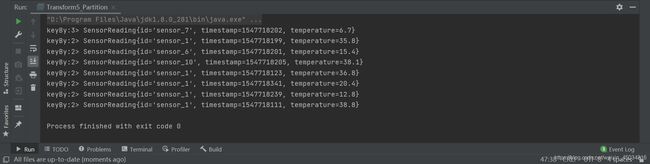

Repartitioning: shuffle, rebalance, global, keyBy

package api.transform;

import api.pojo.SensorReading;

import org.apache.flink.api.common.functions.MapFunction;

import org.apache.flink.streaming.api.datastream.DataStream;

import org.apache.flink.streaming.api.environment.StreamExecutionEnvironment;

/**

* Repartitioning: shuffle, rebalance, global, keyBy

*/

public class Transform5_Partition {

public static void main(String[] args) throws Exception {

// Create the execution environment

StreamExecutionEnvironment env = StreamExecutionEnvironment.getExecutionEnvironment();

// Set the parallelism to 3

env.setParallelism(3);

// Read data from a file

String inputPath = "src/main/resources/sensor.txt";

DataStream<String> inputStream = env.readTextFile(inputPath);

// Convert each line to a SensorReading

DataStream<SensorReading> dataStream = inputStream.map(new MapFunction<String, SensorReading>() {

@Override

public SensorReading map(String value) throws Exception {

String[] fields = value.split(",");

return new SensorReading(fields[0], Long.valueOf(fields[1]), Double.valueOf(fields[2]));

}

});

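// By default, the data is distributed across multiple partitions automatically

dataStream.print("input");

// shuffle: records are distributed randomly across the partitions

dataStream.shuffle().print("shuffle");

// rebalance: records are distributed round-robin across the partitions

dataStream.rebalance().print("rebalance");

// global: all records are sent directly to the first partition

dataStream.global().print("global");

// keyBy: records are routed to a partition by the hash of their key

dataStream.keyBy("id").print("keyBy");

// Execute the job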

env.execute();

}

}
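The four strategies differ only in how a target partition is chosen for each record. The following plain-Java sketch spells out that choice under simplifying assumptions: PartitionerSketch is a hypothetical name, the round-robin counter starts at 0, and keyBy is reduced to a hash modulo the partition count, whereas Flink actually maps keys to key groups with an additional hash step. The property it preserves is the important one: equal keys always land in the same partition.

```java
import java.util.Random;

// Simplified model of how each strategy picks a target partition; not Flink code.
class PartitionerSketch {

    // rebalance: round-robin over the partitions
    static int rebalance(int recordIndex, int partitions) {
        return recordIndex % partitions;
    }

    // shuffle: uniformly random partition
    static int shuffle(Random random, int partitions) {
        return random.nextInt(partitions);
    }

    // global: always the first partition
    static int global() {
        return 0;
    }

    // keyBy (simplified assumption): hash of the key modulo partition count
    static int keyBy(String key, int partitions) {
        return Math.floorMod(key.hashCode(), partitions);
    }

    public static void main(String[] args) {
        String[] ids = {"sensor_1", "sensor_6", "sensor_7", "sensor_10", "sensor_1"};
        Random random = new Random();
        int partitions = 3;
        for (int i = 0; i < ids.length; i++) {
            System.out.println(ids[i]
                    + " rebalance=" + rebalance(i, partitions)
                    + " shuffle=" + shuffle(random, partitions)
                    + " global=" + global()
                    + " keyBy=" + keyBy(ids[i], partitions));
        }
    }
}
```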

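Sink: Data Output

After the transformations, the final results are written out to external storage through the Sink module, for example to a file or to the Kafka message middleware.

Writing Data to Kafka

Add the dependency:

<dependency>

<groupId>org.apache.flink</groupId>

<artifactId>flink-connector-kafka-0.11_2.12</artifactId>

<version>1.10.1</version>

</dependency>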

package api.sink;

import api.pojo.SensorReading;

import org.apache.flink.api.common.functions.MapFunction;

import org.apache.flink.api.common.serialization.SimpleStringSchema;

import org.apache.flink.streaming.api.datastream.DataStream;

import org.apache.flink.streaming.api.environment.StreamExecutionEnvironment;

import org.apache.flink.streaming.connectors.kafka.FlinkKafkaConsumer011;

import org.apache.flink.streaming.connectors.kafka.FlinkKafkaProducer011;

import java.util.Properties;

/**

* Write data to Kafka

*/

public class Sink1_Kafka {

public static void main(String[] args) throws Exception {

// Create the execution environment

StreamExecutionEnvironment env = StreamExecutionEnvironment.getExecutionEnvironment();

// Set the parallelism to 1

env.setParallelism(1);

// Read data from Kafka

Properties properties = new Properties();

properties.setProperty("bootstrap.servers", "localhost:9092");

DataStream<String> inputStream = env.addSource(new FlinkKafkaConsumer011<String>("sensor", new SimpleStringSchema(), properties));

// Parse each line into a SensorReading, then convert it back to a String

DataStream<String> dataStream = inputStream.map(new MapFunction<String, String>() {

@Override

public String map(String value) throws Exception {

String[] fields = value.split(",");

return new SensorReading(fields[0], Long.valueOf(fields[1]), Double.valueOf(fields[2])).toString();

}

});

// addSink: adds a data sink

dataStream.addSink(new FlinkKafkaProducer011<String>("localhost:9092", "sink", new SimpleStringSchema()));

// Execute the job

env.execute();

}

}

Start ZooKeeper and Kafka in the background:

cd /mnt/d/Project/Kafka/kafka_2.12-2.8.0

bin/zookeeper-server-start.sh -daemon config/zookeeper.properties

bin/kafka-server-start.sh -daemon config/server.properties

Start a console producer:

bin/kafka-console-producer.sh --broker-list localhost:9092 --topic sensor

Start a console consumer:

bin/kafka-console-consumer.sh --bootstrap-server localhost:9092 --topic sink

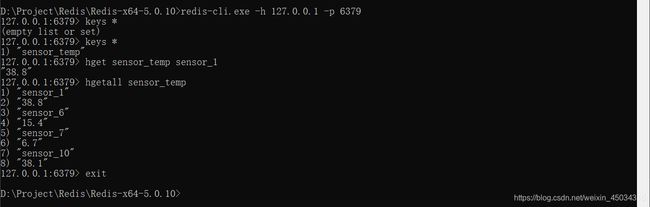

Writing Data to Redis

Add the dependency:

<dependency>

<groupId>org.apache.flink</groupId>

<artifactId>flink-connector-redis_2.11</artifactId>

<version>1.1.5</version>

</dependency>

package api.sink;

import api.pojo.SensorReading;

import org.apache.flink.api.common.functions.MapFunction;

import org.apache.flink.streaming.api.datastream.DataStream;

import org.apache.flink.streaming.api.environment.StreamExecutionEnvironment;

import org.apache.flink.streaming.connectors.redis.RedisSink;

import org.apache.flink.streaming.connectors.redis.common.config.FlinkJedisPoolConfig;

import org.apache.flink.streaming.connectors.redis.common.mapper.RedisCommand;

import org.apache.flink.streaming.connectors.redis.common.mapper.RedisCommandDescription;

import org.apache.flink.streaming.connectors.redis.common.mapper.RedisMapper;

/**

* Write data to Redis

*/

public class Sink2_Redis {

public static void main(String[] args) throws Exception {

// Create the execution environment

StreamExecutionEnvironment env = StreamExecutionEnvironment.getExecutionEnvironment();

// Set the parallelism to 1

env.setParallelism(1);

// Read data from a file

String inputPath = "src/main/resources/sensor.txt";

DataStream<String> inputStream = env.readTextFile(inputPath);

// Convert each line to a SensorReading

DataStream<SensorReading> dataStream = inputStream.map(new MapFunction<String, SensorReading>() {

@Override

public SensorReading map(String value) throws Exception {

String[] fields = value.split(",");

return new SensorReading(fields[0], Long.valueOf(fields[1]), Double.valueOf(fields[2]));

}

});

// Jedis connection settings

FlinkJedisPoolConfig config = new FlinkJedisPoolConfig.Builder()

.setHost("localhost").setPort(6379)

.build();

// addSink: adds a data sink

dataStream.addSink(new RedisSink<>(config, new MyRedisMapper()));

// Execute the job

env.execute();

}

// Custom RedisMapper implementation

public static class MyRedisMapper implements RedisMapper<SensorReading> {

@Override

public RedisCommandDescription getCommandDescription() {

// getCommandDescription(): describes which kind of data is written, such as a list or a hash; here HSET into the hash named sensor_temp

return new RedisCommandDescription(RedisCommand.HSET, "sensor_temp");

}

@Override

public String getKeyFromData(SensorReading data) {

// getKeyFromData(): extracts the key from the input record

return data.getId();

}

@Override

public String getValueFromData(SensorReading data) {

// getValueFromData(): extracts the value from the input record

return data.getTemperature().toString();

}

}

}

Start Redis and connect to it:

cd /d D:\Project\Redis\Redis-x64-5.0.10

redis-server.exe redis.windows.conf

redis-cli.exe -h 127.0.0.1 -p 6379

Inspect the data in Redis:

127.0.0.1:6379> hget sensor_temp sensor_1

127.0.0.1:6379> hgetall sensor_temp

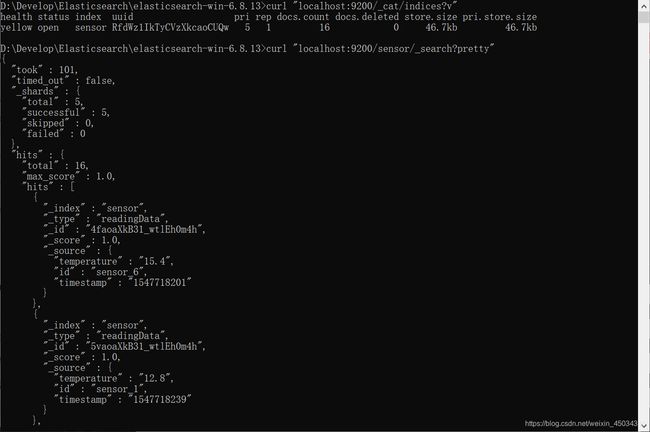
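Because HSET overwrites a hash field that already exists, the eight input records end up as only four fields in sensor_temp, one per sensor ID, each holding the last temperature written. The sketch below models the hash with an in-memory map to show this; RedisHashSketch is a hypothetical name, no Redis client is involved, and it assumes the records arrive in file order (parallelism 1).

```java
import java.util.LinkedHashMap;
import java.util.Map;

// In-memory model of the Redis hash "sensor_temp"; not a Redis client.
class RedisHashSketch {

    // The (id, temperature) pairs of sensor.txt, in file order.
    static final String[][] SAMPLE = {
            {"sensor_1", "35.8"}, {"sensor_6", "15.4"},
            {"sensor_7", "6.7"}, {"sensor_10", "38.1"},
            {"sensor_1", "36.8"}, {"sensor_1", "20.4"},
            {"sensor_1", "12.8"}, {"sensor_1", "38.8"}};

    static Map<String, String> hsetAll(String[][] readings) {
        Map<String, String> hash = new LinkedHashMap<>();
        for (String[] reading : readings) {
            // Like HSET sensor_temp <id> <temperature>: a later write to the
            // same field replaces the earlier value.
            hash.put(reading[0], reading[1]);
        }
        return hash;
    }

    public static void main(String[] args) {
        // Comparable to what HGETALL sensor_temp would list afterwards.
        hsetAll(SAMPLE).forEach((field, value) ->
                System.out.println(field + " -> " + value));
    }
}
```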

Writing Data to Elasticsearch

Add the dependency:

<dependency>

<groupId>org.apache.flink</groupId>

<artifactId>flink-connector-elasticsearch6_2.12</artifactId>

<version>1.10.1</version>

</dependency>

package api.sink;

import api.pojo.SensorReading;

import org.apache.flink.api.common.functions.MapFunction;

import org.apache.flink.api.common.functions.RuntimeContext;

import org.apache.flink.streaming.api.datastream.DataStream;

import org.apache.flink.streaming.api.environment.StreamExecutionEnvironment;

import org.apache.flink.streaming.connectors.elasticsearch.ElasticsearchSinkFunction;

import org.apache.flink.streaming.connectors.elasticsearch.RequestIndexer;

import org.apache.flink.streaming.connectors.elasticsearch6.ElasticsearchSink;

import org.apache.http.HttpHost;

import org.elasticsearch.action.index.IndexRequest;

import org.elasticsearch.client.Requests;

import java.util.ArrayList;

import java.util.HashMap;

/**

* Write data to Elasticsearch

*/

public class Sink3_Elasticsearch {

public static void main(String[] args) throws Exception {

// Create the execution environment

StreamExecutionEnvironment env = StreamExecutionEnvironment.getExecutionEnvironment();

// Set the parallelism to 1

env.setParallelism(1);

// Read data from a file

String inputPath = "src/main/resources/sensor.txt";

DataStream<String> inputStream = env.readTextFile(inputPath);

// Convert each line to a SensorReading

DataStream<SensorReading> dataStream = inputStream.map(new MapFunction<String, SensorReading>() {

@Override

public SensorReading map(String value) throws Exception {

String[] fields = value.split(",");

return new SensorReading(fields[0], Long.valueOf(fields[1]), Double.valueOf(fields[2]));

}

});

// Elasticsearch connection settings

ArrayList<HttpHost> httpHosts = new ArrayList<>();

httpHosts.add(new HttpHost("localhost", 9200));

// addSink: adds a data sink

dataStream.addSink(new ElasticsearchSink.Builder<SensorReading>(httpHosts, new MyEsSinkFunction()).build());

// Execute the job

env.execute();

}

// Custom ElasticsearchSinkFunction implementation

public static class MyEsSinkFunction implements ElasticsearchSinkFunction<SensorReading> {

@Override

public void process(SensorReading element, RuntimeContext ctx, RequestIndexer indexer) {

// Define the fields to write

HashMap<String, String> dataSource = new HashMap<>();

dataSource.put("id", element.getId());

dataSource.put("timestamp", element.getTimestamp().toString());

dataSource.put("temperature", element.getTemperature().toString());

// Build the index request that is sent to Elasticsearch as the write command

IndexRequest request = Requests.indexRequest()

.index("sensor") // index name

.type("readingData") // required for Elasticsearch versions before 7

.source(dataSource); // the data to write

// Submit the request

indexer.add(request);

// System.out.println("info: data saved successfully!");

}

}

}

Start Elasticsearch:

cd /d D:\Project\Elasticsearch\elasticsearch-6.8.13

bin\elasticsearch.bat

Inspect the data in Elasticsearch:

curl "localhost:9200/_cat/indices?v"

curl "localhost:9200/sensor/_search?pretty"

Writing Data to MySQL

Add the dependency:

<dependency>

<groupId>mysql</groupId>

<artifactId>mysql-connector-java</artifactId>

<version>5.1.44</version>

</dependency>

package api.sink;

import api.pojo.SensorReading;

import org.apache.flink.api.common.functions.MapFunction;

import org.apache.flink.configuration.Configuration;

import org.apache.flink.streaming.api.datastream.DataStream;

import org.apache.flink.streaming.api.environment.StreamExecutionEnvironment;

import org.apache.flink.streaming.api.functions.sink.RichSinkFunction;

import java.sql.Connection;

import java.sql.DriverManager;

import java.sql.PreparedStatement;

/**

* Write data to MySQL

*/

public class Sink4_JDBC {

public static void main(String[] args) throws Exception {

// Create the execution environment

StreamExecutionEnvironment env = StreamExecutionEnvironment.getExecutionEnvironment();

// Set the parallelism to 1

env.setParallelism(1);

// Read data from a file

String inputPath = "src/main/resources/sensor.txt";

DataStream<String> inputStream = env.readTextFile(inputPath);

// Convert each line to a SensorReading

DataStream<SensorReading> dataStream = inputStream.map(new MapFunction<String, SensorReading>() {

@Override

public SensorReading map(String value) throws Exception {

String[] fields = value.split(",");

return new SensorReading(fields[0], Long.valueOf(fields[1]), Double.valueOf(fields[2]));

}

});

// addSink: adds a data sink

dataStream.addSink(new MyJdbcSinkFunction());

// Execute the job

env.execute();

}

// Custom SinkFunction implementation

public static class MyJdbcSinkFunction extends RichSinkFunction<SensorReading> {

// Declare the connection and the prepared statements

Connection connection = null;

PreparedStatement insertStmt = null;

PreparedStatement updateStmt = null;

@Override

public void open(Configuration parameters) throws Exception {

// Open the connection

connection = DriverManager.getConnection("jdbc:mysql://localhost:3306/test", "root", "123456");

// Create the prepared statements; the placeholders (?) are filled in with parameters later

insertStmt = connection.prepareStatement("insert into sensor_temp (id, temp) values (?, ?)");

updateStmt = connection.prepareStatement("update sensor_temp set temp = ? where id = ?");

}

@Override

public void invoke(SensorReading value, Context context) throws Exception {

// For each incoming record, run the update statement first

updateStmt.setDouble(1, value.getTemperature()); // set the temp parameter

updateStmt.setString(2, value.getId()); // set the id parameter

updateStmt.execute(); // run the update

// If no row was updated, run the insert statement

if (updateStmt.getUpdateCount() == 0) {

insertStmt.setString(1, value.getId()); // set the id parameter

insertStmt.setDouble(2, value.getTemperature()); // set the temp parameter

insertStmt.execute(); // run the insert

}

}

@Override

public void close() throws Exception {

// Close the prepared statements and the connection

insertStmt.close();

updateStmt.close();

connection.close();

}

}

}

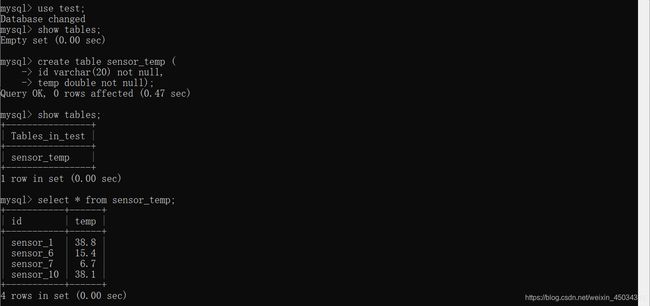

Start the MySQL client:

cd /d D:\Program Files\MySQL\MySQL Server 5.7\bin

mysql -u root -p

Create the table:

mysql> create table sensor_temp (

-> id varchar(20) not null,

-> temp double not null);

Query the table:

mysql> select * from sensor_temp;