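- iOS: fixing images that come out less sharp than the original after cropping during drawing

Cao_Shixin攻城狮

iOS development, iOS, image, cropping, blur issue

During development we all, to some degree, end up "compressing" and "shrinking" images. For "compressing" we generally use UIImageJPEGRepresentation: `NSData *data = UIImageJPEGRepresentation(image, compression); UIImage *resultImage = [UIImage imageWithData:data];` The variations amount to little more than: 1. add a for loop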

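- Binary search in Python (beginner-friendly and easy to follow)

dlage

python, list, python, data structures

Binary search in Python. Binary search is also known as half-interval search. Advantages: fast lookups and good performance. Disadvantage: the table being searched must be sorted. Principle: compare the number at the middle position (mid) of the table with the number being searched for (data). If they are equal, return true and stop. If they are not equal, use the middle record to split the table into a front sub-table and a back sub-table. If data > mid, continue searching the back sub-table. If data [text lost in extraction] `data: last = mid - 1 elif alist[mid]`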
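The procedure described in the teaser above (compare with the middle element, then keep only the half that can still contain the target) can be sketched as follows. This is a minimal illustration, not the article's own code, which is truncated here. The names `alist`, `mid`, and `last` follow the visible fragment; `binary_search`, `first`, and the sample data are assumptions added for the example.

```python
def binary_search(alist, data):
    """Return True if data occurs in the sorted list alist, else False."""
    first, last = 0, len(alist) - 1
    while first <= last:
        mid = (first + last) // 2       # middle position of the current range
        if alist[mid] == data:
            return True                 # found: stop
        elif alist[mid] > data:
            last = mid - 1              # target can only be in the front half
        else:
            first = mid + 1             # target can only be in the back half
    return False                        # range is empty: not present


sorted_numbers = [1, 3, 5, 7, 9, 11]    # hypothetical sample data
print(binary_search(sorted_numbers, 7))
print(binary_search(sorted_numbers, 4))
```

Each iteration halves the search range, so a sorted list of n items needs at most about log2(n) comparisons. The input must already be sorted, as the teaser notes.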

- Ubuntu从零创建Hadoop集群

爱编程的王小美

大数据专业知识系列ubuntuhadooplinux

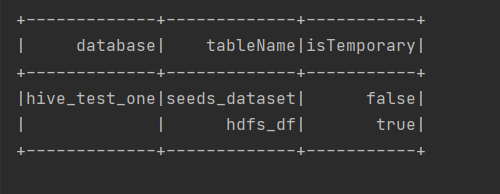

目录前言前提准备1.设置网关和网段2.查看虚拟机IP及检查网络3.Ubuntu相关配置镜像源配置下载vim编辑器4.设置静态IP和SSH免密(可选)设置静态IPSSH免密5.JDK环境部署6.Hadoop环境部署7.配置Hadoop配置文件HDFS集群规划HDFS集群配置1.配置works文件2.配置hadoop-env.sh文件3.配置core-site.xml文件4.配置hdfs-site.x

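- What the META-INF folder is for

杏花春雨江南

Java basics, pycharm, ide, python

The META-INF folder is a special directory in Java applications and libraries, normally used to hold metadata and configuration files. It is part of the Java standard, and the Java virtual machine and related tools recognize and process specific files in it. Common uses of the META-INF folder: 1. Holding the manifest file (MANIFEST.MF). Purpose: MANIFEST.MF is the metadata file of a Java JAR file and describes the JAR's contents and attributes. Common uses: specifying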

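- C# dotnet core: developing cross-platform desktop applications (based on GTK+ 3.0)

xingyun86

C++, C#, .netcore

1. Development environment on Windows: Visual Studio 2019. 2. Create a new C# cross-platform application. 3. Right-click the solution and install the Gtk3 dependency via NuGet. 4. Write the code. 5. Build and run (the build succeeds and you are prompted to download gtk-3.24.zip). Download gtk-3.24.zip yourself; once downloaded, unzip it into C:\Users\<login user>\AppData\Local\Gtk\3.24\. 6. Screenshot of the running application. 7. Publish to Linux: right-click the project -> publish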

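- Vue series: fundamentals

程序员SKY

VUE, vue.js

What is MVVM? MVVM (Model-View-ViewModel) is a software design pattern that separates an application's data model (Model) from its view layer (View) and uses a ViewModel to handle communication between them, which reduces coupling in the code. The Model represents the data model, the part of the application that handles data. In Vue.js, the Model usually means the object returned by a component's data function, or the data property of a Vue instance. The View is the user interface, where the user and the application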

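- Introduction to ODX (Open Diagnostic Data Exchange)

aFakeProgramer

AP, AUTOSAR, #ODX

ODX (Open Diagnostic Data Exchange) is an open standard defined by ASAM for describing and exchanging ECU (electronic control unit) diagnostic data, and it is widely used in vehicle diagnostics. ODX files use XML and contain communication parameters such as the ISO 15765-2/3 timing parameters. ASAM (Association for Standardisation of Automation and Measuring Systems). Structure of an ODX file: the structure of an ODX file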

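- TCP and UDP: the transport-layer protocols you should know

Evaporator Core

Network engineer, tcp/ip, udp, networking

Part 1: Introduction and protocol overview. In the grand architecture of Internet communication, the Transmission Control Protocol (TCP) and the User Datagram Protocol (UDP) are like two bright stars, each playing an indispensable role. As the two pillars of the transport layer, they form the foundation of modern Internet communication. This article examines the mechanisms, characteristics, and use cases of TCP and UDP and the differences between them, to give readers a comprehensive and in-depth framework for understanding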

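- SQLServer Chapter 1: Getting to know SQLServer (头哥 EDUCODER)

海无极

sqlserver, database

Playful version (if you would rather skip it, see the quick version below): after creating the lab environment in the first exercise, paste in the lines below one at a time, pressing Enter after each, then simply click Submit on every exercise. `sqlcmd -S localhost -U sa -P ''` `create database TestDb` `create database MyDb` `go` `use TestDb` `CREATE TABLE t_emp(id INT, name VARCHAR(32), deptId INT, salary FLOAT)` `go` `use MyDb`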

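- Using ElasticSearch in nodejs (1): installation and usage

konglong127

nodejs, elasticsearch, big data, search engine

Install ElasticSearch and Kibana with docker. 1) Create the data folder and its subfolders, which persist the ElasticSearch and Kibana data. 2) Create the elasticsearch configuration file data/elasticsearch/config/elasticsearch.yml in advance: `#======================== Elasticsearch Configuration ====`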

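- Working with Excel from Java

m0_67244960

Java basics, java, excel, python

1. Add the dependency: com.alibaba, easyexcel, 3.1.3. 2. Write the entity class and map the Excel columns with annotations: `@Data public class Excel { @ExcelProperty("用户名") @ColumnWidth(20) private String name; @ExcelProperty("性别") @ColumnWidth(20) private String sex; }` 3. Write Java code to Excel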

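- Full-vehicle scanning based on ODX/OTX diagnostics

[account deactivated]

Softing, automotive diagnostics, tester, vehicle diagnostics, diagnostic tool, automotive electronics, ODX, OTX

ODX (Open Diagnostic data eXchange) is an open, XML-based diagnostic data format used to exchange diagnostic data throughout a vehicle's life cycle. It was first proposed by ASAM and standardized as MCD-2D, and later became an ISO standard, ISO 22901-1, based on ODX 2.2.0. OTX (Open Test sequence eXchange) is an open, XML-based exchange format for test sequences, used to describe requirements ranging from basic functional tests to complete test applications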

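- A JDK living fossil revived: a rescue guide for setStream() that will make you want to hold a memorial service for Applet

筱涵哥

Java basics for beginners, java

I. A scene out of time: when I tried to send 2024's data through an Applet. 1.1 An SOS from the Jurassic. "Rework this 2003 data-collection Applet so it can talk to the new system!" Looking at the JSON data to be transmitted, I could almost hear the hard disk wailing: "I just can't do it!" 1.2 The modern programmer's overwhelming advantage: `// when trying to transmit JSON data` `try { InputStream jsonStream = new ByteArrayInputStream("{\"data\":1}".ge`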

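- The ODX architecture development process

芊言凝语

architecture

I. Introduction. With the rapid development of automotive electronics, diagnostic data plays an increasingly prominent role in vehicle fault diagnosis and maintenance. ODX (Open Diagnostic Data Exchange) is a standardized diagnostic data format, and its development architecture is essential to building an efficient and accurate diagnostic system. This article describes the ODX architecture development process in detail, covering every stage from requirements analysis to final deployment, to help developers better understand and carry out this complex process. II. Overview of the ODX architecture. ODX is an XML-based open diagnostic data exchange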

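- Huawei Datacom certification explained: the path from HCIA to HCIE

武汉誉天

誉天 news, Huawei

Huawei's Datacom (Data Communication) curriculum is one of the core tracks of the Huawei certification system. It focuses on enterprise network communication and data communication technology and suits people who work in network planning, deployment, and operations. I. The Datacom curriculum. Huawei Datacom certification has three levels, each building on the last, covering the full skill set from networking fundamentals to architecture design. HCIA-Datacom: Huawei Certified Datacom Engineer (entry level), covering basic network setup and operations. HCIP-Datacom: Huawei Certified Datacom Senior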

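- Latest long-form interview with DeepMind's principal scientist: "slow thinking" models bring a big jump in capability!

datawhale

Shared by Datawhale. Interview: Jack Rae; compiled by: 数字开物. On February 25, Jack Rae, principal scientist at Google DeepMind, gave an interview discussing the development of Google's thinking models in depth. Jack Rae said that reasoning models are a new paradigm in AI development: rather than aiming for an instant response, they improve answer quality by spending more thinking time at inference. This leads to a new scaling law, and the "slow thinking" mode is an effective way to improve AI performance. Jack Rae believes that long context, for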

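- Python code for a support neural network that classifies iris flowers

邀_灼灼其华

Machine learning and probability/statistics, python, neural networks, classification, sklearn

1. Import the support vector machine model and split the dataset: `from sklearn import datasets` `from sklearn import svm` `iris = datasets.load_iris()` `iris_x = iris.data` `iris_y = iris.target` `indices = np.random.permutation(len(iris_x))` `iris_x_train = iris_x[indices[:-10]]` `iri`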

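- Installing mysql 8.0.12: an illustrated installation and configuration tutorial

梦醒马亡

Notes on downloading and installing mysql 8.0.12, shared here. Download: download as shown in the figure, then unzip the package into any folder; here it is unzipped to drive E. Installation: 1. After unzipping you have E:\mysql\mysql-8.0.12-winx64; create an empty folder named data inside it (skip this step if the folder already exists). 2. Create a my.ini file, open it in Notepad, and paste in the following: `[mysqld]` `# use port 3306` `port=3306` `# set the mysql installation directory` `bas`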

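- MySQL notes

党和人民

notes, mysql

Using a database. Main functions: querying data (SELECT): retrieve data from one or more tables. Inserting data (INSERT): add new records to a table. Updating data (UPDATE): modify existing records. Deleting data (DELETE): remove records. Defining database structure (CREATE, DROP): create, modify, or delete database objects (such as tables and indexes). Creating a database: a database is created with an SQL statement, usually create database. Common data types: integer (int): used for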

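- Building neural networks with sklearn (improved)

邪恶的贝利亚

neural networks, sklearn, machine learning

1. Data preprocessing. 1. Missing values: `import pandas as pd` `# assume we have a DataFrame df` `print(df.isnull().sum())  # count the missing values in each column` Numeric data: `from sklearn.impute import SimpleImputer` `# for numeric data, fill with the mean` `imputer = SimpleImputer(strategy='mean')  # options: 'mean', 'median', '`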

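- With el-tabs, how to select a tab by default using a value passed from another page

冷冷清清中的风风火火

notes, front end, vue, vue.js, javascript, front end

To select a particular el-tab by default using a value passed from another page, check and adjust the code as follows. Method 1: parent-child component communication (using the .sync modifier). In the parent component, bind activeName with the .sync modifier and make sure the el-tabs v-model is bound correctly: `export default { data() { return { activeName: '1' // default value }; } };` In the child component, emit the update:activeName event when a change is needed: `thi`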

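- JD.com Hive SQL interview questions in practice: APP path analysis explained, with a humorous survival guide

数据大包哥

#Big-tech SQL interview guide, hive, sql, hadoop

"A data engineer's ultimate romance is turning user paths into poetry, rhymed in HiveSQL." (an SQL poet who prefers to remain anonymous) I. Background: a real requirement from JD.com. Suppose you are a data engineer on the JD.com APP and need to analyze the characteristics of users' access paths in the APP. The raw log table user_behavior is structured as follows. Field name / type / description: user_id, BIGINT, user ID (anonymized); session_id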

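- Tencent SQL interview question explained: how to find stocks that rose more than 5% on 5 consecutive days

数据大包哥

#Big-tech SQL interview guide, sql, big data, database

Author: a data engineer with seven years of experience | February 23, 2025. Keywords: SQL window functions, consecutive-run problems, stock analysis, Tencent interview questions. I. Background and breakdown of the difficulties. In quantitative stock analysis, "N consecutive days meeting a condition" is a frequently asked type of interview question. This one asks you to select, from the single table stock_data, the stocks whose daily gain was ≥ 5% for 5 or more consecutive days, and to output the number of consecutive days and the start and end dates. The core difficulties are: computing the gain, which requires a time window function to obtain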

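- [Huawei certification] HCIA-DATACOM tech sharing: STP spanning tree basics lab, entry-level manual (1)

最铁头的网工

HCIA, Huawei certification, networking, python, stp, network communication, network protocols

Contents: 1.1 Lab 2: spanning tree basics; 1.1.1 Lab introduction; 1.1.1.1 About this lab; 1.1.1.2 Lab objectives; 1.1.1.3 Lab topology; 1.1.1.4 Lab background; 1.1.2 Lab task configuration; 1.1.2.1 Configuration approach; 1.1.2.2 Configuration steps: Step 1, shut down unused interfaces; Step 2, configure the devices to run STP; Step 3, modify device parameters so that S1 becomes the root bridge and S2 the backup root bridge; Step 4, modify device parameters so that the GigabitEthernet0/0/2 interface of S3 becomes the root port; 1.1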

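- Completion TLP: CplD and Cpl

昇柱

fpga development

| Term | Definition | Purpose | Characteristics |
| --- | --- | --- | --- |
| CplD | Completion transaction layer packet with data (Completion with Data) | Responds to a read request (Read Request) by returning the requested data to the initiating device (Requester). | Contains the requested data and related status information, ensuring the integrity and reliability of the data transfer. |
| Cpl | Completion transaction layer packet without data (Completion without Data) | Responds to a write request (Write Request) or another operation that does not need to return data, confirming that the operation completed. | Does not carry |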

- Fixing: cannot import name 'layers' from 'paddle.fluid. and No module named 'paddle.fluid.dataloader'

halonaQZ

paddle, deep learning, artificial intelligence

Problem 1: Solution 1: change `from paddle.fluid.dygraph import layers` to `from paddle.nn.layer import layers`. Problem 2: Solution 2: change `from paddle.fluid.dataloader.collate import default_collate_fn` to `from paddle.io.dataloader.collate import def`

- A detailed look at the httpx module in Python

skydust1979

python

Import httpx: `In [25]: import httpx` Fetch a web page: `In [26]: r = httpx.get("https://httpbin.org/get")` `In [27]: r` `Out[27]:` Likewise, send an HTTP POST request: `In [28]: r = httpx.post("https://httpbin.org/post", data={"key": "value"})` `In [29]:`

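- html: the difference between data-src and src, and lazy loading with the img data-src attribute

薄辉

html, difference between data-src and src

I. What is image lazy loading? When a page is visited, the path of each img element (or the background image of another element) is first replaced with the path of a single 1*1px image, so only one request is needed. The real image path is set, and the image displayed, only when the image enters the browser's visible area. That is image lazy loading. Put more simply: 1. Create a custom attribute data-src that holds the path of the image that really needs to be shown, while the img element's own src holds the path of a 1*1px image. 2. When the page is scrolled until the image appears in the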

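- Writing a grid trading strategy in Python

一曲歌长安

python, data analysis, data mining, programming languages, machine learning

The Python code for a grid trading strategy looks roughly like this. Import the required library: `import pandas as pd` Load the data: `data = pd.read_csv("data.csv")` Define a function that computes the optimal buy and sell prices: `def calculate_optimal_buy_sell_price(data, grid_size):` `# compute the lowest and highest price` `low_price = data['low'].min()` `high_price = data['`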

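- Database review

initial- - -

database, database

Chapter 1. Introduction. I. Four basic database concepts. 1. Data: symbolic records that describe things. 2. Database (DB): (1) Definition: a database is a large collection of data that is stored in a computer over the long term, organized, and shareable. (2) Three basic characteristics: permanently stored, organized, and shareable. (3) The basic object stored in a database: data. 3. Database management system (DBMS): (1) Definition: a layer of data management software that sits between the user and the operating system. Like the operating system, it is foundational software. (2) Main functions: 1) data definition; 2) data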

- Java class loading order

3213213333332132

java

package com.demo;

/**

* @Description Class loading order

* @author FuJianyong

* 2015-2-6 11:21:37 AM

*/

public class ClassLoaderSequence {

String s1 = "成员属性";

static String s2 = "

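- Comparing Hibernate and MyBatis

BlueSkator

sql, Hibernate, framework, ibatis, orm

Chapter 1: Hibernate and MyBatis

Hibernate is currently the most popular O/R mapping framework. It originated on sf.net and is now part of JBoss. MyBatis is another excellent O/R mapping framework and is currently an Apache subproject.

MyBatis reference material, official site: http: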

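- Sorting multidimensional arrays in PHP, with real-world uses

dcj3sjt126com

PHP, usort, uasort

When a custom sort function returns false or a negative number, the first argument should be placed before the second; a positive number or true means the opposite; 0 means the two are equal. usort does not preserve keys; uasort preserves keys; uksort sorts by key.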

<!doctype html>

<html lang="en">

<head>

<meta charset="utf-8"
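The comparator convention described above (negative: first argument sorts first; positive: it sorts after; 0: equal) is not specific to PHP. As a rough analogue only, and not the article's PHP code, here is a Python sketch using `functools.cmp_to_key`. The sample records and the names `by_age` and `scores` are hypothetical.

```python
from functools import cmp_to_key


def by_age(a, b):
    """Comparator: negative puts a first, positive puts b first, 0 means equal."""
    return a["age"] - b["age"]


# Hypothetical multidimensional data: a list of records.
people = [
    {"name": "Li", "age": 31},
    {"name": "Wang", "age": 24},
    {"name": "Zhao", "age": 28},
]

# Like usort: the result is re-indexed from 0; the original positions are gone.
sorted_people = sorted(people, key=cmp_to_key(by_age))
print([p["name"] for p in sorted_people])

# Like uasort: sort by value but keep each key attached to its value.
scores = {"b": 3, "a": 1, "c": 2}
by_value = dict(sorted(scores.items(), key=cmp_to_key(lambda x, y: x[1] - y[1])))

# Like uksort: sort by key.
by_key = dict(sorted(scores.items(), key=cmp_to_key(lambda x, y: (x[0] > y[0]) - (x[0] < y[0]))))
print(by_value, by_key)
```

Returning an integer difference keeps the three cases (before, after, equal) explicit, which a boolean return value cannot express.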

- Changing font size through the DOM

周华华

front end

<!DOCTYPE html PUBLIC "-//W3C//DTD XHTML 1.0 Transitional//EN" "http://www.w3.org/TR/xhtml1/DTD/xhtml1-transitional.dtd">

<html xmlns="http://www.w3.org/1999/xhtml"

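- Configuring c3p0

g21121

c3p0

c3p0 is an open-source JDBC connection pool. It implements data source and JNDI binding and supports the JDBC3 specification and the JDBC2 standard extensions. The latest version of c3p0 can be downloaded from http://sourceforge.net/projects/c3p0/.

Taking the dataSource configuration in Spring as an example:

<!-- Spring loads the resource file -->

<bean name="prope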

- Several ways to get the project path in Java

510888780

java

Method 1:

File f = new File(this.getClass().getResource("/").getPath());

System.out.println(f);

Result:

C:\Documents%20and%20Settings\Administrator\workspace\projectName\bin

This gets the project path of the current class;

If you do not add "

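- Passwordless SSH login to a server on Unix-like systems

Harry642

passwordless, ssh

1. Client machine

(1) Run ssh-keygen -t rsa -C "xxxxx@xxxxx.com" to generate the key pair; xxx is an email address of your choosing

(2) Run scp ~/.ssh/id_rsa.pub root@xxxxxxxxx:/tmp to copy the public key to the server; xxx is the server address

(3) Run cat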

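- 30 basic concepts for Java beginners, part 1

aijuans

java, java basics, beginner

When learning Java, a firm grasp of the basic concepts matters whether we are studying J2SE, J2EE, or J2ME. J2SE is the foundation of Java, so it is worth summarizing its basic concepts to help everyone better understand the essence of Java later on. I have collected 30 basic concepts here. Java overview: Java is currently used mainly for developing middleware, which handles communication between clients and servers. Early practice showed that Java is not suited to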

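- A brief introduction to Memcached for Windows

antlove

java, Web, windows, cache, memcached

1. Install the memcached server

a. Download memcached-1.2.6-win32-bin.zip

b. Unzip it, switch the DOS window to the directory containing memcached.exe, and run memcached.exe -d install

c. Start the memcached server by typing the following directly in the DOS window: net start "memcached Server"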

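- Database objects: views and indexes

百合不是茶

index, oracle, database, view

Views

A view is a table derived from one table or view, or from several tables or views. A view is a virtual table: the database does not actually store the data a view corresponds to, only the view's definition. When operating on a view's data, only columns can be defined as a view; specific data cannot be defined as a view.

Why does Oracle need views?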

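- Mockito (1): getting started

bijian1013

continuous integration, mockito, unit testing

Mockito is a mocking framework for Java. It is very similar to EasyMock and jMock, but because it verifies what was called after execution, it removes the need for expectations. Other mocking libraries require you to record expectations before execution, which leads to ugly setup code.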

- Mastering Oracle10 Programming, SQL (5): SQL functions

bijian1013

oracle, database, plsql

/*

* SQL functions

*/

-- Numeric functions

-- ABS(n): returns the absolute value of the number n

declare

v_abs number(6,2);

begin

v_abs:=abs(&no);

dbms_output.put_line('绝对值:'||v_abs);

end;

-- ACOS(n): returns the arc cosine of the number n; the input must be in the range -1 to 1, and the output is in radians

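- [Log4j 1] Log4j overview

bit1129

log4j

Log4j components: Logger, Appender, Layout

The Log4j core consists of three components: logger, appender, and layout. Together they provide logging:

Where logs are written

The format in which logs are written

The level at which logs are written (whether log output is suppressed)

Logger inheritance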

A logger is said to be an ancestor of anothe

- Java IO notes

白糖_

java

public static void main(String[] args) throws IOException {

// Input stream

InputStream in = Test.class.getResourceAsStream("/test");

InputStreamReader isr = new InputStreamReader(in);

Bu

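- Docker monitoring

ronin47

docker, monitoring

Our project now deploys docker internally, which raises the question of monitoring. Drawing on some classic examples and a few ideas of my own, here is a summary of the approach. 1. What to monitor: monitoring the host itself

Monitoring the host itself is fairly simple. As with monitoring any other server, run general checks on cpu, network, io, disk, and so on; this is not covered in detail here.

In addition, because it is docker's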

- java: printing a figure clockwise

bylijinnan

java

A drawing program is required to print:

int i=5;

1 2 3 4 5

16 17 18 19 6

15 24 25 20 7

14 23 22 21 8

13 12 11 10 9

int i=6

1 2 3 4 5 6

20 21 22 23 24 7

19
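The target output above is an n x n grid filled with 1..n*n in clockwise spiral order, starting at the top-left corner. The original article is in Java and its solution is not visible here, so the following is only a Python sketch of one common approach (walking shrinking boundaries). The function name `spiral_matrix` and the boundary variable names are assumptions.

```python
def spiral_matrix(n):
    """Return an n x n grid filled 1..n*n clockwise from the top-left corner."""
    grid = [[0] * n for _ in range(n)]
    top, bottom, left, right = 0, n - 1, 0, n - 1
    value = 1
    while top <= bottom and left <= right:
        for col in range(left, right + 1):          # top edge, left to right
            grid[top][col] = value
            value += 1
        top += 1
        for row in range(top, bottom + 1):          # right edge, top to bottom
            grid[row][right] = value
            value += 1
        right -= 1
        if top <= bottom:
            for col in range(right, left - 1, -1):  # bottom edge, right to left
                grid[bottom][col] = value
                value += 1
            bottom -= 1
        if left <= right:
            for row in range(bottom, top - 1, -1):  # left edge, bottom to top
                grid[row][left] = value
                value += 1
            left += 1
    return grid


for row in spiral_matrix(5):
    print(" ".join(str(v) for v in row))
```

For n = 5 this prints the same five rows as the first figure above, and for n = 6 the second row is 20 21 22 23 24 7, matching the second figure.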

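- How to force the Chinese-localized iReport to use English

Kai_Ge

iReport, localization, English version

For engineers with a perfectionist streak, a localized interface is convenient, but an incomplete localization is a real headache. This method addresses iReport's incomplete Chinese localization by forcing the English version, as follows:

Add the startup parameter shown in red to etc/ireport.conf under the iReport installation path, and the interface switches to English.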

# ${HOME} will be replaced by user home directory accordin

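- [Parallel computing] On the computability of the universe

comsci

parallel computing

We now know that a vortex system is capable of parallel computing. According to the theory of natural motion, such a system also has storage capability, and a system that has both computing and storage capability will, under certain conditions, generally give rise to consciousness......

This idea then leads us to a conclusion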

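- An infinitely looping coverflow implemented with OpenGL

dai_lm

android, coverflow

After a long search online, everything I found was implemented with Gallery and the results were not very satisfying. Then I found this OpenGL implementation and modified the source slightly to make it loop infinitely.

Source code: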

https://github.com/jackfengji/glcoverflow

public class CoverFlowOpenGL extends GLSurfaceView implements

GLSurfaceV

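- Several solutions for data computation in Java, part 1

datamachine

java, Hibernate, computation

The software my boss handed me has been running for 10 days and I am starting to get the hang of it. It happens to relate to things I had saved up earlier, so I am organizing and archiving it here.

----------------------------- fancy divider -------------------------------------

The data computation layer sits between data storage and the application. It computes over the data in the storage layer and returns the results to the application. J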

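- A simple user authorization system that marks administrators with an extra field on the user table

dcj3sjt126com

yii

How to create a simple (non-RBAC) user authorization system

Browsing the forum, I found this is a common question, so I decided to write this article.

This article covers only the authorization system. It assumes you already know how to create an authentication (login) system. Database

First, add a new field (integer type) named 'accessLevel' to the user table; it defines the user's access level. Extending the CWebUser class

In the configuration file (usually protecte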

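- The Road Not Taken

dcj3sjt126com

poem

Author: Robert Frost

Two roads diverged in a yellow wood,

and sadly I could not travel both;

long I stood at that fork,

and looked down one as far as I could,

until it vanished deep in the undergrowth.

But I took the other road,

grassy and overgrown, and very quiet;

it seemed more inviting, more beautiful,

though on these two paths

few travelers had left their footprints.

That morning fallen leaves covered the ground,

and neither road showed any trace of footsteps.

Oh, I kept one road for another day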

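- Converting 15-digit ID numbers to 18 digits in Java

蕃薯耀

18-digit ID to 15 digits, 15-digit ID to 18 digits, ID number conversion

15-digit ID to 18 digits, 18-digit ID to 15 digits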

>>>>>>>>>>>>>>>>>>>>>>>>>>>>>>>>>>>>>>>>

蕃薯耀 201

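- SpringMVC4 zero configuration: application context configuration [AppConfig]

hanqunfeng

springmvc4

Starting with Spring 3.0, Spring integrated JavaConfig into its core module. An ordinary POJO only needs the @Configuration annotation to become a Spring configuration class, and beans are injected by annotating methods with @Bean.

XML configuration and Java class configuration compare as follows:

applicationContext-AppConfig.xml

<!-- Enable auto-proxying; see: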

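- Interaction between WebView and JavaScript on Android

jackyrong

JavaScript, html, android, scripting

In an Android application you can call JavaScript code in a WebView directly, and JavaScript code in the WebView can also call the Android application (that is, the Java code). Examples follow:

1. Calling the Android program from JavaScript

In the WebView, call addJavascriptInterface(OBJ,int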

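- 8 of the best web development resources

lampcy

programming, Web, programmer

Web development is fairly complex work for programmers, who have to meet user needs quickly. Many online resources can help, such as guides, online courses, and reference material, and most of them are free and suitable for beginners. Whether you need to pick up a new programming language, learn about the latest standards, or find inspiration elsewhere, we have collected some good web development resources here to help you succeed at web development.

Here are 10 of the best web development resources, all of which are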

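- Architect interviews: the JDK's HashMap implementation

nannan408

HashMap

1. Preface.

As the title says.

2. Details.

(1) The HashMap algorithm is an array of linked lists. The elements stored in the array are key-value pairs. The JDK uses a bit-shifting algorithm (in effect just simple add-and-multiply arithmetic), shown in the code below, to generate the array index (after it runs, a call to indexFor turns the result into the index).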

static int hash(int h)

{

h ^= (h >>> 20) ^ (h >>>
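To illustrate the "array of linked lists" structure described above, here is a toy Python sketch. It is not the JDK's implementation: the class name `ChainedHashMap` and its methods are hypothetical, Python's built-in `hash` stands in for the JDK's supplemental hash function (which is truncated in the listing above), and resizing is omitted. The index step, masking the hash with `capacity - 1`, is assumed to play the role of the `indexFor` call mentioned in the text and requires a power-of-two capacity.

```python
class ChainedHashMap:
    """Toy hash map: an array of buckets, each bucket a chain of (key, value) pairs."""

    def __init__(self, capacity=16):
        # capacity is assumed to be a power of two so that masking works
        self._buckets = [[] for _ in range(capacity)]

    def _index_for(self, key):
        # Keep only the low bits of the hash to get a valid array index.
        return hash(key) & (len(self._buckets) - 1)

    def put(self, key, value):
        bucket = self._buckets[self._index_for(key)]
        for i, (existing_key, _) in enumerate(bucket):
            if existing_key == key:
                bucket[i] = (key, value)   # key already present: overwrite
                return
        bucket.append((key, value))        # new key: append to the chain

    def get(self, key, default=None):
        for existing_key, value in self._buckets[self._index_for(key)]:
            if existing_key == key:
                return value
        return default


m = ChainedHashMap()
m.put("a", 1)
m.put("a", 2)
m.put("b", 3)
print(m.get("a"), m.get("b"), m.get("missing"))
```

Keys whose hashes share the same low bits land in the same bucket and are told apart by walking the chain, which is why a well-mixed hash matters for performance.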

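- html: disabling or clearing the cached text of input fields

Rainbow702

html, cache, input, input box, change

Most browsers cache input values by default, and only a forced refresh with Ctrl+F5 clears the cached values.

If you do not want the browser to cache an input's value, there are 2 ways:

Method 1: add autocomplete="off" to the input that should not use the cache;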

<input type="text" autocomplete="off" n

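- Differences and connections between POJO and JavaBean

tjmljw

POJO, java beans

POJO and JavaBean are two terms we see often and easily confuse. POJO stands for Plain Ordinary Java Object / Pure Old Java Object, that is, an ordinary Java class; a class with some getter/setter methods can be called a POJO. A JavaBean, however, is much more complex than a POJO. A Java Bean is a reusable component, and for Java Beans there is no strict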

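- Five ways to write a singleton in Java

liuxiaoling

java, singleton

/**

* Five ways to write the singleton pattern:

* 1. Lazy

* 2. Eager

* 3. Static inner class

* 4. Enum

* 5. Double-checked locking

*/

/**

* 5. Double-checked locking; does not work under the current memory model

*/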

*/

class LockSingleton

{

private volatile static LockSingleton singleton;

pri