Building an Enterprise-Grade Highly Available Kubernetes Cluster with KubeKey on openEuler

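Highly available k8s cluster deployment

In production, deploying k8s as a highly available cluster keeps applications running steadily without service interruptions.

Here we work on openEuler and configure Keepalived and HAProxy to provide load balancing (LB/Load Balancer) and achieve high availability.

The steps are as follows:

- Prepare the host resources (the OS is the Huawei openEuler 22.03 LTS community edition).

- Configure Keepalived and HAProxy.

- Use KubeKey to create the Kubernetes cluster and install KubeSphere.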

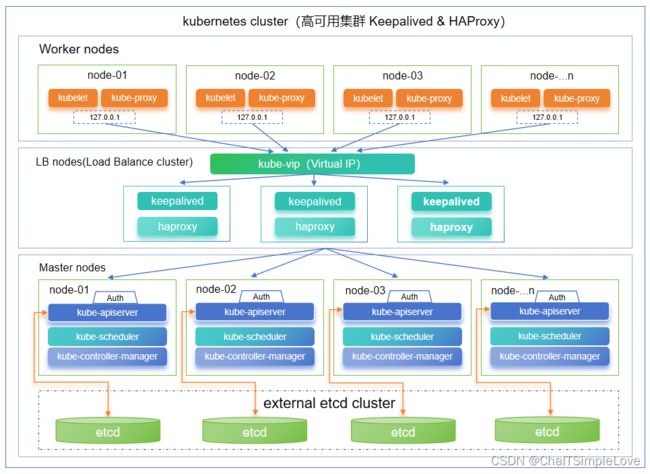

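Enterprise-grade highly available k8s cluster architecture

- For this cluster we use 3 master nodes, 3 worker nodes, 2 LB (Load Balancer) nodes, and 1 VIP (virtual IP) address.

- The VIP (virtual IP) address in this example can also be called a "floating IP address". If a node fails, the IP address can move between nodes, which is what provides high availability.

Note: In this example, Keepalived and HAProxy are not installed on any master node. You could install them there and still achieve high availability. However, dedicating two nodes to load balancing (you can add more such nodes as needed) is safer. Only Keepalived and HAProxy are installed on these two LB nodes, which avoids potential conflicts with Kubernetes components and services.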

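System environment information

Here we use openEuler 22.03 LTS (openEuler-22.03-LTS-x86_64-dvd.iso) as the base system and deploy the Kubernetes and KubeSphere cluster with KubeKey.

About openEuler 22.03 LTS

- openEuler 22.03-LTS is built on the 5.10 kernel and supports server, cloud, edge, and embedded scenarios;

- Lifecycle: Planned EOL: 2024/03

- For more release information, see the release notes;

- For system installation information, see the installation guide;

After installation, the openEuler login banner looks like this: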

Authorized users only. All activities may be monitored and reported.

Welcome to 5.10.0-60.18.0.50.oe2203.x86_64

System information as of time: 2023年 09月 22日 星期五 18:13:08 CST

System load: 0.03

Processes: 109

Memory used: 70.0%

Swap used: 0%

Usage On: 6%

IP address: 172.27.237.173

Users online: 1

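Note: The system requirements for installing KubeSphere must be followed strictly.

OS requirements

1. Minimum resource requirements (for a minimal KubeSphere installation only):

- 2 virtual processors (vCPU)

- 4 GB of memory

- 20 GB of storage

/var/lib/docker mainly stores container data and grows over time as the system is used. For production, we recommend mounting /var/lib/docker on a separate drive.

2. Operating system requirements:

- SSH can reach all nodes.

- Time is synchronized across all nodes.

- sudo/curl/openssl are available on all nodes.

- docker can be installed by you or by KubeKey.

- We recommend disabling SELinux or switching it to Permissive mode.

- We recommend a clean operating system (with no other software installed); otherwise conflicts may occur.

- If you have trouble downloading images from dockerhub.io, prepare a container image mirror (accelerator) and configure it as a registry mirror for the Docker daemon.

- By default KubeKey installs OpenEBS to provision LocalPV for development and test environments, which is convenient for new users. For production, use NFS/Ceph/GlusterFS or a commercial product as persistent storage, and install the matching client on all nodes.

- If you run into Permission denied while copying files, check SELinux first and turn it off.

3. Dependency requirements:

KubeKey can install Kubernetes and KubeSphere together. For Kubernetes 1.18 and later, some dependencies must be installed before Kubernetes itself. Use the list below to check and install them on each node in advance.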

| Component | Kubernetes version ≥ 1.18 |
|---|---|
| socat | Required |
| conntrack | Required |
| ebtables | Optional but recommended |
| ipset | Optional but recommended |
| ipvsadm | Optional but recommended |
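The dependency table can be checked on each node before running KubeKey. The following is a minimal sketch, not part of KubeKey itself: `missing_commands` is a hypothetical helper name, and it assumes each package installs a command with the same name on the current PATH (run it as root so that sbin directories are searched).

```shell
#!/usr/bin/env bash
# Print the name of every given command that cannot be found on PATH.
# Prints nothing when all commands are present.
missing_commands() {
  local cmd
  for cmd in "$@"; do
    if ! command -v "$cmd" >/dev/null 2>&1; then
      printf '%s\n' "$cmd"
    fi
  done
}

# Example (run on each node):
#   missing_commands socat conntrack ebtables ipset ipvsadm
```

Any name printed by the helper is a candidate for `yum install -y` on that node.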

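4. Network and DNS requirements:

- Make sure the DNS address in /etc/resolv.conf is available. Otherwise, DNS in the cluster may not work properly.

- If your network uses a firewall or security groups, make sure the infrastructure components can reach each other on the required ports.

We recommend turning off the firewall or configuring it by following the Network Access guide.

System requirements and recommendations

See https://github.com/kubesphere/kubekey#requirements-and-recommendations

OS preparation

Note: Run all of the following commands as root on every node.

1. Disable SELinux:

See https://github.com/kubesphere/kubekey/blob/master/docs/turn-off-SELinux.md

Permanently disable SELinux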

# Edit the configuration

sed -i 's/SELINUX=enforcing/SELINUX=disabled/g' /etc/selinux/config

#restart the system

reboot

# check SELinux

getenforce
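The sed command above only matches a file that currently says SELINUX=enforcing. As a hedged alternative, the sketch below rewrites the SELINUX= line whatever its current mode is. `set_selinux_mode` is a hypothetical helper name, and it assumes the standard /etc/selinux/config layout with one `SELINUX=<mode>` line.

```shell
#!/usr/bin/env bash
# Set the SELINUX= mode in an SELinux config file, whatever its current value.
# $1: path to the config file (normally /etc/selinux/config)
# $2: target mode: enforcing, permissive or disabled
set_selinux_mode() {
  local file=$1 mode=$2 tmp rc
  tmp=$(mktemp) || return 1
  # Only the SELINUX= line is rewritten; SELINUXTYPE= and comments are kept.
  sed -E "s/^SELINUX=(enforcing|permissive|disabled)[[:space:]]*$/SELINUX=${mode}/" \
    "$file" >"$tmp" && cat "$tmp" >"$file"
  rc=$?
  rm -f "$tmp"
  return "$rc"
}

# Example (as root):
#   set_selinux_mode /etc/selinux/config permissive
#   setenforce 0    # switch the running system to permissive without a reboot
```

Writing the result back with `cat` rather than moving the temporary file keeps the original file's owner and permissions.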

2. Disable the swap partition (the Windows equivalent is called virtual memory):

- View /etc/fstab:

more /etc/fstab

- Find the swap partition entry (the last line, shown here already commented out):

#

# /etc/fstab

# Created by anaconda on Thu Sep 28 06:30:43 2023

#

# Accessible filesystems, by reference, are maintained under '/dev/disk/'.

# See man pages fstab(5), findfs(8), mount(8) and/or blkid(8) for more info.

#

# After editing this file, run 'systemctl daemon-reload' to update systemd

# units generated from this file.

#

/dev/mapper/openeuler-root / ext4 defaults 1 1

UUID=7d693be5-d0a0-4b5e-afa4-7ed5a57f1cad /boot ext4 defaults 1 2

UUID=D8BD-D917 /boot/efi vfat umask=0077,shortname=winnt 0 2

/dev/mapper/openeuler-home /home ext4 defaults 1 2

#/dev/mapper/openeuler-swap none swap defaults 0 0

- Comment out the swap partition entry:

#/dev/mapper/openeuler-swap none swap defaults 0 0

- Reboot the machine:

systemctl reboot

- Check again with free -m; the Swap row should show 0:

[root@master01 ~]# free -m

total used free shared buff/cache available

Mem: 1086 482 227 20 376 264

Swap: 0 0 0

Reference: https://www.cnblogs.com/architectforest/p/12982886.html
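The manual edit of /etc/fstab can also be scripted. This is a minimal sketch under the assumption that swap entries follow the usual fstab layout, with the filesystem type "swap" in the third field. `comment_out_swap` is a hypothetical helper name.

```shell
#!/usr/bin/env bash
# Comment out every active swap entry in an fstab-style file.
# $1: path to the file (normally /etc/fstab)
comment_out_swap() {
  local file=$1 tmp rc
  tmp=$(mktemp) || return 1
  # Prefix "#" to non-comment lines whose third field is "swap";
  # lines that are already commented are left alone.
  awk '!/^[ \t]*#/ && $3 == "swap" { print "#" $0; next } { print }' \
    "$file" >"$tmp" && cat "$tmp" >"$file"
  rc=$?
  rm -f "$tmp"
  return "$rc"
}

# Example (as root):
#   comment_out_swap /etc/fstab
#   swapoff -a    # turn swap off right away instead of waiting for the reboot
```

Running the helper a second time changes nothing, so it is safe to include in a node preparation script.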

3. Tools that must be installed on all nodes:

yum install -y tar socat conntrack

Note: Every node must meet the requirements of the steps above.

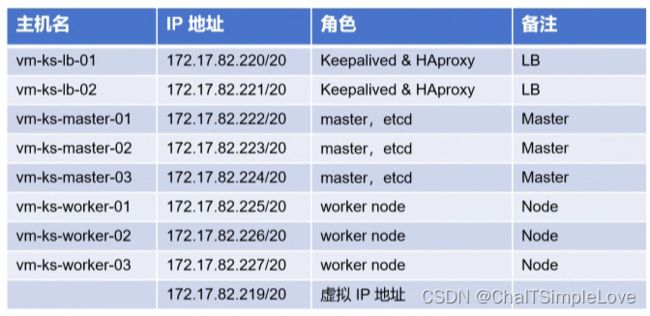

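Host network planning

We use 8 nodes to build the highly available k8s cluster. To keep things easy to manage, the hosts get consecutive, increasing static IPv4 addresses. The network plan is as follows:

IPv4 address information:

- IPv4 address: 172.17.80.220/20 (incrementing from there)

- Default route: 172.17.80.1 (same on all nodes)

- DNS: 172.17.80.1 (same on all nodes)

- Subnet mask: 255.255.240.0 (same on all nodes)

Note: Adapt this plan to your own network, and make sure all cluster nodes can reach each other.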
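A consecutive address plan like this is easy to print with a few lines of shell. The sketch below is illustrative only: `plan_hosts` is a hypothetical helper, the host names and the starting address 172.17.82.219 are the ones used later in the Keepalived and KubeKey configuration, and it assumes all addresses stay within the last octet (no carry past 255).

```shell
#!/usr/bin/env bash
# Print "name address" pairs for consecutive IPv4 addresses.
# $1: first three octets (for example 172.17.82)
# $2: last octet of the first address
# $3...: host names, in the order the addresses are assigned
plan_hosts() {
  local prefix=$1 octet=$2 name
  shift 2
  for name in "$@"; do
    printf '%s %s.%s\n' "$name" "$prefix" "$octet"
    octet=$((octet + 1))
  done
}

# Example:
#   plan_hosts 172.17.82 219 ks-lb-vip ks-lb-01 ks-lb-02 \
#     ks-master-01 ks-master-02 ks-master-03 \
#     ks-worker-01 ks-worker-02 ks-worker-03
```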

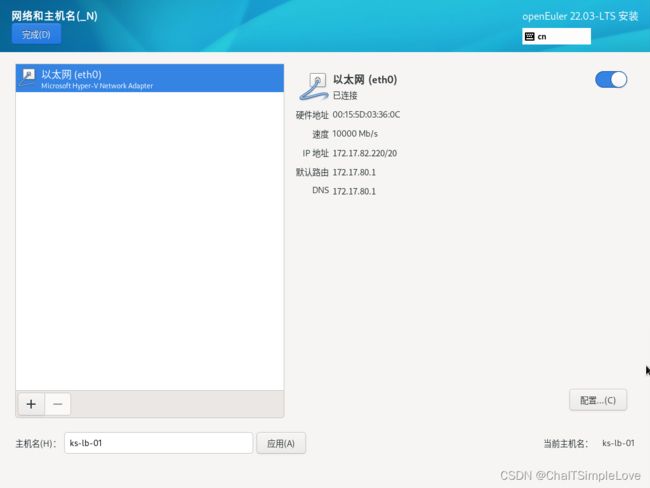

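Taking ks-lb-01 as an example, the network is configured as follows:

For convenience, all planned hosts (lb, master, and worker) are given the same user passwords:

Passwords are set for both the root and jeff accounts. For convenience you can also tick "Make this user administrator" under User Settings => Create User.

Note: In production, handle sensitive information such as account passwords with care.

After configuring these settings, click Begin Installation, wait for the installation to finish, and reboot the system.

Reboot the system:

View the current network information: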

[root@vm-ks-lb-02 network-scripts]# ip addr

1: lo: <LOOPBACK,UP,LOWER_UP> mtu 65536 qdisc noqueue state UNKNOWN group default qlen 1000

link/loopback 00:00:00:00:00:00 brd 00:00:00:00:00:00

inet 127.0.0.1/8 scope host lo

valid_lft forever preferred_lft forever

inet6 ::1/128 scope host

valid_lft forever preferred_lft forever

2: eth0: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1500 qdisc mq state UP group default qlen 1000

link/ether 00:15:5d:03:36:04 brd ff:ff:ff:ff:ff:ff

inet 172.27.237.173/20 brd 172.27.239.255 scope global dynamic noprefixroute eth0

valid_lft 75574sec preferred_lft 75574sec

inet6 fe80::8ca:4c78:91b0:1723/64 scope link noprefixroute

valid_lft forever preferred_lft forever

Go to the network configuration directory:

[root@vm-ks-lb-02 ~]# pwd

/root

[root@vm-ks-lb-02 ~]# cd /etc/sysconfig/network-scripts

[root@vm-ks-lb-02 network-scripts]# ls

ifcfg-eth0

Edit the ifcfg-eth0 file:

[root@vm-ks-lb-02 network-scripts]# vi ifcfg-eth0

The default content is:

TYPE=Ethernet

PROXY_METHOD=none

BROWSER_ONLY=no

BOOTPROTO=dhcp

DEFROUTE=yes

IPV4_FAILURE_FATAL=no

IPV6INIT=yes

IPV6_AUTOCONF=yes

IPV6_DEFROUTE=yes

IPV6_FAILURE_FATAL=no

IPV6_ADDR_GEN_MODE=stable-privacy

NAME=eth0

UUID=4e52750e-36e2-4004-befc-2f2da5c8c4e5

DEVICE=eth0

ONBOOT=yes

Change it to:

TYPE=Ethernet

PROXY_METHOD=none

BROWSER_ONLY=no

BOOTPROTO=none

DEFROUTE=yes

IPV4_FAILURE_FATAL=no

IPV6INIT=yes

IPV6_AUTOCONF=yes

IPV6_DEFROUTE=yes

IPV6_FAILURE_FATAL=no

IPV6_ADDR_GEN_MODE=stable-privacy

NAME=eth0

UUID=4e52750e-36e2-4004-befc-2f2da5c8c4e5

DEVICE=eth0

ONBOOT=yes

IPADDR=172.27.237.4 # the planned IPv4 address

PREFIX=20

For details on setting a static IPv4 address on openEuler, see https://blog.csdn.net/qq_41070393/article/details/126932108
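The edit from BOOTPROTO=dhcp to a static address can be scripted for the remaining nodes. The following is a rough sketch, assuming the ifcfg file uses plain KEY=value lines as shown above. `set_static_ipv4` is a hypothetical helper, and gateway and DNS settings are left out because the configuration above does not set them.

```shell
#!/usr/bin/env bash
# Switch an ifcfg file from DHCP to a static IPv4 address.
# $1: ifcfg file, $2: IPv4 address, $3: prefix length
set_static_ipv4() {
  local file=$1 addr=$2 prefix=$3 tmp rc
  tmp=$(mktemp) || return 1
  {
    # Keep every line except the old addressing keys ...
    grep -Ev '^(IPADDR|PREFIX|BOOTPROTO)=' "$file"
    # ... then append the static settings.
    printf 'BOOTPROTO=none\nIPADDR=%s\nPREFIX=%s\n' "$addr" "$prefix"
  } >"$tmp" && cat "$tmp" >"$file"
  rc=$?
  rm -f "$tmp"
  return "$rc"
}

# Example (as root; assumes NetworkManager manages a connection named eth0):
#   set_static_ipv4 /etc/sysconfig/network-scripts/ifcfg-eth0 172.27.237.4 20
#   nmcli connection reload && nmcli connection up eth0
```

Applying the change over SSH drops the session when the address changes, so a console session is the safer place to run it.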

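Configure load balancing

Keepalived provides a VRRP implementation and lets you configure Linux machines for load balancing while preventing single points of failure. HAProxy provides reliable, high-performance load balancing and works well together with Keepalived.

We install Keepalived and HAProxy on every LB node. If one node fails, the VIP (virtual IP, i.e. the floating IP address) is automatically associated with the other node, so the cluster keeps working and high availability is achieved.

As planned, we use 2 nodes in the LB role for now. If needed, you can add more nodes running Keepalived and HAProxy for the same purpose.

Install Keepalived and HAProxy

Run the following command as root to install Keepalived and HAProxy: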

yum install -y keepalived haproxy psmisc

The installation output is as follows:

update 109 kB/s | 59 MB 09:16

Last metadata expiration check: 0:02:33 ago on 2023年09月22日 星期五 18时13分46秒.

Package psmisc-23.4-1.oe2203.x86_64 is already installed.

Dependencies resolved.

===========================================================================================================================

Package Architecture Version Repository Size

===========================================================================================================================

Installing:

haproxy x86_64 2.4.8-4.oe2203 update 1.0 M

keepalived x86_64 2.2.4-2.oe2203 update 327 k

Installing dependencies:

lm_sensors x86_64 3.6.0-5.oe2203 OS 142 k

mariadb-connector-c x86_64 3.1.13-2.oe2203 update 179 k

net-snmp x86_64 1:5.9.1-5.oe2203 update 825 k

net-snmp-libs x86_64 1:5.9.1-5.oe2203 update 618 k

Transaction Summary

===========================================================================================================================

Install 6 Packages

Total download size: 3.1 M

Installed size: 10 M

Downloading Packages:

(1/6): keepalived-2.2.4-2.oe2203.x86_64.rpm 84 kB/s | 327 kB 00:03

(2/6): lm_sensors-3.6.0-5.oe2203.x86_64.rpm 35 kB/s | 142 kB 00:04

(3/6): mariadb-connector-c-3.1.13-2.oe2203.x86_64.rpm 117 kB/s | 179 kB 00:01

(4/6): haproxy-2.4.8-4.oe2203.x86_64.rpm 118 kB/s | 1.0 MB 00:09

(5/6): net-snmp-libs-5.9.1-5.oe2203.x86_64.rpm 79 kB/s | 618 kB 00:07

(6/6): net-snmp-5.9.1-5.oe2203.x86_64.rpm 63 kB/s | 825 kB 00:13

---------------------------------------------------------------------------------------------------------------------------

Total 185 kB/s | 3.1 MB 00:17

retrieving repo key for OS unencrypted from http://repo.openeuler.org/openEuler-22.03-LTS/OS/x86_64/RPM-GPG-KEY-openEuler

OS 1.6 kB/s | 2.1 kB 00:01

Importing GPG key 0xB25E7F66:

Userid : "private OBS (key without passphrase) "

Fingerprint: 12EA 74AC 9DF4 8D46 C69C A0BE D557 065E B25E 7F66

From : http://repo.openeuler.org/openEuler-22.03-LTS/OS/x86_64/RPM-GPG-KEY-openEuler

Key imported successfully

Running transaction check

Transaction check succeeded.

Running transaction test

Transaction test succeeded.

Running transaction

Running scriptlet: mariadb-connector-c-3.1.13-2.oe2203.x86_64 1/1

Preparing : 1/1

Installing : net-snmp-libs-1:5.9.1-5.oe2203.x86_64 1/6

Installing : mariadb-connector-c-3.1.13-2.oe2203.x86_64 2/6

Installing : lm_sensors-3.6.0-5.oe2203.x86_64 3/6

Running scriptlet: lm_sensors-3.6.0-5.oe2203.x86_64 3/6

Created symlink /etc/systemd/system/multi-user.target.wants/lm_sensors.service → /usr/lib/systemd/system/lm_sensors.service.

Installing : net-snmp-1:5.9.1-5.oe2203.x86_64 4/6

Running scriptlet: net-snmp-1:5.9.1-5.oe2203.x86_64 4/6

Installing : keepalived-2.2.4-2.oe2203.x86_64 5/6

Running scriptlet: keepalived-2.2.4-2.oe2203.x86_64 5/6

Running scriptlet: haproxy-2.4.8-4.oe2203.x86_64 6/6

Installing : haproxy-2.4.8-4.oe2203.x86_64 6/6

Running scriptlet: haproxy-2.4.8-4.oe2203.x86_64 6/6

/usr/lib/tmpfiles.d/net-snmp.conf:1: Line references path below legacy directory /var/run/, updating /var/run/net-snmp → /run/net-snmp; please update the tmpfiles.d/ drop-in file accordingly.

Verifying : lm_sensors-3.6.0-5.oe2203.x86_64 1/6

Verifying : haproxy-2.4.8-4.oe2203.x86_64 2/6

Verifying : keepalived-2.2.4-2.oe2203.x86_64 3/6

Verifying : mariadb-connector-c-3.1.13-2.oe2203.x86_64 4/6

Verifying : net-snmp-1:5.9.1-5.oe2203.x86_64 5/6

Verifying : net-snmp-libs-1:5.9.1-5.oe2203.x86_64 6/6

Installed:

haproxy-2.4.8-4.oe2203.x86_64 keepalived-2.2.4-2.oe2203.x86_64 lm_sensors-3.6.0-5.oe2203.x86_64

mariadb-connector-c-3.1.13-2.oe2203.x86_64 net-snmp-1:5.9.1-5.oe2203.x86_64 net-snmp-libs-1:5.9.1-5.oe2203.x86_64

Complete!

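Modify the HAProxy configuration file

Make sure the HAProxy configuration is identical on both load balancer machines.

1. Edit the haproxy.cfg configuration file:

vi /etc/haproxy/haproxy.cfg

The default content looks similar to this: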

#---------------------------------------------------------------------

# Example configuration for a possible web application. See the

# full configuration options online.

#

# https://www.haproxy.org/download/1.8/doc/configuration.txt

#

#---------------------------------------------------------------------

global

log 127.0.0.1 local2

chroot /var/lib/haproxy

pidfile /var/run/haproxy.pid

user haproxy

group haproxy

daemon

maxconn 4000

defaults

mode http

log global

option httplog

option dontlognull

retries 3

timeout http-request 5s

timeout queue 1m

timeout connect 5s

timeout client 1m

timeout server 1m

timeout http-keep-alive 5s

timeout check 5s

maxconn 3000

frontend main

bind *:80

default_backend http_back

backend http_back

balance roundrobin

server node1 127.0.0.1:5001 check

server node2 127.0.0.1:5002 check

server node3 127.0.0.1:5003 check

server node4 127.0.0.1:5004 check

2. Change the file as shown in the following sample configuration (note the server fields, and remember that 6443 is the kube-apiserver port):

#---------------------------------------------------------------------

# Example configuration for a possible web application. See the

# full configuration options online.

#

# https://www.haproxy.org/download/1.8/doc/configuration.txt

#

#---------------------------------------------------------------------

global

log 127.0.0.1 local2

chroot /var/lib/haproxy

pidfile /var/run/haproxy.pid

user haproxy

group haproxy

daemon

maxconn 4000

defaults

mode http

log global

option httplog

option dontlognull

retries 3

timeout http-request 5s

timeout queue 1m

timeout connect 5s

timeout client 1m

timeout server 1m

timeout http-keep-alive 5s

timeout check 5s

maxconn 3000

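frontend kube-apiserver

bind *:6443

# kube-apiserver speaks TLS, so proxy at the TCP layer instead of the default "mode http"

mode tcp

option tcplog

default_backend kube-apiserver

backend kube-apiserver

mode tcp

option tcplog

balance roundrobin

# The server addresses below must be the IP addresses of your master nodes

server kube-apiserver-1 172.27.237.4:6443 check

server kube-apiserver-2 172.27.237.5:6443 check

server kube-apiserver-3 172.27.237.6:6443 check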

3. Save the file and run the following command to restart HAProxy.

:wq!

systemctl restart haproxy

4. Enable HAProxy to start on boot:

systemctl enable haproxy

5. Make sure HAProxy is configured on the other machine (ks-lb-02) as well.
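Because both load balancers need identical server lines, it can help to generate them from the list of master IPs rather than typing them twice. This is a small sketch: `render_apiserver_backends` is a hypothetical helper, and the `kube-apiserver-N` naming and port 6443 follow the sample configuration above.

```shell
#!/usr/bin/env bash
# Print one HAProxy "server" line per kube-apiserver backend.
# Arguments: the IP address of each master node.
render_apiserver_backends() {
  local i=1 ip
  for ip in "$@"; do
    printf '    server kube-apiserver-%d %s:6443 check\n' "$i" "$ip"
    i=$((i + 1))
  done
}

# Example:
#   render_apiserver_backends 172.17.82.222 172.17.82.223 172.17.82.224
# After editing the file, HAProxy can check it before the restart:
#   haproxy -c -f /etc/haproxy/haproxy.cfg
```

The `-c` flag only validates the configuration and exits, so it is safe to run on a live load balancer.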

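Modify the Keepalived configuration file

Keepalived must be installed on both machines, but their configurations differ slightly.

1. Run the following command to edit the Keepalived configuration.

vi /etc/keepalived/keepalived.conf

2. The following sample configuration (for ks-lb-01) is for reference. The addresses used are:

- ks-lb-01: 172.17.82.220

- ks-lb-02: 172.17.82.221

- ks-lb-vip: 172.17.82.219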

global_defs {

notification_email {

}

router_id LVS_DEVEL

vrrp_skip_check_adv_addr

vrrp_garp_interval 0

vrrp_gna_interval 0

}

vrrp_script chk_haproxy {

script "killall -0 haproxy"

interval 2

weight 2

}

vrrp_instance haproxy-vip {

state BACKUP

priority 100

interface eth0 # Network card

virtual_router_id 60

advert_int 1

authentication {

auth_type PASS

auth_pass 1111

}

unicast_src_ip 172.17.82.220 # The IP address of this machine

unicast_peer {

172.17.82.221 # The IP address of peer machines

}

virtual_ipaddress {

172.17.82.219/20 # The VIP address

}

track_script {

chk_haproxy

}

}

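Note:

- For the interface field, you must provide your own network interface name. Run ip addr on the machine to find it.

- The IP address given for unicast_src_ip is the IP address of the current machine. The IP addresses of the other machines that also run HAProxy and Keepalived for load balancing go in the unicast_peer field.

3. Save the file and run the following command to restart Keepalived.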

systemctl restart keepalived

4. Enable Keepalived to start on boot.

systemctl enable keepalived

5. Make sure Keepalived is configured on the other machine (ks-lb-02) as well.
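The part that differs between the two machines is the unicast source and peer. As an illustration, the sketch below prints those lines for either node so the two files are easy to compare. `render_unicast` is a hypothetical helper, and it assumes a single peer as in this two-node setup.

```shell
#!/usr/bin/env bash
# Print the node-specific unicast lines of a vrrp_instance block.
# $1: IP address of this machine, $2: IP address of the peer machine
render_unicast() {
  printf '    unicast_src_ip %s\n    unicast_peer {\n        %s\n    }\n' "$1" "$2"
}

# ks-lb-01:  render_unicast 172.17.82.220 172.17.82.221
# ks-lb-02:  render_unicast 172.17.82.221 172.17.82.220
```

On ks-lb-02 the two addresses are simply swapped; the virtual_ipaddress block with the VIP stays the same on both nodes.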

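Verify LB high availability

Before creating the k8s cluster, make sure you have tested the high availability of the LB (Load Balancer) nodes. Test as follows:

1. Run the following command on ks-lb-01: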

[root@lb01 ~]# ip a s

1: lo: <LOOPBACK,UP,LOWER_UP> mtu 65536 qdisc noqueue state UNKNOWN group default qlen 1000

link/loopback 00:00:00:00:00:00 brd 00:00:00:00:00:00

inet 127.0.0.1/8 scope host lo

valid_lft forever preferred_lft forever

inet6 ::1/128 scope host

valid_lft forever preferred_lft forever

2: eth0: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1500 qdisc mq state UP group default qlen 1000

link/ether 00:15:5d:00:92:02 brd ff:ff:ff:ff:ff:ff

inet 192.168.3.6/24 brd 192.168.3.255 scope global dynamic noprefixroute eth0

valid_lft 68239sec preferred_lft 68239sec

inet 192.168.3.14/24 scope global secondary eth0 # The VIP address

valid_lft forever preferred_lft forever

inet6 fe80::8f58:37:5d19:ec6c/64 scope link noprefixroute

valid_lft forever preferred_lft forever

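2. As shown above, the VIP (virtual IP, also called the floating IP) has been added successfully. Now simulate a failure on this node:

# Stop haproxy on this node

systemctl stop haproxy

3. Check the floating IP address again. It has disappeared from ks-lb-01.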

[root@lb01 ~]# ip a s

1: lo: <LOOPBACK,UP,LOWER_UP> mtu 65536 qdisc noqueue state UNKNOWN group default qlen 1000

link/loopback 00:00:00:00:00:00 brd 00:00:00:00:00:00

inet 127.0.0.1/8 scope host lo

valid_lft forever preferred_lft forever

inet6 ::1/128 scope host

valid_lft forever preferred_lft forever

2: eth0: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1500 qdisc mq state UP group default qlen 1000

link/ether 00:15:5d:00:92:02 brd ff:ff:ff:ff:ff:ff

inet 192.168.3.6/24 brd 192.168.3.255 scope global dynamic noprefixroute eth0

valid_lft 68239sec preferred_lft 68239sec

inet6 fe80::8f58:37:5d19:ec6c/64 scope link noprefixroute

valid_lft forever preferred_lft forever

4. If the configuration is correct, the VIP of this node (ks-lb-01) should move to the other machine (ks-lb-02). Run the following command on ks-lb-02; the expected output is:

[root@lb02 ~]# ip a s

1: lo: <LOOPBACK,UP,LOWER_UP> mtu 65536 qdisc noqueue state UNKNOWN group default qlen 1000

link/loopback 00:00:00:00:00:00 brd 00:00:00:00:00:00

inet 127.0.0.1/8 scope host lo

valid_lft forever preferred_lft forever

inet6 ::1/128 scope host

valid_lft forever preferred_lft forever

2: eth0: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1500 qdisc mq state UP group default qlen 1000

link/ether 00:15:5d:00:92:03 brd ff:ff:ff:ff:ff:ff

inet 192.168.3.7/24 brd 192.168.3.255 scope global dynamic noprefixroute eth0

valid_lft 69227sec preferred_lft 69227sec

inet 192.168.3.14/24 scope global secondary eth0 # The VIP address

valid_lft forever preferred_lft forever

inet6 fe80::4a71:a401:41a9:fc88/64 scope link noprefixroute

valid_lft forever preferred_lft forever

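5. As shown above, high availability of the LB nodes based on Keepalived and HAProxy is configured successfully.

Note: The high availability of the LB nodes must pass this test; otherwise the k8s cluster built later will be affected.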
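Reading the `ip a s` output by eye works for a one-off test; for repeated failover checks, a small filter can report whether a node currently holds the VIP. This is a sketch only: `has_vip` is a hypothetical helper that reads `ip addr` output from standard input and looks for an `inet <VIP>/` entry.

```shell
#!/usr/bin/env bash
# Succeed if the given VIP appears as an IPv4 address in `ip addr`
# output read from standard input.
# $1: the VIP without its prefix length
has_vip() {
  local vip=$1
  # -F matches a fixed string; the trailing "/" keeps 192.168.3.1
  # from matching 192.168.3.14.
  grep -qF "inet ${vip}/"
}

# Example (on each LB node, with the VIP from the Keepalived configuration):
#   ip -4 addr show eth0 | has_vip 172.17.82.219 && echo "VIP is on this node"
```

After stopping HAProxy on one node, the check should fail there and succeed on the other node within a few seconds.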

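Create the k8s cluster with KubeKey

KubeKey is an efficient and convenient tool for creating Kubernetes clusters. Follow the steps below to download KubeKey.

Download KubeKey and make it executable

Because access to GitHub/Googleapis is restricted on networks in mainland China, set KKZONE=cn before downloading. Run the following on ks-master-01: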

[root@master01 ~]# export KKZONE=cn

[root@master01 ~]# curl -sfL https://get-kk.kubesphere.io | VERSION=v3.0.10 sh -

Downloading kubekey v3.0.10 from https://kubernetes.pek3b.qingstor.com/kubekey/releases/download/v3.0.10/kubekey-v3.0.10-linux-amd64.tar.gz ...

Kubekey v3.0.10 Download Complete!

List the files:

[root@master01 ~]# ls

anaconda-ks.cfg config-sample.yaml config-sample.yaml.bak kk kubekey kubekey-v3.0.10-linux-amd64.tar.gz

[root@master01 ~]# ls -al

总用量 112108

dr-xr-x---. 3 root root 4096 9月 28 21:54 .

dr-xr-xr-x. 19 root root 4096 9月 28 14:36 ..

-rw-------. 1 root root 1269 9月 28 15:07 anaconda-ks.cfg

-rw-------. 1 root root 1765 9月 28 22:08 .bash_history

-rw-r--r--. 1 root root 18 12月 24 2019 .bash_logout

-rw-r--r--. 1 root root 176 12月 24 2019 .bash_profile

-rw-r--r--. 1 root root 176 12月 24 2019 .bashrc

-rw-r--r-- 1 root root 5302 10月 9 23:10 config-sample.yaml

-rw-r--r--. 1 root root 5302 9月 28 19:14 config-sample.yaml.bak

-rw-r--r--. 1 root root 100 12月 24 2019 .cshrc

-rwxr-xr-x. 1 root root 78944067 7月 28 14:11 kk

drwxr-xr-x. 17 root root 4096 9月 28 21:54 kubekey

-rw-r--r--. 1 root root 35791788 9月 28 19:08 kubekey-v3.0.10-linux-amd64.tar.gz

-rw-r--r--. 1 root root 129 12月 24 2019 .tcshrc

The file list contains kk. Make it executable:

chmod +x kk

Create a sample configuration file with KubeKey

KubeKey v3.0.10 defaults to Kubernetes v1.23.10, so here we specify the versions explicitly:

./kk create config --with-kubesphere v3.4.0 --with-kubernetes v1.26.9

This creates the sample configuration file config-sample.yaml with the following content:

apiVersion: kubekey.kubesphere.io/v1alpha2
kind: Cluster
metadata:
  name: sample
spec:
  hosts:
  - {name: node1, address: 172.16.0.2, internalAddress: 172.16.0.2, user: ubuntu, password: "Qcloud@123"}
  - {name: node2, address: 172.16.0.3, internalAddress: 172.16.0.3, user: ubuntu, password: "Qcloud@123"}
  roleGroups:
    etcd:
    - node1
    control-plane:
    - node1
    worker:
    - node1
    - node2
  controlPlaneEndpoint:
    ## Internal loadbalancer for apiservers
    # internalLoadbalancer: haproxy
    domain: lb.kubesphere.local
    address: ""
    port: 6443
  kubernetes:
    version: v1.26.9
    clusterName: cluster.local
    autoRenewCerts: true
    containerManager: containerd
  etcd:
    type: kubekey
  network:
    plugin: calico
    kubePodsCIDR: 10.233.64.0/18
    kubeServiceCIDR: 10.233.0.0/18
    ## multus support. https://github.com/k8snetworkplumbingwg/multus-cni
    multusCNI:
      enabled: false
  registry:
    privateRegistry: ""
    namespaceOverride: ""
    registryMirrors: []
    insecureRegistries: []
  addons: []

---

apiVersion: installer.kubesphere.io/v1alpha1
kind: ClusterConfiguration
metadata:
  name: ks-installer
  namespace: kubesphere-system
  labels:
    version: v3.4.0
spec:
  persistence:
    storageClass: ""
  authentication:
    jwtSecret: ""
  zone: ""
  local_registry: ""
  namespace_override: ""
  # dev_tag: ""
  etcd:
    monitoring: false
    endpointIps: localhost
    port: 2379
    tlsEnable: true
  common:
    core:
      console:
        enableMultiLogin: true
        port: 30880
        type: NodePort
    # apiserver:
    #  resources: {}
    # controllerManager:
    #  resources: {}
    redis:
      enabled: false
      enableHA: false
      volumeSize: 2Gi
    openldap:
      enabled: false
      volumeSize: 2Gi
    minio:
      volumeSize: 20Gi
    monitoring:
      # type: external
      endpoint: http://prometheus-operated.kubesphere-monitoring-system.svc:9090
      GPUMonitoring:
        enabled: false
    gpu:
      kinds:
      - resourceName: "nvidia.com/gpu"
        resourceType: "GPU"
        default: true
    es:
      # master:
      #   volumeSize: 4Gi
      #   replicas: 1
      #   resources: {}
      # data:
      #   volumeSize: 20Gi
      #   replicas: 1
      #   resources: {}
      logMaxAge: 7
      elkPrefix: logstash
      basicAuth:
        enabled: false
        username: ""
        password: ""
      externalElasticsearchHost: ""
      externalElasticsearchPort: ""
    opensearch:
      # master:
      #   volumeSize: 4Gi
      #   replicas: 1
      #   resources: {}
      # data:
      #   volumeSize: 20Gi
      #   replicas: 1
      #   resources: {}
      enabled: true
      logMaxAge: 7
      opensearchPrefix: whizard
      basicAuth:
        enabled: true
        username: "admin"
        password: "admin"
      externalOpensearchHost: ""
      externalOpensearchPort: ""
      dashboard:
        enabled: false
  alerting:
    enabled: false
    # thanosruler:
    #   replicas: 1
    #   resources: {}
  auditing:
    enabled: false
    # operator:
    #   resources: {}
    # webhook:
    #   resources: {}
  devops:
    enabled: false
    jenkinsCpuReq: 0.5
    jenkinsCpuLim: 1
    jenkinsMemoryReq: 4Gi
    jenkinsMemoryLim: 4Gi
    jenkinsVolumeSize: 16Gi
  events:
    enabled: false
    # operator:
    #   resources: {}
    # exporter:
    #   resources: {}
    # ruler:
    #   enabled: true
    #   replicas: 2
    #   resources: {}
  logging:
    enabled: false
    logsidecar:
      enabled: true
      replicas: 2
      # resources: {}
  metrics_server:
    enabled: false
  monitoring:
    storageClass: ""
    node_exporter:
      port: 9100
      # resources: {}
    # kube_rbac_proxy:
    #   resources: {}
    # kube_state_metrics:
    #   resources: {}
    # prometheus:
    #   replicas: 1
    #   volumeSize: 20Gi
    #   resources: {}
    #   operator:
    #     resources: {}
    # alertmanager:
    #   replicas: 1
    #   resources: {}
    # notification_manager:
    #   resources: {}
    #   operator:
    #     resources: {}
    #   proxy:
    #     resources: {}
    gpu:
      nvidia_dcgm_exporter:
        enabled: false
        # resources: {}
  multicluster:
    clusterRole: none
  network:
    networkpolicy:
      enabled: false
    ippool:
      type: none
    topology:
      type: none
  openpitrix:
    store:
      enabled: false
  servicemesh:
    enabled: false
    istio:
      components:
        ingressGateways:
        - name: istio-ingressgateway
          enabled: false
        cni:
          enabled: false
  edgeruntime:
    enabled: false
    kubeedge:
      enabled: false
      cloudCore:
        cloudHub:
          advertiseAddress:
            - ""
        service:
          cloudhubNodePort: "30000"
          cloudhubQuicNodePort: "30001"
          cloudhubHttpsNodePort: "30002"
          cloudstreamNodePort: "30003"
          tunnelNodePort: "30004"
        # resources: {}
        # hostNetWork: false
      iptables-manager:
        enabled: true
        mode: "external"
        # resources: {}
      # edgeService:
      #   resources: {}
  gatekeeper:
    enabled: false
    # controller_manager:
    #   resources: {}
    # audit:
    #   resources: {}
  terminal:
    timeout: 600

Change the following parts of the sample configuration file:

spec:
  hosts:
  - {name: ks-master-01, address: 172.17.82.222, internalAddress: 172.17.82.222, user: root, password: "[email protected]"}
  - {name: ks-master-02, address: 172.17.82.223, internalAddress: 172.17.82.223, user: root, password: "[email protected]"}
  - {name: ks-master-03, address: 172.17.82.224, internalAddress: 172.17.82.224, user: root, password: "[email protected]"}
  - {name: ks-worker-01, address: 172.17.82.225, internalAddress: 172.17.82.225, user: root, password: "[email protected]"}
  - {name: ks-worker-02, address: 172.17.82.226, internalAddress: 172.17.82.226, user: root, password: "[email protected]"}
  - {name: ks-worker-03, address: 172.17.82.227, internalAddress: 172.17.82.227, user: root, password: "[email protected]"}
  roleGroups:
    etcd:
    - ks-master-01
    - ks-master-02
    - ks-master-03
    control-plane:
    - ks-master-01
    - ks-master-02
    - ks-master-03
    worker:
    - ks-worker-01
    - ks-worker-02
    - ks-worker-03
  controlPlaneEndpoint:
    ## Internal loadbalancer for apiservers
    # internalLoadbalancer: haproxy
    domain: lb.kubesphere.local
    address: 172.17.82.219 # The VIP address
    port: 6443

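Note:

- Replace the value of controlPlaneEndpoint.address with your own VIP address.

- For more information about the parameters in this configuration file, see Multi-node Installation.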
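An empty controlPlaneEndpoint.address is easy to overlook before starting the installation. The sketch below prints the configured value so it can be compared with the VIP. `endpoint_address` is a hypothetical helper, and it assumes the file layout generated by `./kk create config`, where `address:` sits on its own line under `controlPlaneEndpoint:`.

```shell
#!/usr/bin/env bash
# Print the controlPlaneEndpoint address from a KubeKey config file.
# Prints an empty line when the address is still "".
# $1: path to the config file (for example config-sample.yaml)
endpoint_address() {
  awk '
    /^[ \t]*controlPlaneEndpoint:/ { in_block = 1; next }
    in_block && /^[ \t]*address:/ {
      sub(/#.*/, "")    # drop a trailing comment
      gsub(/"/, "")     # drop surrounding quotes
      print $2
      exit
    }
  ' "$1"
}

# Example:
#   [ "$(endpoint_address config-sample.yaml)" = "172.17.82.219" ] || echo "VIP not set"
```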

For more about KubeKey, see:

- KubeKey introduction: https://www.kubesphere.io/zh/docs/v3.4/installing-on-linux/introduction/kubekey/

- KubeKey on GitHub: https://github.com/kubesphere/kubekey

- Kubernetes versions supported by KubeKey: https://github.com/kubesphere/kubekey/blob/master/docs/kubernetes-versions.md

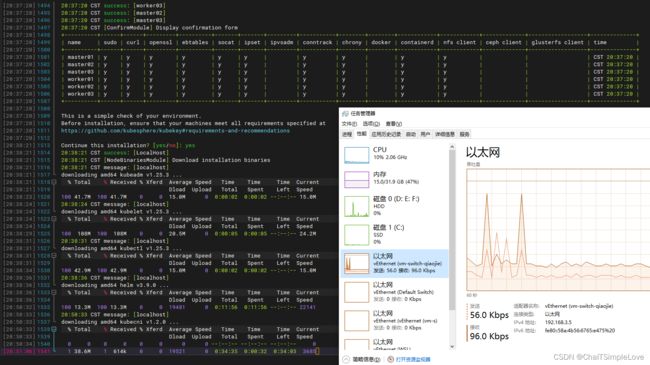

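Start installing KubeSphere and Kubernetes

Once config-sample.yaml is ready, run the installation command:

./kk create cluster -f config-sample.yaml

The installation takes a while, so wait patiently. Unless something unexpected happens, it normally succeeds.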

Verify the KubeSphere and Kubernetes installation

1. View the installation logs.

kubectl logs -n kubesphere-system $(kubectl get pod -n kubesphere-system -l 'app in (ks-install, ks-installer)' -o jsonpath='{.items[0].metadata.name}') -f

2. When you see the following output, the highly available cluster has been created successfully.

#####################################################

### Welcome to KubeSphere! ###

#####################################################

Console: http://172.17.82.222:30880

Account: admin

Password: P@88w0rd

NOTES:

1. After you log into the console, please check the

monitoring status of service components in

the "Cluster Management". If any service is not

ready, please wait patiently until all components

are up and running.

2. Please change the default password after login.

#####################################################

https://kubesphere.io 2023-xx-xx xx:xx:xx

#####################################################
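Besides the installer log, it is worth confirming that all nodes have joined and are Ready. This is a minimal sketch: `count_ready_nodes` is a hypothetical helper that counts rows whose STATUS column is exactly "Ready" in `kubectl get nodes --no-headers` output read from standard input.

```shell
#!/usr/bin/env bash
# Count nodes whose STATUS column is exactly "Ready".
# Input: output of `kubectl get nodes --no-headers` on standard input.
count_ready_nodes() {
  awk '$2 == "Ready" { n++ } END { print n + 0 }'
}

# Example (on a master node; this layout has 3 masters and 3 workers):
#   kubectl get nodes --no-headers | count_ready_nodes
```

For the cluster in this article, a result of 6 means every master and worker is Ready; nodes in states such as NotReady or Ready,SchedulingDisabled are not counted.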

That covers the detailed steps for creating a highly available Kubernetes cluster with Keepalived and HAProxy.

References:

- Set up an HA Kubernetes Cluster Using Keepalived and HAproxy