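Large Models in Practice 4: ChatGLM-6B Architecture and Core Code Walkthrough, a Comprehensive Introduction to the ChatGLM Model Architecture and Source Code

Hello everyone, I'm 微学AI. This article covers the model structure and design principles of ChatGLM-6B and walks through its core source code. Most of the code comes from: https://huggingface.co/THUDM/chatglm-6b/blob/main/modeling_chatglm.py

1. Introduction to the ChatGLM Model

ChatGLM-6B is an open-source large language model developed by a team at Tsinghua University. It supports question answering and dialogue in both Chinese and English. It has 6.2 billion parameters and is built on the General Language Model (GLM) architecture. With model quantization it can run on consumer-grade GPUs, needing as little as 6 GB of GPU memory. To optimize Chinese question answering and dialogue, ChatGLM-6B was trained on about 1T tokens of Chinese and English text, and was then aligned using supervised fine-tuning, feedback bootstrapping, and reinforcement learning from human feedback. As a result, this 6.2-billion-parameter model can already generate answers that match human preferences well. The weights of ChatGLM-6B are fully open for academic research. A successor, ChatGLM2-6B, has since been released with several upgrades and changes to the model.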

2. Design Ideas Behind the ChatGLM Model Structure

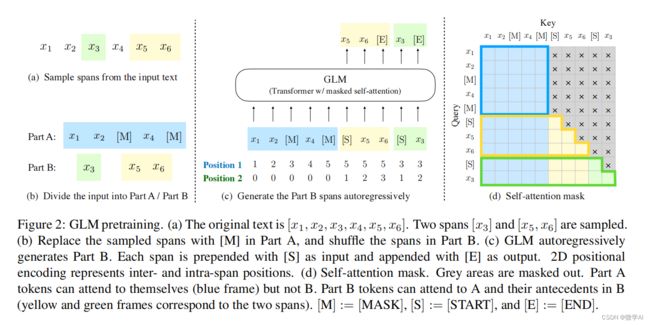

2.1 The autoregressive blank-infilling task

The GLM architecture underlying ChatGLM introduces an autoregressive blank-infilling task, illustrated in the figure. Given the original sequence $x_1, x_2, x_3, x_4, x_5, x_6$, the spans $x_3$ and $x_5, x_6$ are randomly masked, and the goal is to predict the masked content autoregressively from the unmasked tokens. As panel (c) shows, unlike an MLM setup, two kinds of position encodings make this autoregressive prediction possible: position 1 marks the position in the overall sequence, while position 2 marks the relative position inside each masked span. Panel (d) shows the self-attention mask. The unmasked tokens, which serve as the prompt, are not masked among themselves, so each of them sees the full context in both directions. For the first masked span, $x_5, x_6$, the attention pattern inside the span is lower-triangular, i.e. autoregressive. When the second span, $x_3$, is generated, $x_5$ and $x_6$ have already been predicted, so they are visible to $x_3$ as well.
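The two position sequences and the attention mask described above can be sketched in a few lines of plain Python. This is only an illustration, not code from the ChatGLM repository: the function name `glm_blank_infilling_layout` is hypothetical, and it assumes (following the figure in the GLM paper) that every generated span is fed to the model with one extra start token in front of it.

```python
def glm_blank_infilling_layout(part_a_len, spans):
    """Return (position1, position2, visible) for one training sample.

    part_a_len: length of the corrupted text (Part A), [MASK] tokens included.
    spans: list of (mask_position, span_len) in generation order, where
           mask_position is the 1-based index of the span's [MASK] in Part A.
    """
    position1 = list(range(1, part_a_len + 1))
    position2 = [0] * part_a_len
    for mask_position, span_len in spans:
        # Assumption: each span is fed as a start token plus its own tokens.
        n_inputs = span_len + 1
        position1 += [mask_position] * n_inputs
        position2 += list(range(1, n_inputs + 1))

    total = len(position1)
    # visible[i][j] is True when query i may attend to key j:
    # everyone sees Part A; Part B tokens also see earlier Part B tokens.
    visible = [
        [j < part_a_len or (i >= part_a_len and j <= i) for j in range(total)]
        for i in range(total)
    ]
    return position1, position2, visible


# Part A = x1 x2 [M] x4 [M]; spans are generated as (x5, x6) first, then x3.
pos1, pos2, visible = glm_blank_infilling_layout(5, [(5, 2), (3, 1)])
print(pos1)
print(pos2)
```

Position 1 repeats the index of the corresponding mask for every token of a span, while position 2 counts upward inside the span and stays 0 for the prompt.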

2.2 Choice of activation function in ChatGLM

ChatGLM-6B uses GELU as its activation function, which can be approximated as:

$$\mathrm{GELU}(x)\approx 0.5x\left(1+\tanh\left(\sqrt{\frac{2}{\pi}}\left(x+0.044715x^{3}\right)\right)\right)$$

    import torch

    @torch.jit.script
    def gelu_impl(x):
        # tanh approximation of GELU; 0.7978845608028654 is sqrt(2 / pi)
        return 0.5 * x * (1.0 + torch.tanh(0.7978845608028654 * x *
                                           (1.0 + 0.044715 * x * x)))

    def gelu(x):
        return gelu_impl(x)
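To get a feel for how close the tanh approximation is, the following standard-library sketch compares it with the exact erf-based definition of GELU on a small grid. The function names and the grid are choices made for this illustration only.

```python
import math

def gelu_exact(x):
    """Exact GELU: x * Phi(x), with Phi the standard normal CDF."""
    return 0.5 * x * (1.0 + math.erf(x / math.sqrt(2.0)))

def gelu_tanh(x):
    """tanh approximation, same formula as gelu_impl but on plain floats."""
    return 0.5 * x * (1.0 + math.tanh(math.sqrt(2.0 / math.pi) * (x + 0.044715 * x ** 3)))

# Compare both versions on a grid over [-4, 4].
xs = [i / 10.0 for i in range(-40, 41)]
max_abs_err = max(abs(gelu_exact(x) - gelu_tanh(x)) for x in xs)
print(f"max |exact - tanh approx| on [-4, 4]: {max_abs_err:.2e}")
```

Note that `x * (1.0 + 0.044715 * x * x)` in the PyTorch code is just a factored form of `x + 0.044715 * x ** 3`.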

ChatGLM2-6B (the upgraded version) instead uses the SwiGLU activation function. LLaMA also uses SwiGLU in its feed-forward layers. It is computed as follows:

$$\mathrm{FFN}_{\mathrm{SwiGLU}}(x,W,V,W_{2})=\mathrm{SwiGLU}(x,W,V)W_{2}$$

$$\mathrm{SwiGLU}(x,W,V)=\mathrm{Swish}_{\beta}(xW)\otimes xV$$

$$\mathrm{Swish}_{\beta}(x)=x\,\sigma(\beta x)$$

where $\sigma(x)$ is the sigmoid function and $\otimes$ denotes the element-wise product.
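The three formulas translate almost literally into code. The sketch below uses plain Python lists instead of tensors; the helper names (`matvec`, `ffn_swiglu`) and the tiny weight matrices are made up for illustration and are not taken from ChatGLM2-6B or LLaMA.

```python
import math

def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))

def swish(z, beta=1.0):
    """Swish_beta(z) = z * sigmoid(beta * z)."""
    return z * sigmoid(beta * z)

def matvec(x, W):
    """Row vector x (length n) times matrix W (n x m) -> list of length m."""
    return [sum(x[i] * W[i][j] for i in range(len(x))) for j in range(len(W[0]))]

def ffn_swiglu(x, W, V, W2, beta=1.0):
    gate = [swish(g, beta) for g in matvec(x, W)]   # Swish_beta(xW)
    value = matvec(x, V)                            # xV
    hidden = [g * v for g, v in zip(gate, value)]   # element-wise product
    return matvec(hidden, W2)                       # project back with W2

# Toy shapes: input dim 2, inner dim 3, output dim 2.
x = [1.0, -2.0]
W = [[0.5, 0.0, 1.0], [0.0, 0.5, 1.0]]
V = [[1.0, 1.0, 1.0], [0.0, 1.0, 2.0]]
W2 = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
y = ffn_swiglu(x, W, V, W2)
print(y)
```

Compared with a GELU feed-forward layer, the gated variant needs two input projections (`W` and `V`) instead of one.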

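3. The GLU Layer of the ChatGLM Model

ChatGLM defines a module named GLU. In general, a gated linear unit filters its input selectively by multiplying it with a "gate" value computed by another layer. The specific version used here, however, is simpler: it first expands the input hidden_states to 4 times the hidden dimension with a linear transform (self.dense_h_to_4h), applies the activation function (GELU), and then projects the result back to the original dimension (self.dense_4h_to_h). The forward pass shown below contains no explicit gating multiplication.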

    # skip_init is imported from torch.nn.utils; default_init is a small helper
    # defined in modeling_chatglm.py that simply calls the constructor.
    class GLU(torch.nn.Module):
        def __init__(self, hidden_size, inner_hidden_size=None,
                     layer_id=None, bias=True, activation_func=gelu, params_dtype=torch.float, empty_init=True):
            super(GLU, self).__init__()
            if empty_init:
                init_method = skip_init
            else:
                init_method = default_init
            self.layer_id = layer_id
            self.activation_func = activation_func

            # Project to 4h.
            self.hidden_size = hidden_size
            if inner_hidden_size is None:
                inner_hidden_size = 4 * hidden_size
            self.inner_hidden_size = inner_hidden_size
            self.dense_h_to_4h = init_method(
                torch.nn.Linear,
                self.hidden_size,
                self.inner_hidden_size,
                bias=bias,
                dtype=params_dtype,
            )
            # Project back to h.
            self.dense_4h_to_h = init_method(
                torch.nn.Linear,
                self.inner_hidden_size,
                self.hidden_size,
                bias=bias,
                dtype=params_dtype,
            )

        def forward(self, hidden_states):
            # [seq_len, batch, inner_hidden_size]
            intermediate_parallel = self.dense_h_to_4h(hidden_states)
            intermediate_parallel = self.activation_func(intermediate_parallel)
            # [seq_len, batch, hidden_size]
            output = self.dense_4h_to_h(intermediate_parallel)
            return output
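As a quick sanity check on the size of this module, the sketch below counts the parameters of the two linear layers. The helper `mlp_param_count` is hypothetical, and `hidden_size=4096` is an assumption taken from the published ChatGLM-6B configuration rather than from the code above.

```python
def mlp_param_count(hidden_size, inner_hidden_size=None, bias=True):
    """Parameters of dense_h_to_4h plus dense_4h_to_h."""
    if inner_hidden_size is None:
        inner_hidden_size = 4 * hidden_size  # same default as the GLU class
    up = hidden_size * inner_hidden_size + (inner_hidden_size if bias else 0)
    down = inner_hidden_size * hidden_size + (hidden_size if bias else 0)
    return up + down

# Assumed value: hidden_size = 4096 (not stated in this article).
params_per_block = mlp_param_count(4096)
print(f"{params_per_block:,} parameters per feed-forward module")
```

Under that assumption each feed-forward module holds roughly 134 million parameters, so across the stacked blocks this module accounts for a large share of the model's weights.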

4. Position Encoding in ChatGLM: RoPE

For position encoding, ChatGLM replaces the original absolute position encoding with Rotary Positional Embeddings (RoPE).

RoPE borrows ideas from complex numbers. Its starting point is to realize a relative position encoding by means of an absolute position encoding. The goal is to add absolute position information to $q$ and $k$ through the following operation:

$$\tilde{q}_{m}=f(q,m),\quad \tilde{k}_{n}=f(k,n)$$

After this operation, $\tilde{q}_{m}$ and $\tilde{k}_{n}$ carry the absolute position information of positions $m$ and $n$, respectively.

In the two-dimensional case, RoPE can finally be written with complex numbers as:

$$f(q,m)=R_{f}(q,m)e^{i\theta_{f}(q,m)}=|q|e^{i(\theta(q)+m\theta)}=qe^{im\theta}$$

By the geometric meaning of complex multiplication, this transform is a rotation of the corresponding vector, which is why it is called a "rotary" position encoding. Because the inner product of $\tilde{q}_{m}$ and $\tilde{k}_{n}$ then depends only on the angle difference $(m-n)\theta$, the attention score depends only on the relative position. The same transform can also be written in matrix form:

$$f(q,m)=\begin{pmatrix}\cos m\theta & -\sin m\theta\\ \sin m\theta & \cos m\theta\end{pmatrix}\begin{pmatrix}q_{0}\\ q_{1}\end{pmatrix}$$
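The relative-position property can be checked numerically with Python's built-in complex numbers. This is a minimal two-dimensional sketch; the names `rope_2d` and `score`, and the concrete values of `q`, `k` and `theta`, are arbitrary choices for the illustration.

```python
import cmath

def rope_2d(vec, position, theta):
    """Rotate a 2-D vector, stored as a complex number, by position * theta."""
    return vec * cmath.exp(1j * position * theta)

def score(q, k):
    """Real inner product of two 2-D vectors stored as complex numbers."""
    return (q * k.conjugate()).real

q, k, theta = complex(1.0, 2.0), complex(0.5, -1.5), 0.1

# Both pairs of positions have the same offset m - n = 2.
s_a = score(rope_2d(q, 5, theta), rope_2d(k, 3, theta))
s_b = score(rope_2d(q, 12, theta), rope_2d(k, 10, theta))
print(s_a, s_b)
```

The two scores agree up to floating-point error, although the absolute positions differ, and the rotation leaves the length of each vector unchanged.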

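ChatGLM defines a module named RotaryEmbedding to implement rotary embeddings. It captures the position of each token in the sequence.

The methods defined in RotaryEmbedding do the following:

1. __init__: the initializer. It takes the embedding dimension (dim), the base (base), the precision (precision) and other parameters, and registers the inverse frequencies (inv_freq) either as a parameter or as a buffer, depending on whether they are learnable (learnable).

2. _load_from_state_dict: a PyTorch internal hook used when loading parameters from a state dict. Here it is overridden with an empty body, so nothing is loaded for this module.

3. forward: the forward pass. It first determines the input sequence length (seq_len), then computes the frequencies (freqs) from seq_len and inv_freq. Two copies of freqs are concatenated into emb, which is converted to the appropriate dtype according to the precision. Finally the cosine and sine values are computed and cached for later use.

4. _apply: a PyTorch internal function. It applies the given operation (fn) to the cached cosine and sine values.

The computation in detail:

inv_freq: the inverse frequencies. The arithmetic sequence [0, 2, ..., dim-2] is divided by dim for normalization, and base is raised to the negative of each result, i.e. inv_freq_i = base^(-2i/dim).

freqs: the outer product of the time steps t (a vector of length seq_len with values from 0 to seq_len-1) and inv_freq.

emb: the concatenation of two copies of freqs.

cos_cached and sin_cached: the element-wise cosine and sine of emb.

By generating periodic signals (cosine and sine) from the position indices, the module builds an embedding that can capture relative position relationships, which is what produces the "rotation" effect. The code is as follows: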

    class RotaryEmbedding(torch.nn.Module):
        def __init__(self, dim, base=10000, precision=torch.half, learnable=False):
            super().__init__()
            inv_freq = 1. / (base ** (torch.arange(0, dim, 2).float() / dim))
            inv_freq = inv_freq.half()
            self.learnable = learnable
            if learnable:
                self.inv_freq = torch.nn.Parameter(inv_freq)
                self.max_seq_len_cached = None
            else:
                self.register_buffer('inv_freq', inv_freq)
                self.max_seq_len_cached = None
                self.cos_cached = None
                self.sin_cached = None
            self.precision = precision

        def _load_from_state_dict(self, state_dict, prefix, local_metadata, strict, missing_keys, unexpected_keys,
                                  error_msgs):
            pass

        def forward(self, x, seq_dim=1, seq_len=None):
            if seq_len is None:
                seq_len = x.shape[seq_dim]
            # Recompute the tables only when the cache is missing or too short.
            if self.max_seq_len_cached is None or (seq_len > self.max_seq_len_cached):
                self.max_seq_len_cached = None if self.learnable else seq_len
                t = torch.arange(seq_len, device=x.device, dtype=self.inv_freq.dtype)
                freqs = torch.einsum('i,j->ij', t, self.inv_freq)
                emb = torch.cat((freqs, freqs), dim=-1).to(x.device)
                if self.precision == torch.bfloat16:
                    emb = emb.float()

                # [sx, 1 (b * np), hn]
                cos_cached = emb.cos()[:, None, :]
                sin_cached = emb.sin()[:, None, :]
                if self.precision == torch.bfloat16:
                    cos_cached = cos_cached.bfloat16()
                    sin_cached = sin_cached.bfloat16()
                if self.learnable:
                    return cos_cached, sin_cached
                self.cos_cached, self.sin_cached = cos_cached, sin_cached
            return self.cos_cached[:seq_len, ...], self.sin_cached[:seq_len, ...]

        def _apply(self, fn):
            if self.cos_cached is not None:
                self.cos_cached = fn(self.cos_cached)
            if self.sin_cached is not None:
                self.sin_cached = fn(self.sin_cached)
            return super()._apply(fn)
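The same tables can be reproduced without PyTorch, which makes the arithmetic easier to follow. The sketch below is a plain-Python approximation under stated assumptions: `rotary_tables`, `rotate_half` and `apply_rotary` are illustrative names, half-precision casting is ignored, and the "rotate half" formulation is assumed to be how the cos/sin tables are consumed (the helper `apply_rotary_pos_emb_index` referenced later in the attention code is not reproduced in this article).

```python
import math

def rotary_tables(dim, seq_len, base=10000):
    """Plain-Python version of inv_freq, freqs, emb, cos and sin."""
    inv_freq = [1.0 / (base ** (i / dim)) for i in range(0, dim, 2)]
    cos_table, sin_table = [], []
    for t in range(seq_len):
        freqs = [t * f for f in inv_freq]   # one row of the outer product
        emb = freqs + freqs                 # two copies, like torch.cat((freqs, freqs))
        cos_table.append([math.cos(e) for e in emb])
        sin_table.append([math.sin(e) for e in emb])
    return inv_freq, cos_table, sin_table

def rotate_half(x):
    """Map (x1, x2) to (-x2, x1), where x1 and x2 are the two halves of x."""
    half = len(x) // 2
    return [-v for v in x[half:]] + x[:half]

def apply_rotary(x, cos_row, sin_row):
    """x * cos + rotate_half(x) * sin for a single position."""
    rotated = rotate_half(x)
    return [a * c + b * s for a, b, c, s in zip(x, rotated, cos_row, sin_row)]

inv_freq, cos_table, sin_table = rotary_tables(dim=4, seq_len=8)
q = [1.0, 2.0, 3.0, 4.0]
q_rot = apply_rotary(q, cos_table[3], sin_table[3])
print(inv_freq)
print(q_rot)
```

Because emb holds two identical copies of freqs, element i and element i + dim/2 of a vector share one angle and are rotated together as a pair.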

5. The Attention Layer of ChatGLM

ChatGLM uses the standard self-attention mechanism. The inputs are a set of queries $Q$, keys $K$ and values $V$, all obtained from the input sequence by linear transforms. The dot products between queries and keys are used as weights and normalized with a softmax, where $d$ is the dimension of each attention head:

$$\text{Attention}(Q,K,V)=\mathrm{softmax}\left(\frac{QK^{T}}{\sqrt{d}}\right)V$$
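As a reference point before reading the PyTorch implementation, here is the formula for a single head in plain Python. It is a didactic sketch on nested lists (no masking, no batching), and the function names are chosen for this example only.

```python
import math

def softmax(row):
    """Numerically stable softmax over a list of floats."""
    m = max(row)
    exps = [math.exp(v - m) for v in row]
    total = sum(exps)
    return [e / total for e in exps]

def attention(Q, K, V):
    """softmax(Q K^T / sqrt(d)) V for a single head."""
    d = len(Q[0])
    weights = [
        softmax([sum(qi * ki for qi, ki in zip(q, k)) / math.sqrt(d) for k in K])
        for q in Q
    ]
    out = [
        [sum(w * v[j] for w, v in zip(row, V)) for j in range(len(V[0]))]
        for row in weights
    ]
    return out, weights

Q = [[1.0, 0.0], [0.0, 1.0]]                # 2 queries
K = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]    # 3 keys
V = [[1.0, 0.0], [0.0, 1.0], [0.5, 0.5]]    # 3 values
out, weights = attention(Q, K, V)
print(weights)
print(out)
```

Each row of `weights` sums to 1, so every output row is a weighted average of the value vectors.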

In the code, the function attention_fn implements this self-attention mechanism:

    def attention_fn(
            self,
            query_layer,
            key_layer,
            value_layer,
            attention_mask,
            hidden_size_per_partition,
            layer_id,
            layer_past=None,
            scaling_attention_score=True,
            use_cache=False,
    ):
        # Prepend the cached keys and values from earlier decoding steps.
        if layer_past is not None:
            past_key, past_value = layer_past[0], layer_past[1]
            key_layer = torch.cat((past_key, key_layer), dim=0)
            value_layer = torch.cat((past_value, value_layer), dim=0)

        # seqlen, batch, num_attention_heads, hidden_size_per_attention_head
        seq_len, b, nh, hidden_size = key_layer.shape

        if use_cache:
            present = (key_layer, value_layer)
        else:
            present = None

        query_key_layer_scaling_coeff = float(layer_id + 1)
        if scaling_attention_score:
            query_layer = query_layer / (math.sqrt(hidden_size) * query_key_layer_scaling_coeff)

        # [b, np, sq, sk]
        output_size = (query_layer.size(1), query_layer.size(2), query_layer.size(0), key_layer.size(0))

        # [sq, b, np, hn] -> [sq, b * np, hn]
        query_layer = query_layer.view(output_size[2], output_size[0] * output_size[1], -1)
        # [sk, b, np, hn] -> [sk, b * np, hn]
        key_layer = key_layer.view(output_size[3], output_size[0] * output_size[1], -1)

        matmul_result = torch.zeros(
            1, 1, 1,
            dtype=query_layer.dtype,
            device=query_layer.device,
        )

        matmul_result = torch.baddbmm(
            matmul_result,
            query_layer.transpose(0, 1),  # [b * np, sq, hn]
            key_layer.transpose(0, 1).transpose(1, 2),  # [b * np, hn, sk]
            beta=0.0,
            alpha=1.0,
        )

        # change view to [b, np, sq, sk]
        attention_scores = matmul_result.view(*output_size)

        if self.scale_mask_softmax:
            self.scale_mask_softmax.scale = query_key_layer_scaling_coeff
            attention_probs = self.scale_mask_softmax(attention_scores, attention_mask.contiguous())
        else:
            if not (attention_mask == 0).all():
                # if auto-regressive, skip
                attention_scores.masked_fill_(attention_mask, -10000.0)
            dtype = attention_scores.dtype
            attention_scores = attention_scores.float()
            attention_scores = attention_scores * query_key_layer_scaling_coeff

            attention_probs = F.softmax(attention_scores, dim=-1)

            attention_probs = attention_probs.type(dtype)

        # context layer shape: [b, np, sq, hn]
        output_size = (value_layer.size(1), value_layer.size(2), query_layer.size(0), value_layer.size(3))

        # change view [sk, b * np, hn]
        value_layer = value_layer.view(value_layer.size(0), output_size[0] * output_size[1], -1)

        # change view [b * np, sq, sk]
        attention_probs = attention_probs.view(output_size[0] * output_size[1], output_size[2], -1)

        # Multiply the attention probabilities by value to get the output context
        context_layer = torch.bmm(attention_probs, value_layer.transpose(0, 1))

        # change view [b, np, sq, hn]
        context_layer = context_layer.view(*output_size)

        # [b, np, sq, hn] --> [sq, b, np, hn]
        context_layer = context_layer.permute(2, 0, 1, 3).contiguous()

        # [sq, b, np, hn] --> [sq, b, hp]
        new_context_layer_shape = context_layer.size()[:-2] + (hidden_size_per_partition,)
        context_layer = context_layer.view(*new_context_layer_shape)

        outputs = (context_layer, present, attention_probs)

        return outputs
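One detail in attention_fn that is easy to miss is the layer-dependent coefficient `layer_id + 1`: the query is divided by it before the matrix product, and the scores are multiplied by it again after being cast to float32. Mathematically the two steps cancel. Keeping the half-precision products small is a plausible motivation, though that reading is an interpretation rather than something the source states. The plain-Python sketch below (hypothetical function names, no masking) checks the equivalence numerically.

```python
import math

def softmax(row):
    m = max(row)
    exps = [math.exp(v - m) for v in row]
    total = sum(exps)
    return [e / total for e in exps]

def dot(a, b):
    return sum(x * y for x, y in zip(a, b))

def standard_probs(q, keys):
    """softmax(q . k / sqrt(d)) with the usual scaling."""
    d = len(q)
    return softmax([dot(q, k) / math.sqrt(d) for k in keys])

def layer_scaled_probs(q, keys, layer_id):
    """Same result, computed the way attention_fn orders the operations."""
    d = len(q)
    coeff = float(layer_id + 1)
    q_scaled = [v / (math.sqrt(d) * coeff) for v in q]   # shrink before the matmul
    scores = [dot(q_scaled, k) for k in keys]
    scores = [s * coeff for s in scores]                 # undo the shrink before softmax
    return softmax(scores)

q = [3.0, -1.0, 2.0, 0.5]
keys = [[1.0, 0.0, 2.0, 1.0], [0.5, 0.5, -1.0, 2.0], [2.0, 1.0, 0.0, 0.0]]
p_std = standard_probs(q, keys)
p_scaled = layer_scaled_probs(q, keys, layer_id=27)
print(p_std)
print(p_scaled)
```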

Next is the SelfAttention module, which calls attention_fn to perform the attention computation. The code is as follows:

    class SelfAttention(torch.nn.Module):
        def __init__(self, hidden_size, num_attention_heads,
                     layer_id, hidden_size_per_attention_head=None, bias=True,
                     params_dtype=torch.float, position_encoding_2d=True, empty_init=True):
            if empty_init:
                init_method = skip_init
            else:
                init_method = default_init
            super(SelfAttention, self).__init__()

            self.layer_id = layer_id
            self.hidden_size = hidden_size
            self.hidden_size_per_partition = hidden_size
            self.num_attention_heads = num_attention_heads
            self.num_attention_heads_per_partition = num_attention_heads
            self.position_encoding_2d = position_encoding_2d
            # With 2D position encoding each half of a head gets its own rotary embedding.
            self.rotary_emb = RotaryEmbedding(
                self.hidden_size // (self.num_attention_heads * 2)
                if position_encoding_2d
                else self.hidden_size // self.num_attention_heads,
                base=10000,
                precision=torch.half,
                learnable=False,
            )

            self.scale_mask_softmax = None

            if hidden_size_per_attention_head is None:
                self.hidden_size_per_attention_head = hidden_size // num_attention_heads
            else:
                self.hidden_size_per_attention_head = hidden_size_per_attention_head

            self.inner_hidden_size = num_attention_heads * self.hidden_size_per_attention_head

            # Strided linear layer.
            self.query_key_value = init_method(
                torch.nn.Linear,
                hidden_size,
                3 * self.inner_hidden_size,
                bias=bias,
                dtype=params_dtype,
            )

            self.dense = init_method(
                torch.nn.Linear,
                self.inner_hidden_size,
                hidden_size,
                bias=bias,
                dtype=params_dtype,
            )

        @staticmethod
        def attention_mask_func(attention_scores, attention_mask):
            attention_scores.masked_fill_(attention_mask, -10000.0)
            return attention_scores

        def split_tensor_along_last_dim(self, tensor, num_partitions,
                                        contiguous_split_chunks=False):
            # Get the size and dimension.
            last_dim = tensor.dim() - 1
            last_dim_size = tensor.size()[last_dim] // num_partitions
            # Split.
            tensor_list = torch.split(tensor, last_dim_size, dim=last_dim)
            # Note: torch.split does not create contiguous tensors by default.
            if contiguous_split_chunks:
                return tuple(chunk.contiguous() for chunk in tensor_list)

            return tensor_list

        def forward(
                self,
                hidden_states: torch.Tensor,
                position_ids,
                attention_mask: torch.Tensor,
                layer_id,
                layer_past: Optional[Tuple[torch.Tensor, torch.Tensor]] = None,
                use_cache: bool = False,
                output_attentions: bool = False,
        ):
            # [seq_len, batch, 3 * hidden_size]
            mixed_raw_layer = self.query_key_value(hidden_states)

            # [seq_len, batch, 3 * hidden_size] --> [seq_len, batch, num_attention_heads, 3 * hidden_size_per_attention_head]
            new_tensor_shape = mixed_raw_layer.size()[:-1] + (
                self.num_attention_heads_per_partition,
                3 * self.hidden_size_per_attention_head,
            )
            mixed_raw_layer = mixed_raw_layer.view(*new_tensor_shape)

            # [seq_len, batch, num_attention_heads, hidden_size_per_attention_head]
            (query_layer, key_layer, value_layer) = self.split_tensor_along_last_dim(mixed_raw_layer, 3)

            if self.position_encoding_2d:
                q1, q2 = query_layer.chunk(2, dim=(query_layer.ndim - 1))
                k1, k2 = key_layer.chunk(2, dim=(key_layer.ndim - 1))
                cos, sin = self.rotary_emb(q1, seq_len=position_ids.max() + 1)
                position_ids, block_position_ids = position_ids[:, 0, :].transpose(0, 1).contiguous(), \
                    position_ids[:, 1, :].transpose(0, 1).contiguous()
                q1, k1 = apply_rotary_pos_emb_index(q1, k1, cos, sin, position_ids)
                q2, k2 = apply_rotary_pos_emb_index(q2, k2, cos, sin, block_position_ids)
                query_layer = torch.concat([q1, q2], dim=(q1.ndim - 1))
                key_layer = torch.concat([k1, k2], dim=(k1.ndim - 1))
            else:
                position_ids = position_ids.transpose(0, 1)
                cos, sin = self.rotary_emb(value_layer, seq_len=position_ids.max() + 1)
                # [seq_len, batch, num_attention_heads, hidden_size_per_attention_head]
                query_layer, key_layer = apply_rotary_pos_emb_index(query_layer, key_layer, cos, sin, position_ids)

            # [seq_len, batch, hidden_size]
            context_layer, present, attention_probs = attention_fn(
                self=self,
                query_layer=query_layer,
                key_layer=key_layer,
                value_layer=value_layer,
                attention_mask=attention_mask,
                hidden_size_per_partition=self.hidden_size_per_partition,
                layer_id=layer_id,
                layer_past=layer_past,
                use_cache=use_cache
            )

            output = self.dense(context_layer)

            outputs = (output, present)

            if output_attentions:
                outputs += (attention_probs,)

            return outputs  # output, present, attention_probs
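The reshape-then-split at the start of forward implies a particular layout of the fused query_key_value projection: the columns are grouped per head, and inside each head the query, key and value slices follow one another. The sketch below illustrates that layout with plain lists. `fused_qkv_index` and `split_fused` are hypothetical helpers written for this example, not functions from the source file.

```python
def fused_qkv_index(head, part, dim, head_dim):
    """Column of the fused projection holding (head, part, dim).

    part: 0 = query, 1 = key, 2 = value.
    """
    return head * 3 * head_dim + part * head_dim + dim

def split_fused(row, num_heads, head_dim):
    """Mimic view(..., num_heads, 3 * head_dim) followed by a 3-way split."""
    q, k, v = [], [], []
    for h in range(num_heads):
        chunk = row[h * 3 * head_dim:(h + 1) * 3 * head_dim]
        q.append(chunk[:head_dim])
        k.append(chunk[head_dim:2 * head_dim])
        v.append(chunk[2 * head_dim:])
    return q, k, v

# num_heads = 2, head_dim = 2 -> the fused output has 3 * 2 * 2 = 12 columns.
row = list(range(12))
q, k, v = split_fused(row, num_heads=2, head_dim=2)
print(q, k, v)
```

So the fused output is not laid out as "all queries, then all keys, then all values"; anyone re-packing the weights by hand needs to respect the per-head interleaving.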

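6. The GLMBlock of ChatGLM

GLMBlock is a variant of the Transformer block and consists of the following parts:

1. Layer Norm: a common normalization method in deep neural networks. It normalizes each sample along the feature dimension so that the output has zero mean and unit variance across features. This speeds up convergence and helps with vanishing and exploding gradients.

2. Self Attention: the core component of the Transformer. Given an input sequence, self-attention computes, for each element, a weight vector based on its relationship to the other elements, and uses it to form a weighted average over the sequence. This helps the model capture long-range dependencies. In GLMBlock, Self Attention is followed by a residual connection, which lets information flow directly from earlier to later layers and helps against vanishing gradients in deep networks.

3. Layer Normalization: GLMBlock applies a second Layer Norm between Self Attention and the GLU.

4. GLU: in general, a gated linear unit combines a linear transform, which extracts features from the input, with a gating mechanism, which controls the flow of information. Note that the GLU class shown in section 3 consists of two linear layers with a GELU in between and has no explicit gate. The GLU is likewise followed by a residual connection.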

    class GLMBlock(torch.nn.Module):
        def __init__(
                self,
                hidden_size,
                num_attention_heads,
                layernorm_epsilon,
                layer_id,
                inner_hidden_size=None,
                hidden_size_per_attention_head=None,
                layernorm=LayerNorm,
                use_bias=True,
                params_dtype=torch.float,
                num_layers=28,
                position_encoding_2d=True,
                empty_init=True
        ):
            super(GLMBlock, self).__init__()

            self.layer_id = layer_id

            # LayerNorm applied to the block input
            self.input_layernorm = layernorm(hidden_size, eps=layernorm_epsilon)

            # Whether to use 2D position encoding
            self.position_encoding_2d = position_encoding_2d

            # Self-attention layer
            self.attention = SelfAttention(
                hidden_size,
                num_attention_heads,
                layer_id,
                hidden_size_per_attention_head=hidden_size_per_attention_head,
                bias=use_bias,
                params_dtype=params_dtype,
                position_encoding_2d=self.position_encoding_2d,
                empty_init=empty_init
            )

            # LayerNorm applied after the attention
            self.post_attention_layernorm = layernorm(hidden_size, eps=layernorm_epsilon)

            self.num_layers = num_layers

            # MLP layer
            self.mlp = GLU(
                hidden_size,
                inner_hidden_size=inner_hidden_size,
                bias=use_bias,
                layer_id=layer_id,
                params_dtype=params_dtype,
                empty_init=empty_init
            )

        def forward(
                self,
                hidden_states: torch.Tensor,
                position_ids,
                attention_mask: torch.Tensor,
                layer_id,
                layer_past: Optional[Tuple[torch.Tensor, torch.Tensor]] = None,
                use_cache: bool = False,
                output_attentions: bool = False,
        ):
            # Apply Layer Norm to the input
            # [seq_len, batch, hidden_size]
            attention_input = self.input_layernorm(hidden_states)

            # Self-attention
            attention_outputs = self.attention(
                attention_input,
                position_ids,
                attention_mask=attention_mask,
                layer_id=layer_id,
                layer_past=layer_past,
                use_cache=use_cache,
                output_attentions=output_attentions
            )

            attention_output = attention_outputs[0]

            outputs = attention_outputs[1:]

            # Residual connection: scaled (layer-normed) input plus attention output
            alpha = (2 * self.num_layers) ** 0.5
            hidden_states = attention_input * alpha + attention_output

            # Layer Norm
            mlp_input = self.post_attention_layernorm(hidden_states)

            # Feed-forward projection
            mlp_output = self.mlp(mlp_input)

            # Residual connection: scaled MLP input plus MLP output
            output = mlp_input * alpha + mlp_output

            if use_cache:
                outputs = (output,) + outputs
            else:
                outputs = (output,) + outputs[1:]

            return outputs  # hidden_states, present, attentions
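The residual connections in GLMBlock differ from the textbook `x + sublayer(x)` in two ways visible in the code: the skip path uses the layer-normed input, and it is scaled by `alpha = sqrt(2 * num_layers)`. The following sketch isolates that arithmetic on plain lists. `scaled_residual` is a name invented for this illustration, and `num_layers=28` is simply the default argument of GLMBlock.

```python
import math

def scaled_residual(normed_input, sublayer_output, num_layers):
    """normed_input * alpha + sublayer_output, with alpha = sqrt(2 * num_layers)."""
    alpha = math.sqrt(2 * num_layers)
    return [x * alpha + y for x, y in zip(normed_input, sublayer_output)]

alpha = math.sqrt(2 * 28)
out = scaled_residual([1.0, -1.0], [0.5, 0.5], num_layers=28)
print(f"alpha = {alpha:.4f}")
print(out)
```

With 28 layers the skip path is weighted about 7.5 times more heavily than the sublayer output, a scaled residual in the style of DeepNet-like schemes for stabilizing deep Transformers.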

7. The Pre-trained Model Base Class of ChatGLM

The base class ChatGLMPreTrainedModel provides the helpers that build the attention mask and the position ids. Below are its get_masks and get_position_ids functions:

    def get_masks(self, input_ids, device):
        batch_size, seq_length = input_ids.shape
        # context_lengths: for each sample, the index of the bos token,
        # i.e. the length of the context (prompt) part
        context_lengths = [seq.tolist().index(self.config.bos_token_id) for seq in input_ids]
        # Build a causal mask: 1 on and below the diagonal, 0 above it
        attention_mask = torch.ones((batch_size, seq_length, seq_length), device=device)
        attention_mask.tril_()
        # Make attention over the prefix (context) part bidirectional
        for i, context_length in enumerate(context_lengths):
            attention_mask[i, :, :context_length] = 1
        attention_mask.unsqueeze_(1)
        # Convert to a boolean mask where True means "do not attend"
        attention_mask = (attention_mask < 0.5).bool()

        return attention_mask
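For a single sample, the mask produced by get_masks can be written as a one-line rule, which makes its shape easy to visualize. The sketch below is a plain-Python restatement under the same convention (True means masked out); `prefix_lm_mask` is an illustrative name, not a function from the repository.

```python
def prefix_lm_mask(seq_length, context_length):
    """masked[i][j] is True when query i must NOT attend to key j.

    A key is hidden only if it lies in the future (j > i) and outside
    the bidirectional context part (j >= context_length).
    """
    return [
        [(j > i) and (j >= context_length) for j in range(seq_length)]
        for i in range(seq_length)
    ]

mask = prefix_lm_mask(seq_length=5, context_length=3)
for row in mask:
    print("".join("x" if masked else "." for masked in row))
```

The first `context_length` columns are never masked, so the prompt is fully visible to every position, while the generated part keeps the usual causal triangle.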

    def get_position_ids(self, input_ids, mask_positions, device, use_gmasks=None):
        batch_size, seq_length = input_ids.shape
        if use_gmasks is None:
            use_gmasks = [False] * batch_size
        # context_lengths: for each sample, the index of the bos token,
        # i.e. the length of the context (prompt) part
        context_lengths = [seq.tolist().index(self.config.bos_token_id) for seq in input_ids]
        # 2D position encoding
        if self.position_encoding_2d:
            # [0, 1, 2, ..., seq_length - 1]
            position_ids = torch.arange(seq_length, dtype=torch.long, device=device).unsqueeze(0).repeat(batch_size, 1)
            # Set the position id of every position after the original input
            # to the position id of [MASK] or [gMASK]
            # (see the position encoding discussion in the attention layer)
            for i, context_length in enumerate(context_lengths):
                position_ids[i, context_length:] = mask_positions[i]
            # Block positions are 0 for the original input and count upward
            # over the positions to be generated, e.g. [0,0,0,0,1,2,3,4,5]
            block_position_ids = [torch.cat((
                torch.zeros(context_length, dtype=torch.long, device=device),
                torch.arange(seq_length - context_length, dtype=torch.long, device=device) + 1
            )) for context_length in context_lengths]
            block_position_ids = torch.stack(block_position_ids, dim=0)
            # Stack position_ids and block_position_ids so they can be passed on together;
            # the attention layer splits them into two parts again
            position_ids = torch.stack((position_ids, block_position_ids), dim=1)
        else:
            position_ids = torch.arange(seq_length, dtype=torch.long, device=device).unsqueeze(0).repeat(batch_size, 1)
            for i, context_length in enumerate(context_lengths):
                if not use_gmasks[i]:
                    position_ids[i, context_length:] = mask_positions[i]

        return position_ids
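The 2D branch of get_position_ids is easiest to understand on one concrete sample. The sketch below recomputes both id sequences with plain lists; the function name and the example numbers (a 7-token sequence whose bos token sits at index 4, with the mask token at index 3) are assumptions chosen for illustration.

```python
def position_ids_2d(seq_length, context_length, mask_position):
    """Return (position_ids, block_position_ids) for a single sample."""
    # Positions count normally over the context, then stay at the mask position.
    position_ids = list(range(seq_length))
    for j in range(context_length, seq_length):
        position_ids[j] = mask_position
    # Block positions are 0 over the context and 1, 2, 3, ... afterwards.
    block_position_ids = [0] * context_length + list(range(1, seq_length - context_length + 1))
    return position_ids, block_position_ids

pos, block = position_ids_2d(seq_length=7, context_length=4, mask_position=3)
print(pos)
print(block)
```

This mirrors the position 1 / position 2 scheme from section 2.1: the first sequence says which blank a generated token belongs to, and the second says where it sits inside that blank.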

8. The Final Model: ChatGLMModel

ChatGLMModel combines all of the components and blocks described above into the complete model. The code is as follows:

    class ChatGLMModel(ChatGLMPreTrainedModel):
        def __init__(self, config: ChatGLMConfig, empty_init=True):
            super().__init__(config)
            if empty_init:
                init_method = skip_init
            else:
                init_method = default_init
            self.max_sequence_length = config.max_sequence_length
            self.hidden_size = config.hidden_size
            self.params_dtype = torch.half
            self.num_attention_heads = config.num_attention_heads
            self.vocab_size = config.vocab_size
            self.num_layers = config.num_layers
            self.layernorm_epsilon = config.layernorm_epsilon
            self.inner_hidden_size = config.inner_hidden_size
            self.hidden_size_per_attention_head = self.hidden_size // self.num_attention_heads
            self.position_encoding_2d = config.position_encoding_2d
            self.pre_seq_len = config.pre_seq_len
            self.prefix_projection = config.prefix_projection

            # Initialize the embedding layer
            self.word_embeddings = init_method(
                torch.nn.Embedding,
                num_embeddings=self.vocab_size, embedding_dim=self.hidden_size,
                dtype=self.params_dtype
            )
            self.gradient_checkpointing = False

            def get_layer(layer_id):
                return GLMBlock(
                    self.hidden_size,
                    self.num_attention_heads,
                    self.layernorm_epsilon,
                    layer_id,
                    inner_hidden_size=self.inner_hidden_size,
                    hidden_size_per_attention_head=self.hidden_size_per_attention_head,
                    layernorm=LayerNorm,
                    use_bias=True,
                    params_dtype=self.params_dtype,
                    position_encoding_2d=self.position_encoding_2d,
                    empty_init=empty_init
                )

            # Stack the GLMBlocks
            self.layers = torch.nn.ModuleList(
                [get_layer(layer_id) for layer_id in range(self.num_layers)]
            )

            # Final Layer Norm
            self.final_layernorm = LayerNorm(self.hidden_size, eps=self.layernorm_epsilon)

        def get_input_embeddings(self):
            return self.word_embeddings

        def set_input_embeddings(self, new_embeddings: torch.Tensor):
            self.word_embeddings = new_embeddings

        @add_start_docstrings_to_model_forward(CHATGLM_6B_INPUTS_DOCSTRING.format("batch_size, sequence_length"))
        @add_code_sample_docstrings(
            checkpoint=_CHECKPOINT_FOR_DOC,
            output_type=BaseModelOutputWithPastAndCrossAttentions,
            config_class=_CONFIG_FOR_DOC,
        )
        def forward(
                self,
                input_ids: Optional[torch.LongTensor] = None,
                position_ids: Optional[torch.LongTensor] = None,
                attention_mask: Optional[torch.Tensor] = None,
                past_key_values: Optional[Tuple[Tuple[torch.Tensor, torch.Tensor], ...]] = None,
                inputs_embeds: Optional[torch.LongTensor] = None,
                use_cache: Optional[bool] = None,
                output_attentions: Optional[bool] = None,
                output_hidden_states: Optional[bool] = None,
                return_dict: Optional[bool] = None,
        ) -> Union[Tuple[torch.Tensor, ...], BaseModelOutputWithPast]:

            output_attentions = output_attentions if output_attentions is not None else self.config.output_attentions
            output_hidden_states = (
                output_hidden_states if output_hidden_states is not None else self.config.output_hidden_states
            )
            use_cache = use_cache if use_cache is not None else self.config.use_cache
            return_dict = return_dict if return_dict is not None else self.config.use_return_dict

            if self.gradient_checkpointing and self.training:
                if use_cache:
                    logger.warning_once(
                        "`use_cache=True` is incompatible with gradient checkpointing. Setting `use_cache=False`..."
                    )
                    use_cache = False

            if input_ids is not None and inputs_embeds is not None:
                raise ValueError("You cannot specify both input_ids and inputs_embeds at the same time")
            elif input_ids is not None:
                batch_size, seq_length = input_ids.shape[:2]
            elif inputs_embeds is not None:
                batch_size, seq_length = inputs_embeds.shape[:2]
            else:
                raise ValueError("You have to specify either input_ids or inputs_embeds")
            # (end) input/output and argument handling; this part can be skipped

            # Embedding layer
            if inputs_embeds is None:
                inputs_embeds = self.word_embeddings(input_ids)

            if past_key_values is None:
                past_key_values = tuple([None] * len(self.layers))

                # Build the attention mask; get_masks is inherited from ChatGLMPreTrainedModel
                if attention_mask is None:
                    attention_mask = self.get_masks(
                        input_ids,
                        device=input_ids.device
                    )

                if position_ids is None:
                    MASK, gMASK = self.config.mask_token_id, self.config.gmask_token_id
                    seqs = input_ids.tolist()

                    # Record whether input_ids contains a mask token and where it is:
                    # mask_positions holds the mask position of each sample,
                    # use_gmasks records whether gMASK was used
                    mask_positions, use_gmasks = [], []
                    for seq in seqs:
                        mask_token = gMASK if gMASK in seq else MASK
                        use_gmask = mask_token == gMASK
                        mask_positions.append(seq.index(mask_token))
                        use_gmasks.append(use_gmask)

                    # Build position_ids; get_position_ids is inherited from ChatGLMPreTrainedModel
                    position_ids = self.get_position_ids(
                        input_ids,
                        mask_positions=mask_positions,
                        device=input_ids.device,
                        use_gmasks=use_gmasks
                    )

            # [seq_len, batch, hidden_size]
            hidden_states = inputs_embeds.transpose(0, 1)

            presents = () if use_cache else None
            all_self_attentions = () if output_attentions else None
            all_hidden_states = () if output_hidden_states else None

            if attention_mask is None:
                attention_mask = torch.zeros(1, 1, device=input_ids.device).bool()
            else:
                attention_mask = attention_mask.to(hidden_states.device)

            # Forward pass through the stacked blocks
            for i, layer in enumerate(self.layers):

                if output_hidden_states:
                    all_hidden_states = all_hidden_states + (hidden_states,)
                layer_past = past_key_values[i]

                if self.gradient_checkpointing and self.training:
                    layer_ret = torch.utils.checkpoint.checkpoint(
                        layer,
                        hidden_states,
                        position_ids,
                        attention_mask,
                        torch.tensor(i),
                        layer_past,
                        use_cache,
                        output_attentions
                    )
                else:
                    layer_ret = layer(
                        hidden_states,
                        position_ids=position_ids,
                        attention_mask=attention_mask,
                        layer_id=torch.tensor(i),
                        layer_past=layer_past,
                        use_cache=use_cache,
                        output_attentions=output_attentions
                    )

                hidden_states = layer_ret[0]

                if use_cache:
                    presents = presents + (layer_ret[1],)

                if output_attentions:
                    all_self_attentions = all_self_attentions + (layer_ret[2 if use_cache else 1],)

            # Final Layer Norm
            hidden_states = self.final_layernorm(hidden_states)

            if output_hidden_states:
                all_hidden_states = all_hidden_states + (hidden_states,)

            if not return_dict:
                return tuple(v for v in [hidden_states, presents, all_hidden_states, all_self_attentions] if v is not None)

            return BaseModelOutputWithPast(
                last_hidden_state=hidden_states,
                past_key_values=presents,
                hidden_states=all_hidden_states,
                attentions=all_self_attentions,
            )
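The small loop in forward that locates the mask token is worth isolating, because it decides which position id the generated tokens inherit. The sketch below reproduces its logic on plain lists. The token ids (100, 101, 104) are hypothetical placeholders rather than the real vocabulary ids, and `find_mask_positions` is a name introduced for this example.

```python
def find_mask_positions(seqs, mask_id, gmask_id):
    """For each sequence, prefer gMASK if present, otherwise fall back to MASK."""
    mask_positions, use_gmasks = [], []
    for seq in seqs:
        mask_token = gmask_id if gmask_id in seq else mask_id
        use_gmasks.append(mask_token == gmask_id)
        # list.index raises ValueError if the sequence contains neither token.
        mask_positions.append(seq.index(mask_token))
    return mask_positions, use_gmasks

# Hypothetical token ids, for illustration only.
MASK_ID, GMASK_ID, BOS_ID = 100, 101, 104
seqs = [
    [5, 6, 7, GMASK_ID, BOS_ID],   # prompt ending in gMASK + bos
    [5, MASK_ID, 7, 8, BOS_ID],    # prompt with a MASK in the middle
]
positions, gmasks = find_mask_positions(seqs, MASK_ID, GMASK_ID)
print(positions, gmasks)
```

As in the original loop, an input that contains neither mask token fails with a ValueError, so prompts are expected to be built by the model's own tokenizer.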

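That concludes this walkthrough of the ChatGLM source code and the principles behind it. I hope it has given you a solid overview of the ChatGLM model architecture. For more details, keep following 微学AI.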