Ensemble Learning

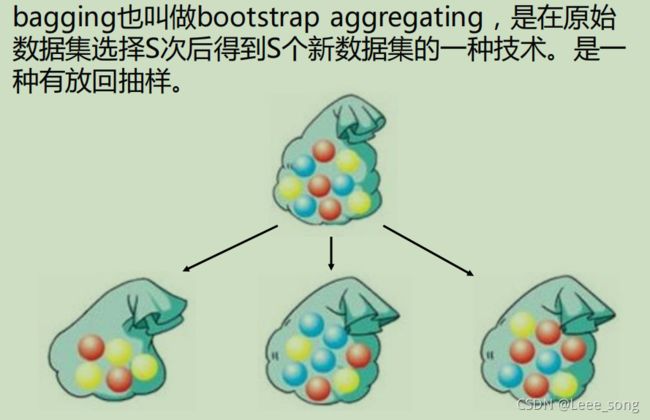

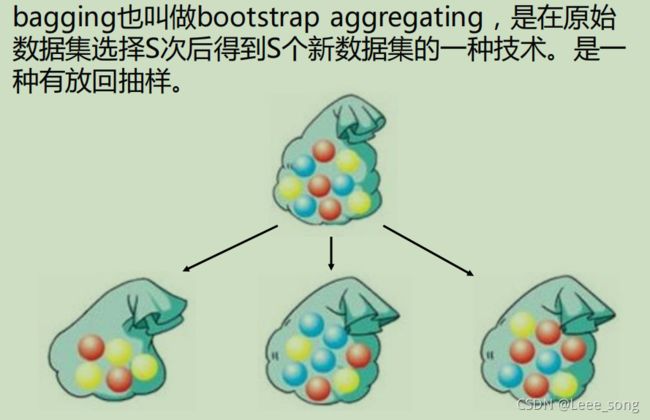

bagging:

from sklearn import neighbors
from sklearn import datasets
from sklearn.ensemble import BaggingClassifier
from sklearn import tree
from sklearn.model_selection import train_test_split
import numpy as np
import matplotlib.pyplot as plt

def plot(model):
    # Build a grid that covers the data range, padded by 1 on each side
    x_min, x_max = x_data[:, 0].min() - 1, x_data[:, 0].max() + 1
    y_min, y_max = x_data[:, 1].min() - 1, x_data[:, 1].max() + 1
    xx, yy = np.meshgrid(np.arange(x_min, x_max, 0.02),
                         np.arange(y_min, y_max, 0.02))
    # Predict a class for every grid point and draw the decision regions
    z = model.predict(np.c_[xx.ravel(), yy.ravel()])
    z = z.reshape(xx.shape)
    plt.contourf(xx, yy, z)

# Load iris and keep only the first two features so the data can be drawn in 2D
iris = datasets.load_iris()
x_data = iris.data[:,:2]
y_data = iris.target
plt.scatter(x_data[:, 0], x_data[:, 1], c=y_data)
x_train,x_test,y_train,y_test = train_test_split(x_data, y_data)

# Baseline: a single KNN classifier
knn = neighbors.KNeighborsClassifier()
knn.fit(x_train, y_train)
plot(knn)
plt.scatter(x_data[:, 0], x_data[:, 1], c=y_data)
plt.show()
# Accuracy on the held-out test set
knn.score(x_test, y_test)

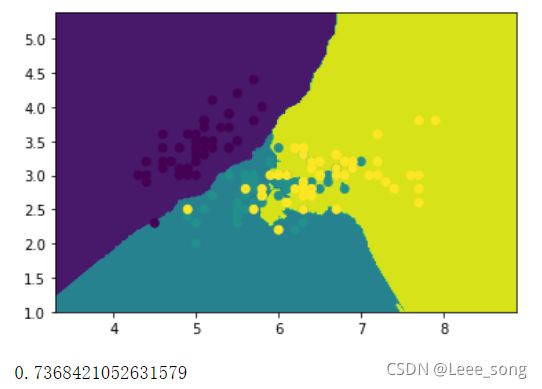

# Bagging: 100 KNN classifiers, each trained on a bootstrap sample of the training set
bagging_knn = BaggingClassifier(knn, n_estimators=100)
bagging_knn.fit(x_train, y_train)
plot(bagging_knn)
plt.scatter(x_data[:, 0], x_data[:, 1], c=y_data)
plt.show()
bagging_knn.score(x_test, y_test)

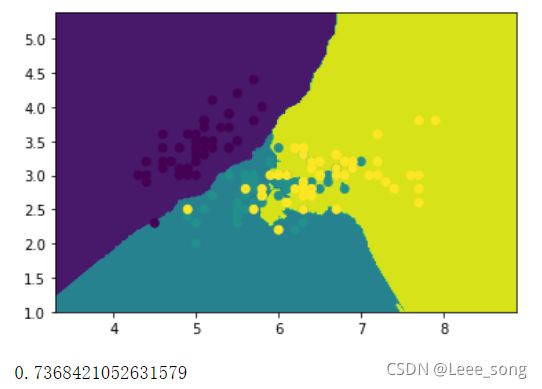

Plain KNN classifier:

Bagging + KNN classifier:

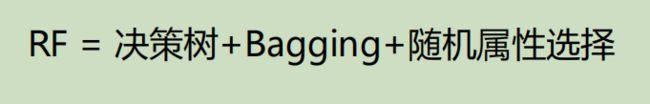
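What `BaggingClassifier` does above can be approximated by hand: draw bootstrap samples of the training set, fit one KNN per sample, and combine the predictions. The sketch below is only an illustration under stated assumptions. The helper name `manual_bagging_predict` is hypothetical, and it uses a hard majority vote, which is a simplification (scikit-learn averages predicted probabilities when the base estimator provides them).

```python
import numpy as np
from sklearn.datasets import load_iris
from sklearn.model_selection import train_test_split
from sklearn.neighbors import KNeighborsClassifier


def manual_bagging_predict(x_train, y_train, x_test, n_estimators=25, seed=0):
    """Fit KNN models on bootstrap samples and combine them by majority vote.

    Hypothetical helper for illustration; not part of scikit-learn.
    """
    rng = np.random.default_rng(seed)
    n_samples = len(x_train)
    votes = []
    for _ in range(n_estimators):
        # Bootstrap: draw n_samples indices with replacement
        idx = rng.integers(0, n_samples, size=n_samples)
        model = KNeighborsClassifier().fit(x_train[idx], y_train[idx])
        votes.append(model.predict(x_test))
    votes = np.array(votes)  # shape: (n_estimators, n_test)
    n_classes = int(y_train.max()) + 1
    # Count votes per class for each test point; result shape: (n_classes, n_test)
    counts = np.apply_along_axis(np.bincount, 0, votes, minlength=n_classes)
    return counts.argmax(axis=0)


iris = load_iris()
x_data, y_data = iris.data[:, :2], iris.target
x_train, x_test, y_train, y_test = train_test_split(x_data, y_data, random_state=0)

y_pred = manual_bagging_predict(x_train, y_train, x_test)
bagging_accuracy = (y_pred == y_test).mean()
print("manual bagging accuracy:", bagging_accuracy)
```

Because every model sees a slightly different resampling of the data, the vote tends to smooth out the jagged decision boundary of a single KNN.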

Random forest (RF):

from sklearn import tree
from sklearn.model_selection import train_test_split
from sklearn.ensemble import RandomForestClassifier
import numpy as np
import matplotlib.pyplot as plt

def plot(model):
    # Build a grid that covers the data range, padded by 1 on each side
    x_min, x_max = x_data[:, 0].min() - 1, x_data[:, 0].max() + 1
    y_min, y_max = x_data[:, 1].min() - 1, x_data[:, 1].max() + 1
    xx, yy = np.meshgrid(np.arange(x_min, x_max, 0.02),
                         np.arange(y_min, y_max, 0.02))
    # Predict a class for every grid point and draw the decision regions
    z = model.predict(np.c_[xx.ravel(), yy.ravel()])
    z = z.reshape(xx.shape)
    plt.contourf(xx, yy, z)
    # Overlay the test points on the decision regions
    plt.scatter(x_test[:, 0], x_test[:, 1], c=y_test)
    plt.show()

# Last column is the label, all other columns are features
data = np.genfromtxt("LR-testSet2.txt", delimiter=",")
x_data = data[:,:-1]
y_data = data[:,-1]
x_train,x_test,y_train,y_test = train_test_split(x_data, y_data, test_size = 0.5)

# Baseline: a single decision tree
dtree = tree.DecisionTreeClassifier()
dtree.fit(x_train, y_train)
plot(dtree)
dtree.score(x_test, y_test)

# Random forest with 50 trees
RF = RandomForestClassifier(n_estimators=50)
RF.fit(x_train, y_train)
plot(RF)
RF.score(x_test, y_test)

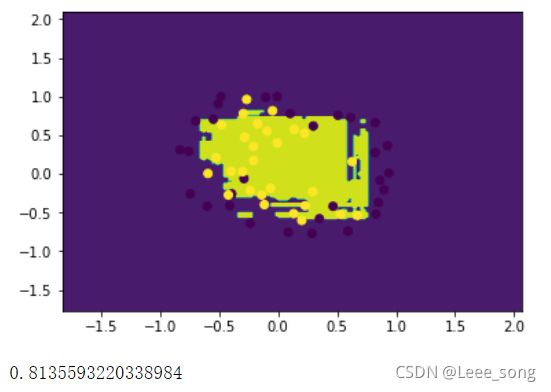

Single decision tree result:

Random forest result:

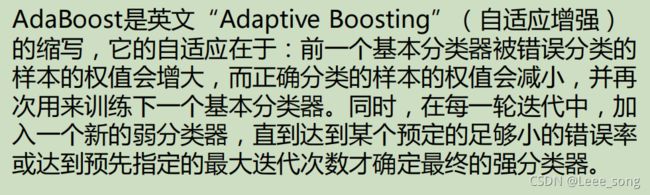
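The tree-versus-forest comparison can be reproduced without the data file. The sketch below swaps in `make_moons` as stand-in data (an assumption, since `LR-testSet2.txt` is not included here) and also reads the out-of-bag estimate through `oob_score=True`, which scores each sample using only the trees that did not see it during training.

```python
from sklearn.datasets import make_moons
from sklearn.ensemble import RandomForestClassifier
from sklearn.model_selection import train_test_split
from sklearn.tree import DecisionTreeClassifier

# Stand-in data (assumption): the notes load LR-testSet2.txt instead
x_data, y_data = make_moons(n_samples=400, noise=0.3, random_state=0)
x_train, x_test, y_train, y_test = train_test_split(
    x_data, y_data, test_size=0.5, random_state=0
)

# Baseline: one fully grown decision tree
dtree = DecisionTreeClassifier(random_state=0).fit(x_train, y_train)

# Random forest with 50 trees; oob_score=True also computes the out-of-bag accuracy
rf = RandomForestClassifier(n_estimators=50, oob_score=True, random_state=0)
rf.fit(x_train, y_train)

tree_acc = dtree.score(x_test, y_test)
rf_acc = rf.score(x_test, y_test)
oob_acc = rf.oob_score_

print("single tree test accuracy:", tree_acc)
print("random forest test accuracy:", rf_acc)
print("random forest out-of-bag accuracy:", oob_acc)
```

The out-of-bag accuracy needs no separate test set, so it is a convenient sanity check when data is scarce.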

boosting:

import numpy as np
import matplotlib.pyplot as plt
from sklearn import tree
from sklearn.ensemble import AdaBoostClassifier
from sklearn.tree import DecisionTreeClassifier
from sklearn.datasets import make_gaussian_quantiles
from sklearn.metrics import classification_report

# Two 2D Gaussian clouds, each split into two classes by quantile
x1, y1 = make_gaussian_quantiles(n_samples=500, n_features=2,n_classes=2)
x2, y2 = make_gaussian_quantiles(mean=(3, 3), n_samples=500, n_features=2, n_classes=2)
# Stack the two clouds; the labels of the second cloud are flipped (0 <-> 1)
x_data = np.concatenate((x1, x2))
y_data = np.concatenate((y1, -y2+1))
plt.scatter(x_data[:, 0], x_data[:, 1], c=y_data)
plt.show()

# Baseline: a single decision tree of depth 3
model = tree.DecisionTreeClassifier(max_depth=3)
model.fit(x_data, y_data)

# Draw the decision regions on a grid covering the data range
x_min, x_max = x_data[:, 0].min() - 1, x_data[:, 0].max() + 1
y_min, y_max = x_data[:, 1].min() - 1, x_data[:, 1].max() + 1
xx, yy = np.meshgrid(np.arange(x_min, x_max, 0.02),np.arange(y_min, y_max, 0.02))
z = model.predict(np.c_[xx.ravel(), yy.ravel()])
z = z.reshape(xx.shape)
plt.contourf(xx, yy, z)
plt.scatter(x_data[:, 0], x_data[:, 1], c=y_data)
plt.show()

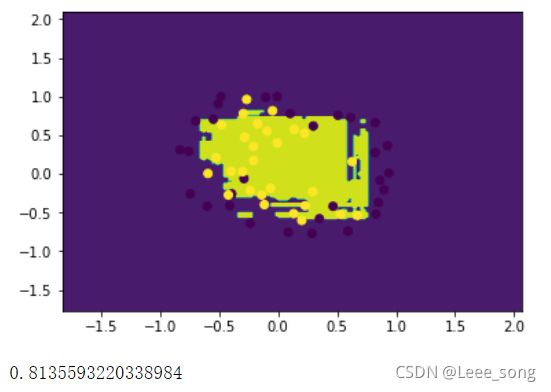

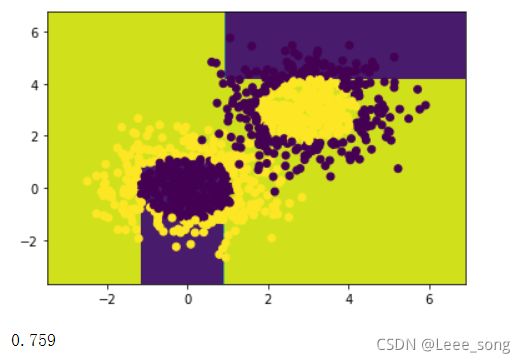

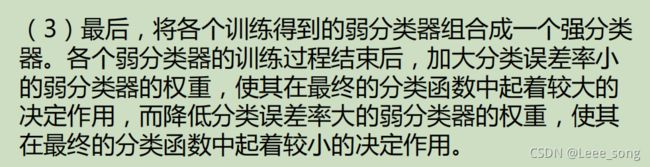

# AdaBoost: 10 boosted decision trees of depth 3
model = AdaBoostClassifier(DecisionTreeClassifier(max_depth=3),n_estimators=10)
model.fit(x_data, y_data)

# Draw the decision regions on a grid covering the data range
x_min, x_max = x_data[:, 0].min() - 1, x_data[:, 0].max() + 1
y_min, y_max = x_data[:, 1].min() - 1, x_data[:, 1].max() + 1
xx, yy = np.meshgrid(np.arange(x_min, x_max, 0.02),np.arange(y_min, y_max, 0.02))
z = model.predict(np.c_[xx.ravel(), yy.ravel()])
z = z.reshape(xx.shape)
plt.contourf(xx, yy, z)
plt.scatter(x_data[:, 0], x_data[:, 1], c=y_data)
plt.show()
# Accuracy on the training data (no held-out set is used here)
model.score(x_data,y_data)

Original data:

Decision tree model:

AdaBoost model:

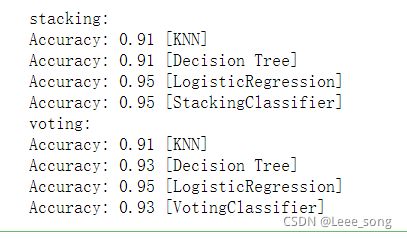

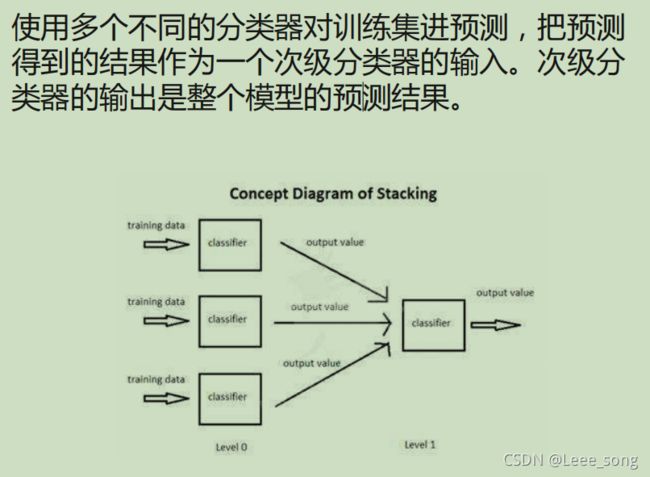

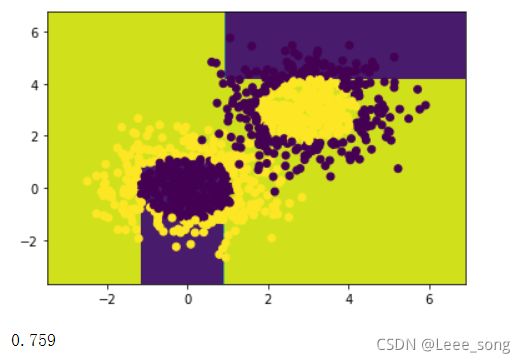
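To see how boosting improves on the single tree round by round, `AdaBoostClassifier.staged_score` yields the accuracy after each boosting iteration. The sketch below rebuilds the same kind of data; the `random_state` values are an addition for reproducibility, and the accuracy is measured on the training data, as in the notes.

```python
import numpy as np
from sklearn.datasets import make_gaussian_quantiles
from sklearn.ensemble import AdaBoostClassifier
from sklearn.tree import DecisionTreeClassifier

# Same construction as in the notes, with fixed seeds (an assumption) for repeatability
x1, y1 = make_gaussian_quantiles(n_samples=500, n_features=2, n_classes=2, random_state=0)
x2, y2 = make_gaussian_quantiles(mean=(3, 3), n_samples=500, n_features=2,
                                 n_classes=2, random_state=1)
x_data = np.concatenate((x1, x2))
y_data = np.concatenate((y1, 1 - y2))  # flip the labels of the second cloud

model = AdaBoostClassifier(DecisionTreeClassifier(max_depth=3),
                           n_estimators=10, random_state=0)
model.fit(x_data, y_data)

# staged_score yields the ensemble accuracy after each boosting round
staged = list(model.staged_score(x_data, y_data))
for i, acc in enumerate(staged, start=1):
    print("after %2d trees: accuracy %.3f" % (i, acc))
```

The first entry corresponds to a single depth-3 tree, so the list shows directly how much the later, reweighted trees add.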

stacking:

from sklearn import datasets
from sklearn import model_selection
from sklearn.linear_model import LogisticRegression
from sklearn.neighbors import KNeighborsClassifier
from sklearn.tree import DecisionTreeClassifier
from mlxtend.classifier import StackingClassifier
from sklearn.ensemble import VotingClassifier
import numpy as np

# Load iris and keep two features (columns 1 and 2)
iris = datasets.load_iris()
x_data, y_data = iris.data[:, 1:3], iris.target

# Three base classifiers
clf1 = KNeighborsClassifier(n_neighbors=1)
clf2 = DecisionTreeClassifier()
clf3 = LogisticRegression()
# Meta-classifier that combines the base classifiers' outputs
lr = LogisticRegression()

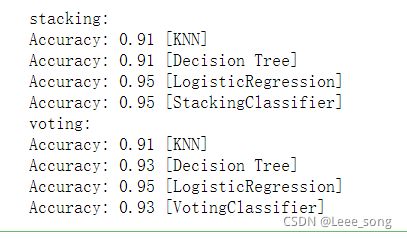

print("stacking:")
# Stacking: the meta-classifier lr learns from the predictions of clf1..clf3
sclf1 = StackingClassifier(classifiers=[clf1, clf2, clf3],meta_classifier=lr)
for clf,label in zip([clf1, clf2, clf3, sclf1],['KNN','Decision Tree','LogisticRegression','StackingClassifier']):
    # 3-fold cross-validated accuracy for each model
    scores = model_selection.cross_val_score(clf, x_data, y_data, cv=3, scoring='accuracy')
    print("Accuracy: %0.2f [%s]" % (scores.mean(), label))

print("voting:")
# Voting: the three classifiers vote on the final label
sclf2 = VotingClassifier([('knn',clf1),('dtree',clf2), ('lr',clf3)])
for clf, label in zip([clf1, clf2, clf3, sclf2],
                      ['KNN','Decision Tree','LogisticRegression','VotingClassifier']):
    scores = model_selection.cross_val_score(clf, x_data, y_data, cv=3, scoring='accuracy')
    print("Accuracy: %0.2f [%s]" % (scores.mean(), label))
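If `mlxtend` is not installed, scikit-learn ships its own `StackingClassifier` in `sklearn.ensemble`. The sketch below is an alternative, not the code used in the notes: it takes named `(name, estimator)` pairs and a `final_estimator`, and it trains the meta-classifier on cross-validated predictions of the base models. The `max_iter=1000` and `random_state=0` settings are assumptions added to avoid convergence warnings and make the run repeatable.

```python
from sklearn.datasets import load_iris
from sklearn.ensemble import StackingClassifier
from sklearn.linear_model import LogisticRegression
from sklearn.model_selection import cross_val_score
from sklearn.neighbors import KNeighborsClassifier
from sklearn.tree import DecisionTreeClassifier

iris = load_iris()
x_data, y_data = iris.data[:, 1:3], iris.target

# Same three base learners as in the notes, given names as scikit-learn requires
base_learners = [
    ("knn", KNeighborsClassifier(n_neighbors=1)),
    ("dtree", DecisionTreeClassifier(random_state=0)),
    ("lr", LogisticRegression(max_iter=1000)),
]

# The final estimator is fit on out-of-fold predictions of the base learners (cv=3)
stack = StackingClassifier(
    estimators=base_learners,
    final_estimator=LogisticRegression(max_iter=1000),
    cv=3,
)

scores = cross_val_score(stack, x_data, y_data, cv=3, scoring="accuracy")
print("Accuracy: %0.2f [sklearn StackingClassifier]" % scores.mean())
```

Training the meta-classifier on out-of-fold predictions keeps it from simply trusting a base model that memorizes the training set, such as 1-nearest-neighbor.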