【spark客户端】Spark SQL CLI详解:怎么执行sql文件、注释怎么写,支持的文件路径协议、交互式模式使用细节

文章目录

- 一. Spark SQL Command Line Options(命令行参数)

- 二. The hiverc File

-

- 1. without the -i

- 2. .hiverc 介绍

- 三. 支持的路径协议

- 四. 支持的注释类型

- 五. Spark SQL CLI交互式命令

- 六. Examples

-

- 1. running a query from the command line

- 2. setting Hive configuration variables

- 3. setting Hive configuration variables and using it in the SQL query

- 4. setting Hive variables substitution

- 5. dumping data out from a query into a file using silent mode

- 6. running a script non-interactively:

- 7. running an initialization script before entering interactive mode

- 8. entering interactive mode

- 9. entering interactive mode with escape ; in comment:

- 七. 非交互模式用法小结

The Spark SQL CLI is a convenient interactive command tool to run the Hive metastore service and execute SQL queries input from the command line. Note that the Spark SQL CLI cannot talk to the Thrift JDBC server.

spark SQL Cli 用于运行hive metastore服务和通过命令行执行sql查询。注意,Spark SQL CLI不能和Thrift JDBC server进行通讯。

一. Spark SQL Command Line Options(命令行参数)

执行./bin/spark-sql --help 获取所有的执行参数

CLI options:

-d,--define 用于HIVE命令的变量替换 比如 -d A=B 或者 --define A=B

--database <databasename> 指定使用哪个数据库

-e <quoted-query-string> 执行sql语句,可以以;号分割的多个sql,比如:spark-sql -e "show databases; select 1,'a'"

-f <filename> 执行sql文件,文件中可以存在以;号分割的多个sql,比如:spark-sql -f spark-test.sql

-H,--help Print help information

--hiveconf Use value for given property

--hivevar Variable substitution to apply to Hive commands. e.g. --hivevar A=B

-i <filename> Initialization SQL file:初始化sql文件

-S,--silent Silent mode in interactive shell

-v,--verbose Verbose mode (echo executed SQL to the console)

二. The hiverc File

1. without the -i

When invoked without the -i, the Spark SQL CLI will attempt to load $HIVE_HOME/bin/.hiverc and $HOME/.hiverc as initialization files.

在没有使用 -i 选项的情况下调用 Spark SQL CLI 时,它将尝试加载 $HIVE_HOME/bin/.hiverc 和 $HOME/.hiverc 作为初始化文件。

这里介绍下.hiverc

2. .hiverc 介绍

在Apache Hive中,.hiverc 文件是一个用于配置Hive客户端的启动脚本。它允许你在启动Hive命令行客户端(hive)时执行一些自定义操作,如设置环境变量、加载自定义函数、定义别名等。

.hiverc 文件通常位于Hive的安装目录下的 bin 子目录。它是一个可执行的脚本文件,当你启动Hive客户端时,会自动执行其中的命令。

以下是一些你可以在 .hiverc 文件中执行的操作:

- 设置环境变量:你可以在 .hiverc 文件中设置环境变量,以便在Hive会话期间使用。例如,你可以设置 HADOOP_HOME 或其他环境变量。

- 加载自定义函数:如果你有自定义的Hive函数,你可以在 .hiverc 文件中使用 ADD JAR 命令加载这些函数。

- 定义别名:你可以定义Hive命令的别名,以简化常用命令的输入。例如,你可以定义一个别名来运行一组复杂的查询。

- 其他初始化操作:你还可以执行其他自定义的初始化操作,以适应特定的需求和工作流程。

如下示例:

#!/bin/bash

# 设置环境变量

export HADOOP_HOME=/path/to/hadoop

export HIVE_HOME=/path/to/hive

# 加载自定义函数

ADD JAR /path/to/custom_functions.jar;

# 定义别名

CREATE ALIAS myquery AS 'SELECT * FROM mytable WHERE condition';

注意,.hiverc 文件中的命令将在每次启动Hive客户端时执行,因此你可以将其中的常用操作自动化,以提高工作效率。

三. 支持的路径协议

Spark SQL CLI supports running SQL from initialization script file(-i) or normal SQL file(-f), If path url don’t have a scheme component, the path will be handled as local file. For example: /path/to/spark-sql-cli.sql equals to file:///path/to/spark-sql-cli.sql.

User also can use Hadoop supported filesystems such as s3://[mys3bucket]/path/to/spark-sql-cli.sql or hdfs://[namenode]:[port]/path/to/spark-sql-cli.sql.

Spark SQL CLI 支持通过

- (-i)初始化脚本

- (-f)普通SQL文件

两种方式执行sql,如果路径没有协议,则被处理为本地文件。

用户也可以使用hadoop支持的s3和hdfs协议的路径,比如说:s3://

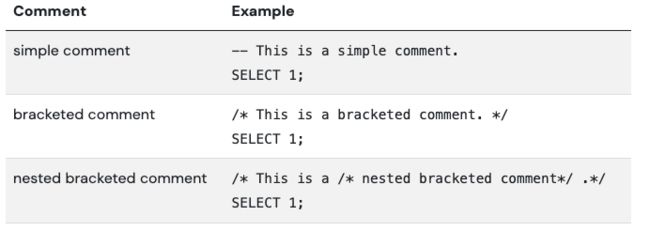

四. 支持的注释类型

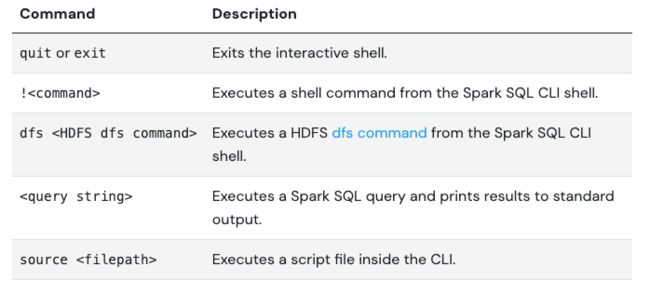

五. Spark SQL CLI交互式命令

当spark-sql没有使用-e 或 -f (sql字符和sql文件)时,命令将进入交互模式,使用;号终止命令。

注意:

- 该CLI仅在分号 ; 出现在行尾且没有被反斜杠 \; 转义时,才将其用于终止命令。

- 分号 ; 是唯一用于终止命令的方式。如果用户输入 SELECT 1 并按回车键,控制台将等待进一步输入。

- 如果用户在同一行中输入多个命令,例如 SELECT 1; SELECT 2;,则命令 SELECT 1 和 SELECT 2 将分别被执行。

- 如果分号 ; 出现在SQL语句中(而不是行尾),则它没有特殊含义:继续解释 SQL 语句的执行

再看一个例子:

/* This is a comment contains ;

*/ SELECT 1;

如果分号 ; 出现在行尾,它将终止SQL语句。上面的例子将被切分为:/* This is a comment contains 和*/ SELECT 1 两个sql执行,此时执行就会报错。

六. Examples

1. running a query from the command line

./bin/spark-sql -e 'SELECT COL FROM TBL'

2. setting Hive configuration variables

./bin/spark-sql -e 'SELECT COL FROM TBL' --hiveconf hive.exec.scratchdir=/home/my/hive_scratch

3. setting Hive configuration variables and using it in the SQL query

./bin/spark-sql -e 'SELECT ${hiveconf:aaa}' --hiveconf aaa=bbb --hiveconf hive.exec.scratchdir=/home/my/hive_scratch

spark-sql> SELECT ${aaa};

bbb

4. setting Hive variables substitution

./bin/spark-sql --hivevar aaa=bbb --define ccc=ddd

spark-sql> SELECT ${aaa}, ${ccc};

bbb ddd

5. dumping data out from a query into a file using silent mode

./bin/spark-sql -S -e 'SELECT COL FROM TBL' > result.txt

6. running a script non-interactively:

[user_excute@poc11v ~/clients]$ spark-sql -f spark-test.sql

Java HotSpot(TM) 64-Bit Server VM warning: Using the ParNew young collector with the Serial old collector is deprecated and will likely be removed in a future release

Warning: Master yarn-client is deprecated since 2.0. Please use master "yarn" with specified deploy mode instead.

23/10/26 15:02:23 WARN SparkConf: The configuration key 'spark.scheduler.executorTaskBlacklistTime' has been deprecated as of Spark 2.1.0 and may be removed in the future. Please use the new blacklisting options, spark.blacklist.*

23/10/26 15:02:23 WARN SparkConf: The configuration key 'spark.akka.frameSize' has been deprecated as of Spark 1.6 and may be removed in the future. Please use the new key 'spark.rpc.message.maxSize' instead.

23/10/26 15:02:24 WARN NativeCodeLoader: Unable to load native-hadoop library for your platform... using builtin-java classes where applicable

23/10/26 15:02:30 INFO Client: Requesting a new application from cluster with 1 NodeManagers

23/10/26 15:02:30 INFO Client: Verifying our application has not requested more than the maximum memory capability of the cluster (8192 MB per container)

23/10/26 15:02:30 INFO Client: Will allocate AM container, with 2432 MB memory including 384 MB overhead

23/10/26 15:02:30 INFO Client: Setting up container launch context for our AM

23/10/26 15:02:30 INFO Client: Setting up the launch environment for our AM container

23/10/26 15:02:30 INFO Client: Preparing resources for our AM container

23/10/26 15:02:31 WARN Client: Neither spark.yarn.jars nor spark.yarn.archive is set, falling back to uploading libraries under SPARK_HOME.

23/10/26 15:02:35 INFO Client: Uploading resource file:/tmp/spark-abdc8f1b-5f01-42c5-820e-9213f4895e69/__spark_libs__927845140246214662.zip -> hdfs://xmanhdfs3/home/user_excute/spark/cache/.sparkStaging/application_1698291202798_0002/__spark_libs__927845140246214662.zip

23/10/26 15:02:38 INFO Client: Uploading resource file:/tmp/spark-abdc8f1b-5f01-42c5-820e-9213f4895e69/__spark_conf__3928501941049491781.zip -> hdfs://xmanhdfs3/home/user_excute/spark/cache/.sparkStaging/application_1698291202798_0002/__spark_conf__.zip

23/10/26 15:02:40 INFO Client: Submitting application application_1698291202798_0002 to ResourceManager

23/10/26 15:02:41 INFO Client: Application report for application_1698291202798_0002 (state: ACCEPTED)

23/10/26 15:02:41 INFO Client:

client token: N/A

diagnostics: AM container is launched, waiting for AM container to Register with RM

ApplicationMaster host: N/A

ApplicationMaster RPC port: -1

queue: root.user_excute

start time: 1698303760575

final status: UNDEFINED

tracking URL: http://xxx:8888/proxy/application_1698291202798_0002/

user: user_excute

23/10/26 15:02:42 INFO Client: Application report for application_1698291202798_0002 (state: ACCEPTED)

23/10/26 15:02:43 INFO Client: Application report for application_1698291202798_0002 (state: ACCEPTED)

23/10/26 15:02:44 INFO Client: Application report for application_1698291202798_0002 (state: ACCEPTED)

23/10/26 15:02:45 INFO Client: Application report for application_1698291202798_0002 (state: ACCEPTED)

23/10/26 15:02:46 INFO Client: Application report for application_1698291202798_0002 (state: RUNNING)

23/10/26 15:02:46 INFO Client:

client token: N/A

diagnostics: N/A

ApplicationMaster host: xxx.xxx.xxx.xxx

ApplicationMaster RPC port: -1

queue: root.user_excute

start time: 1698303760575

final status: UNDEFINED

tracking URL: http://xxx:8888/proxy/application_1698291202798_0002/

user: user_excute

default

Time taken: 1.274 seconds, Fetched 1 row(s)

1

Time taken: 9.996 seconds, Fetched 1 row(s)

7. running an initialization script before entering interactive mode

./bin/spark-sql -i /path/to/spark-sql-init.sql

8. entering interactive mode

./bin/spark-sql

spark-sql> SELECT 1;

1

spark-sql> -- This is a simple comment.

spark-sql> SELECT 1;

1

9. entering interactive mode with escape ; in comment:

./bin/spark-sql

spark-sql>/* This is a comment contains \\;

> It won't be terminated by \\; */

> SELECT 1;

1

七. 非交互模式用法小结

命令语法:

spark-sql -f file_path

- -f:指定文件参数

- file_path:支持本地路径,也支持hdfs路径,例如:hdfs://[namenode]:[port]/path/to/spark-sql-cli.sql.

sql文件内容的书写格式:

- 各个sql以;号分割;

- 注释的语法:见上

参考:

https://spark.apache.org/docs/latest/sql-distributed-sql-engine-spark-sql-cli.html