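Parsing data with BeautifulSoup and scraping the Douban TOP250 movie chart

Scrape the Douban TOP250 movie chart with Python in 5 minutes

Steps demonstrated in this video:

- Fetch the web pages with requests

- Parse the data with BeautifulSoup

- Write the data out to Excel with pandas

For detailed usage of these three libraries, see my other video courses.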

import requests

from bs4 import BeautifulSoup

import pandas as pd


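# 1. Download the HTML of all 10 pages

# Build the list of page offsets (0, 25, ..., 225)

page_indexs = range(0, 250, 25)

# Two equivalent ways to display the offsets as a list

[*range(0, 250, 25)]

list(range(0, 250, 25))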
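Each list page holds 25 films, so the `start` query parameter advances in steps of 25. As a small sketch of how the offsets map to page URLs (`build_page_urls` and `BASE_URL` are hypothetical names used only for illustration, not part of the original code):

```python
BASE_URL = "https://movie.douban.com/top250"


def build_page_urls(total=250, page_size=25):
    """Return one list-page URL per offset: 0, 25, ..., 225."""
    return [
        f"{BASE_URL}?start={offset}&filter="
        for offset in range(0, total, page_size)
    ]


urls = build_page_urls()
print(len(urls))   # number of pages
print(urls[0])     # first page
print(urls[-1])    # last page
```

Building the URLs up front keeps the paging arithmetic separate from the network code, which makes it easy to check before sending any requests.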

def download_all_htmls():
    """
    Download the HTML of every list page for later parsing.
    """
    htmls = []
    for idx in page_indexs:
        url = f"https://movie.douban.com/top250?start={idx}&filter="
        print("craw html:", url)
        # Send a browser-like User-Agent; the default requests one may be rejected
        r = requests.get(url,headers={"User-Agent":"Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36 (KHTML, like Gecko)"})
        if r.status_code != 200:
            raise Exception(f"error: status {r.status_code} for {url}")
        htmls.append(r.text)
    return htmls

htmls = download_all_htmls()

craw html: https://movie.douban.com/top250?start=0&filter=

craw html: https://movie.douban.com/top250?start=25&filter=

craw html: https://movie.douban.com/top250?start=50&filter=

craw html: https://movie.douban.com/top250?start=75&filter=

craw html: https://movie.douban.com/top250?start=100&filter=

craw html: https://movie.douban.com/top250?start=125&filter=

craw html: https://movie.douban.com/top250?start=150&filter=

craw html: https://movie.douban.com/top250?start=175&filter=

craw html: https://movie.douban.com/top250?start=200&filter=

craw html: https://movie.douban.com/top250?start=225&filter=
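The download loop above fires its ten requests back to back. A slightly more defensive variant might add a timeout and a pause between requests. The sketch below is one way to do that, not the method used in the video: `fetch_pages`, its `delay` and `timeout` defaults, and the injectable `get` parameter are all assumptions made for illustration. Note that `raise_for_status()` only raises on 4xx/5xx responses, which is a little looser than the `status_code != 200` check above.

```python
import time

import requests

HEADERS = {
    "User-Agent": "Mozilla/5.0 (Windows NT 10.0; Win64; x64) "
                  "AppleWebKit/537.36 (KHTML, like Gecko)"
}


def fetch_pages(urls, get=requests.get, delay=1.0, timeout=10):
    """Fetch each URL in turn, pausing `delay` seconds between requests.

    `get` is injectable so the function can be tried without network access.
    """
    pages = []
    for i, url in enumerate(urls):
        if i:  # no need to wait before the first request
            time.sleep(delay)
        response = get(url, headers=HEADERS, timeout=timeout)
        response.raise_for_status()  # raises requests.HTTPError on 4xx/5xx
        pages.append(response.text)
    return pages
```

Calling `fetch_pages(list_of_urls)` would return the page texts in the same order as the input URLs.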

# 2. Parse the HTML to extract the data

def parse_single_html(html):
    """
    Parse a single HTML page and extract its records.

    @return list of dicts with keys "rank", "title", "rating_star", "rating_num", "comments"
    """
    soup = BeautifulSoup(html, 'html.parser')
    # Each film on the page is a <div class="item"> inside <ol class="grid_view">
    article_items = (
        soup.find("div", class_="article")
            .find("ol", class_="grid_view")
            .find_all("div", class_="item")
    )
    datas = []
    for article_item in article_items:
        rank = article_item.find("div", class_="pic").find("em").get_text()
        info = article_item.find("div", class_="info")
        title = info.find("div", class_="hd").find("span", class_="title").get_text()
        stars = (
            info.find("div", class_="bd")
                .find("div", class_="star")
                .find_all("span")
        )
        # Spans in the star block: [0] star class, [1] score, [3] number of ratings
        rating_star = stars[0]["class"][0]
        rating_num = stars[1].get_text()
        comments = stars[3].get_text()
        datas.append({
            "rank":rank,
            "title":title,
            "rating_star":rating_star.replace("rating","").replace("-t",""),
            "rating_num":rating_num,
            "comments":comments.replace("人评价", "")
        })
    return datas

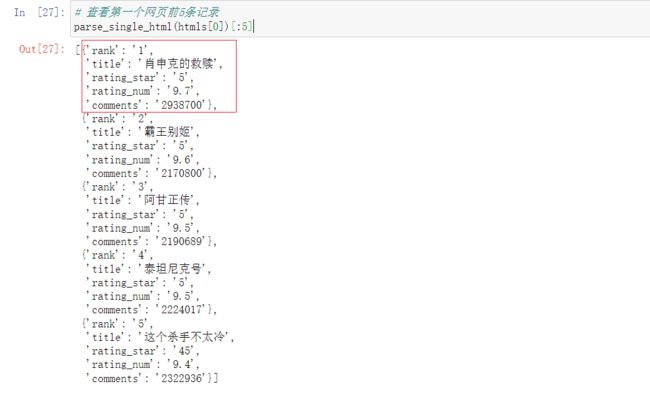
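The parser cleans two fields with chained `replace` calls: the star class and the rating count. A regular expression is an alternative that fails more visibly when the text is not in the expected shape. The sketch below assumes class names of the form `rating5-t` or `rating45-t`, as implied by the `replace("rating", "")` and `replace("-t", "")` calls; the helper names are hypothetical.

```python
import re


def parse_star_class(css_class):
    """Extract the digits from a class name such as 'rating5-t' or 'rating45-t'."""
    match = re.search(r"rating(\d+)-t", css_class)
    return int(match.group(1)) if match else None


def parse_comment_count(text):
    """Extract the first run of digits from text such as '1234567人评价'."""
    match = re.search(r"\d+", text)
    return int(match.group()) if match else None


print(parse_star_class("rating45-t"))        # 45
print(parse_comment_count("1234567人评价"))   # 1234567
print(parse_star_class("unexpected"))        # None
```

Returning `None` for unexpected input makes it possible to spot rows that need attention instead of silently storing a half-cleaned string.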

# View the first 5 records from the first page

parse_single_html(htmls[0])[:5]
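To see which tags `parse_single_html` relies on without touching the network, the same lookups can be run against a small hand-written fragment. The HTML below is a made-up approximation of one list entry, based only on the selectors used in the function; the real page contains much more markup, and the film title, URL, and numbers are placeholders.

```python
from bs4 import BeautifulSoup

# Hypothetical fragment that mirrors the structure the parser expects
SAMPLE_HTML = """
<div class="article">
  <ol class="grid_view">
    <li>
      <div class="item">
        <div class="pic"><em>1</em></div>
        <div class="info">
          <div class="hd">
            <a href="https://example.com/film/1"><span class="title">Example Film</span></a>
          </div>
          <div class="bd">
            <div class="star">
              <span class="rating5-t"></span>
              <span class="rating_num">9.7</span>
              <span></span>
              <span>1000人评价</span>
            </div>
          </div>
        </div>
      </div>
    </li>
  </ol>
</div>
"""

soup = BeautifulSoup(SAMPLE_HTML, "html.parser")
item = soup.find("div", class_="item")
spans = item.find("div", class_="star").find_all("span")

record = {
    "rank": item.find("div", class_="pic").find("em").get_text(),
    "title": item.find("span", class_="title").get_text(),
    "rating_star": spans[0]["class"][0],   # class is returned as a list of names
    "rating_num": spans[1].get_text(),
    "comments": spans[3].get_text(),       # spans[2] is an empty spacer here
}
print(record)
```

Because the fields are picked by position within the star block, a change in the number of `span` elements on the real page would shift these indexes, so it is worth re-checking them if the output looks wrong.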

# 3. Parse all of the HTML pages

all_datas = []

for html in htmls:
    # extend() appends every element of another sequence to the end of the list.
    # It returns None and modifies the existing list in place.
    all_datas.extend(parse_single_html(html))


all_datas

len(all_datas)

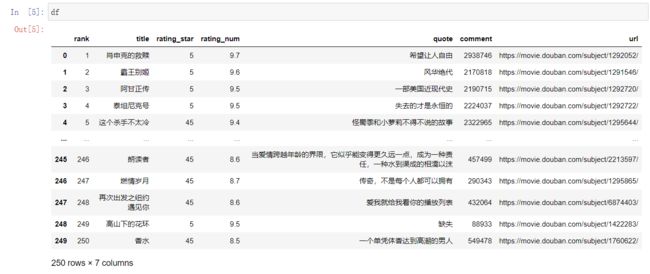

# 4. Save the results to Excel

df = pd.DataFrame(all_datas)

df.to_excel("豆瓣电影TOP250.xlsx")
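In the first version of the parser every field is stored as a string, so the columns sort and aggregate as text. Converting them before writing the file may be worthwhile. A minimal sketch with made-up rows (the titles and numbers are placeholders, and `df_demo` is a hypothetical name):

```python
import pandas as pd

rows = [
    {"rank": "1", "title": "Example Film A", "rating_num": "9.7", "comments": "1000"},
    {"rank": "2", "title": "Example Film B", "rating_num": "9.6", "comments": "2000"},
]
df_demo = pd.DataFrame(rows)

# Convert the text columns to numeric dtypes so they sort and sum correctly
df_demo = df_demo.astype({"rank": int, "rating_num": float, "comments": int})

print(df_demo.dtypes)
print(df_demo["comments"].sum())
```

When writing the result, passing `index=False` to `to_excel` leaves out the row-index column, which is usually redundant here because `rank` already numbers the films.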

The extend method:

aList = [123, 'xyz', 'zara', 'abc', 123]

bList = [2009, 'manni', 2009]

aList.extend(bList)

aList
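The difference from `append` is easy to miss, so here is a short side-by-side sketch (the variable names are illustrative only):

```python
a_list = [123, "xyz", "zara"]
b_list = [2009, "manni"]

extended = list(a_list)
result = extended.extend(b_list)  # extend() mutates in place and returns None

appended = list(a_list)
appended.append(b_list)           # append() adds b_list as one nested element

print(result)    # None
print(extended)  # [123, 'xyz', 'zara', 2009, 'manni']
print(appended)  # [123, 'xyz', 'zara', [2009, 'manni']]
```

This is why the scraper uses `extend`: each page yields a list of 25 records, and `append` would produce a list of 10 lists rather than one flat list of 250 records.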

Encapsulated version:

import os

import json

import time

import pprint

import requests

import numpy as np

import pandas as pd

from bs4 import BeautifulSoup

from sqlalchemy import create_engine

def parse_single_url(html):
    '''html: the HTML of one list page, which yields 25 records'''
    soup = BeautifulSoup(html,'html.parser')
    items = (
        soup.find('div',class_ = 'article')
            .find('ol',class_ = 'grid_view')
            .find_all('div',class_ = 'item')
    )
    # Must be created outside the loop; inside it, the list would be reset on every iteration
    data1 = []
    for item in items:
        # Extract the required fields
        rank1 = item.find('div',class_ = 'pic').find('em').get_text()
        info1 = item.find('div',class_= 'info')
        url1 = info1.find('div',class_ = 'hd').find('a').get('href')
        title1 = info1.find('div',class_ = 'hd').find('span',class_ = 'title').get_text()
        stars1 = (
            info1.find('div',class_ = 'bd')
                 .find('div',class_ = 'star')
                 .find_all('span')
        )
        rating_star1 = stars1[0].get('class')[0]  # first value of the class attribute
        rating_num1 = stars1[1].get_text()
        comments1 = stars1[3].get_text()
        If_None = info1.find('div',class_ = 'bd').find('p',class_ = 'quote')
        # Some films have no quote; checking for None avoids
        # AttributeError: 'NoneType' object has no attribute 'find'
        if If_None is not None:
            quote1 = If_None.find('span',class_ = 'inq').get_text()  # extract the text
        else:
            quote1 = '缺失'  # placeholder meaning "missing"
        # Print the details to help locate problems:
        # print(tuple([rank1,title1,rating_star1,rating_num1,quote1,comments1,url1]))
        # Store each record as a dict and append it to the list
        data1.append({
            'rank': int(rank1),
            'title':title1,
            'rating_star':int(rating_star1.replace('rating','').replace('-t','')),
            'rating_num':float(rating_num1),  # np.float was removed in NumPy 1.24; use the builtin
            'quote':quote1.replace('。',''),
            'comment':int(comments1.replace('人评价','')),
            'url':url1
        })
    # The return must be aligned with the for loop, not nested inside it
    return data1

# parse_single_url(html)
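The `if ... else` guard around the quote works for one field, but the same `None` problem can occur for any optional tag. One possible generalisation is a small helper that returns a default when the tag is absent. This is a sketch only: `text_or_default` is a hypothetical helper, and the two HTML snippets are made up to represent an entry with and without a quote.

```python
from bs4 import BeautifulSoup


def text_or_default(parent, name, css_class, default=""):
    """Return the text of the first matching descendant, or `default` if absent."""
    node = parent.find(name, class_=css_class) if parent is not None else None
    return node.get_text(strip=True) if node is not None else default


with_quote = BeautifulSoup(
    '<div class="bd"><p class="quote"><span class="inq">A line.</span></p></div>',
    "html.parser",
)
without_quote = BeautifulSoup('<div class="bd"></div>', "html.parser")

print(text_or_default(with_quote, "span", "inq", default="missing"))     # A line.
print(text_or_default(without_quote, "span", "inq", default="missing"))  # missing
```

With a helper like this, an optional field needs a single call rather than its own `if ... else` block.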

def download_all_htmls():
    htmls = []
    for idx in range(0,250,25):
        url = f'https://movie.douban.com/top250?start={idx}&filter='
        # print('craw_url:',url)
        headers = {'User-Agent': 'Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/103.0.0.0 Safari/537.36'}
        r = requests.get(url,headers = headers)
        if r.status_code != 200:
            raise Exception('error')
        # Append the HTML text to the list
        htmls.append(r.text)
    return htmls

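def data_to_Mysql(data,db_tname):
    # The user, password, and host in this connection string are placeholders; substitute your own
    engine = create_engine('mysql://xxx:[email protected]:3306/class201911?charset=utf8')
    # if_exists='replace' drops and recreates the table on every run
    Records = data.to_sql(db_tname, engine, index=False, if_exists='replace')
    print(f'{db_tname}: data loaded successfully, {Records} records imported')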

if __name__ == '__main__':
    htmls = download_all_htmls()
    all_data = []
    for html in htmls:
        all_data.extend(parse_single_url(html))
        time.sleep(0.5)
    df = pd.DataFrame(all_data)
    # Save the data to the desktop
    os.chdir(r'C:\Users\DELL\Desktop')
    # df.to_excel('./top250.xlsx',index=False)
    # Save to the database
    # data_to_Mysql(df,'movie_top_250')
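`data_to_Mysql` needs a running MySQL server, which makes the database step hard to try out. For a quick local check, the same `to_sql` call also accepts a standard-library `sqlite3` connection. The sketch below swaps in an in-memory SQLite database in place of MySQL, purely for experimentation; the rows are made up and `df_demo` is a hypothetical name.

```python
import sqlite3

import pandas as pd

df_demo = pd.DataFrame([
    {"rank": 1, "title": "Example Film A"},
    {"rank": 2, "title": "Example Film B"},
])

# An in-memory database disappears when the connection is closed
conn = sqlite3.connect(":memory:")
df_demo.to_sql("movie_top_250", conn, index=False, if_exists="replace")

# Read the row count back to confirm the write
row_count = pd.read_sql("SELECT COUNT(*) AS n FROM movie_top_250", conn)["n"].iloc[0]
print(row_count)
conn.close()
```

Reading the count back with a query is a version-independent way to confirm how many rows were written, since the return value of `to_sql` differs between pandas versions.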