Setting up a Python Spark (PySpark) environment

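Place the Spark distribution (spark-2.3.0-bin-hadoop2.7) on the D: drive.

Add the environment variable SPARK_HOME = D:\spark-2.3.0-bin-hadoop2.7.

Then add %SPARK_HOME%\bin to the PATH environment variable.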

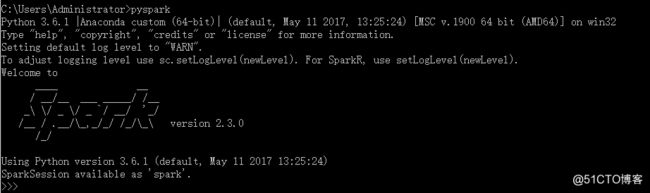
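The two settings above can be checked from Python. The following is a minimal sketch, not part of the original setup: `check_spark_env` and its `pathsep` parameter are hypothetical names introduced here, and the comparison simply normalises slashes and case before looking for `<SPARK_HOME>/bin` among the PATH entries.

```python
import os


def _norm(path):
    """Normalise slashes, trailing separators and case for comparison."""
    return path.replace("\\", "/").rstrip("/").lower()


def check_spark_env(env=None, pathsep=os.pathsep):
    """Return a list of problems found with SPARK_HOME and PATH (empty if none)."""
    env = os.environ if env is None else env
    spark_home = env.get("SPARK_HOME", "")
    if not spark_home:
        return ["SPARK_HOME is not set"]
    problems = []
    wanted = _norm(spark_home) + "/bin"
    entries = {_norm(p) for p in env.get("PATH", "").split(pathsep) if p}
    if wanted not in entries:
        problems.append("PATH does not contain %s" % wanted)
    return problems


if __name__ == "__main__":
    # An empty list means both variables look consistent.
    print(check_spark_env())
```

Environment variables changed through the Windows settings dialog only reach processes started afterwards, so the check should be run from a newly opened command prompt.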

Next, open a command prompt and run the pyspark command. If the PySpark shell starts, the environment variables are set correctly.

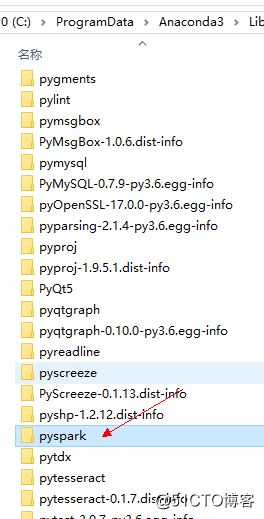
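Whether the `pyspark` launcher is reachable can also be tested without starting the shell. This sketch assumes Python 3.3 or later (for `shutil.which`); `find_launcher` and `search_path` are hypothetical names used only for illustration.

```python
import shutil


def find_launcher(name="pyspark", search_path=None):
    """Return the full path of the launcher, or None if it is not found.

    With search_path=None the current PATH environment variable is searched.
    """
    return shutil.which(name, path=search_path)


if __name__ == "__main__":
    location = find_launcher()
    if location is None:
        print("pyspark was not found on PATH")
    else:
        print("pyspark launcher:", location)
```

A `None` result usually means that `%SPARK_HOME%\bin` is missing from PATH or that the prompt was opened before the variables were changed.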

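Locate the site-packages directory of the Python interpreter that PyCharm uses.

Right-click it to open the directory, then copy the python\pyspark directory from D:\spark-2.3.0-bin-hadoop2.7 into that site-packages directory.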

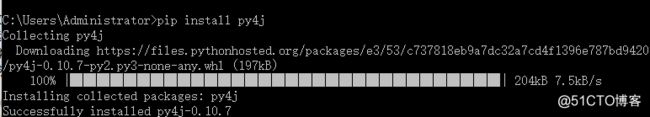
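The copy step can be scripted as well. The sketch below is one possible approach, not the original author's method: it asks the running interpreter for its site-packages directory via `sysconfig` and copies the `pyspark` package there. `site_packages_dir` and `copy_pyspark` are hypothetical helper names, and the script has to be run with the same interpreter that PyCharm is configured to use.

```python
import os
import shutil
import sysconfig


def site_packages_dir():
    """Directory where the running interpreter keeps pure-Python packages."""
    return sysconfig.get_paths()["purelib"]


def copy_pyspark(spark_home, target_dir):
    """Copy <spark_home>/python/pyspark to <target_dir>/pyspark; return the new path."""
    src = os.path.join(spark_home, "python", "pyspark")
    dst = os.path.join(target_dir, "pyspark")
    if not os.path.isdir(src):
        raise FileNotFoundError("no pyspark package under %s" % src)
    if os.path.exists(dst):
        # Refuse to overwrite an existing copy silently.
        raise FileExistsError("%s already exists; remove it first" % dst)
    shutil.copytree(src, dst)
    return dst
```

A call such as `copy_pyspark(r"D:\spark-2.3.0-bin-hadoop2.7", site_packages_dir())` would then reproduce the manual copy described above.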

Install py4j (for example with pip install py4j).
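Before running a Spark job it can help to confirm that both packages are visible to the interpreter. This is a small sketch using the standard library's `importlib.util.find_spec`; `missing_modules` is a hypothetical name, and the check only looks up top-level module names without importing them.

```python
import importlib.util


def missing_modules(names=("py4j", "pyspark")):
    """Return the top-level module names that the interpreter cannot locate."""
    return [name for name in names if importlib.util.find_spec(name) is None]


if __name__ == "__main__":
    missing = missing_modules()
    if missing:
        print("not importable:", ", ".join(missing))
    else:
        print("py4j and pyspark are both importable")
```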

Try the following code:

    from __future__ import print_function

    import os
    import sys
    from operator import add

    # Path to the Spark installation folder (raw strings keep the backslashes literal)
    os.environ['SPARK_HOME'] = r"D:\spark-2.3.0-bin-hadoop2.7"

    # Append pyspark and py4j to the Python path.
    # The py4j zip name must match the file actually present under python\lib.
    sys.path.append(r"D:\spark-2.3.0-bin-hadoop2.7\python")
    sys.path.append(r"D:\spark-2.3.0-bin-hadoop2.7\python\lib\py4j-0.9-src.zip")

    from pyspark import SparkContext
    from pyspark import SparkConf

    if __name__ == '__main__':
        inputFile = r"D:\Harry.txt"
        # saveAsTextFile creates a directory with this name; it must not exist yet
        outputFile = r"D:\Harry1.txt"

        sc = SparkContext()
        text_file = sc.textFile(inputFile)
        # Split each line into words, pair each word with 1, then sum the 1s per word
        counts = (text_file.flatMap(lambda line: line.split(' '))
                  .map(lambda word: (word, 1))
                  .reduceByKey(add))
        counts.saveAsTextFile(outputFile)
        sc.stop()
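To see what the flatMap / map / reduceByKey pipeline computes, here is a plain-Python equivalent that needs no Spark installation. It is only an illustration of the same word count on an in-memory list of lines; `word_count` is a hypothetical name. Note that splitting on a single space, as the Spark code does, yields empty strings wherever two spaces are adjacent.

```python
from collections import Counter


def word_count(lines):
    """Count words the same way as the Spark job: split each line on ' ' and sum."""
    counts = Counter()
    for line in lines:
        # flatMap step: one line becomes many words.
        for word in line.split(' '):
            # map + reduceByKey steps: add 1 to the running total for this word.
            counts[word] += 1
    return dict(counts)


if __name__ == "__main__":
    print(word_count(["to be or not to be", "that is the question"]))
```

Running it on a small sample of the input file gives a quick reference against which the Spark output can be compared.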

If the computation completes successfully, the setup is working.
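The result of `saveAsTextFile` is a directory containing `part-*` files (plus a `_SUCCESS` marker), not a single text file. The sketch below reads those parts back into a dictionary; it assumes the job ran under Python 3, so that each output line is the printed form of a `(word, count)` tuple. `read_counts` is a hypothetical helper name.

```python
import ast
import glob
import os


def read_counts(output_dir):
    """Collect the (word, count) pairs from all part-* files in output_dir."""
    counts = {}
    for part in sorted(glob.glob(os.path.join(output_dir, "part-*"))):
        with open(part, encoding="utf-8") as fh:
            for line in fh:
                line = line.strip()
                if line:
                    # Each line looks like ('word', 3); parse it safely.
                    word, n = ast.literal_eval(line)
                    counts[word] = n
    return counts
```

For the example above, `read_counts(r"D:\Harry1.txt")` would return the word counts written by the job.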

Original article: http://blog.51cto.com/yixianwei/2156892