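- NoSQL Database Technology and Applications: Key Points

皆过客,揽星河

NoSQL, nosql, databases, big data, data analysis, data structures, non-relational databases

NoSQL review. The big data processing pipeline: data collection (Flume, crawlers, sensors); data storage (the stage this NoSQL course covers): HDFS, MongoDB, HBase, etc.; data cleaning (loading into the warehouse): Hive, etc.; data processing and analysis (Spark, Flink, etc.); data visualization; data mining and machine learning applications (Python, Spark MLlib, etc.). Storage challenges in the big data era (the "three highs"): high concurrency (many users accessing at the same time), high scalability (storage must be expandable on demand at any time), high efficiency (fast reads and writes).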

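- Multithreading: ExecutorCompletionService

阿福德

In development we often run into this situation: we start several threads, wait until all of them have finished, and then need each thread's result for aggregation. During internal code reviews I found quite a few cases of this. Many colleagues handle it correctly, but their code is verbose and not very efficient. This article introduces a simple way to handle the situation. Straight to the code: public class ExecutorCompletionServiceTest { @Test public void testExecutorCo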
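The Java code in the excerpt is cut off, so what follows is only a rough Python analogue of the same idea, not the author's implementation. `concurrent.futures.as_completed` plays a role similar to `ExecutorCompletionService`: it hands back futures in completion order, so results can be aggregated as they arrive. The `work` function, its delays, and the aggregation by `sum` are assumptions made purely for illustration.

```python
import time
from concurrent.futures import ThreadPoolExecutor, as_completed


def work(task_id, delay):
    """Hypothetical task: sleep for `delay` seconds, then return a result."""
    time.sleep(delay)
    return task_id * 10


def run_all(delays):
    """Submit one task per delay and collect results as each one finishes."""
    results = []
    with ThreadPoolExecutor(max_workers=len(delays)) as pool:
        futures = [pool.submit(work, i, d) for i, d in enumerate(delays)]
        # as_completed yields each future once it is done, not in submit order.
        for future in as_completed(futures):
            results.append(future.result())
    return results


if __name__ == "__main__":
    # Aggregate the per-task results once all of them are in.
    print(sum(run_all([0.03, 0.01, 0.02])))
```

Because results arrive in completion order, a slow task does not hold up processing of the fast ones, which is the main point of the completion-service approach.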

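- Selected Java Interview Questions: Message Queues (Part 2)

芒果不是芒

Selected Java Interview Questions, java, kafka

I. Kafka features. 1. Message persistence: messages are stored on disk, so they are not lost. 2. High throughput: a single machine can easily handle concurrency on the order of millions. 3. Scalability: highly scalable, and can be scaled dynamically. 4. Multi-client support: supports multiple languages (Java, C, C++, Go, and others). 5. Kafka Streams (built-in stream processing): used for scenarios such as Singles' Day or live sales dashboards, where Kafka Streams can quickly compute sales totals. 6. Security: when producing or consuming, Kafka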

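- Python Multithreaded Programming, Part 1

IT_Beijing_BIT

#Python programming language, python

Python Multithreaded Programming, Part 1. The global interpreter lock. Thread APIs: threading.active_count(), threading.current_thread(), threading.excepthook(args, /), threading.get_native_id(), threading.main_thread(), threading.stack_size([size]). Thread object member functions: constructor, start/ru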
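A minimal sketch of a few of the `threading` calls listed above. The `worker` and `run_workers` helpers and the `worker-N` thread names are assumptions introduced here for illustration; only the `threading` functions themselves come from the standard library.

```python
import threading


def worker(results, index):
    # Record the name of the thread that executes this call.
    results[index] = threading.current_thread().name


def run_workers(count):
    """Start `count` named threads and return the names they reported."""
    results = [None] * count
    threads = [
        threading.Thread(target=worker, args=(results, i), name=f"worker-{i}")
        for i in range(count)
    ]
    for t in threads:
        t.start()  # start() arranges for run() to execute in a new thread
    for t in threads:
        t.join()   # block until that thread has finished
    return results


if __name__ == "__main__":
    print(run_workers(3))
    print(threading.active_count())      # number of Thread objects still alive
    print(threading.main_thread().name)  # the thread that started the interpreter
    print(threading.get_native_id())     # OS-level id of the calling thread
```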

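- MongoDB Knowledge Summary

GeorgeLin98

persistence layer, mongodb

MongoDB knowledge summary: MongoDB concepts, single-node deployment, common basic commands, indexes (Index), Spring Data MongoDB integration, replica sets, sharded clusters, security and authentication. MongoDB concepts. Business scenarios: traditional relational databases (such as MySQL) struggle to meet the "three highs" of data operations and the demands of Web 2.0 sites. The "three highs" explained: (1) High performance: the need for highly concurrent reads and writes. (2) Huge storage: for massive data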

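- Spring Cloud Alibaba: Sentinel (Rate Limiting)

菜鸟爪哇

Preface: these are notes from my own study, drawing on other people's articles and recording the steps I followed. Reference article: https://blog.csdn.net/u014494148/article/details/105484410 Introduction to Sentinel: Sentinel originated at Alibaba, and its main goals are flow control and circuit breaking. Sentinel reduces the impact of unstable resources by limiting the number of concurrent threads (that is, semaphore isolation) rather than by using thread pools, which avoids the performance overhead of thread switching. When a resource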

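- Efficient Transfer of Large Datasets with Python Multithreading

sand&wich

networking, python, servers

Background: when working with large datasets, data often has to be moved efficiently between storage devices, servers, or folders. With a single-threaded transfer, the whole process becomes very slow once the data volume is large, so parallelizing with multiple threads can greatly improve transfer efficiency. This article shares an efficient data transfer tool built on Python multithreading: it walks all files in the source folder and moves them to the target folder. Tools and libraries: the tool relies mainly on the following Python standard libraries: os: for file system operations, such as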
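The article's own tool is not shown in the excerpt, so here is a minimal sketch of the approach it describes, under stated assumptions: `move_tree`, its `max_workers` default, and the temporary directories in the demo are hypothetical names chosen for this example. It walks the source folder with `os.walk`, recreates the directory layout, and moves the files with `shutil.move` from a thread pool. It assumes the target folder is not inside the source folder.

```python
import os
import shutil
import tempfile
from concurrent.futures import ThreadPoolExecutor


def move_tree(src_dir, dst_dir, max_workers=4):
    """Move every file under src_dir to the same relative path under dst_dir."""
    jobs = []
    for root, _dirs, files in os.walk(src_dir):
        rel = os.path.relpath(root, src_dir)
        target_root = dst_dir if rel == "." else os.path.join(dst_dir, rel)
        os.makedirs(target_root, exist_ok=True)
        for name in files:
            jobs.append((os.path.join(root, name), os.path.join(target_root, name)))
    # Moves are I/O bound, so threads can overlap them despite the GIL.
    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        # list() forces all moves to finish and re-raises any worker exception.
        list(pool.map(lambda job: shutil.move(job[0], job[1]), jobs))
    return len(jobs)


if __name__ == "__main__":
    src, dst = tempfile.mkdtemp(), tempfile.mkdtemp()
    with open(os.path.join(src, "sample.txt"), "w") as fh:
        fh.write("demo")
    print(move_tree(src, dst), "file(s) moved")
```

Collecting the job list before starting the pool keeps the directory walk and the moves separate, so files never disappear from under `os.walk` while it is still iterating.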

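- Downloading Current-Year Google Imagery with Python

sand&wich

python, programming languages

In GIS projects and mapping applications, obtaining up-to-date imagery is very important. This article shows how to use Python to automatically download the current year's imagery from Google Maps and save it as a high-resolution TIFF file. The process involves geographic coordinate conversion, multithreaded downloading, and image processing. Key features. The script's core features include: Coordinate conversion: converts between WGS-84 and the Web Mercator projection, and handles China's GCJ-02 offset. Automated download: downloads map tiles with multiple threads to improve efficiency. Image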
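The script itself is not included in the excerpt. As a hedged illustration of the coordinate-conversion step only, the sketch below uses the standard spherical Web Mercator formulas and the common XYZ tile-index scheme. The function names are assumptions made for this example, and the GCJ-02 offset handling mentioned above is not covered.

```python
import math

EARTH_RADIUS_M = 6378137.0  # sphere radius used by Web Mercator (WGS-84 semi-major axis)


def wgs84_to_web_mercator(lon_deg, lat_deg):
    """Convert longitude/latitude in degrees to Web Mercator metres."""
    x = EARTH_RADIUS_M * math.radians(lon_deg)
    y = EARTH_RADIUS_M * math.log(math.tan(math.pi / 4 + math.radians(lat_deg) / 2))
    return x, y


def web_mercator_to_wgs84(x, y):
    """Inverse of wgs84_to_web_mercator."""
    lon = math.degrees(x / EARTH_RADIUS_M)
    lat = math.degrees(2 * math.atan(math.exp(y / EARTH_RADIUS_M)) - math.pi / 2)
    return lon, lat


def lonlat_to_tile(lon_deg, lat_deg, zoom):
    """Return the XYZ tile column and row that contain the given point."""
    n = 2 ** zoom
    x_tile = int((lon_deg + 180.0) / 360.0 * n)
    y_tile = int((1.0 - math.asinh(math.tan(math.radians(lat_deg))) / math.pi) / 2.0 * n)
    return x_tile, y_tile


if __name__ == "__main__":
    print(wgs84_to_web_mercator(116.39, 39.9))
    print(lonlat_to_tile(116.39, 39.9, 10))
```

A downloader would typically compute the tile range for the bounding box with `lonlat_to_tile` and then fetch each tile in a worker thread.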

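- WebMagic: A Powerful Java Crawler Framework, Explained and Applied

Aaron_945

Java, java, crawlers, programming languages

Contents: Introduction; Official site; Overview of how WebMagic works; Basic usage: 1. Add the dependency, 2. Write a PageProcessor; Advanced usage: 1. Custom Pipeline, 2. Distributed crawling; Advantages; Conclusion. Introduction: in the big data era, web crawlers play an indispensable role as an important data collection tool. Java, a widely used programming language, has its own strengths in crawler development. WebMagic is an open-source Java crawler framework that provides a simple and flexible API, supports multithreaded and distributed crawling, and rich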

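- 00. A Comprehensive Roundup of Crawler Frameworks (Java + Python)

有一只柴犬

crawler series, crawlers, java, python

Contents: 1. Preface; 2. What is a web crawler; 3. Common crawler frameworks; 3.1 Java frameworks: 3.1.1 WebMagic, 3.1.2 Jsoup, 3.1.3 HttpClient, 3.1.4 Crawler4j, 3.1.5 HtmlUnit, 3.1.6 Selenium; 3.2 Python frameworks: 3.2.1 Scrapy, 3.2.2 BeautifulSoup + Requests, 3.2.3 Selenium, 3.2.4 PyQuery, 3.2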

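- Huawei Cloud Distributed Cache Service (DCS): August Feature Release

华为云PaaS服务小智

Huawei Cloud, distributed cache

Distributed Cache Service (DCS) is a Redis-compatible, high-speed in-memory data processing engine from Huawei Cloud. It provides ready-to-use, secure and reliable, elastically scalable, and easily managed online distributed caching to meet the need for high concurrency and fast data access. Here are the DCS feature updates for August.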

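- [Java] Solved: java.util.concurrent.CompletionException

屿小夏

java, programming languages

Contents: I. Problem background (the scenario where the problem occurs, code snippet); II. Possible causes; III. Faulty code example; IV. Correct code example; V. Notes. Solved: java.util.concurrent.CompletionException. I. Problem background: in Java concurrent programming, java.util.concurrent.CompletionException is a common runtime exception that typically appears when CompletableFuture is used for asynchronous computation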

- python爬取微信小程序数据,python爬取小程序数据

2301_81900439

前端

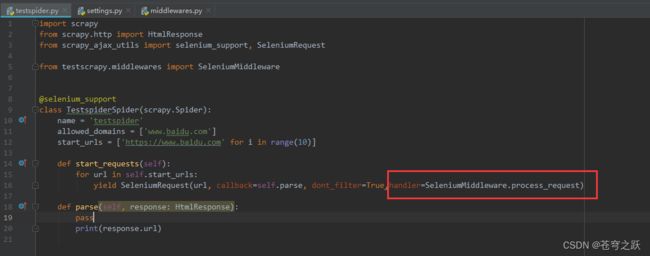

大家好,小编来为大家解答以下问题,python爬取微信小程序数据,python爬取小程序数据,现在让我们一起来看看吧!Python爬虫系列之微信小程序实战基于Scrapy爬虫框架实现对微信小程序数据的爬取首先,你得需要安装抓包工具,这里推荐使用Charles,至于怎么使用后期有时间我会出一个事例最重要的步骤之一就是分析接口,理清楚每一个接口功能,然后连接起来形成接口串思路,再通过Spider的回调

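- [Encryption Algorithm Basics: RSA Encryption]

XWWW668899

networking, servers, notes, python

RSA encryption. RSA (Rivest-Shamir-Adleman) is an asymmetric, widely used public-key encryption algorithm, used mainly for secure data transmission. The public key is used to encrypt and the private key to decrypt. The algorithm's name comes from the surnames of its three inventors: R: Ron Rivest; S: Adi Shamir; A: Leonard Adleman. These three computer scientists jointly proposed the algorithm in 1977 and published a paper on it. Their work laid an important foundation for public-key cryptography, making secure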
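To make "the public key encrypts, the private key decrypts" concrete, here is a toy sketch of textbook RSA on small integers. The primes `p = 61`, `q = 53` and exponent `e = 17` are illustrative assumptions, and the helper names are made up for this example. It has no padding and far too small a modulus, so it is for understanding only and must not be used for real encryption.

```python
def make_toy_keypair(p=61, q=53, e=17):
    """Build a tiny RSA key pair from two small primes (illustration only)."""
    n = p * q
    phi = (p - 1) * (q - 1)
    d = pow(e, -1, phi)  # modular inverse of e modulo phi (Python 3.8+)
    return (e, n), (d, n)


def encrypt(message, public_key):
    """Encrypt an integer message smaller than n with the public key."""
    e, n = public_key
    return pow(message, e, n)


def decrypt(ciphertext, private_key):
    """Recover the integer message with the private key."""
    d, n = private_key
    return pow(ciphertext, d, n)


if __name__ == "__main__":
    public_key, private_key = make_toy_keypair()
    ciphertext = encrypt(65, public_key)
    print(ciphertext, decrypt(ciphertext, private_key))
```

Real systems use a vetted library, key sizes of 2048 bits or more, and a padding scheme such as OAEP.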

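- Redis: Cache Breakdown

我的程序快快跑啊

caching, redis, java

Cache breakdown (hot keys): when certain keys (those accessed with high concurrency and expensive to rebuild in the cache) expire, a flood of requests goes straight to the database and puts it under heavy load. 1. Mutex lock: can guarantee strong consistency. Thread 1: on a cache miss, acquires the mutex, queries the database to rebuild the cache, writes the cache, and releases the lock. Thread 2: on a cache miss, fails to acquire the lock (already held by thread 1), waits a while, then hits the cache. How the mutex is implemented: in Redis, setnx key value sets the value for a key only if the key does not already exist, and this is used to implement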
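The thread 1 / thread 2 flow above can be sketched in-process as follows. This is a simulation under stated assumptions, not Redis code: a `threading.Lock` acquired without blocking stands in for a successful `SETNX`, a dict stands in for the cache, and `HotKeyCache`, `_load_from_db`, and the sleep durations are hypothetical. A real deployment would use one lock key per cache key with an expiry.

```python
import threading
import time


class HotKeyCache:
    """In-process stand-in for a cache placed in front of a slow database."""

    def __init__(self):
        self.cache = {}
        self.db_reads = 0
        self._rebuild_lock = threading.Lock()

    def _load_from_db(self, key):
        self.db_reads += 1  # only ever called while holding the rebuild lock
        time.sleep(0.05)    # simulate an expensive cache rebuild
        return f"value-for-{key}"

    def get(self, key):
        while True:
            value = self.cache.get(key)
            if value is not None:
                return value  # cache hit
            # A non-blocking acquire plays the role of a successful SETNX.
            if self._rebuild_lock.acquire(blocking=False):
                try:
                    value = self.cache.get(key)  # re-check after winning the lock
                    if value is None:
                        value = self._load_from_db(key)
                        self.cache[key] = value
                    return value
                finally:
                    self._rebuild_lock.release()
            time.sleep(0.01)  # lost the race: wait briefly, then retry the cache


if __name__ == "__main__":
    hot = HotKeyCache()
    workers = [threading.Thread(target=hot.get, args=("hot",)) for _ in range(8)]
    for w in workers:
        w.start()
    for w in workers:
        w.join()
    print("database reads:", hot.db_reads)
```

The re-check inside the lock matters: without it, a thread that acquires the lock just after another thread released it would rebuild the cache a second time.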

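- Fixing the "Profile Missing" Error When Launching Firefox from Selenium

歪歪的酒壶

selenium, testing tools, python

On Ubuntu 22.04, running Selenium with python3 reports Profile Missing, with the message: Your Firefox profile cannot be loaded. It may be missing or inaccessible. In this environment the Firefox browser itself works normally. While troubleshooting, running firefox manually from the command line opens the browser, but the following message appears: Gtk-Message: 15:32:09.9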

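- How to Use API Data to Optimize Product Prices on E-commerce Platforms

weixin_43841111

api, data mining, artificial intelligence, python, java, big data, frontend, crawlers

Using API data to optimize e-commerce product prices is a process that involves data collection, analysis, strategy formulation, and real-time price adjustment. It can improve market competitiveness and also maximize profit through precise pricing. Below are some key steps and strategies for optimizing product prices with API data: 1. Data collection. Competitor price monitoring: use API interfaces (for example, web scraping with tools such as Scrapy or BeautifulSoup in Python, or dedicated API services such as PriceIntelligence,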

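- Gateway Study Notes

猪猪365

study notes

I. Microservices overview: a microservice gateway is itself a system. By exposing this gateway in front of the microservices, we can centralize authentication, security control, unified log handling, and monitoring. What technologies can be used to implement a microservice gateway? 1. nginx: nginx is a high-performance HTTP and reverse proxy web server that also provides IMAP/POP3/SMTP services. It can support 50,000 concurrent connections with very low CPU and memory consumption, and it runs very stably. 2. Zuul: Zuul is Netflix's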

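- Will Rust Replace C/C++? Rust vs. C/C++

AI与编程之窗

source compilation and development, rust, c, c++, memory safety, concurrent programming, code security, performance optimization

Contents: Introduction. Part 1: Advantages of Rust: memory safety, concurrency, performance, growth of the community and ecosystem. Part 2: Advantages and standing of C/C++: history and maturity, broad library and tool support, performance optimization and hardware control, wide range of industry applications, community and industry support. Part 3: Challenges and obstacles: learning curve, migration cost of existing codebases, maturity of the ecosystem and toolchain, community and talent development, industry adoption and promotion, regulation and standardization. Part 4: Future trends and possibilities: industry trends, education and talent development, compatibility and coexistence, industry standardization, enterprise support and adoption, open-source community and eco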

- TCP Concurrency with Threads and Processes

@莫福瑞

algorithms

TCP multithreaded concurrency: #include #define SERPORT 8888 #define SERIP "192.168.0.118" #define BACKLOG 20 typedef struct { int newfd; struct sockaddr_in cin; } BMH; void *fun1(void *sss) { int newfd = accept((BMH*)sss)->newfd; struct sockaddr_in cin

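- 6. Global Locks and Table Locks: Why Is Adding a Column to a Table So Hard?

nieniemin

Database locks were designed to handle concurrency. A database is a resource shared by many users, so when concurrent access occurs it must control the access rules sensibly, and locks are the key data structure used to implement those rules. By locking scope, MySQL locks fall roughly into three categories: global locks, table-level locks, and row locks. 6.1 Global locks. A global lock locks the entire database instance. MySQL provides a way to take a global read lock: the command Flush tables with read lock (FTWRL). When you need to put the whole database into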

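- MyBatis Second-Level Cache Invalidation: MyBatis Cache Principles and Invalidation Cases Explained

weixin_39844942

mybatis second-level cache invalidation

This article covers MyBatis cache principles and invalidation cases, with detailed example code, and may be a useful reference for study or work. 1. What is a cache? Temporary data held in memory. Data that users query frequently is placed in the cache (memory), so queries are served from the cache instead of from disk (the relational database's data files), which improves query efficiency and addresses performance problems in high-concurrency systems. 2. Why use a cache? To reduce the number of interactions with the database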

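- [Repost] Introduction to NoSQL

weixin_30325793

big data, databases, operations

Excerpted from Baidu Baike. NoSQL refers broadly to non-relational databases. With the rise of Web 2.0 sites, traditional relational databases have struggled to cope, especially with very large, highly concurrent, purely dynamic SNS-type Web 2.0 sites, and have exposed many problems that are hard to overcome, while non-relational databases have developed very rapidly thanks to their own characteristics. NoSQL databases emerged to address the challenges posed by large-scale data collections and multiple data types, especially big data application problems. Although the NoSQL buzzword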

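- 200 Selected Python Tips: 121-125

AnFany

Python 200+ Tips, python, programming languages

Spend your time on self-improvement. 121 Requests: simplified HTTP request handling: sending GET requests, sending POST requests, sending PUT requests, sending DELETE requests, session management, handling timeouts, file uploads. 122 BeautifulSoup: web page parsing and scraping: parsing HTML and XML documents, finding a single tag, finding multiple tags, finding tags with CSS selectors, extracting text, modifying document content, deleting tags, processing XML documents. 123 Scrapy: a powerful web crawling framework: example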

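- High-Concurrency Memory Pool (4): Implementing CentralCache

Niu_brave

high-concurrency memory pool project, notes, c++, learning

Contents: I. A brief introduction to CentralCache; II. Overall structure of CentralCache; III. Detailed implementation code of CentralCache: 1. Members; 2. Functions: (1) getting a pointer to the singleton object, (2) the FetchRangeObj function, (3) implementing the GetOneSpan function, (4) implementing the ReleaseListToSpans function. I. A brief introduction to CentralCache: CentralCache is the middle layer of the high-concurrency memory pool project. When the first layer, ThreadCache,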

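- C# Development Tutorial: Getting Started

天马3798

tutorial series, c#, programming languages

1. C# introduction, environment, program structure 2. C# basic syntax, variables, control scope, data types, type conversion 3. C# arrays, loops, LINQ 4. C# classes, encapsulation, methods 5. C# enums, strings 6. C# object-oriented programming, inheritance, encapsulation, polymorphism 7. C# attributes, properties, reflection, indexers 8. C# delegates, events, collections, generics 9. C# anonymous methods 10. C# multithreading. More: jQuery Development Tutorial: Getting Started; Vue Development Basics: Getting Started Tutorial; Vue Development: Advanced Tutorial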

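- Tell Me About Your Understanding of AQS

Mutig_s

juc, java, programming languages, interviews, backend

AQS overview. AQS, short for AbstractQueuedSynchronizer, is a core framework in Java's concurrency package (java.util.concurrent; the class itself lives in java.util.concurrent.locks). It is used mainly to build blocking locks and related synchronizers, and it is the base framework for building locks and other synchronization components. AQS provides a mechanism based on a FIFO (First-In-First-Out) CLH (an abbreviation of three people's names) doubly linked queue to implement various synchronizers, such as ReentrantLock, Semapho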

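- [Frequent Interview Question] The Singleton Pattern in Multithreaded Code

朱玥玥要每天学习

java, singleton pattern, programming languages

The singleton pattern. What is a design pattern? Design patterns can be thought of as frameworks, or as the standard opening lines in a board game: red opens with the central cannon, black answers by bringing out the knight. Following these fixed routines ensures you do not end up at a disadvantage. Everyday programming has many recurring business scenarios, and experienced developers have distilled fixed routines for them; code written along these lines will not go wrong either. What is the singleton pattern? It guarantees that a class has only one instance in a program and never creates multiple instances. Implementations can be divided into the "lazy" style and the "eager" style. Eager style: at class load time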
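The article's Java code is not part of the excerpt. As a rough Python sketch of the "lazy" style in a multithreaded setting, the example below uses double-checked locking so that only the first callers contend for the lock. The `Config` class and its `instance` method are hypothetical names used for illustration.

```python
import threading


class Config:
    """Lazy singleton: the instance is created on first use, exactly once."""

    _instance = None
    _lock = threading.Lock()

    @classmethod
    def instance(cls):
        if cls._instance is None:          # fast path: no locking once created
            with cls._lock:
                if cls._instance is None:  # re-check while holding the lock
                    cls._instance = cls()
        return cls._instance


if __name__ == "__main__":
    print(Config.instance() is Config.instance())
```

An "eager" variant would simply create the instance at import time, which needs no lock at all but pays the construction cost even if the object is never used.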

- [Golang] goroutine

沉着冷静2024

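Golang, golang, backend

[Golang] goroutine. Contents: [Golang] goroutine; concurrency; processes and threads; coroutines; goroutine overview; how to use goroutines. Concurrency: processes and threads. Any discussion of concurrency usually involves processes and threads. What is a process, and what is a thread? A process can be understood as a running program: it is one execution of a program in the operating system, a dynamic notion of a program, and the basic unit to which the operating system allocates resources. A thread can be understood as an execution entity within a process; compared with a process, its granularity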

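- "My Mother-in-Law's Accidental Injury"

棻子

"妖约芳香 165", 201809010, Monday, sunny, 28 degrees ❤ Today's reflections: this morning I got a phone call from Honglong. Beyond the usual greetings, I could hear the heaviness in his voice: something serious had happened. My mother-in-law had fallen and broken her right femur. She could not move, and it took an ambulance crew to carry her down from her sixth-floor home and take her to the hospital. For an elderly person this is truly the most dreaded thing. People say that what the old fear most is a fall, because one fall can lead to who knows what consequences and complications. Her health was already poor: heavy labor in her youth led to a herniated lumbar disc and major surgery, and ever since she has walked slowly,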

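- Establishing a Shared Understanding

永夜-极光

ideas

1. Only on a shared basis of understanding can effort be aimed at the right target

Reasons:

There is always one way of describing a thing that comes closest to its essence and is easiest to understand.

Making education easier depends on finding the approach that best suits the student.

The difficulty lies in modeling the other person's way of thinking in order to choose a suitable approach.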

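- Joda Time Usage Notes

bylijinnan

java, joda time

For an introduction to Joda Time, see this article:

http://www.ibm.com/developerworks/cn/java/j-jodatime.html

I use Joda Time often at work. To avoid looking up the API every time, here are notes on common usage:

/**

* DateTime changes (adding and subtracting)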

*/

@Tes

- FileUtils API

eksliang

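FileUtils, FileUtils API

When reposting, please cite the source: http://eksliang.iteye.com/blog/2217374 I. Overview

This is a commonly used Java library for file operations, Apache's wrapper around Java's IO package. It has two core classes, FilenameUtils and FileUtils: FilenameUtils wraps operations on file names, and FileUtils wraps operations on files. Almost any file operation needed in development can be found in this library, which makes it very handy.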

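- Assorted Emerging Technologies

不懂事的小屁孩

technology

1: gradle. Gradle is an automated build tool based on the Groovy language and aimed mainly at Java applications, with a DSL (domain-specific language) syntax.

Build systems commonly use Maven today; Gradle is now available as an easier-to-learn alternative.

Setting up a Java environment:

http://www.ibm.com/developerworks/cn/opensource/os-cn-gradle/

Setting up an Android environment: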

http://m

- Mutual (Two-Way) HTTPS Authentication on Tomcat 6

酷的飞上天空

tomcat6

1. Generate the server-side certificate

keytool -genkey -keyalg RSA -dname "cn=localhost,ou=sango,o=none,l=china,st=beijing,c=cn" -alias server -keypass password -keystore server.jks -storepass password -validity 36

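- The Hosted Virtual Desktop Market Is Unstoppable

蓝儿唯美

Users also need redundant data centers, notes Ali Din, senior vice president and chief marketing officer at dinCloud. The company resells a cloud automation console for DaaS that an MSP can let users log in to in order to manage and provision services; alternatively, the MSP can keep that control itself.

In some cases, MSPs layer services such as monitoring and patch management on top of dinCloud's cloud services.

An MSP's profit margin will vary with its level of involvement, Din said.

"We have some partners who are responsible for recommending us to customers as a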

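- Learning Spring: Configuring the XML File

a-john

spring

When learning Spring, configuring its XML file is essential. Among Spring's several ways of wiring beans, XML configuration is also the most common. Here is a simple XML configuration file: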

<?xml version="1.0" encoding="UTF-8"?>

<beans xmlns="http://www.springframework.or

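- HDU 4342 History repeat itself: Simulation

aijuans

simulation

Source: http://acm.hdu.edu.cn/showproblem.php?pid=4342

Problem: first find the n-th non-square number, then compute the sum of floor(sqrt(i)) for every i from 1 to that number.

Approach: a simple simulation problem, but because the data range is large, __int64 is needed. We can first sieve out the perfect squares. To find the n-th non-square number, count how many perfect squares come before n; if there are x of them, the n-th non-square number is n + x. Note two special cases, namely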
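The original solution is cut off, so the following is a sketch of one way to carry out the approach, not the author's code. It takes x = floor(sqrt(n)) and bumps n + x by one when that sum reaches the next perfect square, which may be what the truncated "special cases" refer to (an assumption). The sum of floor(sqrt(i)) is computed in blocks: each value j < k appears 2j + 1 times, and k = floor(sqrt(m)) appears m - k² + 1 times. The function names are made up for this example, and Python integers need no `__int64`.

```python
import math


def nth_non_square(n):
    """Return the n-th positive integer that is not a perfect square."""
    x = math.isqrt(n)              # perfect squares not exceeding n
    candidate = n + x
    if candidate >= (x + 1) ** 2:  # skipping x squares crossed one more square
        candidate += 1
    return candidate


def floor_sqrt_sum(m):
    """Return sum(floor(sqrt(i)) for i in 1..m) without looping over every i."""
    k = math.isqrt(m)
    # Each j in 1..k-1 is the floor square root of the 2j+1 numbers j*j..(j+1)**2-1.
    full_blocks = sum(j * (2 * j + 1) for j in range(1, k))
    # The last, possibly partial, block runs from k*k to m.
    return full_blocks + k * (m - k * k + 1)


if __name__ == "__main__":
    target = nth_non_square(3)
    print(target, floor_sqrt_sum(target))
```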

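- What the Most Commonly Used Java JAR Packages Are For

asia007

java

What the most commonly used Java JAR packages are for

JAR package: purpose

axis.jar: SOAP engine package

commons-discovery-0.2.jar: discovers, looks up, and implements pluggable interfaces; provides common methods for general class instantiation and singleton lifecycle management.

jaxrpc.jar: component package required to run Axis

saaj.jar: methods for creating point-to-point connections to endpoints, for creating and processing SOAP messages and attachments, and for receiving and handling SOAP faults. w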

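- Fixing JSON Encoding Errors When Fetching from Struts via Ajax, and Errors in the Struts Main Controller

百合不是茶

js, json encoding, error responses

I: Fetching JSON from a custom Struts framework via ajax runs into the following problems:

1. With a forced flush of the output, the JSON is printed on the home page

2. Without a forced flush, JS parses the JSON, but what gets printed is the error JSP page, and there is no redirect to the error page

3. Changing the ajax dataType from json to text will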

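- Design Patterns Used in JUnit

bijian1013

java, design patterns, JUnit

JUnit's source code makes use of many design patterns

1. Template Method pattern

Define the skeleton of an algorithm in an operation and defer some steps to subclasses, so that subclasses can redefine certain steps of the algorithm without changing its structure. What is reused here is the structure of the algorithm, that is, its steps, while the implementation of each step can be completed in subclasses.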
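As a small illustration of the pattern described above, here is a Python sketch rather than JUnit's actual source. The base class fixes the order set up, run test, tear down, and a subclass fills in only the test step. `TestCaseSketch`, `AdditionTest`, and the `log` list are hypothetical names introduced for this example.

```python
class TestCaseSketch:
    """Base class that owns the algorithm skeleton (the template method)."""

    def run(self):
        log = []
        self.set_up(log)
        try:
            self.run_test(log)   # the step subclasses must provide
        finally:
            self.tear_down(log)  # always runs, even if the test step raises
        return log

    def set_up(self, log):
        log.append("set_up")

    def run_test(self, log):
        raise NotImplementedError("subclasses implement the test step")

    def tear_down(self, log):
        log.append("tear_down")


class AdditionTest(TestCaseSketch):
    def run_test(self, log):
        assert 1 + 1 == 2
        log.append("run_test")


if __name__ == "__main__":
    print(AdditionTest().run())
```

The subclass never calls `set_up` or `tear_down` itself; the order of the steps lives in one place, which is the part of the design being reused.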

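- Common Linux Commands (Excerpts)

sunjing

crond, chkconfig

chkconfig --list  # list all Linux services

chkconfig --add servicename  # add a Linux service

netstat -apn | grep 8080  # check what is using the port

env  # show all environment variables

echo $JAVA_HOME  # show the JAVA_HOME environment variable

Install the compiler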

yum install -y gcc

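- [Hadoop 1] Setting Up a Hadoop Pseudo-Cluster Environment

bit1129

hadoop

Drawing on several documents found online and repeatedly fixing the problems that came up while starting and running Hadoop, I finally got Hadoop 2.5.2 installed in pseudo-distributed mode and ran the wordcount example. One sign of how complicated Hadoop installation is: there are plenty of installation documents, but very few can be followed from start to finish, especially because configuration differs greatly between Hadoop versions. Hadoop 2.5.2 was released two days ago, but its configuration is no different from 2.5.0 and 2.5.1.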

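- AnyChart Chart Series, Part 5: Event Listeners

白糖_

chart

Creating a chart event listener is simple: first add the listener with addEventListener('event type', JS listener function), then define the listening logic in that JS listener function.

Take a drill-down as an example: when the user clicks a point on the chart, a popup shows the point's name and value. The code is as follows: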

<script>

// create the AnyChart instance

var chart = new AnyChart();

// add the drill-down operation

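- Web Frontend Notes

braveCS

web frontend

Web standards: separation of structure, style, and behavior

Use semantic tags

0) Tag semantics: tags with good semantics are self-explanatory, which makes it easier for search engines to understand the page structure and pick up the important content. Even with styles removed, the browser's default styles still organize the content well and keep it readable, which provides compatibility with special terminals.

1) div and span have no semantics: they serve only as region separators for block-level and inline elements, respectively. Only when the available tags cannot meet the design needs should a div be added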

- Beauty of Programming: The 24 Game

bylijinnan

Beauty of Programming

import java.util.ArrayList;

import java.util.Arrays;

import java.util.HashSet;

import java.util.List;

import java.util.Random;

import java.util.Set;

public class PointGame {

/** Beauty of Programming

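- Passing Values Between Parent and Child Pages: A Summary

chengxuyuancsdn

summary

1. showModalDialog

returnValue is a property of the HTML window object in JavaScript whose purpose is to return a value from a window. When a modal window is opened in IE with window.showModalDialog, it is used to return that window's value.

Parent page

var sonValue=window.showModalDialog("son.jsp");

Child page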

window.retu

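- [Networks and the Economy] What "Internet Plus" Means

comsci

Internet Plus

Internet Plus followed by a person's name = a network control system

Internet Plus your name = a personal online database

Daily tip: if you feel unwell, do not go out and walk around. Stay in bed and play mobile games, and above all do not drive; traffic conditions these days are not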

- Creating an Oracle View WITH CHECK OPTION

daizj

views, view, oracle

Consider the following example:

create or replace view testview

as

select empno,ename from emp where ename like 'M%'

with check option;

Here we created a view and used with check option to constrain it. Now let's look at what the view contains:

select * from testv

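- Using the ToastPlugin Plugin with Cordova 3.3

dibov

Cordova

For a Todos app I built, I wanted to implement a "press Back again to exit" feature. I tried the ToastPlugins plugin found online, but the code and articles were mostly for old versions and had many runtime problems. It took a lot of fiddling to get it working. Below I share the ToastPlugins code for Cordova 3.3.

ToastPlugin.java

package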

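- 22 C System Functions

dcj3sjt126com

c, function

C system functions. I. Math functions. The following functions are declared in the math.h header. double floor(double num): returns the largest integer not greater than num. double fmod(x, y): returns the floating-point remainder of x/y. double frexp(num, exp); double num; int *exp;: splits num into a mantissa x and a base-2 exponent n such that num = x * 2^n; the exponent n is stored in the variable pointed to by exp, and x is returned. D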
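Python's `math` module exposes functions with the same names and the same meaning as these C library functions, so their behavior can be checked quickly without compiling anything. The snippet below is a sketch for illustration only; `split_and_rebuild` is a hypothetical helper, not part of any library.

```python
import math


def split_and_rebuild(num):
    """Split num with frexp, then rebuild it as mantissa * 2**exponent."""
    mantissa, exponent = math.frexp(num)  # num == mantissa * 2**exponent
    return mantissa, exponent, mantissa * 2 ** exponent


if __name__ == "__main__":
    print(math.floor(2.7))          # largest integer not greater than 2.7
    print(math.floor(-2.5))         # rounds toward negative infinity
    print(math.fmod(7.5, 2.0))      # remainder of 7.5 / 2.0
    print(math.fmod(-7.5, 2.0))     # the result takes the sign of the first argument
    print(split_and_rebuild(8.0))
```

For nonzero inputs, the mantissa returned by `frexp` has an absolute value in the range [0.5, 1).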

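- A Workflow for Developing a Class

dcj3sjt126com

development

Drawing on my own development experience, I recently summarized a workflow for developing a class. It applies to components developed in isolation, not to system objects developed within a project. The resulting classes can be kept in a personal class library for later reuse.

The workflow is as follows:

1. Clarify what the class does and sketch its rough structure

2. Draft the class's interface

3. Choose the class name (camel case)

4. Define the attributes (set access levels)

Decide whether certain variables need to be member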

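- Java Concurrency

shuizhaosi888

java concurrency

Writing highly scalable concurrent code is an art

Java SE 5 added three new packages

java.util.concurrent

java.util.concurrent.atomic

java.util.concurrent.locks

In the Java memory model, a class's instance fields, static fields, and the elements of array objects are shared among threads, while local variables and method parameters are thread-private and are not shared.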

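- Spring Security (11): Anonymous Authentication

234390216

Spring Security, ROLE_ANONYMOUS, anonymous

Anonymous Authentication

Contents

1.1 Configuration

1.2 AuthenticationTrustResolver

For users who access anonymously, Spring Security supports creating an anonymous AnonymousAuthenticat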

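- Node.js Project Practice 0.2 [express, ajax communication...]

逐行分析JS源代码

Ajax, nodejs, express

I. Preface

In the previous section we set up a reachable Node.js system on Ubuntu and put nginx forwarding in front of it. This section was originally meant to cover the web-side service and reading and writing MongoDB, but as I wrote, the web side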

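- How to Get Multiple Submitted Checkbox Values in a Struts2 Action

lhbthanks

java, html, struts, checkbox

Method 1: receive the result as a String

In the Action you receive a single String: the value of every checked checkbox is concatenated, with the values separated by commas (,).

So simply define a property in the Action with the same name as the checkbox's name to receive the values of the checked checkboxes.

Here is the implementation:

Front-end HTML code: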

- 003. Kafka Basic Concepts

nweiren

hadoop, kafka

Kafka basic concepts: Topic, Partition, Message, Producer, Broker, Consumer. Topic: a category of message feed (Message). Partition: the physical grouping of a Topic; a

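- Installing the JDK on Linux

roadrunners

jdk, linux

1. Preparation

Create the JDK installation directory:

mkdir -p /usr/java/

Download the JDK; find the version that suits your system:

http://www.oracle.com/technetwork/java/javase/downloads/index.html

Download the JDK package into the /usr/java/ directory, then extract it: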

tar -zxvf jre-7

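- Approaches to Recovering a Forgotten Linux Root Password

tomcat_oracle

linux

1: Boot from the same version of Linux, chroot into the root partition whose password was forgotten, and change the password with passwd. 2: Add init=/bin/bash in the GRUB boot menu to enter the system; note that the partition is mounted read-only at this point, so proceed according to the system's partition layout. 3: Add single in the GRUB boot menu to enter the system in single-user mode. 4: Using one of the methods above, mount the root partition and remove the root password from /etc/passwd. For example: ro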

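- Cross-Browser HTML5 postMessage and a Simulated message Event Implementation

xueyou

jsonp, jquery, frameworks, UI, html5

postMessage is a new HTML5 method that enables communication between cross-origin windows. So far only IE8+, Firefox 3, Opera 9, Chrome 3, and Safari 4 support it; this article focuses on a cross-browser implementation of the postMessage method and the message event. postMessage differs from JSONP: the former is suited to real-time cross-origin document communication on the front end, while the latter is suited to cross-origin communication with server-side data. p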