MapReduce-WritableComparable Sorting (From 尚硅谷)

Personal study notes; all material comes from 尚硅谷.

Bilibili course link: (link missing)

1. WritableComparable Sorting

1.1 Sorting Overview

-

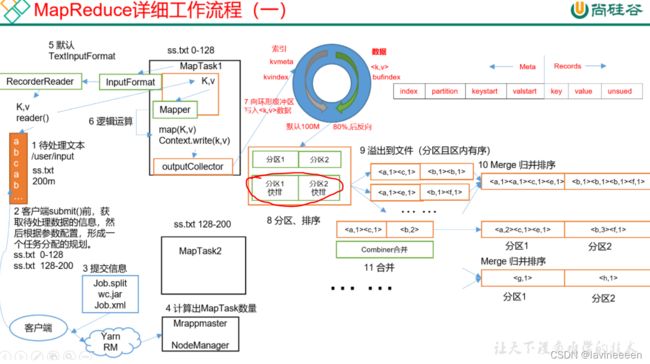

排序是MapReduce框架中最重要的操作之一。

-

MapTask和ReduceTask均会对数据按照key进行排序(若key不能进行排序则会报错)。该操作属于Hadoop的默认行为。任何应用程序中的数据会被排序,而不管逻辑上是否需要。

-

默认排序是按照字典顺序排序,且实现该排序(Map中第一次排序)的方法是快速排序。

-

对于MapTask,它会将处理的结果暂时放到环形缓冲区中,当环形缓冲区使用率达到一定阈值后,再对环形缓冲区的数据进行一次快速排序,并将这些有序数据溢写到磁盘上,而当数据预处理完毕后,它会对磁盘上所有文件进行归并排序。

-

对于ReduceTask,它从每个MapTask上远程拷贝相应的数据文件,如果文件大小超过一定阈值,则溢写到磁盘上,否则存储在内存中。如果磁盘上文件数据达到一定阈值,则进行一次归并排序以生成一个更大文件;如果内存中文件大小或者数目超过一定阈值,则进行一次合并后将数据溢写到磁盘上。当所有数据拷贝完毕后,ReduceTask统一对内存和磁盘上的所有数据进行一次归并排序。
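The "sort each spill, then merge the sorted spills" pattern described above can be imitated in plain Java. This is only a rough sketch and not Hadoop's implementation: the class `SpillMergeSketch`, the methods `sortedSpills` and `mergeRuns`, and the `spillSize` parameter are made-up names, the in-memory lists stand in for spill files, and `Collections.sort` is a merge-based sort rather than the quicksort Hadoop uses on the buffer.

```java
import java.util.ArrayList;
import java.util.Arrays;
import java.util.Collections;
import java.util.List;
import java.util.PriorityQueue;

public class SpillMergeSketch {

    // Cut the input into fixed-size "spills" and sort each one, loosely mirroring
    // how a MapTask sorts the buffer contents before writing them to disk.
    public static List<List<String>> sortedSpills(List<String> records, int spillSize) {
        List<List<String>> spills = new ArrayList<>();
        for (int start = 0; start < records.size(); start += spillSize) {
            int end = Math.min(start + spillSize, records.size());
            List<String> spill = new ArrayList<>(records.subList(start, end));
            Collections.sort(spill); // natural String order is dictionary order
            spills.add(spill);
        }
        return spills;
    }

    // K-way merge of already sorted runs, using a min-heap of cursors.
    public static List<String> mergeRuns(List<List<String>> runs) {
        // Each heap entry is {runIndex, positionInRun}.
        PriorityQueue<int[]> heap = new PriorityQueue<>(
                (a, b) -> runs.get(a[0]).get(a[1]).compareTo(runs.get(b[0]).get(b[1])));
        for (int i = 0; i < runs.size(); i++) {
            if (!runs.get(i).isEmpty()) {
                heap.add(new int[] {i, 0});
            }
        }
        List<String> merged = new ArrayList<>();
        while (!heap.isEmpty()) {
            int[] cursor = heap.poll();
            List<String> run = runs.get(cursor[0]);
            merged.add(run.get(cursor[1]));
            if (cursor[1] + 1 < run.size()) {
                heap.add(new int[] {cursor[0], cursor[1] + 1});
            }
        }
        return merged;
    }

    public static void main(String[] args) {
        List<String> keys = Arrays.asList("pear", "apple", "fig", "banana", "cherry");
        List<List<String>> spills = sortedSpills(keys, 2);
        System.out.println(spills);            // [[apple, pear], [banana, fig], [cherry]]
        System.out.println(mergeRuns(spills)); // [apple, banana, cherry, fig, pear]
    }
}
```

Each spill is sorted on its own, so the merge step only ever compares the current head of every run. That is why merge sort is a natural fit once the data no longer fits in one buffer.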

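1.2 Types of Sorting

- Partial sort

MapReduce sorts the data set by the keys of the input records, guaranteeing that each output file is internally sorted.

- Total sort

The final output is a single, internally sorted file. This is achieved by configuring only one ReduceTask, but the approach is extremely inefficient for large files: one machine processes all the data, so the parallelism that MapReduce provides is lost entirely.

- Auxiliary sort (GroupingComparator grouping)

Groups keys on the Reduce side. Use it when the key is a bean object and keys that share one or more fields (but are not equal across all fields) should be passed to the same reduce method call.

- Secondary sort

In a custom sort, if compareTo compares on two conditions, it is a secondary sort.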
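The difference between the sort order and the grouping used by an auxiliary sort is easier to see in a small plain-Java sketch. Everything here is hypothetical: `OrderKey`, its `orderId` and `price` fields, and the `SORT` and `GROUP` comparators are invented for illustration, and no Hadoop class is involved. Keys are sorted on two conditions, then consecutive keys that the grouping comparator considers equal are put into one group, much like values that reach the same reduce call.

```java
import java.util.ArrayList;
import java.util.Arrays;
import java.util.Comparator;
import java.util.List;

public class GroupingSketch {

    // Hypothetical composite key with two fields.
    public static final class OrderKey {
        final int orderId;
        final int price;
        public OrderKey(int orderId, int price) {
            this.orderId = orderId;
            this.price = price;
        }
        @Override
        public String toString() {
            return orderId + ":" + price;
        }
    }

    // Sort order with two conditions: orderId ascending, then price descending.
    static final Comparator<OrderKey> SORT =
            Comparator.comparingInt((OrderKey k) -> k.orderId)
                    .thenComparing(Comparator.comparingInt((OrderKey k) -> k.price).reversed());

    // Grouping looks at orderId only, so keys that differ in price still share a group.
    static final Comparator<OrderKey> GROUP =
            Comparator.comparingInt((OrderKey k) -> k.orderId);

    public static List<List<OrderKey>> group(List<OrderKey> keys) {
        List<OrderKey> sorted = new ArrayList<>(keys);
        sorted.sort(SORT);
        List<List<OrderKey>> groups = new ArrayList<>();
        OrderKey previous = null;
        for (OrderKey key : sorted) {
            // Start a new group whenever the grouping comparator reports a change.
            if (previous == null || GROUP.compare(previous, key) != 0) {
                groups.add(new ArrayList<>());
            }
            groups.get(groups.size() - 1).add(key);
            previous = key;
        }
        return groups;
    }

    public static void main(String[] args) {
        List<OrderKey> keys = Arrays.asList(
                new OrderKey(2, 30), new OrderKey(1, 10),
                new OrderKey(1, 50), new OrderKey(2, 70));
        System.out.println(group(keys)); // [[1:50, 1:10], [2:70, 2:30]]
    }
}
```

Because the keys are already sorted, the first element of each group is the one with the highest price for that `orderId`.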

1.3 WritableComparable Sorting in Practice (Total Sort)

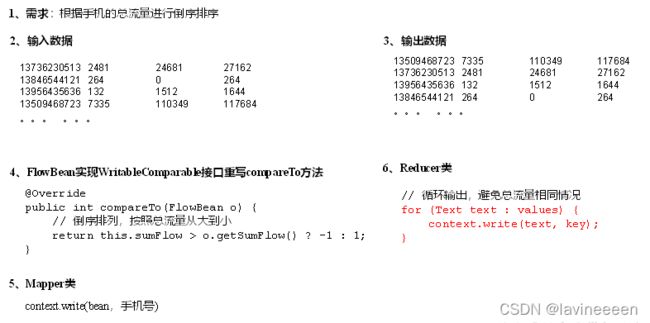

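(1) Requirement

Take the output of the earlier case that totals traffic per phone number and sort it by total flow in descending order; when total flows are equal, sort by upstream flow in ascending order.

Data link: (link missing)

Extraction code: 9x8j

(2) Analysis

(3) Implementation

- The FlowBean object adds comparison to the version from requirement 1 (a compareTo method is added)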

package com.atguigu.mapreduce.writableComparable;

import org.apache.hadoop.io.WritableComparable;

import java.io.DataInput;

import java.io.DataOutput;

import java.io.IOException;

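/**

1. Define a class that implements the WritableComparable interface

2. Override the serialization and deserialization methods

3. Provide a no-argument constructor

4. Override toString

5. Add a compareTo method

*/

public class FlowBean implements WritableComparable<FlowBean>{// implements WritableComparable (Writable plus Comparable) so it can be used as a sort key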

private long upFlow;

private long downFlow;

private long sumFlow;

// Deserialization calls the no-argument constructor via reflection, so one must exist

public FlowBean() {

}

public long getUpFlow() {

return upFlow;

}

public void setUpFlow(long upFlow) {

this.upFlow = upFlow;

}

public long getDownFlow() {

return downFlow;

}

public void setDownFlow(long downFlow) {

this.downFlow = downFlow;

}

public long getSumFlow() {

return sumFlow;

}

public void setSumFlow() {

this.sumFlow = this.upFlow + this.downFlow;

}

// Serialization

@Override

public void write(DataOutput dataOutput) throws IOException {

dataOutput.writeLong(upFlow);

dataOutput.writeLong(downFlow);

dataOutput.writeLong(sumFlow);

}

// Deserialization

@Override

public void readFields(DataInput dataInput) throws IOException {

this.upFlow = dataInput.readLong();

this.downFlow = dataInput.readLong();

this.sumFlow = dataInput.readLong();

}

// Fields must be read back in the same order in which they were written

@Override

public String toString() {

return upFlow + "\t" + downFlow + "\t" + sumFlow;

}

@Override

public int compareTo(FlowBean o){

if (this.sumFlow > o.sumFlow){

return -1;

}else if (this.sumFlow < o.sumFlow){

return 1;

}else{

// Secondary sort: ascending by upstream flow

if (this.upFlow > o.upFlow){

return 1;

}else if (this.upFlow < o.upFlow){

return -1;

}else {

return 0;

}

}

}

}
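The two-level comparison is easy to get wrong by comparing the wrong pair of fields, so here is a compact sketch of the same ordering written with `Long.compare`. The `Flow` class is a hypothetical stand-in for FlowBean without the Hadoop Writable methods, and `FlowOrderSketch`, `compare`, and `sorted` are made-up names; the sample numbers are invented.

```java
import java.util.ArrayList;
import java.util.Arrays;
import java.util.List;

public class FlowOrderSketch {

    // Hypothetical stand-in for FlowBean, without write/readFields.
    public static final class Flow {
        final long upFlow;
        final long downFlow;
        final long sumFlow;
        public Flow(long upFlow, long downFlow) {
            this.upFlow = upFlow;
            this.downFlow = downFlow;
            this.sumFlow = upFlow + downFlow;
        }
        @Override
        public String toString() {
            return upFlow + "\t" + downFlow + "\t" + sumFlow;
        }
    }

    // Same ordering as the compareTo above: sumFlow descending, then upFlow ascending.
    public static int compare(Flow a, Flow b) {
        int bySum = Long.compare(b.sumFlow, a.sumFlow); // arguments swapped, so descending
        return bySum != 0 ? bySum : Long.compare(a.upFlow, b.upFlow);
    }

    public static List<Flow> sorted(List<Flow> flows) {
        List<Flow> copy = new ArrayList<>(flows);
        copy.sort(FlowOrderSketch::compare);
        return copy;
    }

    public static void main(String[] args) {
        List<Flow> flows = Arrays.asList(
                new Flow(300, 700),   // sum 1000
                new Flow(100, 900),   // sum 1000, smaller upFlow
                new Flow(2000, 500)); // sum 2500
        for (Flow f : sorted(flows)) {
            System.out.println(f);
        }
    }
}
```

With this input the record with sum 2500 comes first, followed by the two records with sum 1000 in ascending upstream order (100 before 300).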

- Mapper class

package com.atguigu.mapreduce.writableComparable;

import org.apache.hadoop.io.LongWritable;

import org.apache.hadoop.io.Text;

import org.apache.hadoop.mapreduce.Mapper;

import java.io.IOException;

public class FlowMapper extends Mapper<LongWritable,Text,FlowBean,Text>{// the Mapper's output key is FlowBean and its output value is Text

private FlowBean outK = new FlowBean();

private Text outV = new Text();

@Override

protected void map(LongWritable key, Text value, Mapper<LongWritable, Text, FlowBean, Text>.Context context) throws IOException, InterruptedException {

// Read one line

String line = value.toString();

// Split on tabs

String[] split = line.split("\t");

// Populate the output value (phone number) and the output key (flow fields)

outV.set(split[0]);

outK.setUpFlow(Long.parseLong(split[1]));

outK.setDownFlow(Long.parseLong(split[2]));

outK.setSumFlow();

// Emit the key-value pair

context.write(outK,outV);

}

}

- Reducer class

package com.atguigu.mapreduce.writableComparable;

import org.apache.hadoop.io.Text;

import org.apache.hadoop.mapreduce.Reducer;

import java.io.IOException;

public class FlowReducer extends Reducer<FlowBean,Text,Text, FlowBean> {

@Override

protected void reduce(FlowBean key, Iterable<Text> values, Reducer<FlowBean, Text, Text, FlowBean>.Context context) throws IOException, InterruptedException {

// Several phone numbers can share an equal FlowBean key, so write one output record per value

for (Text value:values){

context.write(value,key);

}

}

}

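- Driver class

package com.atguigu.mapreduce.writableComparable;

import org.apache.hadoop.conf.Configuration;

import org.apache.hadoop.fs.Path;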

import org.apache.hadoop.io.Text;

import org.apache.hadoop.mapreduce.Job;

import org.apache.hadoop.mapreduce.lib.input.FileInputFormat;

import org.apache.hadoop.mapreduce.lib.output.FileOutputFormat;

import java.io.IOException;

public class FlowDriver {

public static void main(String[] args) throws IOException, InterruptedException, ClassNotFoundException {

//1. Get the configuration and create the Job object

Configuration conf = new Configuration();

Job job = Job.getInstance(conf);

//2. Associate the jar that contains this Driver

job.setJarByClass(FlowDriver.class);

//3. Set the Mapper and Reducer classes

job.setMapperClass(FlowMapper.class);

job.setReducerClass(FlowReducer.class);

//4. Set the Mapper output key/value types

//The Mapper's output key is FlowBean and its value is Text (the phone number)

job.setMapOutputKeyClass(FlowBean.class);

job.setMapOutputValueClass(Text.class);

//5. Set the final output key/value types

job.setOutputKeyClass(Text.class);

job.setOutputValueClass(FlowBean.class);

//6. Set the input and output paths

FileInputFormat.setInputPaths(job,new Path("D:\\downloads\\hadoop-3.1.0\\data\\output\\output2"));

FileOutputFormat.setOutputPath(job,new Path("D:\\downloads\\hadoop-3.1.0\\data\\output\\output6"));

//7. Submit the job

boolean result = job.waitForCompletion(true);

System.exit(result?0:1);

}

}

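1.4 WritableComparable Sorting in Practice (Sorting Within Partitions)

(1) Requirement

Each province is identified by a phone-number prefix. Within the output file for each province, records must be sorted by total flow.

(2) Requirement analysis

Building on the previous requirement, add a custom partitioner class that assigns partitions by the phone-number prefix of each province.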
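Before looking at the Hadoop classes, the intended result (partition first, then sort inside each partition) can be imitated in plain Java. This is only a sketch under assumptions: `PartitionSortSketch`, `Record`, `partitionOf`, and `partitionAndSort` are made-up names, the phone numbers and flow values are invented, and the prefix-to-partition mapping simply mirrors the five partitions used in this case.

```java
import java.util.ArrayList;
import java.util.Arrays;
import java.util.Comparator;
import java.util.List;
import java.util.Map;
import java.util.TreeMap;

public class PartitionSortSketch {

    // Hypothetical record: a phone number plus its total flow.
    public static final class Record {
        final String phone;
        final long sumFlow;
        public Record(String phone, long sumFlow) {
            this.phone = phone;
            this.sumFlow = sumFlow;
        }
        @Override
        public String toString() {
            return phone + "=" + sumFlow;
        }
    }

    // Choose a partition from the first three digits of the phone number.
    public static int partitionOf(String phone) {
        switch (phone.substring(0, 3)) {
            case "136": return 0;
            case "137": return 1;
            case "138": return 2;
            case "139": return 3;
            default:    return 4;
        }
    }

    // Route every record to its partition, then sort each partition by sumFlow descending.
    public static Map<Integer, List<Record>> partitionAndSort(List<Record> records) {
        Map<Integer, List<Record>> partitions = new TreeMap<>();
        for (Record r : records) {
            partitions.computeIfAbsent(partitionOf(r.phone), p -> new ArrayList<>()).add(r);
        }
        for (List<Record> partition : partitions.values()) {
            partition.sort(Comparator.comparingLong((Record r) -> r.sumFlow).reversed());
        }
        return partitions;
    }

    public static void main(String[] args) {
        List<Record> records = Arrays.asList(
                new Record("13600000001", 500),
                new Record("13700000002", 900),
                new Record("13600000003", 1200),
                new Record("15900000004", 300));
        // {0=[13600000003=1200, 13600000001=500], 1=[13700000002=900], 4=[15900000004=300]}
        System.out.println(partitionAndSort(records));
    }
}
```

Each list plays the role of one output file: the order is only guaranteed inside a partition, not across partitions.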

(3) Analysis

(4) Add the custom partitioner class

- ProvincePartitioner2

package com.atguigu.mapreduce.PartitionerandwritableComparable;

import org.apache.hadoop.io.Text;

import org.apache.hadoop.mapreduce.Partitioner;

public class ProvincePartitioner2 extends Partitioner<FlowBean, Text> {

@Override

public int getPartition(FlowBean flowbean,Text text, int i){

// The map output value is the phone number; its first three digits select the partition

String phone = text.toString();

String prePhone = phone.substring(0,3);

int partition;

if ("136".equals(prePhone)){

partition = 0;

}else if ("137".equals(prePhone)){

partition = 1;

}else if ("138".equals(prePhone)){

partition = 2;

}else if ("139".equals(prePhone)){

partition = 3;

}else{

partition = 4;

}

return partition;

}

}

- FlowBean

package com.atguigu.mapreduce.PartitionerandwritableComparable;

import org.apache.hadoop.io.WritableComparable;

import java.io.DataInput;

import java.io.DataOutput;

import java.io.IOException;

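/**

1. Define a class that implements the WritableComparable interface

2. Override the serialization and deserialization methods

3. Provide a no-argument constructor

4. Override toString

5. Add a compareTo method

*/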

public class FlowBean implements WritableComparable<FlowBean>{

private long upFlow;

private long downFlow;

private long sumFlow;

public FlowBean(){

}

public long getUpFlow(){return upFlow;}

public void setUpFlow(long upFlow){this.upFlow = upFlow;}

public long getDownFlow(){return downFlow;}

public void setDownFlow(long downFlow){this.downFlow = downFlow;}

public long getSumFlow(){return sumFlow;}

public void setSumFlow(){this.sumFlow = this.upFlow+this.downFlow;}

@Override

public void write(DataOutput dataOutput) throws IOException{

dataOutput.writeLong(upFlow);

dataOutput.writeLong(downFlow);

dataOutput.writeLong(sumFlow);

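}

// Deserialization: read the fields in the same order in which they were written

@Override

public void readFields(DataInput dataInput) throws IOException{

this.upFlow = dataInput.readLong();

this.downFlow = dataInput.readLong();

this.sumFlow = dataInput.readLong();

}

@Override

public String toString(){

return upFlow + "\t" + downFlow + "\t" + sumFlow;

}

// Descending by total flow; when total flows are equal, ascending by upstream flow

@Override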

public int compareTo(FlowBean o){

if (this.sumFlow > o.sumFlow){

return -1;

}else if (this.sumFlow < o.sumFlow){

return 1;

}else {

if (this.upFlow > o.upFlow){

return 1;

}else if (this.upFlow < o.upFlow){

return -1;

}else{

return 0;

}

}

}

}
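Since deserialization has to read the fields in exactly the order serialization wrote them, a round trip through in-memory streams is a cheap way to check a bean before running a job. The sketch below uses only `java.io`; `RoundTripSketch`, `Flow`, and `roundTrip` are hypothetical names, and `Flow` merely copies the three long fields of FlowBean rather than implementing Hadoop's interfaces.

```java
import java.io.ByteArrayInputStream;
import java.io.ByteArrayOutputStream;
import java.io.DataInput;
import java.io.DataInputStream;
import java.io.DataOutput;
import java.io.DataOutputStream;
import java.io.IOException;
import java.io.UncheckedIOException;

public class RoundTripSketch {

    // Hypothetical stand-in with the same three fields as FlowBean.
    public static final class Flow {
        public long upFlow;
        public long downFlow;
        public long sumFlow;

        void write(DataOutput out) throws IOException {
            out.writeLong(upFlow);
            out.writeLong(downFlow);
            out.writeLong(sumFlow);
        }

        // Reads in the same order as write(); swapping two lines here would
        // silently swap the field values after the round trip.
        void readFields(DataInput in) throws IOException {
            upFlow = in.readLong();
            downFlow = in.readLong();
            sumFlow = in.readLong();
        }
    }

    // Serialize to a byte array, then deserialize into a fresh object.
    public static Flow roundTrip(Flow original) {
        try {
            ByteArrayOutputStream bytes = new ByteArrayOutputStream();
            original.write(new DataOutputStream(bytes));
            Flow copy = new Flow();
            copy.readFields(new DataInputStream(new ByteArrayInputStream(bytes.toByteArray())));
            return copy;
        } catch (IOException e) {
            throw new UncheckedIOException(e);
        }
    }

    public static void main(String[] args) {
        Flow flow = new Flow();
        flow.upFlow = 100;
        flow.downFlow = 900;
        flow.sumFlow = 1000;
        Flow copy = roundTrip(flow);
        System.out.println(copy.upFlow + "\t" + copy.downFlow + "\t" + copy.sumFlow);
    }
}
```

If the copy does not match the original field by field, the read and write orders have drifted apart.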

- Mapper class

package com.atguigu.mapreduce.PartitionerandwritableComparable;

import org.apache.hadoop.io.LongWritable;

import org.apache.hadoop.io.Text;

import org.apache.hadoop.mapreduce.Mapper;

import java.io.IOException;

public class FlowMapper extends Mapper<LongWritable,Text,FlowBean,Text>{

private FlowBean outK = new FlowBean();

private Text outV = new Text();

@Override

protected void map(LongWritable key,Text value,Mapper<LongWritable, Text, FlowBean, Text>.Context context) throws IOException,InterruptedException{

// Read one line

String line = value.toString();

// Split on tabs

String[] split = line.split("\t");

// Populate the output value (phone number) and the output key (flow fields)

outV.set(split[0]);

outK.setUpFlow(Long.parseLong(split[1]));

outK.setDownFlow(Long.parseLong(split[2]));

outK.setSumFlow();

// Emit the key-value pair

context.write(outK,outV);

}

}

- Reducer class

package com.atguigu.mapreduce.PartitionerandwritableComparable;

import org.apache.hadoop.io.Text;

import org.apache.hadoop.mapreduce.Reducer;

import java.io.IOException;

public class FlowReducer extends Reducer<FlowBean,Text,Text,FlowBean>{

@Override

protected void reduce (FlowBean key,Iterable<Text> values, Reducer<FlowBean, Text, Text, FlowBean>.Context context)throws IOException, InterruptedException{

for (Text value:values){

context.write(value,key);

}

}

}

- Driver class

package com.atguigu.mapreduce.PartitionerandwritableComparable;

import org.apache.hadoop.conf.Configuration;

import org.apache.hadoop.fs.Path;

import org.apache.hadoop.io.Text;

import org.apache.hadoop.mapreduce.Job;

import org.apache.hadoop.mapreduce.lib.input.FileInputFormat;

import org.apache.hadoop.mapreduce.lib.output.FileOutputFormat;

import java.io.IOException;

public class FlowDriver{

public static void main(String[] args) throws IOException,InterruptedException,ClassNotFoundException{

Configuration conf = new Configuration();

Job job = Job.getInstance(conf);

job.setJarByClass(FlowDriver.class);

job.setMapperClass(FlowMapper.class);

job.setReducerClass(FlowReducer.class);

job.setMapOutputKeyClass(FlowBean.class);

job.setMapOutputValueClass(Text.class);

job.setOutputKeyClass(Text.class);

job.setOutputValueClass(FlowBean.class);

job.setPartitionerClass(ProvincePartitioner2.class);

job.setNumReduceTasks(5);

FileInputFormat.setInputPaths(job,new Path("D:\\downloads\\hadoop-3.1.0\\data\\output\\output2"));

FileOutputFormat.setOutputPath(job,new Path("D:\\downloads\\hadoop-3.1.0\\data\\output\\output8"));

boolean result = job.waitForCompletion(true);

System.exit(result?0:1);

}

}