Level 3: Installing a Production-Grade, Highly Available K8s Cluster from Binaries

------> The course videos are also published on Toutiao and Bilibili

Hi everyone, I'm Boge (博哥爱运维). Below are the exact versions of the OS and components used for this K8s cluster installation:

- Ubuntu 22.04.3 LTS

- k8s: v1.27.5

- containerd: 1.6.23

- etcd: v3.5.9

- coredns: 1.11.1

- calico: v3.24.6

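Below is the IP and host plan for the VM cluster before installation (fully simulating the K8s cluster of a small to medium-sized company):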

| IP | hostname | role | resources (CPU/RAM) |
|---|---|---|---|
| 10.0.1.201 | node-1 | master/worker node | 2c/4g (ingress-nginx) |
| 10.0.1.202 | node-2 | master/worker node | 2c/4g (harbor) |
| 10.0.1.203 | node-3 | worker node | 2c/4g |
| 10.0.1.204 | node-4 | worker node | 2c/4g |
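If you also want the hostnames from this plan to resolve on every machine, one option (an assumption on my part; the install script does not do this for you) is to append them to /etc/hosts. A minimal sketch of a hypothetical helper that prints those entries, assuming the node-1, node-2, ... naming used in the table:

```shell
#!/usr/bin/env bash
# Hypothetical helper: print /etc/hosts lines for the planned nodes.
# Usage: gen_hosts_entries <net-prefix> <host-number>...
# Assumes hostnames follow the node-1, node-2, ... pattern from the plan.
gen_hosts_entries() {
  local prefix=$1 host i=1
  shift
  for host in "$@"; do
    printf '%s.%s node-%d\n' "$prefix" "$host" "$i"
    i=$((i + 1))
  done
}
```

For example, `gen_hosts_entries 10.0.1 201 202 203 204 | tee -a /etc/hosts` would add the four planned nodes; review the output before appending it for real.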

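Clearly, the gear from the previous levels isn't quite enough yet. Let's stock up on more gear and ammunition in this level as we fight to finally conquer K8s!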

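Here we use the open-source project https://github.com/easzlab/kubeasz, which installs K8s from binaries and meets the requirements for stable enterprise operation. It is an excellent project: I have watched its star count grow from 0 to 9.6k at the time of writing, and it keeps shipping new releases, roughly following the K8s release cadence.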

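In my day-to-day work I have used this project to build self-managed production K8s clusters serving business workloads in regions around the world (mainland China, North America, Europe, Southeast Asia) and on all the major cloud vendors (Alibaba Cloud, Huawei Cloud, Tencent Cloud, Baidu Cloud, Volcano Engine, China Mobile Cloud, AWS, Google Cloud, etc.). The longest-running of these clusters has been up for about 4 years without any major problems.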

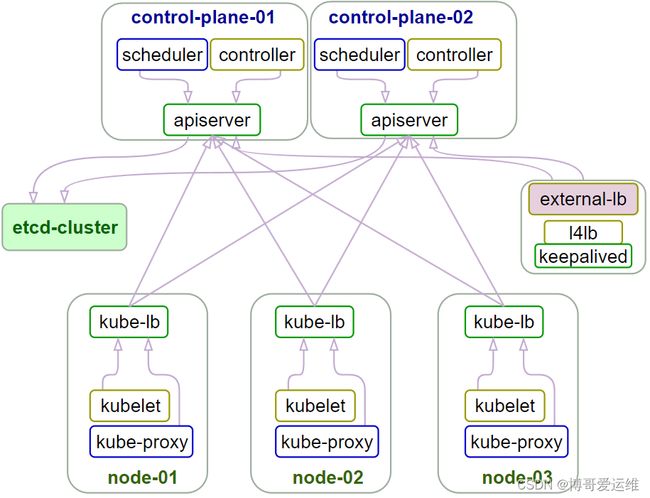

Deployment architecture diagram

Installation checklist:

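- Set up passwordless SSH login from the deploy machine to all K8s nodes

- Install Python 2 and pip on the deploy machine, then install Ansible

- Customize the K8s cluster configuration and start the deployment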
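Before running the installer, it can help to confirm which of the tools from this checklist are already present on the deploy machine. A minimal sketch of a hypothetical pre-flight check (the function name and the tool list are my own choices, not part of the install script):

```shell
#!/usr/bin/env bash
# Hypothetical pre-flight check for the deploy machine: report which of the
# given commands are on PATH. Returns 1 if any of them is missing.
check_tools() {
  local tool missing=0
  for tool in "$@"; do
    if command -v "$tool" >/dev/null 2>&1; then
      echo "ok: $tool"
    else
      echo "missing: $tool"
      missing=1
    fi
  done
  return "$missing"
}
```

For example, `check_tools python2 pip ansible sshpass ssh-copy-id`; the install script installs most of these itself, so a "missing" line is informational rather than an error.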

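# Note: an online installation downloads files and images from GitHub and Docker Hub, and access to these overseas sites can sometimes be very slow. I have therefore prepared a complete offline installation package (download link below). Put it in the same directory as the installation script k8s_install_new.sh and then run the installation command.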

# The K8s version in this offline package is v1.27.5

https://cloud.189.cn/web/share?code=Yj6nEv6jQ3Mv (access code: 3pms)

Deploying a self-built K8s cluster

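Mounting the data disk

Note: skip this step if you don't need a separate data disk.

# Create the mount point and the bind-mount targets

mkdir -p /var/lib/container /var/lib/kubelet /var/lib/docker /nfs_dir

# Format the data disk directly without partitioning, assuming the data disk is /dev/vdb

mkfs.ext4 /dev/vdb

# Then edit /etc/fstab and add the following lines:

/dev/vdb /var/lib/container/ ext4 defaults 0 0

/var/lib/container/kubelet /var/lib/kubelet none defaults,bind 0 0

/var/lib/container/docker /var/lib/docker none defaults,bind 0 0

/var/lib/container/nfs_dir /nfs_dir none defaults,bind 0 0

# Mount the data disk first and create the bind-mount sources on it (directories created before mounting would be hidden by the mount), then apply all mounts

mount /var/lib/container

mkdir -p /var/lib/container/{kubelet,docker,nfs_dir}

mount -a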
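The fstab entries above follow a fixed pattern: one line mounts the data disk at /var/lib/container, and each subdirectory on it is bind-mounted onto its real location. A minimal sketch of a hypothetical helper that prints those lines for a given device, handy if your disk is not /dev/vdb (you can also pass a `UUID=...` spec obtained from `blkid`):

```shell
#!/usr/bin/env bash
# Hypothetical helper: print the /etc/fstab lines for the data-disk layout.
# $1 = block device or UUID=... spec (e.g. /dev/vdb)
gen_fstab() {
  local dev=$1 base=/var/lib/container pair
  # The data disk itself is mounted at /var/lib/container.
  printf '%s %s/ ext4 defaults 0 0\n' "$dev" "$base"
  # Each subdirectory on the data disk is bind-mounted onto its real path.
  for pair in kubelet:/var/lib/kubelet docker:/var/lib/docker nfs_dir:/nfs_dir; do
    printf '%s/%s %s none defaults,bind 0 0\n' "$base" "${pair%%:*}" "${pair#*:}"
  done
}
```

For example, `gen_fstab /dev/vdc >> /etc/fstab` for a disk named /dev/vdc; check the file afterwards before running `mount -a`.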

About my K8s installation script

The deployment script calls the core project on GitHub, https://github.com/easzlab/kubeasz; it is a thin wrapper on top of that project that simplifies deploying K8s from binaries.

Operating systems this script has been used on: CentOS 7, Ubuntu 16.04/18.04/20.04/22.04

Note: for K8s >= 1.24, containerd is the only supported CRI.

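# Example installation commands (assuming the root password is rootPassword; if passwordless login is already set up, any value works here. 10.0.1 is the internal network prefix, followed in order by the host numbers, the CRI container runtime, the CNI network plugin, our own domain boge.com, and the K8s cluster name test):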

# Single-node deployment

bash k8s_install_new.sh rootPassword 10.0.1 201 containerd calico boge.com test

# Multi-node deployment

bash k8s_install_new.sh rootPassword 10.0.1 201\ 202\ 203\ 204 containerd calico boge.com test
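Note that `201\ 202\ 203\ 204` is a single argument: the backslashes escape the spaces, so all host numbers reach the script together as `$3`, which keeps the total at the 7 arguments the script checks for. They are split later when the script loops over them. A minimal sketch of that expansion (the function name is hypothetical):

```shell
#!/usr/bin/env bash
# Hypothetical helper mirroring how the script combines $2 (network prefix)
# and $3 (space-separated host numbers) into node IPs.
expand_hosts() {
  local netnum=$1 nethosts=$2 host
  # Unquoted on purpose: word splitting turns "201 202" into two items.
  for host in $nethosts; do
    echo "${netnum}.${host}"
  done
}
```

For example, `expand_hosts 10.0.1 "201 202 203 204"` prints the four node IPs, one per line.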

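# Note: if you deploy overseas and the cluster name does not contain aws, you can comment out this part of the installation script to avoid slow pip installs (in the full script below, the mirror is only configured when the cluster name contains the word cn):

if ! echo "$clustername" | grep -iwq aws; then

mkdir -p ~/.pip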

cat > ~/.pip/pip.conf <<CB

[global]

index-url = https://mirrors.aliyun.com/pypi/simple

[install]

trusted-host=mirrors.aliyun.com

CB

fi
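The same mirror logic can be packaged as a function so it is easy to reuse or try out. A minimal sketch; the function name and the directory parameter are hypothetical (the script itself writes to ~/.pip/pip.conf):

```shell
#!/usr/bin/env bash
# Hypothetical helper: write an Aliyun pip mirror config into $2/pip.conf
# unless the cluster name ($1) contains the word "aws".
configure_pip_mirror() {
  local clustername=$1 pipdir=$2
  if echo "$clustername" | grep -iwq aws; then
    return 0  # overseas (AWS) cluster: keep the default PyPI index
  fi
  mkdir -p "$pipdir"
  cat > "$pipdir/pip.conf" <<'EOF'
[global]
index-url = https://mirrors.aliyun.com/pypi/simple

[install]
trusted-host = mirrors.aliyun.com
EOF
}
```

For example, `configure_pip_mirror test ~/.pip` writes the mirror config, while `configure_pip_mirror prod-aws ~/.pip` leaves pip untouched.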

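# Running the command above performs an online installation. To deploy in an offline environment, first run an installation on a local VM, pack the /etc/kubeasz directory into kubeasz.tar.gz, then on the machine without network access put the script and this archive in the same directory and run the same command; that is an offline installation.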
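Because the script unpacks the archive with `tar xvf ${software_packet} -C /etc/`, the archive must contain the top-level `kubeasz/` directory, and its name must match `./kubeasz*.tar.gz`. A minimal sketch of packing it that way; the function is hypothetical and the source directory is a parameter so you can try it on a scratch copy first:

```shell
#!/usr/bin/env bash
# Hypothetical helper: pack <parent>/kubeasz into <out> so that
# `tar xvf <out> -C /etc/` recreates /etc/kubeasz.
pack_kubeasz() {
  local parent=${1:-/etc} out=${2:-kubeasz.tar.gz}
  # -C switches into the parent first, so archive paths start with kubeasz/
  tar czf "$out" -C "$parent" kubeasz
}
```

On the VM where the online installation succeeded, `pack_kubeasz /etc ./kubeasz.tar.gz` produces the archive to copy next to k8s_install_new.sh on the offline machine.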

Full deployment script: k8s_install_new.sh

#!/bin/bash

# author: boge

# description: this shell script uses ansible to deploy K8s from binaries, for simplicity

# docker-tag

# curl -s -S "https://registry.hub.docker.com/v2/repositories/easzlab/kubeasz-k8s-bin/tags/" | jq '."results"[]["name"]' |sort -rn

# github: https://github.com/easzlab/kubeasz

#########################################################################

# OSes this script has been used on: CentOS/RedHat 7, Ubuntu 16.04/18.04/20.04/22.04

#########################################################################

echo "Remember to mount the data disk first. If it is already mounted, press Enter; otherwise press Ctrl+C to abort the script."

read -p "" xxxxxx

# Check the arguments

[ $# -ne 7 ] && echo -e "Usage: $0 rootpasswd netnum nethosts cri cni domain-name k8s-cluster-name\nExample: bash $0 rootPassword 10.0.1 201\ 202\ 203\ 204 [containerd|docker] [calico|flannel|cilium] boge.com test-cn\n" && exit 11

# Variable definitions

export release=3.6.2 # supports multiple K8s versions; valid values for k8s_ver below: v1.28.1 v1.27.5 v1.26.8 v1.25.13 v1.24.17

export k8s_ver=v1.27.5 # | docker-tag tags easzlab/kubeasz-k8s-bin  Note: for K8s >= 1.24, only containerd is supported

rootpasswd=$1

netnum=$2

nethosts=$3

cri=$4

cni=$5

domainName=$6

clustername=$7

if ls -1v ./kubeasz*.tar.gz &>/dev/null;then software_packet="$(ls -1v ./kubeasz*.tar.gz )";else software_packet="";fi

pwd="/etc/kubeasz"

# Upgrade the packages on the deploy machine

if cat /etc/redhat-release &>/dev/null;then

yum update -y

else

apt-get update && apt-get upgrade -y && apt-get dist-upgrade -y

[ $? -ne 0 ] && apt-get -yf install

fi

# Check the Python environment on the deploy machine

python2 -V &>/dev/null

if [ $? -ne 0 ];then

if cat /etc/redhat-release &>/dev/null;then

yum install -y gcc openssl-devel bzip2-devel

wget https://www.python.org/ftp/python/2.7.16/Python-2.7.16.tgz

tar xzf Python-2.7.16.tgz

cd Python-2.7.16

./configure --enable-optimizations

make altinstall

ln -s /usr/local/bin/python2.7 /usr/bin/python

cd -

else

apt-get install -y python2.7 && ln -s /usr/bin/python2.7 /usr/bin/python

fi

fi

python3 -V &>/dev/null

if [ $? -ne 0 ];then

if cat /etc/redhat-release &>/dev/null;then

yum install python3 -y

else

apt-get install -y python3

fi

fi

# Configure a pip mirror on the deploy machine (only when the cluster name contains the word cn)

if echo "$clustername" | grep -iwq cn; then

mkdir -p ~/.pip

cat > ~/.pip/pip.conf <<CB

[global]

index-url = https://mirrors.aliyun.com/pypi/simple

[install]

trusted-host=mirrors.aliyun.com

CB

fi

# Install the required packages on the deploy machine

which python || ln -svf `which python2.7` /usr/bin/python

if cat /etc/redhat-release &>/dev/null;then

yum install git epel-release python-pip sshpass -y

[ -f ./get-pip.py ] && python ./get-pip.py || {

wget https://bootstrap.pypa.io/pip/2.7/get-pip.py && python get-pip.py

}

else

if grep -Ew '20.04|22.04' /etc/issue &>/dev/null;then apt-get install sshpass -y;else apt-get install python-pip sshpass -y;fi

[ -f ./get-pip.py ] && python ./get-pip.py || {

wget https://bootstrap.pypa.io/pip/2.7/get-pip.py && python get-pip.py

}

fi

python -m pip install --upgrade "pip < 21.0"

which pip || ln -svf `which pip` /usr/bin/pip

pip -V

pip install setuptools -U

pip install --no-cache-dir ansible netaddr

# Set up passwordless SSH from the deploy machine to the other nodes

for host in `echo "${nethosts}"`

do

echo "============ ${netnum}.${host} ===========";

if [[ ${USER} == 'root' ]];then

[ ! -f /${USER}/.ssh/id_rsa ] &&\

ssh-keygen -t rsa -P '' -f /${USER}/.ssh/id_rsa

else

[ ! -f /home/${USER}/.ssh/id_rsa ] &&\

ssh-keygen -t rsa -P '' -f /home/${USER}/.ssh/id_rsa

fi

sshpass -p ${rootpasswd} ssh-copy-id -o StrictHostKeyChecking=no ${USER}@${netnum}.${host}

if cat /etc/redhat-release &>/dev/null;then

ssh -o StrictHostKeyChecking=no ${USER}@${netnum}.${host} "yum update -y"

else

ssh -o StrictHostKeyChecking=no ${USER}@${netnum}.${host} "apt-get update && apt-get upgrade -y && apt-get dist-upgrade -y"

[ $? -ne 0 ] && ssh -o StrictHostKeyChecking=no ${USER}@${netnum}.${host} "apt-get -yf install"

fi

done

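# Download the K8s binary installation tool on the deploy machine (note: the download may fail due to network problems; just rerun the script a few times)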

if [[ ${software_packet} == '' ]];then

if [[ ! -f ./ezdown ]];then

curl -C- -fLO --retry 3 https://github.com/easzlab/kubeasz/releases/download/${release}/ezdown

fi

# Download everything with the tool script

sed -ri "s+^(K8S_BIN_VER=).*$+\1${k8s_ver}+g" ezdown

chmod +x ./ezdown

# ubuntu_22 to download package of Ubuntu 22.04

./ezdown -D && ./ezdown -P ubuntu_22 && ./ezdown -X

else

tar xvf ${software_packet} -C /etc/

sed -ri "s+^(K8S_BIN_VER=).*$+\1${k8s_ver}+g" ${pwd}/ezdown

chmod +x ${pwd}/{ezctl,ezdown}

chmod +x ./ezdown

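./ezdown -D # offline-install docker and check the local files; normally it reports that all files are already downloaded and pushes the images to the local private registry

./ezdown -S # start the kubeasz container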

fi

# Initialize a K8s cluster configuration named $clustername

CLUSTER_NAME="$clustername"

${pwd}/ezctl new ${CLUSTER_NAME}

if [[ $? -ne 0 ]];then

echo "cluster name [${CLUSTER_NAME}] already exists in ${pwd}/clusters/${CLUSTER_NAME}."

exit 1

fi

if [[ ${software_packet} != '' ]];then

# Set the parameter that enables offline installation

# Offline installation docs: https://github.com/easzlab/kubeasz/blob/3.6.2/docs/setup/offline_install.md

sed -i 's/^INSTALL_SOURCE.*$/INSTALL_SOURCE: "offline"/g' ${pwd}/clusters/${CLUSTER_NAME}/config.yml

fi

# to check ansible service

ansible all -m ping

#---------------------------------------------------------------------------------------------------

# Modify the installer configuration file config.yml

sed -ri "s+^(CLUSTER_NAME:).*$+\1 \"${CLUSTER_NAME}\"+g" ${pwd}/clusters/${CLUSTER_NAME}/config.yml

## Steps for keeping K8s logs and container data on a separate disk (modeled on Alibaba Cloud's setup)

mkdir -p /var/lib/container/{kubelet,docker,nfs_dir} /var/lib/{kubelet,docker} /nfs_dir

## Format the data disk directly with mkfs.ext4 /dev/vdb (no fdisk partitioning), add it to fstab as shown below, then run mount -a to apply (look up the UUID with blkid /dev/sdx)

## cat /etc/fstab

# UUID=105fa8ff-bacd-491f-a6d0-f99865afc3d6 / ext4 defaults 1 1

# /dev/vdb /var/lib/container/ ext4 defaults 0 0

# /var/lib/container/kubelet /var/lib/kubelet none defaults,bind 0 0

# /var/lib/container/docker /var/lib/docker none defaults,bind 0 0

# /var/lib/container/nfs_dir /nfs_dir none defaults,bind 0 0

## tree -L 1 /var/lib/container

# /var/lib/container

# ├── docker

# ├── kubelet

# └── lost+found

# docker data dir

DOCKER_STORAGE_DIR="/var/lib/container/docker"

sed -ri "s+^(STORAGE_DIR:).*$+STORAGE_DIR: \"${DOCKER_STORAGE_DIR}\"+g" ${pwd}/clusters/${CLUSTER_NAME}/config.yml

# containerd data dir

CONTAINERD_STORAGE_DIR="/var/lib/container/containerd"

sed -ri "s+^(STORAGE_DIR:).*$+STORAGE_DIR: \"${CONTAINERD_STORAGE_DIR}\"+g" ${pwd}/clusters/${CLUSTER_NAME}/config.yml

# kubelet logs dir

KUBELET_ROOT_DIR="/var/lib/container/kubelet"

sed -ri "s+^(KUBELET_ROOT_DIR:).*$+KUBELET_ROOT_DIR: \"${KUBELET_ROOT_DIR}\"+g" ${pwd}/clusters/${CLUSTER_NAME}/config.yml

if [[ $clustername != 'aws' ]]; then

# docker aliyun repo

REG_MIRRORS="https://pqbap4ya.mirror.aliyuncs.com"

sed -ri "s+^REG_MIRRORS:.*$+REG_MIRRORS: \'[\"${REG_MIRRORS}\"]\'+g" ${pwd}/clusters/${CLUSTER_NAME}/config.yml

fi

# [docker] trusted HTTP registries

sed -ri "s+127.0.0.1/8+${netnum}.0/24+g" ${pwd}/clusters/${CLUSTER_NAME}/config.yml

# disable dashboard auto install

sed -ri "s+^(dashboard_install:).*$+\1 \"no\"+g" ${pwd}/clusters/${CLUSTER_NAME}/config.yml

# Prepare the API server domain name (with the single-node example command this produces testk8s.boge.com; the deployment signs the certificates for this domain, so later you can reach kube-apiserver by resolving this domain to any IP, which is more flexible)

CLUSTER_WEBSITE="${CLUSTER_NAME}k8s.${domainName}"

lb_num=$(grep -wn '^MASTER_CERT_HOSTS:' ${pwd}/clusters/${CLUSTER_NAME}/config.yml |awk -F: '{print $1}')

lb_num1=$(expr ${lb_num} + 1)

lb_num2=$(expr ${lb_num} + 2)

sed -ri "${lb_num1}s+.*$+  - \"${CLUSTER_WEBSITE}\"+g" ${pwd}/clusters/${CLUSTER_NAME}/config.yml

sed -ri "${lb_num2}s+(.*)$+#\1+g" ${pwd}/clusters/${CLUSTER_NAME}/config.yml

# Maximum number of pods per node

MAX_PODS="120"

sed -ri "s+^(MAX_PODS:).*$+\1 ${MAX_PODS}+g" ${pwd}/clusters/${CLUSTER_NAME}/config.yml

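# calico: in self-hosted data centers where all nodes share one layer-2 network, you can set CALICO_IPV4POOL_IPIP: "off" for better network performance; public cloud VPCs are layer-3 networks, so set CALICO_IPV4POOL_IPIP: "Always" to enable the IPIP tunnel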

#sed -ri "s+^(CALICO_IPV4POOL_IPIP:).*$+\1 \"off\"+g" ${pwd}/clusters/${CLUSTER_NAME}/config.yml

# Modify the installer inventory file hosts

# clean old ip

sed -ri '/192.168.1.1/d' ${pwd}/clusters/${CLUSTER_NAME}/hosts

sed -ri '/192.168.1.2/d' ${pwd}/clusters/${CLUSTER_NAME}/hosts

sed -ri '/192.168.1.3/d' ${pwd}/clusters/${CLUSTER_NAME}/hosts

sed -ri '/192.168.1.4/d' ${pwd}/clusters/${CLUSTER_NAME}/hosts

sed -ri '/192.168.1.5/d' ${pwd}/clusters/${CLUSTER_NAME}/hosts

# Enter the host numbers for the etcd cluster

echo "enter etcd hosts here (example: 203 202 201) ↓"

read -p "" ipnums

for ipnum in `echo ${ipnums}`

do

echo $netnum.$ipnum

sed -i "/\[etcd/a $netnum.$ipnum" ${pwd}/clusters/${CLUSTER_NAME}/hosts

done

# Enter the host numbers for the kube-master group

echo "enter kube-master hosts here (example: 202 201) ↓"

read -p "" ipnums

for ipnum in `echo ${ipnums}`

do

echo $netnum.$ipnum

sed -i "/\[kube_master/a $netnum.$ipnum" ${pwd}/clusters/${CLUSTER_NAME}/hosts

done

# Enter the host numbers for the kube-node group

echo "enter kube-node hosts here (example: 204 203) ↓"

read -p "" ipnums

for ipnum in `echo ${ipnums}`

do

echo $netnum.$ipnum

sed -i "/\[kube_node/a $netnum.$ipnum" ${pwd}/clusters/${CLUSTER_NAME}/hosts

done

# Configure the CNI network plugin

case ${cni} in

flannel)

sed -ri "s+^CLUSTER_NETWORK=.*$+CLUSTER_NETWORK=\"${cni}\"+g" ${pwd}/clusters/${CLUSTER_NAME}/hosts

;;

calico)

sed -ri "s+^CLUSTER_NETWORK=.*$+CLUSTER_NETWORK=\"${cni}\"+g" ${pwd}/clusters/${CLUSTER_NAME}/hosts

;;

cilium)

sed -ri "s+^CLUSTER_NETWORK=.*$+CLUSTER_NETWORK=\"${cni}\"+g" ${pwd}/clusters/${CLUSTER_NAME}/hosts

;;

*)

echo "cni need be flannel or calico or cilium."

exit 11

esac

# Set up a daily cron job that backs up the K8s etcd data (backups older than 3 days are deleted)

# https://github.com/easzlab/kubeasz/blob/master/docs/op/cluster_restore.md

if cat /etc/redhat-release &>/dev/null;then

if ! grep -w '94.backup.yml' /var/spool/cron/root &>/dev/null;then echo "00 00 * * * /usr/local/bin/ansible-playbook -i /etc/kubeasz/clusters/${CLUSTER_NAME}/hosts -e @/etc/kubeasz/clusters/${CLUSTER_NAME}/config.yml /etc/kubeasz/playbooks/94.backup.yml &> /dev/null; find /etc/kubeasz/clusters/${CLUSTER_NAME}/backup/ -type f -name '*.db' -mtime +3|xargs rm -f" >> /var/spool/cron/root;else echo exists ;fi

chown root.crontab /var/spool/cron/root

chmod 600 /var/spool/cron/root

rm -f /var/run/cron.reboot

service crond restart

else

if ! grep -w '94.backup.yml' /var/spool/cron/crontabs/root &>/dev/null;then echo "00 00 * * * /usr/local/bin/ansible-playbook -i /etc/kubeasz/clusters/${CLUSTER_NAME}/hosts -e @/etc/kubeasz/clusters/${CLUSTER_NAME}/config.yml /etc/kubeasz/playbooks/94.backup.yml &> /dev/null; find /etc/kubeasz/clusters/${CLUSTER_NAME}/backup/ -type f -name '*.db' -mtime +3|xargs rm -f" >> /var/spool/cron/crontabs/root;else echo exists ;fi

chown root.crontab /var/spool/cron/crontabs/root

chmod 600 /var/spool/cron/crontabs/root

rm -f /var/run/crond.reboot

service cron restart

fi

#---------------------------------------------------------------------------------------------------

# Ready to start the installation

rm -rf ${pwd}/{dockerfiles,docs,.gitignore,pics} &&\

find ${pwd}/ -name '*.md'|xargs rm -f

read -p "Enter to continue deploy k8s to all nodes >>>" YesNobbb

# now start deploy k8s cluster

cd ${pwd}/

# to prepare CA/certs & kubeconfig & other system settings

${pwd}/ezctl setup ${CLUSTER_NAME} 01

sleep 1

# to setup the etcd cluster

${pwd}/ezctl setup ${CLUSTER_NAME} 02

sleep 1

# to setup the container runtime(docker or containerd)

case ${cri} in

containerd)

sed -ri "s+^CONTAINER_RUNTIME=.*$+CONTAINER_RUNTIME=\"${cri}\"+g" ${pwd}/clusters/${CLUSTER_NAME}/hosts

${pwd}/ezctl setup ${CLUSTER_NAME} 03

;;

docker)

sed -ri "s+^CONTAINER_RUNTIME=.*$+CONTAINER_RUNTIME=\"${cri}\"+g" ${pwd}/clusters/${CLUSTER_NAME}/hosts

${pwd}/ezctl setup ${CLUSTER_NAME} 03

;;

*)

echo "cri need be containerd or docker."

exit 11

esac

sleep 1

# to setup the master nodes

${pwd}/ezctl setup ${CLUSTER_NAME} 04

sleep 1

# to setup the worker nodes

${pwd}/ezctl setup ${CLUSTER_NAME} 05

sleep 1

# to setup the network plugin(flannel、calico...)

${pwd}/ezctl setup ${CLUSTER_NAME} 06

sleep 1

# to setup other useful plugins(metrics-server、coredns...)

${pwd}/ezctl setup ${CLUSTER_NAME} 07

sleep 1

# [Optional] OS-level security hardening of all cluster nodes: https://github.com/dev-sec/ansible-os-hardening

#ansible-playbook roles/os-harden/os-harden.yml

#sleep 1

#cd `dirname ${software_packet:-/tmp}`

k8s_bin_path='/opt/kube/bin'

echo "------------------------- k8s version list ---------------------------"

${k8s_bin_path}/kubectl version

echo

echo "------------------------- All Healthy status check -------------------"

${k8s_bin_path}/kubectl get componentstatus

echo

echo "------------------------- k8s cluster info list ----------------------"

${k8s_bin_path}/kubectl cluster-info

echo

echo "------------------------- k8s all nodes list -------------------------"

${k8s_bin_path}/kubectl get node -o wide

echo

echo "------------------------- k8s all-namespaces's pods list ------------"

${k8s_bin_path}/kubectl get pod --all-namespaces

echo

echo "------------------------- k8s all-namespaces's service network ------"

${k8s_bin_path}/kubectl get svc --all-namespaces

echo

echo "------------------------- k8s welcome for you -----------------------"

echo

# use k as a short alias for kubectl

echo "alias k=kubectl && complete -F __start_kubectl k" >> ~/.bashrc

# get dashboard url

${k8s_bin_path}/kubectl cluster-info|grep dashboard|awk '{print $NF}'|tee -a /root/k8s_results

# get login token

${k8s_bin_path}/kubectl -n kube-system describe secret $(${k8s_bin_path}/kubectl -n kube-system get secret | grep admin-user | awk '{print $1}')|grep 'token:'|awk '{print $NF}'|tee -a /root/k8s_results

echo

echo "you can look again dashboard and token info at >>> /root/k8s_results <<<"

echo ">>>>>>>>>>>>>>>>> You need to execute the command [ reboot ] to restart all nodes <<<<<<<<<<<<<<<<<<<<"

#find / -type f -name "kubeasz*.tar.gz" -o -name "k8s_install_new.sh"|xargs rm -f
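The MASTER_CERT_HOSTS step in the script finds the line number of the `MASTER_CERT_HOSTS:` key, overwrites the line after it with the cluster domain, and comments out the second entry. A minimal sketch of the same edit as a hypothetical helper, so you can check the result on a copy of config.yml first (the sample content in the test is an assumption about the default file layout, not copied from kubeasz):

```shell
#!/usr/bin/env bash
# Hypothetical helper: replace the first SAN entry under MASTER_CERT_HOSTS
# with $2 and comment out the second entry, as the install script does.
set_cert_host() {
  local file=$1 domain=$2 lb_num
  lb_num=$(grep -wn '^MASTER_CERT_HOSTS:' "$file" | cut -d: -f1)
  [ -n "$lb_num" ] || return 1  # key not found: leave the file unchanged
  sed -ri "$((lb_num + 1))s+.*$+  - \"${domain}\"+" "$file"
  sed -ri "$((lb_num + 2))s+(.*)$+#\1+" "$file"
}
```

For example, `cp config.yml /tmp/config.yml && set_cert_host /tmp/config.yml testk8s.boge.com` lets you diff the change before touching the real cluster config.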

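Note: on Ubuntu 22.04.3, an offline installation with the kubeasz-3.6.2 offline package fails because the kube-proxy service does not start. The cause is the missing ipset package, which makes a good troubleshooting case for the installation process: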

TASK [kube-node : 轮询等待kube-proxy启动] ***************************************************************

fatal: [10.0.1.203]: FAILED! => {"attempts": 4, "changed": true, "cmd": "systemctl is-active kube-proxy.service", "delta": "0:00:00.005924", "end": "2023-10-17 14:52:50.142647", "msg": "non-zero return code", "rc": 3, "start": "2023-10-17 14:52:50.136723", "stderr": "", "stderr_lines": [], "stdout": "activating", "stdout_lines": ["activating"]}
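One way to fix it, assuming Ubuntu nodes, root SSH access as set up by the script, and an apt repository the nodes can reach (in a fully offline environment you would have to copy the ipset .deb packages over instead), is to install ipset on each affected node and restart kube-proxy. A minimal sketch; the function name and the DRY_RUN switch are hypothetical:

```shell
#!/usr/bin/env bash
# Hypothetical helper: install ipset on each node and restart kube-proxy.
# Set DRY_RUN=1 to print the ssh commands instead of running them.
fix_kube_proxy_ipset() {
  local ip cmd='apt-get install -y ipset && systemctl restart kube-proxy && systemctl is-active kube-proxy'
  for ip in "$@"; do
    if [ "${DRY_RUN:-0}" = 1 ]; then
      echo "ssh root@${ip} '${cmd}'"
    else
      ssh "root@${ip}" "$cmd"
    fi
  done
}
```

For example, `DRY_RUN=1 fix_kube_proxy_ipset 10.0.1.203` shows the command for the failing node; after the fix, rerunning the installer step should get past the kube-proxy check.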

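# Start the online installation (container runtime containerd, CNI calico, domain boge.com, K8s cluster name test-cn; the cn in the name makes the script use the Aliyun pip mirror)

# The script arguments, in order: the shared root password of all servers, network prefix, host numbers, container runtime, K8s network plugin, domain name, K8s cluster name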

bash k8s_install_new.sh bogeit 10.0.1 201\ 202\ 203\ 204 containerd calico boge.com test-cn

# The script is mostly automated; at the following prompts, just paste the values shown and press Enter

# At the etcd host numbers prompt, paste 203 202 201 and press Enter

echo "enter etcd hosts here (example: 203 202 201) ↓"

# At the kube-master host numbers prompt, paste 202 201 and press Enter

echo "enter kube-master hosts here (example: 202 201) ↓"

# At the kube-node host numbers prompt, paste 204 203 and press Enter

echo "enter kube-node hosts here (example: 204 203) ↓"

# You will then be asked whether to continue the installation; if everything looks fine, just press Enter

Enter to continue deploy k8s to all nodes >>>

# After the installation, reload the shell configuration so the kubectl alias and completion take effect

. ~/.bashrc
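The prompts list host numbers in descending order. A likely reason: the script inserts each IP with sed's `a` command directly after the group header (for example `[etcd]`), so every new entry lands above the previous one, and entering 203 202 201 yields 201, 202, 203 in the hosts file. A small sketch reproducing that behavior on a scratch file (the helper name is hypothetical; it uses GNU sed, as the script does):

```shell
#!/usr/bin/env bash
# Hypothetical helper reproducing the script's inventory edit:
# append each IP directly after the [group] header line.
add_to_group() {
  local file=$1 group=$2 netnum=$3 ipnum
  shift 3
  for ipnum in "$@"; do
    sed -i "/\[${group}/a ${netnum}.${ipnum}" "$file"
  done
}
```

Running `add_to_group hosts etcd 10.0.1 203 202 201` on a copy of the hosts file is a quick way to preview the resulting group order.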

With the K8s cluster installed, let's deploy an nginx service and see it running!

# On k8s v1.18, you can create the deployment and service with these commands:

# kubectl create deployment nginx --image=nginx:1.21.6

# kubectl expose deployment nginx --port=80 --target-port=80

---
kind: Service
apiVersion: v1
metadata:
  name: new-nginx
spec:
  selector:
    app: new-nginx
  ports:
  - name: http-port
    port: 80
    protocol: TCP
    targetPort: 80

---
# Ingress config for newer K8s versions (networking.k8s.io/v1)
apiVersion: networking.k8s.io/v1
kind: Ingress
metadata:
  name: new-nginx
  annotations:
    nginx.ingress.kubernetes.io/force-ssl-redirect: "true"
    nginx.ingress.kubernetes.io/whitelist-source-range: 0.0.0.0/0
    nginx.ingress.kubernetes.io/configuration-snippet: |
      if ($host != 'www.boge.com' ) {
        rewrite ^ http://www.boge.com$request_uri permanent;
      }
spec:
  rules:
  - host: boge.com
    http:
      paths:
      - backend:
          service:
            name: new-nginx
            port:
              number: 80
        path: /
        pathType: Prefix
  - host: m.boge.com
    http:
      paths:
      - backend:
          service:
            name: new-nginx
            port:
              number: 80
        path: /
        pathType: Prefix
  - host: www.boge.com
    http:
      paths:
      - backend:
          service:
            name: new-nginx
            port:
              number: 80
        path: /
        pathType: Prefix
#  tls:
#  - hosts:
#    - boge.com
#    - m.boge.com
#    - www.boge.com
#    secretName: boge-com-tls
# Create the TLS secret in the same namespace as the Ingress (--cert takes the signed certificate, not the CSR):
# kubectl create secret tls boge-com-tls --key boge.key --cert boge.crt

---
apiVersion: apps/v1
kind: Deployment
metadata:
  name: new-nginx
  labels:
    app: new-nginx
spec:
  replicas: 2
  selector:
    matchLabels:
      app: new-nginx
  template:
    metadata:
      labels:
        app: new-nginx
    spec:
      containers:
      #--------------------------------------------------
      - name: new-nginx
        image: nginx:1.21.6
        # image: nginx:1.25.1
        env:
        - name: TZ
          value: Asia/Shanghai
        ports:
        - containerPort: 80
        volumeMounts:
        - name: html-files
          mountPath: "/usr/share/nginx/html"
      #--------------------------------------------------
      - name: busybox
        image: registry.cn-shanghai.aliyuncs.com/acs/busybox:v1.29.2
        # image: nicolaka/netshoot
        args:
        - /bin/sh
        - -c
        - >
          while :; do
            if [ -f /html/index.html ];then
              echo "[$(date +%F\ %T)] ${MY_POD_NAMESPACE}-${MY_POD_NAME}-${MY_POD_IP}" > /html/index.html
              sleep 1
            else
              touch /html/index.html
            fi
          done
        env:
        - name: TZ
          value: Asia/Shanghai
        - name: MY_POD_NAME
          valueFrom:
            fieldRef:
              apiVersion: v1
              fieldPath: metadata.name
        - name: MY_POD_NAMESPACE
          valueFrom:
            fieldRef:
              apiVersion: v1
              fieldPath: metadata.namespace
        - name: MY_POD_IP
          valueFrom:
            fieldRef:
              apiVersion: v1
              fieldPath: status.podIP
        volumeMounts:
        - name: html-files
          mountPath: "/html"
        - mountPath: /etc/localtime
          name: tz-config
      #--------------------------------------------------
      volumes:
      - name: html-files
        emptyDir:
          medium: Memory
          sizeLimit: 10Mi
      - name: tz-config
        hostPath:
          path: /usr/share/zoneinfo/Asia/Shanghai
---
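After saving the manifests above to a file and applying them (for example `kubectl apply -f new-nginx.yaml`; the file name is an assumption), you can test the Ingress rules by sending requests with each host name to a node running ingress-nginx (10.0.1.201 in the plan). A minimal sketch of a hypothetical helper that prints the status code and redirect target per host. Because of the force-ssl-redirect annotation, expect a redirect (typically 308) rather than 200 over plain HTTP:

```shell
#!/usr/bin/env bash
# Hypothetical helper: probe an ingress IP with several Host headers and
# print "<host> <status> <redirect-url>" for each one.
probe_ingress() {
  local ip=$1 host
  shift
  for host in "$@"; do
    printf '%s %s\n' "$host" \
      "$(curl -s -o /dev/null -w '%{http_code} %{redirect_url}' -H "Host: ${host}" "http://${ip}/")"
  done
}
```

For example, `probe_ingress 10.0.1.201 boge.com m.boge.com www.boge.com`; to see the page written by the busybox sidecar, follow the redirects with `curl -kL` against the same host names.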

K8s managed services on the major cloud platforms

AWS EKS

GOOGLE GKE

ALIYUN ACK

HUAWEI Cloud CCE

TENCENT Cloud TKE

volcengine Cloud VKE