Data Mining数据挖掘—4. Text Mining文本挖掘

5. Text Mining

The extraction of implicit, previously unknown and potentially useful information from a large amount of textual resources.

Applications: Email and news filtering, spam detection, fake review classification, sentiment analysis, query suggestion, auto-complete, search result organization, information extraction(named entity recognition and disambiguation, relation extraction, fact extraction) …

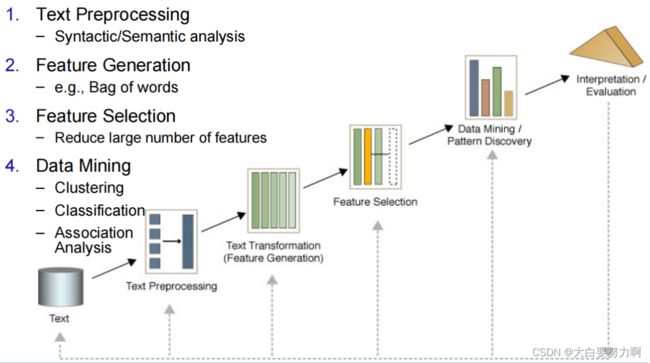

Text Mining Process

5.1 Text Similarity and Feature Creation

1. Levenshtein / edit distance

Compute total number of character insertions/deletions/changes, then divide by longer text length. Levenshtein距离(也称为编辑距离)定义为通过插入、删除或替换一个字符,将一个字符串转换为另一个字符串所需的最小操作次数。

Example: kitten & sitting -> Levenshtein distance = 3

2. Jaro-Winkler

Based on the number of common characters within a specific distance (Optimized for comparing person names)

3. n-gram

split string into set of trigrams, then measure overlap of trigrams. e.g. Jaccard: |common trigrams| / |all trigrams|

Example: “iPhone5 Apple” vs. “Apple iPhone 5”

common trigrams: “iPh”, “Pho”, “hon”, “one”, “App”, “ppl”, “ple”

other trigrams: “ne5”, “e5 “, “5 A”, “ Ap” (1), “le “, “e i”, “ iP”, “e 5” (2)

Jaccard: 7/15 = 0.47

4. word2vec Distance

represent a word by a numerical vector (sematic similarity)

5. Term-Document Matrix

compare long texts

Document is treated as a bag of words (each word or term becomes a feature; order of words/terms is ignored)

Each document is represented by a vector

Different techniques for vector creation:

(1)Binary Term Occurrence: Boolean attributes describe whether or not a term appears in the document.

Asymmetric binary attributes: If one of the states is more important or more valuable than the other. By convention, state 1 represents the more important state, rare or infrequent state.

Jaccard Coefficient for Text Similarity

Interpretation of Jaccard: Ratio of common words to all words in both texts

Drawback: All words are considered equal

(2)Term Occurrence: Number of occurences of a term in the document (problematic if documents have different length).

(3) Terms frequency: Attributes represent the frequency in which a term appears in the document (Number of occurrences / Number of words in document)

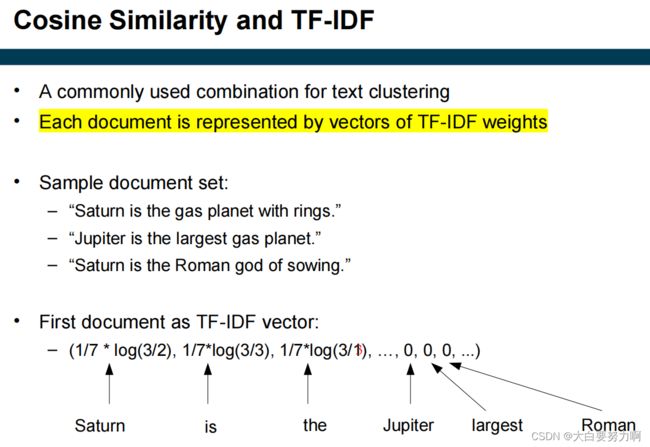

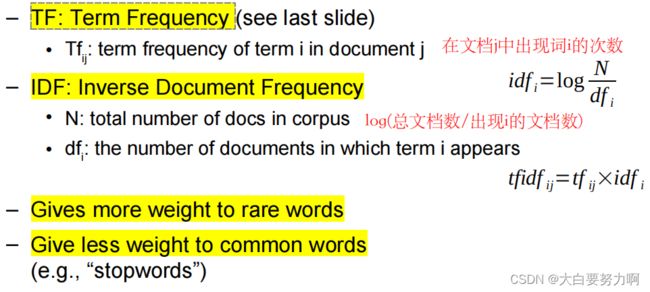

(4) TF-IDF

TF-IDF weight (term frequency–inverse document frequency) is used to evaluate how important a word is to a corpus of documents

Similarity of documents – Cosine Similarity

Words are now weighted

– Words that are important for a text: high TF

– Words that are too unspecific: low IDF

5.2 Text Preprocessing

5.2.1 Syntactic / Linguistic Text Analysis

Simple Syntactic Analysis: Text Cleanup (remove punctuation, HTML tags), Normalize case, Tokenization (break text into single words or N-grams)

Advanced Linguistic Analysis: Word Sense Disambiguation, Part Of Speech (POS) tagging

Synonym Normalization & Spelling Correction: usually using catalogs, such as WordNet. Or anchor texts of links to that page

POS Tagging: determining word classes and syntactic functions, finding the structure of a sentence. Supervised approach (Use an annotated corpus of text). Simple algorithm for key phrase extraction– e.g., annotation of text corpora.

Stop Words Removal: Many of the most frequent words (stop words) are likely to be useless -> remove -> Reduce data set size + Improve efficiency and effectiveness

Stemming: Techniques to find out the root/stem of a word -> improve effectiveness, reduce term vector size

| Lookup-based Stemming | Rule-based Stemming |

|---|---|

| accurate, exceptions are handled easily, consume much space | low space consumption, works for emerging words without update |

5.3 Feature Selection

Not all features are helpful. Transformation approaches tend to create lots of features (dimensionality problem).

Pruning methods

Specify if and how too frequent or too infrequent words should be ignored [percentual, absolute, by rank]

POS Tagging

POS tags may help with feature selection

Named Entity Recognition and Linking

The classes of Named Entity Recognition (NER) may be useful features

Named Entity Linking (Identify named entities in a knowledge base) May be used to create additional features

5.4 Pattern Discovery

Classification Definition

Given: A collection of labeled documents (training set)

Find: A model for the class as a function of the values of the features.

Goal: Previously unseen documents should be assigned a class as accurately as possible.

Classification methods commonly used for text: Naive Bayes, SVMs, Neural Networks, Random Forests…

Sentiment Analysis

A specific classification task.

Given: a text

Target: a class of sentiments( e.g., positive, neutral, negative; sad, happy, angry, surprised)

Alternative: numerical score (e.g., -5…+5)

Can be implemented as supervised classification/regression task

Reasonable features for sentiment analysis might include punctuation: use of “!”, “?”, “?!”; smileys (usually encoded using punctuation: ); use of visual markup, where available (red color, bold face, …); amount of capitalization (“screaming”)

Identifying Fake Reviews

Alternative Document Representations

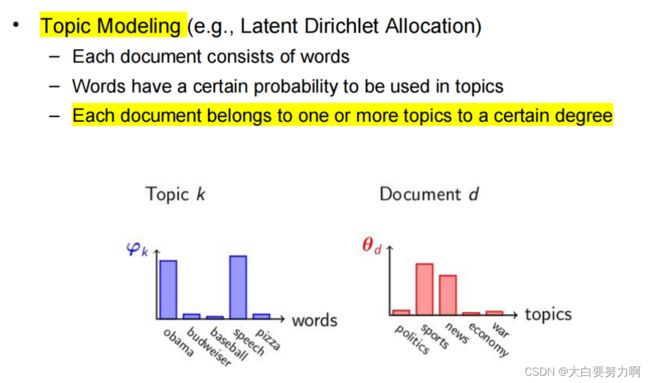

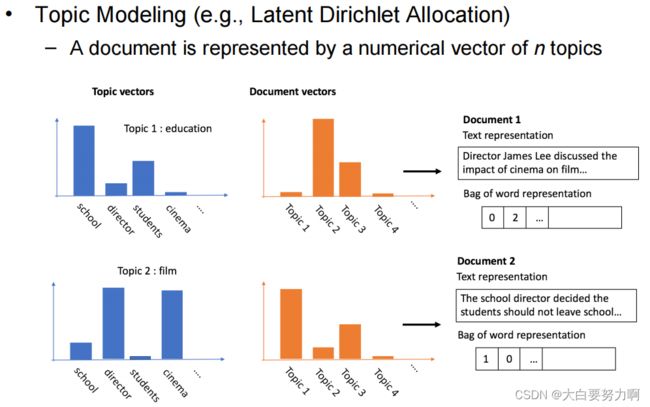

[a] Topic Modeling

[b] doc2vec

an extension of word2vec, an extension of word2vec

[c] BERT

5.5 Processing Text from Social Media

Respect special characters

Normalizing