大数据分析与应用实验任务十一

大数据分析与应用实验任务十一

实验目的

-

通过实验掌握spark Streaming相关对象的创建方法;

-

熟悉spark Streaming对文件流、套接字流和RDD队列流的数据接收处理方法;

-

熟悉spark Streaming的转换操作,包括无状态和有状态转换。

-

熟悉spark Streaming输出编程操作。

实验任务

一、DStream 操作概述

-

创建 StreamingContext 对象

登录 Linux 系统后,启动 pyspark。进入 pyspark 以后,就已经获得了一个默认的 SparkConext 对象,也就是 sc。因此,可以采用如下方式来创建 StreamingContext 对象:

from pyspark.streaming import StreamingContext sscluozhongye = StreamingContext(sc, 1)如果是编写一个独立的 Spark Streaming 程序,而不是在 pyspark 中运行,则需要在代码文件中通过类似如下的方式创建 StreamingContext 对象:

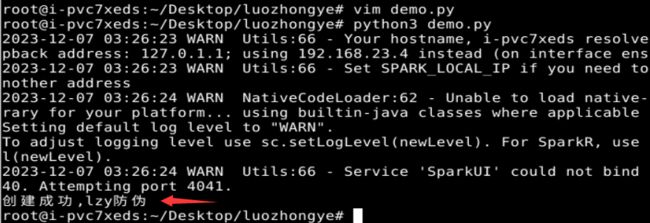

from pyspark import SparkContext, SparkConf from pyspark.streaming import StreamingContext conf = SparkConf() conf.setAppName('TestDStream') conf.setMaster('local[2]') sc = SparkContext(conf = conf) ssc = StreamingContext(sc, 1) print("创建成功,lzy防伪")

二、基本输入源

- 文件流

-

在 pyspark 中创建文件流

首先,在 Linux 系统中打开第 1 个终端(为了便于区分多个终端,这里记作“数据源终端”),创建一个 logfile 目录,命令如下:

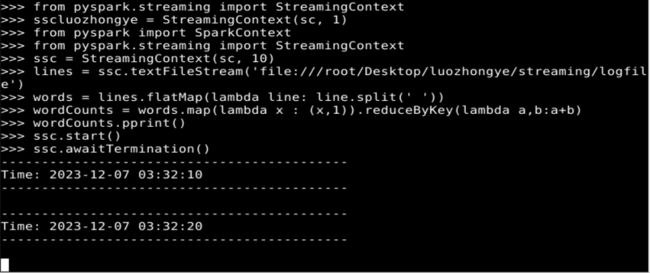

cd /root/Desktop/luozhongye/ mkdir streaming cd streaming mkdir logfile其次,在 Linux 系统中打开第二个终端(记作“流计算终端”),启动进入 pyspark,然后,依次输入如下语句:

from pyspark import SparkContext from pyspark.streaming import StreamingContext ssc = StreamingContext(sc, 10) lines = ssc.textFileStream('file:///root/Desktop/luozhongye/streaming/logfile') words = lines.flatMap(lambda line: line.split(' ')) wordCounts = words.map(lambda x : (x,1)).reduceByKey(lambda a,b:a+b) wordCounts.pprint() ssc.start() ssc.awaitTermination()

-

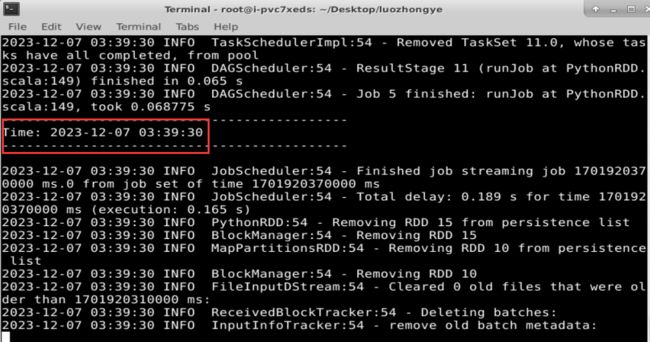

采用独立应用程序方式创建文件流

#!/usr/bin/env python3 from pyspark import SparkContext, SparkConf from pyspark.streaming import StreamingContext conf = SparkConf() conf.setAppName('TestDStream') conf.setMaster('local[2]') sc = SparkContext(conf = conf) ssc = StreamingContext(sc, 10) lines = ssc.textFileStream('file:///root/Desktop/luozhongye/streaming/logfile') words = lines.flatMap(lambda line: line.split(' ')) wordCounts = words.map(lambda x : (x,1)).reduceByKey(lambda a,b:a+b) wordCounts.pprint() ssc.start() ssc.awaitTermination() print("2023年12月7日lzy")保存该文件,并执行以下命令:

cd /root/Desktop/luozhongye/streaming/logfile/ spark-submit FileStreaming.py

- 套接字流

-

使用套接字流作为数据源

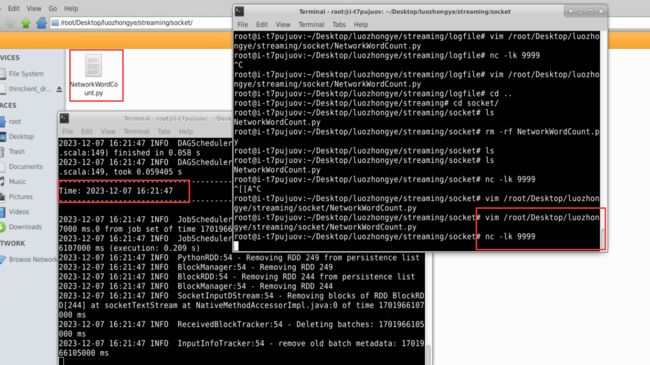

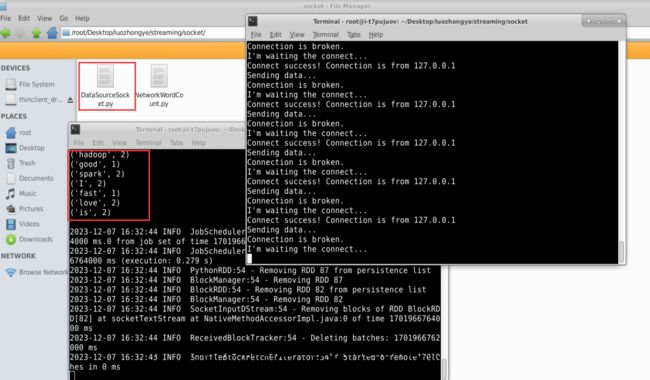

新建一个代码文件“/root/Desktop/luozhongye/streaming/socket/NetworkWordCount.py”,在NetworkWordCount.py 中输入如下内容:

#!/usr/bin/env python3 from __future__ import print_function import sys from pyspark import SparkContext from pyspark.streaming import StreamingContext if __name__ == "__main__": if len(sys.argv) != 3: print("Usage: NetworkWordCount.py" 使用如下 nc 命令生成一个 Socket 服务器端:

nc -lk 9999新建一个终端(记作“流计算终端”),执行如下代码启动流计算:

cd /root/Desktop/luozhongye/streaming/socket /usr/local/spark/bin/spark-submit NetworkWordCount.py localhost 9999

-

使用 Socket 编程实现自定义数据源

新建一个代码文件“/root/Desktop/luozhongye/streaming/socket/DataSourceSocket.py”,在 DataSourceSocket.py 中输入如下代码:

#!/usr/bin/env python3 import socket # 生成 socket 对象 server = socket.socket() # 绑定 ip 和端口 server.bind(('localhost', 9999)) # 监听绑定的端口 server.listen(1) while 1: # 为了方便识别,打印一个“I’m waiting the connect...” print("I'm waiting the connect...") # 这里用两个值接收,因为连接上之后使用的是客户端发来请求的这个实例 # 所以下面的传输要使用 conn 实例操作 conn, addr = server.accept() # 打印连接成功 print("Connect success! Connection is from %s " % addr[0]) # 打印正在发送数据 print('Sending data...') conn.send('I love hadoop I love spark hadoop is good spark is fast'.encode()) conn.close() print('Connection is broken.') print("2023年12月7日lzy")执行如下命令启动 Socket 服务器端:

cd /root/Desktop/luozhongye/streaming/socket /usr/local/spark/bin/spark-submit DataSourceSocket.py新建一个终端(记作“流计算终端”),输入以下命令启动 NetworkWordCount 程序:

cd /root/Desktop/luozhongye/streaming/socket /usr/local/spark/bin/spark-submit NetworkWordCount.py localhost 9999

-

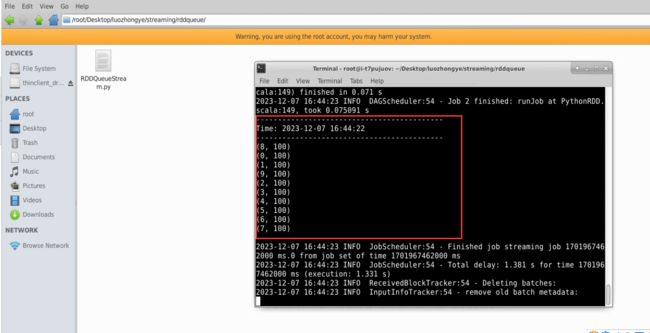

RDD 队列流

Linux 系统中打开一个终端,新建一个代码文件“/root/Desktop/luozhongye/ streaming/rddqueue/ RDDQueueStream.py”,输入以下代码:

#!/usr/bin/env python3 import time from pyspark import SparkContext from pyspark.streaming import StreamingContext if __name__ == "__main__": print("") sc = SparkContext(appName="PythonStreamingQueueStream") ssc = StreamingContext(sc, 2) # 创建一个队列,通过该队列可以把 RDD 推给一个 RDD 队列流 rddQueue = [] for i in range(5): rddQueue += [ssc.sparkContext.parallelize([j for j in range(1, 1001)], 10)] time.sleep(1) # 创建一个 RDD 队列流 inputStream = ssc.queueStream(rddQueue) mappedStream = inputStream.map(lambda x: (x % 10, 1)) reducedStream = mappedStream.reduceByKey(lambda a, b: a + b) reducedStream.pprint() ssc.start() ssc.stop(stopSparkContext=True, stopGraceFully=True)下面执行如下命令运行该程序:

cd /root/Desktop/luozhongye/streaming/rddqueue /usr/local/spark/bin/spark-submit RDDQueueStream.py

三、转换操作

-

滑动窗口转换操作

对“套接字流”中的代码 NetworkWordCount.py 进行一个小的修改,得到新的代码文件“/root/Desktop/luozhongye/streaming/socket/WindowedNetworkWordCount.py”,其内容如下:

#!/usr/bin/env python3 from __future__ import print_function import sys from pyspark import SparkContext from pyspark.streaming import StreamingContext if __name__ == "__main__": if len(sys.argv) != 3: print("Usage: WindowedNetworkWordCount.py"

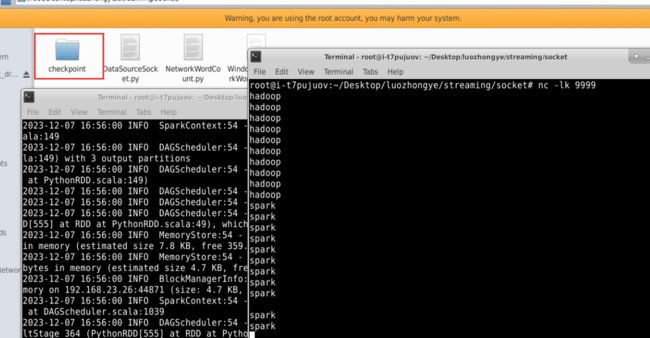

为了测试程序的运行效果,首先新建一个终端(记作“数据源终端”),执行如下命令运行nc 程序:

cd /root/Desktop/luozhongye/streaming/socket/

nc -lk 9999

然后,再新建一个终端(记作“流计算终端”),运行客户端程序 WindowedNetworkWordCount.py,命令如下:

cd /root/Desktop/luozhongye/streaming/socket/

/usr/local/spark/bin/spark-submit WindowedNetworkWordCount.py localhost 9999

在数据源终端内,连续输入 10 个“hadoop”,每个 hadoop 单独占一行(即每输入一个 hadoop就按回车键),再连续输入 10 个“spark”,每个 spark 单独占一行。这时,可以查看流计算终端内显示的词频动态统计结果,可以看到,随着时间的流逝,词频统计结果会发生动态变化。

-

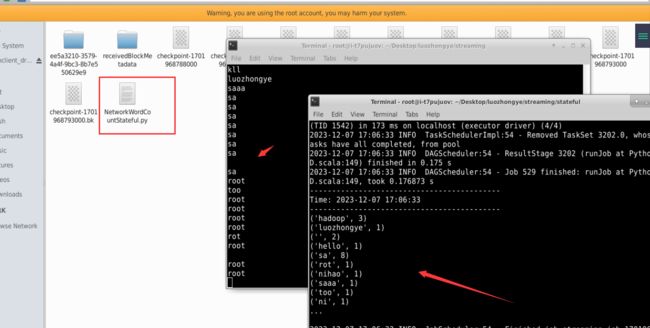

updateStateByKey 操作

在“/root/Desktop/luozhongye/streaming/stateful/”目录下新建一个代码文件 NetworkWordCountStateful.py,输入以下代码:

#!/usr/bin/env python3 from __future__ import print_function import sys from pyspark import SparkContext from pyspark.streaming import StreamingContext if __name__ == "__main__": if len(sys.argv) != 3: print("Usage: NetworkWordCountStateful.py" 新建一个终端(记作“数据源终端”),执行如下命令启动 nc 程序:

nc -lk 9999新建一个 Linux 终端(记作“流计算终端”),执行如下命令提交运行程序:

cd /root/Desktop/luozhongye/streaming/stateful /usr/local/spark/bin/spark-submit NetworkWordCountStateful.py localhost 9999

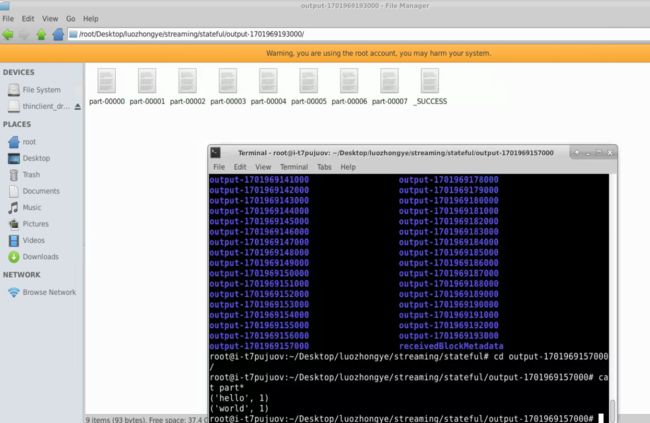

四、把 DStream 输出到文本文件中

下面对之前已经得到的“/root/Desktop/luozhongye/streaming/stateful/NetworkWordCountStateful.py”代码进行简单的修改,把生成的词频统计结果写入文本文件中。

修改后得到的新代码文件“/root/Desktop/luozhongye/streaming/stateful/NetworkWordCountStatefulText.py”的内容如下:

#!/usr/bin/env python3

from __future__ import print_function

import sys

from pyspark import SparkContext

from pyspark.streaming import StreamingContext

if __name__ == "__main__":

if len(sys.argv) != 3:

print("Usage: NetworkWordCountStateful.py " , file=

sys.stderr)

exit(-1)

sc = SparkContext(appName="PythonStreamingStatefulNetworkWordCount")

ssc = StreamingContext(sc, 1)

ssc.checkpoint("file:///root/Desktop/luozhongye/streaming/stateful/")

# RDD with initial state (key, value) pairs

initialStateRDD = sc.parallelize([(u'hello', 1), (u'world', 1)])

def updateFunc(new_values, last_sum):

return sum(new_values) + (last_sum or 0)

lines = ssc.socketTextStream(sys.argv[1], int(sys.argv[2]))

running_counts = lines.flatMap(lambda line: line.split(" ")) \

.map(lambda word: (word, 1)) \

.updateStateByKey(updateFunc, initialRDD=initialStateRDD)

running_counts.saveAsTextFiles("file:///root/Desktop/luozhongye/streaming/stateful/output")

running_counts.pprint()

ssc.start()

ssc.awaitTermination()

新建一个终端(记作“数据源终端”),执行如下命令运行nc 程序:

cd /root/Desktop/luozhongye/streaming/socket/

nc -lk 9999

新建一个 Linux 终端(记作“流计算终端”),执行如下命令提交运行程序:

cd /root/Desktop/luozhongye/streaming/stateful

/usr/local/spark/bin/spark-submit NetworkWordCountStatefulText.py localhost 9999

实验心得

通过本次实验,我深入理解了Spark Streaming,包括创建StreamingContext、DStream等对象。同时,我了解了Spark Streaming对不同类型数据流的处理方式,如文件流、套接字流和RDD队列流。此外,我还熟悉了Spark Streaming的转换操作和输出编程操作,并掌握了map、flatMap、filter等方法。最后,我能够自定义输出方式和格式。总之,这次实验让我全面了解了Spark Streaming,对未来的学习和工作有很大的帮助。