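- Distributed timers: principles, design, and technical challenges

你一身傲骨怎能输

Architecture design, distributed systems

Abstract: Distributed timers trigger scheduled tasks reliably and accurately in a distributed system. Common implementations include periodic scanning backed by a database or message queue, distributed job-scheduling frameworks (such as Quartz clustering and xxl-job), time wheels and delay queues (such as Redis/Kafka), and coordination services such as Zookeeper/Etcd. The main technical challenges include clock synchronization, task idempotency, high availability, load balancing, and failure recovery. The core difficulty lies in guaranteeing task uniqueness, scheduling precision, and distributed consistency; technology selection has to weigh lightweight options (R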

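- Application-layer traffic and cumulative cache latency explained

你一身傲骨怎能输

Computer networking, caching

Abstract: Application-layer traffic is the data exchanged by application-layer protocols of the OSI model (such as HTTP and gRPC), typically seen in web requests and microservice communication. Cumulative cache latency is the lag in data updates that results when delays add up across multiple cache tiers or message-queue stages; for example, after a database update, delays in the message queue and cache refresh can leave users seeing data that is several seconds stale. The two terms describe, respectively, a data-flow mechanism in network communication and a latency problem in distributed systems. 1. Application-layer traffic: in the seven-layer OSI model of network communication, application-layer traffic generally refers to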

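- A bank's pipeline practice for fully automated external service exposure, based on replacing container load balancing with domestically developed (Xinchuang) technology

I. Background. The architecture in which an external hardware load balancer serves as the unified entry point for container workloads has been running at our bank for three years. Through long experience with the container cloud platform and with load-balancer operations, our bank has developed its own load-balancing adaptation model for the container cloud environment; under the current deployment model, staff releasing applications can expose container services externally in a self-service manner. The guidance on building technology capabilities in CBIRC General Office document [2022] No. 2 of January 2022 calls for adhering to the principle of independent control over key technologies, reducing external dependencies, and avoiding single-source dependence. To support implementation of the guidance, and at the same time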

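- Approach to off-site disaster recovery and load balancing for a beta-distribution platform application

咕噜企业签名分发-大圣

Load balancing, operations

The approach to off-site disaster recovery and load balancing for a beta-distribution platform application is as follows. I. Off-site disaster recovery. 1. Risk assessment and requirements analysis: first, carry out a comprehensive risk assessment and requirements analysis of the existing IT infrastructure, evaluate the likelihood of potential risks and disasters, and determine how critical the business and data are. 2. Design the backup architecture: based on the results of the risk assessment and requirements analysis, design a sound backup architecture, choose suitable backup devices and tools, and set the backup frequency and storage locations to ensure data integrity and availability. 3. Data backup and synchronization: once the backup architecture is designed, begin data backup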

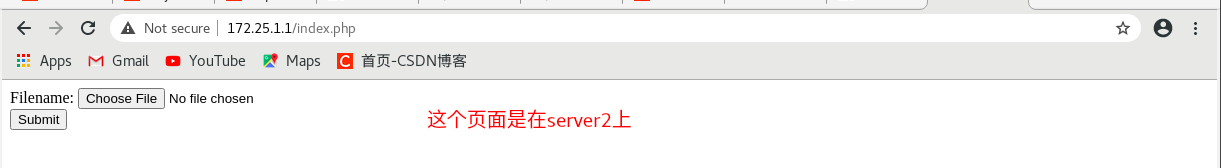

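- Load balancing and a high-availability cluster with HAProxy (corosync+pacemaker

}}}else{echo“Invalidfile”;}?> Note: httpd must be restarted. **Test:** core design; what follows is a detailed look at its key basic concepts. These concepts form the cornerstone of the K8s container orchestration system and are used to automate the deployment, scaling, and management of containerized applications. ### I. Overview of K8s core concepts. K8s core objects revolve around container lifecycle management, resource scheduling, and service discovery, and mainly include: 1. **Pod** - **Definition**: the smallest schedulable unit in K8s, wrapping one or more tightly coupled containers (for example, a main application container plus a helper sidecar container). - **Characteristics**: -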

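- Partitioning and communication optimization for large-scale graph computing engines: solutions for load balancing and network latency

LCG元

System services, architecture, load balancing, networking, operations

Contents: I. System architecture design and core workflows: 1.1 Original architecture diagram explained; 1.2 Comparison of the two workflows. II. Partitioning strategy optimization in practice: 2.1 Dynamic weighted partitioning algorithm (Python). III. Communication optimization mechanisms: 3.1 RDMA-based communication layer (TypeScript). IV. Performance comparison and tuning: 4.1 Partitioning strategy benchmarks. V. Production-grade deployment: 5.1 Kubernetes deployment configuration (YAML); 5.2 Security audit configuration. VI. Technology outlook and evolution. Appendix: complete technology map. I. System architecture design and core workflows 1.1 Original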

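- Introduction to Alibaba Cloud products

Alibaba Cloud products. Compute: cloud server ECS, cloud virtual machines, GPU cloud servers. Networking: load balancer SLB, elastic public IP, virtual private cloud VPC, CDN (a CDN caches content on many edge nodes (EdgeNodes) distributed around the world so that users fetch content from the node closest to them, which reduces network latency and improves access speed). Storage: block storage EBS (ElasticBlockStorage), object storage OSS (ObjectStorageService), file storage NAS. Data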

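- Observability best practices for Alibaba Cloud RabbitMQ

观测云

Alibaba Cloud, rabbitmq, cloud computing

Alibaba Cloud RabbitMQ. Alibaba Cloud RabbitMQ is a high-performance, highly reliable message middleware that supports multiple messaging protocols and a rich feature set. It provides message queuing, which decouples applications and enables asynchronous communication between them, improving system scalability and stability. It supports several message persistence strategies to ensure messages are not lost; offers flexible routing and load balancing for efficient message distribution; and provides rich management features such as queue monitoring, message tracing, and permission management that help users manage and optimize their message queues. It is widely used in distributed systems, microservice architectures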

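- C# microservice configuration management black magic: Nacos and "quantum-level" dynamic configuration in practice, building a never-down cloud-native architecture from scratch

墨夶

C# study materials 4, cloud native, architecture, c#

1. The "configuration entropy" dilemma of microservices. Fatal flaws of traditional configuration management: avalanche effect: a single configuration change forces a restart of the whole call chain; priority confusion: conflicts between local and cloud configuration make services unavailable; lack of dynamism: no real-time response to changing business requirements. Solutions: "quantum-level" configuration sync: Nacos real-time push plus local caching; the "black hole" priority mechanism: the priority contest among shared, extension, and application configuration; the "gravitational waves" of service discovery: dynamic routing driven by health checks. 2.1 The "quantum superposition" of Nacos in C#: from SDK installation to service discovery. Core components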

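- dubbo and zookeeper

中庸逍遥

1. What is Dubbo? Dubbo is a high-performance, lightweight, open-source Java RPC framework that provides three core capabilities: interface-oriented remote method invocation, intelligent fault tolerance and load balancing, and automatic service registration and discovery. 1.1 Architecture. 1.2 Node roles: Provider: the service provider that exposes a service (producer). Consumer: the service consumer that calls a remote service (consumer). Registry: the registry for service registration and discovery (for example, zookeeper). Monitor: tracks service invocation counts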

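- Kubernetes clusters: introduction, deployment, and common commands

GHY@CloudGuardian

Kubernetes, kubernetes, containers, cloud native, operations, linux

Introduction to Kubernetes clusters. Kubernetes (K8s for short) is an open-source container orchestration platform for automating the deployment, scaling, and management of containerized applications. It gives containers a complete management framework and helps developers and operations teams deploy and manage applications efficiently at scale. A Kubernetes cluster is made up of multiple components, chiefly the control plane and worker nodes. The core purpose of the cluster is to provide high availability, scalability, load balancing, automated deployment, and similar capabilities for containerized applications. A Kubernetes cluster's basic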

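- etcd: a comprehensive look from use cases to implementation principles

Reposted from: http://www.infoq.com/cn/articles/etcd-interpretation-application-scenario-implement-principle etcd: a comprehensive look from use cases to implementation principles. As projects such as CoreOS and Kubernetes have grown increasingly popular in the open-source community, etcd, a component both projects use as a highly available, strongly consistent store for service discovery, has gradually drawn developers' attention. In cloud computing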

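- Proto files from beginner to expert: the cornerstone of communication in modern distributed systems (with hands-on examples)

筏.k

gRPC, c++, rpc, servers

gRPC core technology in depth: Proto files from beginner to expert, the cornerstone of communication in modern distributed systems (with hands-on examples). Updated: July 18, 2025. Tags: gRPC | ProtocolBuffers | Proto files | microservices | distributed systems | RPC communication | interface definition. Contents: Preface. I. Basic concepts: what exactly is a Proto file? 1. What is a Proto file? 2. Traditional communication vs. Proto communication. II. Syntax in detail: the building blocks of a Proto file. 1. Basic syntax structure 2. Data types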

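- Dynamic service discovery with Consul in cloud-native environments

AI云原生与云计算技术学院

AI cloud native and cloud computing, cloud native, consul, service discovery, ai

Dynamic service discovery with Consul in cloud-native environments. Keywords: cloud native, service discovery, Consul, microservices, dynamic registration, health checks, Raft algorithm. Abstract: This article takes an in-depth look at the core principles and practices of Consul for dynamic service discovery in cloud-native environments. It dissects Consul's architecture, core algorithms, and key mechanisms, and uses concrete code examples to walk through the full flow of service registration, discovery, and health checking. It details integration with cloud-native stacks such as Kubernetes and Docker, and analyzes, in real-world scenarios, the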

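- Containerization technologies: Kubernetes (k8s), Pod, Docker containers

人工干智能

Advanced Docker, kubernetes, docker, containers

Three related containerization technologies: Kubernetes (k8s), Pods, and Docker containers each play a different role in containerization; they differ from one another yet are interrelated. Kubernetes (k8s). Definition: Kubernetes is an open-source container orchestration platform for automating the deployment, scaling, and management of containerized applications. Features: it provides powerful tools and capabilities, such as service discovery, load balancing, autoscaling, and rolling updates, that help users manage complex container environments more efficiently. Architecture: based on control theory and feedback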

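- How to implement single sign-on and session sharing in a distributed Java environment

在远方的你等我

In a single-server web application, the logged-in user's information only needs to live in that server's session; this was the most common approach a few years ago. Today, with distributed systems widespread and microservices mainstream, a single service handles login and stores the session, and then, when high-concurrency requests that need login verification reach the server side, load balancing dispatches them to some server in the cluster. Multiple requests from the same user may therefore land on different servers in the cluster, and the session data cannot be found, so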

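- Distributed systems study notes_04_Replication models

NzuCRAS

Distributed systems study notes, architecture, backend

Common replication models. Why replicate: in a distributed system, data usually needs to be spread across multiple machines, mainly to achieve the following. Scalability: when the data volume or the read/write load is too large for one machine to handle, spreading data across machines still allows effective load balancing and flexible horizontal scaling. High fault tolerance and high availability: single-machine failures are the norm in distributed systems; to keep the overall system working when one machine fails, data must be stored redundantly on multiple machines so that others can take over. Consistent user experience: if the system's clients are distributed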

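- php SPOF

贵哥的编程之路(热爱分享 为后来者)

100 classic PHP programs, php, programming languages

1. What is a single point of failure (SPOF)? A single point of failure is a component whose failure makes the whole system or service unavailable. Common single points include databases, caches, web servers, load balancers, and network devices. 2. Common SPOF scenarios: only one database server, so all business is unavailable when it goes down; only one Redis cache, so the entire cache is lost when it dies; only one web server, so the site is unreachable when it dies; only one load-balancer node, so traffic cannot be distributed when it dies; only one network link, so every service is cut off when it breaks. 3. Eliminating single points of failure: the main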

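- ZooKeeper architecture and use cases explained

走过冬季

Study notes, zookeeper, architecture, distributed systems

ZooKeeper is an open-source distributed coordination service maintained by the Apache Software Foundation. It aims to provide distributed applications with a high-performance, highly available, strongly consistent foundation service, solving coordination problems common in distributed systems (such as configuration management, naming services, distributed locks, service discovery, and leader election). Core software architecture: ZooKeeper's architecture is optimized around its core goal, coordination, and consists mainly of the following key components. Cluster mode (Ensemble): ZooKeeper is usually deployed as a cluster (called an ensemble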

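- [Personal notes] Load balancing

撰卢

Notes, load balancing, operations

Contents: Benefits of an nginx reverse proxy; Load balancing; How to configure load balancing; Load-balancing methods. Benefits of an nginx reverse proxy: faster access; load balancing; protection for backend services. Load balancing: load balancing distributes a large number of requests evenly, in the manner we specify, across every server in the cluster. How to configure load balancing: upstream webservers { server 192.168.100.128:8080; server 192.168.100.129:8080; } server { lis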

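- Spring Boot basics

小李是个程序

springboot, backend, java

5. Spring Boot configuration explained. 5.1 Basics. Server port: server.port=8080 (the application listens on port 8080 after startup). Application name: spring.application.name=Chat64 (the identifier used when registering with service discovery and in similar scenarios). 5.2 Database connection (MySQL). URL: jdbc:mysql://localhost:3306/ai-chat (connects to the ai-chat database on local port 3306, with parameters for time zone, encoding, and so on). Driver: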

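- Front-end interview questions: 5. What are the drawbacks of AJAX?

浅端

Front-end interview questions

① In traditional web interaction, each user action on a page sends an HTTP request to the server; the server processes it and returns a complete HTML page, which the client then reloads, wasting a great deal of bandwidth. ② AJAX solved this problem: it requests from the server only the data the user needs, processes the returned data on the client with JavaScript, and updates the page by manipulating the DOM. ③ Advantages of AJAX: page updates without a refresh; asynchronous communication with the server; load balanced between front end and back end. ④ Drawbacks of AJAX: it kills the Back and Hist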

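- [JAVA] The SPI mechanism

小白杨树树

java, microsoft, programming languages

In Java, SPI (ServiceProviderInterface) is a key service discovery mechanism. At its core, it lets service providers register their implementations with the system dynamically at run time, decoupling a service interface from its concrete implementations. For example, if an RPC framework you are developing defines a serializer interface but you want users to be able to plug in serializers they have implemented themselves, you can use the SPI mechanism. JAVA has this SPI capability built in. Core concepts explained: Service interface (ServiceInterf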

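- 20250707-3-Kubernetes core concepts: with Docker, why still use K8s_notes

Andy杨

CKA column, kubernetes, docker, notes

I. Kubernetes core concepts. 1. With Docker, why still use Kubernetes? 1) Enterprise needs. Isolation problem: Docker containers are essentially standalone, and there is no unified management mechanism when multiple containers serve traffic across hosts. Load balancing: to increase concurrency and availability, enterprises deploy multiple container instances across several servers, but Docker itself has no load-balancing capability. Management complexity: as the number of Docker hosts and containers grows, unified management of deployment, upgrades, monitoring, and so on becomes difficult. Operations efficiency: single-host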

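- An instrumentation approach for microservice tracing with SkyWalking

MenzilBiz

Servers, operations, microservices, skywalking

An instrumentation approach for microservice tracing with SkyWalking. I. Introduction to SkyWalking. SkyWalking is an open-source APM (application performance monitoring) system designed especially for microservices, cloud-native architectures, and containerized (Docker/Kubernetes) applications. Its main features include distributed tracing, service mesh telemetry analysis, metric aggregation, and visualization. SkyWalking supports multiple languages (Java, Go, Python, and others) and protocols (HTTP, gRPC, and others) and can provide end-to-end call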

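- Microservice load balancing with Spring Boot on the back end

AI大模型应用实战

springboot, microservices, load balancing, ai

Microservice load balancing with SpringBoot on the back end. Keywords: SpringBoot, microservices, load balancing, Ribbon, service discovery, high availability, distributed systems. Abstract: This article explores in depth how to implement load balancing with SpringBoot in a microservice architecture. Starting from the basic concepts, it analyzes the core principles of load balancing in detail, introduces key components of the SpringCloud ecosystem (such as Ribbon and Eureka), and uses complete code examples to show how to achieve efficient load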

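- A description of the call chain of a client request in the Spring Cloud Alibaba framework, including Nginx, Gateway, Nacos, Dubbo, Sentinel, RocketMQ, and Seata

飞升不如收破烂~

nginx, gateway, dubbo

Below is a more detailed and clearer description of the call chain of a client request in the SpringCloudAlibaba framework, including Nginx, Gateway, Nacos, Dubbo, Sentinel, RocketMQ, and Seata. 1. Client request: the user issues a request from a browser or mobile app (for example, an API request to fetch user information), and the request is sent to the server over HTTP. 2. Nginx processing. Entry point: the request first reaches Nginx. Load balancing: - Based on the configured load-balancing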

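- The complete guide to Nginx configuration: from basics to advanced tuning

Nginx is a high-performance HTTP and reverse proxy server widely used for web serving, load balancing, and static asset hosting. Its configuration file is usually located at /etc/nginx/nginx.conf or /usr/local/nginx/conf/nginx.conf; the configuration is clearly structured, and its modular design makes it easy to manage. Configuration file structure: the Nginx configuration file consists mainly of the global block, the events block, and the http block. The global block holds settings that affect the whole server, the events block holds settings related to network connections,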

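- WebLogic: role, vulnerability principles, traffic signatures, and defenses

Bigliuzi@

Advanced vulnerabilities, weblogic, security

WebLogic's core role: an enterprise-grade application server, essentially a high-performance java environment. Main functions: application deployment, transaction management, clustering and load balancing, security control, resource pooling, message middleware. Typical use cases: core banking systems, telecom billing platforms, e-commerce sales-event platforms. Main vulnerabilities: T3 deserialization, IIOP deserialization, XML deserialization, unauthorized access. Traffic signatures: T3 protocol attack signatures, unauthorized access signatures, deserialization attack signatures. Impact: remote code execution with full control of the server (dropping databases, installing backdoors), data leakage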

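- How do you become a professional programmer?

cocos2d-x小菜

Programming, PHP

How do you become a professional programmer? Being able to write code is not enough. From working in a team to solve problems to version control, you also need a toolkit of other key skills. When we asked professional developers what those essential skills are, here is what we learned.

There are many voices on how to learn to code, and many people are misled into thinking that knowing one programming language is enough to become a professional developer. As with any other job, a single skill is far from enough. If you want to become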

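- java web development: handling high concurrency

BreakingBad

java, Web, concurrency, development, processing, high

How to design the database for high-concurrency, high-load websites in java (java tutorial, java handling large data volumes, java high-load data). 1. Concerns for high-concurrency, high-load websites: the database. That's right, the database comes first; it is the first SPOF most applications face. For Web2.0 applications especially, database response is the first thing to fix. MySQL is generally the most commonly used; a site may start with a single mysql host, and once the data grows past one million rows, MySQL performance drops sharply. A common optimization is M-S (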

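- mysql batch updates

ekian

mysql

mysql update optimization:

Updates are generally done with update set, but for a batch update you can only run the updates in a for loop, or use executeBatch. Either way, performance is not great.

Updating a little over three thousand rows took more than three minutes.

I looked into optimizing batch updates; one suggestion is replace into, that is: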

replace into tableName(id,status) values

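- Microsoft BI (3)

18289753290

Microsoft BI, SSIS

1)

Q: The column violated an integrity constraint; an OLE DB record is available. Source: "Microsoft SQL Server Native Client 11.0" Hresult: 0x80004005 Description: "Cannot insert the value NULL into column 'FZCHID', table 'JRB_EnterpriseCredit.dbo.QYFZCH'; the column does not allow Null values. INSERT failed."

A: Problems like this usually arise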

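- List in Java

g21121

java

A List is an ordered collection (also known as a sequence). Users of this interface have precise control over where in the list each element is inserted. Users can access elements by their integer index (position in the list) and search for elements in the list.

Unlike sets, lists typically allow duplicate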

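- Reading notes

永夜-极光

Reading notes

1. K is a processing plant that needs to buy raw materials. There are four suppliers, A, B, C, and D; A offers the lowest price and the best value for money. If you were the company's purchasing manager, what would you decide?

Traditional decision: A: 100% of orders; B, C, D: 0%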

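- Installing Codeblocks on centos

随便小屋

codeblocks

1. Install gcc; both the C and C++ parts are needed. A default CentOS installation does not include a compiler, so run the following commands in a terminal: yum install gcc and yum install gcc-c++

2. Install gtk2-devel: the runtime support libraries are installed by default, but the files needed for development are not. yum install gtk2*

3. Install wxGTK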

yum search w

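- Vivid analogies for the 23 design patterns

aijuans

Design patterns

1. ABSTRACT FACTORY: when courting a girl you can't avoid treating her to a meal. McDonald's chicken wings and KFC's chicken wings are both things she likes; they taste a little different, but whether you take her to McDonald's or KFC, you just tell the server "four chicken wings." McDonald's and KFC are the Factories that produce chicken wings. Factory pattern: the client class is separated from the factory class. Whenever a consumer needs a product, it simply asks the factory. Consumers can accept new products without modification. The drawback is that when a product changes, the factory class must change accordingly. For example: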

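- Development management CheckLists

aoyouzi

Development management, CheckLists

Development management CheckLists (23) - Taking the project team through the full life cycle

Development management CheckLists (22) - Organizing project resources

Development management CheckLists (21) - Controlling project scope

Development management CheckLists (20) - Project stakeholder responsibilities

Development management CheckLists (19) - Choosing the right team members

Development management CheckLists (18) - The agile Scrum Master's work

Development management C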

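- Tab switching with js

百合不是茶

JavaScript, section switching

One of the main uses of js is page effects. Switching panels within a page keeps page size down and is widely used on portal sites. Approach:

1. First make the section to be shown visible through its display property; set the others to display:none

2. Give each section an id; js reads the section id, and if the id is Null the section is shown

3. Check the id name obtained in js, then set whether to show it

Implementation:

html code: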

<di

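- Zhou Hongyi's speech at 360's new-employee onboarding training

bijian1013

Reflections, project management, life, workplace

I also came across this article by chance recently. Since the original blogger might delete it, and to make it easier for me to find, I am reposting a copy of the original. "What you learn is for yourself. Don't spend your days playing games with the company by coasting; even if you coast, I think it's not bad if, in that time, you gain something else that benefits your life. It depends on how you handle it." That line has stayed fresh in my memory since I read it.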

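- Page effects in front-end Web development

Bill_chen

html, Web, Microsoft

1. Displaying transparent png images in IE6:

<img src="image URL" border="0" style="Filter.Alpha(Opacity)=value(100),style=value(3)"/>

Or add a piece of JS code between <head></head> so that transparent png images display correctly.

2. <li> tag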

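- [JVM 5] Old-generation garbage collection: concurrent mark-sweep GC (CMS GC)

bit1129

Garbage collection

CMS overview

The main goal of the concurrent mark-sweep garbage collection (Concurrent Mark and Sweep GC) algorithm is to reduce the number of times application threads are paused during GC and, when a pause is unavoidable, to keep it as short as possible. This matters a great deal for interactive applications such as web applications.

CMS uses different strategies for the young generation and the old generation. It is far more complex than the throughput collector. The throughput collector, while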

- Struts2 technical summary

白糖_

struts2

Required jar files

Back in the struts2.0.* days, struts2 required the following jars:

commons-logging-*.jar: the logging package from Apache's commons project

freemarker-*.jar

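- Notes on using Jquery easyui layout

bozch

jquery, browsers, easyui, layout

jquery easyui provides the easyui-layout layout. It is fairly limited and resembles the border layout in java GUIs. Here is a brief look at what to watch out for when using it:

If the front-end pages of an existing project all use jquery easyui, it is best to use jquery easyui's layout for page layout as well; otherwise form pages (edit, view, add, and so on) will, in different browsers,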

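- java - copying a special linked list: each node has not only a pNext pointer to the next node but also a pRand pointer to an arbitrary node in the list; how do you copy this special list?

bylijinnan

java

public class CopySpecialLinkedList {

/**

* Problem: there is a special linked list in which each node has not only a pNext pointer to the next node but also a pRand pointer to an arbitrary node in the list. How do you copy this special list?

Copying the pNext pointers is easy, so the hard part of the problem is copying the pRand pointers.

Suppose the original list is A1 -> A2 ->... -> An, and the new copy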

- color

Chen.H

JavaScript, html, css

<!DOCTYPE HTML PUBLIC "-//W3C//DTD HTML 4.01 Transitional//EN" "http://www.w3.org/TR/html4/loose.dtd"> <HTML> <HEAD>

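- [Information and war] Mobile communications and networks

comsci

Networking

Two things to insist on: phone batteries must be removable

Fiber must not enter the home; it may only reach the building

I suggest everyone find this book and read it: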

- oracle flashback query

daizj

oracle, flashback query, flashback table

In Oracle 10g, the Flashback family has the following members:

Flashback Database

Flashback Drop

Flashback Table

Flashback Query (comprising Flashback Query, Flashback Version Query, and Flashback Transaction Query)

The following introduces Flashback Drop and Flas

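- Unit testing the zeus persistence-layer DAO

deng520159

Unit testing

Testing of the zeus code is under way, but because work is busy, progress is slow. The read/write-splitting unit tests are now finished, and I am posting examples of unit tests for several cases in the hope that people will offer suggestions and help the project keep going.

This article covers zeus's dao unit tests:

1. Unit tests: straight to the code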

package com.dengliang.zeus.webdemo.test;

import org.junit.Test;

import o

- Learning C, part 3: the printf and scanf functions

dcj3sjt126com

c, printf, scanf, language

The printf function

/*

March 10, 2013, 20:42:32

Location: Panjiayuan, Beijing

Function:

Purpose:

Test the usage of %x %X %#x %#X

*/

# include <stdio.h>

int main(void)

{

printf("哈哈!\n"); // \n means newline

int i = 10;

printf

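- So why didn't you study hard when you were little?

dcj3sjt126com

life

Daddy, I found ten yuan today, but I gave it back to the person

good girl! Did the person say thank you to you?

No.... I only gave him the money back because he pulled my ear; why would he say thank you to me

Daddy, if there were a 5-yuan note and a 10-yuan note on the ground, which would you pick up....

The ten, of course...

Daddy, you're so silly; can't you just take both

Daddy, why, when that man came to ask you for money last month, did you tell him you had no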

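- Opening a port with iptables

Fanyucai

linux, iptables, ports

1. Locate the configuration file

vi /etc/sysconfig/iptables

2. Open the port by adding one line, here opening port 18081

-A INPUT -m state --state NEW -m tcp -p tcp --dport 18081 -j ACCEPT

3. Save

ESC

:wq!

4. Restart the service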

service iptables

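- Ehcache (05): querying the cache

234390216

Sorting, ehcache, statistics, query

Querying the cache

Contents

1. Making a Cache searchable

1.1 XML-based configuration

1.2 Code-based configuration

2 Specifying searchable attributes

2.1 Searchable attribute types

2.2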

- Finding duplicate elements in an array with a hashset

jackyrong

hashset

How can you quickly find duplicate elements with a hashset? There are many ways; here is one:

int[] array = {1,1,2,3,4,5,6,7,8,8};

Set<Integer> set = new HashSet<Integer>();

for(int i = 0

- Changing page content and the address-bar URL without a refresh using ajax and window.history.pushState

lanrikey

history

Close the current page on back navigation

<script type="text/javascript">

jQuery(document).ready(function ($) {

if (window.history && window.history.pushState) {

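- The communication cost of applications

netkiller.github.com

Virtual machines, application servers, 陈景峰, netkiller, neo

The communication cost of applications

What is communication

Communication is when two or more functions within a program pass signals or data to one another.

What is cost

Here it means time cost and space cost. Time is the time spent transferring the data; space is the amount of capacity consumed during the transfer.

What communication methods are there

Global variables

Inter-thread communication

Shared memory

Shared files

Pipes

Socket

Hardware (serial port, USB), and so on

Global variables

Global variables are the lowest-cost communication method; by setting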

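- Declaring and defining one-dimensional and two-dimensional arrays

恋洁e生

Two-dimensional arrays, one-dimensional arrays, definition, declaration, initialization

/** * */ package test20111005; /** * @author FlyingFire * @date:2011-11-18 04:33:36 AM * @author :code cleanup * @introduce :initializing one-dimensional and two-dimensional arrays *summary: */ public c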

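- Configuring independent transactions in Spring Mybatis

toknowme

mybatis

Independent transactions are used in many places in a project; the example below obtains a primary key

(1) Modify the configuration file spring-mybatis.xml <!-- enable transaction support --> <tx:annotation-driven transaction-manager="transactionManager" />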

- After updating Android SDK Tools, Eclipse reports No update were found

xp9802

eclipse

After updating Android SDK Tools with the Android SDK Manager,

opening eclipse shows: This Android SDK requires Android Developer Toolkit version 23.0.0 or above. Click Check for Updates

After checking for a while, it reports No update were found