HBU Neural Networks and Deep Learning, Experiment 10, Convolutional Neural Networks: Image Classification with ResNet18

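Contents

- Preliminary notes

- I. Practice: Image classification with ResNet18

- 1. Data processing

- (1) Dataset overview

- (2) Reading the data

- (3) Building the Dataset class

- 2. Model construction

- 3. Model training

- 4. Model evaluation

- 5. Model prediction

- II. Experiment Q&A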

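Preliminary notes

- This is the report for Experiment 10 of the HBU Neural Networks and Deep Learning lab course (autumn 2022). Its content largely follows [1]Github/卷积神经网络-下.ipynb; when looking up a reference, use its number.

- The experiment is written in Python 3.10, using PyCharm.

- Heading levels in this report run in the order: I. 1. (1)

- My knowledge is limited and mistakes are likely; corrections are welcome.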

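I. Practice: Image classification with ResNet18

In this practice, we work through a more general image classification task.

Image classification is a fundamental task in computer vision: it assigns images to categories according to their semantic content. Many other tasks can be recast as image classification. Face detection, for example, decides whether a region contains a face, so it can be treated as a binary image classification task.

Here we use a classic computer vision dataset, CIFAR-10, with ResNet18 as the network, cross-entropy as the loss function, Adam as the optimizer, and accuracy as the evaluation metric.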

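1. Data processing

(1) Dataset overview

The CIFAR-10 dataset contains 60,000 images in 10 classes, with 6,000 images per class; every image is 32 × 32 pixels. Examples from CIFAR-10 are shown in Figure 15.

Extract the dataset archive.

No code is needed for this step: after downloading the file, extract it directly into the project root directory.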

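(2) Reading the data

In this experiment the original training set is split into two parts, train_set and dev_set, with 40,000 and 10,000 samples respectively. data_batch_1 to data_batch_4 form the training set, data_batch_5 is the validation set, and test_batch is the test set. The final composition is:

- Training set: 40,000 samples.

- Validation set: 10,000 samples.

- Test set: 10,000 samples.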
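The split above can be written down as a small lookup. This is only a sketch: `batch_files_for` and `SAMPLES_PER_BATCH` are hypothetical names introduced here for illustration and do not appear in the original code.

```python
# Hypothetical helper (not part of the original code): which CIFAR-10
# batch files belong to each split used in this report.
SAMPLES_PER_BATCH = 10_000  # each CIFAR-10 batch file holds 10,000 images


def batch_files_for(mode):
    if mode == 'train':
        return ['data_batch_' + str(i) for i in range(1, 5)]
    if mode == 'dev':
        return ['data_batch_5']
    if mode == 'test':
        return ['test_batch']
    raise ValueError("unknown mode: " + repr(mode))


# Number of samples in each split, derived from the file counts
split_sizes = {mode: len(batch_files_for(mode)) * SAMPLES_PER_BATCH
               for mode in ('train', 'dev', 'test')}
print(split_sizes)
```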

The code for reading one batch of data is as follows:

import os
import pickle
import numpy as np


def load_cifar10_batch(folder_path, batch_id=1, mode='train'):
    # The test split is a single file; the other batches are numbered 1 to 5
    if mode == 'test':
        file_path = os.path.join(folder_path, 'test_batch')
    else:
        file_path = os.path.join(folder_path, 'data_batch_' + str(batch_id))

    # Load the dataset file
    with open(file_path, 'rb') as batch_file:
        batch = pickle.load(batch_file, encoding='latin1')

    # Reshape each flat row to (3, 32, 32) and scale the pixel values to [0, 1]
    imgs = batch['data'].reshape((len(batch['data']), 3, 32, 32)) / 255.
    labels = batch['labels']

    return np.array(imgs, dtype='float32'), np.array(labels)


imgs_batch, labels_batch = load_cifar10_batch(folder_path='./cifar-10-batches-py', batch_id=1, mode='train')

Check the dimensions of the data:

print("batch of imgs shape: ", imgs_batch.shape, "batch of labels shape: ", labels_batch.shape)

Output:

batch of imgs shape: (10000, 3, 32, 32) batch of labels shape: (10000,)
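To make the shape above concrete, here is a minimal sketch of the same reshape on synthetic data. `fake_data` is a made-up stand-in for `batch['data']`, so no dataset files are needed; the only assumption about CIFAR-10 is its documented layout, where each row stores 1,024 red, then 1,024 green, then 1,024 blue values.

```python
import numpy as np

# Synthetic stand-in for batch['data']: 2 images, 3072 uint8 values each
fake_data = (np.arange(2 * 3072) % 256).astype(np.uint8).reshape(2, 3072)

# Same reshape and scaling as load_cifar10_batch: (N, 3072) -> (N, 3, 32, 32)
imgs = (fake_data.reshape((len(fake_data), 3, 32, 32)) / 255.).astype('float32')

# matplotlib's imshow expects (H, W, C), so the channel axis moves last
hwc = imgs[0].transpose(1, 2, 0)

print(imgs.shape, hwc.shape)
```

The first 1,024 values of a row end up as channel 0 of the image, which is why a plain reshape (with no transpose) is enough to reach the channel-first layout.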

Visualize one sample image together with its label:

import matplotlib.pyplot as plt

image, label = imgs_batch[1], labels_batch[1]
print("The label in the picture is {}".format(label))
plt.figure(figsize=(2, 2))
# imshow expects (H, W, C), so move the channel axis to the end
plt.imshow(image.transpose(1, 2, 0))
plt.savefig('cnn-car.pdf')
plt.show()

Output:

The label in the picture is 9
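The printed label is an integer index. A small lookup turns it into a readable class name; the list below follows the standard CIFAR-10 class order, and `CIFAR10_CLASSES` and `label_name` are names chosen here for illustration.

```python
# Standard CIFAR-10 class order (index -> name)
CIFAR10_CLASSES = ['airplane', 'automobile', 'bird', 'cat', 'deer',
                   'dog', 'frog', 'horse', 'ship', 'truck']


def label_name(label):
    # int() also accepts NumPy integer scalars such as labels_batch[1]
    return CIFAR10_CLASSES[int(label)]


print(label_name(9))  # truck
```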

(3) Building the Dataset class

Build a CIFAR10Dataset class that inherits from torch.utils.data.Dataset and processes the samples one at a time. The implementation is as follows:

import torch
import torch.utils.data as io
from torchvision.transforms import Compose, ToTensor, Normalize


class CIFAR10Dataset(io.Dataset):
    def __init__(self, folder_path='./cifar-10-batches-py', mode='train'):
        if mode == 'train':
            # Load batch 1 to batch 4 as the training set
            self.imgs, self.labels = load_cifar10_batch(folder_path=folder_path, batch_id=1, mode='train')
            for i in range(2, 5):
                imgs_batch, labels_batch = load_cifar10_batch(folder_path=folder_path, batch_id=i, mode='train')
                self.imgs, self.labels = np.concatenate([self.imgs, imgs_batch]), np.concatenate(
                    [self.labels, labels_batch])
        elif mode == 'dev':
            # Load batch 5 as the validation set
            self.imgs, self.labels = load_cifar10_batch(folder_path=folder_path, batch_id=5, mode='dev')
        elif mode == 'test':
            # Load the test set
            self.imgs, self.labels = load_cifar10_batch(folder_path=folder_path, mode='test')
        # Convert to a (C, H, W) tensor, then normalize each channel
        self.transform = Compose([ToTensor(), Normalize(mean=[0.4914, 0.4822, 0.4465], std=[0.2023, 0.1994, 0.2010])])

    def __getitem__(self, idx):
        img, label = self.imgs[idx], self.labels[idx]
        # ToTensor expects (H, W, C) input
        img = img.transpose(1, 2, 0)
        img = self.transform(img)
        return img, label

    def __len__(self):
        return len(self.imgs)


torch.manual_seed(100)
train_dataset = CIFAR10Dataset(folder_path='./cifar-10-batches-py', mode='train')
dev_dataset = CIFAR10Dataset(folder_path='./cifar-10-batches-py', mode='dev')
test_dataset = CIFAR10Dataset(folder_path='./cifar-10-batches-py', mode='test')
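The Normalize step in the transform subtracts a per-channel mean and divides by a per-channel standard deviation. The sketch below reproduces that arithmetic in NumPy on a synthetic image. It is a stand-in for illustration, not the torchvision implementation, and `normalize_chw` is a hypothetical name.

```python
import numpy as np

# Per-channel statistics used in CIFAR10Dataset
mean = np.array([0.4914, 0.4822, 0.4465], dtype=np.float32)
std = np.array([0.2023, 0.1994, 0.2010], dtype=np.float32)


def normalize_chw(img_chw):
    # img_chw: float array of shape (3, H, W) with values in [0, 1].
    # Broadcasting applies one mean/std pair to each channel.
    return (img_chw - mean[:, None, None]) / std[:, None, None]


# Synthetic mid-gray image, already in channel-first layout
img = np.full((3, 32, 32), 0.5, dtype=np.float32)
out = normalize_chw(img)
print(out.shape, out[:, 0, 0])
```

Because the images are already float32 in [0, 1] when they reach the transform, ToTensor only changes the layout from (H, W, C) to (C, H, W); it rescales by 255 only for uint8 input.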

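2. Model construction

Use resnet18 from the torchvision.models API for the image classification experiment.

from torchvision.models import resnet18

# pretrained=True loads ImageNet weights (newer torchvision versions prefer the weights= argument)
resnet18_model = resnet18(pretrained=True)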

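3. Model training

Reuse the RunnerV3 class: instantiate it and pass in the training configuration. The model is trained on the training set and evaluated on the validation set, for 3 epochs in total. During training, the model with the highest validation accuracy is saved as the best model. The implementation is as follows: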

import torch.nn.functional as F
import torch.optim as opt
import time

start = time.perf_counter()


class RunnerV3(object):
    def __init__(self, model, optimizer, loss_fn, metric, **kwargs):
        self.model = model
        self.optimizer = optimizer
        self.loss_fn = loss_fn
        self.metric = metric  # used only to compute the evaluation metric

        # History of the evaluation metric during training
        self.dev_scores = []

        # History of the loss during training
        self.train_epoch_losses = []  # one loss value per epoch
        self.train_step_losses = []  # one loss value per step
        self.dev_losses = []

        # Best metric value seen so far
        self.best_score = 0

    def train(self, train_loader, dev_loader=None, **kwargs):
        # Switch the model to training mode
        self.model.train()

        # Number of training epochs; defaults to 0 if not given
        num_epochs = kwargs.get("num_epochs", 0)
        # Logging frequency; defaults to 100 if not given
        log_steps = kwargs.get("log_steps", 100)
        # Evaluation frequency
        eval_steps = kwargs.get("eval_steps", None)
        # Path for saving the model; defaults to "best_model.pdparams" if not given
        save_path = kwargs.get("save_path", "best_model.pdparams")

        custom_print_log = kwargs.get("custom_print_log", None)

        # Total number of training steps
        num_training_steps = num_epochs * len(train_loader)

        if eval_steps:
            assert self.metric and dev_loader
        do_eval = eval_steps and self.metric and dev_loader

        # Number of steps run so far
        global_step = 0

        # Train for num_epochs epochs
        for epoch in range(num_epochs):
            # Accumulates the training loss over the epoch
            total_loss = 0
            for step, data in enumerate(train_loader):
                X, y = data
                # Forward pass
                logits = self.model(X.to(device))
                loss = self.loss_fn(logits, y.long().to(device))  # mean reduction by default
                # Accumulate a plain float so no autograd graph is kept alive
                total_loss += loss.item()

                # Record the loss of every training step
                self.train_step_losses.append((global_step, loss.item()))

                if global_step % log_steps == 0:
                    print(
                        f"[Train] epoch: {epoch}/{num_epochs}, step: {global_step}/{num_training_steps}, loss: {loss.item():.5f}")

                # Backpropagate to compute the gradient of every parameter
                loss.backward()

                if custom_print_log:
                    custom_print_log(self)

                # Update the parameters (mini-batch gradient descent)
                self.optimizer.step()
                # Reset the gradients to zero
                self.optimizer.zero_grad()

                # Check whether it is time to evaluate
                if do_eval and (global_step % eval_steps == 0):
                    dev_score, dev_loss = self.evaluate(dev_loader, global_step=global_step)
                    print(f"[Evaluate] dev_score: {dev_score:.5f}, dev_loss: {dev_loss:.5f}")

                    # Switch the model back to training mode
                    self.model.train()

                    # Save the model if the current metric is the best so far
                    if dev_score > self.best_score:
                        self.save_model(save_path)
                        print(f"best accuracy performence has been updated: {self.best_score:.5f} --> {dev_score:.5f}")
                        self.best_score = dev_score

                global_step += 1

            # Mean training loss over the current epoch
            trn_loss = total_loss / len(train_loader)
            # Record the epoch-level training loss
            self.train_epoch_losses.append(trn_loss)

        print("[Train] Training done!")

    # During evaluation, torch.no_grad() disables gradient computation and storage
    @torch.no_grad()
    def evaluate(self, dev_loader, **kwargs):
        assert self.metric is not None

        # Switch the model to evaluation mode
        self.model.eval()

        global_step = kwargs.get("global_step", -1)

        # Accumulates the loss over the evaluation set
        total_loss = 0

        # Reset the metric
        self.metric.reset()

        # Iterate over every batch of the evaluation set
        for batch_id, data in enumerate(dev_loader):
            X, y = data
            # Compute the model output
            logits = self.model(X.to(device))
            # Compute the loss
            loss = self.loss_fn(logits, y.long().to(device)).item()
            # Accumulate the loss
            total_loss += loss
            # Accumulate the metric on the CPU, where the labels live
            self.metric.update(logits.cpu(), y)

        dev_loss = (total_loss / len(dev_loader))
        self.dev_losses.append((global_step, dev_loss))

        dev_score = self.metric.accumulate()
        self.dev_scores.append(dev_score)

        return dev_score, dev_loss

    # During prediction, torch.no_grad() disables gradient computation and storage
    @torch.no_grad()
    def predict(self, x, **kwargs):
        # Switch the model to evaluation mode
        self.model.eval()
        # Run the forward pass to get the predictions
        logits = self.model(x.to(device))
        return logits

    def save_model(self, save_path):
        torch.save(self.model.state_dict(), save_path)

    def load_model(self, model_path):
        model_state_dict = torch.load(model_path)
        self.model.load_state_dict(model_state_dict)

class Accuracy():
    def __init__(self, is_logist=True):
        """
        Input:
            - is_logist: whether outputs are logits (True) or values after the activation (False)
        """
        # Number of correctly predicted samples
        self.num_correct = 0
        # Total number of samples
        self.num_count = 0

        self.is_logist = is_logist

    def update(self, outputs, labels):
        """
        Input:
            - outputs: predictions, shape=[N,class_num]
            - labels: labels, shape=[N,1]
        """
        # Binary task when shape[1] == 1, multi-class task when shape[1] > 1
        if outputs.shape[1] == 1:  # binary classification
            outputs = torch.squeeze(outputs, dim=-1)
            if self.is_logist:
                # For logits, threshold at 0
                preds = (outputs >= 0).float()
            else:
                # For probabilities, threshold at 0.5: class 1 if at least 0.5, otherwise class 0
                preds = (outputs >= 0.5).float()
        else:
            # Multi-class: torch.argmax gives the index of the largest element as the class
            preds = torch.argmax(outputs, dim=1)

        # Number of correct predictions in this batch
        labels = torch.squeeze(labels, dim=-1)
        batch_correct = (preds == labels).float().sum().item()
        batch_count = len(labels)

        # Update num_correct and num_count
        self.num_correct += batch_correct
        self.num_count += batch_count

    def accumulate(self):
        # Compute the overall metric from the accumulated counts
        if self.num_count == 0:
            return 0
        return self.num_correct / self.num_count

    def reset(self):
        # Reset the correct count and the total count
        self.num_correct = 0
        self.num_count = 0

    def name(self):
        return "Accuracy"

# Select the device to run on
device = torch.device("cuda" if torch.cuda.is_available() else "cpu")
print(device)

# Learning rate
lr = 0.001
# Batch size
batch_size = 64

# Load the data
train_loader = io.DataLoader(train_dataset, batch_size=batch_size, shuffle=True)
dev_loader = io.DataLoader(dev_dataset, batch_size=batch_size)
test_loader = io.DataLoader(test_dataset, batch_size=batch_size)

# Define the network and move it to the selected device
model = resnet18_model.to(device)

# Define the optimizer: Adam with L2 regularization (weight decay); both are covered in detail in Sections 7.3.3.2 and 7.6.2
optimizer = opt.Adam(lr=lr, params=model.parameters(), weight_decay=0.005)

# Define the loss function
loss_fn = F.cross_entropy
# Define the evaluation metric
metric = Accuracy(is_logist=True)
# Instantiate RunnerV3
runner = RunnerV3(model, optimizer, loss_fn, metric)

# Start training
log_steps = 300
eval_steps = 300
runner.train(train_loader, dev_loader, num_epochs=3, log_steps=log_steps,
             eval_steps=eval_steps, save_path="best_model.pdparams")

end = time.perf_counter()
print("Elapsed time (s):", end - start)

Output:

cpu

[Train] epoch: 0/3, step: 0/1875, loss: 12.96724

[Evaluate] dev_score: 0.00130, dev_loss: 9.34741

best accuracy performence has been updated: 0.00000 --> 0.00130

[Train] epoch: 0/3, step: 300/1875, loss: 1.07171

[Evaluate] dev_score: 0.60550, dev_loss: 1.17587

best accuracy performence has been updated: 0.00130 --> 0.60550

[Train] epoch: 0/3, step: 600/1875, loss: 1.13140

[Evaluate] dev_score: 0.63220, dev_loss: 1.10873

best accuracy performence has been updated: 0.60550 --> 0.63220

[Train] epoch: 1/3, step: 900/1875, loss: 1.07615

[Evaluate] dev_score: 0.66010, dev_loss: 0.97729

best accuracy performence has been updated: 0.63220 --> 0.66010

[Train] epoch: 1/3, step: 1200/1875, loss: 1.00880

[Evaluate] dev_score: 0.69780, dev_loss: 0.90015

best accuracy performence has been updated: 0.66010 --> 0.69780

[Train] epoch: 2/3, step: 1500/1875, loss: 1.03829

[Evaluate] dev_score: 0.69600, dev_loss: 0.91976

[Train] epoch: 2/3, step: 1800/1875, loss: 1.04858

[Evaluate] dev_score: 0.65900, dev_loss: 0.99803

[Train] Training done!

Elapsed time (s): 1257.6101669
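The step counts in the log follow directly from the configuration: 40,000 training samples, a batch size of 64, 3 epochs, and an evaluation every 300 steps. A short check, with variable names chosen here for illustration:

```python
import math

train_samples = 40_000
batch_size = 64
num_epochs = 3
eval_steps = 300

# Steps per epoch is the number of batches the DataLoader yields
steps_per_epoch = math.ceil(train_samples / batch_size)
total_steps = steps_per_epoch * num_epochs

# Global steps at which logging and evaluation happen (step % 300 == 0)
eval_points = list(range(0, total_steps, eval_steps))
# Epoch index printed next to each of those steps
epochs_at_eval = [s // steps_per_epoch for s in eval_points]

print(steps_per_epoch, total_steps)  # 625 1875
print(eval_points)
print(epochs_at_eval)
```

This matches the log: 1875 total steps, seven evaluations, and the epoch counter moving to 1 at step 900 and to 2 at step 1500.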

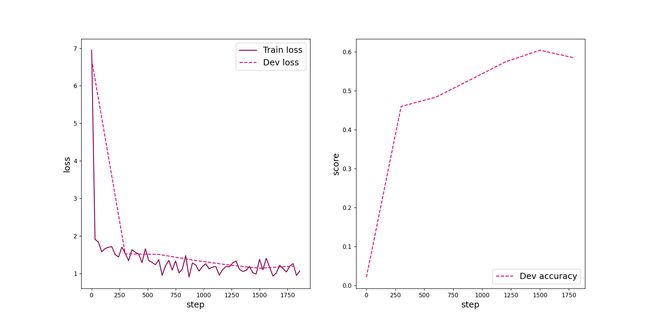

Visualize how the loss on the training and validation sets and the validation accuracy change during training.

def plot(runner, fig_name):
    plt.figure(figsize=(10, 5))

    plt.subplot(1, 2, 1)
    # Keep every 30th step-level training loss so the curve stays readable
    train_items = runner.train_step_losses[::30]
    train_steps = [x[0] for x in train_items]
    train_losses = [x[1] for x in train_items]

    plt.plot(train_steps, train_losses, color='#8E004D', label="Train loss")
    if runner.dev_losses[0][0] != -1:
        dev_steps = [x[0] for x in runner.dev_losses]
        dev_losses = [x[1] for x in runner.dev_losses]
        plt.plot(dev_steps, dev_losses, color='#E20079', linestyle='--', label="Dev loss")
    # Axis labels and legend
    plt.ylabel("loss", fontsize='x-large')
    plt.xlabel("step", fontsize='x-large')
    plt.legend(loc='upper right', fontsize='x-large')

    plt.subplot(1, 2, 2)
    # Plot the validation accuracy curve
    if runner.dev_losses[0][0] != -1:
        plt.plot(dev_steps, runner.dev_scores,
                 color='#E20079', linestyle="--", label="Dev accuracy")
    else:
        plt.plot(list(range(len(runner.dev_scores))), runner.dev_scores,
                 color='#E20079', linestyle="--", label="Dev accuracy")
    # Axis labels and legend
    plt.ylabel("score", fontsize='x-large')
    plt.xlabel("step", fontsize='x-large')
    plt.legend(loc='lower right', fontsize='x-large')

    plt.savefig(fig_name)
    plt.show()


plot(runner, fig_name='cnn-loss4.pdf')

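4. Model evaluation

Evaluate the best model saved during training on the test data, and look at its accuracy and loss on the test set. The implementation is as follows:

# Load the best model
runner.load_model('best_model.pdparams')
# Evaluate the model
score, loss = runner.evaluate(test_loader)
print("[Test] accuracy/loss: {:.4f}/{:.4f}".format(score, loss))

Output: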

[Test] accuracy/loss: 0.6902/0.9364
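Which checkpoint best_model.pdparams holds can be read off the training log of the pretrained run. The sketch below replays the same rule RunnerV3 uses (save only when the validation score strictly improves) on the scores transcribed from that log. `dev_log` is a name introduced here for illustration.

```python
# (global_step, dev_score) pairs transcribed from the training log
dev_log = [(0, 0.00130), (300, 0.60550), (600, 0.63220), (900, 0.66010),
           (1200, 0.69780), (1500, 0.69600), (1800, 0.65900)]

best_score, best_step = 0, None
for step, score in dev_log:
    # Same condition as in RunnerV3.train: strictly better than the best so far
    if score > best_score:
        best_score, best_step = score, step

print(best_step, best_score)  # 1200 0.6978
```

So the test result above comes from the weights saved at step 1200, not from the final weights at the end of training.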

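5. Model prediction

Likewise, the saved model can be used to run prediction on data from the test set and inspect how it performs. The implementation is as follows:

# Take one batch of data from the test set
X, label = next(iter(test_loader))
logits = runner.predict(X)
# Multi-class task: apply softmax over the class dimension to get the predicted probabilities
pred = F.softmax(logits, dim=1)
# Take the class with the highest probability
pred_class = torch.argmax(pred[2]).cpu().numpy()
label = label[2].numpy()
# Print the true class and the predicted class
print("The true category is {} and the predicted category is {}".format(label, pred_class))
# Visualize the image
plt.figure(figsize=(2, 2))
imgs, labels = load_cifar10_batch(folder_path='./cifar-10-batches-py', mode='test')
plt.imshow(imgs[2].transpose(1, 2, 0))
plt.savefig('cnn-test-vis.pdf')
plt.show()

Output: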

The true category is 8 and the predicted category is 8
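Softmax has to be taken over the class dimension (dim=1 for a batch of logits of shape [N, classes]); calling F.softmax without dim relies on a deprecated implicit choice. The NumPy sketch below, with made-up logits and a hypothetical `softmax` helper, shows what the row-wise operation does and that it never changes which class is largest.

```python
import numpy as np


def softmax(logits, axis):
    # Subtract the row maximum first for numerical stability
    shifted = logits - logits.max(axis=axis, keepdims=True)
    exp = np.exp(shifted)
    return exp / exp.sum(axis=axis, keepdims=True)


# Made-up logits for 2 samples and 3 classes
logits = np.array([[2.0, 1.0, 0.1],
                   [0.5, 2.5, 0.3]])
probs = softmax(logits, axis=1)

print(probs.sum(axis=1))     # each row sums to 1
print(probs.argmax(axis=1))  # same classes as logits.argmax(axis=1)
```

Since softmax is monotonic within a row, taking argmax of the logits directly gives the same predicted class; the softmax is only needed when the probabilities themselves are of interest.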

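II. Experiment Q&A

What is a "pretrained model"? What is "transfer learning"?

Pretrained model: a model that has already been trained on a large benchmark dataset and is reused to solve a similar problem.

Transfer learning: reusing a model developed for one task as the starting point for developing a model for another task.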
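One common form of transfer learning freezes the pretrained layers and trains only a new task-specific head. This report does not do that (it fine-tunes every parameter), so the following is only a sketch of the idea. The tiny `backbone` is a hypothetical stand-in for a pretrained network so the example runs without downloading weights.

```python
import torch
import torch.nn as nn

# Hypothetical stand-in for a pretrained feature extractor
backbone = nn.Sequential(nn.Conv2d(3, 8, kernel_size=3, padding=1), nn.ReLU(),
                         nn.AdaptiveAvgPool2d(1), nn.Flatten())
# New classifier for the target task (10 classes)
head = nn.Linear(8, 10)
model = nn.Sequential(backbone, head)

# Freeze the backbone so only the head receives gradient updates
for p in backbone.parameters():
    p.requires_grad = False

trainable = [name for name, p in model.named_parameters() if p.requires_grad]
optimizer = torch.optim.Adam([p for p in model.parameters() if p.requires_grad], lr=0.001)

# One training step on random data
x, y = torch.randn(4, 3, 32, 32), torch.randint(0, 10, (4,))
loss = nn.functional.cross_entropy(model(x), y)
loss.backward()
optimizer.step()

print(trainable)  # ['1.weight', '1.bias']
```

Freezing trades some accuracy potential for speed and fewer trainable parameters; fine-tuning all layers, as in this report, lets the pretrained features adapt to the new data.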

Compare the results of using a pretrained model with those of not using one.

# Without the pretrained model
resnet18_model = resnet18(pretrained=False)

Output:

[Train] epoch: 0/3, step: 0/1875, loss: 6.94788

[Evaluate] dev_score: 0.02140, dev_loss: 6.69647

best accuracy performence has been updated: 0.00000 --> 0.02140

[Train] epoch: 0/3, step: 300/1875, loss: 1.54176

[Evaluate] dev_score: 0.45960, dev_loss: 1.52372

best accuracy performence has been updated: 0.02140 --> 0.45960

[Train] epoch: 0/3, step: 600/1875, loss: 1.36936

[Evaluate] dev_score: 0.48340, dev_loss: 1.51000

best accuracy performence has been updated: 0.45960 --> 0.48340

[Train] epoch: 1/3, step: 900/1875, loss: 1.28010

[Evaluate] dev_score: 0.52840, dev_loss: 1.36051

best accuracy performence has been updated: 0.48340 --> 0.52840

[Train] epoch: 1/3, step: 1200/1875, loss: 1.18633

[Evaluate] dev_score: 0.57390, dev_loss: 1.23637

best accuracy performence has been updated: 0.52840 --> 0.57390

[Train] epoch: 2/3, step: 1500/1875, loss: 1.37826

[Evaluate] dev_score: 0.60410, dev_loss: 1.14194

best accuracy performence has been updated: 0.57390 --> 0.60410

[Train] epoch: 2/3, step: 1800/1875, loss: 1.26612

[Evaluate] dev_score: 0.58430, dev_loss: 1.19695

[Train] Training done!

Elapsed time (s): 1255.6881118

[Test] accuracy/loss: 0.6089/1.1440

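Running the code produces the figure below:

Without the pretrained model the run took slightly less time (1255.7 s versus 1257.6 s), a difference of about 2 s out of roughly 1,256 s that may simply be run-to-run variation. The accuracy without pretraining is clearly lower (test accuracy 0.6089 versus 0.6902) and the loss is higher (test loss 1.1440 versus 0.9364). My guess for the small time difference is that the pretrained model first has to load and process its pretrained parameters.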

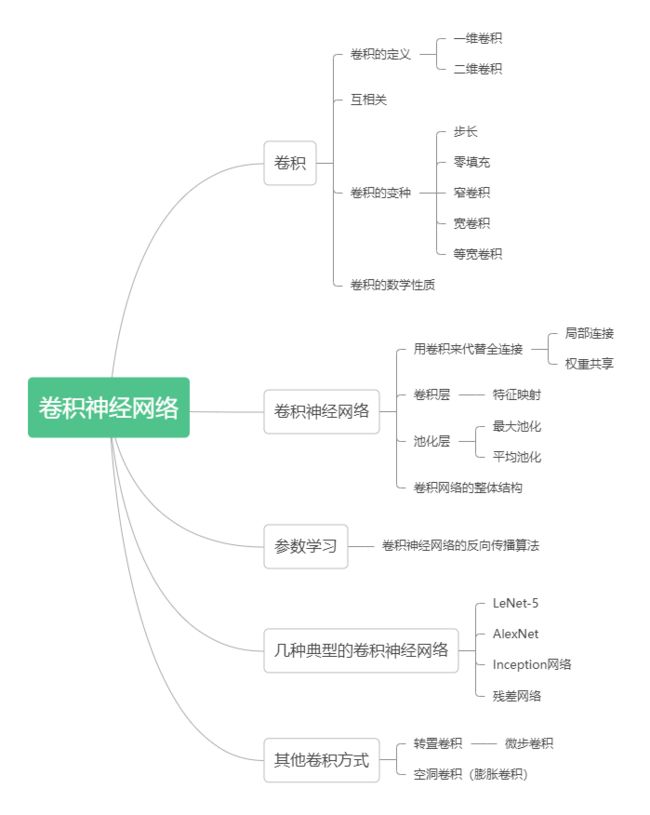
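The starting losses in the two logs can be sanity-checked with a little arithmetic. A classifier that spreads its probability uniformly over K classes has a cross-entropy of ln K, and torchvision's resnet18 outputs K = 1000 classes unless its head is changed.

```python
import math

# Cross-entropy of a uniform prediction over K classes is ln(K)
print(round(math.log(1000), 4))  # 6.9078, the 1000-way head used in this report
print(round(math.log(10), 4))    # 2.3026, what a 10-way head would start near
```

The run without pretraining starts at a loss of 6.94788, close to ln 1000 ≈ 6.91, which is what a randomly initialised 1000-way classifier should give. The pretrained run starts higher, at 12.96724, presumably because the ImageNet head is confidently predicting classes unrelated to the CIFAR-10 labels before fine-tuning corrects it.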

Summarize the content on convolutional neural networks.