Emergent Simulation Capabilities: How Feasible Is It to Build a World Simulator with OpenAI's Video Generation Model?

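Q: What should ordinary people do now that Sora is out?

"Sora's arrival brings both opportunities and challenges. Ordinary people can focus on creativity and technology and explore new ways to express their ideas: making high-quality videos, balancing work and life, and embracing the changes in the industry. Dreams become reality. #SoraRevolution"

OpenAI's video generation model flooded my feeds first thing this morning, and social media quickly filled with interpretations. We also organized a discussion in the Mixlab community. The point most worth attention is that the large model shows a newly emergent capability: simulating the world becomes possible.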

What follows is an annotated reading of OpenAI's research post:

https://openai.com/research/video-generation-models-as-world-simulators

We explore large-scale training of generative models on video data. Specifically, we train text-conditional diffusion models jointly on videos and images of variable durations, resolutions and aspect ratios. We leverage a transformer architecture that operates on spacetime patches of video and image latent codes. Our largest model, Sora, is capable of generating a minute of high fidelity video. Our results suggest that scaling video generation models is a promising path towards building general purpose simulators of the physical world.

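In short, OpenAI explores training generative models on video data at scale. Text-conditional diffusion models are trained jointly on videos and images of variable duration, resolution and aspect ratio, using a Transformer that operates on spacetime patches of video and image latent codes. The largest model, Sora, can generate [one minute of high-fidelity] video. The results suggest that scaling video generation models is a promising path towards general-purpose simulators of the physical world.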

February 15, 2024

This technical report focuses on (1) our method for turning visual data of all types into a unified representation that enables large-scale training of generative models

(1) A method for turning all types of visual data into a unified representation, so that generative models can be trained at large scale.

and (2) qualitative evaluation of Sora’s capabilities and limitations. Model and implementation details are not included in this report.

(2) A qualitative evaluation of Sora's capabilities and limitations. Model and implementation details are not included in the report.

Much prior work has studied generative modeling of video data using a variety of methods, including recurrent networks [1, 2, 3], generative adversarial networks [4, 5, 6, 7], autoregressive transformers [8, 9], and diffusion models [10, 11, 12]. These works often focus on a narrow category of visual data, on shorter videos, or on videos of a fixed size. Sora is a generalist model of visual data: it can generate videos and images spanning diverse durations, aspect ratios and resolutions, up to a full minute of high definition video.

Earlier work on generative video modeling used a range of methods, including recurrent networks, generative adversarial networks, autoregressive Transformers and diffusion models.

Those works usually targeted a narrow category of visual data, shorter videos, or videos of a fixed size.

Sora, by contrast, adapts broadly to visual data: it generates videos and images across different durations, aspect ratios and resolutions, with high-definition video of up to one minute.

Turning visual data into patches


We take inspiration from large language models which acquire generalist capabilities by training on internet-scale data [13, 14]. The success of the LLM paradigm is enabled in part by the use of tokens that elegantly unify diverse modalities of text: code, math and various natural languages. In this work, we consider how generative models of visual data can inherit such benefits. Whereas LLMs have text tokens, Sora has visual patches. Patches have previously been shown to be an effective representation for models of visual data [15, 16, 17, 18]. We find that patches are a highly-scalable and effective representation for training generative models on diverse types of videos and images.

The inspiration comes from large language models (LLMs), which gain general-purpose capabilities by training on internet-scale data. Part of the LLM paradigm's success comes from tokens, which elegantly unify diverse kinds of text such as code, math and many natural languages. The report asks how generative models of visual data can inherit the same benefits.

Where LLMs use text tokens, Sora uses visual patches.

Earlier research showed that patches are an effective representation for models of visual data. OpenAI finds that patches are also a highly scalable and effective representation for training generative models on many types of videos and images.

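What are visual patches?

A paper from Google introduced ViT, the Vision Transformer architecture:

"An Image Is Worth 16x16 Words: Transformers for Image Recognition at Scale"

Although the Transformer architecture has become the standard for natural language processing tasks, its use in computer vision was still limited. In vision tasks, attention was either combined with convolutional networks or used to replace some components of a convolutional network while keeping the overall structure unchanged. The paper shows that this reliance on CNNs is not necessary: a Transformer applied directly to sequences of image patches performs very well on image classification. After pre-training on large amounts of data and evaluation on image recognition benchmarks (ImageNet, CIFAR-100, VTAB and others), the Vision Transformer (ViT) achieves excellent results compared with state-of-the-art convolutional networks while needing fewer computational resources to train.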
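To make the "16x16 words" idea concrete, the minimal sketch below counts the tokens a ViT-style model sees for one image. The function name `vit_token_shape` is hypothetical, and the 224x224 RGB input is only a commonly used example size, not a figure taken from this article.

```python
def vit_token_shape(height, width, patch=16, channels=3):
    """Return (number of patches, values per flattened patch) for one image.

    Assumes non-overlapping square patches that tile the image exactly.
    """
    if height % patch or width % patch:
        raise ValueError("image size must be a multiple of the patch size")
    num_patches = (height // patch) * (width // patch)
    patch_dim = patch * patch * channels
    return num_patches, patch_dim


# A 224x224 RGB image becomes a sequence of 196 "words" of 768 values each.
print(vit_token_shape(224, 224))
```

Each flattened patch plays the role that a word token plays in a language model, which is the analogy the Sora report builds on.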

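At a high level, we turn videos into patches by first compressing videos into a lower-dimensional latent space [19], and subsequently decomposing the representation into spacetime patches.

Turning a video into patches therefore takes two steps: compress the video into a lower-dimensional latent space, then split that representation into spacetime patches.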

Video compression network

We train a network that reduces the dimensionality of visual data [20]. This network takes raw video as input and outputs a latent representation that is compressed both temporally and spatially. Sora is trained on and subsequently generates videos within this compressed latent space. We also train a corresponding decoder model that maps generated latents back to pixel space.

OpenAI trains a network that reduces the dimensionality of visual data. It takes raw video as input and outputs a latent representation compressed in both time and space.

Sora is trained in this compressed latent space and generates videos there.

A matching decoder model is also trained to map generated latents back to pixel space.
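The report gives no details of the compression network, so the following is only a toy illustration of what "compressed both temporally and spatially" means for shapes. It assumes average pooling as a stand-in for the learned encoder and nearest-neighbour repetition as a stand-in for the learned decoder. The names `compress` and `decompress` and the factors used are hypothetical.

```python
def compress(video, t_factor, s_factor):
    """Average-pool a T x H x W video (nested lists) over time and space."""
    T, H, W = len(video), len(video[0]), len(video[0][0])
    if T % t_factor or H % s_factor or W % s_factor:
        raise ValueError("video shape must be divisible by the factors")
    latent = []
    for t0 in range(0, T, t_factor):
        plane = []
        for y0 in range(0, H, s_factor):
            row = []
            for x0 in range(0, W, s_factor):
                block = [video[t][y][x]
                         for t in range(t0, t0 + t_factor)
                         for y in range(y0, y0 + s_factor)
                         for x in range(x0, x0 + s_factor)]
                row.append(sum(block) / len(block))
            plane.append(row)
        latent.append(plane)
    return latent


def decompress(latent, t_factor, s_factor):
    """Repeat each latent value to get back a pixel-space-sized video."""
    video = []
    for plane in latent:
        frame = []
        for row in plane:
            wide = [v for v in row for _ in range(s_factor)]
            frame.extend(list(wide) for _ in range(s_factor))
        video.extend([list(r) for r in frame] for _ in range(t_factor))
    return video


video = [[[float(t + y + x) for x in range(4)] for y in range(4)] for t in range(4)]
latent = compress(video, t_factor=2, s_factor=2)
restored = decompress(latent, t_factor=2, s_factor=2)
print(len(latent), len(latent[0]), len(latent[0][0]))        # latent shape
print(len(restored), len(restored[0]), len(restored[0][0]))  # restored shape
```

A learned encoder and decoder would preserve far more detail than pooling does. The sketch is only meant to show the data flow: pixels to a smaller latent, generation inside the latent, then back to pixels.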

Spacetime Latent Patches

Given a compressed input video, we extract a sequence of spacetime patches which act as transformer tokens. This scheme works for images too since images are just videos with a single frame. Our patch-based representation enables Sora to train on videos and images of variable resolutions, durations and aspect ratios. At inference time, we can control the size of generated videos by arranging randomly-initialized patches in an appropriately-sized grid.

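Given a compressed input video, a sequence of spacetime patches is extracted, and these patches serve as the Transformer's tokens.

The same scheme works for images, because an image is just a video with a single frame.

This patch-based representation lets Sora train on videos and images of variable resolution, duration and aspect ratio.

At inference time, the size of the generated video is controlled by arranging randomly initialized patches in a grid of the appropriate size.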
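One way to picture the extraction of spacetime patches is the sketch below. It assumes a latent stored as nested lists of shape T x H x W with one value per position and non-overlapping patches. The function name `spacetime_patches` and the patch sizes are hypothetical, since the report does not state Sora's actual patch dimensions.

```python
def spacetime_patches(latent, pt, ph, pw):
    """Split a T x H x W latent into flattened patches of size pt x ph x pw.

    Returns a list of tokens ordered by time, then row, then column.
    """
    T, H, W = len(latent), len(latent[0]), len(latent[0][0])
    if T % pt or H % ph or W % pw:
        raise ValueError("latent shape must be divisible by the patch shape")
    tokens = []
    for t0 in range(0, T, pt):
        for y0 in range(0, H, ph):
            for x0 in range(0, W, pw):
                tokens.append([latent[t][y][x]
                               for t in range(t0, t0 + pt)
                               for y in range(y0, y0 + ph)
                               for x in range(x0, x0 + pw)])
    return tokens


# Label each latent position as t*100 + y*10 + x so patches are easy to read.
latent = [[[t * 100 + y * 10 + x for x in range(4)] for y in range(4)]
          for t in range(2)]
tokens = spacetime_patches(latent, pt=1, ph=2, pw=2)
print(len(tokens), tokens[0])

# An image is handled as a video with a single frame.
image_tokens = spacetime_patches([latent[0]], pt=1, ph=2, pw=2)
print(len(image_tokens))
```

Because the token sequence simply gets longer or shorter with the input, the same Transformer can consume clips of different durations, resolutions and aspect ratios.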

Scaling transformers for video generation

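Sora is a diffusion model [21, 22, 23, 24, 25]; given input noisy patches (and conditioning information like text prompts), it’s trained to predict the original “clean” patches. Importantly, Sora is a diffusion transformer [26]. Transformers have demonstrated remarkable scaling properties across a variety of domains, including language modeling [13, 14], computer vision [15, 16, 17, 18], and image generation.

Sora is a diffusion model: given noisy patches plus conditioning information such as a text prompt, it recovers the clean patches through a denoising process. It is also a diffusion transformer, an architecture family that has scaled well in language modeling, computer vision and image generation.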
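The training objective described here can be sketched as follows. The report does not give Sora's noise schedule or loss, so this assumes a common DDPM-style mixing rule (signal scaled by the square root of `alpha_bar`, noise by the square root of `1 - alpha_bar`) and a mean-squared-error loss against the clean patch. All function names and the example prompt are hypothetical.

```python
import math
import random


def add_noise(clean_patch, alpha_bar, rng):
    """Mix a clean patch with Gaussian noise.

    alpha_bar = 1.0 keeps the patch clean, alpha_bar = 0.0 gives pure noise.
    """
    signal = math.sqrt(alpha_bar)
    sigma = math.sqrt(1.0 - alpha_bar)
    return [signal * v + sigma * rng.gauss(0.0, 1.0) for v in clean_patch]


def denoising_loss(denoiser, clean_patch, alpha_bar, prompt, rng):
    """Mean squared error between the predicted and the true clean patch."""
    noisy = add_noise(clean_patch, alpha_bar, rng)
    predicted = denoiser(noisy, alpha_bar, prompt)
    return sum((p - c) ** 2 for p, c in zip(predicted, clean_patch)) / len(clean_patch)


def identity_denoiser(noisy_patch, alpha_bar, prompt):
    """Untrained stand-in for the network: returns its input unchanged."""
    return list(noisy_patch)


rng = random.Random(0)
patch = [0.5, -1.0, 0.25, 2.0]
for alpha_bar in (1.0, 0.5, 0.1):
    loss = denoising_loss(identity_denoiser, patch, alpha_bar, "a corgi on a beach", rng)
    print(alpha_bar, round(loss, 3))
```

Training would replace `identity_denoiser` with a Transformer over the patch sequence and minimise this loss over many videos, noise levels and prompts.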

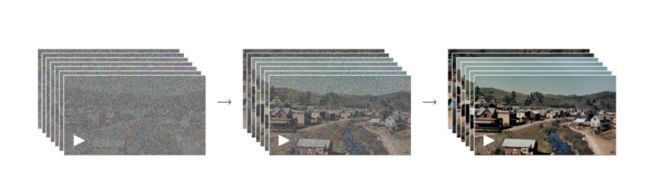

In this work, we find that diffusion transformers scale effectively as video models as well. Below, we show a comparison of video samples with fixed seeds and inputs as training progresses. Sample quality improves markedly as training compute increases.

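The report finds that diffusion transformers also scale effectively as video models. It compares video samples generated with fixed seeds and inputs at different points in training: sample quality improves markedly as training compute increases.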

Variable durations, resolutions, aspect ratios

Past approaches to image and video generation typically resize, crop or trim videos to a standard size – e.g., 4 second videos at 256x256 resolution. We find that instead training on data at its native size provides several benefits.

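Earlier image and video generation methods usually resize, crop or trim videos to a standard size, for example 4-second clips at 256x256 resolution. OpenAI finds that training on data at its native size instead brings several benefits.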

Sampling flexibility

Sora can sample widescreen 1920x1080p videos, vertical 1080x1920 videos and everything in between. This lets Sora create content for different devices directly at their native aspect ratios. It also lets us quickly prototype content at lower sizes before generating at full resolution, all with the same model.

Sora can sample widescreen 1920x1080 videos, vertical 1080x1920 videos and any size in between.

That lets Sora create content for different devices directly at their native aspect ratios.

It also makes it possible to prototype quickly at a smaller size before generating at full resolution, all with one and the same model.
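The arithmetic below illustrates why a single patch-based model can serve all of these sizes: a different output size only changes the shape of the token grid. The spatial and temporal compression factors and the patch size are invented for illustration and are not published values. `ceil_div`, `token_grid_shape` and `token_count` are hypothetical helper names.

```python
def ceil_div(a, b):
    """Integer division rounded up."""
    return -(-a // b)


def token_grid_shape(width, height, frames, spatial_down=8, temporal_down=4, patch=2):
    """Return (time steps, rows, cols) of the token grid for a target video size.

    spatial_down, temporal_down and patch are assumed values, not Sora's.
    """
    step = spatial_down * patch
    return (ceil_div(frames, temporal_down), ceil_div(height, step), ceil_div(width, step))


def token_count(shape):
    """Total number of tokens in a (time, rows, cols) grid."""
    steps, rows, cols = shape
    return steps * rows * cols


widescreen = token_grid_shape(1920, 1080, frames=120)
vertical = token_grid_shape(1080, 1920, frames=120)
draft = token_grid_shape(960, 540, frames=120)
for name, shape in (("widescreen", widescreen), ("vertical", vertical), ("draft", draft)):
    print(name, shape, token_count(shape))
```

Under these assumed factors the widescreen and vertical grids are transposes of each other with the same token count, while the half-size draft needs a quarter of the tokens. That is one plausible reason low-resolution prototyping is cheap.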

Improved framing and composition

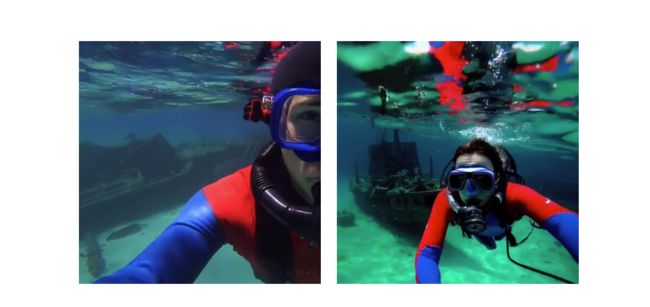

We empirically find that training on videos at their native aspect ratios improves composition and framing. We compare Sora against a version of our model that crops all training videos to be square, which is common practice when training generative models. The model trained on square crops (left) sometimes generates videos where the subject is only partially in view. In comparison, videos from Sora (right) have improved framing.

Training on videos at their native aspect ratios is found to improve composition and framing.

Sora is compared with a version of the model trained on videos that were all cropped to squares. The model trained on square crops (left) sometimes generates videos in which the subject is only partly visible. Videos from Sora (right) are better framed.

Language understanding

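Training text-to-video generation systems requires a large amount of videos with corresponding text captions. We apply the re-captioning technique introduced in DALL·E 3 [30] to videos. We first train a highly descriptive captioner model and then use it to produce text captions for all videos in our training set. We find that training on highly descriptive video captions improves text fidelity as well as the overall quality of videos.

Training a text-to-video system requires a large number of videos with matching text captions. OpenAI applies the re-captioning technique introduced with DALL·E 3 to videos.

A highly descriptive captioner model is trained first and then used to write text captions for every video in the training set.

Training on these highly descriptive captions improves how faithfully videos follow the text as well as their overall quality.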

Similar to DALL·E 3, we also leverage GPT to turn short user prompts into longer detailed captions that are sent to the video model. This enables Sora to generate high quality videos that accurately follow user prompts.

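As with DALL·E 3, GPT is used to turn short user prompts into longer, more detailed captions, which are then sent to the video model. This lets Sora generate high-quality videos that follow the user's prompt accurately.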

Prompting with images and videos

All of the results above and in our landing page show text-to-video samples. But Sora can also be prompted with other inputs, such as pre-existing images or video. This capability enables Sora to perform a wide range of image and video editing tasks—creating perfectly looping video, animating static images, extending videos forwards or backwards in time, etc.

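Sora can also be prompted with other inputs, such as existing images or videos. This lets it perform a wide range of image and video editing tasks, for example creating perfectly looping video, animating static images, and extending videos forwards or backwards in time.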

Animating DALL·E images

Sora is capable of generating videos provided an image and prompt as input. Below we show example videos generated based on DALL·E 2 [31] and DALL·E 3 [30] images.

Sora can generate a video from an image plus a prompt. The report shows example videos generated from DALL·E 2 and DALL·E 3 images.

Extending generated videos

Sora is also capable of extending videos, either forward or backward in time. Below are four videos that were all extended backward in time starting from a segment of a generated video. As a result, each of the four videos starts different from the others, yet all four videos lead to the same ending.

Sora can also extend videos, either forward or backward in time. The report shows four videos that were all extended backward in time from the same segment of a generated video, so each one begins differently but all four lead to the same ending.

We can use this method to extend a video both forward and backward to produce a seamless infinite loop.

The same method can extend a video both forward and backward to produce a seamless infinite loop.

Video-to-video editing

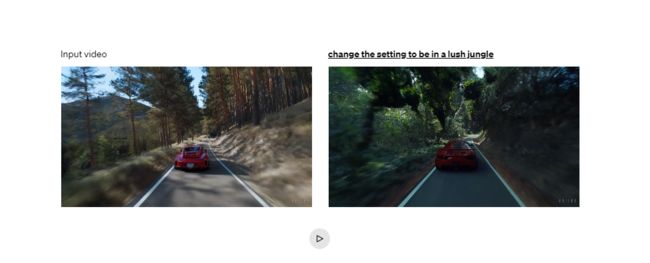

Diffusion models have enabled a plethora of methods for editing images and videos from text prompts. Below we apply one of these methods, SDEdit [32], to Sora. This technique enables Sora to transform the styles and environments of input videos zero-shot.

Diffusion models have made possible a large number of methods for editing images and videos from text prompts.

One of these methods, SDEdit, is applied to Sora. It lets Sora change the style and environment of an input video zero-shot.
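The idea behind SDEdit is to add noise to the input up to an intermediate noise level and then denoise from there under the new prompt, so that coarse structure survives while style can change. The sketch below shows that control flow only. It assumes a DDPM-style schedule, and `toy_denoise_step` is a hypothetical stand-in for the real model. How OpenAI actually wires SDEdit into Sora is not described in the report.

```python
import math
import random


def sdedit(latent, prompt, denoise_step, alpha_bars, strength, rng):
    """Noise `latent` up to an intermediate step, then denoise it under `prompt`.

    alpha_bars[t] is the fraction of signal kept at step t (alpha_bars[0] == 1.0).
    strength in [0, 1] picks the starting step: 0 returns the input unchanged,
    1 starts from the noisiest step.
    """
    start = round(strength * (len(alpha_bars) - 1))
    if start == 0:
        return list(latent)
    keep = alpha_bars[start]
    x = [math.sqrt(keep) * v + math.sqrt(1.0 - keep) * rng.gauss(0.0, 1.0)
         for v in latent]
    for t in range(start, 0, -1):  # walk back from step `start` to step 1
        x = denoise_step(x, t, prompt)
    return x


def toy_denoise_step(x, t, prompt):
    """Hypothetical model stand-in: pull values halfway to a prompt-dependent target."""
    target = 1.0 if "snow" in prompt else 0.0
    return [0.5 * (v + target) for v in x]


schedule = [1.0, 0.9, 0.6, 0.3, 0.05]
source = [0.2, -0.4, 0.7]
edited = sdedit(source, "the same street in snow", toy_denoise_step,
                schedule, strength=0.5, rng=random.Random(0))
print(edited)
```

The `strength` parameter captures the trade-off: a low value stays close to the source video, a high value gives the prompt more freedom to change it.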

Connecting videos

We can also use Sora to gradually interpolate between two input videos, creating seamless transitions between videos with entirely different subjects and scene compositions. In the examples below, the videos in the center interpolate between the corresponding videos on the left and right.

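Sora can also interpolate gradually between two input videos, creating seamless transitions between videos with completely different subjects and scene compositions. In the report's examples, the video in the center interpolates between the corresponding videos on the left and right.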

Image generation capabilities

Sora is also capable of generating images. We do this by arranging patches of Gaussian noise in a spatial grid with a temporal extent of one frame. The model can generate images of variable sizes—up to 2048x2048 resolution.

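Sora can also generate images. This is done by arranging patches of Gaussian noise in a spatial grid whose temporal extent is a single frame. The model can generate images of variable sizes, up to 2048x2048 resolution.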
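A minimal sketch of such a starting grid is shown below. It assumes each patch is a flat list of Gaussian samples. The function name `noise_patch_grid`, the grid sizes and the patch dimension are all hypothetical, chosen only to show that an image is the one-frame case of a video.

```python
import random


def noise_patch_grid(frames, rows, cols, patch_dim, seed=0):
    """Build a frames x rows x cols grid of Gaussian-noise patches."""
    rng = random.Random(seed)
    return [[[[rng.gauss(0.0, 1.0) for _ in range(patch_dim)]
              for _ in range(cols)]
             for _ in range(rows)]
            for _ in range(frames)]


# An image starts from a grid with a temporal extent of one frame...
image_grid = noise_patch_grid(frames=1, rows=4, cols=6, patch_dim=8)
# ...while a video uses the same spatial grid repeated over several time steps.
video_grid = noise_patch_grid(frames=5, rows=4, cols=6, patch_dim=8)
print(len(image_grid), len(image_grid[0]), len(image_grid[0][0]))
print(len(video_grid), len(video_grid[0]), len(video_grid[0][0]))
```

The denoising model would then refine every patch in the grid, and the decoder would map the result back to pixels.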

Emerging simulation capabilities

We find that video models exhibit a number of interesting emergent capabilities when trained at scale. These capabilities enable Sora to simulate some aspects of people, animals and environments from the physical world. These properties emerge without any explicit inductive biases for 3D, objects, etc.—they are purely phenomena of scale.

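When trained at scale, video models show a number of interesting emergent capabilities. These let Sora simulate some aspects of people, animals and environments from the physical world. The properties appear without any explicit inductive biases for 3D, objects and so on; they are purely phenomena of scale.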

3D consistency. Sora can generate videos with dynamic camera motion. As the camera shifts and rotates, people and scene elements move consistently through three-dimensional space.

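3D consistency. Sora can generate videos with dynamic camera motion. As the camera moves and rotates, people and scene elements move consistently through three-dimensional space.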

Long-range coherence and object permanence. A significant challenge for video generation systems has been maintaining temporal consistency when sampling long videos. We find that Sora is often, though not always, able to effectively model both short- and long-range dependencies. For example, our model can persist people, animals and objects even when they are occluded or leave the frame. Likewise, it can generate multiple shots of the same character in a single sample, maintaining their appearance throughout the video.

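Long-range coherence and object permanence. Keeping long sampled videos temporally consistent has been a major challenge for video generation systems. Sora is often, though not always, able to model both short- and long-range dependencies effectively.

For example, Sora can keep people, animals and objects in existence while they are occluded or out of frame. It can likewise generate several shots of the same character within a single sample and keep that character's appearance consistent throughout the video.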

Interacting with the world. Sora can sometimes simulate actions that affect the state of the world in simple ways. For example, a painter can leave new strokes along a canvas that persist over time, or a man can eat a burger and leave bite marks.

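Interacting with the world. Sora can sometimes simulate actions that affect the state of the world in simple ways. For example, a painter can leave new strokes on a canvas that persist over time, or a man can eat a burger and leave bite marks.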

Simulating digital worlds. Sora is also able to simulate artificial processes–one example is video games. Sora can simultaneously control the player in Minecraft with a basic policy while also rendering the world and its dynamics in high fidelity. These capabilities can be elicited zero-shot by prompting Sora with captions mentioning “Minecraft.”

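Simulating digital worlds. Sora can also simulate artificial processes, video games being one example. It can control the player in Minecraft with a basic policy while rendering the world and its dynamics in high fidelity. These capabilities can be elicited zero-shot by prompting Sora with captions that mention "Minecraft".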

These capabilities suggest that continued scaling of video models is a promising path towards the development of highly-capable simulators of the physical and digital world, and the objects, animals and people that live within them.

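These capabilities suggest that continuing to scale video models is a promising path towards highly capable simulators of the physical and digital worlds, in other words, world simulators.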

Discussion

Sora currently exhibits numerous limitations as a simulator. For example, it does not accurately model the physics of many basic interactions, like glass shattering. Other interactions, like eating food, do not always yield correct changes in object state. We enumerate other common failure modes of the model—such as incoherencies that develop in long duration samples or spontaneous appearances of objects—in our landing page.

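As a simulator, Sora currently still has many shortcomings.

For example, it cannot accurately model the physics of many basic interactions, such as glass shattering.

Other interactions, such as eating food, do not always change the object's state correctly.

Other common failure modes, such as incoherence that develops in long samples or objects that appear spontaneously, are listed on OpenAI's landing page.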

We believe the capabilities Sora has today demonstrate that continued scaling of video models is a promising path towards the development of capable simulators of the physical and digital world, and the objects, animals and people that live within them.

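OpenAI believes that the capabilities Sora shows today indicate that continued scaling of video models is a promising path towards simulators of the physical and digital worlds (world simulators).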

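The above is an annotated reading of OpenAI's original post, which mentions world simulators several times. Judging from the newly emergent capabilities, one guess is that the training data may have been obtained with UE5 + NeRF + Metahumans. This is only speculation, since the report includes no model or implementation details.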

https://t.zsxq.com/17iGhZWji

This note has been archived in the AIGC knowledge base.