PyTorch (5): LeNet, ResNet, RNN, LSTM Code

1. LeNet5 and ResNet18 in Practice

Part 1: LeNet5 code:

import torch
from torch import nn
from torch.nn import functional as F


class Lenet5(nn.Module):
    """LeNet5-style network for 3x32x32 inputs (e.g. CIFAR-10)."""

    def __init__(self):
        super(Lenet5, self).__init__()
        # conv part: [b, 3, 32, 32] => [b, 32, 5, 5]
        self.conv_unit = nn.Sequential(
            nn.Conv2d(3, 16, kernel_size=5, stride=1, padding=0),   # => [b, 16, 28, 28]
            nn.MaxPool2d(kernel_size=2, stride=2, padding=0),       # => [b, 16, 14, 14]
            nn.Conv2d(16, 32, kernel_size=5, stride=1, padding=0),  # => [b, 32, 10, 10]
            nn.MaxPool2d(kernel_size=2, stride=2, padding=0),       # => [b, 32, 5, 5]
        )
        # fully connected part: [b, 32*5*5] => [b, 10]
        self.fc_unit = nn.Sequential(
            nn.Linear(32 * 5 * 5, 32),
            nn.ReLU(),
            nn.Linear(32, 10)
        )

    def forward(self, x):
        batchsz = x.size(0)
        out = self.conv_unit(x)
        out = out.view(batchsz, -1)   # flatten to [b, 32*5*5]
        out = self.fc_unit(out)       # logits: [b, 10]
        return out


def main():
    net = Lenet5()
    tmp = torch.randn(2, 3, 32, 32)
    out = net(tmp)
    print('lenet out:', out.shape)   # torch.Size([2, 10])


if __name__ == '__main__':
    main()

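Code steps

- 1. Build a convolutional Sequential:

The first conv layer maps 3 channels to 16 with a 5x5 kernel, stride 1 and no padding; a 2x2 max pooling with stride 2 then halves the feature map's height and width.

The second conv layer maps 16 channels to 32, with the same kernel, stride, padding and pooling settings as the first.

- 2. Flatten the feature map to [b, -1], here [b, 32*5*5].

- 3. Pass the result through two fully connected (linear) layers to get 10 outputs: [b, 32*5*5] => [b, 32] => [b, 10].

- 4. Define the forward function.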
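The shape bookkeeping behind the flatten step can be checked by hand with the output-size formula that Conv2d and MaxPool2d use (dilation 1, default floor rounding): out = floor((in + 2*padding - kernel) / stride) + 1. Below is a minimal plain-Python sketch; conv_out and lenet5_shapes are hypothetical helpers for illustration, not PyTorch functions.

```python
def conv_out(size, kernel, stride=1, padding=0):
    """Output height/width of Conv2d or MaxPool2d (dilation=1, floor rounding)."""
    return (size + 2 * padding - kernel) // stride + 1


def lenet5_shapes(h=32):
    """Trace the spatial size through Lenet5's conv_unit."""
    sizes = [h]
    h = conv_out(h, kernel=5)            # Conv2d(3, 16, 5):  32 -> 28
    sizes.append(h)
    h = conv_out(h, kernel=2, stride=2)  # MaxPool2d(2, 2):   28 -> 14
    sizes.append(h)
    h = conv_out(h, kernel=5)            # Conv2d(16, 32, 5): 14 -> 10
    sizes.append(h)
    h = conv_out(h, kernel=2, stride=2)  # MaxPool2d(2, 2):   10 -> 5
    sizes.append(h)
    return sizes


sizes = lenet5_shapes(32)
flat_dim = 32 * sizes[-1] * sizes[-1]    # 32 channels * 5 * 5 = in_features of the first Linear
print(sizes, flat_dim)                   # [32, 28, 14, 10, 5] 800
```

This is why the first linear layer is declared as nn.Linear(32*5*5, 32): a different input resolution would change the last size and therefore this number.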

Part 2: ResNet18 code:

import torch
from torch import nn
from torch.nn import functional as F


class ResBlk(nn.Module):
    """Basic residual block: two 3x3 convs with batch norm, plus a shortcut."""

    def __init__(self, ch_in, ch_out, stride=1):
        super(ResBlk, self).__init__()
        self.conv1 = nn.Conv2d(ch_in, ch_out, kernel_size=3, stride=stride, padding=1)
        self.bn1 = nn.BatchNorm2d(ch_out)
        self.conv2 = nn.Conv2d(ch_out, ch_out, kernel_size=3, stride=1, padding=1)
        self.bn2 = nn.BatchNorm2d(ch_out)

        # identity shortcut by default
        self.extra = nn.Sequential()
        # project the shortcut with a 1x1 conv whenever its shape would differ from the
        # main path: a different channel count OR a stride that shrinks H and W
        if ch_in != ch_out or stride != 1:
            self.extra = nn.Sequential(
                nn.Conv2d(ch_in, ch_out, kernel_size=1, stride=stride),
                nn.BatchNorm2d(ch_out)
            )

    def forward(self, x):
        out = F.relu(self.bn1(self.conv1(x)))
        out = self.bn2(self.conv2(out))
        # shortcut: [b, ch_in, h, w] => [b, ch_out, h', w'], same shape as out
        out = out + self.extra(x)
        out = F.relu(out)
        return out


class ResNet18(nn.Module):

    def __init__(self):
        super(ResNet18, self).__init__()
        # [b, 3, 32, 32] => [b, 64, 10, 10]
        self.conv_first = nn.Sequential(
            nn.Conv2d(3, 64, kernel_size=3, stride=3, padding=0),
            nn.BatchNorm2d(64)
        )
        # each block halves H and W (rounded up): 10 -> 5 -> 3 -> 2 -> 1
        self.BLK1 = ResBlk(64, 128, stride=2)
        self.BLK2 = ResBlk(128, 256, stride=2)
        self.BLK3 = ResBlk(256, 512, stride=2)
        self.BLK4 = ResBlk(512, 512, stride=2)
        self.outlayer = nn.Linear(512 * 1 * 1, 10)

    def forward(self, x):
        out = F.relu(self.conv_first(x))
        out = self.BLK1(out)
        out = self.BLK2(out)
        out = self.BLK3(out)
        out = self.BLK4(out)
        # [b, 512, h, w] => [b, 512, 1, 1] whatever h and w are
        out = F.adaptive_avg_pool2d(out, [1, 1])
        out = out.view(x.size(0), -1)
        out = self.outlayer(out)
        return out


def main():
    blk = ResBlk(64, 128, stride=4)
    tmp = torch.randn(2, 64, 32, 32)
    out = blk(tmp)
    print('block:', out.shape)    # torch.Size([2, 128, 8, 8])

    x = torch.randn(2, 3, 32, 32)
    model = ResNet18()
    out = model(x)
    print('resnet:', out.shape)   # torch.Size([2, 10])


if __name__ == '__main__':
    main()

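Code flow

First, build a residual block (ResBlk):

- 1. Each block contains two 3x3 convolution layers, each followed by batch norm.

- 2. Add a shortcut connection that adds the block's input to the output of the two conv layers.

- 3. If the number of input channels differs from the number of output channels, the shortcut also needs a 1x1 convolution (plus batch norm) so that its channel count matches the output of the two conv layers; only then can the two be added. The same applies when the stride is not 1, because the main path then shrinks height and width while an identity shortcut does not.

- 4. Define the forward function.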
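Why the shortcut needs a projection can be checked with the same output-size arithmetic as the convolutions. This plain-Python sketch (resblk_shapes and its check_stride flag are hypothetical, for illustration only) compares the main-path shape with the shortcut shape. With a channels-only check, a block such as ResBlk(512, 512, stride=2) on a 2x2 input gives a [512, 1, 1] main path but a [512, 2, 2] identity shortcut; PyTorch does not raise an error there but silently broadcasts the sum to [b, 512, 2, 2], so also projecting whenever stride != 1 is the safer rule.

```python
def conv_out(size, kernel, stride=1, padding=0):
    """Output height/width of Conv2d (dilation=1)."""
    return (size + 2 * padding - kernel) // stride + 1


def resblk_shapes(ch_in, ch_out, h, stride, check_stride=True):
    """Return (main_path_shape, shortcut_shape) as (C, H, W) for one residual block."""
    # main path: 3x3 conv with the given stride, then 3x3 conv with stride 1, both padding=1
    h_main = conv_out(conv_out(h, 3, stride, 1), 3, 1, 1)
    main = (ch_out, h_main, h_main)

    needs_projection = ch_in != ch_out or (check_stride and stride != 1)
    if needs_projection:
        h_sc = conv_out(h, 1, stride, 0)   # 1x1 conv with the same stride
        shortcut = (ch_out, h_sc, h_sc)
    else:
        shortcut = (ch_in, h, h)           # identity: shape unchanged
    return main, shortcut


print(resblk_shapes(64, 128, 32, stride=4))                      # ((128, 8, 8), (128, 8, 8))
print(resblk_shapes(512, 512, 2, stride=2, check_stride=False))  # ((512, 1, 1), (512, 2, 2))
print(resblk_shapes(512, 512, 2, stride=2))                      # ((512, 1, 1), (512, 1, 1))
```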

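Build ResNet18 from four basic blocks:

- 1. Start with a conv layer that maps 3 channels to 64 with a 3x3 kernel, stride 3 and no padding, which shrinks the feature map to roughly one third of its size (32x32 => 10x10), followed by batch norm.

- 2. Stack four basic blocks in sequence.

- 3. Across the four blocks the channel count grows from 64 to 512 (64 => 128 => 256 => 512 => 512), and since each block's convolution path uses stride 2, height and width are halved (rounded up) at every block.

- 4. A final linear layer, outlayer, maps the 512 features to 10 outputs.

- 5. Define the forward function.

- 6. Note that after the four basic blocks, F.adaptive_avg_pool2d(out, [1, 1]) averages each channel down to a 1x1 feature map, so the flattened size is always 512 whatever height and width remain.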
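The spatial sizes through ResNet18, and what a [1, 1] adaptive average pool computes, can be sketched in plain Python. resnet18_spatial_sizes follows the convolution path (conv_first, then the stride-2 first conv of each block), and global_avg_pool is a hypothetical stand-in showing that pooling to 1x1 simply averages each channel over all remaining positions.

```python
def conv_out(size, kernel, stride=1, padding=0):
    """Output height/width of Conv2d (dilation=1)."""
    return (size + 2 * padding - kernel) // stride + 1


def resnet18_spatial_sizes(h=32):
    """Spatial size after conv_first and after the stride-2 conv of BLK1..BLK4."""
    h = conv_out(h, kernel=3, stride=3)           # conv_first: 32 -> 10
    sizes = [h]
    for _ in range(4):                            # BLK1..BLK4
        h = conv_out(h, kernel=3, stride=2, padding=1)
        sizes.append(h)
    return sizes


def global_avg_pool(feature_map):
    """Average each channel of a [C][H][W] nested list -> [C], i.e. an adaptive pool to 1x1."""
    return [sum(sum(row) for row in ch) / (len(ch) * len(ch[0])) for ch in feature_map]


print(resnet18_spatial_sizes(32))                 # [10, 5, 3, 2, 1]
print(global_avg_pool([[[1.0, 2.0], [3.0, 4.0]],
                       [[0.0, 0.0], [0.0, 8.0]]]))  # [2.5, 2.0]
```

Because the pooled output is always one value per channel, outlayer can be declared with 512 * 1 * 1 inputs without depending on the input image size.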

Part 3: main function:

import torch
from torch.utils.data import DataLoader
from torchvision import datasets, transforms
from torch import nn
from torch import optim

from lenet5 import Lenet5   # assumes the LeNet5 code above is saved as lenet5.py next to this script


def main():
    '''Load the dataset'''
    batchsz = 128
    cifar_train = datasets.CIFAR10("cifar", True, transform=transforms.Compose([
        transforms.Resize((32, 32)),
        transforms.ToTensor(),
        transforms.Normalize(mean=[0.485, 0.456, 0.406],
                             std=[0.229, 0.224, 0.225])
    ]), download=True)
    cifar_train = DataLoader(cifar_train, batch_size=batchsz, shuffle=True)

    cifar_test = datasets.CIFAR10("cifar", False, transform=transforms.Compose([
        transforms.Resize((32, 32)),
        transforms.ToTensor(),
        transforms.Normalize(mean=[0.485, 0.456, 0.406],
                             std=[0.229, 0.224, 0.225])
    ]), download=True)
    cifar_test = DataLoader(cifar_test, batch_size=batchsz, shuffle=True)

    x, label = next(iter(cifar_train))
    print("x:", x.shape, "label:", label.shape)   # [128, 3, 32, 32] and [128]

    # use the GPU when available, otherwise fall back to the CPU
    device = torch.device("cuda" if torch.cuda.is_available() else "cpu")
    model = Lenet5().to(device)
    criterion = nn.CrossEntropyLoss().to(device)   # instantiate the loss, then move it
    optimizer = optim.Adam(model.parameters(), lr=1e-3)

    for epoch in range(1000):
        model.train()
        for batch_idx, (x, label) in enumerate(cifar_train):
            x, label = x.to(device), label.to(device)
            logits = model(x)                 # [b, 10]
            loss = criterion(logits, label)   # scalar
            optimizer.zero_grad()
            loss.backward()
            optimizer.step()
        print(epoch, "loss:", loss.item())    # loss of the last batch in this epoch

        model.eval()
        with torch.no_grad():                 # disable gradient tracking during evaluation
            total_correct = 0
            total_num = 0
            for x, label in cifar_test:
                # test batches must be on the same device as the model
                x, label = x.to(device), label.to(device)
                logits = model(x)
                pred = logits.argmax(dim=1)
                total_correct += torch.eq(pred, label).float().sum().item()
                total_num += x.size(0)
            acc = total_correct / total_num
            print(epoch, "acc:", acc)


if __name__ == '__main__':
    main()

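Code flow:

- 1. Load the dataset: the CIFAR-10 training and test splits, resized to 32x32, converted to tensors, normalized, and wrapped in DataLoaders with batch size 128.

- 2. Create a CUDA device with torch.device("cuda") for GPU acceleration; the model and every batch are moved to it with .to(device).

- 3. Build the model. The code uses model = Lenet5(); the ResNet18 defined above can be swapped in as model = ResNet18().

- 4. Create the loss criterion criterion = nn.CrossEntropyLoss(). It already includes softmax (it applies log-softmax followed by negative log-likelihood), so the raw logits are passed in directly.

- 5. Create an optimizer using Adam, passing it the model's parameters and the learning rate.

- 6. Set the number of epochs.

- 7. In model.train() mode:

For each batch, move x and label to the device with .to(device)

Feed x into the model to get the logits

Compute the loss

optimizer.zero_grad() clears the gradients accumulated in the previous step

loss.backward() runs backpropagation to compute the gradients

optimizer.step() updates the parameters using those gradients

Print the loss once per epoch (the loss of the epoch's last batch)

- 8. In model.eval() mode, inside with torch.no_grad():

For each test batch

Feed x into the model (x and label must be on the same device as the model)

Use logits.argmax(dim=1) to get the index of the largest logit, i.e. the predicted class

Compare with torch.eq(pred, label), convert the result to float, sum it, and call .item() to get a plain Python number

total_num counts the samples seen, adding each batch's size

Compute the overall accuracy acc = total_correct / total_num and print it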
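Steps 4 and 8 can be reproduced on a tiny hypothetical batch in plain Python. cross_entropy mirrors what nn.CrossEntropyLoss computes with its default reduction='mean' (log-softmax, then the negative log-probability of the true class, averaged over the batch), and accuracy mirrors the argmax / torch.eq / sum pattern of the evaluation loop. Both function names are illustrative, not PyTorch APIs.

```python
import math


def cross_entropy(logits, labels):
    """Mean cross-entropy over a batch: -log softmax(row)[label], averaged."""
    total = 0.0
    for row, y in zip(logits, labels):
        m = max(row)                                       # subtract max for numerical stability
        log_sum_exp = m + math.log(sum(math.exp(v - m) for v in row))
        total += log_sum_exp - row[y]                      # = -log(softmax(row)[y])
    return total / len(labels)


def accuracy(logits, labels):
    """Fraction of rows whose argmax equals the label."""
    preds = [max(range(len(row)), key=row.__getitem__) for row in logits]  # argmax per row
    correct = sum(1 for p, y in zip(preds, labels) if p == y)             # eq + sum
    return correct / len(labels)


logits = [[2.0, 0.0, 0.0],   # confident and correct for label 0
          [0.0, 0.0, 0.0]]   # uniform: loss is log(3) for any label
labels = [0, 2]
print(cross_entropy(logits, labels))
print(accuracy(logits, labels))   # 0.5
```

The second row is a three-way tie; Python's max returns the first maximal index (0), so only the first sample counts as correct.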

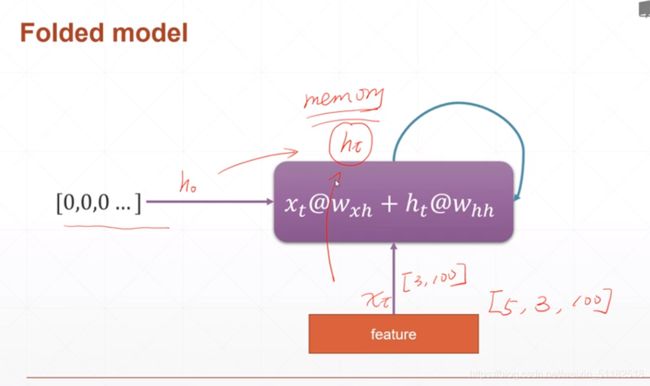

2. RNN

Drawbacks of using only linear layers:

- Long sentences mean too many parameters

- No context information
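To make the parameter argument concrete: if a sentence of seq_len words, each represented by an emb_dim-dimensional vector, is flattened and fed into a single linear layer, the weight count grows in proportion to the sentence length. The sizes below are hypothetical and linear_params is an illustrative helper.

```python
def linear_params(in_features, out_features):
    """Number of weights + biases in nn.Linear(in_features, out_features)."""
    return in_features * out_features + out_features


emb_dim, out_dim = 100, 64   # hypothetical word-vector size and output size
for seq_len in (10, 100, 1000):
    # the whole sentence [seq_len, emb_dim] is flattened into one input vector
    print(seq_len, linear_params(seq_len * emb_dim, out_dim))
```

This prints 64064, 640064 and 6400064 parameters: ten times as many words means roughly ten times as many weights, and the layer also only accepts sentences of exactly seq_len words.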

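RNN:

- Weight sharing: the same weights are reused at every time step

- Memory: takes into account the influence of the previous word on the current time step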
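Both properties show up in a minimal plain-Python sketch of a vanilla RNN, h_t = tanh(W_xh x_t + W_hh h_{t-1} + b). The same weights are reused at every time step, so the parameter count does not depend on sentence length, and h_{t-1} carries information from earlier words into the current step. Function names and sizes are hypothetical; for simplicity this uses a single bias vector, whereas PyTorch's nn.RNN keeps two (b_ih and b_hh).

```python
import math


def rnn_params(input_size, hidden_size):
    """Parameters of one vanilla RNN cell: W_xh, W_hh and one bias vector."""
    return hidden_size * input_size + hidden_size * hidden_size + hidden_size


def rnn_forward(xs, W_xh, W_hh, b_h):
    """Run h_t = tanh(W_xh x_t + W_hh h_{t-1} + b_h) over a sequence, starting from h_0 = 0.

    The same W_xh, W_hh and b_h are used at every step (weight sharing);
    h carries information from earlier inputs forward (memory).
    """
    hidden_size = len(b_h)
    h = [0.0] * hidden_size
    states = []
    for x in xs:
        # the right-hand side is fully evaluated with the previous h before reassignment
        h = [
            math.tanh(
                sum(W_xh[i][j] * x[j] for j in range(len(x)))
                + sum(W_hh[i][k] * h[k] for k in range(hidden_size))
                + b_h[i]
            )
            for i in range(hidden_size)
        ]
        states.append(h)
    return states


# one input feature, one hidden unit: only the first "word" is non-zero
states = rnn_forward([[1.0], [0.0], [0.0]], W_xh=[[1.0]], W_hh=[[0.5]], b_h=[0.0])
print(states)                 # the hidden state stays non-zero after the input drops to zero
print(rnn_params(100, 64))    # 10560, for any sentence length
```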