Example Deployment: FlatDHCP Mode with a Single nova-network Host

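Reposting of this blog is welcome, but please keep the original author information (@孔令贤HW)! The content reflects my own study, research, and summary; any resemblance to other work is an honor!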

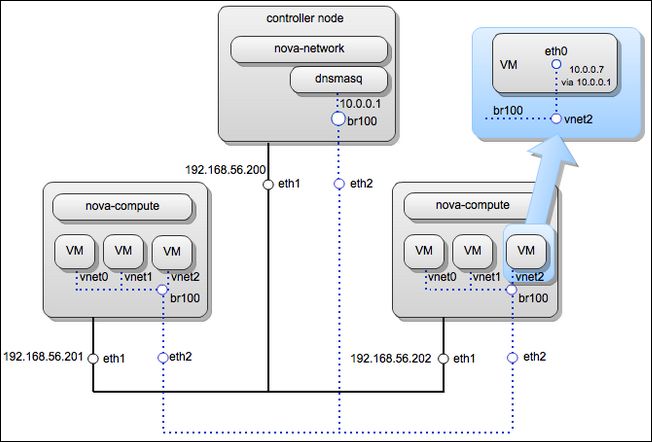

1 Topology

One controller node

Two compute nodes

eth1 connects to the management plane

eth2 connects to the service (data) plane

2 Network configuration

2.1 Controller node, no VMs created yet

Network interfaces:

openstack@controller-1:~$ ip a

... (loopback has the metadata service on 169.254.169.254) ...

3: eth1: mtu 1500 qdisc pfifo_fast state UNKNOWN qlen 1000

link/ether 08:00:27:9d:c4:b0 brd ff:ff:ff:ff:ff:ff

inet 192.168.56.200/24 brd 192.168.56.255 scope global eth1

inet6 fe80::a00:27ff:fe9d:c4b0/64 scope link

valid_lft forever preferred_lft forever

4: eth2: mtu 1500 qdisc pfifo_fast master br100 state UNKNOWN qlen 1000

link/ether 08:00:27:8f:87:fa brd ff:ff:ff:ff:ff:ff

inet6 fe80::a00:27ff:fe8f:87fa/64 scope link

valid_lft forever preferred_lft forever

5: br100: mtu 1500 qdisc noqueue state UP

link/ether 08:00:27:8f:87:fa brd ff:ff:ff:ff:ff:ff

inet 10.0.0.1/24 brd 10.0.0.255 scope global br100

inet6 fe80::7053:6bff:fe43:4dfd/64 scope link

valid_lft forever preferred_lft forever

openstack@compute-1:~$ cat /etc/network/interfaces

...

iface eth2 inet manual

up ifconfig $IFACE 0.0.0.0 up

up ifconfig $IFACE promisc

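Note: eth2 is set to promiscuous mode, and the compute nodes are configured the same way. Promiscuous mode lets the interface pass frames whose destination MAC is not its own. This is required because the destination MAC of traffic between VMs is always the MAC of some VM, never that of the host.

Bridge: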

openstack@controller-1:~$ brctl show

bridge name bridge id STP enabled interfaces

br100 8000.0800278f87fa no eth2

Routes:

openstack@controller-1:~$ route -n

Kernel IP routing table

Destination Gateway Genmask Flags Metric Ref Use Iface

0.0.0.0 192.168.56.101 0.0.0.0 UG 100 0 0 eth1

10.0.0.0 0.0.0.0 255.255.255.0 U 0 0 0 br100

169.254.0.0 0.0.0.0 255.255.0.0 U 1000 0 0 eth1

192.168.56.0 0.0.0.0 255.255.255.0 U 0 0 0 eth1

dnsmasq processes:

openstack@controller-1:~$ ps aux | grep dnsmasq

nobody 2729 0.0 0.0 27532 996 ? S 23:12 0:00 /usr/sbin/dnsmasq --strict-order --bind-interfaces --conf-file= --domain=novalocal --pid-file=/var/lib/nova/networks/nova-br100.pid --listen-address=10.0.0.1 --except-interface=lo --dhcp-range=10.0.0.2,static,120s --dhcp-lease-max=256 --dhcp-hostsfile=/var/lib/nova/networks/nova-br100.conf --dhcp-script=/usr/bin/nova-dhcpbridge --leasefile-ro

root 2730 0.0 0.0 27504 240 ? S 23:12 0:00 /usr/sbin/dnsmasq --strict-order --bind-interfaces --conf-file= --domain=novalocal --pid-file=/var/lib/nova/networks/nova-br100.pid --listen-address=10.0.0.1 --except-interface=lo --dhcp-range=10.0.0.2,static,120s --dhcp-lease-max=256 --dhcp-hostsfile=/var/lib/nova/networks/nova-br100.conf --dhcp-script=/usr/bin/nova-dhcpbridge --leasefile-ro

Nova configuration file:

openstack@controller-1:~$ sudo cat /etc/nova/nova.conf

--public_interface=eth1

--fixed_range=10.0.0.0/24

--flat_interface=eth2

--flat_network_bridge=br100

--network_manager=nova.network.manager.FlatDHCPManager

... (more entries omitted) ...

dnsmasq configuration file (the one passed via --dhcp-hostsfile):

openstack@controller-1:~$ cat /var/lib/nova/networks/nova-br100.conf

(empty)

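eth1: the management-plane interface, with IP address 192.168.56.200 and default gateway 192.168.56.101.

eth2: the service-plane interface. It works like a layer-2 switch port: it has no IP address and is attached to br100.

br100: a bridge normally has no IP address either, but on the controller node dnsmasq has to listen on 10.0.0.1 (the first address of the flat range), so br100 carries that address.

The dnsmasq configuration file (nova-br100.conf) is empty because no VM has been created yet. The two dnsmasq processes are parent and child; the child does most of the actual work.

Both NICs exist before OpenStack is installed and must be configured by the administrator; OpenStack does not do this automatically. br100, however, is created automatically when nova-network starts (by the ensure_bridge method in nova/network/linux_net.py, which is called when nova/network/l3.py is initialized).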
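To make the address layout concrete, here is a minimal sketch using Python's standard ipaddress module. It assumes, as in this deployment, that the first usable address of fixed_range sits on the bridge of the nova-network host and that the next one is the first address handed to a VM. The function name flat_layout is made up for illustration and is not part of nova.

```python
import ipaddress

def flat_layout(fixed_range):
    """Return (bridge_ip, first_vm_ip) for a flat network CIDR.

    Assumption: the first usable host address is the bridge/dnsmasq
    address and the one after it is the first address given to a VM.
    """
    hosts = iter(ipaddress.ip_network(fixed_range).hosts())
    bridge_ip = next(hosts)
    first_vm_ip = next(hosts)
    return str(bridge_ip), str(first_vm_ip)

print(flat_layout("10.0.0.0/24"))  # ('10.0.0.1', '10.0.0.2')
```

With --fixed_range=10.0.0.0/24 this gives 10.0.0.1 for br100 and 10.0.0.2 for the first VM, matching the --listen-address and --dhcp-range values in the dnsmasq command line above.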

Now look at the iptables rules on the controller node (mainly the filter and nat tables):

root@controller-1:/home/openstack# iptables -t filter -S

-P INPUT ACCEPT

-P FORWARD ACCEPT

-P OUTPUT ACCEPT

-N nova-api-FORWARD

-N nova-api-INPUT

-N nova-api-OUTPUT

-N nova-api-local

-N nova-filter-top

-N nova-network-FORWARD

-N nova-network-INPUT

-N nova-network-OUTPUT

-N nova-network-local

-A INPUT -j nova-network-INPUT

-A INPUT -j nova-api-INPUT

-A FORWARD -j nova-filter-top

-A FORWARD -j nova-network-FORWARD

-A FORWARD -j nova-api-FORWARD

-A OUTPUT -j nova-filter-top

-A OUTPUT -j nova-network-OUTPUT

-A OUTPUT -j nova-api-OUTPUT

-A nova-api-INPUT -d 192.168.56.200/32 -p tcp -m tcp --dport 8775 -j ACCEPT

-A nova-filter-top -j nova-network-local

-A nova-filter-top -j nova-api-local

-A nova-network-FORWARD -i br100 -j ACCEPT

-A nova-network-FORWARD -o br100 -j ACCEPT

-A nova-network-INPUT -i br100 -p udp -m udp --dport 67 -j ACCEPT

-A nova-network-INPUT -i br100 -p tcp -m tcp --dport 67 -j ACCEPT

-A nova-network-INPUT -i br100 -p udp -m udp --dport 53 -j ACCEPT

-A nova-network-INPUT -i br100 -p tcp -m tcp --dport 53 -j ACCEPT

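In short: DHCP (port 67) and DNS (port 53) packets arriving on br100 are accepted, packets passing through br100 may be forwarded, and the nova API endpoint on this host (192.168.56.200:8775) is reachable.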

openstack@controller-1:~$ sudo iptables -t nat -S

-P PREROUTING ACCEPT

-P INPUT ACCEPT

-P OUTPUT ACCEPT

-P POSTROUTING ACCEPT

-N nova-api-OUTPUT

-N nova-api-POSTROUTING

-N nova-api-PREROUTING

-N nova-api-float-snat

-N nova-api-snat

-N nova-network-OUTPUT

-N nova-network-POSTROUTING

-N nova-network-PREROUTING

-N nova-network-float-snat

-N nova-network-snat

-N nova-postrouting-bottom

-A PREROUTING -j nova-network-PREROUTING

-A PREROUTING -j nova-api-PREROUTING

-A OUTPUT -j nova-network-OUTPUT

-A OUTPUT -j nova-api-OUTPUT

-A POSTROUTING -j nova-network-POSTROUTING

-A POSTROUTING -j nova-api-POSTROUTING

-A POSTROUTING -j nova-postrouting-bottom

-A nova-api-snat -j nova-api-float-snat

-A nova-network-POSTROUTING -s 10.0.0.0/24 -d 192.168.56.200/32 -j ACCEPT

-A nova-network-POSTROUTING -s 10.0.0.0/24 -d 10.128.0.0/24 -j ACCEPT

-A nova-network-POSTROUTING -s 10.0.0.0/24 -d 10.0.0.0/24 -m conntrack ! --ctstate DNAT -j ACCEPT

-A nova-network-PREROUTING -d 169.254.169.254/32 -p tcp -m tcp --dport 80 -j DNAT --to-destination 192.168.56.200:8775

-A nova-network-snat -j nova-network-float-snat

-A nova-network-snat -s 10.0.0.0/24 -j SNAT --to-source 192.168.56.200

-A nova-postrouting-bottom -j nova-network-snat

-A nova-postrouting-bottom -j nova-api-snat

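The most important rule here is: -A nova-network-PREROUTING -d 169.254.169.254/32 -p tcp -m tcp --dport 80 -j DNAT --to-destination 192.168.56.200:8775

It makes the nova metadata service (part of nova-api, running on the controller node) reachable at 169.254.169.254:80. The service appears to listen on that address, while iptables rewrites the destination to the real endpoint, 192.168.56.200:8775 on this host.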
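The effect of that DNAT rule can be sketched as a simple lookup. This is only a model of the one rule quoted above, not of how netfilter works internally; the names METADATA_DNAT and apply_dnat are hypothetical.

```python
# Model of the single DNAT rule: (dst_ip, dst_port) -> rewritten destination.
METADATA_DNAT = {("169.254.169.254", 80): ("192.168.56.200", 8775)}

def apply_dnat(dst_ip, dst_port, table=METADATA_DNAT):
    """Rewrite the destination if a DNAT entry matches, else leave it unchanged."""
    return table.get((dst_ip, dst_port), (dst_ip, dst_port))

print(apply_dnat("169.254.169.254", 80))  # ('192.168.56.200', 8775)
print(apply_dnat("10.0.0.2", 22))         # ('10.0.0.2', 22)
```

A request a VM sends to http://169.254.169.254/ is therefore delivered to port 8775 on the controller, while all other traffic keeps its original destination.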

2.2 Compute node, no VMs created yet

Host network configuration:

openstack@compute-1:~$ ip a

... (localhost) ...

2: eth1: mtu 1500 qdisc pfifo_fast state UNKNOWN qlen 1000

link/ether 08:00:27:ee:49:bd brd ff:ff:ff:ff:ff:ff

inet 192.168.56.202/24 brd 192.168.56.255 scope global eth1

inet6 fe80::a00:27ff:feee:49bd/64 scope link

valid_lft forever preferred_lft forever

3: eth2: mtu 1500 qdisc noop state DOWN qlen 1000

link/ether 08:00:27:15:85:17 brd ff:ff:ff:ff:ff:ff

... (virbr0 - not used by openstack) ...

openstack@compute-1:~$ cat /etc/network/interfaces

...

iface eth2 inet manual

up ifconfig $IFACE 0.0.0.0 up

up ifconfig $IFACE promisc

The bridge and iptables output contain nothing of substance at this point and are omitted.

Routes:

openstack@compute-1:~$ route -n

Kernel IP routing table

Destination Gateway Genmask Flags Metric Ref Use Iface

0.0.0.0 192.168.56.101 0.0.0.0 UG 100 0 0 eth1

169.254.0.0 0.0.0.0 255.255.0.0 U 1000 0 0 eth1

192.168.56.0 0.0.0.0 255.255.255.0 U 0 0 0 eth1

192.168.122.0 0.0.0.0 255.255.255.0 U 0 0 0 virbr0

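The compute node has no br100 bridge yet: nova-network does not run on this node and there are no VMs, so, as noted earlier, the L3 driver has not been initialized. virbr0 is created by libvirt and is not used by OpenStack.

The only thing worth noting on the compute node is the route to 169.254.0.0/16, which is used to reach the nova metadata service. Addresses inside 192.168.56.0/24, by contrast, are reached directly over the layer-2 link (the switch) without going through a gateway.

2.3 Creating a VM

Create a VM with the nova command and look at its details.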

openstack@controller-1:~$ nova boot --image cirros --flavor 1 cirros

...

openstack@controller-1:~$ nova list

+--------------------------------------+--------+--------+----------------------+

| ID | Name | Status | Networks |

+--------------------------------------+--------+--------+----------------------+

| 5357143d-66f5-446c-a82f-86648ebb3842 | cirros | BUILD | novanetwork=10.0.0.2 |

+--------------------------------------+--------+--------+----------------------+

...

openstack@controller-1:~$ nova list

+--------------------------------------+--------+--------+----------------------+

| ID | Name | Status | Networks |

+--------------------------------------+--------+--------+----------------------+

| 5357143d-66f5-446c-a82f-86648ebb3842 | cirros | ACTIVE | novanetwork=10.0.0.2 |

+--------------------------------------+--------+--------+----------------------+

openstack@controller-1:~$ ping 10.0.0.2

PING 10.0.0.2 (10.0.0.2) 56(84) bytes of data.

64 bytes from 10.0.0.2: icmp_req=4 ttl=64 time=2.97 ms

64 bytes from 10.0.0.2: icmp_req=5 ttl=64 time=0.893 ms

64 bytes from 10.0.0.2: icmp_req=6 ttl=64 time=0.909 ms

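Note: by default the VM can be pinged from the controller node (the iptables rules allow it). If allow_same_net_traffic=false is set, the VM can be pinged only from 10.0.0.1.

2.4 Controller node, after creating a VM

When a VM is created, nova-network allocates an IP for it (in the allocate_for_instance method of nova/network/manager.py); the first available IP is 10.0.0.2. The dnsmasq hosts file then binds the VM's MAC address to that IP: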

openstack@controller-1:~$ cat /var/lib/nova/networks/nova-br100.conf

fa:16:3e:2c:e8:ec,cirros.novalocal,10.0.0.2
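The entry above follows the layout MAC,hostname.domain,IP. As a small illustration of that format only (nova writes this file itself; the helper name dhcp_host_line is hypothetical, and the default domain is taken from the --domain=novalocal option shown earlier):

```python
def dhcp_host_line(mac, hostname, ip, domain="novalocal"):
    """Format one entry in the 'mac,hostname.domain,ip' layout shown above."""
    return f"{mac},{hostname}.{domain},{ip}"

print(dhcp_host_line("fa:16:3e:2c:e8:ec", "cirros", "10.0.0.2"))
# fa:16:3e:2c:e8:ec,cirros.novalocal,10.0.0.2
```

Because dnsmasq runs with --dhcp-range=10.0.0.2,static,120s, only MAC addresses listed in this file receive a lease.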

When the VM boots, it obtains its IP from dnsmasq over DHCP, as the syslog shows:

openstack@controller-1:~$ grep 10.0.0.2 /var/log/syslog

Jul 30 23:12:06 controller-1 dnsmasq-dhcp[2729]: DHCP, static leases only on 10.0.0.2, lease time 2m

Jul 31 00:16:47 controller-1 dnsmasq-dhcp[2729]: DHCPRELEASE(br100) 10.0.0.2 fa:16:3e:5a:9b:de unknown lease

Jul 31 01:00:45 controller-1 dnsmasq-dhcp[2729]: DHCPOFFER(br100) 10.0.0.2 fa:16:3e:2c:e8:ec

Jul 31 01:00:45 controller-1 dnsmasq-dhcp[2729]: DHCPREQUEST(br100) 10.0.0.2 fa:16:3e:2c:e8:ec

Jul 31 01:00:45 controller-1 dnsmasq-dhcp[2729]: DHCPACK(br100) 10.0.0.2 fa:16:3e:2c:e8:ec cirros

Jul 31 01:01:45 controller-1 dnsmasq-dhcp[2729]: DHCPREQUEST(br100) 10.0.0.2 fa:16:3e:2c:e8:ec

Jul 31 01:01:45 controller-1 dnsmasq-dhcp[2729]: DHCPACK(br100) 10.0.0.2 fa:16:3e:2c:e8:ec cirros
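The log also shows the lease being renewed: the second DHCPREQUEST arrives 60 seconds after the first, which is half of the 120-second lease set by --dhcp-range=10.0.0.2,static,120s. DHCP clients by default try to renew at half the lease time (T1 in RFC 2131). A quick check of that arithmetic follows; the year is an arbitrary placeholder because syslog timestamps do not carry one, and parse_syslog_time is a hypothetical helper.

```python
from datetime import datetime

LEASE_SECONDS = 120  # from --dhcp-range=10.0.0.2,static,120s

def parse_syslog_time(stamp, year=2012):
    """Parse a syslog timestamp; the year is an assumed placeholder."""
    return datetime.strptime(f"{year} {stamp}", "%Y %b %d %H:%M:%S")

first_request = parse_syslog_time("Jul 31 01:00:45")
renewal = parse_syslog_time("Jul 31 01:01:45")
elapsed = (renewal - first_request).total_seconds()

print(elapsed)                       # 60.0
print(elapsed == LEASE_SECONDS / 2)  # True
```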

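Nothing else, such as iptables or the routing table, has changed.

In other words, creating a VM changes only the dnsmasq configuration on the controller node.

2.5 Compute node, after creating a VM

Creating the VM changes the network configuration of the compute node: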

openstack@compute-1:~$ ip a

... (all interfaces as before) ...

10: vnet0: mtu 1500 qdisc pfifo_fast master br100 state UNKNOWN qlen 500

link/ether fe:16:3e:2c:e8:ec brd ff:ff:ff:ff:ff:ff

inet6 fe80::fc16:3eff:fe2c:e8ec/64 scope link

valid_lft forever preferred_lft forever

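A new interface, vnet0, has appeared. This is the VM's virtual NIC, and its MAC address is initialized from /var/lib/nova/instances/instance-XXXXXXXX/libvirt.xml. The file /var/lib/nova/instances/instance-XXXXXXXX/console.log shows what the VM does while booting: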

openstack@compute-1:~$ sudo cat /var/lib/nova/instances/instance-00000009/console.log

...

Starting network...

udhcpc (v1.18.5) started

Sending discover...

Sending select for 10.0.0.2...

Lease of 10.0.0.2 obtained, lease time 120

deleting routers

route: SIOCDELRT: No such process

adding dns 10.0.0.1

cloud-setup: checking http://169.254.169.254/2009-04-04/meta-data/instance-id

cloud-setup: successful after 1/30 tries: up 4.74. iid=i-00000009

wget: server returned error: HTTP/1.1 404 Not Found

failed to get http://169.254.169.254/latest/meta-data/public-keys

Starting dropbear sshd: generating rsa key... generating dsa key... OK

===== cloud-final: system completely up in 6.82 seconds ====

instance-id: i-00000009

public-ipv4:

local-ipv4 : 10.0.0.2

...

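The VM obtains its IP address and the DNS server address (10.0.0.1, which is also the gateway), then queries the metadata service at 169.254.169.254. The instance-id lookup succeeds. The VM then tries to download a public key, but since none was specified at boot the download fails, and new keys are generated (see the dropbear line).

After boot, iptables on the compute node has changed: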

openstack@compute-1:~$ sudo iptables -S

-P INPUT ACCEPT

-P FORWARD ACCEPT

-P OUTPUT ACCEPT

-N nova-compute-FORWARD

-N nova-compute-INPUT

-N nova-compute-OUTPUT

-N nova-compute-inst-9

-N nova-compute-local

-N nova-compute-provider

-N nova-compute-sg-fallback

-N nova-filter-top

-A INPUT -j nova-compute-INPUT

-A FORWARD -j nova-filter-top

-A FORWARD -j nova-compute-FORWARD

... (virbr0 stuff omitted) ...

-A OUTPUT -j nova-filter-top

-A OUTPUT -j nova-compute-OUTPUT

-A nova-compute-FORWARD -i br100 -j ACCEPT

-A nova-compute-FORWARD -o br100 -j ACCEPT

-A nova-compute-inst-9 -m state --state INVALID -j DROP

-A nova-compute-inst-9 -m state --state RELATED,ESTABLISHED -j ACCEPT

-A nova-compute-inst-9 -j nova-compute-provider

-A nova-compute-inst-9 -s 10.0.0.1/32 -p udp -m udp --sport 67 --dport 68 -j ACCEPT

-A nova-compute-inst-9 -s 10.0.0.0/24 -j ACCEPT

-A nova-compute-inst-9 -j nova-compute-sg-fallback

-A nova-compute-local -d 10.0.0.2/32 -j nova-compute-inst-9

-A nova-compute-sg-fallback -j DROP

-A nova-filter-top -j nova-compute-local

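Now that the VM's network is initialized, the nova-compute-inst-9 chain handles packets sent directly to 10.0.0.2: it accepts DHCP packets from 10.0.0.1 and packets from the VM subnet, and drops everything else. Every VM gets a chain like this. The rule -A nova-compute-inst-9 -s 10.0.0.1/32 -p udp -m udp --sport 67 --dport 68 -j ACCEPT exists so that DHCP replies are still received even when allow_same_net_traffic=false removes the subnet-wide ACCEPT rule.

libvirt also sets up some network filters automatically (see nova/virt/libvirt/connection.py and firewall.py; the filter definitions live in /etc/libvirt/nwfilter), for example to prevent ARP spoofing. The filters are referenced by the filterref element in the VM's libvirt.xml and can be inspected with sudo virsh nwfilter-list and sudo virsh nwfilter-dumpxml.

2.6 VM network configuration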

openstack@controller-1:~$ ssh cirros@10.0.0.2

cirros@10.0.0.2's password:

$ ip a

1: lo: mtu 16436 qdisc noqueue state UNKNOWN

link/loopback 00:00:00:00:00:00 brd 00:00:00:00:00:00

inet 127.0.0.1/8 scope host lo

inet6 ::1/128 scope host

valid_lft forever preferred_lft forever

2: eth0: mtu 1500 qdisc pfifo_fast state UP qlen 1000

link/ether fa:16:3e:06:5c:27 brd ff:ff:ff:ff:ff:ff

inet 10.0.0.2/24 brd 10.0.0.255 scope global eth0

inet6 fe80::f816:3eff:fe06:5c27/64 scope link tentative flags 08

valid_lft forever preferred_lft forever

$ route -n

Kernel IP routing table

Destination Gateway Genmask Flags Metric Ref Use Iface

0.0.0.0 10.0.0.1 0.0.0.0 UG 0 0 0 eth0

10.0.0.0 0.0.0.0 255.255.255.0 U 0 0 0 eth0

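The VM has IP address 10.0.0.2 and gateway 10.0.0.1.

3 Communication flows

3.1 Layer-2 communication

All physical nodes are connected to one physical network through eth2, and each has a br100 bridge on top of eth2; a bridge behaves like a virtual layer-2 network.

All VMs are attached to br100.

Ethernet broadcast frames therefore reach eth2 and br100 on every physical host.

3.2 Layer-3 communication

To send a packet to a destination IP, the sender first resolves the MAC address for that IP through ARP and then delivers the packet at layer 2.

When a packet is sent to a given IP, the system has to decide:

- Which device to send it through? This comes from a routing table lookup. For example, the entry 10.0.0.0 / 0.0.0.0 / 255.255.255.0 / br100 says that packets for 10.0.0.0/24 go out through br100.

- Which source address to use? This is usually the default IP address assigned to the device through which the packet is routed. If that device has no IP assigned, the OS takes an IP from one of the other devices. For more details, see "Source address selection" in the Linux IP networking guide.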
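The device selection step can be sketched as a longest-prefix match over the controller's routing table from section 2.1. This is a simplified model that ignores route metrics; the function name lookup and the outside address 8.8.8.8 are chosen for illustration only.

```python
import ipaddress

# Controller routing table: (destination, genmask, gateway, iface)
ROUTES = [
    ("0.0.0.0", "0.0.0.0", "192.168.56.101", "eth1"),
    ("10.0.0.0", "255.255.255.0", "0.0.0.0", "br100"),
    ("169.254.0.0", "255.255.0.0", "0.0.0.0", "eth1"),
    ("192.168.56.0", "255.255.255.0", "0.0.0.0", "eth1"),
]

def lookup(dst):
    """Return (iface, gateway) of the most specific route that contains dst."""
    addr = ipaddress.ip_address(dst)
    best = None
    for dest, mask, gateway, iface in ROUTES:
        net = ipaddress.ip_network(f"{dest}/{mask}")
        if addr in net and (best is None or net.prefixlen > best[0].prefixlen):
            best = (net, iface, gateway)
    if best is None:
        raise LookupError(f"no route to {dst}")
    return best[1], best[2]

print(lookup("10.0.0.2"))         # ('br100', '0.0.0.0')
print(lookup("169.254.169.254"))  # ('eth1', '0.0.0.0')
print(lookup("8.8.8.8"))          # ('eth1', '192.168.56.101')
```

A gateway of 0.0.0.0 means the destination is on a directly attached network, so the packet is delivered at layer 2 after an ARP lookup rather than handed to a router.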

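3.3 Pinging a VM from the controller node

Pinging 10.0.0.2 (the IP address of a VM) from the controller node:

- The routing table is consulted first and matches 10.0.0.0 / 0.0.0.0 / 255.255.255.0 / br100, so the packet is sent through br100 and the source (return) address is 10.0.0.1.

- An ARP broadcast is sent through br100 asking for the MAC address of 10.0.0.2. (To observe this, first delete the cached entry with arp -d 10.0.0.2.)

- The ARP request reaches every node. On compute-1 it arrives on eth2, and br100 forwards it to vnet0 and on to the VM. Note that it does not traverse the compute node's iptables rules, because all of this happens at the link layer.

- The VM sends its ARP reply to the MAC address of 10.0.0.1, along the path vnet0 --> br100 --> eth2.

- With the VM's MAC address known, the ICMP packet can be sent. On the compute node it matches the following rules and is accepted:

-A FORWARD -j nova-filter-top

-A nova-filter-top -j nova-compute-local

-A nova-compute-local -d 10.0.0.2/32 -j nova-compute-inst-9

-A nova-compute-inst-9 -s 10.0.0.0/24 -j ACCEPT

- The packet reaches the VM, which sends its reply; the VM's routing table says that packets for 10.0.0.0/24 go out through eth0.

On the compute node, the whole exchange can be watched with tcpdump -i eth2, tcpdump -i br100, or tcpdump -i vnet0.

3.4 Pinging a VM from a compute node

This fails, as shown below: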

openstack@compute-1:~$ ping 10.0.0.2

PING 10.0.0.2 (10.0.0.2) 56(84) bytes of data.

ping: sendmsg: Operation not permitted

The compute node is not allowed to send the ICMP packet, because the OUTPUT chain of the filter table on the compute node leads to these rules:

-A OUTPUT -j nova-filter-top

-A nova-filter-top -j nova-compute-local

-A nova-compute-local -d 10.0.0.2/32 -j nova-compute-inst-9

-A nova-compute-inst-9 -j nova-compute-sg-fallback

-A nova-compute-sg-fallback -j DROP

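So the OUTPUT path drops the packet sent to 10.0.0.2. The ACCEPT rule for sources in 10.0.0.0/24 does not apply here, because the compute node has no address in that subnet.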
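The difference between the controller and the compute node can be sketched by evaluating the instance chain against the packet's source address. This is a deliberately reduced model: conntrack state, the empty provider chain, and the DHCP rule are left out because none of them matches a fresh ICMP echo request. It also assumes that compute-1 uses its eth1 address, 192.168.56.202, as the source, since br100 has no IP there. The names INST_CHAIN and verdict are hypothetical.

```python
import ipaddress

# Reduced nova-compute-inst-9 chain: only source matching is modelled.
INST_CHAIN = [
    {"src": "10.0.0.0/24", "target": "ACCEPT"},
    {"src": "0.0.0.0/0", "target": "DROP"},  # nova-compute-sg-fallback
]

def verdict(src_ip, dst_ip, instance_ip="10.0.0.2"):
    """Return the target applied to a new packet on the compute node."""
    if dst_ip != instance_ip:
        # nova-compute-local does not match; the ACCEPT policy applies
        return "ACCEPT"
    src = ipaddress.ip_address(src_ip)
    for rule in INST_CHAIN:
        if src in ipaddress.ip_network(rule["src"]):
            return rule["target"]
    return "ACCEPT"

print(verdict("10.0.0.1", "10.0.0.2"))        # ACCEPT (ping from the controller)
print(verdict("192.168.56.202", "10.0.0.2"))  # DROP (ping from compute-1 itself)
```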

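3.5 Pinging a VM from another VM

The VMs are in the same broadcast domain, so the sender can resolve the destination's MAC address through ARP; the flow is similar to pinging a VM from the controller node.

3.6 Pinging a VM from an external network

This does not work: with the current setup (no public IP associated with the VM), a VM can communicate only with hosts in its own subnet (10.0.0.0/24).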
