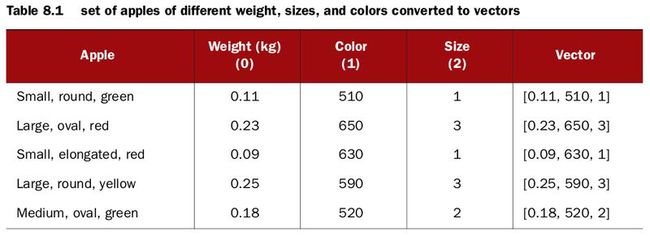

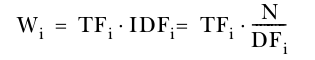

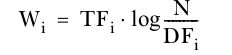

The basic assumption of the vector space model (VSM) is that words are dimensions and are therefore orthogonal to each other. In other words, VSM assumes that the occurrences of words are independent of each other, in the same sense that a point’s x coordinate is entirely independent of its y coordinate in two dimensions. Intuitively, you know that this assumption is wrong in many cases. For example, the word Cola has a higher probability of occurring along with the word Coca, so these words aren’t completely independent. Other models try to take word dependencies into account. One well-known technique is latent semantic indexing (LSI), which detects dimensions that seem to go together and merges them into a single one.
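The gap between the orthogonality assumption and real word usage can be seen in a small sketch. The toy corpus and the helper names (`word_axis`, `occurrence_profile`) are made up for illustration; this is not LSI itself, only a look at the co-occurrence signal that a technique like LSI would exploit. Under VSM each word gets its own axis, so the axes for "coca" and "cola" have cosine similarity 0, yet the two words' per-document occurrence profiles are strongly aligned.

```python
import math
from collections import Counter

# Hypothetical toy corpus, invented purely for illustration.
DOCS = [
    "coca cola is a soft drink",
    "she ordered a coca cola",
    "the drink was cold",
    "cola nuts grow on trees",
]

def cosine(u, v):
    """Cosine similarity of two equal-length numeric vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    return dot / (norm_u * norm_v) if norm_u and norm_v else 0.0

# One dimension per distinct word, as VSM assumes.
vocab = sorted({w for d in DOCS for w in d.split()})
index = {w: i for i, w in enumerate(vocab)}

def word_axis(word):
    """One-hot vector: the dimension that VSM assigns to `word`."""
    v = [0] * len(vocab)
    v[index[word]] = 1
    return v

def occurrence_profile(word):
    """Count of `word` in each document (one row of a term-document matrix)."""
    return [Counter(d.split())[word] for d in DOCS]

# The axes are orthogonal by construction, whatever the corpus says.
axis_similarity = cosine(word_axis("coca"), word_axis("cola"))

# The occurrence profiles show that the words are far from independent.
profile_similarity = cosine(occurrence_profile("coca"), occurrence_profile("cola"))

print(f"axis similarity:    {axis_similarity:.3f}")
print(f"profile similarity: {profile_similarity:.3f}")
```

In this corpus "coca" appears only where "cola" does, so the profile similarity is high while the axis similarity stays at zero. Merging such strongly aligned dimensions is, roughly, what LSI does via a low-rank decomposition of the term-document matrix.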
