虚拟机重启后hadoop走动步骤

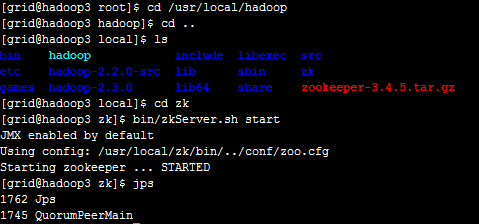

/usr/local/zk/bin/zkServer.sh start

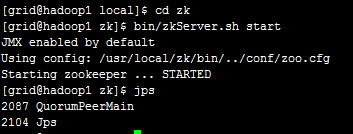

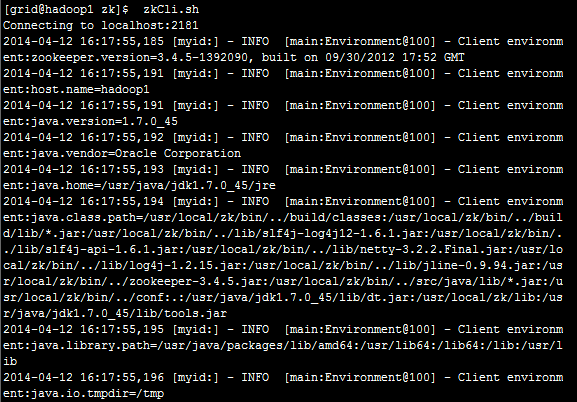

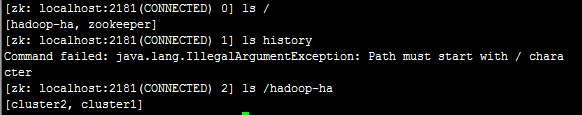

zkCli.sh

格式化ZooKeeper集群,目的是在ZooKeeper集群上建立HA的相应节点。

在hadoop1上执行命令:/usr/local/hadoop/bin/hdfs zkfc �CformatZK

【格式化操作的目的是在ZK集群中建立一个节点,用于保存集群c1中NameNode的状态数据】

在hadoop3上执行命令:/usr/local/hadoop/bin/hdfs zkfc �CformatZK

【集群c2也格式化,产生一个新的ZK节点cluster2】

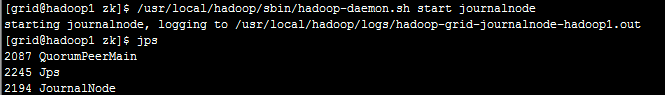

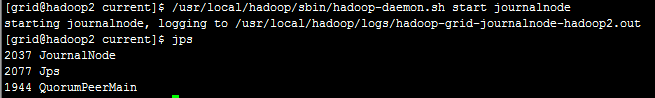

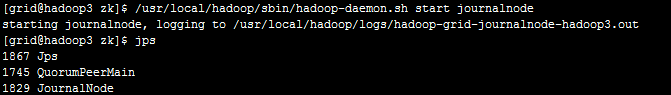

启动JournalNode集群

在hadoop1、hadoop2、hadoop3上分别执行命令

/usr/local/hadoop/sbin/hadoop-daemon.sh start journalnode

【启动JournalNode后,会在本地磁盘产生一个目录,用户保存NameNode的edits文件的数据】

格式化集群c1的一个NameNode

从hadoop1和hadoop2中任选一个即可,这里选择的是hadoop1

在hadoop1执行以下命令:/usr/local/hadoop/bin/hdfs namenode -format -clusterId c1

【格式化NameNode会在磁盘产生一个目录,用于保存NameNode的fsimage、edits等文件】

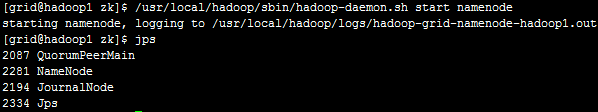

启动c1中刚才格式化的NameNode

在hadoop1上执行命令:/usr/local/hadoop/sbin/hadoop-daemon.sh start namenode

【启动后,产生一个新的java进程NameNode】

把NameNode的数据从hadoop1同步到hadoop2中

在hadoop2上执行命令:/usr/local/hadoop/bin/hdfs namenode �CbootstrapStandby

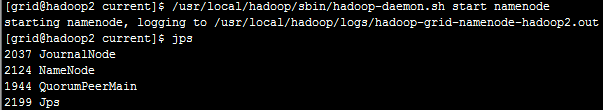

启动c1中另一个Namenode

在hadoop2上执行命令:/usr/local/hadoop/sbin/hadoop-daemon.sh start namenode

【产生java进程NameNode】

格式化集群c2的一个NameNode

从hadoop3和hadoop4中任选一个即可,这里选择的是hadoop3

在hadoop3执行以下命令:/usr/local/hadoop/bin/hdfs namenode -format -clusterId c2

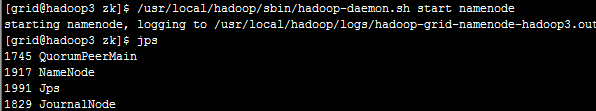

启动c2中刚才格式化的NameNode

在hadoop3上执行命令:/usr/local/hadoop/sbin/hadoop-daemon.sh start namenode

把NameNode的数据从hadoop3同步到hadoop4中

在hadoop4上执行命令:/usr/local/hadoop/bin/hdfs namenode �CbootstrapStandby

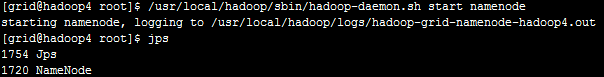

.启动c2中另一个Namenode

在hadoop4上执行命令:/usr/local/hadoop/sbin/hadoop-daemon.sh start namenode

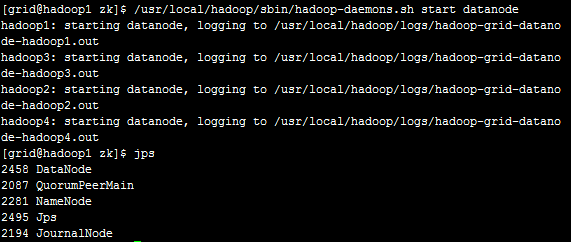

启动所有的DataNode

在hadoop1上执行命令:/usr/local/hadoop/sbin/hadoop-daemons.sh start datanode

命令输出:

[root@hadoop1 hadoop]# /usr/local/hadoop/sbin/hadoop-daemons.sh start datanode

hadoop1: starting datanode, logging to /usr/local/hadoop/logs/hadoop-root-datanode-hadoop1.out

hadoop3: starting datanode, logging to /usr/local/hadoop/logs/hadoop-root-datanode-hadoop3.out

hadoop2: starting datanode, logging to /usr/local/hadoop/logs/hadoop-root-datanode-hadoop2.out

hadoop4: starting datanode, logging to /usr/local/hadoop/logs/hadoop-root-datanode-hadoop4.out

[root@hadoop1 hadoop]#

【上述命令会在四个节点分别启动DataNode进程】

验证(以hadoop1为例):

[root@hadoop1 hadoop]# jps

23396 JournalNode

24302 Jps

24232 DataNode

23558 NameNode

22491 QuorumPeerMain

[root@hadoop1 hadoop]#

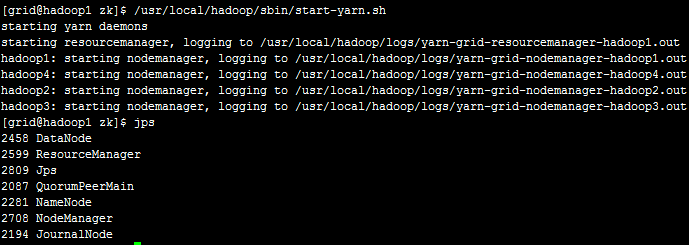

启动Yarn

在hadoop1上执行命令:/usr/local/hadoop/sbin/start-yarn.sh

命令输出:

[root@hadoop1 hadoop]# /usr/local/hadoop/sbin/start-yarn.sh

starting yarn daemons

starting resourcemanager, logging to /usr/local/hadoop/logs/yarn-root-resourcemanager-hadoop1.out

hadoop4: starting nodemanager, logging to /usr/local/hadoop/logs/yarn-root-nodemanager-hadoop4.out

hadoop3: starting nodemanager, logging to /usr/local/hadoop/logs/yarn-root-nodemanager-hadoop3.out

hadoop2: starting nodemanager, logging to /usr/local/hadoop/logs/yarn-root-nodemanager-hadoop2.out

hadoop1: starting nodemanager, logging to /usr/local/hadoop/logs/yarn-root-nodemanager-hadoop1.out

[root@hadoop1 hadoop]#

验证:

[root@hadoop1 hadoop]# jps

23396 JournalNode

25154 ResourceManager

25247 NodeManager

24232 DataNode

23558 NameNode

22491 QuorumPeerMain

25281 Jps

[root@hadoop1 hadoop]#

【产生java进程ResourceManager和NodeManager】

也可以通过浏览器访问,如下图

启动ZooKeeperFailoverController

在hadoop1、hadoop2、hadoop3、hadoop4上分别执行命令:/usr/local/hadoop/sbin/hadoop-daemon.sh start zkfc

命令输出(以hadoop1为例):

[root@hadoop1 hadoop]# /usr/local/hadoop/sbin/hadoop-daemon.sh start zkfc

starting zkfc, logging to /usr/local/hadoop/logs/hadoop-root-zkfc-hadoop101.out

[root@hadoop1 hadoop]#

验证(以hadoop1为例):

[root@hadoop1 hadoop]# jps

24599 DFSZKFailoverController

23396 JournalNode

24232 DataNode

23558 NameNode

22491 QuorumPeerMain

24654 Jps

[root@hadoop1 hadoop]#

【产生java进程DFSZKFailoverController】

hadoop1,hadoop2.hadoop3.hadoop4:/usr/local/hadoop/sbin/hadoop-daemon.sh stop zkfc

hadoop1:/usr/local/hadoop/sbin/stop-yarn.sh

hadoop1: /usr/local/hadoop/sbin/hadoop-daemons.sh sotp datanode

hadoop1,hadoop2.hadoop3.hadoop4: /usr/local/hadoop/sbin/hadoop-daemon.sh stop namenode

hadoop1,hadoop2.hadoop3:/usr/local/hadoop/sbin/hadoop-daemon.sh stop journalnode

hadoop1,hadoop2.hadoop3:/usr/local/zk/bin/zkServer.sh stop