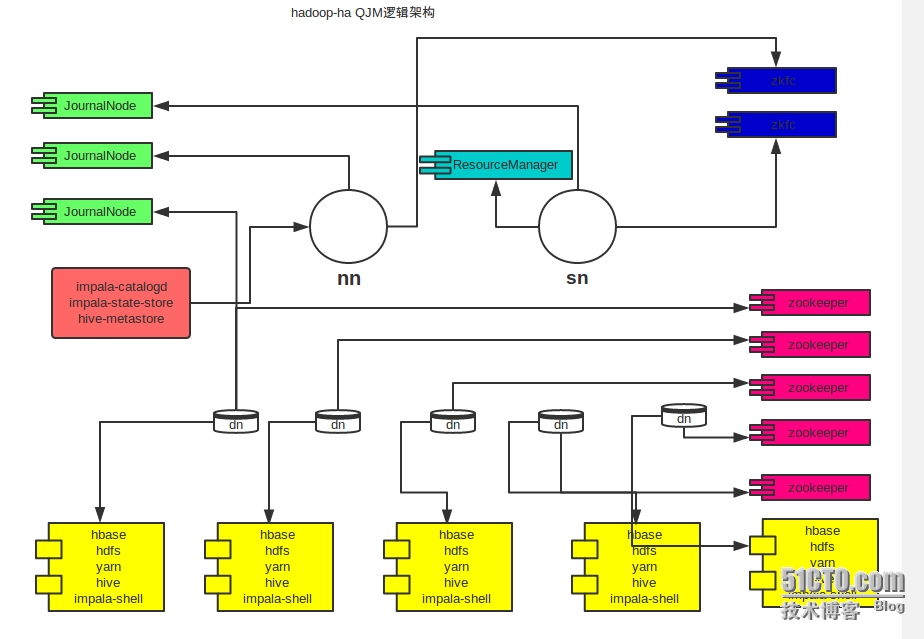

Hadoop HA (QJM) architecture and deployment

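Our company's old Hadoop cluster had a single point of failure at the NameNode. Recently I studied the Hadoop high-availability deployment written up by an expert at http://www.binospace.com/index.php/hdfs-ha-quorum-journal-manager/ and learned a great deal from it. Based on that, I drew a deployment diagram that fits our own cluster, for anyone else who needs to run Hadoop.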

The diagram is shown in the figure above.

I have now found time to write up the deployment process. Here it is for everyone's reference, so you can avoid some detours. Ha ha!

I. Preparation

Operating system: CentOS 6.2

7 virtual machines:

192.168.10.138 yum-test.h.com # local yum repository: mirror the latest stable packages from Cloudera here

192.168.10.134 namenode.h.com

192.168.10.139 snamenode.h.com

192.168.10.135 datanode01.h.com

192.168.10.140 datanode02.h.com

192.168.10.141 datanode03.h.com

192.168.10.142 datanode04.h.com

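Add the IP addresses and hostnames above to /etc/hosts on all seven hosts. The configuration files below refer to the DataNodes as datanode01.h.com through datanode04.h.com, so the names in /etc/hosts must match exactly.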
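Editing /etc/hosts by hand on seven machines is error-prone. Below is a minimal sketch of an idempotent helper that appends only the missing entries, so it is safe to run repeatedly. The function name add_hosts_entries and the variable CLUSTER_HOSTS are hypothetical, and the list assumes the datanode01.h.com … datanode04.h.com names used by the configuration files.

```shell
#!/bin/bash
# Hypothetical host list: the seven machines from section I.
CLUSTER_HOSTS='192.168.10.138 yum-test.h.com
192.168.10.134 namenode.h.com
192.168.10.139 snamenode.h.com
192.168.10.135 datanode01.h.com
192.168.10.140 datanode02.h.com
192.168.10.141 datanode03.h.com
192.168.10.142 datanode04.h.com'

# Append each "IP hostname" line to file $1 unless an identical line exists.
add_hosts_entries() {
  local file=$1 line
  while IFS= read -r line; do
    [ -n "$line" ] || continue
    # -x: whole-line match, -F: treat dots literally
    grep -qxF "$line" "$file" 2>/dev/null || printf '%s\n' "$line" >> "$file"
  done <<EOF
$CLUSTER_HOSTS
EOF
}
```

Usage on each host: add_hosts_entries /etc/hosts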

II. Installation

Install the following packages on the master NameNode:

yum install hadoop-yarn hadoop-mapreduce hadoop-hdfs-zkfc hadoop-hdfs-journalnode impala-lzo* hadoop-hdfs-namenode impala-state-store impala-catalog hive-metastore -y

Note: do this installation last.

Install the following packages on the standby NameNode:

yum install hadoop-yarn hadoop-yarn-resourcemanager hadoop-hdfs-namenode hadoop-hdfs-zkfc hadoop-hdfs-journalnode hadoop-mapreduce hadoop-mapreduce-historyserver -y

Install the following on the DataNode cluster (4 hosts, referred to below as the dn nodes):

yum install zookeeper zookeeper-server hive-hbase hbase-master hbase hbase-regionserver impala impala-server impala-shell impala-lzo* hadoop-hdfs hadoop-hdfs-datanode hive hive-server2 hive-jdbc hadoop-yarn hadoop-yarn-nodemanager -y

III. Service configuration

On the nn (NameNode) node:

cd /etc/hadoop/conf/

vim core-site.xml

<configuration>

<property>

<name>fs.defaultFS</name>

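<value>hdfs://wqkcluster</value>

</property>

<!-- fs.default.name is the deprecated name of fs.defaultFS; with HA both should point at the nameservice (dfs.nameservices), not at a single NameNode host -->

<property>

<name>fs.default.name</name>

<value>hdfs://wqkcluster</value>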

</property>

<property>

<name>ha.zookeeper.quorum</name>

<value>namenode.h.com,datanode01.h.com,datanode02.h.com,datanode03.h.com,datanode04.h.com</value>

</property>

<property>

<name>fs.trash.interval</name>

<value>14400</value>

</property>

<property>

<name>io.file.buffer.size</name>

<value>65536</value>

</property>

<property>

<name>io.compression.codecs</name>

<value>org.apache.hadoop.io.compress.DefaultCodec,org.apache.hadoop.io.compress.GzipCodec,org.apache.hadoop.io.compress.BZip2Codec,com.hadoop.compression.lzo.LzopCodec,org.apache.hadoop.io.compress.SnappyCodec</value>

</property>

<property>

<name>io.compression.codec.lzo.class</name>

<value>com.hadoop.compression.lzo.LzoCodec</value>

</property>

</configuration>
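Every later component reads these files, so it helps to check what a key is actually set to. On a node with the Hadoop client installed, hdfs getconf -confKey fs.defaultFS prints the effective value. For a quick look at one XML file (for example before syncing it), a rough awk sketch like the one below works, assuming each <name> precedes its <value> and the value fits on one line. It does not understand XML comments, so commented-out properties can still match. The function name get_conf_value is hypothetical.

```shell
#!/bin/bash
# Hypothetical helper: print the <value> of property $2 from Hadoop-style XML file $1.
# Rough line-based parser: <name> must precede <value>, value on a single line,
# and XML comments are NOT skipped.
get_conf_value() {
  awk -v key="$2" '
    index($0, "<name>" key "</name>") { found = 1 }
    found && match($0, /<value>[^<]*<\/value>/) {
      # 7 = length("<value>"), 15 = length("<value>") + length("</value>")
      print substr($0, RSTART + 7, RLENGTH - 15)
      exit
    }
  ' "$1"
}
```

Usage: get_conf_value /etc/hadoop/conf/core-site.xml fs.defaultFS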

cat hdfs-site.xml

<?xml version="1.0"?>

<?xml-stylesheet type="text/xsl" href="configuration.xsl"?>

<configuration>

<property>

<name>dfs.namenode.name.dir</name>

<value>file:///data/dfs/nn</value>

</property>

<!-- hadoop-datanode -->

<!--

<property>

<name>dfs.datanode.data.dir</name>

<value>/data1/dfs/dn,/data2/dfs/dn,/data3/dfs/dn,/data4/dfs/dn,/data5/dfs/dn,/data6/dfs/dn,/data7/dfs/dn</value>

</property>

-->

<!-- hadoop HA -->

<property>

<name>dfs.nameservices</name>

<value>wqkcluster</value>

</property>

<property>

<name>dfs.ha.namenodes.wqkcluster</name>

<value>nn1,nn2</value>

</property>

<property>

<name>dfs.namenode.rpc-address.wqkcluster.nn1</name>

<value>namenode.h.com:8020</value>

</property>

<property>

<name>dfs.namenode.rpc-address.wqkcluster.nn2</name>

<value>snamenode.h.com:8020</value>

</property>

<property>

<name>dfs.namenode.http-address.wqkcluster.nn1</name>

<value>namenode.h.com:50070</value>

</property>

<property>

<name>dfs.namenode.http-address.wqkcluster.nn2</name>

<value>snamenode.h.com:50070</value>

</property>

<property>

<name>dfs.namenode.shared.edits.dir</name>

<value>qjournal://namenode.h.com:8485;snamenode.h.com:8485;datanode01.h.com:8485/wqkcluster</value>

</property>

<property>

<name>dfs.journalnode.edits.dir</name>

<value>/data/dfs/jn</value>

</property>

<property>

<name>dfs.client.failover.proxy.provider.wqkcluster</name>

<value>org.apache.hadoop.hdfs.server.namenode.ha.ConfiguredFailoverProxyProvider</value>

</property>

<property>

<name>dfs.ha.fencing.methods</name>

<value>sshfence(hdfs)</value>

</property>

<property>

<name>dfs.ha.fencing.ssh.private-key-files</name>

<value>/var/lib/hadoop-hdfs/.ssh/id_rsa</value>

</property>

<property>

<name>dfs.ha.automatic-failover.enabled</name>

<value>true</value>

</property>

<property>

<name>dfs.https.port</name>

<value>50470</value>

</property>

<property>

<name>dfs.replication</name>

<value>3</value>

</property>

<property>

<name>dfs.block.size</name>

<value>134217728</value>

</property>

<property>

<name>dfs.datanode.max.xcievers</name>

<value>8192</value>

</property>

<property>

<name>fs.permissions.umask-mode</name>

<value>022</value>

</property>

<property>

<name>dfs.permissions.superusergroup</name>

<value>hadoop</value>

</property>

<property>

<name>dfs.client.read.shortcircuit</name>

<value>true</value>

</property>

<property>

<name>dfs.domain.socket.path</name>

<value>/var/run/hadoop-hdfs/dn._PORT</value>

</property>

<property>

<name>dfs.client.file-block-storage-locations.timeout</name>

<value>10000</value>

</property>

<property>

<name>dfs.datanode.hdfs-blocks-metadata.enabled</name>

<value>true</value>

</property>

<property>

<name>dfs.client.domain.socket.data.traffic</name>

<value>false</value>

</property>

</configuration>
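The QJM needs a majority of JournalNodes to accept each edit, so an odd number of at least three is recommended (three JournalNodes tolerate one failure). A small sketch that splits the dfs.namenode.shared.edits.dir URI and checks that rule; the function names are hypothetical.

```shell
#!/bin/bash
# Hypothetical helpers for a URI such as
# qjournal://namenode.h.com:8485;snamenode.h.com:8485;datanode01.h.com:8485/wqkcluster

# Print one host:port per line.
qjournal_hosts() {
  local rest=${1#qjournal://}                    # drop the scheme
  printf '%s\n' "${rest%%/*}" | tr ';' '\n'      # drop "/journalId", split on ';'
}

# Print the journal ID (the part after the last '/').
qjournal_id() {
  printf '%s\n' "${1##*/}"
}

# Succeed only for an odd number of JournalNodes, at least 3.
qjournal_quorum_ok() {
  local n
  n=$(qjournal_hosts "$1" | wc -l | tr -d ' ')
  [ "$n" -ge 3 ] && [ $((n % 2)) -eq 1 ]
}
```

Usage: qjournal_quorum_ok "$(hdfs getconf -confKey dfs.namenode.shared.edits.dir)" && echo "quorum OK"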

cat yarn-site.xml

<?xml version="1.0"?>

<?xml-stylesheet type="text/xsl" href="configuration.xsl"?>

<configuration>

<property>

<name>yarn.resourcemanager.resource-tracker.address</name>

<value>snamenode.h.com:8031</value>

</property>

<property>

<name>yarn.resourcemanager.address</name>

<value>snamenode.h.com:8032</value>

</property>

<property>

<name>yarn.resourcemanager.admin.address</name>

<value>snamenode.h.com:8033</value>

</property>

<property>

<name>yarn.resourcemanager.scheduler.address</name>

<value>snamenode.h.com:8030</value>

</property>

<property>

<name>yarn.resourcemanager.webapp.address</name>

<value>snamenode.h.com:8088</value>

</property>

<property>

<name>yarn.nodemanager.local-dirs</name>

<value>/data1/yarn/local,/data2/yarn/local,/data3/yarn/local,/data4/yarn/local</value>

</property>

<property>

<name>yarn.nodemanager.log-dirs</name>

<value>/data1/yarn/logs,/data2/yarn/logs,/data3/yarn/logs,/data4/yarn/logs</value>

</property>

<property>

<name>yarn.nodemanager.remote-app-log-dir</name>

<value>/yarn/apps</value>

</property>

<property>

<name>yarn.nodemanager.aux-services</name>

<value>mapreduce.shuffle</value>

</property>

<property>

<name>yarn.nodemanager.aux-services.mapreduce.shuffle.class</name>

<value>org.apache.hadoop.mapred.ShuffleHandler</value>

</property>

<property>

<name>yarn.log-aggregation-enable</name>

<value>true</value>

</property>

<property>

<name>yarn.application.classpath</name>

<value>

$HADOOP_CONF_DIR,

$HADOOP_COMMON_HOME/*,

$HADOOP_COMMON_HOME/lib/*,

$HADOOP_HDFS_HOME/*,

$HADOOP_HDFS_HOME/lib/*,

$HADOOP_MAPRED_HOME/*,

$HADOOP_MAPRED_HOME/lib/*,

$YARN_HOME/*,

$YARN_HOME/lib/*</value>

</property>

<!--

<property>

<name>yarn.resourcemanager.scheduler.class</name>

<value>org.apache.hadoop.yarn.server.resourcemanager.scheduler.fifo.FifoScheduler</value>

</property>

-->

<property>

<name>yarn.resourcemanager.max-completed-applications</name>

<value>10000</value>

</property>

</configuration>

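Helper scripts used while configuring the services, to run commands on all nodes and push configuration to the other nodes: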

cat /root/cmd.sh

#!/bin/sh

for ip in 134 139 135 140 141 142;do

echo "==============="$ip"==============="

ssh 192.168.10.$ip "$1"

done

cat /root/syn.sh

#!/bin/sh

for ip in 139 135 140 141 142;do

scp -r "$1" 192.168.10.$ip:"$2"

done
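The same two helpers can also be written as shell functions with the node lists in one place and a DRY_RUN switch that only prints what would run. This is a sketch assuming the 192.168.10.x hosts from section I and that configs are pushed from namenode (134); the names ALL_NODES, PEER_NODES, run_on_all, sync_to_all and DRY_RUN are hypothetical. Pass the remote command in single quotes so backticks and $x are expanded on the remote host, not locally.

```shell
#!/bin/bash
# Hypothetical equivalents of /root/cmd.sh and /root/syn.sh.
ALL_NODES="192.168.10.134 192.168.10.139 192.168.10.135 192.168.10.140 192.168.10.141 192.168.10.142"
# Every node except namenode (134), where configs are edited and pushed from.
PEER_NODES="192.168.10.139 192.168.10.135 192.168.10.140 192.168.10.141 192.168.10.142"

# Run one command string on every node. Set DRY_RUN=1 to only print it.
run_on_all() {
  local node
  for node in $ALL_NODES; do
    echo "=============== $node ==============="
    if [ -n "$DRY_RUN" ]; then
      printf 'ssh %s %s\n' "$node" "$1"
    else
      ssh "$node" "$1"
    fi
  done
}

# Copy a local file or directory ($1) to path $2 on every peer node.
sync_to_all() {
  local node
  for node in $PEER_NODES; do
    if [ -n "$DRY_RUN" ]; then
      printf 'scp -r %s %s:%s\n' "$1" "$node" "$2"
    else
      scp -r "$1" "$node:$2"
    fi
  done
}
```

Usage: DRY_RUN=1 run_on_all 'service zookeeper-server status' shows the exact ssh commands before you run them for real.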

The JournalNodes run on namenode, snamenode and datanode01. Create their directory on these three nodes:

namenode:

mkdir -p /data/dfs/jn ; chown -R hdfs:hdfs /data/dfs/jn

snamenode:

mkdir -p /data/dfs/jn ; chown -R hdfs:hdfs /data/dfs/jn

datanode01:

mkdir -p /data/dfs/jn ; chown -R hdfs:hdfs /data/dfs/jn

Start the three JournalNodes:

sh /root/cmd.sh 'for x in `ls /etc/init.d/|grep hadoop-hdfs-journalnode` ; do service $x start ; done'
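To confirm that the JournalNodes are really listening on their RPC port (8485, as in dfs.namenode.shared.edits.dir), a minimal check using bash's /dev/tcp redirection and coreutils timeout can be run from any node. The function names are hypothetical.

```shell
#!/bin/bash
# Hypothetical helper: succeed if a TCP connection to host $1, port $2
# opens within 3 seconds. Relies on bash's /dev/tcp pseudo-device.
port_open() {
  timeout 3 bash -c ": < /dev/tcp/$1/$2" > /dev/null 2>&1
}

# Report each JournalNode listed in dfs.namenode.shared.edits.dir.
check_journalnodes() {
  local host
  for host in namenode.h.com snamenode.h.com datanode01.h.com; do
    if port_open "$host" 8485; then
      echo "$host:8485 OK"
    else
      echo "$host:8485 NOT reachable"
    fi
  done
}
```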

Format the cluster's HDFS storage (on the primary):

On namenode, create the directory and set its ownership:

mkdir -p /data/dfs/nn ; chown hdfs.hdfs /data/dfs/nn -R

sudo -u hdfs hdfs namenode -format;/etc/init.d/hadoop-hdfs-namenode start
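hdfs namenode -format creates a brand-new namespace, so re-running it on a cluster that already holds data discards the existing metadata. A defensive sketch that only formats when dfs.namenode.name.dir (/data/dfs/nn above) is missing or empty; both function names are hypothetical.

```shell
#!/bin/bash
# Hypothetical guard: succeed only if directory $1 is missing or empty,
# i.e. there is no NameNode metadata that a format would destroy.
nn_dir_is_empty() {
  [ ! -d "$1" ] || [ -z "$(ls -A "$1")" ]
}

# Format only on a fresh directory; otherwise refuse and return 1.
format_if_fresh() {
  local dir=${1:-/data/dfs/nn}
  if nn_dir_is_empty "$dir"; then
    sudo -u hdfs hdfs namenode -format
  else
    echo "$dir is not empty; refusing to format" >&2
    return 1
  fi
}
```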

Steps for snamenode (standby):

mkdir -p /data/dfs/nn ; chown hdfs.hdfs /data/dfs/nn -R

ssh snamenode.h.com 'sudo -u hdfs hdfs namenode -bootstrapStandby ; sudo service hadoop-hdfs-namenode start'

On the dn nodes, create the directories and set their ownership:

hdfs:

mkdir -p /data{1,2}/dfs ; chown hdfs.hdfs /data{1,2}/dfs -R

yarn:

mkdir -p /data{1,2}/yarn; chown yarn.yarn /data{1,2}/yarn -R
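yarn.nodemanager.local-dirs and yarn.nodemanager.log-dirs in yarn-site.xml list /data1 through /data4, while the commands above only create /data1 and /data2. A sketch that creates (and optionally chowns) every entry of a comma-separated list, so the directories always match whatever the config says; the function name is hypothetical and the paths must not contain glob characters.

```shell
#!/bin/bash
# Hypothetical helper: mkdir -p every entry of comma-separated list $1 and,
# if owner $2 is given (e.g. yarn:yarn), chown it recursively.
make_dirs_from_list() {
  local IFS=, dir        # split $1 on commas only; IFS is restored on return
  for dir in $1; do
    mkdir -p "$dir" || return 1
    if [ -n "$2" ]; then
      chown -R "$2" "$dir" || return 1
    fi
  done
}
```

Usage: make_dirs_from_list /data1/yarn/local,/data2/yarn/local,/data3/yarn/local,/data4/yarn/local yarn:yarn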

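Set up passwordless SSH login for the hdfs user between namenode and snamenode (needed by the sshfence fencing method in hdfs-site.xml):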

namenode:

#passwd hdfs

#su - hdfs

$ ssh-keygen

$ ssh-copy-id snamenode.h.com

snamenode:

#passwd hdfs

#su - hdfs

$ ssh-keygen

$ ssh-copy-id namenode.h.com
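OpenSSH ignores a private key file that grants any permission to group or others, and dfs.ha.fencing.ssh.private-key-files points at /var/lib/hadoop-hdfs/.ssh/id_rsa. A quick sketch to verify the key exists with safe permissions; it assumes GNU stat (as on CentOS), and the function name is hypothetical.

```shell
#!/bin/bash
# Hypothetical check: succeed if private key $1 exists and its mode has no
# group/other bits set (mode & 077 == 0), which OpenSSH requires.
key_perms_ok() {
  local mode
  [ -f "$1" ] || return 1
  mode=$(stat -c %a "$1") || return 1   # GNU stat: octal mode such as 600
  [ $(( 0$mode & 077 )) -eq 0 ]
}
```

Usage: key_perms_ok /var/lib/hadoop-hdfs/.ssh/id_rsa || chmod 600 /var/lib/hadoop-hdfs/.ssh/id_rsa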

Install hadoop-hdfs-zkfc on both NameNodes:

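yum install hadoop-hdfs-zkfc

Initialize the HA state in ZooKeeper. Run this once, on one NameNode only; the ZooKeeper ensemble (set up later in this post) must already be running:

hdfs zkfc -formatZK

Then start ZKFC on both NameNodes:

service hadoop-hdfs-zkfc start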

Test a manual failover:

sudo -u hdfs hdfs haadmin -failover nn1 nn2

Check the state of each NameNode:

sudo -u hdfs hdfs haadmin -getServiceState nn2

sudo -u hdfs hdfs haadmin -getServiceState nn1
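Scripts often need to know which NameNode is currently active. A sketch that asks hdfs haadmin -getServiceState for each ID in dfs.ha.namenodes.wqkcluster (nn1, nn2) and prints the active one; the haadmin wrapper and find_active_nn are hypothetical names, and the wrapper exists so the logic can be exercised without a cluster.

```shell
#!/bin/bash
# Wrapper around the real CLI; redefine it to test without a cluster.
haadmin() {
  sudo -u hdfs hdfs haadmin "$@"
}

# Print the ID of the active NameNode; return 1 if neither reports "active".
find_active_nn() {
  local nn
  for nn in nn1 nn2; do
    if [ "$(haadmin -getServiceState "$nn" 2>/dev/null)" = "active" ]; then
      echo "$nn"
      return 0
    fi
  done
  return 1
}
```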

Configure and start YARN

Create the directories in HDFS:

sudo -u hdfs hadoop fs -mkdir -p /yarn/apps

sudo -u hdfs hadoop fs -chown yarn:mapred /yarn/apps

sudo -u hdfs hadoop fs -chmod -R 1777 /yarn/apps

sudo -u hdfs hadoop fs -mkdir /user

sudo -u hdfs hadoop fs -chmod 777 /user

sudo -u hdfs hadoop fs -mkdir -p /user/history

sudo -u hdfs hadoop fs -chmod -R 1777 /user/history

sudo -u hdfs hadoop fs -chown mapred:hadoop /user/history

Start the MapReduce JobHistory Server (installed on snamenode):

sh /root/cmd.sh ' for x in `ls /etc/init.d/|grep hadoop-mapreduce-historyserver` ; do service $x start ; done'

Create a home directory for each MapReduce user, for example the hive user or the current user:

sudo -u hdfs hadoop fs -mkdir /user/$USER

sudo -u hdfs hadoop fs -chown $USER /user/$USER

Start YARN on every node:

sh /root/cmd.sh ' for x in `ls /etc/init.d/|grep hadoop-yarn` ; do service $x start ; done'

Check that YARN started successfully:

sh /root/cmd.sh ' for x in `ls /etc/init.d/|grep hadoop-yarn` ; do service $x status ; done'

Test YARN:

sudo -u hdfs hadoop jar /usr/lib/hadoop-mapreduce/hadoop-mapreduce-examples.jar randomwriter out

Install Hive (on namenode)

sh /root/cmd.sh 'yum install hive hive-hbase hive-server hive-server2 hive-jdbc -y'

These packages may already have been installed in the steps above; check before installing.

Download the MySQL JDBC driver jar and create a symbolic link to it:

ln -s /usr/share/java/mysql-connector-java-5.1.25-bin.jar /usr/lib/hive/lib/mysql-connector-java.jar

Create the metastore database and user:

mysql -e "

CREATE DATABASE metastore;

USE metastore;

SOURCE /usr/lib/hive/scripts/metastore/upgrade/mysql/hive-schema-0.10.0.mysql.sql;

CREATE USER 'hiveuser'@'%' IDENTIFIED BY 'redhat';

CREATE USER 'hiveuser'@'localhost' IDENTIFIED BY 'redhat';

CREATE USER 'hiveuser'@'namenode.h.com' IDENTIFIED BY 'redhat';

REVOKE ALL PRIVILEGES, GRANT OPTION FROM 'hiveuser'@'%';

REVOKE ALL PRIVILEGES, GRANT OPTION FROM 'hiveuser'@'localhost';

REVOKE ALL PRIVILEGES, GRANT OPTION FROM 'hiveuser'@'namenode.h.com';

GRANT SELECT,INSERT,UPDATE,DELETE,LOCK TABLES,EXECUTE ON metastore.* TO 'hiveuser'@'%';

GRANT SELECT,INSERT,UPDATE,DELETE,LOCK TABLES,EXECUTE ON metastore.* TO 'hiveuser'@'localhost';

GRANT SELECT,INSERT,UPDATE,DELETE,LOCK TABLES,EXECUTE ON metastore.* TO 'hiveuser'@'namenode.h.com';

FLUSH PRIVILEGES;

"

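The CREATE USER / REVOKE / GRANT statements above repeat once per client host. A sketch that generates them from a host list, so adding a host is one more argument; the function name is hypothetical, and the privileges are copied from the statements above. It only prints SQL, which you can review and then pipe into mysql.

```shell
#!/bin/bash
# Hypothetical generator: print the metastore user statements for each host.
# $1 = user, $2 = password, remaining args = MySQL host patterns.
gen_metastore_grants() {
  local user=$1 pass=$2 host
  shift 2
  for host in "$@"; do
    printf "CREATE USER '%s'@'%s' IDENTIFIED BY '%s';\n" "$user" "$host" "$pass"
    printf "REVOKE ALL PRIVILEGES, GRANT OPTION FROM '%s'@'%s';\n" "$user" "$host"
    printf "GRANT SELECT,INSERT,UPDATE,DELETE,LOCK TABLES,EXECUTE ON metastore.* TO '%s'@'%s';\n" "$user" "$host"
  done
  echo "FLUSH PRIVILEGES;"
}
```

Usage: gen_metastore_grants hiveuser redhat '%' localhost namenode.h.com | mysql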
Edit the Hive configuration file:

cat /etc/hive/conf/hive-site.xml

<?xml version="1.0"?>

<?xml-stylesheet type="text/xsl" href="configuration.xsl"?>

<configuration>

<property>

<name>javax.jdo.option.ConnectionURL</name>

<value>jdbc:mysql://namenode.h.com:3306/metastore?useUnicode=true&amp;characterEncoding=UTF-8</value>

</property>

<property>

<name>javax.jdo.option.ConnectionDriverName</name>

<value>com.mysql.jdbc.Driver</value>

</property>

<property>

<name>javax.jdo.option.ConnectionUserName</name>

<value>hiveuser</value>

</property>

<property>

<name>javax.jdo.option.ConnectionPassword</name>

<value>redhat</value>

</property>

<property>

<name>datanucleus.autoCreateSchema</name>

<value>false</value>

</property>

<property>

<name>mapreduce.framework.name</name>

<value>yarn</value>

</property>

<property>

<name>hive.metastore.local</name>

<value>false</value>

</property>

<property>

<name>hive.files.umask.value</name>

<value>0002</value>

</property>

<property>

<name>hive.metastore.uris</name>

<value>thrift://namenode.h.com:9083</value>

</property>

<property>

<name>yarn.resourcemanager.resource-tracker.address</name>

<value>snamenode.h.com:8031</value>

</property>

<property>

<name>hive.metastore.warehouse.dir</name>

<value>/user/hive/warehouse</value>

</property>

<property>

<name>hive.metastore.cache.pinobjtypes</name>

<value>Table,Database,Type,FieldSchema,Order</value>

</property>

</configuration>
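A configuration file that is not well-formed XML will fail to parse, and a common culprit is a raw & in a JDBC URL, which must be written as &amp;. If libxml2's xmllint is installed, xmllint --noout FILE checks the whole file. Without it, a narrow sed/grep sketch can at least flag bare ampersands; the function name is hypothetical.

```shell
#!/bin/bash
# Hypothetical check: print "line:text" for each line of XML file $1 containing
# an & that does not start a predefined or numeric entity. Returns 0 if any found.
find_bare_ampersands() {
  # Delete valid entity references first; any & left over is a bare one.
  sed -E 's/&(amp|lt|gt|quot|apos|#[0-9]+|#x[0-9a-fA-F]+);//g' "$1" | grep -n '&'
}
```

Usage: find_bare_ampersands /etc/hive/conf/hive-site.xml && echo "fix the lines above"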

Create the directories and set permissions:

sudo -u hdfs hadoop fs -mkdir /user/hive

sudo -u hdfs hadoop fs -chown hive /user/hive

sudo -u hdfs hadoop fs -mkdir /user/hive/warehouse

sudo -u hdfs hadoop fs -chmod 1777 /user/hive/warehouse

sudo -u hdfs hadoop fs -chown hive /user/hive/warehouse

Start the metastore:

service hive-metastore start

Install ZooKeeper (on namenode and the four dn nodes):

sh /root/cmd.sh 'yum install zookeeper* -y'

Edit zoo.cfg and add the following lines:

server.1=namenode.h.com:2888:3888

server.2=datanode01.h.com:2888:3888

server.3=datanode02.h.com:2888:3888

server.4=datanode03.h.com:2888:3888

server.5=datanode04.h.com:2888:3888
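The server.N lines follow directly from an ordered host list, and the same N is later passed to zookeeper-server init --myid. A sketch that prints them (2888 is the follower-to-leader port, 3888 the leader-election port); the function name is hypothetical. Since the myid check later reads /data/zookeeper/myid, this assumes zoo.cfg also sets dataDir=/data/zookeeper.

```shell
#!/bin/bash
# Hypothetical generator: print "server.N=host:2888:3888" for each argument,
# numbering from 1 in the order given.
gen_zoo_servers() {
  local n=1 host
  for host in "$@"; do
    printf 'server.%d=%s:2888:3888\n' "$n" "$host"
    n=$((n + 1))
  done
}
```

Usage: gen_zoo_servers namenode.h.com datanode01.h.com datanode02.h.com datanode03.h.com datanode04.h.com >> /etc/zookeeper/conf/zoo.cfg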

Sync the configuration to the other nodes:

sh /root/syn.sh /etc/zookeeper/conf /etc/zookeeper/

Initialize and start ZooKeeper on each node. Note that the --myid value n must match the server.n number for that host in zoo.cfg.

sh /root/cmd.sh 'mkdir -p /data/zookeeper; chown -R zookeeper:zookeeper /data/zookeeper ; rm -rf /data/zookeeper/*'

ssh 192.168.10.134 'service zookeeper-server init --myid=1'

ssh 192.168.10.135 'service zookeeper-server init --myid=2'

ssh 192.168.10.140 'service zookeeper-server init --myid=3'

ssh 192.168.10.141 'service zookeeper-server init --myid=4'

ssh 192.168.10.142 'service zookeeper-server init --myid=5'
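Hard-coding the myid per IP is easy to get wrong. A sketch that looks up a host's number in zoo.cfg itself, so the ID always matches its server.N line; the function name is hypothetical.

```shell
#!/bin/bash
# Hypothetical lookup: print N from the "server.N=HOST:..." line of zoo.cfg ($1)
# whose host part equals $2; print nothing if the host is not listed.
myid_for_host() {
  awk -F= -v h="$2" '
    $1 ~ /^server\.[0-9]+$/ {
      split($2, addr, ":")          # addr[1] = hostname
      if (addr[1] == h) {
        sub(/^server\./, "", $1)    # keep only N
        print $1
        exit
      }
    }
  ' "$1"
}
```

Usage (the $(...) runs locally on namenode, where zoo.cfg is edited): ssh 192.168.10.140 "service zookeeper-server init --myid=$(myid_for_host /etc/zookeeper/conf/zoo.cfg datanode02.h.com)"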

Check that initialization succeeded:

sh /root/cmd.sh 'cat /data/zookeeper/myid'

Start ZooKeeper:

sh /root/cmd.sh 'service zookeeper-server start'

Test that it started with the following command:

zookeeper-client -server namenode.h.com:2181

Install HBase (on the four dn nodes)

Set up clock synchronization:

sh /root/cmd.sh 'yum install ntpdate -y; ntpdate pool.ntp.org'

sh /root/cmd.sh ' ntpdate pool.ntp.org'

Set up a crontab entry:

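sh /root/cmd.sh 'echo "0 3 * * * /usr/sbin/ntpdate pool.ntp.org" >> /var/spool/cron/root'

This runs ntpdate once a day at 03:00. The full path is used because cron jobs run with a minimal PATH that does not include /usr/sbin, and >> keeps any existing root crontab entries.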
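HBase RegionServers refuse to start when their clock differs too much from the Master's, so it is worth checking the spread after ntpdate runs. A sketch that reads one epoch timestamp per line (for example from sh /root/cmd.sh 'date +%s', whose banner lines are ignored) and prints the largest minus the smallest. Because the timestamps are collected one ssh call at a time, the result also includes that delay, so treat it as a rough figure. The function name is hypothetical.

```shell
#!/bin/bash
# Hypothetical helper: read lines on stdin, keep those that are plain integers
# (epoch seconds), and print max - min. Other lines (e.g. banners) are ignored.
max_skew() {
  awk '/^[0-9]+$/ {
         if (n == 0 || $1 < min) min = $1
         if (n == 0 || $1 > max) max = $1
         n++
       }
       END { if (n) print max - min }'
}
```

Usage: sh /root/cmd.sh 'date +%s' | max_skew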

Install HBase on the four DataNodes:

Note: this was already done by the yum command above.

Create the /hbase directory in HDFS:

sudo -u hdfs hadoop fs -mkdir /hbase;sudo -u hdfs hadoop fs -chown hbase:hbase /hbase

Edit the HBase configuration file:

cat /etc/hbase/conf/hbase-site.xml

<?xml version="1.0"?>

<?xml-stylesheet type="text/xsl" href="configuration.xsl"?>

<configuration>

<property>

<name>hbase.rootdir</name>

<value>hdfs://wqkcluster/hbase</value>

</property>

<property>

<name>dfs.support.append</name>

<value>true</value>

</property>

<property>

<name>hbase.cluster.distributed</name>

<value>true</value>

</property>

<property>

<name>hbase.hregion.max.filesize</name>

<value>3758096384</value>

</property>

<property>

<name>hbase.hregion.memstore.flush.size</name>

<value>67108864</value>

</property>

<property>

<name>hbase.security.authentication</name>

<value>simple</value>

</property>

<property>

<name>zookeeper.session.timeout</name>

<value>180000</value>

</property>

<property>

<name>hbase.zookeeper.quorum</name>

<value>datanode01.h.com,datanode02.h.com,datanode03.h.com,datanode04.h.com,namenode.h.com</value>

</property>

<property>

<name>hbase.zookeeper.property.clientPort</name>

<value>2181</value>

</property>

<property>

<name>hbase.hregion.memstore.mslab.enabled</name>

<value>true</value>

</property>

<property>

<name>hbase.regions.slop</name>

<value>0</value>

</property>

<property>

<name>hbase.regionserver.handler.count</name>

<value>20</value>

</property>

<property>

<name>hbase.regionserver.lease.period</name>

<value>600000</value>

</property>

<property>

<name>hbase.client.pause</name>

<value>20</value>

</property>

<property>

<name>hbase.ipc.client.tcpnodelay</name>

<value>true</value>

</property>

<property>

<name>ipc.ping.interval</name>

<value>3000</value>

</property>

<property>

<name>hbase.client.retries.number</name>

<value>4</value>

</property>

<property>

<name>hbase.rpc.timeout</name>

<value>60000</value>

</property>

<property>

<name>hbase.zookeeper.property.maxClientCnxns</name>

<value>2000</value>

</property>

</configuration>
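Several values here (and dfs.block.size in hdfs-site.xml) are raw byte counts. A small worked conversion with shell integer arithmetic makes them easier to read; the function name to_mib is hypothetical.

```shell
#!/bin/bash
# Convert a byte count to MiB (1 MiB = 1024 * 1024 bytes) with integer arithmetic.
to_mib() {
  echo $(( $1 / 1024 / 1024 ))
}

to_mib 3758096384   # hbase.hregion.max.filesize         -> 3584 MiB (3.5 GiB)
to_mib 67108864     # hbase.hregion.memstore.flush.size  -> 64 MiB
to_mib 134217728    # dfs.block.size                     -> 128 MiB
```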

Sync it to the four dn nodes:

sh /root/syn.sh /etc/hbase/conf /etc/hbase/

Create the local directory:

sh /root/cmd.sh 'mkdir /data/hbase ; chown -R hbase:hbase /data/hbase/'

Start HBase:

sh /root/cmd.sh ' for x in `ls /etc/init.d/|grep hbase` ; do service $x start ; done'

Check that it started successfully:

sh /root/cmd.sh ' for x in `ls /etc/init.d/|grep hbase` ; do service $x status ; done'

Install Impala (on namenode and the four dn nodes)

On namenode, install impala-state-store and impala-catalog.

For the installation steps, see above.

On the four dn nodes, install impala, impala-server, impala-shell and impala-udf-devel:

For the installation steps, see above.

Copy the MySQL JDBC jar into the Impala directory and distribute it to the four dn nodes:

sh /root/syn.sh /usr/lib/hive/lib/mysql-connector-java.jar /usr/lib/impala/lib/

Create /var/run/hadoop-hdfs on every node:

sh /root/cmd.sh 'mkdir -p /var/run/hadoop-hdfs'

Copy the Hive and HDFS configuration files into the Impala conf directory and distribute them to the four dn nodes.

cp /etc/hive/conf/hive-site.xml /etc/impala/conf/

cp /etc/hadoop/conf/hdfs-site.xml /etc/impala/conf/

cp /etc/hadoop/conf/core-site.xml /etc/impala/conf/

sh /root/syn.sh /etc/impala/conf /etc/impala/

Edit /etc/default/impala, then sync it to the Impala nodes:

IMPALA_CATALOG_SERVICE_HOST=namenode.h.com

IMPALA_STATE_STORE_HOST=namenode.h.com

IMPALA_STATE_STORE_PORT=24000

IMPALA_BACKEND_PORT=22000

IMPALA_LOG_DIR=/var/log/impala

sh /root/syn.sh /etc/default/impala /etc/default/
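Editing /etc/default/impala by hand invites typos. A sketch that sets KEY=VALUE in such a file, replacing existing KEY= lines or appending a new one; it assumes GNU sed (-i) and a VALUE without | or & characters (both are special in the sed expression). The function name is hypothetical.

```shell
#!/bin/bash
# Hypothetical helper: set KEY=VALUE in a shell-style defaults file.
# $1 = file, $2 = key, $3 = value. Replaces every line starting with KEY=,
# otherwise appends one. VALUE must not contain '|' or '&'.
set_default() {
  local file=$1 key=$2 value=$3
  if grep -q "^${key}=" "$file"; then
    sed -i "s|^${key}=.*|${key}=${value}|" "$file"
  else
    printf '%s=%s\n' "$key" "$value" >> "$file"
  fi
}
```

Usage: set_default /etc/default/impala IMPALA_STATE_STORE_HOST namenode.h.com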

Start Impala:

sh /root/cmd.sh ' for x in `ls /etc/init.d/|grep impala` ; do service $x start ; done'

Check that it started successfully:

sh /root/cmd.sh ' for x in `ls /etc/init.d/|grep impala` ; do service $x status ; done'

IV. Testing

HDFS service status:

sudo -u hdfs hadoop dfsadmin -report

HDFS file upload and download:

sudo -u hdfs hadoop fs -put test.txt /tmp/

sudo -u hdfs hadoop fs -get /tmp/test.txt test-copy.txt

MapReduce job test:

sudo -u hdfs hadoop fs -mkdir -p /test/input

sudo -u hdfs hadoop fs -put test.txt /test/input/

sudo -u hdfs hadoop jar /usr/lib/hadoop-mapreduce/hadoop-mapreduce-examples.jar wordcount /test/input /test/output
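To check the wordcount result, the same counts can be computed locally and compared with the job output. A sketch using awk, assuming the input is small enough for one machine and the job ran with a single reducer (one part-r-00000 file). WordCount emits word<TAB>count sorted by key bytes, which LC_ALL=C sort approximates; awk splits on spaces and tabs, close to but not identical to the example's tokenizer. The function name is hypothetical.

```shell
#!/bin/bash
# Hypothetical reference implementation: print "word<TAB>count" for the words
# in the given local files, sorted byte-wise like the job's Text keys.
local_wordcount() {
  awk '{ for (i = 1; i <= NF; i++) count[$i]++ }
       END { for (w in count) printf "%s\t%d\n", w, count[w] }' "$@" |
    LC_ALL=C sort
}
```

Usage: diff <(local_wordcount test.txt) <(sudo -u hdfs hadoop fs -cat /test/output/part-r-00000) && echo "wordcount output matches"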

Test YARN:

sudo -u hdfs hadoop jar /usr/lib/hadoop-mapreduce/hadoop-mapreduce-examples.jar randomwriter out

NameNode failover test

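Perform a manual failover (hdfs haadmin -failover nn1 nn2 moves the active role from nn1 to nn2):

sudo -u hdfs hdfs haadmin -failover nn1 nn2

Then check the state of each NameNode; one should be active and the other standby. Sample output: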

[root@snamenode ~]# sudo -u hdfs hdfs haadmin -getServiceState nn1

active

[root@snamenode ~]# sudo -u hdfs hdfs haadmin -getServiceState nn2

standby
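The steps above exercise a manual failover. Automatic failover (dfs.ha.automatic-failover.enabled=true) can be tested by stopping the active NameNode, for example with service hadoop-hdfs-namenode stop on that host, and watching ZKFC promote the other one. A polling sketch for the second half; the haadmin wrapper and wait_for_active are hypothetical names, and the wrapper can be swapped out for testing.

```shell
#!/bin/bash
# Wrapper around the real CLI; redefine it to test without a cluster.
haadmin() {
  sudo -u hdfs hdfs haadmin "$@"
}

# Poll NameNode ID $1 once per second until it reports "active",
# giving up (return 1) after $2 seconds.
wait_for_active() {
  local nn=$1 limit=$2 waited=0
  while :; do
    if [ "$(haadmin -getServiceState "$nn" 2>/dev/null)" = "active" ]; then
      echo "$nn became active after ${waited}s"
      return 0
    fi
    [ "$waited" -ge "$limit" ] && return 1
    sleep 1
    waited=$((waited + 1))
  done
}
```

Usage: with nn1 active, stop hadoop-hdfs-namenode on namenode.h.com, then run wait_for_active nn2 60 on snamenode.h.com.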