RAID配置与管理详解

大纲

一、RAID概念

二、RAID级别

三、mdadm各模式介绍

四、软件RAID实现

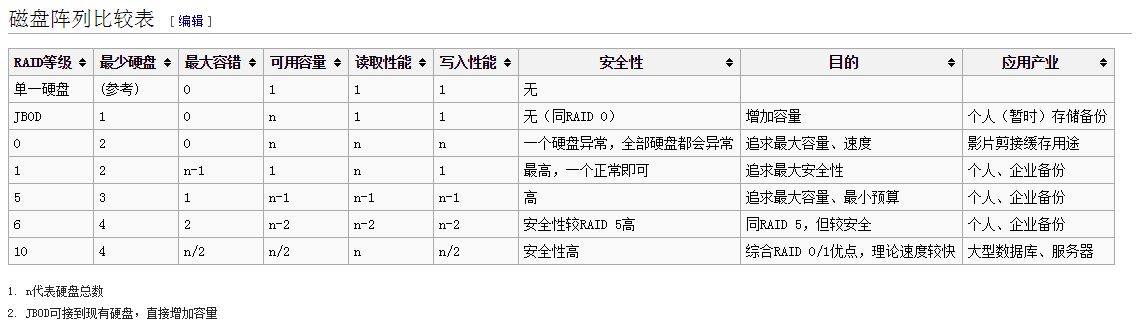

五、各RAID级别对比

一、RAID概念

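In 1987, Patterson, Gibson, and Katz, three researchers at the University of California, Berkeley, wrote a paper titled "A Case for Redundant Arrays of Inexpensive Disks (RAID)". Its basic idea was to combine several small, relatively cheap disk drives into one array whose performance exceeds that of a single large, expensive disk (SLED, Single Large Expensive Disk). Later practice showed, however, that an array built to production standards was no longer much cheaper than the SLED drives of the time. RAID was therefore redefined, with "Inexpensive" replaced by "Independent", so the full name today is Redundant Array of Independent Disks.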

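RAID categories

Hardware RAID: implemented by a RAID card installed on the motherboard together with its driver; under Linux the array appears as /dev/sd#.

Software RAID: implemented in software by the kernel's MD (Multiple Devices) module; under Linux the array appears as /dev/md#. It generally performs worse than hardware RAID, so in production it is best avoided unless there is no other option.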

II. RAID Levels

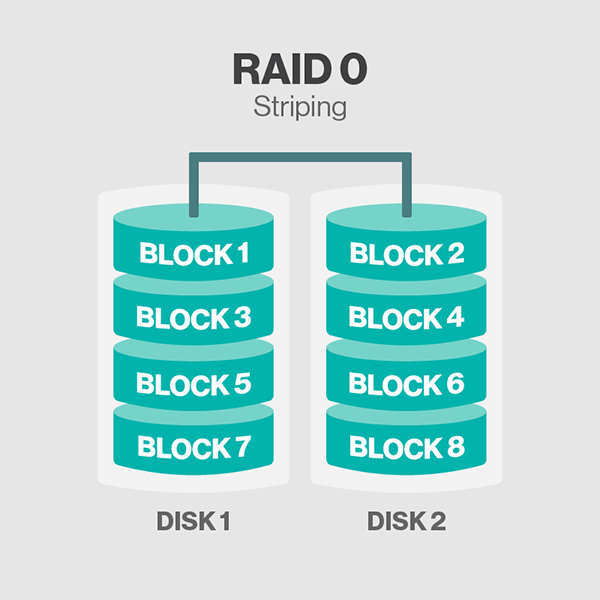

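1. RAID 0: striping. Data is split into consecutive fixed-size chunks that are read and written in parallel across several disks, which gives a very high transfer rate. There is no redundancy, however, so strictly speaking it is not a true RAID structure. RAID 0 only improves performance and does nothing for reliability: the failure of any one disk affects all of the data. RAID 0 is therefore unsuitable where data safety matters.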
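A minimal sketch of the striping idea, assuming equally sized members and plain round-robin chunk placement (real implementations add details such as metadata offsets). The function name raid0_locate is made up for this illustration.

```shell
# Map a logical chunk number to (member disk, chunk offset on that disk)
# for a simple striped layout. Hypothetical helper, not part of any tool.
# Arguments: $1 = logical chunk index (0-based), $2 = number of member disks.
raid0_locate() {
    chunk=$1
    disks=$2
    echo "disk=$(( chunk % disks )) offset=$(( chunk / disks ))"
}

# With 2 disks, consecutive chunks alternate between the members.
for i in 0 1 2 3; do
    raid0_locate "$i" 2
done
```

With two disks, consecutive chunks land on alternating members, which is why a large sequential transfer can keep both disks busy at once.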

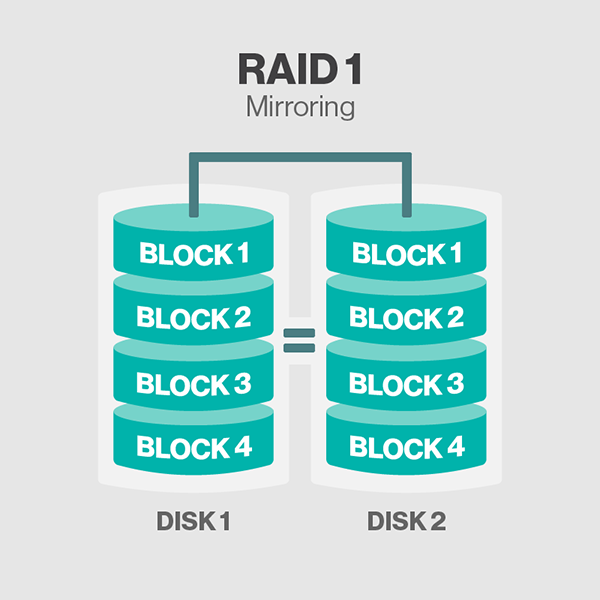

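2. RAID 1: mirroring. Redundancy comes from mirroring data onto paired independent disks so that each holds a copy of the other. When the original copy is busy, data can be read directly from the mirror, so RAID 1 can improve read performance. RAID 1 has the highest cost per unit of capacity of any RAID level, but it offers very high data safety and availability. When one disk fails, the system switches to the mirror disk automatically for reads and writes, with no need to reconstruct the lost data.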

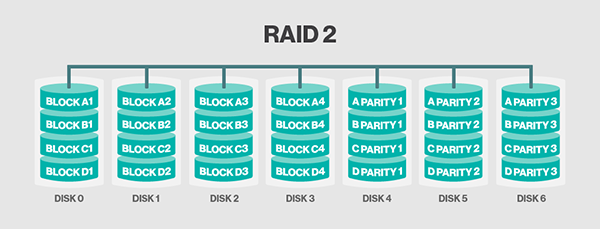

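3. RAID 2: data is striped across the disks in units of bits or bytes, and a Hamming error-correcting code provides error detection and recovery. The code needs several disks to hold the check and recovery information, which makes RAID 2 more complex to implement, so it is rarely used commercially.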

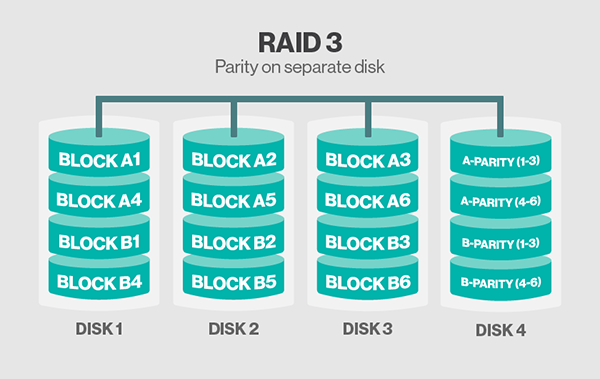

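4. RAID 3: very similar to RAID 2 in that data is striped across the disks, but RAID 3 uses simple parity and stores it on a single dedicated disk. If a data disk fails, the parity disk and the remaining data disks can regenerate its contents; if the parity disk fails, the data remains usable. RAID 3 gives good transfer rates for large sequential data, but for random access the parity disk becomes the write bottleneck.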

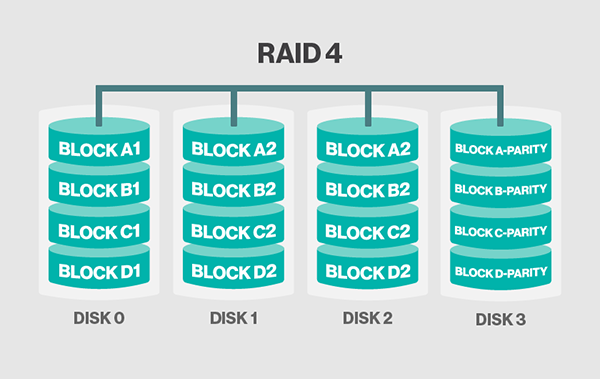

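5. RAID 4: also stripes data across the disks, but in units of blocks or records. RAID 4 uses one disk as a dedicated parity disk; every write must access it, so the parity disk becomes the write bottleneck, and RAID 4 is likewise rarely used commercially.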

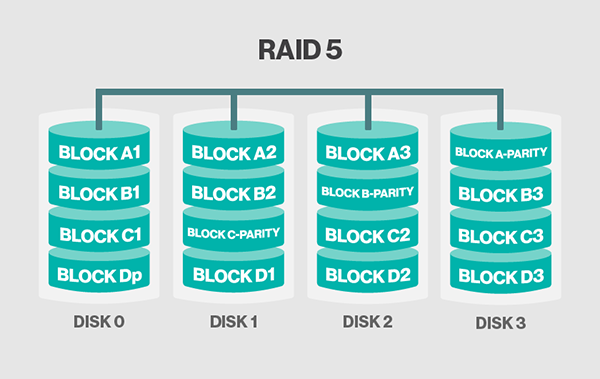

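6. RAID 5: requires at least three disks. Data is again spread across several disks, but parity is stored alongside it and rotates across all member disks instead of living on one dedicated disk. Read and write performance both improve, and the array has redundancy: it survives the loss of one disk.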
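The parity idea behind RAID 3, 4, and 5 can be sketched with XOR on small integers. The values below are arbitrary stand-ins for data chunks, and raid5_parity_disk is a hypothetical helper that assumes the parity position starts on the last disk and moves down by one each stripe, which is how the left-symmetric layout reported by mdadm later in this article is commonly described.

```shell
# XOR parity over two data values (illustrative numbers, not real disk data).
d0=165   # 0xA5, stands in for the chunk on disk 0
d1=60    # 0x3C, stands in for the chunk on disk 1
parity=$(( d0 ^ d1 ))

# Suppose disk 0 fails: its content is the XOR of the survivors.
rebuilt_d0=$(( parity ^ d1 ))
echo "parity=$parity rebuilt_d0=$rebuilt_d0"   # prints: parity=153 rebuilt_d0=165

# Rotating parity (assumed layout): which member holds parity for a stripe.
# Arguments: $1 = stripe index (0-based), $2 = number of member disks.
raid5_parity_disk() {
    echo $(( ($2 - 1) - ($1 % $2) ))
}

for s in 0 1 2 3; do
    echo "stripe $s -> parity on disk $(raid5_parity_disk "$s" 3)"
done
```

Because the parity chunk moves from stripe to stripe, no single disk has to absorb every parity write, which is the bottleneck described for RAID 3 and RAID 4.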

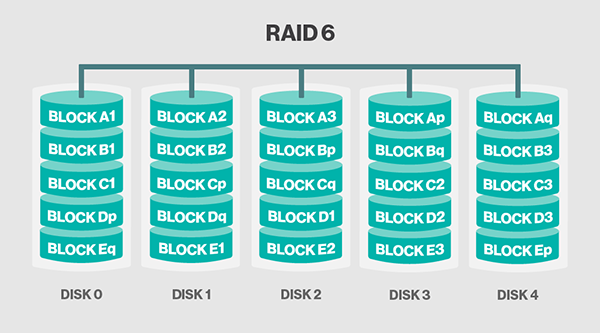

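7. RAID 6: compared with RAID 5, RAID 6 adds a second, independent parity block. The two parity systems use different algorithms, so reliability is very high: the data stays usable even if two disks fail at the same time. RAID 6 gives up more disk space to parity, however, and pays a larger write penalty than RAID 5, so its write performance is poor. The lower write performance and more complex implementation have historically limited its adoption.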

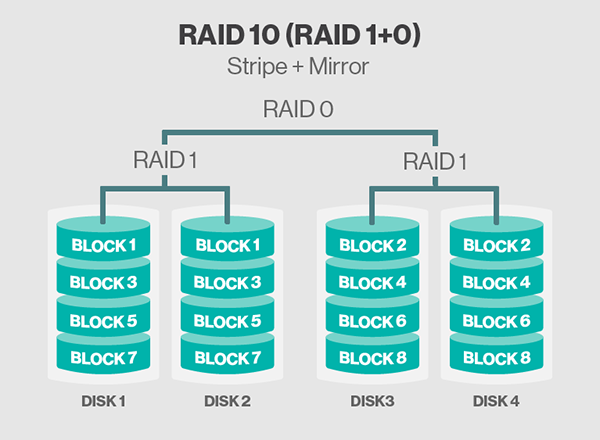

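8. RAID 1+0: RAID 10 combines RAID 0 and RAID 1. It stripes data across mirrored pairs (no parity is involved), so it inherits the speed of RAID 0 and the safety of RAID 1. Here RAID 1 supplies the redundant copies and RAID 0 handles the striped reads and writes. Data from the main path is first split into two paths by striping, and each of those paths is then split in two again by mirroring, so that the two disks in each pair hold identical copies.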

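9. RAID 0+1: RAID 01 is similar to RAID 10, except that RAID 0 and RAID 1 are combined in the opposite order. Data from the main path is first split into two paths by mirroring, and each of those paths is then split in two again by striping.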
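As a rough comparison of the levels above, the sketch below computes how many disks' worth of space remains usable. It assumes equally sized disks and two-way mirrors for RAID 10; raid_usable_disks is a name invented for this example.

```shell
# Print the number of disks' worth of usable capacity.
# Arguments: $1 = RAID level (0, 1, 5, 6 or 10), $2 = number of member disks.
# Assumes equal-size members and two-way mirroring for RAID 10.
raid_usable_disks() {
    level=$1
    n=$2
    case "$level" in
        0)  echo "$n" ;;              # no redundancy, all space usable
        1)  echo 1 ;;                 # every member holds the same data
        5)  echo $(( n - 1 )) ;;      # one disk's worth of parity
        6)  echo $(( n - 2 )) ;;      # two disks' worth of parity
        10) echo $(( n / 2 )) ;;      # half the disks are mirror copies
        *)  echo "unsupported level: $level" >&2; return 1 ;;
    esac
}

for level in 0 1 5 6 10; do
    echo "RAID $level with 4 disks: $(raid_usable_disks "$level" 4) disk(s) usable"
done
```

For instance, a three-disk RAID 5 built from 2 GiB members yields about 4 GiB, in line with the Array Size that mdadm reports in the RAID 5 walkthrough.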

III. mdadm Modes

mdadm - manage MD devices aka Linux Software RAID # the tool for managing software RAID

SYNOPSIS

mdadm [mode] <raiddevice> [options] <component-devices>

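Create mode:

-C: --create, create a new array, e.g. mdadm -C /dev/md0 -l 0 -n 2 -a yes /dev/sdb{1,2}

Mode-specific options:

-l #: RAID level

-n #: number of active devices

-a {yes|no}: whether to create the device file automatically

-c #: chunk size, a power of two (2^n); recent mdadm releases, including the 3.3.2 used in this article, default to 512K (older releases defaulted to 64K)

-x #: number of spare devices; the -x spare count plus the -n device count must equal the number of devices listed after them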
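The rule for -n and -x can be checked before running anything. The sketch below only prints the mdadm command it would run and never touches a device; mdadm_create_cmd is a hypothetical helper, not part of mdadm.

```shell
# Print (do not run) an mdadm create command after checking that the
# number of listed devices equals active devices (-n) plus spares (-x).
# Usage: mdadm_create_cmd ARRAY LEVEL ACTIVE SPARES DEVICE...
mdadm_create_cmd() {
    array=$1; level=$2; active=$3; spares=$4
    shift 4
    if [ "$#" -ne $(( active + spares )) ]; then
        echo "expected $(( active + spares )) devices, got $#" >&2
        return 1
    fi
    echo "mdadm -C $array -a yes -l $level -n $active -x $spares $*"
}

# Three active members plus one spare requires exactly four devices.
mdadm_create_cmd /dev/md5 5 3 1 /dev/sdb1 /dev/sdb2 /dev/sdb3 /dev/sdb4
```

Printing the command first is a deliberate choice here: creating an array overwrites the member devices, so a dry run is a cheap way to catch a miscounted device list.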

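Manage mode

-f: --fail, mark a device as faulty to simulate a disk failure, e.g. mdadm /dev/md# -f /dev/sda7

-r: --remove, remove a device that has been marked faulty, e.g. mdadm /dev/md# -r /dev/sda7

-a: --add, add a device to the array, e.g. mdadm /dev/md# -a /dev/sda7

-S: --stop, stop an array, e.g. mdadm -S /dev/md#

Monitor mode

-F: --monitor, select monitor mode

-D: --detail, show detailed information about the given array; for example, mdadm -Ds >> /etc/mdadm.conf saves the configuration to the config file

Grow mode

-G: --grow, change the size or shape of an active array

Assemble mode

-A: --assemble, assemble a previously created array, e.g. mdadm -A /dev/md0 /dev/sdb{1,2}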

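IV. Implementing Software RAID

RAID 0 implementation

RAID 1 implementation

RAID 5 implementation

RAID 10 implementation

System environment: CentOS 6.5

mdadm-3.3.2-5.el6.x86_64

Four partitions on the same disk: /dev/sdb{1,2,3,4}

Note: in production, avoid software RAID where possible. If you must use it, build it from separate physical disks rather than from four partitions of one disk as done here. The partitions are used only to keep the demonstration simple; an array built from partitions of a single disk gives no real benefit.

The results of the following two steps are prerequisites for the experiments, so they are placed here; every RAID example below builds on them.

First install mdadm; skip this step if it is already installed.

[root@soysauce ~]# man mdadm

No manual entry for mdadm

[root@soysauce ~]# yum install -y mdadm

Next, prepare the four partitions.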

[root@soysauce ~]# fdisk /dev/sdb

WARNING: DOS-compatible mode is deprecated. It's strongly recommended to

switch off the mode (command 'c') and change display units to

sectors (command 'u').

Command (m for help): p # a brand-new disk with no partitions yet

Disk /dev/sdb: 21.5 GB, 21474836480 bytes

255 heads, 63 sectors/track, 2610 cylinders

Units = cylinders of 16065 * 512 = 8225280 bytes

Sector size (logical/physical): 512 bytes / 512 bytes

I/O size (minimum/optimal): 512 bytes / 512 bytes

Disk identifier: 0x84c99918

Device Boot Start End Blocks Id System

Command (m for help): n # create a new partition

Command action

e extended

p primary partition (1-4)

p # primary partition

Partition number (1-4): 1 # partition number 1

First cylinder (1-2610, default 1): # starting cylinder; press Enter to accept the default

Using default value 1

Last cylinder, +cylinders or +size{K,M,G} (1-2610, default 2610): +2G # size of 2G

Command (m for help): n

Command action

e extended

p primary partition (1-4)

p

Partition number (1-4): 2

First cylinder (263-2610, default 263):

Using default value 263

Last cylinder, +cylinders or +size{K,M,G} (263-2610, default 2610): +2G

Command (m for help): n

Command action

e extended

p primary partition (1-4)

p

Partition number (1-4): 3

First cylinder (525-2610, default 525):

Using default value 525

Last cylinder, +cylinders or +size{K,M,G} (525-2610, default 2610): +2G

Command (m for help): n

Command action

e extended

p primary partition (1-4)

p

Selected partition 4

First cylinder (787-2610, default 787):

Using default value 787

Last cylinder, +cylinders or +size{K,M,G} (787-2610, default 2610): +2G

Command (m for help): p

Disk /dev/sdb: 21.5 GB, 21474836480 bytes

255 heads, 63 sectors/track, 2610 cylinders

Units = cylinders of 16065 * 512 = 8225280 bytes

Sector size (logical/physical): 512 bytes / 512 bytes

I/O size (minimum/optimal): 512 bytes / 512 bytes

Disk identifier: 0x84c99918

Device Boot Start End Blocks Id System

/dev/sdb1 1 262 2104483+ 83 Linux

/dev/sdb2 263 524 2104515 83 Linux

/dev/sdb3 525 786 2104515 83 Linux

/dev/sdb4 787 1048 2104515 83 Linux

Command (m for help): t # change the partition type to fd

Partition number (1-4): 1

Hex code (type L to list codes): fd

Changed system type of partition 1 to fd (Linux raid autodetect)

Command (m for help): t

Partition number (1-4): 2

Hex code (type L to list codes): fd

Changed system type of partition 2 to fd (Linux raid autodetect)

Command (m for help): t

Partition number (1-4): 3

Hex code (type L to list codes): fd

Changed system type of partition 3 to fd (Linux raid autodetect)

Command (m for help): t

Partition number (1-4): 4

Hex code (type L to list codes): fd

Changed system type of partition 4 to fd (Linux raid autodetect)

Command (m for help): p

Disk /dev/sdb: 21.5 GB, 21474836480 bytes

255 heads, 63 sectors/track, 2610 cylinders

Units = cylinders of 16065 * 512 = 8225280 bytes

Sector size (logical/physical): 512 bytes / 512 bytes

I/O size (minimum/optimal): 512 bytes / 512 bytes

Disk identifier: 0x84c99918

Device Boot Start End Blocks Id System

/dev/sdb1 1 262 2104483+ fd Linux raid autodetect

/dev/sdb2 263 524 2104515 fd Linux raid autodetect

/dev/sdb3 525 786 2104515 fd Linux raid autodetect

/dev/sdb4 787 1048 2104515 fd Linux raid autodetect

Command (m for help): w

The partition table has been altered!

Calling ioctl() to re-read partition table.

Syncing disks.

1. RAID 0

[root@soysauce ~]# mdadm -C /dev/md0 -a yes -l 0 -n 2 /dev/sdb{1,2} # create the RAID 0 array

mdadm: /dev/sdb1 appears to contain an ext2fs file system

size=2104480K mtime=Thu Jan 1 08:00:00 1970

Continue creating array? y # mdadm asks because sdb1 previously held a file system

mdadm: Defaulting to version 1.2 metadata

mdadm: array /dev/md0 started. # the array was created and started successfully

[root@soysauce ~]# cat /proc/mdstat

Personalities : [raid0]

md0 : active raid0 sdb2[1] sdb1[0] # the RAID 0 status is shown here

4204544 blocks super 1.2 512k chunks

unused devices: <none>

[root@soysauce ~]# mdadm -D /dev/md0 # show the detailed status of the array

/dev/md0:

Version : 1.2

Creation Time : Wed Nov 25 14:32:22 2015

Raid Level : raid0

Array Size : 4204544 (4.01 GiB 4.31 GB)

Raid Devices : 2

Total Devices : 2

Persistence : Superblock is persistent

Update Time : Wed Nov 25 14:32:22 2015

State : clean

Active Devices : 2

Working Devices : 2

Failed Devices : 0

Spare Devices : 0

Chunk Size : 512K

Name : soysauce:0 (local to host soysauce)

UUID : 1cdf117e:769e1279:e7c96b21:2009ec4f

Events : 0

Number Major Minor RaidDevice State

0 8 17 0 active sync /dev/sdb1

1 8 18 1 active sync /dev/sdb2

[root@soysauce ~]# mdadm -D -s >> /etc/mdadm.conf # save the RAID configuration to the config file

[root@soysauce ~]# mdadm -S /dev/md0 # stop the RAID 0 array

mdadm: stopped /dev/md0

[root@soysauce ~]# cat /proc/mdstat # list all arrays; empty because the array is stopped

Personalities : [raid0]

unused devices: <none>

[root@soysauce ~]# mdadm -A /dev/md0 # assemble the RAID 0 array; the member disks need not be listed because the configuration was saved

mdadm: /dev/md0 has been started with 2 drives.

[root@soysauce ~]# mke2fs -t ext4 /dev/md0 # format: create the file system

mke2fs 1.41.12 (17-May-2010)

Filesystem label=

OS type: Linux

Block size=4096 (log=2)

Fragment size=4096 (log=2)

Stride=128 blocks, Stripe width=256 blocks

262944 inodes, 1051136 blocks

52556 blocks (5.00%) reserved for the super user

First data block=0

Maximum filesystem blocks=1077936128

33 block groups

32768 blocks per group, 32768 fragments per group

7968 inodes per group

Superblock backups stored on blocks:

32768, 98304, 163840, 229376, 294912, 819200, 884736

Writing inode tables: done

Creating journal (32768 blocks): done

Writing superblocks and filesystem accounting information: done

This filesystem will be automatically checked every 20 mounts or

180 days, whichever comes first. Use tune2fs -c or -i to override.

[root@soysauce ~]# mount /dev/md0 /data/ # once mounted, the array is ready to use

[root@soysauce ~]# ls /data/

lost+found

2. RAID 1

[root@soysauce ~]# mdadm -C /dev/md1 -a yes -l 1 -n 2 /dev/sdb{1,2}

mdadm: array /dev/md1 started.

[root@soysauce ~]# cat /proc/mdstat # the mirrors are still synchronizing

Personalities : [raid0] [raid1]

md1 : active raid1 sdb2[1] sdb1[0]

2102400 blocks super 1.2 [2/2] [UU]

[================>....] resync = 82.1% (1726912/2102400) finish=0.0min speed=132839K/sec

unused devices: <none>

[root@soysauce ~]# mke2fs -t ext4 /dev/md1 # format: create an ext4 file system

mke2fs 1.41.12 (17-May-2010)

Filesystem label=

OS type: Linux

Block size=4096 (log=2)

Fragment size=4096 (log=2)

Stride=0 blocks, Stripe width=0 blocks

131648 inodes, 525600 blocks

26280 blocks (5.00%) reserved for the super user

First data block=0

Maximum filesystem blocks=541065216

17 block groups

32768 blocks per group, 32768 fragments per group

7744 inodes per group

Superblock backups stored on blocks:

32768, 98304, 163840, 229376, 294912

Writing inode tables: done

Creating journal (16384 blocks): done

Writing superblocks and filesystem accounting information: done

This filesystem will be automatically checked every 33 mounts or

180 days, whichever comes first. Use tune2fs -c or -i to override.

[root@soysauce ~]# mount /dev/md1 /data/ # mount it for use

[root@soysauce ~]# cp /etc/inittab /data/

[root@soysauce ~]# cd /data/

[root@soysauce data]# mdadm -D /dev/md1 # show the detailed array information

/dev/md1:

Version : 1.2

Creation Time : Wed Nov 25 15:13:25 2015

Raid Level : raid1

Array Size : 2102400 (2.01 GiB 2.15 GB)

Used Dev Size : 2102400 (2.01 GiB 2.15 GB)

Raid Devices : 2

Total Devices : 2

Persistence : Superblock is persistent

Update Time : Wed Nov 25 15:18:06 2015

State : clean

Active Devices : 2

Working Devices : 2

Failed Devices : 0

Spare Devices : 0

Name : soysauce:1 (local to host soysauce)

UUID : 6330ff0a:f2d76826:9a24d91d:e218523f

Events : 17

Number Major Minor RaidDevice State

0 8 17 0 active sync /dev/sdb1

1 8 18 1 active sync /dev/sdb2

[root@soysauce data]# mdadm /dev/md1 -f /dev/sdb1 # simulate a failure of sdb1

mdadm: set /dev/sdb1 faulty in /dev/md1

[root@soysauce data]# mdadm -D /dev/md1 # look at the RAID 1 information again

/dev/md1:

Version : 1.2

Creation Time : Wed Nov 25 15:13:25 2015

Raid Level : raid1

Array Size : 2102400 (2.01 GiB 2.15 GB)

Used Dev Size : 2102400 (2.01 GiB 2.15 GB)

Raid Devices : 2

Total Devices : 2

Persistence : Superblock is persistent

Update Time : Wed Nov 25 15:19:11 2015

State : clean, degraded

Active Devices : 1

Working Devices : 1

Failed Devices : 1

Spare Devices : 0

Name : soysauce:1 (local to host soysauce)

UUID : 6330ff0a:f2d76826:9a24d91d:e218523f

Events : 19

Number Major Minor RaidDevice State

0 0 0 0 removed

1 8 18 1 active sync /dev/sdb2

0 8 17 - faulty /dev/sdb1 # sdb1 is now marked faulty

[root@soysauce data]# tail -5 inittab # the file can still be read normally

# 4 - unused

# 5 - X11

# 6 - reboot (Do NOT set initdefault to this)

#

id:3:initdefault:

[root@soysauce data]# mdadm /dev/md1 -r /dev/sdb1 # remove the failed sdb1

mdadm: hot removed /dev/sdb1 from /dev/md1

[root@soysauce data]# mdadm -D /dev/md1 # look at the array information again

/dev/md1:

Version : 1.2

Creation Time : Wed Nov 25 15:13:25 2015

Raid Level : raid1

Array Size : 2102400 (2.01 GiB 2.15 GB)

Used Dev Size : 2102400 (2.01 GiB 2.15 GB)

Raid Devices : 2

Total Devices : 1

Persistence : Superblock is persistent

Update Time : Wed Nov 25 15:22:52 2015

State : clean, degraded

Active Devices : 1

Working Devices : 1

Failed Devices : 0

Spare Devices : 0

Name : soysauce:1 (local to host soysauce)

UUID : 6330ff0a:f2d76826:9a24d91d:e218523f

Events : 26

Number Major Minor RaidDevice State

0 0 0 0 removed # sdb1 has now been removed

1 8 18 1 active sync /dev/sdb2

[root@soysauce data]# mdadm /dev/md1 -a /dev/sdb1 # add a disk back into the array

[root@soysauce data]# mdadm -D /dev/md1

/dev/md1:

Version : 1.2

Creation Time : Wed Nov 25 15:13:25 2015

Raid Level : raid1

Array Size : 2102400 (2.01 GiB 2.15 GB)

Used Dev Size : 2102400 (2.01 GiB 2.15 GB)

Raid Devices : 2

Total Devices : 2

Persistence : Superblock is persistent

Update Time : Wed Nov 25 15:25:05 2015

State : clean, degraded, recovering

Active Devices : 1

Working Devices : 2

Failed Devices : 0

Spare Devices : 1

Rebuild Status : 31% complete

Name : soysauce:1 (local to host soysauce)

UUID : 6330ff0a:f2d76826:9a24d91d:e218523f

Events : 57

Number Major Minor RaidDevice State

2 8 17 0 spare rebuilding /dev/sdb1

1 8 18 1 active sync /dev/sdb2

[root@soysauce data]# cat /proc/mdstat # the data is being synchronized

Personalities : [raid0] [raid1]

md1 : active raid1 sdb1[2] sdb2[1]

2102400 blocks super 1.2 [2/1] [_U]

[=>...................] recovery = 9.7% (206016/2102400) finish=0.1min speed=206016K/sec

unused devices: <none>

[root@soysauce data]# cat /proc/mdstat # synchronization has now finished

Personalities : [raid0] [raid1]

md1 : active raid1 sdb1[2] sdb2[1]

2102400 blocks super 1.2 [2/2] [UU]

unused devices: <none>

[root@soysauce data]# mdadm /dev/md1 -f /dev/sdb2 # now mark sdb2 as faulty

mdadm: set /dev/sdb2 faulty in /dev/md1

[root@soysauce data]# tail -5 inittab # sdb1, added back a moment ago, has finished syncing, so the data is still accessible

# 4 - unused

# 5 - X11

# 6 - reboot (Do NOT set initdefault to this)

#

id:3:initdefault:
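The [2/1] [_U] counters shown in /proc/mdstat during the rebuild lend themselves to a simple health check. The sketch below reads mdstat-style text on standard input and prints the name of every array with fewer active members than configured. mdstat_degraded is a made-up name, and the parsing assumes the line layout seen in the transcripts here.

```shell
# Read /proc/mdstat-style text on stdin and print each array whose
# "[configured/active]" counter shows fewer active members than configured.
# Hypothetical helper; relies only on awk.
mdstat_degraded() {
    awk '
        /^md[0-9]+ :/ { name = $1 }
        match($0, /\[[0-9]+\/[0-9]+\]/) {
            # Strip the brackets and split "configured/active".
            split(substr($0, RSTART + 1, RLENGTH - 2), c, "/")
            if (c[2] + 0 < c[1] + 0) print name
        }
    '
}

# Sample input copied from the rebuild output above.
printf '%s\n' \
    'Personalities : [raid0] [raid1]' \
    'md1 : active raid1 sdb1[2] sdb2[1]' \
    '2102400 blocks super 1.2 [2/1] [_U]' | mdstat_degraded   # prints: md1
```

On a live system the same function could be fed with mdstat_degraded < /proc/mdstat; no output would then mean that every array has all of its members active.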

3. RAID 5

[root@soysauce /]# mdadm -C /dev/md5 -a yes -l 5 -n 3 -x 1 -c 64 /dev/sdb{1,2,3,4}

mdadm: array /dev/md5 started.

[root@soysauce /]# cat /proc/mdstat # the array is synchronizing

Personalities : [raid0] [raid1] [raid6] [raid5] [raid4]

md5 : active raid5 sdb3[4] sdb4[3](S) sdb2[1] sdb1[0]

4204800 blocks super 1.2 level 5, 64k chunk, algorithm 2 [3/2] [UU_]

[>....................] recovery = 2.5% (52888/2102400) finish=2.5min speed=13222K/sec

unused devices: <none>

[root@soysauce /]# mdadm -D /dev/md5 # show the array information

/dev/md5:

Version : 1.2

Creation Time : Wed Nov 25 16:03:06 2015

Raid Level : raid5

Array Size : 4204800 (4.01 GiB 4.31 GB)

Used Dev Size : 2102400 (2.01 GiB 2.15 GB)

Raid Devices : 3

Total Devices : 4

Persistence : Superblock is persistent

Update Time : Wed Nov 25 16:03:16 2015

State : clean, degraded, recovering

Active Devices : 2

Working Devices : 4

Failed Devices : 0

Spare Devices : 2

Layout : left-symmetric

Chunk Size : 64K # the chunk size is 64K

Rebuild Status : 6% complete

Name : soysauce:5 (local to host soysauce)

UUID : ede33181:d3d2cea6:c5a4c03e:9a12c4a3

Events : 2

Number Major Minor RaidDevice State

0 8 17 0 active sync /dev/sdb1

1 8 18 1 active sync /dev/sdb2

4 8 19 2 spare rebuilding /dev/sdb3

3 8 20 - spare /dev/sdb4

[root@soysauce ~]# mke2fs -t ext4 -b 4096 -E stride=16 /dev/md5 # format as ext4 and set stride to chunk size / block size (64K / 4K = 16) as a performance optimization

mke2fs 1.41.12 (17-May-2010)

Filesystem label=

OS type: Linux

Block size=4096 (log=2)

Fragment size=4096 (log=2)

Stride=16 blocks, Stripe width=32 blocks

262944 inodes, 1051200 blocks

52560 blocks (5.00%) reserved for the super user

First data block=0

Maximum filesystem blocks=1077936128

33 block groups

32768 blocks per group, 32768 fragments per group

7968 inodes per group

Superblock backups stored on blocks:

32768, 98304, 163840, 229376, 294912, 819200, 884736

Writing inode tables: done

Creating journal (32768 blocks): done

Writing superblocks and filesystem accounting information: done

This filesystem will be automatically checked every 39 mounts or

180 days, whichever comes first. Use tune2fs -c or -i to override.
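The stride value passed to mke2fs follows from simple arithmetic, sketched below under the assumption of a 64K chunk, 4096-byte blocks, and a three-disk RAID 5 (two data chunks per stripe). The helper names are invented for this example.

```shell
# stride       = chunk size / file system block size
# stripe width = stride * number of data-bearing disks
# Hypothetical helpers for the arithmetic only; they do not call mke2fs.
ext_stride() {          # $1 = chunk size in KiB, $2 = block size in bytes
    echo $(( $1 * 1024 / $2 ))
}
ext_stripe_width() {    # $1 = stride, $2 = data disks (RAID 5: members - 1)
    echo $(( $1 * $2 ))
}

stride=$(ext_stride 64 4096)
width=$(ext_stripe_width "$stride" 2)
echo "stride=$stride stripe-width=$width"   # prints: stride=16 stripe-width=32
```

The result agrees with the "Stride=16 blocks, Stripe width=32 blocks" line that mke2fs printed above.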

[root@soysauce /]# mdadm -Ds >> /etc/mdadm.conf # save the RAID configuration to the config file so the disks need not be listed at the next assembly

[root@soysauce /]# mount /dev/md5 /mnt/ # mount it for use

[root@soysauce /]# cd /mnt/

[root@soysauce mnt]# ls

lost+found

[root@soysauce mnt]# cd ..

[root@soysauce /]# umount /dev/md5 # unmount

[root@soysauce /]# mdadm -S /dev/md5 # stop the array

mdadm: stopped /dev/md5

[root@soysauce /]# mdadm -A /dev/md5 # reassemble; had the configuration not been saved above, the member disks would have to be listed

mdadm: /dev/md5 has been started with 3 drives and 1 spare.

[root@CentOS6 ~]# mdadm /dev/md5 -a /dev/sdc1 # add a new disk

mdadm: added /dev/sdc1

[root@CentOS6 ~]# mdadm -D /dev/md5 # look at the array information again

/dev/md5:

Version : 1.2

Creation Time : Wed Nov 25 16:03:06 2015

Raid Level : raid5

Array Size : 4204800 (4.01 GiB 4.31 GB)

Used Dev Size : 2102400 (2.01 GiB 2.15 GB)

Raid Devices : 3

Total Devices : 5

Persistence : Superblock is persistent

Update Time : Wed Nov 25 16:22:47 2015

State : clean

Active Devices : 3

Working Devices : 5

Failed Devices : 0

Spare Devices : 2

Layout : left-symmetric

Chunk Size : 64K

Name : soysauce:5

UUID : ede33181:d3d2cea6:c5a4c03e:9a12c4a3

Events : 25

Number Major Minor RaidDevice State

0 8 17 0 active sync /dev/sdb1

1 8 18 1 active sync /dev/sdb2

4 8 19 2 active sync /dev/sdb3

3 8 20 - spare /dev/sdb4

5 8 33 - spare /dev/sdc1

[root@CentOS6 ~]# mdadm -G /dev/md5 -n 5 # grow the RAID 5 array to 5 disks

[root@CentOS6 ~]# mdadm -D /dev/md5 # look again

/dev/md5:

Version : 1.2

Creation Time : Wed Nov 25 16:03:06 2015

Raid Level : raid5

Array Size : 4204800 (4.01 GiB 4.31 GB)

Used Dev Size : 2102400 (2.01 GiB 2.15 GB)

Raid Devices : 5

Total Devices : 5

Persistence : Superblock is persistent

Update Time : Wed Nov 25 16:23:27 2015

State : clean, reshaping

Active Devices : 5

Working Devices : 5

Failed Devices : 0

Spare Devices : 0

Layout : left-symmetric

Chunk Size : 64K

Reshape Status : 0% complete

Delta Devices : 2, (3->5)

Name : soysauce:5

UUID : ede33181:d3d2cea6:c5a4c03e:9a12c4a3

Events : 36

Number Major Minor RaidDevice State

0 8 17 0 active sync /dev/sdb1

1 8 18 1 active sync /dev/sdb2

4 8 19 2 active sync /dev/sdb3

5 8 33 3 active sync /dev/sdc1

3 8 20 4 active sync /dev/sdb4 # sdb4 and sdc1 are no longer spares
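The Array Size in the output above still shows the three-disk figure because the reshape had only just started (Reshape Status: 0% complete). A rough estimate of the sizes before and after, assuming the usual RAID 5 rule of (members - 1) x per-device size, can be computed as follows; raid5_size_kib is a hypothetical helper.

```shell
# Estimate RAID 5 array size in KiB: one member's worth goes to parity.
# Arguments: $1 = number of active members, $2 = per-device size in KiB
# (the "Used Dev Size" value from mdadm -D).
raid5_size_kib() {
    echo $(( ($1 - 1) * $2 ))
}

echo "3 members: $(raid5_size_kib 3 2102400) KiB"   # prints: 3 members: 4204800 KiB
echo "5 members: $(raid5_size_kib 5 2102400) KiB"   # prints: 5 members: 8409600 KiB
```

The first figure matches the 4204800 reported by mdadm -D. Once the reshape completes, the file system on the array still has to be grown separately (for ext4, typically with resize2fs) before the added space is usable.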

4. RAID 10

[root@CentOS6 ~]# mdadm -C /dev/md11 -a yes -l 1 -n 2 -c 64 /dev/sdb{1,2} # first create one RAID 1 array

mdadm: Defaulting to version 1.2 metadata

mdadm: array /dev/md11 started.

[root@CentOS6 ~]# mdadm -C /dev/md12 -a yes -l 1 -n 2 -c 64 /dev/sdb{3,4} # then create a second RAID 1 array

mdadm: Defaulting to version 1.2 metadata

mdadm: array /dev/md12 started.

[root@CentOS6 ~]# mdadm -C /dev/md10 -l 0 -n 2 -a yes -c 64 /dev/md{11,12} # finally create a RAID 0 array on top of the two mirrors

mdadm: Defaulting to version 1.2 metadata

mdadm: array /dev/md10 started.

[root@CentOS6 ~]# mdadm -D /dev/md10 # show the array information

/dev/md10:

Version : 1.2

Creation Time : Wed Nov 25 16:34:43 2015

Raid Level : raid0

Array Size : 4200768 (4.01 GiB 4.30 GB)

Raid Devices : 2

Total Devices : 2

Persistence : Superblock is persistent

Update Time : Wed Nov 25 16:34:43 2015

State : clean

Active Devices : 2

Working Devices : 2

Failed Devices : 0

Spare Devices : 0

Chunk Size : 64K

Name : CentOS6.5:10 (local to host CentOS6.5)

UUID : 9ad9152e:e37caee8:d301e917:a78cddb8

Events : 0

Number Major Minor RaidDevice State

0 9 11 0 active sync /dev/md11

1 9 12 1 active sync /dev/md12

[root@CentOS6 ~]# mdadm -Ds >> /etc/mdadm.conf # save the configuration to the config file

[root@CentOS6 ~]# mke2fs -t ext4 -b 4096 -E stride=16 /dev/md10 # format as ext4 and set stride to chunk size / block size as a performance optimization

[root@soysauce /]# mount /dev/md10 /mnt/ # mount it for use

[root@soysauce /]# cd /mnt/

[root@soysauce mnt]# ls

lost+found

V. Comparison of RAID Levels

Note: this table comes from Wikipedia.