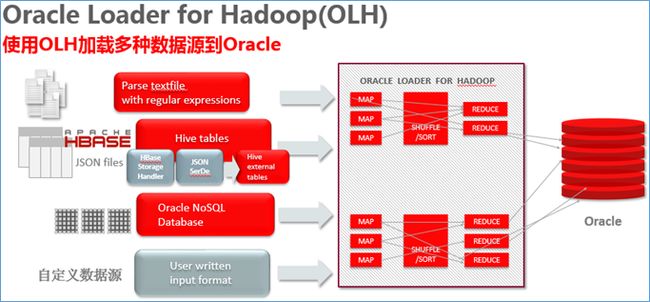

Connecting Oracle and Hadoop (1): Loading HDFS Files into Oracle with OLH

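OLH is short for Oracle Loader for Hadoop, a component of Oracle Big Data Connectors (BDC) that loads data in a variety of formats from HDFS into an Oracle database.

This article simulates an Oracle database and a Hadoop cluster on a single server. The goal of the experiment is to use OLH to load data from HDFS on the Hadoop side into an Oracle table.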

Oracle side:

| Server | OS user | Software | Installation path |
| --- | --- | --- | --- |
| Server1 | oracle | Oracle Database 12.1.0.2 | /u01/app/oracle/product/12.1.0/dbhome_1 |

Hadoop cluster side:

| Server | OS user | Software | Installation path |
| --- | --- | --- | --- |
| Server1 | hadoop | Hadoop 2.6.2 | /home/hadoop/hadoop-2.6.2 |
| Server1 | hadoop | Hive 1.1.1 | /home/hadoop/hive-1.1.1 |
| Server1 | hadoop | HBase 1.1.2 | /home/hadoop/hbase-1.1.2 |
| Server1 | hadoop | jdk1.8.0_65 | /home/hadoop/jdk1.8.0_65 |
| Server1 | hadoop | OLH 3.5.0 | /home/hadoop/oraloader-3.5.0-h2 |

- Deploy the Hadoop/Hive/HBase/OLH software

Extract the Hadoop, Hive, HBase, and OLH archives into their respective directories:

    [hadoop@server1 ~]$ tree -L 1
    ├── hadoop-2.6.2
    ├── hbase-1.1.2
    ├── hive-1.1.1
    ├── jdk1.8.0_65
    ├── oraloader-3.5.0-h2

- Configure the Hadoop/Hive/HBase/OLH environment variables

Set the following environment variables for the hadoop user:

    export JAVA_HOME=/home/hadoop/jdk1.8.0_65

    export HADOOP_USER_NAME=hadoop
    export HADOOP_YARN_USER=hadoop
    export HADOOP_HOME=/home/hadoop/hadoop-2.6.2
    export HADOOP_CONF_DIR=${HADOOP_HOME}/etc/hadoop
    export HADOOP_LOG_DIR=${HADOOP_HOME}/logs
    export HADOOP_LIBEXEC_DIR=${HADOOP_HOME}/libexec
    export HADOOP_COMMON_HOME=${HADOOP_HOME}
    export HADOOP_HDFS_HOME=${HADOOP_HOME}
    export HADOOP_MAPRED_HOME=${HADOOP_HOME}
    export HADOOP_YARN_HOME=${HADOOP_HOME}
    export HDFS_CONF_DIR=${HADOOP_HOME}/etc/hadoop
    export YARN_CONF_DIR=${HADOOP_HOME}/etc/hadoop

    export HIVE_HOME=/home/hadoop/hive-1.1.1
    export HIVE_CONF_DIR=${HIVE_HOME}/conf

    export HBASE_HOME=/home/hadoop/hbase-1.1.2
    export HBASE_CONF_DIR=/home/hadoop/hbase-1.1.2/conf

    export OLH_HOME=/home/hadoop/oraloader-3.5.0-h2

    export HADOOP_CLASSPATH=/usr/share/java/mysql-connector-java.jar
    export HADOOP_CLASSPATH=$HADOOP_CLASSPATH:$HIVE_HOME/lib/*:$HIVE_CONF_DIR:$HBASE_HOME/lib/*:$HBASE_CONF_DIR
    export HADOOP_CLASSPATH=$HADOOP_CLASSPATH:$OLH_HOME/jlib/*

    export PATH=$JAVA_HOME/bin:$HADOOP_HOME/bin:$HADOOP_HOME/sbin:$HIVE_HOME/bin:$HBASE_HOME/bin:$PATH
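Because every later step depends on these variables, it may help to confirm that each one is set and points to a real directory before starting any service. The function below is a minimal sketch of such a check; the name `check_olh_env` and the choice of which variables to test are assumptions made for illustration, not part of the original setup.

```shell
# Report every listed variable that is unset or does not point to a directory.
# check_olh_env is a hypothetical helper, not something shipped with Hadoop or OLH.
check_olh_env() {
  status=0
  for name in JAVA_HOME HADOOP_HOME HADOOP_CONF_DIR HIVE_HOME HBASE_HOME OLH_HOME; do
    eval "dir=\${$name:-}"   # POSIX-portable indirect variable lookup
    if [ -z "$dir" ]; then
      echo "$name is not set"
      status=1
    elif [ ! -d "$dir" ]; then
      echo "$name points to a missing directory: $dir"
      status=1
    fi
  done
  return $status
}

# Usage (after sourcing the exports above):
#   check_olh_env && echo "environment looks consistent"
```

The function only prints problems and returns a non-zero status when it finds one, so it can be chained in front of other commands.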

- Configure Hadoop/Hive/HBase

core-site.xml

    <configuration>
      <property>
        <name>fs.defaultFS</name>
        <value>hdfs://server1:8020</value>
      </property>
      <property>
        <name>fs.checkpoint.period</name>
        <value>3600</value>
      </property>
      <property>
        <name>fs.checkpoint.size</name>
        <value>67108864</value>
      </property>
      <property>
        <name>hadoop.proxyuser.hadoop.groups</name>
        <value>*</value>
      </property>
      <property>
        <name>hadoop.proxyuser.hadoop.hosts</name>
        <value>*</value>
      </property>
    </configuration>

hdfs-site.xml

    <configuration>
      <property>
        <name>hadoop.tmp.dir</name>
        <value>file:///home/hadoop</value>
      </property>
      <property>
        <name>dfs.namenode.name.dir</name>
        <value>file:///home/hadoop/dfs/nn</value>
      </property>
      <property>
        <name>dfs.datanode.data.dir</name>
        <value>file:///home/hadoop/dfs/dn</value>
      </property>
      <property>
        <name>dfs.namenode.checkpoint.dir</name>
        <value>file:///home/hadoop/dfs/sn</value>
      </property>
      <property>
        <name>dfs.replication</name>
        <value>1</value>
      </property>
      <property>
        <name>dfs.permissions.superusergroup</name>
        <value>supergroup</value>
      </property>
      <property>
        <name>dfs.namenode.http-address</name>
        <value>server1:50070</value>
      </property>
      <property>
        <name>dfs.namenode.secondary.http-address</name>
        <value>server1:50090</value>
      </property>
      <property>
        <name>dfs.webhdfs.enabled</name>
        <value>true</value>
      </property>
    </configuration>

yarn-site.xml

    <configuration>
      <property>
        <name>yarn.resourcemanager.scheduler.address</name>
        <value>server1:8030</value>
      </property>
      <property>
        <name>yarn.resourcemanager.resource-tracker.address</name>
        <value>server1:8031</value>
      </property>
      <property>
        <name>yarn.resourcemanager.address</name>
        <value>server1:8032</value>
      </property>
      <property>
        <name>yarn.resourcemanager.admin.address</name>
        <value>server1:8033</value>
      </property>
      <property>
        <name>yarn.resourcemanager.webapp.address</name>
        <value>server1:8088</value>
      </property>
      <property>
        <name>yarn.nodemanager.local-dirs</name>
        <value>file:///home/hadoop/yarn/local</value>
      </property>
      <property>
        <name>yarn.nodemanager.log-dirs</name>
        <value>file:///home/hadoop/yarn/logs</value>
      </property>
      <property>
        <name>yarn.log-aggregation-enable</name>
        <value>true</value>
      </property>
      <property>
        <name>yarn.nodemanager.remote-app-log-dir</name>
        <value>/yarn/apps</value>
      </property>
      <property>
        <name>yarn.app.mapreduce.am.staging-dir</name>
        <value>/user</value>
      </property>
      <property>
        <name>yarn.nodemanager.aux-services</name>
        <value>mapreduce_shuffle</value>
      </property>
      <property>
        <name>yarn.nodemanager.aux-services.mapreduce_shuffle.class</name>
        <value>org.apache.hadoop.mapred.ShuffleHandler</value>
      </property>
      <property>
        <name>yarn.log.server.url</name>
        <value>http://server1:19888/jobhistory/logs/</value>
      </property>
    </configuration>
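With three site files in play, it is easy to lose track of which value a given property actually has. The following is a rough sketch of a helper that prints one property's value from a site file; `get_prop` is a hypothetical name, and the parsing assumes each `<name>` and `<value>` element sits on its own line, as in the files shown here. It is not a general XML parser.

```shell
# Print the <value> of one property from a Hadoop *-site.xml file.
# $1 = path to the XML file, $2 = property name
# Assumption: each <name> and <value> element is on its own line.
get_prop() {
  awk -v want="$2" '
    /<name>/  { name = $0; gsub(/.*<name>|<\/name>.*/, "", name) }
    /<value>/ { value = $0; gsub(/.*<value>|<\/value>.*/, "", value)
                if (name == want) print value }
  ' "$1"
}

# Usage:
#   get_prop "$HADOOP_CONF_DIR/core-site.xml" fs.defaultFS
#   get_prop "$HADOOP_CONF_DIR/yarn-site.xml" yarn.resourcemanager.address
```

On a running installation, `hdfs getconf -confKey fs.defaultFS` reports the value Hadoop itself resolves, which is the more authoritative check for HDFS-related keys.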

- Create the OraLoader configuration file

Save the following as OraLoadJobConf.xml, the file name passed to -conf in the load command:

    <?xml version="1.0" encoding="UTF-8" ?>
    <configuration>

      <!-- Input settings -->
      <property>
        <name>mapreduce.inputformat.class</name>
        <value>oracle.hadoop.loader.lib.input.DelimitedTextInputFormat</value>
      </property>
      <property>
        <name>mapred.input.dir</name>
        <value>/catalog</value>
      </property>
      <property>
        <name>oracle.hadoop.loader.input.fieldTerminator</name>
        <value>\u002C</value>
      </property>
      <property>
        <name>oracle.hadoop.loader.input.fieldNames</name>
        <value>CATALOGID,JOURNAL,PUBLISHER,EDITION,TITLE,AUTHOR</value>
      </property>

      <!-- Output settings -->
      <property>
        <name>mapreduce.job.outputformat.class</name>
        <value>oracle.hadoop.loader.lib.output.JDBCOutputFormat</value>
      </property>
      <property>
        <name>mapreduce.output.fileoutputformat.outputdir</name>
        <value>oraloadout</value>
      </property>

      <!-- Table information -->
      <property>
        <name>oracle.hadoop.loader.loaderMap.targetTable</name>
        <value>catalog</value>
      </property>

      <!-- Connection information -->
      <property>
        <name>oracle.hadoop.loader.connection.url</name>
        <value>jdbc:oracle:thin:@${HOST}:${TCPPORT}/${SERVICE_NAME}</value>
      </property>
      <property>
        <name>TCPPORT</name>
        <value>1521</value>
      </property>
      <property>
        <name>HOST</name>
        <value>192.168.56.101</value>
      </property>
      <property>
        <name>SERVICE_NAME</name>
        <value>orcl</value>
      </property>
      <property>
        <name>oracle.hadoop.loader.connection.user</name>
        <value>baron</value>
      </property>
      <property>
        <name>oracle.hadoop.loader.connection.password</name>
        <value>baron</value>
      </property>
    </configuration>
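The connection URL refers to `${HOST}`, `${TCPPORT}` and `${SERVICE_NAME}`. Hadoop's configuration loader expands such references using other properties in the same configuration, which is why those three names are defined as ordinary properties. The shell lines below only illustrate the resulting string using the values from the file; they are not how OLH itself performs the substitution.

```shell
# Values copied from the HOST, TCPPORT and SERVICE_NAME properties in the configuration file.
HOST=192.168.56.101
TCPPORT=1521
SERVICE_NAME=orcl

# Shell parameter expansion mirrors the ${...} substitution applied to the XML value.
JDBC_URL="jdbc:oracle:thin:@${HOST}:${TCPPORT}/${SERVICE_NAME}"
echo "$JDBC_URL"   # prints jdbc:oracle:thin:@192.168.56.101:1521/orcl
```

Keeping host, port and service name as separate properties means only one value needs to change when the database moves.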

- Generate test data

Create a comma-delimited sample file and upload it to HDFS:

    $ cat catalog.txt
    1,Oracle Magazine,Oracle Publishing,Nov-Dec 2004,Database Resource Manager,Kimberly Floss
    2,Oracle Magazine,Oracle Publishing,Nov-Dec 2004,From ADF UIX to JSF,Jonas Jacobi
    3,Oracle Magazine,Oracle Publishing,March-April 2005,Starting with Oracle ADF,Steve Muench

    $ hdfs dfs -mkdir /catalog
    $ hdfs dfs -put catalog.txt /catalog/catalog.txt
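The loader configuration lists six field names, so each input line is expected to carry six comma-separated fields. A quick local check before uploading can catch malformed lines early. The sketch below uses a hypothetical helper name, `check_field_count`, and a plain comma split, so it assumes no field contains an embedded comma.

```shell
# List every line whose number of comma-separated fields differs from the expected count.
# $1 = delimited text file, $2 = expected number of fields
# Returns non-zero if any line is off. Assumes fields never contain commas.
check_field_count() {
  awk -F',' -v want="$2" '
    NF != want { printf "line %d has %d fields\n", NR, NF; bad = 1 }
    END { exit bad }
  ' "$1"
}

# Usage:
#   check_field_count catalog.txt 6 && hdfs dfs -put catalog.txt /catalog/catalog.txt
```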

- Load the HDFS file into the Oracle database with OraLoader

Run the loader job with the OraLoadJobConf.xml configuration file:

    hadoop jar $OLH_HOME/jlib/oraloader.jar oracle.hadoop.loader.OraLoader -conf OraLoadJobConf.xml -libjars $OLH_HOME/jlib/oraloader.jar

The output is as follows:

    Oracle Loader for Hadoop Release 3.4.0 - Production
    Copyright (c) 2011, 2015, Oracle and/or its affiliates. All rights reserved.
    15/12/07 08:35:52 INFO loader.OraLoader: Oracle Loader for Hadoop Release 3.4.0 - Production
    Copyright (c) 2011, 2015, Oracle and/or its affiliates. All rights reserved.
    15/12/07 08:35:52 INFO loader.OraLoader: Built-Against: hadoop-2.2.0 hive-0.13.0 avro-1.7.3 jackson-1.8.8
    15/12/07 08:35:52 INFO Configuration.deprecation: mapreduce.outputformat.class is deprecated. Instead, use mapreduce.job.outputformat.class
    15/12/07 08:35:52 INFO Configuration.deprecation: mapred.output.dir is deprecated. Instead, use mapreduce.output.fileoutputformat.outputdir
    15/12/07 08:36:27 INFO Configuration.deprecation: mapred.submit.replication is deprecated. Instead, use mapreduce.client.submit.file.replication
    15/12/07 08:36:29 INFO loader.OraLoader: oracle.hadoop.loader.loadByPartition is disabled because table: CATALOG is not partitioned
    15/12/07 08:36:29 INFO output.DBOutputFormat: Setting reduce tasks speculative execution to false for : oracle.hadoop.loader.lib.output.JDBCOutputFormat
    15/12/07 08:36:29 INFO Configuration.deprecation: mapred.reduce.tasks.speculative.execution is deprecated. Instead, use mapreduce.reduce.speculative
    15/12/07 08:36:32 WARN loader.OraLoader: Sampler is disabled because the number of reduce tasks is less than two. Job will continue without sampled information.
    15/12/07 08:36:32 INFO loader.OraLoader: Submitting OraLoader job OraLoader
    15/12/07 08:36:32 INFO client.RMProxy: Connecting to ResourceManager at server1/192.168.56.101:8032
    15/12/07 08:36:34 INFO input.FileInputFormat: Total input paths to process : 1
    15/12/07 08:36:34 INFO mapreduce.JobSubmitter: number of splits:1
    15/12/07 08:36:35 INFO mapreduce.JobSubmitter: Submitting tokens for job: job_1449494864827_0001
    15/12/07 08:36:36 INFO impl.YarnClientImpl: Submitted application application_1449494864827_0001
    15/12/07 08:36:37 INFO mapreduce.Job: The url to track the job: http://server1:8088/proxy/application_1449494864827_0001/
    15/12/07 08:37:05 INFO loader.OraLoader: map 0% reduce 0%
    15/12/07 08:37:22 INFO loader.OraLoader: map 100% reduce 0%
    15/12/07 08:37:36 INFO loader.OraLoader: map 100% reduce 67%
    15/12/07 08:38:05 INFO loader.OraLoader: map 100% reduce 100%
    15/12/07 08:38:06 INFO loader.OraLoader: Job complete: OraLoader (job_1449494864827_0001)
    15/12/07 08:38:06 INFO loader.OraLoader: Counters: 49
      File System Counters
        FILE: Number of bytes read=395
        FILE: Number of bytes written=244157
        FILE: Number of read operations=0
        FILE: Number of large read operations=0
        FILE: Number of write operations=0
        HDFS: Number of bytes read=367
        HDFS: Number of bytes written=1861
        HDFS: Number of read operations=7
        HDFS: Number of large read operations=0
        HDFS: Number of write operations=5
      Job Counters
        Launched map tasks=1
        Launched reduce tasks=1
        Data-local map tasks=1
        Total time spent by all maps in occupied slots (ms)=12516
        Total time spent by all reduces in occupied slots (ms)=40696
        Total time spent by all map tasks (ms)=12516
        Total time spent by all reduce tasks (ms)=40696
        Total vcore-seconds taken by all map tasks=12516
        Total vcore-seconds taken by all reduce tasks=40696
        Total megabyte-seconds taken by all map tasks=12816384
        Total megabyte-seconds taken by all reduce tasks=41672704
      Map-Reduce Framework
        Map input records=3
        Map output records=3
        Map output bytes=383
        Map output materialized bytes=395
        Input split bytes=104
        Combine input records=0
        Combine output records=0
        Reduce input groups=1
        Reduce shuffle bytes=395
        Reduce input records=3
        Reduce output records=3
        Spilled Records=6
        Shuffled Maps =1
        Failed Shuffles=0
        Merged Map outputs=1
        GC time elapsed (ms)=556
        CPU time spent (ms)=9450
        Physical memory (bytes) snapshot=444141568
        Virtual memory (bytes) snapshot=4221542400
        Total committed heap usage (bytes)=331350016
      Shuffle Errors
        BAD_ID=0
        CONNECTION=0
        IO_ERROR=0
        WRONG_LENGTH=0
        WRONG_MAP=0
        WRONG_REDUCE=0
      File Input Format Counters
        Bytes Read=263
      File Output Format Counters
        Bytes Written=1620
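In the counters above, `Map input records` and `Reduce output records` are both 3, matching the three lines in catalog.txt. For larger loads it can be convenient to compare these two counters automatically. The sketch below assumes the job output was saved to a file (for example by appending `2>&1 | tee olh.log` to the load command, where `olh.log` is a hypothetical name); the helper names `counter` and `records_match` are also illustrative. Equal counters are only a rough consistency signal, not proof that every row reached the table.

```shell
# Extract the numeric value of a "Name=value" counter line from saved job output.
# $1 = log file, $2 = exact counter name
counter() {
  sed -n "s/^[[:space:]]*$2=\([0-9][0-9]*\)\$/\1/p" "$1"
}

# Succeed only if the job read and wrote the same, non-empty number of records.
records_match() {
  rows_in=$(counter "$1" "Map input records")
  rows_out=$(counter "$1" "Reduce output records")
  [ -n "$rows_in" ] && [ "$rows_in" = "$rows_out" ]
}

# Usage:
#   records_match olh.log && echo "record counts agree"
```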

- Verify the load result in Oracle

Query the target table:

    select * from catalog;

     CATALOGID JOURNAL                   PUBLISHER                 EDITION                   TITLE                          AUTHOR
    ---------- ------------------------- ------------------------- ------------------------- ------------------------------ ---------------------
             1 Oracle Magazine           Oracle Publishing         Nov-Dec 2004              Database Resource Manager      Kimberly Floss
             2 Oracle Magazine           Oracle Publishing         Nov-Dec 2004              From ADF UIX to JSF            Jonas Jacobi
             3 Oracle Magazine           Oracle Publishing         March-April 2005          Starting with Oracle ADF       Steve Muenc
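OLH loads into an existing table, so `catalog` must already be present in the `baron` schema before the job runs; the article does not show its definition. The function below prints a hypothetical definition whose column names come from the `fieldNames` property. The data types and lengths are assumptions guessed from the query output, not the author's actual DDL.

```shell
# Emit a hypothetical definition of the target table.
# Column names follow oracle.hadoop.loader.input.fieldNames; types and lengths are assumptions.
catalog_ddl() {
  cat <<'SQL'
CREATE TABLE catalog (
  catalogid NUMBER,
  journal   VARCHAR2(25),
  publisher VARCHAR2(25),
  edition   VARCHAR2(25),
  title     VARCHAR2(30),
  author    VARCHAR2(25)
);
SQL
}

catalog_ddl
```

One way to apply it, assuming SQL*Plus is installed on the same host, would be `catalog_ddl | sqlplus -S baron/baron@//192.168.56.101:1521/orcl`, using the connection details from the loader configuration.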