Learning OpenCV: Simplified ORB & Faster Location

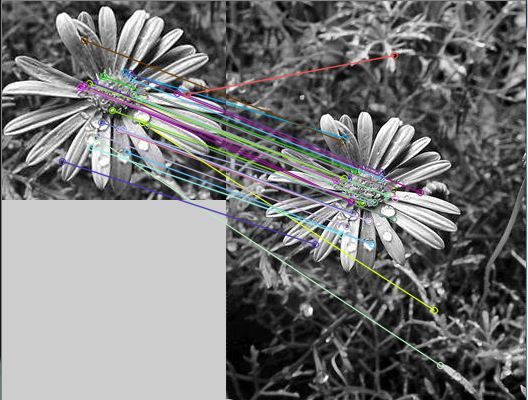

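Following the structure of the earlier simplified SURF version, I simplified the ORB detection code in the same way. The speed is unchanged, but it saves quite a few lines of code, mainly thanks to BruteForceMatcher<HammingLUT> matcher, which spares me from writing a matching function by hand.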
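To show what kind of function the matcher makes unnecessary, here is a minimal sketch of brute-force matching under the Hamming distance in plain C++, without OpenCV. The names Descriptor, SimpleMatch, hammingDistance and bruteForceMatch are hypothetical, and the bit counting uses a simple loop where HammingLUT, as its name suggests, relies on a lookup table. It illustrates the idea only and is not the library's implementation.

```cpp
#include <cstddef>
#include <cstdint>
#include <limits>
#include <vector>

// Hypothetical stand-in for one binary descriptor (one row of the descriptor Mat).
typedef std::vector<std::uint8_t> Descriptor;

// Hypothetical stand-in for cv::DMatch.
struct SimpleMatch {
    int queryIdx;
    int trainIdx;
    int distance;
};

// Number of differing bits between two descriptors of equal length.
int hammingDistance(const Descriptor& a, const Descriptor& b) {
    int bits = 0;
    for (std::size_t i = 0; i < a.size(); ++i) {
        std::uint8_t diff = static_cast<std::uint8_t>(a[i] ^ b[i]);
        while (diff) {
            // clear the lowest set bit; one loop round per differing bit
            diff = static_cast<std::uint8_t>(diff & (diff - 1));
            ++bits;
        }
    }
    return bits;
}

// For every query descriptor, keep the train descriptor with the smallest distance.
std::vector<SimpleMatch> bruteForceMatch(const std::vector<Descriptor>& query,
                                         const std::vector<Descriptor>& train) {
    std::vector<SimpleMatch> matches;
    if (train.empty()) return matches;
    for (std::size_t q = 0; q < query.size(); ++q) {
        SimpleMatch best = { static_cast<int>(q), -1, std::numeric_limits<int>::max() };
        for (std::size_t t = 0; t < train.size(); ++t) {
            int d = hammingDistance(query[q], train[t]);
            if (d < best.distance) {
                best.trainIdx = static_cast<int>(t);
                best.distance = d;
            }
        }
        matches.push_back(best);
    }
    return matches;
}
```

In this sketch every query descriptor receives exactly one match, its nearest neighbour in the train set, so wrong matches are not filtered out.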

Another impressive type worth noting is the GPU version: class gpu::BruteForceMatcher_GPU

Adding findHomography followed by perspectiveTransform then gives the object's location, but this approach is slow.
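For reference, the per-point arithmetic behind the perspective transform step can be sketched in plain C++. This assumes the 3x3 homography is already known and stored row-major; Pt, applyHomography and projectCorners are hypothetical names, and the sketch does not cover how findHomography estimates the matrix.

```cpp
#include <vector>

// Hypothetical stand-in for cv::Point2f.
struct Pt { double x, y; };

// Apply a 3x3 homography H (row-major) to one point:
// [x', y', w']^T = H * [x, y, 1]^T, then divide by w'.
// Assumes w' is non-zero for the given point.
Pt applyHomography(const double H[9], Pt p) {
    double xh = H[0] * p.x + H[1] * p.y + H[2];
    double yh = H[3] * p.x + H[4] * p.y + H[5];
    double w  = H[6] * p.x + H[7] * p.y + H[8];
    Pt out = { xh / w, yh / w };
    return out;
}

// Project the four corners of a width x height object image into the scene,
// in the order top-left, top-right, bottom-right, bottom-left.
std::vector<Pt> projectCorners(const double H[9], double width, double height) {
    const Pt corners[4] = { {0, 0}, {width, 0}, {width, height}, {0, height} };
    std::vector<Pt> out;
    for (int i = 0; i < 4; ++i) {
        out.push_back(applyHomography(H, corners[i]));
    }
    return out;
}
```

Connecting the four projected corners in order gives the quadrilateral that marks the object in the scene.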

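So I made a change: take the mean of the x coordinates and the mean of the y coordinates of the matched keypoints in the scene image, which is roughly the center of the object.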

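Drawing a rectangle of the same size as the original object around this point gives an approximate location. It is certainly less accurate than the perspective transform, and it is not scale invariant.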
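The center-and-box idea can be sketched on its own in plain C++. Pt, Box, matchCentroid and boxAround are hypothetical names used only for this illustration; the box keeps the object's original width and height, which is exactly why the result is not scale invariant.

```cpp
#include <cstddef>
#include <vector>

// Hypothetical stand-ins for cv::Point2f and an axis-aligned rectangle.
struct Pt { float x, y; };
struct Box { float left, top, right, bottom; };

// Mean position of the matched keypoints.
// Returns false when there are no points, so the caller never divides by zero.
bool matchCentroid(const std::vector<Pt>& pts, Pt& center) {
    if (pts.empty()) return false;
    float sx = 0.0f, sy = 0.0f;
    for (std::size_t i = 0; i < pts.size(); ++i) {
        sx += pts[i].x;
        sy += pts[i].y;
    }
    const float n = static_cast<float>(pts.size());
    center.x = sx / n;
    center.y = sy / n;
    return true;
}

// Box of the object's original size, centered on the estimated center.
Box boxAround(Pt center, float width, float height) {
    Box b = { center.x - width / 2, center.y - height / 2,
              center.x + width / 2, center.y + height / 2 };
    return b;
}
```

Because every match contributes equally to the mean, a few wrong matches far from the object will pull the estimated center toward them.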

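Its robustness should be better, though: as long as the matching succeeds, the center can basically always be located, whereas the perspective transform sometimes draws a very unreliable box because of factors such as an excessive scale change.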
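One possible way to notice such an unreliable box, offered here as an assumption rather than something the program in this post does, is to test whether the four projected corners still form a convex quadrilateral. A twisted or self-intersecting shape could then be discarded in favor of the center-based box. Pt, turn and isConvexQuad are hypothetical names.

```cpp
#include <cstddef>
#include <vector>

// Hypothetical stand-in for cv::Point2f.
struct Pt { double x, y; };

// z-component of (b - a) x (c - b); its sign gives the turn direction at b.
double turn(Pt a, Pt b, Pt c) {
    return (b.x - a.x) * (c.y - b.y) - (b.y - a.y) * (c.x - b.x);
}

// True when the four corners, taken in order, turn the same way at every vertex.
// A self-intersecting ("bow-tie") or fully collapsed quadrilateral is rejected.
bool isConvexQuad(const std::vector<Pt>& q) {
    if (q.size() != 4) return false;
    bool pos = false, neg = false;
    for (std::size_t i = 0; i < 4; ++i) {
        double t = turn(q[i], q[(i + 1) % 4], q[(i + 2) % 4]);
        if (t > 0) pos = true;
        if (t < 0) neg = true;
    }
    return pos != neg;  // exactly one turn direction was observed
}
```

This check only catches gross failures. A box that is convex but badly scaled would still pass, so it complements the center estimate and does not replace it.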

#include "opencv2/objdetect/objdetect.hpp"
#include "opencv2/features2d/features2d.hpp"
#include "opencv2/highgui/highgui.hpp"
#include "opencv2/calib3d/calib3d.hpp"
#include "opencv2/imgproc/imgproc_c.h"
#include "opencv2/imgproc/imgproc.hpp"
#include <string>
#include <vector>
#include <iostream>
using namespace cv;
using namespace std;

// Object image (img1) and scene image (img2); string literals need const char*
const char* image_filename1 = "D:/src.jpg";
const char* image_filename2 = "D:/Demo.jpg";

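int main()
{
    Mat img1 = imread( image_filename1, CV_LOAD_IMAGE_GRAYSCALE );
    Mat img2 = imread( image_filename2, CV_LOAD_IMAGE_GRAYSCALE );
    // stop early if either image could not be loaded
    if( img1.empty() || img2.empty() )
    {
        cout<<"failed to load input images"<<endl;
        return -1;
    }
    int64 st,et;
    // first argument: number of features to keep (30 for the object, 100 for the scene)
    ORB orb1(30,ORB::CommonParams(1.2,1));
    ORB orb2(100,ORB::CommonParams(1.2,1));
    vector<KeyPoint>keys1,keys2;
    Mat descriptor1,descriptor2;
    orb1(img1,Mat(),keys1,descriptor1,false);
    // time only the extraction on the scene image
    st=getTickCount();
    orb2(img2,Mat(),keys2,descriptor2,false);
    et=getTickCount()-st;
    et=et*1000/(double)getTickFrequency();
    cout<<"extract time:"<<et<<"ms"<<endl;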

    vector<DMatch> matches;
    // see also: class gpu::BruteForceMatcher_GPU
    BruteForceMatcher<HammingLUT>matcher; // BruteForceMatcher also supports <Hamming>, <L1<float>>, <L2<float>>
    // FlannBasedMatcher matcher; // not supported here
    st=getTickCount();
    matcher.match(descriptor1,descriptor2,matches);
    et=getTickCount()-st;
    et=et*1000/getTickFrequency();
    cout<<"match time:"<<et<<"ms"<<endl;

    Mat img_matches;
    drawMatches( img1, keys1, img2, keys2,
                 matches, img_matches, Scalar::all(-1), Scalar::all(-1),
                 vector<char>(), DrawMatchesFlags::NOT_DRAW_SINGLE_POINTS );
    imshow("match",img_matches);
    cout<<"match size:"<<matches.size()<<endl;
    /*
    Mat showImg;
    drawMatches(img1,keys1,img2,keys2,matches,showImg);
    imshow( "win", showImg );
    */
    waitKey(0);

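    st=getTickCount();
    // without matches there is nothing to average (avoids dividing by zero)
    if( matches.empty() )
    {
        cout<<"no matches, nothing to locate"<<endl;
        return 0;
    }
    vector<Point2f>pt1;
    vector<Point2f>pt2;
    float x=0,y=0;
    for(size_t i=0;i<matches.size();i++)
    {
        pt1.push_back(keys1[matches[i].queryIdx].pt);
        pt2.push_back(keys2[matches[i].trainIdx].pt);
        // accumulate the coordinates of the matched scene keypoints
        x+=keys2[matches[i].trainIdx].pt.x;
        y+=keys2[matches[i].trainIdx].pt.y;
    }
    // mean position of the matched scene keypoints, used as the object center
    x=x/matches.size();
    y=y/matches.size();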

    Mat img=imread(image_filename2,1);
    // findHomography needs at least 4 point pairs
    if(pt1.size()>=4)
    {
        Mat homo=findHomography(pt1,pt2,CV_RANSAC);
        if(!homo.empty())
        {
            vector<Point2f>src_cornor(4);
            vector<Point2f>dst_cornor(4);
            src_cornor[0]=Point2f(0,0);
            src_cornor[1]=Point2f((float)img1.cols,0);
            src_cornor[2]=Point2f((float)img1.cols,(float)img1.rows);
            src_cornor[3]=Point2f(0,(float)img1.rows);
            // map the object corners into the scene and draw them as a blue quadrilateral
            perspectiveTransform(src_cornor,dst_cornor,homo);
            line(img,dst_cornor[0],dst_cornor[1],Scalar(255,0,0),2);
            line(img,dst_cornor[1],dst_cornor[2],Scalar(255,0,0),2);
            line(img,dst_cornor[2],dst_cornor[3],Scalar(255,0,0),2);
            line(img,dst_cornor[3],dst_cornor[0],Scalar(255,0,0),2);
        }
    }

    /*
    line(img,cvPoint((int)dst_cornor[0].x,(int)dst_cornor[0].y),cvPoint((int)dst_cornor[1].x,(int)dst_cornor[1].y),Scalar(255,0,0),2);
    line(img,cvPoint((int)dst_cornor[1].x,(int)dst_cornor[1].y),cvPoint((int)dst_cornor[2].x,(int)dst_cornor[2].y),Scalar(255,0,0),2);
    line(img,cvPoint((int)dst_cornor[2].x,(int)dst_cornor[2].y),cvPoint((int)dst_cornor[3].x,(int)dst_cornor[3].y),Scalar(255,0,0),2);
    line(img,cvPoint((int)dst_cornor[3].x,(int)dst_cornor[3].y),cvPoint((int)dst_cornor[0].x,(int)dst_cornor[0].y),Scalar(255,0,0),2);
    */

    // red circle at the mean position of the matched keypoints
    // (the original passed CV_FILLED in the lineType slot; thickness 3 is kept)
    circle(img,Point(x,y),10,Scalar(0,0,255),3);
    // red box of the object's original size, centered on that point
    rectangle(img,Point(x-img1.cols/2,y-img1.rows/2),Point(x+img1.cols/2,y+img1.rows/2),Scalar(0,0,255),2);
    imshow("location",img);
    et=getTickCount()-st;
    et=et*1000/getTickFrequency();
    cout<<"location time:"<<et<<"ms"<<endl;
    waitKey(0);
    return 0;
}