A FIRST EXPLORATION OF SOLRCLOUD

SolrCloud has recently been in the news and was merged into Solr trunk, so it was high time to have a fresh look at it.

The SolrCloud wiki page gives various examples but left a few things unclear to me. The examples only show Solr instances that host one core/shard, and the page doesn’t go deep into the relation between cores, collections and shards, or into how to manage configurations.

In this blog, we will have a look at an example where we host multiple shards per instance, and explain some details along the way.

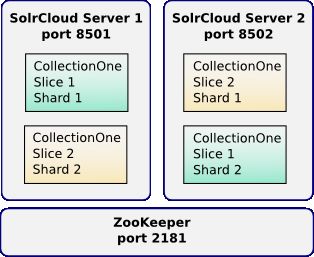

The setup we are going to create is shown in this diagram.

SolrCloud terminology

In SolrCloud you can have multiple collections. A collection can be divided into partitions, which are called slices. Each slice can exist in multiple copies, and these copies of the same slice are called shards. So the word shard has a somewhat confusing meaning in SolrCloud: it is a replica rather than a partition. One of the shards within a slice is the leader, though this is not fixed: any shard can become the leader through a leader-election process.

Each shard is a physical index, so one shard corresponds to one Solr core.
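To make the terminology concrete, here is a minimal sketch of the hierarchy as plain Python data classes. The class and function names are my own invention for illustration and are not part of Solr; the collection, slice and core names mirror the setup built later in this article.

```python
from dataclasses import dataclass, field
from typing import Dict, List


@dataclass
class Shard:
    """One copy of a slice: a physical index, i.e. exactly one Solr core."""
    core_name: str
    node: str            # the Solr instance hosting the core
    leader: bool = False


@dataclass
class Slice:
    """One partition of a collection, existing as one or more shards."""
    name: str
    shards: List[Shard] = field(default_factory=list)

    def leader(self) -> Shard:
        # One shard per slice acts as leader at any given time.
        return next(s for s in self.shards if s.leader)


@dataclass
class Collection:
    name: str
    config_name: str
    slices: Dict[str, Slice] = field(default_factory=dict)

    def cores(self) -> List[str]:
        return [s.core_name for sl in self.slices.values() for s in sl.shards]


def example_layout() -> Collection:
    """Two slices, two shards each, spread over two Solr instances."""
    c = Collection("collectionOne", "config1")
    c.slices["slice1"] = Slice("slice1", [
        Shard("core_collectionOne_slice1_shard1", "localhost:8501", leader=True),
        Shard("core_collectionOne_slice1_shard2", "localhost:8502"),
    ])
    c.slices["slice2"] = Slice("slice2", [
        Shard("core_collectionOne_slice2_shard1", "localhost:8502", leader=True),
        Shard("core_collectionOne_slice2_shard2", "localhost:8501"),
    ])
    return c
```

Reading it top-down: a collection has slices, a slice has shards, and each shard is one core on one Solr instance.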

If you look at the SolrCloud wiki page, you won’t find the word slice anymore. It seems like the idea is to hide the use of this word, though once you start looking a bit deeper you will encounter it anyway, so it’s good to know about it. It’s also good to know that the words shard and slice are often used in ambiguous ways, switching one for the other (even in the sources). Once you know this, things become more comprehensible. An interesting quote in this regard: “removing that ambiguity by introducing another term seemed to add more perceived complexity”. In this article I'll use the words slice and shard as defined above, so that we can distinguish the two concepts.

In SolrCloud, the Solr configuration files like schema.xml and solrconfig.xml are stored in ZooKeeper. You can upload multiple configurations to ZooKeeper, and each collection can be associated with one configuration. The Solr instances hence don’t need the configuration files to be on the file system: they will read them from ZooKeeper.

Running ZooKeeper

Let’s start by launching a ZooKeeper instance. While Solr allows you to run an embedded ZooKeeper instance, I find that this rather complicates things. ZooKeeper is responsible for storing coordination and configuration information for the cluster, and should be highly available. By running it separately, we can start and stop Solr instances without having to think about which one(s) embed ZooKeeper.

For the purpose of this article, you can get it running like this:

- download ZooKeeper 3.3. Don’t take version 3.4, as it’s not recommended for production yet.

- extract the download

- copy conf/zoo_sample.cfg to conf/zoo.cfg

- mkdir /tmp/zookeeper (path can be changed in zoo.cfg)

- ./bin/zkServer.sh start

And that’s it, you have ZooKeeper running.

Setting up Solr instance directories

We are going to run two Solr instances, thus we’ll need two Solr instance directories.

Let’s create two directories like this:

mkdir -p ~/solrcloudtest/sc_server_1
mkdir -p ~/solrcloudtest/sc_server_2

Create the file ~/solrcloudtest/sc_server_1/solr.xml containing:

<solr persistent="true">
  <cores adminPath="/admin/cores" hostPort="8501">
  </cores>
</solr>

Create the file ~/solrcloudtest/sc_server_2/solr.xml containing (note the different hostPort value):

<solr persistent="true">
  <cores adminPath="/admin/cores" hostPort="8502">
  </cores>
</solr>

We need to specify the hostPort attribute since Solr can’t detect the port: it falls back to the default 8983 when the attribute is not specified.

This is all we need: the actual core configuration will be uploaded to ZooKeeper in the next section.
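If you prefer to script this step, the following is a small sketch that creates both instance directories and writes a solr.xml with the right hostPort into each. The function names are hypothetical helpers of mine; the directory names and ports are the ones used in this article.

```python
from pathlib import Path
import xml.etree.ElementTree as ET

# Same minimal solr.xml as shown above, with the port left as a placeholder.
SOLR_XML_TEMPLATE = """<solr persistent="true">
  <cores adminPath="/admin/cores" hostPort="{host_port}">
  </cores>
</solr>
"""


def solr_xml(host_port: int) -> str:
    """Return the minimal solr.xml content for one Solr instance."""
    content = SOLR_XML_TEMPLATE.format(host_port=host_port)
    ET.fromstring(content)  # fail early if the result is not well-formed XML
    return content


def create_instance_dirs(base: Path, ports=(8501, 8502)) -> list:
    """Create sc_server_1, sc_server_2, ... under base, one per port."""
    created = []
    for number, port in enumerate(ports, start=1):
        instance_dir = base / f"sc_server_{number}"
        instance_dir.mkdir(parents=True, exist_ok=True)
        (instance_dir / "solr.xml").write_text(solr_xml(port), encoding="utf-8")
        created.append(instance_dir)
    return created

# Example use: create_instance_dirs(Path.home() / "solrcloudtest")
```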

Creating a Solr configuration in ZooKeeper

As explained before, the Solr configuration needs to be available in ZooKeeper rather than on the file system.

Currently, you can upload a configuration directory from the file system to ZooKeeper as part of the Solr startup. It is also possible to run ZkController’s main method for this purpose (SOLR-2805), but as there’s no script to launch it, the easiest way right now to upload a configuration is by starting Solr:

export SOLR_HOME=/path/to/solr-trunk/solr
# important: move into one of the instance directories!
# (otherwise Solr will start up with defaults and create a core etc.)
cd sc_server_1
java \
  -Djetty.port=8501 \
  -Djetty.home=$SOLR_HOME/example/ \
  -Dsolr.solr.home=. \
  -Dbootstrap_confdir=$SOLR_HOME/example/solr/conf \
  -Dcollection.configName=config1 \
  -DzkHost=localhost:2181 \
  -jar $SOLR_HOME/example/start.jar

Now that the configuration is uploaded, you can stop this Solr instance again (ctrl+c).

We can now check in ZooKeeper that the configuration has been uploaded, and that no collections have been created yet.

For this, go to the ZooKeeper directory, run ./bin/zkCli.sh, and enter the following commands:

[zk: localhost:2181(CONNECTED) 1] ls /configs
[config1]
[zk: localhost:2181(CONNECTED) 2] ls /collections
[]

You could repeat this process to upload more configurations.

If you would like to change a configuration later on, you essentially have to upload it again in the same way. However, the Solr cores that make use of that configuration won’t be reloaded automatically (SOLR-3071).

Starting the Solr servers

All SolrCloud magic is activated by specifying the zkHost parameter. Without this parameter, you run Solr ‘classic’; with the parameter, you run SolrCloud. If you look into the source code, you will see that this parameter causes the creation of a ZkController, and that in various places checks of the kind ‘zkController != null’ are done to change behavior when in cloud mode.

Open two shells, and start the two Solr instances:

export SOLR_HOME=/path/to/solr-trunk/solr
cd sc_server_1
java \
  -Djetty.port=8501 \
  -Djetty.home=$SOLR_HOME/example/ \
  -Dsolr.solr.home=. \
  -DzkHost=localhost:2181 \
  -jar $SOLR_HOME/example/start.jar

and (note: different instance dir & jetty port)

export SOLR_HOME=/path/to/solr-trunk/solr
cd sc_server_2
java \
  -Djetty.port=8502 \
  -Djetty.home=$SOLR_HOME/example/ \
  -Dsolr.solr.home=. \
  -DzkHost=localhost:2181 \
  -jar $SOLR_HOME/example/start.jar

Note that we no longer have to specify the bootstrap_confdir and collection.configName properties (though the latter can still be useful as a default sometimes, but not with the way we will create collections & shards below).
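Since the bootstrap run and the normal runs differ only in two system properties, the command line can be assembled by one small helper. This is just a sketch: solr_start_command is a hypothetical function of mine, and it only builds the argument list shown in this article without launching anything.

```python
from typing import List, Optional


def solr_start_command(
    solr_home: str,
    jetty_port: int,
    zk_host: str = "localhost:2181",
    bootstrap_confdir: Optional[str] = None,
    config_name: Optional[str] = None,
) -> List[str]:
    """Build the java command used in this article to start one Solr instance.

    Pass bootstrap_confdir and config_name only for the one-off run that
    uploads a configuration to ZooKeeper.
    """
    cmd = [
        "java",
        f"-Djetty.port={jetty_port}",
        f"-Djetty.home={solr_home}/example/",
        "-Dsolr.solr.home=.",  # relative: run from inside the instance directory
    ]
    if bootstrap_confdir is not None:
        cmd.append(f"-Dbootstrap_confdir={bootstrap_confdir}")
    if config_name is not None:
        cmd.append(f"-Dcollection.configName={config_name}")
    cmd.append(f"-DzkHost={zk_host}")  # this is what switches on cloud mode
    cmd += ["-jar", f"{solr_home}/example/start.jar"]
    return cmd
```

The resulting list could be handed to subprocess.run(cmd, cwd="sc_server_1"), where the cwd argument plays the role of the cd in the shell version.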

We have not added the -DnumShards parameter either, which you might have encountered elsewhere. When you manually assign cores to shards as we will do below, I don’t think it serves any purpose.

So the situation now is that we have two Solr instances running, both with 0 cores.

Define the cores, collections, slices, shards

We are now going to create cores, and assign each core to a specific collection and slice. It is not necessary to define collections & shards anywhere: they exist implicitly because there are cores that refer to them.

In our example, the collection is called 'collectionOne' and the slices are called 'slice1' and 'slice2'.

Let’s start with creating a core on the first server:

curl 'http://localhost:8501/solr/admin/cores?action=CREATE&name=core_collectionOne_slice1_shard1&collection=collectionOne&shard=slice1&collection.configName=config1'

(in the URL above, and the solr.xml snippet below, the word 'shard' is used for 'slice')
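The request URL follows a regular pattern, so it can be generated. Below is a sketch that rebuilds the URL above from its parts; core_name and create_core_url are hypothetical helpers, and the core naming scheme is simply the convention used in this article, not something Solr requires.

```python
from urllib.parse import urlencode


def core_name(collection: str, slice_name: str, shard_number: int) -> str:
    """Naming convention used in this article: core_<collection>_<slice>_shard<n>."""
    return f"core_{collection}_{slice_name}_shard{shard_number}"


def create_core_url(
    port: int,
    collection: str,
    slice_name: str,
    shard_number: int,
    config_name: str,
    host: str = "localhost",
) -> str:
    """Build the cores-admin CREATE request for one core."""
    params = {
        "action": "CREATE",
        "name": core_name(collection, slice_name, shard_number),
        "collection": collection,
        "shard": slice_name,  # the parameter is called "shard" but holds the slice
        "collection.configName": config_name,
    }
    return f"http://{host}:{port}/solr/admin/cores?{urlencode(params)}"
```

Calling create_core_url(8501, "collectionOne", "slice1", 1, "config1") yields the same URL that was passed to curl above.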

If you have a look now at sc_server_1/solr.xml, you will see the core was added:

<?xml version="1.0" encoding="UTF-8" ?>
<solr persistent="true">
  <cores adminPath="/admin/cores" zkClientTimeout="10000" hostPort="8501"
         hostContext="solr">
    <core
        name="core_collectionOne_slice1_shard1"
        collection="collectionOne"
        shard="slice1"
        instanceDir="core_collectionOne_slice1_shard1/"/>
  </cores>
</solr>

AFAIU the information in ZooKeeper takes precedence, so the attributes collection and shard on the core above serve more as documentation, or they are of course also relevant if you would create cores by listing them in solr.xml rather than using the cores-admin API. Actually listing them in solr.xml might be simpler than doing a bunch of API calls, but there is currently one limitation: you can’t specify the configName this way.

In ZooKeeper, you can verify this collection is associated with the config1 configuration:

[zk: localhost:2181(CONNECTED) 5] get /collections/collectionOne

{"configName":"config1"}

And you can also get an overview of all collections, slices and shards like this:

[zk: localhost:2181(CONNECTED) 0] get /clusterstate.json

{
  "collectionOne": {
    "slice1": {
      "fietsbel:8501_solr_core_collectionOne_slice1_shard1": {
        "shard_id":"slice1",
        "leader":"true",
        "state":"active",
        "core":"core_collectionOne_slice1_shard1",
        "collection":"collectionOne",
        "node_name":"fietsbel:8501_solr",
        "base_url":"http://fietsbel:8501/solr"
      }
    }
  }
}

(the somewhat strange "shard_id":"slice1" is just a back-pointer from the shard to the slice to which it belongs)

Now let’s create the remaining cores: one more on server 1, and two on server 2 (notice the different port numbers to which we send these requests).

curl 'http://localhost:8502/solr/admin/cores?action=CREATE&name=core_collectionOne_slice2_shard1&collection=collectionOne&shard=slice2&collection.configName=config1'
curl 'http://localhost:8501/solr/admin/cores?action=CREATE&name=core_collectionOne_slice2_shard2&collection=collectionOne&shard=slice2&collection.configName=config1'
curl 'http://localhost:8502/solr/admin/cores?action=CREATE&name=core_collectionOne_slice1_shard2&collection=collectionOne&shard=slice1&collection.configName=config1'

Let’s have a look in ZooKeeper at the current state of the clusterstate.json:

[zk: localhost:2181(CONNECTED) 0] get /clusterstate.json

{
  "collectionOne": {
    "slice1": {
      "fietsbel:8501_solr_core_collectionOne_slice1_shard1": {
        "shard_id":"slice1",
        "leader":"true",
        "state":"active",
        "core":"core_collectionOne_slice1_shard1",
        "collection":"collectionOne",
        "node_name":"fietsbel:8501_solr",
        "base_url":"http://fietsbel:8501/solr"
      },
      "fietsbel:8502_solr_core_collectionOne_slice1_shard2": {
        "shard_id":"slice1",
        "state":"active",
        "core":"core_collectionOne_slice1_shard2",
        "collection":"collectionOne",
        "node_name":"fietsbel:8502_solr",
        "base_url":"http://fietsbel:8502/solr"
      }
    },
    "slice2": {
      "fietsbel:8502_solr_core_collectionOne_slice2_shard1": {
        "shard_id":"slice2",
        "leader":"true",
        "state":"active",
        "core":"core_collectionOne_slice2_shard1",
        "collection":"collectionOne",
        "node_name":"fietsbel:8502_solr",
        "base_url":"http://fietsbel:8502/solr"
      },
      "fietsbel:8501_solr_core_collectionOne_slice2_shard2": {
        "shard_id":"slice2",
        "state":"active",
        "core":"core_collectionOne_slice2_shard2",
        "collection":"collectionOne",
        "node_name":"fietsbel:8501_solr",
        "base_url":"http://fietsbel:8501/solr"
      }
    }
  }
}

We see we have:

- one collection named collectionOne

- two slices named slice1 and slice2

- each slice has two shards. Within each slice, one shard is the leader (see "leader":"true"), the other(s) are replicas. Each Solr instance hosts one shard of each slice.
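The same overview can be derived mechanically from the JSON. The sketch below assumes the clusterstate.json layout shown above (collection, then slice, then shard entries with "leader" and "core" properties); summarize is a hypothetical helper, and the sample is a trimmed copy of the state above.

```python
import json

# Trimmed copy of the clusterstate.json shown above (only the fields used here).
SAMPLE_CLUSTERSTATE = """
{
  "collectionOne": {
    "slice1": {
      "fietsbel:8501_solr_core_collectionOne_slice1_shard1":
        {"leader": "true", "state": "active", "core": "core_collectionOne_slice1_shard1"},
      "fietsbel:8502_solr_core_collectionOne_slice1_shard2":
        {"state": "active", "core": "core_collectionOne_slice1_shard2"}
    },
    "slice2": {
      "fietsbel:8502_solr_core_collectionOne_slice2_shard1":
        {"leader": "true", "state": "active", "core": "core_collectionOne_slice2_shard1"},
      "fietsbel:8501_solr_core_collectionOne_slice2_shard2":
        {"state": "active", "core": "core_collectionOne_slice2_shard2"}
    }
  }
}
"""


def summarize(clusterstate: dict) -> dict:
    """Map collection -> slice -> {"leader": core, "replicas": [cores]}."""
    summary = {}
    for collection, slices in clusterstate.items():
        for slice_name, shards in slices.items():
            entry = {"leader": None, "replicas": []}
            for props in shards.values():
                # The leader flag is stored as the string "true", not a boolean.
                if props.get("leader") == "true":
                    entry["leader"] = props["core"]
                else:
                    entry["replicas"].append(props["core"])
            entry["replicas"].sort()
            summary.setdefault(collection, {})[slice_name] = entry
    return summary
```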

Adding some documents

Now let’s check that our setup works by adding some documents:

cd $SOLR_HOME/example/exampledocs
java -Durl=http://localhost:8501/solr/core_collectionOne_slice1_shard1/update -jar post.jar *.xml

We sent the request to one specific core, but you could have picked any other core and the end result would be the same. The request will be forwarded automatically to the leader shard of the appropriate slice. The slice is selected based on the hash of the id of the document.
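To illustrate what hash-based slice selection means, here is a toy sketch. It is not Solr's implementation: the CRC32 hash is a stand-in I picked because it ships with Python, and splitting the 32-bit hash space into equal contiguous ranges is my assumption about how such a scheme could work.

```python
import zlib

HASH_SPACE = 2 ** 32  # number of possible 32-bit hash values


def slice_index(hash32: int, num_slices: int) -> int:
    """Map a 32-bit hash onto one of num_slices equal, contiguous ranges."""
    if not 0 <= hash32 < HASH_SPACE:
        raise ValueError("hash32 must be an unsigned 32-bit value")
    return (hash32 * num_slices) // HASH_SPACE


def slice_for_id(doc_id: str, slice_names: list) -> str:
    """Pick the slice for a document id (CRC32 is a stand-in, not Solr's hash)."""
    hash32 = zlib.crc32(doc_id.encode("utf-8"))
    return slice_names[slice_index(hash32, len(slice_names))]
```

The important property is that the same id always lands in the same slice, no matter which core received the update. It also shows why the number of slices matters: a hash value of 0x60000000 falls in the first of two ranges but in the second of three, so changing the slice count changes where existing documents belong.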

Use the admin stats page to see that documents were added to all cores:

http://localhost:8501/solr/core_collectionOne_slice1_shard1/admin/stats.jsp

http://localhost:8502/solr/core_collectionOne_slice1_shard2/admin/stats.jsp

http://localhost:8502/solr/core_collectionOne_slice2_shard1/admin/stats.jsp

http://localhost:8501/solr/core_collectionOne_slice2_shard2/admin/stats.jsp

In my case, the cores for slice1 got 16 documents, and those for slice2 got 12. Unlike with traditional Solr replication, with SolrCloud updates are sent directly to the replicas.

Querying

Let’s just query all documents. Again, we send the request to one particular shard:

http://localhost:8501/solr/core_collectionOne_slice1_shard1/select?q=*:*

You will see numFound="28", the sum of 16 and 12.

What happens internally is that when you send a request to a core in SolrCloud mode, Solr will look up what collection the core is associated with, and do a distributed query across all slices (it will pick one shard for each slice).
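The "one shard for each slice" step can be pictured as follows. This is a sketch of the idea only: pick_shards is a hypothetical function, choosing at random among the active shards is my assumption about the selection strategy, and the state below is a trimmed version of the clusterstate shown earlier.

```python
import random

# Slices of collectionOne with the two fields this sketch needs per shard.
COLLECTION_ONE = {
    "slice1": {
        "fietsbel:8501_solr_core_collectionOne_slice1_shard1":
            {"state": "active", "base_url": "http://fietsbel:8501/solr",
             "core": "core_collectionOne_slice1_shard1"},
        "fietsbel:8502_solr_core_collectionOne_slice1_shard2":
            {"state": "active", "base_url": "http://fietsbel:8502/solr",
             "core": "core_collectionOne_slice1_shard2"},
    },
    "slice2": {
        "fietsbel:8502_solr_core_collectionOne_slice2_shard1":
            {"state": "active", "base_url": "http://fietsbel:8502/solr",
             "core": "core_collectionOne_slice2_shard1"},
        "fietsbel:8501_solr_core_collectionOne_slice2_shard2":
            {"state": "active", "base_url": "http://fietsbel:8501/solr",
             "core": "core_collectionOne_slice2_shard2"},
    },
}


def pick_shards(collection_state: dict, rng=random) -> dict:
    """Choose one active shard URL per slice (random choice is an assumption)."""
    picks = {}
    for slice_name, shards in collection_state.items():
        active = sorted(
            f"{props['base_url']}/{props['core']}"
            for props in shards.values()
            if props.get("state") == "active"
        )
        if not active:
            raise RuntimeError(f"no active shard for {slice_name}")
        picks[slice_name] = rng.choice(active)
    return picks
```

Every slice contributes exactly one shard to the query, which is why the total number of hits is the sum of the per-slice counts (16 + 12 = 28 in my case) regardless of which replicas were picked.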

The SolrCloud wiki page suggests that you can use the collection name in the URL (like /solr/collection1/select). In our example, this would then be /solr/collectionOne/select. This is not the case in general, however: it is a particularity of that example, where the core happens to be named after the collection. As long as you don’t host more than one slice and shard of the same collection in one Solr server, it can make sense to use such a core naming strategy.

Starting from scratch

When playing around with this stuff, you might want to start from scratch sometimes. In that case, don’t forget you have to remove data in three places: (1) the state stored in ZooKeeper, (2) the cores defined in solr.xml, and (3) the instance directories of these cores.
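Places (2) and (3) are local to each Solr instance directory and can be cleaned with a small script. The following is a sketch under assumptions: reset_instance is a hypothetical helper, it expects a solr.xml shaped like the ones in this article, and it deletes directories, so only point it at a throwaway test setup with Solr stopped. It does not touch ZooKeeper, so place (1) still has to be cleaned separately.

```python
import shutil
from pathlib import Path
import xml.etree.ElementTree as ET


def reset_instance(instance_dir: Path) -> list:
    """Remove all <core> entries from solr.xml and delete their directories.

    Returns the names of the removed cores.
    """
    solr_xml = instance_dir / "solr.xml"
    tree = ET.parse(str(solr_xml))
    cores = tree.getroot().find("cores")
    root_dir = instance_dir.resolve()
    removed = []
    for core in list(cores.findall("core")):
        name = core.get("name")
        core_dir = (instance_dir / core.get("instanceDir", name)).resolve()
        # Safety check: only delete directories located inside the instance dir.
        if core_dir.is_dir() and root_dir in core_dir.parents:
            shutil.rmtree(core_dir)
        cores.remove(core)
        removed.append(name)
    tree.write(str(solr_xml), encoding="UTF-8", xml_declaration=True)
    return removed
```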

When writing the first draft of this article, I was using just one Solr instance and tried to have all 4 cores (including replicas) in that one instance. It turns out there was a bug that prevented this from working correctly (SOLR-3108).

Managing slices & shards

Once you have defined a collection, you cannot (or rather should not) add new slices to it, since documents won’t be automatically moved to the new slice to fit with the hash-based partitioning (SOLR-2595).

Adding more replica shards should be no problem though. While above we have used a very explicit way of assigning each core to a particular slice, you can actually leave that parameter off and Solr will automatically assign the core to some slice within the collection. (I guess this is where the -DnumShards parameter kicks in, to decide whether the new core should be a slice or a shard.)

How about removing replicas? It can be done, but manually. You have to unload the core and remove the related state in ZooKeeper. This is an area that will be improved upon later. (SOLR-3080)

Another interesting thing to note is that when you run in SolrCloud mode, all cores will automatically take part in the cloud thing. If you add a core without specifying the collection, a collection named after that core will be created. You can’t mix ‘classic’ cores and ‘cloud’ cores in one Solr instance.

Conclusion

In this article we have barely scratched the surface of everything SolrCloud is: there’s the update log for durability and recovery, the sync’ing between replicas, the details of distributed querying and updating, the special _version_ field to help with some of these points, the coordination (election of overseer & shard leaders), … Much interesting stuff to explore!

As becomes clear from this article, SolrCloud isn't as easy to use yet as ElasticSearch. It still needs polishing and there's more manual work involved in setting it up. To some extent this has its advantages, as long as it's clear what you can expect from the system, and what you have to take care of yourself. Anyway, it's great to see that the Solr developers were able to catch up with the cloud world.