kettle 5.1.0 连接 Hadoop hive 2 (hive 1.2.1)

1. 配置HiveServer2,在hive-site.xml中添加如下的属性

<property>

<name>hive.server2.thrift.bind.host</name>

<value>192.168.56.101</value>

<description>Bind host on which to run the HiveServer2 Thrift service.</description>

</property>

<property>

<name>hive.server2.thrift.port</name>

<value>10001</value>

<description>Port number of HiveServer2 Thrift interface when hive.server2.transport.mode is 'binary'.</description>

</property>

<property>

<name>hive.server2.thrift.min.worker.threads</name>

<value>5</value>

<description>Minimum number of Thrift worker threads</description>

</property>

<property>

<name>hive.server2.thrift.max.worker.threads</name>

<value>500</value>

<description>Maximum number of Thrift worker threads</description>

</property>

2. 启动HiveServer2

$HIVE_HOME/bin/hiveserver2

3. 修改kettle的配置文件

%KETTLE_HOME%/plugins/pentaho-big-data-plugin/plugin.properties

修改成下面的值

active.hadoop.configuration=hdp20

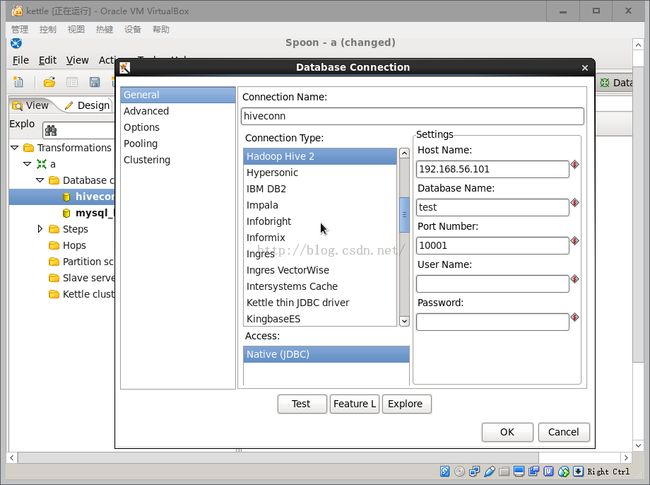

4. 启动kettle,配置数据库连接,如图1所示

5. 测试

(1)在hive中建立测试表和数据

CREATE DATABASE test;

USE test;

CREATE TABLE a(a int,b int) ROW FORMAT DELIMITED FIELDS TERMINATED BY ',';

LOAD DATA LOCAL INPATH '/home/grid/a.txt' INTO TABLE a;

SELECT * FROM a;

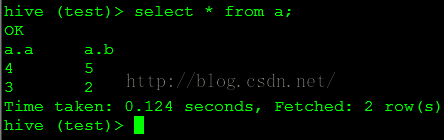

查询结果如图2所示

(3)点击预览,显示的数据如图4所示

https://cwiki.apache.org/confluence/display/Hive/Setting+up+HiveServer2

http://stackoverflow.com/questions/25625088/pentaho-data-integration-with-hive-connection

http://blog.csdn.net/victor_ww/article/details/40041589

<property>

<name>hive.server2.thrift.bind.host</name>

<value>192.168.56.101</value>

<description>Bind host on which to run the HiveServer2 Thrift service.</description>

</property>

<property>

<name>hive.server2.thrift.port</name>

<value>10001</value>

<description>Port number of HiveServer2 Thrift interface when hive.server2.transport.mode is 'binary'.</description>

</property>

<property>

<name>hive.server2.thrift.min.worker.threads</name>

<value>5</value>

<description>Minimum number of Thrift worker threads</description>

</property>

<property>

<name>hive.server2.thrift.max.worker.threads</name>

<value>500</value>

<description>Maximum number of Thrift worker threads</description>

</property>

2. 启动HiveServer2

$HIVE_HOME/bin/hiveserver2

3. 修改kettle的配置文件

%KETTLE_HOME%/plugins/pentaho-big-data-plugin/plugin.properties

修改成下面的值

active.hadoop.configuration=hdp20

4. 启动kettle,配置数据库连接,如图1所示

图1

5. 测试

(1)在hive中建立测试表和数据

CREATE DATABASE test;

USE test;

CREATE TABLE a(a int,b int) ROW FORMAT DELIMITED FIELDS TERMINATED BY ',';

LOAD DATA LOCAL INPATH '/home/grid/a.txt' INTO TABLE a;

SELECT * FROM a;

查询结果如图2所示

图2

(2)在kettle建立表输入步骤,结果如图3所示图3

注意:这里需要加上库名test,否则查询的是default库。(3)点击预览,显示的数据如图4所示

图4

参考:https://cwiki.apache.org/confluence/display/Hive/Setting+up+HiveServer2

http://stackoverflow.com/questions/25625088/pentaho-data-integration-with-hive-connection

http://blog.csdn.net/victor_ww/article/details/40041589