Configuring HDFS Federation on an Existing Hadoop Cluster

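I. Objectives

1. The existing Hadoop cluster has a single NameNode; add a second NameNode.

2. Have the two NameNodes form an HDFS Federation.

3. Do this without restarting the existing cluster or interrupting data access.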

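II. Environment

Four CentOS release 6.4 virtual machines with the following IP addresses:

192.168.56.101 master

192.168.56.102 slave1

192.168.56.103 slave2

192.168.56.104 kettle

kettle is a newly added "clean" machine with passwordless SSH already configured; it will serve as the new NameNode.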

Software versions:

hadoop 2.7.2

hbase 1.1.4

hive 2.0.0

spark 1.5.0

zookeeper 3.4.8

kylin 1.5.1

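Existing configuration:

master runs the Hadoop NameNode, SecondaryNameNode, and ResourceManager, plus the HBase HMaster.

slave1 and slave2 run the Hadoop DataNode and NodeManager, plus the HBase HRegionServer.

master, slave1, and slave2 also form a three-node ZooKeeper ensemble.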

III. Configuration Steps

1. Edit hdfs-site.xml on master. The modified file is shown below.

    <?xml version="1.0" encoding="UTF-8"?>
    <?xml-stylesheet type="text/xsl" href="configuration.xsl"?>
    <configuration>
      <property>
        <name>dfs.namenode.name.dir</name>
        <value>file:/home/grid/hadoop-2.7.2/hdfs/name</value>
      </property>
      <property>
        <name>dfs.datanode.data.dir</name>
        <value>file:/home/grid/hadoop-2.7.2/hdfs/data</value>
      </property>
      <property>
        <name>dfs.replication</name>
        <value>1</value>
      </property>
      <property>
        <name>dfs.webhdfs.enabled</name>
        <value>true</value>
      </property>
      <!-- Newly added properties -->
      <property>
        <name>dfs.nameservices</name>
        <value>ns1,ns2</value>
      </property>
      <property>
        <name>dfs.namenode.rpc-address.ns1</name>
        <value>master:9000</value>
      </property>
      <property>
        <name>dfs.namenode.http-address.ns1</name>
        <value>master:50070</value>
      </property>
      <property>
        <name>dfs.namenode.secondary.http-address.ns1</name>
        <value>master:9001</value>
      </property>
      <property>
        <name>dfs.namenode.rpc-address.ns2</name>
        <value>kettle:9000</value>
      </property>
      <property>
        <name>dfs.namenode.http-address.ns2</name>
        <value>kettle:50070</value>
      </property>
      <property>
        <name>dfs.namenode.secondary.http-address.ns2</name>
        <value>kettle:9001</value>
      </property>

    </configuration>

2. Copy hdfs-site.xml from master to the other nodes in the cluster.

    # Run on master, in /home/grid/hadoop-2.7.2/etc/hadoop
    scp hdfs-site.xml slave1:/home/grid/hadoop-2.7.2/etc/hadoop/
    scp hdfs-site.xml slave2:/home/grid/hadoop-2.7.2/etc/hadoop/

3. Copy the Java directory, the Hadoop directory, and the environment variable files from master to kettle.

    scp -rp /home/grid/hadoop-2.7.2 kettle:/home/grid/
    scp -rp /home/grid/jdk1.7.0_75 kettle:/home/grid/
    # Run as root
    scp -p /etc/profile.d/* kettle:/etc/profile.d/

4. Start the new NameNode and SecondaryNameNode.

    # Run on kettle
    source /etc/profile
    ln -s /home/grid/hadoop-2.7.2 /home/grid/hadoop
    $HADOOP_HOME/sbin/hadoop-daemon.sh start namenode
    $HADOOP_HOME/sbin/hadoop-daemon.sh start secondarynamenode

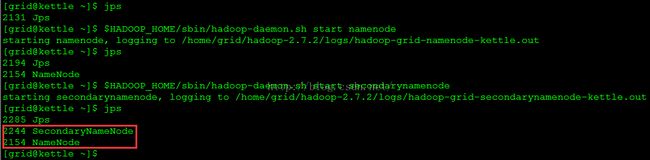

执行后启动了NameNode、SecondaryNameNode进程,如图1所示。

图1
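In this walkthrough the NameNode storage directory (dfs.namenode.name.dir, located under /home/grid/hadoop-2.7.2) is copied to kettle in step 3 together with the rest of the Hadoop directory. When a new NameNode starts from an empty storage directory instead, the Federation guide listed under References formats it first with the cluster ID of the existing cluster, using hdfs namenode -format -clusterId <cluster_id>. The following is a minimal sketch of looking up that ID. The helper name read_cluster_id is hypothetical, and the VERSION path in the comments assumes the dfs.namenode.name.dir value from step 1.

```shell
# Hypothetical helper: print the clusterID stored in a NameNode VERSION file.
# VERSION is a plain key=value file that contains a line such as
#   clusterID=CID-...
read_cluster_id() {
    local version_file="$1"
    # Print only the value part of the clusterID line.
    sed -n 's/^clusterID=//p' "$version_file"
}

# Example use (assumed paths, not executed here):
#   On master:
#     read_cluster_id /home/grid/hadoop-2.7.2/hdfs/name/current/VERSION
#   On kettle, before the first start of a NameNode with an empty name directory:
#     $HADOOP_HOME/bin/hdfs namenode -format -clusterId <cluster_id>
```

If the cluster IDs differ, the Federation guide notes that the additional NameNode will not become part of the federated cluster.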

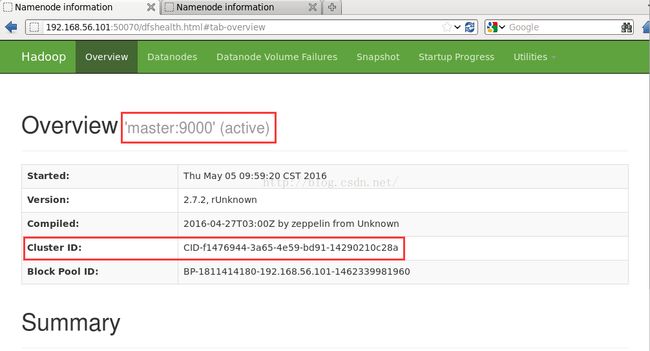

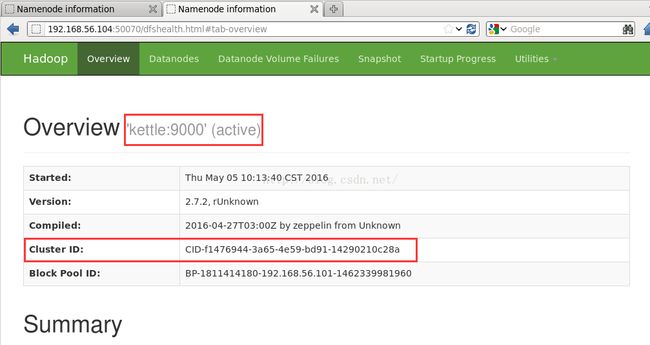

5. 刷新DataNode收集新添加的NameNode# 在集群中任意一台机器上执行均可 $HADOOP_HOME/bin/hdfs dfsadmin -refreshNamenodes slave1:50020 $HADOOP_HOME/bin/hdfs dfsadmin -refreshNamenodes slave2:50020至此,HDFS Federation配置完成,从web查看两个NameNode的状态分别如图2、图3所示。

图2

图3
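Step 5 issues one refreshNamenodes call per DataNode. With more DataNodes the calls can be wrapped in a loop. The following is a minimal sketch, assuming hdfs is on the PATH; the function name refresh_datanodes and the HDFS_BIN override variable are hypothetical and exist only for illustration.

```shell
# Hypothetical helper: ask each DataNode to reload its list of NameNodes.
# Arguments: <datanode-ipc-port> <datanode-host>...
# HDFS_BIN (assumed name) can point at a specific hdfs launcher; defaults to "hdfs".
refresh_datanodes() {
    local ipc_port="$1"
    shift
    local host
    for host in "$@"; do
        # Stop at the first DataNode that cannot be refreshed.
        "${HDFS_BIN:-hdfs}" dfsadmin -refreshNamenodes "${host}:${ipc_port}" || return 1
    done
}

# Example use with the hosts and port from step 5 (not executed here):
#   refresh_datanodes 50020 slave1 slave2
```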

四、测试

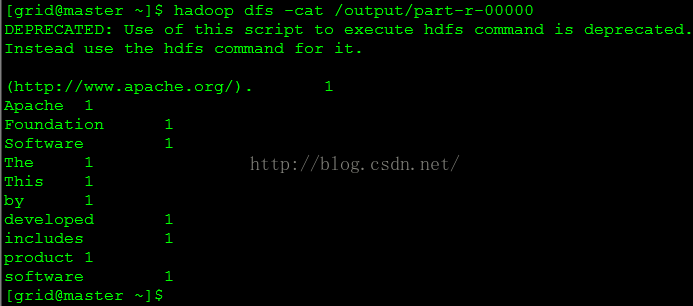

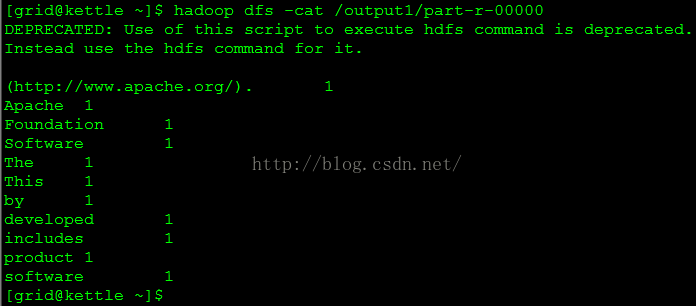

# 向HDFS上传一个文本文件 hadoop dfs -put /home/grid/hadoop/NOTICE.txt / # 分别在两台NameNode节点上运行Hadoop自带的例子 # 在master上执行 hadoop jar /home/grid/hadoop-2.7.2/share/hadoop/mapreduce/hadoop-mapreduce-examples-2.7.2.jar wordcount /NOTICE.txt /output # 在kettle上执行 hadoop jar /home/grid/hadoop-2.7.2/share/hadoop/mapreduce/hadoop-mapreduce-examples-2.7.2.jar wordcount /NOTICE.txt /output1用下面的命令查看两个输出结果,分别如图4、图5所示。

hadoop dfs -cat /output/part-r-00000 hadoop dfs -cat /output1/part-r-00000

图4

图5
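In a federation each NameNode serves its own namespace, and a path without a scheme, such as /NOTICE.txt, is resolved against fs.defaultFS in core-site.xml on the machine where the command runs. To address a specific namespace regardless of that setting, a fully qualified hdfs:// URI can be used. The following is a minimal sketch, assuming the RPC addresses from step 1; the function name list_namespace_roots and the HDFS_BIN override variable are hypothetical.

```shell
# Hypothetical helper: list the root directory of each NameNode's namespace.
# Arguments: <namenode-host:rpc-port>...
# HDFS_BIN (assumed name) can point at a specific hdfs launcher; defaults to "hdfs".
list_namespace_roots() {
    local addr
    for addr in "$@"; do
        echo "== hdfs://${addr}/"
        # The full URI selects the NameNode explicitly instead of fs.defaultFS.
        "${HDFS_BIN:-hdfs}" dfs -ls "hdfs://${addr}/" || return 1
    done
}

# Example use with the addresses configured for ns1 and ns2 (not executed here):
#   list_namespace_roots master:9000 kettle:9000
```

Comparing the two listings shows which namespace actually holds the uploaded file and the job output directories.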

References:

http://hadoop.apache.org/docs/r2.7.2/hadoop-project-dist/hadoop-hdfs/Federation.html