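Linux Protocol Stack - netfilter (2) - conntrack

Connection tracking (conntrack) tracks and records the state of connections by keeping state for the packets that traverse the protocol stack. This gives the firewall a reference for inspecting connection state, and it also makes address translation easier when a packet needs NAT.

This article analyzes the source code of the conntrack implementation in Linux kernel 2.6.31.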

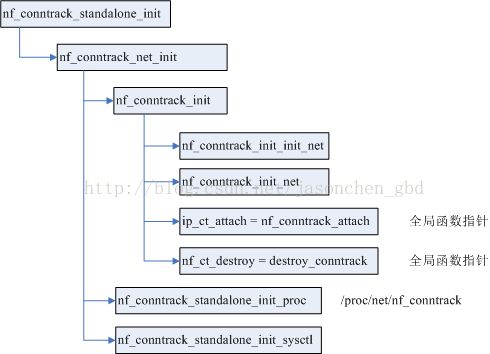

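1. conntrack module initialization

1.1 conntrack module entry point

Initializing the conntrack module mainly means initializing the global data structures it needs. The functions in the code flow form a plain call chain; their concrete implementations are examined below.

The nf_conntrack_init_init_net() function:

1. Assigns the global variables nf_conntrack_htable_size and nf_conntrack_max according to the amount of memory. They specify, respectively, the size of the hash table that holds conntrack entries and the number of conntrack entries the system may create.

2. Creates a slab cache for struct nf_conn: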

nf_conntrack_cachep = kmem_cache_create("nf_conntrack",
sizeof(struct nf_conn), 0, SLAB_DESTROY_BY_RCU, NULL);

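This cache is used to allocate new struct nf_conn objects. The state of each connection is described by one instance of this structure. Every connection has two directions, original and reply, and each direction is represented by a tuple. A tuple contains the information that identifies packets in that direction, such as source IP, destination IP, source port and destination port.

3. Assigns default values to the global nf_ct_l3protos[]:

Every element of nf_ct_l3protos[] is set to nf_conntrack_l3proto_generic, the handlers that do not distinguish between L3 protocols. Later initialization assigns protocol-specific values for individual L3 protocols (for example, when the IPv4-specific conntrack is initialized in section 1.2). As the definition below shows, this is a set of operation functions.

The global data is defined as:

struct nf_conntrack_l3proto *nf_ct_l3protos[AF_MAX] __read_mostly;

struct nf_conntrack_l3proto nf_conntrack_l3proto_generic __read_mostly = {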

.l3proto = PF_UNSPEC,

.name = "unknown",

.pkt_to_tuple = generic_pkt_to_tuple,

.invert_tuple = generic_invert_tuple,

.print_tuple = generic_print_tuple,

.get_l4proto = generic_get_l4proto,

};

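4. Allocates one page of space for the global hash table nf_ct_helper_hash, using nf_ct_alloc_hashtable().

static struct hlist_head *nf_ct_helper_hash __read_mostly;

This hash table holds the registered helpers used by the helper-type conntrack extension (see section 2.3).

Two points deserve attention here:

1) sizeof(struct hlist_nulls_head) and sizeof(struct hlist_head) must be equal.

2) The roundup(x, y) macro ensures that whole pages are allocated.

#define roundup(x, y) ((((x) + ((y) - 1)) / (y)) * (y))

It rounds x up to the nearest multiple of y; for example, x=46 and y=32 gives 64.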
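The arithmetic can be checked in user space. The sketch below copies the macro quoted above; the helper buckets_for_whole_pages() and its page and bucket sizes are assumptions made up for illustration and are not kernel code.

```c
#include <stddef.h>

/* Same definition as the macro quoted in the text. */
#define roundup(x, y) ((((x) + ((y) - 1)) / (y)) * (y))

/*
 * Hypothetical helper: round a bucket count up so that the table
 * fills whole pages. page_size and bucket_size are caller-supplied
 * example values; nothing is read from the running system.
 */
static size_t buckets_for_whole_pages(size_t buckets, size_t page_size,
                                      size_t bucket_size)
{
    size_t per_page = page_size / bucket_size;

    return roundup(buckets, per_page);
}
```

With a 4096-byte page and 8-byte bucket heads (assumed values), 1000 requested buckets become 1024, which is exactly two pages.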

5. Registers helper_extend in the global array nf_ct_ext_types[] and adjusts helper_extend.alloc_size.

struct nf_ct_ext_type *nf_ct_ext_types[NF_CT_EXT_NUM];

static struct nf_ct_ext_type helper_extend __read_mostly = {

.len = sizeof(struct nf_conn_help),

.align = __alignof__(struct nf_conn_help),

.id = NF_CT_EXT_HELPER,

};

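There are four types of conntrack extensions, which is also the number of elements in the global array nf_ct_ext_types[]. The meaning of each element is defined as follows: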

enum nf_ct_ext_id

{

NF_CT_EXT_HELPER,

NF_CT_EXT_NAT,

NF_CT_EXT_ACCT,

NF_CT_EXT_ECACHE,

NF_CT_EXT_NUM,

};

struct nf_ct_ext_type is defined as follows:

struct nf_ct_ext_type

{

/* Destroys relationships (can be NULL). */

void (*destroy)(struct nf_conn *ct);

/* Called when realloacted (can be NULL).

Contents has already been moved. */

void (*move)(void *new, void *old);

enum nf_ct_ext_id id;

unsigned int flags;

/* Length and min alignment. */

u8 len;

u8 align;

/* initial size of nf_ct_ext. */

u8 alloc_size;

};

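The nf_conntrack_init_net() function:

This function initializes the net->ct member. net is the network namespace being set up; on a system that uses only the initial namespace it is the global variable init_net. Its ct member is defined as follows: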

struct netns_ct {

atomic_t count;

unsigned int expect_count;

struct hlist_nulls_head *hash;

struct hlist_head *expect_hash;

struct hlist_nulls_head unconfirmed;

struct hlist_nulls_head dying;

struct ip_conntrack_stat *stat;

int sysctl_events;

unsigned int sysctl_events_retry_timeout;

int sysctl_acct;

int sysctl_checksum;

unsigned int sysctl_log_invalid; /* Log invalid packets */

#ifdef CONFIG_SYSCTL

struct ctl_table_header *sysctl_header;

struct ctl_table_header *acct_sysctl_header;

struct ctl_table_header *event_sysctl_header;

#endif

int hash_vmalloc;

int expect_vmalloc;

};

Walkthrough of the function:

static int nf_conntrack_init_net(struct net *net)

{

int ret;

atomic_set(&net->ct.count, 0); /* reset the entry counter to 0 */

/* initialize the unconfirmed list */

INIT_HLIST_NULLS_HEAD(&net->ct.unconfirmed, UNCONFIRMED_NULLS_VAL);

/* initialize the dying list */

INIT_HLIST_NULLS_HEAD(&net->ct.dying, DYING_NULLS_VAL);

/* allocate zeroed per-CPU space for net->ct.stat, which holds the statistics */

net->ct.stat = alloc_percpu(struct ip_conntrack_stat);

if (!net->ct.stat) {

ret = -ENOMEM;

goto err_stat;

}

/* Initialize the conntrack hash table; its size was set earlier. As before, nf_conntrack_htable_size is rounded up here so that the table fills a whole number of pages. */

net->ct.hash = nf_ct_alloc_hashtable(&nf_conntrack_htable_size,

&net->ct.hash_vmalloc, 1);

if (!net->ct.hash) {

ret = -ENOMEM;

printk(KERN_ERR "Unable to create nf_conntrack_hash\n");

goto err_hash;

}

/* Initialize net->ct.expect_hash and its cache, and create the corresponding file under /proc; this function is described separately below. */

ret = nf_conntrack_expect_init(net);

if (ret < 0)

goto err_expect;

/* Register acct_extend in the global array as nf_ct_ext_types[NF_CT_EXT_ACCT]. */

ret = nf_conntrack_acct_init(net);

if (ret < 0)

goto err_acct;

ret = nf_conntrack_ecache_init(net); /* event cache of netfilter */

if (ret < 0)

goto err_ecache;

/* a standalone fake conntrack */

/* Set up fake conntrack:

- to never be deleted, not in any hashes */

atomic_set(&nf_conntrack_untracked.ct_general.use, 1);

/* - and look it like as a confirmed connection */

set_bit(IPS_CONFIRMED_BIT, &nf_conntrack_untracked.status);

return 0;

}

The nf_conntrack_expect_init() function:

int nf_conntrack_expect_init(struct net *net)

{

int err = -ENOMEM;

if (net_eq(net, &init_net)) {

if (!nf_ct_expect_hsize) {

/* size of the expectation hash table */

nf_ct_expect_hsize = nf_conntrack_htable_size / 256;

if (!nf_ct_expect_hsize)

nf_ct_expect_hsize = 1;

}

/* maximum number of expectations */

nf_ct_expect_max = nf_ct_expect_hsize * 4;

}

/* allocate the expectation hash table */

net->ct.expect_count = 0;

net->ct.expect_hash = nf_ct_alloc_hashtable(&nf_ct_expect_hsize,

&net->ct.expect_vmalloc, 0);

if (net->ct.expect_hash == NULL)

goto err1;

if (net_eq(net, &init_net)) {

/* slab cache for expectations */

nf_ct_expect_cachep = kmem_cache_create("nf_conntrack_expect",

sizeof(struct nf_conntrack_expect),

0, 0, NULL);

if (!nf_ct_expect_cachep)

goto err2;

}

/* set up the /proc/net/nf_conntrack_expect file operations */

err = exp_proc_init(net);

if (err < 0)

goto err3;

return 0;

}
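The sizing rule in nf_conntrack_expect_init() is easy to misread, so here is a minimal user-space sketch of it. struct expect_sizes and expect_sizes_for() are hypothetical names introduced for this example; the sketch also ignores the case where nf_ct_expect_hsize was already set before the function runs, and the rounding that nf_ct_alloc_hashtable() applies afterwards.

```c
/* Hypothetical container for the two derived values. */
struct expect_sizes {
    unsigned int hsize; /* number of expectation hash buckets */
    unsigned int max;   /* upper bound on the number of expectations */
};

/* Derive both values from the conntrack hash table size. */
static struct expect_sizes expect_sizes_for(unsigned int htable_size)
{
    struct expect_sizes s;

    s.hsize = htable_size / 256;
    if (s.hsize == 0)
        s.hsize = 1; /* never fall below one bucket */
    s.max = s.hsize * 4;
    return s;
}
```

For a conntrack table of 16384 buckets this gives 64 expectation buckets and at most 256 expectations; a very small table still gets one bucket and a limit of 4.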

Before going further, a word about struct hlist_nulls_head and the INIT_HLIST_NULLS_HEAD macro:

/*

* Special version of lists, where end of list is not a NULL pointer,

* but a 'nulls' marker, which can have many different values.

* (up to 2^31 different values guaranteed on all platforms)

*

* In the standard hlist, termination of a list is the NULL pointer.

* In this special 'nulls' variant, we use the fact that objects stored in

* a list are aligned on a word (4 or 8 bytes alignment).

* We therefore use the last significant bit of 'ptr' :

* Set to 1 : This is a 'nulls' end-of-list marker (ptr >> 1)

* Set to 0 : This is a pointer to some object (ptr)

*/

struct hlist_nulls_head {

struct hlist_nulls_node *first;

};

struct hlist_nulls_node {

struct hlist_nulls_node *next, **pprev;

};

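/* Note that the right-hand side is the value stored in first, not an address. The value is always odd, i.e. its lowest bit is 1.

An ordinary list uses NULL to detect the end of the list. In the Linux kernel, list nodes are aligned to 4 or 8 bytes, so the low two bits of a node pointer are never used and can be reused: a lowest bit of 1 means end of list, 0 means a real node follows.

The macro guarantees that the lowest bit is 1 while the other bits are arbitrary, so 2^31 distinct values are possible.

The macro below initializes an hlist_nulls head, which is why it assigns to first.

*/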

#define INIT_HLIST_NULLS_HEAD(ptr, nulls) \

((ptr)->first = (struct hlist_nulls_node *) (1UL | (((long)nulls) << 1)))

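The unconfirmed and dying lists initialized above rely on this property. The lowest bit marks the end of the list and the remaining bits carry the nulls value, so on the unconfirmed list a node pointer whose low two bits are binary 01 means end of list, and on the dying list the end marker has low two bits 11. Every collision chain in net->ct.hash is also initialized with INIT_HLIST_NULLS_HEAD, using the bucket index as the nulls value:

for (i = 0; i < nr_slots; i++) INIT_HLIST_NULLS_HEAD(&hash[i], i);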
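The marker encoding can be tried outside the kernel. The following is a minimal sketch with hypothetical user-space helpers (make_nulls_marker(), is_nulls_marker() and nulls_value() are names chosen for this example); it works on plain integers of pointer width instead of real list nodes.

```c
#include <stdint.h>

/* Encode a nulls value as an end-of-list marker: the lowest bit is set. */
static uintptr_t make_nulls_marker(unsigned long value)
{
    return (uintptr_t)1 | ((uintptr_t)value << 1);
}

/* A real node pointer is word aligned, so its lowest bit is 0. */
static int is_nulls_marker(uintptr_t ptr)
{
    return (int)(ptr & 1);
}

/* Recover the value that was stored in a marker. */
static unsigned long nulls_value(uintptr_t marker)
{
    return (unsigned long)(marker >> 1);
}
```

Storing the bucket index in the marker is what makes the scheme useful for lockless lookups: a reader that reaches an end marker whose value differs from the bucket it started in knows the entry it was following has moved to another chain, and it can restart the walk.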

Summary of the data structures initialized so far:

◇ SLAB caches used by the conntrack module:

1. nf_conntrack_cachep

nf_conntrack_cachep = kmem_cache_create("nf_conntrack",

sizeof(struct nf_conn),

0, SLAB_DESTROY_BY_RCU, NULL);

The SLAB cache for nf_conn; every conntrack instance is allocated from it.

2. nf_ct_expect_cachep

nf_ct_expect_cachep = kmem_cache_create("nf_conntrack_expect",

sizeof(struct nf_conntrack_expect),

0, 0, NULL);

The SLAB cache for nf_conntrack_expect; every expectation instance is allocated from it.

◇ Hash tables in the net structure:

net->ct.hash

Holds the nf_conn instances as a hash table of chains. What the chains actually store are struct nf_conntrack_tuple_hash entries; to get the nf_conn itself, call nf_ct_tuplehash_to_ctrack(hash). The original and reply tuples have different hash values.

struct nf_conntrack_tuple_hash {

struct hlist_nulls_node hnnode;

struct nf_conntrack_tuple tuple;

};

The struct nf_conn structure:

/* uniquely describes the state of one connection */

struct nf_conn {

......

/* One entry per direction, original and reply. Creating a connection adds one nf_conntrack_tuple_hash for each direction to the hash table. */

struct nf_conntrack_tuple_hash tuplehash[IP_CT_DIR_MAX];

union nf_conntrack_proto proto;

......

};

The struct nf_conntrack_tuple structure:

/* uniquely identifies a connection */

struct nf_conntrack_tuple

{

struct nf_conntrack_man src;

/* These are the parts of the tuple which are fixed. */

struct {

union nf_inet_addr u3;

union {

/* Add other protocols here. */

__be16 all;

struct {

__be16 port;

} tcp;

struct {

__be16 port;

} udp;

struct {

u_int8_t type, code;

} icmp;

struct {

__be16 port;

} dccp;

struct {

__be16 port;

} sctp;

struct {

__be16 key;

} gre;

} u;

/* The protocol. */

u_int8_t protonum;

/* The direction (for tuplehash) */

u_int8_t dir; /* original or reply */

} dst;

};

net->ct.expect_hash

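Holds the expectations (struct nf_conntrack_expect) that ALGs create for the connections they anticipate.

net->ct.unconfirmed

Holds conntrack entries that have not been confirmed yet. After a packet enters the router it passes two conntrack hook points. The first hook creates the conntrack entry, but the entry is not confirmed at that point, so it is placed on the unconfirmed list. Only if the packet survives processing and filtering in the protocol stack and reaches the second hook is the entry added to net->ct.hash.

net->ct.dying

Holds conntrack entries that have timed out and are about to be deleted.

nf_ct_ext_types[] and nf_ct_helper_hash

Structures used by the conntrack extensions. There are four extension types; the helper type needs an additional hash table, nf_ct_helper_hash.

The conntrack entries in the system can be inspected through the proc file /proc/net/nf_conntrack; for the file format, see the implementation of ct_seq_show().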

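1.2 conntrack_ipv4 module entry point

The IPv4-specific conntrack module is initialized as follows:

nf_conntrack_l3proto_ipv4_init() does the work shown in the figure above:

1. Registers the get handler for the socket option, so that getsockopt() on a socket can return the corresponding original-direction tuple.

2. Registers the three L4 protocols tcp4, udp4 and icmp in the global two-dimensional array nf_ct_protos[][], which is defined as:

static struct nf_conntrack_l4proto **nf_ct_protos[PF_MAX] __read_mostly;

Each element of the array points to a struct nf_conntrack_l4proto; the three instances registered here are defined as follows: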

nf_conntrack_l4proto_tcp4 (nf_conntrack_proto_tcp.c):

struct nf_conntrack_l4proto nf_conntrack_l4proto_tcp4 __read_mostly =

{

.l3proto = PF_INET,

.l4proto = IPPROTO_TCP,

.name = "tcp",

.pkt_to_tuple = tcp_pkt_to_tuple,

.invert_tuple = tcp_invert_tuple,

.print_tuple = tcp_print_tuple,

.print_conntrack = tcp_print_conntrack,

.packet = tcp_packet,

.new = tcp_new,

.error = tcp_error,

#if defined(CONFIG_NF_CT_NETLINK) || defined(CONFIG_NF_CT_NETLINK_MODULE)

.to_nlattr = tcp_to_nlattr,

.nlattr_size = tcp_nlattr_size,

.from_nlattr = nlattr_to_tcp,

.tuple_to_nlattr = nf_ct_port_tuple_to_nlattr,

.nlattr_to_tuple = nf_ct_port_nlattr_to_tuple,

.nlattr_tuple_size = tcp_nlattr_tuple_size,

.nla_policy = nf_ct_port_nla_policy,

#endif

#ifdef CONFIG_SYSCTL

.ctl_table_users = &tcp_sysctl_table_users,

.ctl_table_header = &tcp_sysctl_header,

.ctl_table = tcp_sysctl_table,

#ifdef CONFIG_NF_CONNTRACK_PROC_COMPAT

.ctl_compat_table = tcp_compat_sysctl_table,

#endif

#endif

};

nf_conntrack_l4proto_udp4 (nf_conntrack_proto_udp.c):

struct nf_conntrack_l4proto nf_conntrack_l4proto_udp4 __read_mostly =

{

.l3proto = PF_INET,

.l4proto = IPPROTO_UDP,

.name = "udp",

.pkt_to_tuple = udp_pkt_to_tuple,

.invert_tuple = udp_invert_tuple,

.print_tuple = udp_print_tuple,

.packet = udp_packet,

.new = udp_new,

.error = udp_error,

#if defined(CONFIG_NF_CT_NETLINK) || defined(CONFIG_NF_CT_NETLINK_MODULE)

.tuple_to_nlattr = nf_ct_port_tuple_to_nlattr,

.nlattr_to_tuple = nf_ct_port_nlattr_to_tuple,

.nlattr_tuple_size = nf_ct_port_nlattr_tuple_size,

.nla_policy = nf_ct_port_nla_policy,

#endif

#ifdef CONFIG_SYSCTL

.ctl_table_users = &udp_sysctl_table_users,

.ctl_table_header = &udp_sysctl_header,

.ctl_table = udp_sysctl_table,

#ifdef CONFIG_NF_CONNTRACK_PROC_COMPAT

.ctl_compat_table = udp_compat_sysctl_table,

#endif

#endif

};

nf_conntrack_l4proto_icmp (nf_conntrack_proto_icmp.c):

struct nf_conntrack_l4proto nf_conntrack_l4proto_icmp __read_mostly =

{

.l3proto = PF_INET,

.l4proto = IPPROTO_ICMP,

.name = "icmp",

.pkt_to_tuple = icmp_pkt_to_tuple,

.invert_tuple = icmp_invert_tuple,

.print_tuple = icmp_print_tuple,

.packet = icmp_packet,

.new = icmp_new,

.error = icmp_error,

.destroy = NULL,

.me = NULL,

#if defined(CONFIG_NF_CT_NETLINK) || defined(CONFIG_NF_CT_NETLINK_MODULE)

.tuple_to_nlattr = icmp_tuple_to_nlattr,

.nlattr_tuple_size = icmp_nlattr_tuple_size,

.nlattr_to_tuple = icmp_nlattr_to_tuple,

.nla_policy = icmp_nla_policy,

#endif

#ifdef CONFIG_SYSCTL

.ctl_table_header = &icmp_sysctl_header,

.ctl_table = icmp_sysctl_table,

#ifdef CONFIG_NF_CONNTRACK_PROC_COMPAT

.ctl_compat_table = icmp_compat_sysctl_table,

#endif

#endif

};

Registration is done by nf_conntrack_l4proto_register(l4proto), where l4proto is the struct nf_conntrack_l4proto to register. The steps are:

- If nf_ct_protos[l4proto->l3proto] has not been initialized yet, every element of that inner array is set to nf_conntrack_l4proto_generic.

- The nfnetlink-attribute-related value l4proto->nla_size is initialized.

- l4proto is stored in nf_ct_protos[l4proto->l3proto][l4proto->l4proto].

3. Registers the L3 protocol nf_conntrack_l3proto_ipv4 in the global array nf_ct_l3protos[], which is defined as:

struct nf_conntrack_l3proto *nf_ct_l3protos[AF_MAX] __read_mostly;

Each element of the array points to a struct nf_conntrack_l3proto; the instance registered here is defined as follows:

struct nf_conntrack_l3proto nf_conntrack_l3proto_ipv4 __read_mostly = {

.l3proto = PF_INET,

.name = "ipv4",

.pkt_to_tuple = ipv4_pkt_to_tuple,

.invert_tuple = ipv4_invert_tuple,

.print_tuple = ipv4_print_tuple,

.get_l4proto = ipv4_get_l4proto,

#if defined(CONFIG_NF_CT_NETLINK) || defined(CONFIG_NF_CT_NETLINK_MODULE)

.tuple_to_nlattr = ipv4_tuple_to_nlattr,

.nlattr_tuple_size = ipv4_nlattr_tuple_size,

.nlattr_to_tuple = ipv4_nlattr_to_tuple,

.nla_policy = ipv4_nla_policy,

#endif

#if defined(CONFIG_SYSCTL) && defined(CONFIG_NF_CONNTRACK_PROC_COMPAT)

.ctl_table_path = nf_net_ipv4_netfilter_sysctl_path,

.ctl_table = ip_ct_sysctl_table,

#endif

.me = THIS_MODULE,

};

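Registration is done by nf_conntrack_l3proto_register(proto), where proto is the instance to register. The steps are:

- The nfnetlink-attribute-related value proto->nla_size is initialized.

- proto is stored in nf_ct_l3protos[proto->l3proto].

The array elements are not given default values here, because nf_conntrack_init_init_net() has already done that.

Both struct nf_conntrack_l3proto and struct nf_conntrack_l4proto have pkt_to_tuple() and invert_tuple() function pointers. They operate on the L3 header and the L4 header of a packet respectively: the L3 pkt_to_tuple() reads the source and destination IP into the tuple, and the L4 pkt_to_tuple() reads the source and destination port into the tuple.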
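The division of labour between the two layers can be sketched in user space. Everything below is hypothetical and simplified: struct mini_tuple is not the kernel's struct nf_conntrack_tuple, the functions take already-parsed header fields instead of an skb, and only the port-based (TCP/UDP style) case is shown.

```c
#include <stdint.h>

/* Simplified, hypothetical tuple for illustration only. */
struct mini_tuple {
    uint32_t src_ip, dst_ip;     /* filled by the L3 step */
    uint16_t src_port, dst_port; /* filled by the L4 step */
    uint8_t  protonum;
};

/* L3 step: only the addresses come from the IP header. */
static void l3_pkt_to_tuple(struct mini_tuple *t, uint32_t saddr, uint32_t daddr)
{
    t->src_ip = saddr;
    t->dst_ip = daddr;
}

/* L4 step: only the ports come from the TCP/UDP header. */
static void l4_pkt_to_tuple(struct mini_tuple *t, uint16_t sport, uint16_t dport)
{
    t->src_port = sport;
    t->dst_port = dport;
}

/* Build a complete tuple by running the L3 step, then the L4 step. */
static struct mini_tuple make_tuple(uint32_t saddr, uint32_t daddr,
                                    uint16_t sport, uint16_t dport,
                                    uint8_t protonum)
{
    struct mini_tuple t = {0};

    l3_pkt_to_tuple(&t, saddr, daddr);
    l4_pkt_to_tuple(&t, sport, dport);
    t.protonum = protonum;
    return t;
}

/* Reply tuple: swap source and destination at both layers. */
static struct mini_tuple invert_tuple(struct mini_tuple orig)
{
    struct mini_tuple r = orig;

    r.src_ip = orig.dst_ip;
    r.dst_ip = orig.src_ip;
    r.src_port = orig.dst_port;
    r.dst_port = orig.src_port;
    return r;
}
```

Inverting a tuple twice yields the original tuple again, which is why one connection can be found from packets travelling in either direction.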

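Summary of what this section initializes:

1. The TCP, UDP and ICMP members of the L4 array nf_ct_protos[PF_INET][].

2. The L3 entry nf_ct_l3protos[PF_INET].

3. The four conntrack hook functions are registered.

2. How conntrack processes packets

The previous article mentioned that netfilter registers four hook functions for conntrack.

Now look at the conntrack hook functions, the four marked in light blue in the figure above. ipv4_conntrack_in() and ipv4_conntrack_local() play similar roles: both sit at the start of a packet's path, with a priority second only to defrag. The two ipv4_confirm() registrations also play similar roles: both sit at the end of a packet's path and have the lowest priority.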

2.1 nf_conntrack_in()

nf_conntrack_in(), which the hook function ipv4_conntrack_in() calls, is implemented as follows:

unsigned int

nf_conntrack_in(struct net *net, u_int8_t pf, unsigned int hooknum,

struct sk_buff *skb)

{

struct nf_conn *ct;

enum ip_conntrack_info ctinfo;

struct nf_conntrack_l3proto *l3proto;

struct nf_conntrack_l4proto *l4proto;

unsigned int dataoff;

u_int8_t protonum;

int set_reply = 0;

int ret;

/* Previously seen (loopback or untracked)? Ignore. */

if (skb->nfct) { /* the skb already points to a connection */

NF_CT_STAT_INC_ATOMIC(net, ignore);

return NF_ACCEPT; /* possibly loopback; nothing to do */

}

/* Find the offset of the L4 (TCP, UDP, etc.) header in skb->data and the protocol number, and store them in dataoff and protonum */

l3proto = __nf_ct_l3proto_find(pf);

ret = l3proto->get_l4proto(skb, skb_network_offset(skb),

&dataoff, &protonum);

if (ret <= 0) { /* failure: the negated value is the verdict, e.g. NF_DROP */

pr_debug("not prepared to track yet or error occured\n");

NF_CT_STAT_INC_ATOMIC(net, error);

NF_CT_STAT_INC_ATOMIC(net, invalid);

return -ret;

}

/* Get the struct nf_conntrack_l4proto object for this L4 protocol. */

l4proto = __nf_ct_l4proto_find(pf, protonum);

if (l4proto->error != NULL)

{

/* Basic sanity checks on the L4 header, such as length and checksum */

ret = l4proto->error(net, skb, dataoff, &ctinfo, pf, hooknum);

if (ret <= 0) {

NF_CT_STAT_INC_ATOMIC(net, error);

NF_CT_STAT_INC_ATOMIC(net, invalid);

return -ret;

}

}

/* obtain an nf_conn for this skb */

ct = resolve_normal_ct(net, skb, dataoff, pf, protonum,

l3proto, l4proto, &set_reply, &ctinfo);

if (!ct) {

/* Not valid part of a connection */

NF_CT_STAT_INC_ATOMIC(net, invalid);

return NF_DROP;

}

if (IS_ERR(ct)) {

/* Too stressed to deal. */

NF_CT_STAT_INC_ATOMIC(net, drop);

return NF_DROP;

}

/* by now the skb should point to a connection */

NF_CT_ASSERT(skb->nfct);

/* Validate the L4 fields of the packet and do protocol-specific processing, such as updating the timeout of ct; returns the verdict (ACCEPT, DROP etc.) */

ret = l4proto->packet(ct, skb, dataoff, ctinfo, pf, hooknum);

if (ret <= 0) { /* packet() failed: drop the skb's reference to ct */

/* Invalid: inverse of the return code tells

* the netfilter core what to do */

pr_debug("nf_conntrack_in: Can't track with proto module\n");

nf_conntrack_put(skb->nfct);

skb->nfct = NULL;

NF_CT_STAT_INC_ATOMIC(net, invalid);

if (ret == -NF_DROP)

NF_CT_STAT_INC_ATOMIC(net, drop);

return -ret;

}

/* If set_reply is 1, i.e. this packet is in the reply direction, set IPS_SEEN_REPLY_BIT in ct->status. */

if (set_reply && !test_and_set_bit(IPS_SEEN_REPLY_BIT, &ct->status))

nf_conntrack_event_cache(IPCT_STATUS, ct); /* a no-op unless CONFIG_NF_CONNTRACK_EVENTS is enabled */

return ret;

}

resolve_normal_ct() processes the packet: it looks up the packet's tuple, creates a new entry when none exists, and returns the matching nf_conn:

static inline struct nf_conn *

resolve_normal_ct(struct net *net,

struct sk_buff *skb,

unsigned int dataoff,

u_int16_t l3num,

u_int8_t protonum,

struct nf_conntrack_l3proto *l3proto,

struct nf_conntrack_l4proto *l4proto,

int *set_reply,

enum ip_conntrack_info *ctinfo)

{

struct nf_conntrack_tuple tuple;

struct nf_conntrack_tuple_hash *h = NULL;

struct nf_conn *ct = NULL;

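/* Build a tuple from the skb, treated as the original direction, and store it in tuple. This is a struct nf_conntrack_tuple and all of its members are filled in here. Taking a TCP packet as an example: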

tuple->src.l3num = l3num;

tuple->src.u3.ip = srcip;

tuple->dst.u3.ip = dstip;

tuple->dst.protonum = protonum;

tuple->dst.dir = IP_CT_DIR_ORIGINAL;

tuple->src.u.tcp.port = srcport;

tuple->dst.u.tcp.port = destport;

*/

if (!nf_ct_get_tuple(skb, skb_network_offset(skb),

dataoff, l3num, protonum, &tuple, l3proto,

l4proto)) {

pr_debug("resolve_normal_ct: Can't get tuple\n");

return NULL;

}

/* Look up tuple in the hash table net->ct.hash; on a match, a reference is taken on the enclosing nf_conn */

h = nf_conntrack_find_get(net, &tuple);

if (!h) {

/* Not found: create a new pair of tuples (both directions are created together) and their nf_conn, and add it to the unconfirmed list. */

h = init_conntrack(net, &tuple, l3proto, l4proto, skb, dataoff);

if (!h)

return NULL;

if (IS_ERR(h))

return (void *)h;

}

ct = nf_ct_tuplehash_to_ctrack(h); /* get the nf_conn that owns this tuple */

/* Set the packet's ct state. */

if (NF_CT_DIRECTION(h) == IP_CT_DIR_REPLY) {

/* this tuple is in the reply direction */

*ctinfo = IP_CT_ESTABLISHED + IP_CT_IS_REPLY;

/* Please set reply bit if this packet OK */

*set_reply = 1; /* nf_conntrack_in() acts on this when it is 1 */

} else {

/* Once we've had two way comms, always ESTABLISHED. */

if (test_bit(IPS_SEEN_REPLY_BIT, &ct->status)) {

pr_debug("nf_conntrack_in: normal packet for %p\n", ct);

*ctinfo = IP_CT_ESTABLISHED;

} else if (test_bit(IPS_EXPECTED_BIT, &ct->status)) {

/* the connection was created from an expectation */

pr_debug("nf_conntrack_in: related packet for %p\n",

ct);

*ctinfo = IP_CT_RELATED;

} else {

pr_debug("nf_conntrack_in: new packet for %p\n", ct);

*ctinfo = IP_CT_NEW;

}

*set_reply = 0;

}

/* The address is taken here: ct_general is the first member of ct, so skb->nfct holds the address of ct. */

skb->nfct = &ct->ct_general;

/* state of this packet relative to ct */

skb->nfctinfo = *ctinfo;

return ct;

}

init_conntrack() allocates and initializes a new nf_conn instance:

/* Allocate a new conntrack: we return -ENOMEM if classification

failed due to stress. Otherwise it really is unclassifiable. */

static struct nf_conntrack_tuple_hash *

init_conntrack(struct net *net,

struct nf_conntrack_tuple *tuple,

struct nf_conntrack_l3proto *l3proto,

struct nf_conntrack_l4proto *l4proto,

struct sk_buff *skb,

unsigned int dataoff

)

{

struct nf_conn *ct;

struct nf_conn_help *help;

struct nf_conntrack_tuple repl_tuple;

struct nf_conntrack_expect *exp;

/* Build repl_tuple from tuple, mainly by calling the L3 and L4 invert_tuple methods */

if (!nf_ct_invert_tuple(&repl_tuple, tuple, l3proto, l4proto)) {

pr_debug("Can't invert tuple.\n");

return NULL;

}

/* Allocate an nf_conn from the cache, store tuple and repl_tuple in ct's tuplehash[] array, and set the ct.timeout timer function to death_by_timeout() without starting the timer. */

ct = nf_conntrack_alloc(net, tuple, &repl_tuple, GFP_ATOMIC);

if (IS_ERR(ct)) {

pr_debug("Can't allocate conntrack.\n");

return (struct nf_conntrack_tuple_hash *)ct;

}

/* For TCP, the call below stores L4 fields such as window and ack
in ct->proto.tcp.seen[0]. It is reached only for a newly created connection,
so the reply-direction ct->proto.tcp.seen[1] need not be filled in */

if (!l4proto->new(ct, skb, dataoff)) {

nf_conntrack_free(ct);

pr_debug("init conntrack: can't track with proto module\n");

return NULL;

}

/* Allocate space for the acct and ecache extensions. They are usually not initialized afterwards, so they go unused */

nf_ct_acct_ext_add(ct, GFP_ATOMIC);

nf_ct_ecache_ext_add(ct, GFP_ATOMIC);

spin_lock_bh(&nf_conntrack_lock);

exp = nf_ct_find_expectation(net, tuple);

/* if a matching expectation exists */

if (exp) { /* ct->master is set to the expectation's master below */

pr_debug("conntrack: expectation arrives ct=%p exp=%p\n",

ct, exp);

/* Welcome, Mr. Bond. We've been expecting you... */

__set_bit(IPS_EXPECTED_BIT, &ct->status);

ct->master = exp->master;

if (exp->helper) {

/* allocate the helper extension together with its expectation list */

help = nf_ct_helper_ext_add(ct, GFP_ATOMIC);

if (help)

rcu_assign_pointer(help->helper, exp->helper);

}

/* created from an expectation: take a reference on the master */

nf_conntrack_get(&ct->master->ct_general);

NF_CT_STAT_INC(net, expect_new);

} else {

__nf_ct_try_assign_helper(ct, GFP_ATOMIC);

NF_CT_STAT_INC(net, new);

}

/* Add this tuple to the unconfirmed list. The packet has not left yet and may still be dropped, so it is not added to the conntrack hash for now */

hlist_nulls_add_head_rcu(&ct->tuplehash[IP_CT_DIR_ORIGINAL].hnnode,

&net->ct.unconfirmed);

spin_unlock_bh(&nf_conntrack_lock);

if (exp) {

/* run the expectation callback, if any, then drop the reference on the expectation */

if (exp->expectfn)

exp->expectfn(ct, exp);

nf_ct_expect_put(exp);

}

return &ct->tuplehash[IP_CT_DIR_ORIGINAL];

}

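ipv4_conntrack_local() does essentially the same work, since it also ends up in nf_conntrack_in(). At the two entry hook points, conntrack mainly does the following:

1. Builds a tuple from the skb and validates the packet.

2. Looks up the tuple in net->ct.hash. If it is not recorded yet, creates a new tuple pair and nf_conn and adds it to the unconfirmed list.

3. Performs protocol-specific processing and checks on ct.

4. Updates the conntrack status ct->status and the skb state skb->nfctinfo.

The conntrack and skb states are defined as follows: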

/* Connection state tracking for netfilter. This is separated from,

but required by, the NAT layer; it can also be used by an iptables

extension. */

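/* Five states in total: the four values below (IP_CT_IS_REPLY itself equals IP_CT_ESTABLISHED + IP_CT_IS_REPLY) plus IP_CT_RELATED + IP_CT_IS_REPLY */

/* these values are used by skb->nfctinfo */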

enum ip_conntrack_info

{

/* Part of an established connection (either direction). */

IP_CT_ESTABLISHED, /* enum values start at 0 */

/* Like NEW, but related to an existing connection, or ICMP error

(in either direction). */

IP_CT_RELATED,

/* Started a new connection to track (only

IP_CT_DIR_ORIGINAL); may be a retransmission. */

IP_CT_NEW,

/* >= this indicates reply direction */

IP_CT_IS_REPLY,

/* Number of distinct IP_CT types (no NEW in reply dirn). */

IP_CT_NUMBER = IP_CT_IS_REPLY * 2 - 1

};

/* Bitset representing status of connection. */

/* these values are used by ct->status */

enum ip_conntrack_status {

/* It's an expected connection: bit 0 set. This bit never changed */

IPS_EXPECTED_BIT = 0,

IPS_EXPECTED = (1 << IPS_EXPECTED_BIT),

/* We've seen packets both ways: bit 1 set. Can be set, not unset. */

IPS_SEEN_REPLY_BIT = 1,

IPS_SEEN_REPLY = (1 << IPS_SEEN_REPLY_BIT),

/* Conntrack should never be early-expired. */

IPS_ASSURED_BIT = 2,

IPS_ASSURED = (1 << IPS_ASSURED_BIT),

/* Connection is confirmed: originating packet has left box */

IPS_CONFIRMED_BIT = 3,

IPS_CONFIRMED = (1 << IPS_CONFIRMED_BIT),

/* Connection needs src nat in orig dir. This bit never changed. */

IPS_SRC_NAT_BIT = 4,

IPS_SRC_NAT = (1 << IPS_SRC_NAT_BIT),

/* Connection needs dst nat in orig dir. This bit never changed. */

IPS_DST_NAT_BIT = 5,

IPS_DST_NAT = (1 << IPS_DST_NAT_BIT),

/* Both together. */

IPS_NAT_MASK = (IPS_DST_NAT | IPS_SRC_NAT),

/* Connection needs TCP sequence adjusted. */

IPS_SEQ_ADJUST_BIT = 6,

IPS_SEQ_ADJUST = (1 << IPS_SEQ_ADJUST_BIT),

/* NAT initialization bits. */

IPS_SRC_NAT_DONE_BIT = 7,

IPS_SRC_NAT_DONE = (1 << IPS_SRC_NAT_DONE_BIT),

IPS_DST_NAT_DONE_BIT = 8,

IPS_DST_NAT_DONE = (1 << IPS_DST_NAT_DONE_BIT),

/* Both together */

IPS_NAT_DONE_MASK = (IPS_DST_NAT_DONE | IPS_SRC_NAT_DONE),

/* Connection is dying (removed from lists), can not be unset. */

IPS_DYING_BIT = 9,

IPS_DYING = (1 << IPS_DYING_BIT),

/* Connection has fixed timeout. */

IPS_FIXED_TIMEOUT_BIT = 10,

IPS_FIXED_TIMEOUT = (1 << IPS_FIXED_TIMEOUT_BIT),

};
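The way skb->nfctinfo packs a state and a direction into one number can be sketched as follows. The two enums repeat kernel definitions (enum ip_conntrack_info as quoted above, and the direction enum whose constants appear throughout the listings); ctinfo_to_dir() and ctinfo_base_state() are hypothetical helpers written for this example. The first one performs the same comparison that the comment ">= this indicates reply direction" describes.

```c
/* Same values as the enum quoted in the text. */
enum ip_conntrack_info {
    IP_CT_ESTABLISHED,
    IP_CT_RELATED,
    IP_CT_NEW,
    IP_CT_IS_REPLY,
    IP_CT_NUMBER = IP_CT_IS_REPLY * 2 - 1
};

enum ip_conntrack_dir {
    IP_CT_DIR_ORIGINAL,
    IP_CT_DIR_REPLY,
    IP_CT_DIR_MAX
};

/* Hypothetical helper: any value >= IP_CT_IS_REPLY is a reply-direction state. */
static enum ip_conntrack_dir ctinfo_to_dir(int ctinfo)
{
    return ctinfo >= IP_CT_IS_REPLY ? IP_CT_DIR_REPLY : IP_CT_DIR_ORIGINAL;
}

/* Hypothetical helper: strip the direction to get the base state. */
static int ctinfo_base_state(int ctinfo)
{
    return ctinfo >= IP_CT_IS_REPLY ? ctinfo - IP_CT_IS_REPLY : ctinfo;
}
```

Because IP_CT_NEW never occurs in the reply direction, only five of the six combinations exist, which matches IP_CT_NUMBER.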

Note the following assignment:

skb->nfct = &ct->ct_general;

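In struct nf_conn, ct_general is the first member, so its address equals the address of the whole structure. The value of skb->nfct is therefore the address of the conntrack entry that belongs to the skb, and (struct nf_conn *)skb->nfct yields the conntrack entry for a given skb.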
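The first-member trick can be reproduced with two small stand-in structures. struct mini_general, struct mini_conn and both functions below are hypothetical names for this sketch; they only mimic the relationship between the embedded ct_general member and its enclosing conntrack entry.

```c
#include <stddef.h>

/* Hypothetical stand-in for the embedded general part. */
struct mini_general {
    int use; /* reference count */
};

/* Hypothetical stand-in for the conntrack entry. */
struct mini_conn {
    struct mini_general ct_general; /* must stay the first member */
    unsigned long status;
};

/* Valid only because ct_general sits at offset 0 of struct mini_conn. */
static struct mini_conn *conn_from_general(struct mini_general *g)
{
    return (struct mini_conn *)g;
}

/* Returns 1 when the cast leads back to the enclosing object. */
static int roundtrip_ok(void)
{
    struct mini_conn c = { { 1 }, 0 };

    return conn_from_general(&c.ct_general) == &c;
}
```

If the member were ever moved away from offset 0, the plain cast would silently point at the wrong address, so code that relies on it depends on the member order staying as it is.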

2.2 ipv4_confirm()

The hook function ipv4_confirm() is implemented as follows:

static unsigned int ipv4_confirm(unsigned int hooknum,

struct sk_buff *skb,

const struct net_device *in,

const struct net_device *out,

int (*okfn)(struct sk_buff *))

{

struct nf_conn *ct;

enum ip_conntrack_info ctinfo;

const struct nf_conn_help *help;

const struct nf_conntrack_helper *helper;

unsigned int ret;

/* This is where we call the helper: as the packet goes out. */

/* ct is obtained as (struct nf_conn *)skb->nfct */

ct = nf_ct_get(skb, &ctinfo);

/* packets in this state skip the helper and go straight to confirmation */

if (!ct || ctinfo == IP_CT_RELATED + IP_CT_IS_REPLY)

goto out;

/* Fetch the NF_CT_EXT_HELPER data from ct->ext. Usually there is none; packets of protocols such as ftp, tftp and pptp have a helper. */

help = nfct_help(ct);

if (!help)

goto out;

/* rcu_read_lock()ed by nf_hook_slow */

helper = rcu_dereference(help->helper);

if (!helper)

goto out;

ret = helper->help(skb, skb_network_offset(skb) + ip_hdrlen(skb),

ct, ctinfo);

if (ret != NF_ACCEPT)

return ret;

if (test_bit(IPS_SEQ_ADJUST_BIT, &ct->status)) {

/* TCP: adjust the sequence numbers after NAT */

typeof(nf_nat_seq_adjust_hook) seq_adjust;

seq_adjust = rcu_dereference(nf_nat_seq_adjust_hook);

if (!seq_adjust || !seq_adjust(skb, ct, ctinfo)) {

NF_CT_STAT_INC_ATOMIC(nf_ct_net(ct), drop);

return NF_DROP;

}

}

out:

/* We've seen it coming out the other side: confirm it */

return nf_conntrack_confirm(skb);

}

The nf_conntrack_confirm() function:

/* Confirm a connection: returns NF_DROP if packet must be dropped. */

static inline int nf_conntrack_confirm(struct sk_buff *skb)

{

struct nf_conn *ct = (struct nf_conn *)skb->nfct;

int ret = NF_ACCEPT;

if (ct && ct != &nf_conntrack_untracked) {

/* not confirmed yet */

if (!nf_ct_is_confirmed(ct) && !nf_ct_is_dying(ct))

ret = __nf_conntrack_confirm(skb); /* confirm it */

if (likely(ret == NF_ACCEPT))

nf_ct_deliver_cached_events(ct); /* a no-op unless CONFIG_NF_CONNTRACK_EVENTS is enabled */

}

return ret;

}

The __nf_conntrack_confirm() function:

/* Confirm a connection given skb; places it in hash table */

int

__nf_conntrack_confirm(struct sk_buff *skb)

{

unsigned int hash, repl_hash;

struct nf_conntrack_tuple_hash *h;

struct nf_conn *ct;

struct nf_conn_help *help;

struct hlist_nulls_node *n;

enum ip_conntrack_info ctinfo;

struct net *net;

ct = nf_ct_get(skb, &ctinfo);

net = nf_ct_net(ct);

/* ipt_REJECT uses nf_conntrack_attach to attach related

ICMP/TCP RST packets in other direction. Actual packet

which created connection will be IP_CT_NEW or for an

expected connection, IP_CT_RELATED. */

/* not an original-direction packet: return immediately */

if (CTINFO2DIR(ctinfo) != IP_CT_DIR_ORIGINAL)

return NF_ACCEPT;

/* compute the hash keys used for insertion into the hash table */

hash = hash_conntrack(&ct->tuplehash[IP_CT_DIR_ORIGINAL].tuple);

repl_hash = hash_conntrack(&ct->tuplehash[IP_CT_DIR_REPLY].tuple);

spin_lock_bh(&nf_conntrack_lock);

/* See if there's one in the list already, including reverse:

NAT could have grabbed it without realizing, since we're

not in the hash. If there is, we lost race. */

/* If the connection being confirmed is already in the conntrack hash table (a match in either direction counts), do not insert it again; drop the packet */

hlist_nulls_for_each_entry(h, n, &net->ct.hash[hash], hnnode)

if(nf_ct_tuple_equal(&ct->tuplehash[IP_CT_DIR_ORIGINAL].tuple,

&h->tuple)) {

goto out;

}

hlist_nulls_for_each_entry(h, n, &net->ct.hash[repl_hash], hnnode)

if (nf_ct_tuple_equal(&ct->tuplehash[IP_CT_DIR_REPLY].tuple,

&h->tuple)) {

goto out;

}

/* remove the connection from the unconfirmed list */

/* Remove from unconfirmed list */

hlist_nulls_del_rcu(&ct->tuplehash[IP_CT_DIR_ORIGINAL].hnnode);

/* Timer relative to confirmation time, not original

setting time, otherwise we'd get timer wrap in

weird delay cases. */

/* Start the timer. Note that the timeout was set earlier by the L4 protocol handler, e.g. by nf_ct_refresh_acct() in tcp_packet(). */

ct->timeout.expires += jiffies;

add_timer(&ct->timeout);

/* increment use and set the IPS_CONFIRMED_BIT flag */

atomic_inc(&ct->ct_general.use);

set_bit(IPS_CONFIRMED_BIT, &ct->status);

/* Since the lookup is lockless, hash insertion must be done after

* starting the timer and setting the CONFIRMED bit. The RCU barriers

* guarantee that no other CPU can find the conntrack before the above

* stores are visible.

*/

/* add the connection to the conntrack hash table (doubly linked lists) */

__nf_conntrack_hash_insert(ct, hash, repl_hash);

NF_CT_STAT_INC(net, insert);

spin_unlock_bh(&nf_conntrack_lock);

return NF_ACCEPT;

out:

NF_CT_STAT_INC(net, insert_failed);

spin_unlock_bh(&nf_conntrack_lock);

return NF_DROP;

}

death_by_timeout() is the timeout handler of an nf_conn; it runs when the entry times out:

static void death_by_timeout(unsigned long ul_conntrack)

{

struct nf_conn *ct = (void *)ul_conntrack;

if (!test_bit(IPS_DYING_BIT, &ct->status) &&

unlikely(nf_conntrack_event(IPCT_DESTROY, ct) < 0)) {

/* destroy event was not delivered */

nf_ct_delete_from_lists(ct);

nf_ct_insert_dying_list(ct);

return;

}

set_bit(IPS_DYING_BIT, &ct->status);

nf_ct_delete_from_lists(ct);

nf_ct_put(ct);

}

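The code above shows that the work done at this hook point is:

1. If the connection carries helper-type extension data, its helper function is called.

2. The conntrack entry is removed from the unconfirmed list and added to the confirmed hash table, and its timer is started; from this point on the entry is in effect.

2.3 conntrack extension

Conntrack extensions cover what the normal conntrack flow does not handle. For example, some packets need their payload adjusted after NAT, and application-layer protocols such as ftp and pptp need additional expected connections. This work is protocol specific and cannot live in the generic processing path, which is why conntrack extensions exist.

struct nf_conn has a member that points to the extension data of the connection: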

struct nf_conn {

......

/* Extensions */

struct nf_ct_ext *ext;

......

};

This member points to a struct nf_ct_ext:

struct nf_ct_ext {

struct rcu_head rcu;

u8 offset[NF_CT_EXT_NUM];

u8 len;

char data[0];

};

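The definition shows that data has variable length and is divided into a fixed set of parts. The offset array records where each part starts.

According to the enum below, data can consist of up to four parts. A connection does not need all of this extension data: an ordinary UDP/TCP connection only needs the NAT data, while a connection that uses expectations must have the HELPER data.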

enum nf_ct_ext_id

{

NF_CT_EXT_HELPER,

NF_CT_EXT_NAT,

NF_CT_EXT_ACCT,

NF_CT_EXT_ECACHE,

NF_CT_EXT_NUM,

};

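Each of the four kinds of data has its own format:

#define NF_CT_EXT_HELPER_TYPE struct nf_conn_help

#define NF_CT_EXT_NAT_TYPE struct nf_conn_nat

#define NF_CT_EXT_ACCT_TYPE struct nf_conn_counter

#define NF_CT_EXT_ECACHE_TYPE struct nf_conntrack_ecache

These structures are what data actually contains.

Extension data is always added to nf_conn->ext through the following function:

void *nf_ct_ext_add(struct nf_conn *ct, enum nf_ct_ext_id id, gfp_t gfp);

- ct is the nf_conn object.

- id is the type of the extension data, one of the four listed above.

- gfp is the allocation mode passed to kmalloc; it is not relevant here.

- The return value is a pointer to the newly added extension data, i.e. the newly allocated area inside data. It is returned as void * and, depending on id, is converted to one of struct nf_conn_help, struct nf_conn_nat, struct nf_conn_counter or struct nf_conntrack_ecache.

As the definition of struct nf_ct_ext shows, the key question when allocating space in nf_conn->ext is where in data the new type has to be placed, i.e. the value of offset. It is determined with the help of the following global array: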

static struct nf_ct_ext_type *nf_ct_ext_types[NF_CT_EXT_NUM];

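The array is filled in during kernel initialization; afterwards its contents are as shown below. Note that registering these elements with nf_ct_extend_register() changes the alloc_size of each element. In any case, the values are fixed once the system has finished booting.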

static struct nf_ct_ext_type *nf_ct_ext_types[NF_CT_EXT_NUM] = {

{

.len = sizeof(struct nf_conn_help),

.align = __alignof__(struct nf_conn_help),

.id = NF_CT_EXT_HELPER, ////

.alloc_size = ALIGN(sizeof(struct nf_ct_ext), align)

+ len,

},

{

.len = sizeof(struct nf_conn_nat),

.align = __alignof__(struct nf_conn_nat),

.destroy = nf_nat_cleanup_conntrack,

.move = nf_nat_move_storage,

.id = NF_CT_EXT_NAT, ////

.flags = NF_CT_EXT_F_PREALLOC,

.alloc_size = ALIGN(sizeof(struct nf_ct_ext), align)

+ len,

},

{

.len = sizeof(struct nf_conn_counter[IP_CT_DIR_MAX]),

.align = __alignof__(struct nf_conn_counter[IP_CT_DIR_MAX]),

.id = NF_CT_EXT_ACCT, ////

.alloc_size = ALIGN(sizeof(struct nf_ct_ext), align)

+ len,

},

/* This entry is NULL when the CONFIG_NF_CONNTRACK_EVENTS option is not enabled.

{

.len = sizeof(struct nf_conntrack_ecache),

.align = __alignof__(struct nf_conntrack_ecache),

.id = NF_CT_EXT_ECACHE, ////

.alloc_size = ALIGN(sizeof(struct nf_ct_ext), align)

+ len,

},

*/

};

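Now look at the implementation behind nf_ct_ext_add(), the function __nf_ct_ext_add():

/* Allocate new extension data of type id in ct->ext; the new data is zeroed. */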

void *__nf_ct_ext_add(struct nf_conn *ct, enum nf_ct_ext_id id, gfp_t gfp)

{

struct nf_ct_ext *new;

int i, newlen, newoff;

struct nf_ct_ext_type *t;

/* If ct->ext does not exist yet, there cannot be any extension data. So allocate ct->ext first and then the extension data of type id, whose position is recorded in ct->ext->offset[id]. */

if (!ct->ext)

return nf_ct_ext_create(&ct->ext, id, gfp);

/* --- ct->ext is not NULL, so this is not the first allocation --- */

/* If data of type id already exists, return NULL right away. */

if (nf_ct_ext_exist(ct, id))

return NULL;

rcu_read_lock();

t = rcu_dereference(nf_ct_ext_types[id]);

BUG_ON(t == NULL);

/* Find the end of the existing data; the data for the new id starts there, suitably aligned. */

newoff = ALIGN(ct->ext->len, t->align);

newlen = newoff + t->len;

rcu_read_unlock();

new = __krealloc(ct->ext, newlen, gfp);

if (!new)

return NULL;

/* Because of the realloc above, the start address may have changed. If it has, let each existing extension fix up its moved data first. */

if (new != ct->ext) {

for (i = 0; i < NF_CT_EXT_NUM; i++) {

if (!nf_ct_ext_exist(ct, i))

continue;

rcu_read_lock();

t = rcu_dereference(nf_ct_ext_types[i]);

if (t && t->move)

t->move((void *)new + new->offset[i],

(void *)ct->ext + ct->ext->offset[i]);

rcu_read_unlock();

}

call_rcu(&ct->ext->rcu, __nf_ct_ext_free_rcu);

ct->ext = new;

}

/* update offset[id] and len of ct->ext */

new->offset[id] = newoff;

new->len = newlen;

/* zero the new area */

memset((void *)new + newoff, 0, newlen - newoff);

return (void *)new + newoff;

}

The nf_ct_ext_create() function:

static void *

nf_ct_ext_create(struct nf_ct_ext **ext, enum nf_ct_ext_id id, gfp_t gfp)

{

unsigned int off, len;

struct nf_ct_ext_type *t;

rcu_read_lock();

t = rcu_dereference(nf_ct_ext_types[id]);

BUG_ON(t == NULL);

off = ALIGN(sizeof(struct nf_ct_ext), t->align);

len = off + t->len;

rcu_read_unlock();

*ext = kzalloc(t->alloc_size, gfp);

if (!*ext)

return NULL;

INIT_RCU_HEAD(&(*ext)->rcu);

(*ext)->offset[id] = off;

(*ext)->len = len;

/* kzalloc above already zeroed the memory, so nothing needs clearing here. */

return (void *)(*ext) + off;

}
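Both functions above compute the position of a new extension in the same way: align the current end of the data, then add the length of the new type. A minimal sketch of just that arithmetic follows. ALIGN_UP, ext_next_offset() and ext_new_len() are hypothetical names for this example, and the sizes used in the note below are invented numbers, not the real structure sizes.

```c
#include <stddef.h>

/* Round x up to a multiple of a (works for any a > 0). */
#define ALIGN_UP(x, a) ((((x) + ((a) - 1)) / (a)) * (a))

/* Offset at which a new extension with the given alignment would start. */
static size_t ext_next_offset(size_t cur_len, size_t align)
{
    return ALIGN_UP(cur_len, align);
}

/* Total length of the extension area after appending size bytes. */
static size_t ext_new_len(size_t cur_len, size_t size, size_t align)
{
    return ext_next_offset(cur_len, align) + size;
}
```

For instance, with 10 bytes already in use, a 24-byte extension that needs 8-byte alignment starts at offset 16 and brings the length to 40; a following 6-byte extension with 4-byte alignment starts at 40 and ends at 46. The gap between 10 and 16 is padding that exists only to satisfy the alignment.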

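The NF_CT_EXT_HELPER type of extension additionally maintains a global hash table, nf_ct_helper_hash[], which stores all registered helpers; the helpers for protocols such as ftp, pptp, tftp, sip and rtsp are all registered in this table. The registration function is nf_conntrack_helper_register(). NF_CT_EXT_HELPER is what creates expected connections.

The next post, which covers NAT, will give a conntrack example, followed later by an example of an expected connection.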