主要讲的是使用Hive如何和Hbase集成,Hbase和Hive的底层存储都在HDFS上,都是hadoop生态系统中的重要一员,所以他们之间有着很亲密的联系,可以相互转换与操作。

hadoop,hbase和hive的搭建就不重复说了,不会的朋友,可以看散仙前面的博客,下面直接进入重点,关于hive集成hbase这一块,网上资料不算多,有的版本比较旧,散仙这里使用的版本是hive0.12和hbase0.96.2。

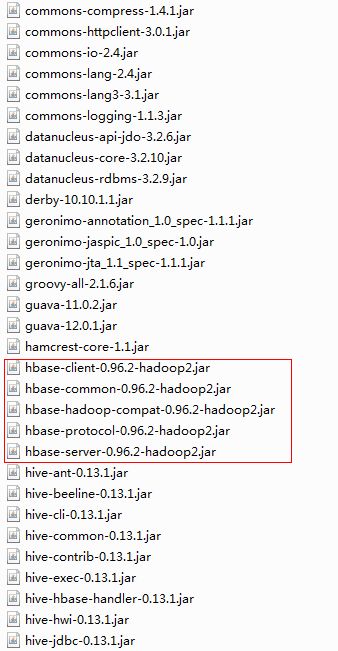

hive集成hbase的方式其实很简单,就是把Hbase的几个jar包,拷贝到hive的lib目录下即可截图如下:

上面的几个包放进Hive的lib中之后,我们需要配置在hive-site.xml里面配置下Hbase的zk地址,便于Hive连接Hbase使用,添加配置如下:

- <property>

- <name>hbase.zookeeper.quorum</name>

- <value>h1,h2,h3</value>

- </property>

<property> <name>hbase.zookeeper.quorum</name> <value>h1,h2,h3</value> </property>

下面可以进行测试,我们直接把Hbase里的一张表,转成我们的Hive表,Hive启动前,首先要把Hadoop和Hbase启动起来,然后启动Hive,启动完进程如下所示:

- [search@h1 ~]$ jps

- 7950 RunJar

- 6559 DataNode

- 7691 HRegionServer

- 6879 ResourceManager

- 7563 HMaster

- 6985 NodeManager

- 7474 HQuorumPeer

- 6731 SecondaryNameNode

- 6458 NameNode

- 8120 Jps

- [search@h1 ~]$

[search@h1 ~]$ jps 7950 RunJar 6559 DataNode 7691 HRegionServer 6879 ResourceManager 7563 HMaster 6985 NodeManager 7474 HQuorumPeer 6731 SecondaryNameNode 6458 NameNode 8120 Jps [search@h1 ~]$

先在hbase里建一张表,并添加列簇,和列数据如下:

- hbase(main):001:0> list

- TABLE

- SLF4J: Class path contains multiple SLF4J bindings.

- SLF4J: Found binding in [jar:file:/home/search/hbase-0.96.2-hadoop2/lib/slf4j-log4j12-1.6.4.jar!/org/slf4j/impl/StaticLoggerBinder.class]

- SLF4J: Found binding in [jar:file:/home/search/hadoop/share/hadoop/common/lib/slf4j-log4j12-1.7.5.jar!/org/slf4j/impl/StaticLoggerBinder.class]

- SLF4J: See http://www.slf4j.org/codes.html#multiple_bindings for an explanation.

- mytest

- webpage

- 2 row(s) in 3.0870 seconds

- => ["mytest", "webpage"]

- hbase(main):002:0> scan 'mytest'

- ROW COLUMN+CELL

- 2207 column=item:name, timestamp=1407329588864, value=hadoop

- 23008 column=home:address, timestamp=1407329589330, value=shenzhen

- 2309 column=info:age, timestamp=1407329589497, value=899

- 24008 column=home:address, timestamp=1407329588587, value=guangzhou

- 2407 column=item:name, timestamp=1407329588447, value=big data

- 2409 column=info:age, timestamp=1407329588728, value=22.32

- 3109 column=info:age, timestamp=1407329588308, value=7.4

- 321008 column=home:address, timestamp=1407329588169, value=luoyang

- 33107 column=item:name, timestamp=1407329588028, value=x-code

- 4205 column=home:address, timestamp=1407329587701, value=beijing

- 44101 column=info:age, timestamp=1407329587883, value=18.5

- 444105 column=item:name, timestamp=1407329587555, value=a dog

- 12 row(s) in 0.0980 seconds

hbase(main):001:0> list TABLE SLF4J: Class path contains multiple SLF4J bindings. SLF4J: Found binding in [jar:file:/home/search/hbase-0.96.2-hadoop2/lib/slf4j-log4j12-1.6.4.jar!/org/slf4j/impl/StaticLoggerBinder.class] SLF4J: Found binding in [jar:file:/home/search/hadoop/share/hadoop/common/lib/slf4j-log4j12-1.7.5.jar!/org/slf4j/impl/StaticLoggerBinder.class] SLF4J: See http://www.slf4j.org/codes.html#multiple_bindings for an explanation. mytest webpage 2 row(s) in 3.0870 seconds => ["mytest", "webpage"] hbase(main):002:0> scan 'mytest' ROW COLUMN+CELL 2207 column=item:name, timestamp=1407329588864, value=hadoop 23008 column=home:address, timestamp=1407329589330, value=shenzhen 2309 column=info:age, timestamp=1407329589497, value=899 24008 column=home:address, timestamp=1407329588587, value=guangzhou 2407 column=item:name, timestamp=1407329588447, value=big data 2409 column=info:age, timestamp=1407329588728, value=22.32 3109 column=info:age, timestamp=1407329588308, value=7.4 321008 column=home:address, timestamp=1407329588169, value=luoyang 33107 column=item:name, timestamp=1407329588028, value=x-code 4205 column=home:address, timestamp=1407329587701, value=beijing 44101 column=info:age, timestamp=1407329587883, value=18.5 444105 column=item:name, timestamp=1407329587555, value=a dog 12 row(s) in 0.0980 seconds

在Hive里,执行如下语句,进行关联建表:

- hive> CREATE EXTERNAL TABLE hbaseive(key int, name string,address string,age double)

- > STORED BY 'org.apache.hadoop.hive.hbase.HBaseStorageHandler'

- > WITH SERDEPROPERTIES ("hbase.columns.mapping" = ":key,item:name,home:address,info:age")

- > TBLPROPERTIES ("hbase.table.name" = "mytest");

hive> CREATE EXTERNAL TABLE hbaseive(key int, name string,address string,age double)

> STORED BY 'org.apache.hadoop.hive.hbase.HBaseStorageHandler'

> WITH SERDEPROPERTIES ("hbase.columns.mapping" = ":key,item:name,home:address,info:age")

> TBLPROPERTIES ("hbase.table.name" = "mytest");

如果不报错,就证明,集成成功,如果出现错误,一般都是hbase的某个jar包缺少造成,我们根据提示添加到hive的lib里即可,然后重启hive的的shell客户端。

建表成功后,查询如下:

- hive> select * from hbaseive;

- OK

- 2207 hadoop NULL NULL

- 23008 NULL shenzhen NULL

- 2309 NULL NULL 899.0

- 24008 NULL guangzhou NULL

- 2407 big data NULL NULL

- 2409 NULL NULL 22.32

- 3109 NULL NULL 7.4

- 321008 NULL luoyang NULL

- 33107 x-code NULL NULL

- 4205 NULL beijing NULL

- 44101 NULL NULL 18.5

- 444105 a dog NULL NULL

- Time taken: 0.481 seconds, Fetched: 12 row(s)

- hive>

hive> select * from hbaseive; OK 2207 hadoop NULL NULL 23008 NULL shenzhen NULL 2309 NULL NULL 899.0 24008 NULL guangzhou NULL 2407 big data NULL NULL 2409 NULL NULL 22.32 3109 NULL NULL 7.4 321008 NULL luoyang NULL 33107 x-code NULL NULL 4205 NULL beijing NULL 44101 NULL NULL 18.5 444105 a dog NULL NULL Time taken: 0.481 seconds, Fetched: 12 row(s) hive>

至此,我们的Hive集成Hbase就成功了,关联成功后,我们就可以对数据进行分析了,需要注意的是,我们关联过的hbase表,如果在Hbase里面删除了这个表,那么在Hive里面再次查询,就会报错,如果删除了Hive里面的表,则对Hbase没有影响。另外一点需要注意的是,使用Hive关联Hbase的建的表,是一个内部表,所以需要在Create 后面加上 external关键字 。