Machine Learning in Action Notes: AdaBoost

Basic Principles

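The Boosting family of algorithms combines several weak classifiers, by weighting, into one strong classifier. AdaBoost is its best-known representative. The basic idea: first train a weak classifier on the initial training set; the result of each round then shapes the training of the next round by re-weighting the samples. This repeats until the error rate is small enough or a specified number of iterations is reached.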

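AdaBoost proceeds in three steps:

Step 1. Give every training sample the same weight. With m samples, each weight is 1/m.

Step 2. Train the weak classifiers and compute a weight alpha for each one. After a weak classifier has been trained, compute its weighted error rate $\epsilon$; alpha is then

$$\alpha = \frac{1}{2}\ln\frac{1-\epsilon}{\epsilon}$$

Samples that were classified correctly get a smaller weight in the next round, and samples that were misclassified get a larger weight. Each round therefore does two things: it computes the classifier weight alpha, and it adjusts the sample weights used in the next round.

Step 3. Combine all weak classifiers into the strong classifier by a weighted sum, using alpha as the weights.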
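The weight bookkeeping in steps 1 and 2 can be sketched in a few lines of plain Python. This is only a minimal illustration, not the implementation used later in these notes; the function names `classifier_alpha` and `update_weights` and the toy labels are assumptions made up for the example.

```python
import math

def classifier_alpha(error, eps=1e-16):
    """alpha = 0.5 * ln((1 - error) / error); eps guards against error == 0."""
    return 0.5 * math.log((1.0 - error) / max(error, eps))

def update_weights(weights, labels, predictions, alpha):
    """Scale each weight by exp(-alpha * y * h(x)), then renormalize to sum to 1."""
    scaled = [w * math.exp(-alpha * y * p)
              for w, y, p in zip(weights, labels, predictions)]
    total = sum(scaled)
    return [w / total for w in scaled]

# Toy round (hypothetical data): 5 samples, the weak classifier gets the last one wrong.
labels      = [1, 1, -1, -1, 1]
predictions = [1, 1, -1, -1, -1]
weights = [1.0 / len(labels)] * len(labels)   # step 1: uniform weights 1/m

# Weighted error rate = total weight of the misclassified samples.
error = sum(w for w, y, p in zip(weights, labels, predictions) if y != p)
alpha = classifier_alpha(error)
new_weights = update_weights(weights, labels, predictions, alpha)
```

With these toy numbers the error rate is 0.2, and after the update the single misclassified sample carries weight 0.5 while each correctly classified sample carries 0.125, so the next weak classifier is pushed toward the sample that was missed.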

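Viewed as an additive model, that is, a linear combination of weak classifiers, the strong classifier can be written as

$$H(x) = \sum_{t=1}^{T} \alpha_t h_t(x)$$

where $h_t(x)$ is the $t$-th weak classifier and $\alpha_t$ is its weight alpha.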

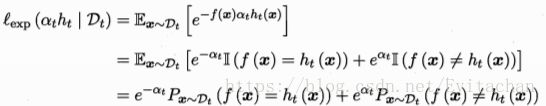

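The linear combination is chosen to minimize the exponential loss, where $f(x) \in \{-1, +1\}$ is the true label and $\mathcal{D}$ is the data distribution:

$$\ell_{\exp}(H \mid \mathcal{D}) = \mathbb{E}_{x \sim \mathcal{D}}\left[e^{-f(x)H(x)}\right]$$

If $H(x)$ minimizes this loss, consider the partial derivative of the expression above with respect to $H(x)$:

$$\frac{\partial \ell_{\exp}(H \mid \mathcal{D})}{\partial H(x)} = -e^{-H(x)}P(f(x)=1 \mid x) + e^{H(x)}P(f(x)=-1 \mid x)$$

Setting the derivative to 0 gives

$$H(x) = \frac{1}{2}\ln\frac{P(f(x)=1 \mid x)}{P(f(x)=-1 \mid x)}$$

so the strong classifier that is finally built is

$$\operatorname{sign}(H(x)) = \begin{cases} 1, & P(f(x)=1 \mid x) > P(f(x)=-1 \mid x) \\ -1, & P(f(x)=1 \mid x) < P(f(x)=-1 \mid x) \end{cases}$$

The first weak classifier $h_1$ is obtained from the initial data distribution $\mathcal{D}_1$; the later $h_t$ and $\alpha_t$ are generated iteratively.

Once $h_t$ has been trained on the distribution $\mathcal{D}_t$, its weight $\alpha_t$ is chosen so that $\alpha_t h_t$ minimizes the exponential loss

$$\ell_{\exp}(\alpha_t h_t \mid \mathcal{D}_t) = \mathbb{E}_{x \sim \mathcal{D}_t}\left[e^{-f(x)\alpha_t h_t(x)}\right] = e^{-\alpha_t}(1-\epsilon_t) + e^{\alpha_t}\epsilon_t$$

where $\epsilon_t = P_{x \sim \mathcal{D}_t}(h_t(x) \neq f(x))$ is the weighted error rate. Differentiating the loss with respect to $\alpha_t$:

$$\frac{\partial \ell_{\exp}(\alpha_t h_t \mid \mathcal{D}_t)}{\partial \alpha_t} = -e^{-\alpha_t}(1-\epsilon_t) + e^{\alpha_t}\epsilon_t$$

Setting the derivative to 0 gives

$$\alpha_t = \frac{1}{2}\ln\frac{1-\epsilon_t}{\epsilon_t}$$

which is the weight of classifier $t$.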
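As a quick sanity check of the closed form, the sketch below evaluates the weighted exponential loss $e^{-\alpha}(1-\epsilon) + e^{\alpha}\epsilon$ on a grid of candidate alphas and compares the grid minimizer with $\frac{1}{2}\ln\frac{1-\epsilon}{\epsilon}$. The error rate 0.3, the grid, and the function names are arbitrary choices for illustration.

```python
import math

def exp_loss(alpha, error):
    """Weighted exponential loss of one weak classifier with error rate `error`."""
    return math.exp(-alpha) * (1.0 - error) + math.exp(alpha) * error

def closed_form_alpha(error):
    """alpha = 0.5 * ln((1 - error) / error), the stationary point of exp_loss."""
    return 0.5 * math.log((1.0 - error) / error)

error = 0.3                                   # hypothetical weighted error rate
grid = [i / 1000.0 for i in range(0, 2001)]   # candidate alphas in [0, 2]
grid_best = min(grid, key=lambda a: exp_loss(a, error))
analytic = closed_form_alpha(error)
```

The grid minimizer agrees with the analytic value to within the grid spacing. At the minimum the loss equals $2\sqrt{\epsilon(1-\epsilon)}$, which is below 1 whenever $\epsilon \neq 0.5$.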

Code

The dataset is Wine Quality (http://archive.ics.uci.edu/ml/datasets/Wine+Quality), 1599 samples in total, split into a training set and a test set.
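These notes do not say how the split was made or how the quality score was turned into class labels, while the training code below compares predictions against labels of +1 and -1 and reads tab-delimited files. The sketch that follows shows one possible preparation step. The threshold of 6, the 70/30 split, the fixed seed, and the function names are all assumptions, not the procedure actually used for the results shown later.

```python
import random

def binarize_and_split(rows, threshold=6, test_fraction=0.3, seed=0):
    """rows: lists of floats with the quality score as the last entry.
    Returns (train, test); in both, the last column is +1.0 or -1.0.
    The threshold and the split ratio are assumed values."""
    labeled = [row[:-1] + [1.0 if row[-1] >= threshold else -1.0] for row in rows]
    rng = random.Random(seed)          # fixed seed so the split is repeatable
    rng.shuffle(labeled)
    n_test = int(len(labeled) * test_fraction)
    return labeled[n_test:], labeled[:n_test]

def to_tab_lines(rows):
    """Format rows as tab-delimited text lines, the layout loadDataSet parses."""
    return ['\t'.join(str(v) for v in row) for row in rows]

# Tiny hypothetical sample: two features plus a quality score.
sample = [[7.4, 0.70, 5.0], [7.8, 0.88, 5.0], [11.2, 0.28, 6.0], [7.3, 0.65, 7.0]]
train, test = binarize_and_split(sample, test_fraction=0.25)
```

Writing `to_tab_lines(train)` and `to_tab_lines(test)` to two text files, one line per row, would give files in the format that `loadDataSet` expects.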

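    from numpy import *
    from numpy import asmatrix as mat  # the np.mat alias was removed in NumPy 2.0; asmatrix is the same function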

    def loadDataSet(fileName):
        # General function to parse tab-delimited floats; the last field is the label
        with open(fileName) as fr:
            lines = fr.readlines()
        numFeat = len(lines[0].split('\t'))  # number of fields per line
        dataMat = []; labelMat = []
        for line in lines:
            lineArr = []
            curLine = line.strip().split('\t')
            for i in range(numFeat-1):
                lineArr.append(float(curLine[i]))
            dataMat.append(lineArr)
            labelMat.append(float(curLine[-1]))
        return dataMat, labelMat

    def stumpClassify(dataMatrix, dimen, threshVal, threshIneq):
        # Threshold one feature: samples on the chosen side get -1, the rest +1
        retArray = ones((shape(dataMatrix)[0], 1))
        if threshIneq == 'lt':
            retArray[dataMatrix[:, dimen] <= threshVal] = -1.0
        else:
            retArray[dataMatrix[:, dimen] > threshVal] = -1.0
        return retArray

    def buildStump(dataArr, classLabels, D):
        dataMatrix = mat(dataArr); labelMat = mat(classLabels).T
        m, n = shape(dataMatrix)
        numSteps = 10.0; bestStump = {}; bestClasEst = mat(zeros((m, 1)))
        minError = inf  # init error sum to +infinity
        for i in range(n):  # loop over all dimensions
            rangeMin = dataMatrix[:, i].min(); rangeMax = dataMatrix[:, i].max()
            stepSize = (rangeMax - rangeMin) / numSteps
            for j in range(-1, int(numSteps) + 1):  # loop over all thresholds in the current dimension
                for inequal in ['lt', 'gt']:  # go over less than and greater than
                    threshVal = rangeMin + float(j) * stepSize
                    predictedVals = stumpClassify(dataMatrix, i, threshVal, inequal)
                    errArr = mat(ones((m, 1)))
                    errArr[predictedVals == labelMat] = 0
                    weightedError = D.T * errArr  # total error weighted by D
                    # print("split: dim %d, thresh %.2f, thresh inequal: %s, the weighted error is %.3f" % (i, threshVal, inequal, weightedError))
                    if weightedError < minError:
                        minError = weightedError
                        bestClasEst = predictedVals.copy()
                        bestStump['dim'] = i
                        bestStump['thresh'] = threshVal
                        bestStump['ineq'] = inequal
        return bestStump, minError, bestClasEst

    def adaBoostTrainDS(dataArr, classLabels, numIt=40):
        weakClassArr = []
        m = shape(dataArr)[0]
        D = mat(ones((m, 1)) / m)  # init D to all equal
        aggClassEst = mat(zeros((m, 1)))
        for i in range(numIt):
            bestStump, error, classEst = buildStump(dataArr, classLabels, D)  # build stump
            # print("D:", D.T)
            # calc alpha; max(error, 1e-16) avoids division by zero when error == 0
            alpha = float(0.5 * log((1.0 - error) / max(error, 1e-16)))
            bestStump['alpha'] = alpha
            weakClassArr.append(bestStump)  # store stump params in array
            # print("classEst: ", classEst.T)
            expon = multiply(-1 * alpha * mat(classLabels).T, classEst)  # exponent for D calc
            D = multiply(D, exp(expon))  # calc new D for next iteration
            D = D / D.sum()
            # calc training error of all classifiers; if this is 0, quit the loop early
            aggClassEst += alpha * classEst
            # print("aggClassEst: ", aggClassEst.T)
            aggErrors = multiply(sign(aggClassEst) != mat(classLabels).T, ones((m, 1)))
            errorRate = aggErrors.sum() / m
            if errorRate == 0.0: break
        print("total error: ", errorRate)
        return weakClassArr, aggClassEst

    def plotROC(predStrengths, classLabels):
        import matplotlib.pyplot as plt
        cur = (1.0, 1.0)  # cursor, starts at the top-right corner
        ySum = 0.0  # accumulates curve heights to calculate AUC
        numPosClas = sum(array(classLabels) == 1.0)
        yStep = 1 / float(numPosClas); xStep = 1 / float(len(classLabels) - numPosClas)
        sortedIndicies = predStrengths.argsort()  # ascending order, so the curve is drawn from (1,1) down to (0,0)
        fig = plt.figure()
        fig.clf()
        ax = plt.subplot(111)
        # loop through all the values, drawing a line segment at each point
        for index in sortedIndicies.tolist()[0]:
            if classLabels[index] == 1.0:
                delX = 0; delY = yStep
            else:
                delX = xStep; delY = 0
                ySum += cur[1]
            # draw line from cur to (cur[0]-delX, cur[1]-delY)
            ax.plot([cur[0], cur[0] - delX], [cur[1], cur[1] - delY], c='b')
            cur = (cur[0] - delX, cur[1] - delY)
        ax.plot([0, 1], [0, 1], 'b--')  # random-guess baseline
        plt.xlabel('False positive rate'); plt.ylabel('True positive rate')
        plt.title('ROC curve for AdaBoost wine quality classifier')
        ax.axis([0, 1, 0, 1])
        plt.show()
        print("the Area Under the Curve is: ", ySum * xStep)

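    def test():
        datArr, labelArr = loadDataSet('winetrain.txt')
        classifierArray, aggClassEst = adaBoostTrainDS(datArr, labelArr, 10)
        testArr, testLabelArr = loadDataSet('winetest.txt')
        # Score the test set with the trained stumps instead of retraining on it
        testMat = mat(testArr)
        testEst = mat(zeros((shape(testMat)[0], 1)))
        for stump in classifierArray:
            testEst += stump['alpha'] * stumpClassify(testMat, stump['dim'], stump['thresh'], stump['ineq'])
        testErr = (sign(testEst) != mat(testLabelArr).T).sum() / float(len(testLabelArr))
        print("test error: ", testErr)
        plotROC(aggClassEst.T, labelArr)

    test()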
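Prediction with a trained ensemble is the weighted vote from step 3. The sketch below restates it in plain Python so the logic can be read without the matrix code. The stump dictionaries use the same keys (`dim`, `thresh`, `ineq`, `alpha`) that `buildStump` and `adaBoostTrainDS` store, but the stump values, the sample rows, and the function names here are hand-written assumptions, not results of training.

```python
def stump_predict(row, stump):
    """Apply one decision stump to a single feature row; returns +1.0 or -1.0."""
    value = row[stump['dim']]
    if stump['ineq'] == 'lt':
        return -1.0 if value <= stump['thresh'] else 1.0
    return -1.0 if value > stump['thresh'] else 1.0

def ada_classify(rows, stumps):
    """Sign of the alpha-weighted vote of all stumps, one prediction per row."""
    predictions = []
    for row in rows:
        score = sum(s['alpha'] * stump_predict(row, s) for s in stumps)
        # A score of exactly 0 is mapped to +1 here; that tie rule is an assumption.
        predictions.append(1.0 if score >= 0 else -1.0)
    return predictions

def error_rate(predictions, labels):
    """Fraction of predictions that differ from the true labels."""
    wrong = sum(1 for p, y in zip(predictions, labels) if p != y)
    return wrong / float(len(labels))

# Hypothetical stumps, written by hand rather than produced by training.
stumps = [
    {'dim': 0, 'thresh': 1.5, 'ineq': 'lt', 'alpha': 0.7},
    {'dim': 1, 'thresh': 0.5, 'ineq': 'gt', 'alpha': 0.4},
]
rows = [[1.0, 0.0], [2.0, 0.0], [2.0, 1.0], [1.0, 1.0]]
preds = ada_classify(rows, stumps)
```

For the first row the two stumps disagree (-1 and +1), and the stump with the larger alpha wins, so the prediction is -1.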

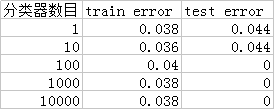

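Training and test error rates for different numbers of weak classifiers. With 100 weak classifiers the test error rate rises, which is a sign of overfitting.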

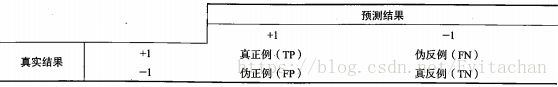

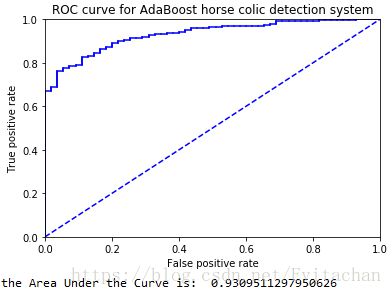

The ROC curve is used to evaluate a classifier when the classes are imbalanced.

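The figure above shows the ROC curve of the strong classifier built from 10000 weak classifiers. The horizontal axis is the false positive rate, FP/(FP+TN), and the vertical axis is the true positive rate, which is the same as recall, TP/(TP+FN). The dashed line is the curve for random guessing. The curve lies very close to the upper-left corner, which means the classifier reaches a high true positive rate while keeping the false positive rate low, so it performs well.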
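To make the two axes concrete, the following sketch computes ROC points and the area under the curve from a list of prediction strengths, sweeping the decision threshold from high to low. It is a simplified stand-alone illustration with made-up scores; tied scores are not treated specially, and the function names are assumptions.

```python
def roc_points(scores, labels):
    """Return (fpr, tpr) lists obtained by sweeping a threshold from high to low.
    labels are +1 / -1; scores are real-valued prediction strengths."""
    n_pos = sum(1 for y in labels if y == 1)
    n_neg = len(labels) - n_pos
    order = sorted(range(len(scores)), key=lambda i: scores[i], reverse=True)
    tp = fp = 0
    fpr, tpr = [0.0], [0.0]
    for i in order:
        if labels[i] == 1:
            tp += 1          # one more true positive: step up
        else:
            fp += 1          # one more false positive: step right
        fpr.append(fp / float(n_neg))
        tpr.append(tp / float(n_pos))
    return fpr, tpr

def auc(fpr, tpr):
    """Area under the ROC curve by the trapezoidal rule."""
    return sum((fpr[k] - fpr[k - 1]) * (tpr[k] + tpr[k - 1]) / 2.0
               for k in range(1, len(fpr)))

# Hypothetical prediction strengths and true labels.
scores = [0.9, 0.8, 0.35, 0.3, 0.1]
labels = [1, 1, -1, 1, -1]
fpr, tpr = roc_points(scores, labels)
area = auc(fpr, tpr)
```

In this toy example 5 of the 6 positive/negative pairs are ranked correctly, and the area comes out as 5/6. A curve hugging the upper-left corner corresponds to an area close to 1.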

References

[1] Peter Harrington, Machine Learning in Action (《机器学习实战》), Posts & Telecom Press.

[2] Zhou Zhihua, Machine Learning (《机器学习》), Tsinghua University Press.