Deep Learning: 10-Fold Cross-Validation

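scikit-learn is used to evaluate model quality. To make differences between results easier to pick out, the evaluation uses 10-fold cross-validation.

This program adds 20% Dropout between the input layer and the first hidden layer, and is tested with 10-fold cross-validation.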
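To make the 20% figure concrete, here is a minimal NumPy sketch of what a dropout step does to a batch of inputs during training. It is only an illustration: the helper name `apply_dropout` is hypothetical, the all-ones input is made up, and the rescaling of the surviving values ("inverted dropout") is assumed to match what the Keras `Dropout` layer does internally.

```python
import numpy as np

def apply_dropout(x, rate, rng):
    """Zero each element with probability `rate` and rescale the survivors."""
    keep_prob = 1.0 - rate
    mask = rng.random(x.shape) < keep_prob   # True where the value is kept
    return x * mask / keep_prob              # rescale so the expected value is unchanged

rng = np.random.default_rng(0)
x = np.ones((1000, 60))                      # made-up batch with 60 input features
dropped = apply_dropout(x, rate=0.2, rng=rng)

zero_fraction = np.mean(dropped == 0)
print("fraction zeroed: %.3f" % zero_fraction)
print("mean activation: %.3f" % dropped.mean())
```

Roughly one in five inputs is zeroed on each pass, while the average activation stays close to its original value. At prediction time dropout is switched off and the inputs pass through unchanged.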

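    # Dropout on the input layer, with a max-norm weight constraint.
    # The Keras argument names init, W_constraint and nb_epoch are the Keras 1.x
    # ones; Keras 2 renamed them to kernel_initializer, kernel_constraint, epochs.
    import numpy
    from keras.models import Sequential
    from keras.layers import Dense, Dropout
    from keras.constraints import maxnorm
    from keras.optimizers import SGD
    from keras.wrappers.scikit_learn import KerasClassifier
    from sklearn.model_selection import StratifiedKFold, cross_val_score
    from sklearn.pipeline import Pipeline
    from sklearn.preprocessing import StandardScaler

    # X (60 features per sample), encoded_Y (0/1 labels) and seed are assumed
    # to be defined earlier.

    def create_model1():
        # create model
        model = Sequential()
        model.add(Dropout(0.2, input_shape=(60,)))
        model.add(Dense(60, init='normal', activation='relu', W_constraint=maxnorm(3)))
        model.add(Dense(30, init='normal', activation='relu', W_constraint=maxnorm(3)))
        model.add(Dense(1, init='normal', activation='sigmoid'))
        # Compile model
        sgd = SGD(lr=0.1, momentum=0.9, decay=0.0, nesterov=False)
        model.compile(loss='binary_crossentropy', optimizer=sgd, metrics=['accuracy'])
        return model

    numpy.random.seed(seed)
    # Standardize the inputs, then train the network; both steps are refit on each fold.
    estimators = []
    estimators.append(('standardize', StandardScaler()))
    estimators.append(('mlp', KerasClassifier(build_fn=create_model1, nb_epoch=300, batch_size=16, verbose=0)))
    pipeline = Pipeline(estimators)
    kfold = StratifiedKFold(n_splits=10, shuffle=True, random_state=seed)
    results = cross_val_score(pipeline, X, encoded_Y, cv=kfold)
    print("Accuracy: %.2f%% (%.2f%%)" % (results.mean()*100, results.std()*100))

Pipeline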

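    from sklearn.impute import SimpleImputer  # replaces the older sklearn.preprocessing.Imputer
    from sklearn.pipeline import Pipeline
    from sklearn.preprocessing import StandardScaler

    # CombinedAttributesAdder (a custom transformer) and housing_num (the numeric
    # columns of the housing data) are assumed to be defined elsewhere.
    num_pipeline = Pipeline([
        ('imputer', SimpleImputer(strategy="median")),
        ('attribs_adder', CombinedAttributesAdder()),
        ('std_scaler', StandardScaler()),
    ])

    housing_num_tr = num_pipeline.fit_transform(housing_num)

The Pipeline constructor takes a list of (name, transform) tuples as its argument. It runs the transforms in list order to carry out the data preprocessing.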
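As a small self-contained illustration of that ordering, the sketch below builds a two-step pipeline on a made-up array and checks that it gives the same result as running the two transforms by hand, one after the other. The toy data and variable names are assumptions for the example, and `SimpleImputer` is used as the imputation step.

```python
import numpy as np
from sklearn.impute import SimpleImputer
from sklearn.pipeline import Pipeline
from sklearn.preprocessing import StandardScaler

# Toy numeric data with one missing value (made up for illustration).
data = np.array([
    [1.0, 10.0],
    [2.0, np.nan],
    [3.0, 30.0],
    [4.0, 40.0],
])

pipeline = Pipeline([
    ("imputer", SimpleImputer(strategy="median")),  # step 1: fill NaN with the column median
    ("std_scaler", StandardScaler()),               # step 2: zero mean, unit variance per column
])
transformed = pipeline.fit_transform(data)

# The same two steps applied manually, in the same order.
imputed = SimpleImputer(strategy="median").fit_transform(data)
manual = StandardScaler().fit_transform(imputed)

print(transformed)
print("matches manual steps:", np.allclose(transformed, manual))
```

Each step is addressed by its name, so `pipeline.named_steps["imputer"]` returns the fitted imputer if its learned medians need to be inspected.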

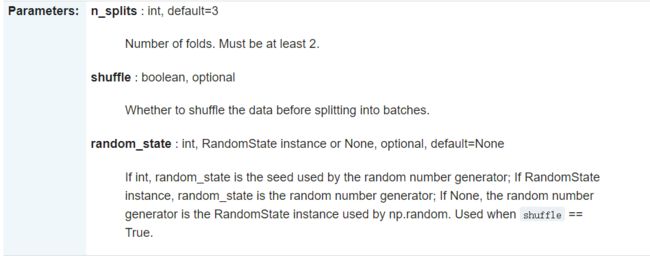

StratifiedKFold

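StratifiedKFold is used in much the same way as KFold, but it samples in a stratified fashion, so that the proportion of each class in the training and test sets matches that of the original dataset.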

sklearn.model_selection.StratifiedKFold(n_splits=3, shuffle=False, random_state=None)

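    import numpy as np
    from sklearn.model_selection import KFold, StratifiedKFold

    X = np.array([
        [1, 2, 3, 4],
        [11, 12, 13, 14],
        [21, 22, 23, 24],
        [31, 32, 33, 34],
        [41, 42, 43, 44],
        [51, 52, 53, 54],
        [61, 62, 63, 64],
        [71, 72, 73, 74]
    ])
    y = np.array([1, 1, 0, 0, 1, 1, 0, 0])

    # random_state only has an effect when shuffle=True (recent scikit-learn
    # versions raise an error if it is set with shuffle=False), so it is omitted.
    folder = KFold(n_splits=4, shuffle=False)
    sfolder = StratifiedKFold(n_splits=4, shuffle=False)

    # Stratified split: every test fold holds one sample of each class.
    for train, test in sfolder.split(X, y):
        print('Train: %s | test: %s' % (train, test))
    print(" ")
    # Plain split: consecutive blocks of samples, ignoring the labels.
    for train, test in folder.split(X, y):
        print('Train: %s | test: %s' % (train, test))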

Result (StratifiedKFold first, then KFold):

    Train: [1 3 4 5 6 7] | test: [0 2]
    Train: [0 2 4 5 6 7] | test: [1 3]
    Train: [0 1 2 3 5 7] | test: [4 6]
    Train: [0 1 2 3 4 6] | test: [5 7]

    Train: [2 3 4 5 6 7] | test: [0 1]
    Train: [0 1 4 5 6 7] | test: [2 3]
    Train: [0 1 2 3 6 7] | test: [4 5]
    Train: [0 1 2 3 4 5] | test: [6 7]
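The difference between the two splitters is easier to see on imbalanced labels. The following sketch, which uses made-up labels (15 samples of class 0 followed by 5 of class 1) and a hypothetical helper `positive_rates`, compares the share of class 1 in each test fold.

```python
import numpy as np
from sklearn.model_selection import KFold, StratifiedKFold

# Imbalanced toy labels: 15 samples of class 0 followed by 5 of class 1.
y = np.array([0] * 15 + [1] * 5)
X = np.arange(len(y)).reshape(-1, 1)   # placeholder features, not used by the splitters

def positive_rates(splitter):
    """Fraction of class-1 samples in each test fold."""
    return [float(y[test].mean()) for _, test in splitter.split(X, y)]

stratified_rates = positive_rates(StratifiedKFold(n_splits=5))
plain_rates = positive_rates(KFold(n_splits=5))

print("overall positive rate:", y.mean())
print("StratifiedKFold:", stratified_rates)
print("KFold:          ", plain_rates)
```

With StratifiedKFold every test fold keeps the overall 25% share of class 1. With unshuffled KFold the first folds contain no class-1 samples at all and the last one contains nothing else, so per-fold accuracy on such folds would say little about the model.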

cross_val_score:

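Different ways of splitting the data into a training set and a test set lead to different accuracy figures.

The basic idea of cross-validation is to split the dataset several times, producing a set of different train/test splits, then train the model and compute the test accuracy on each split, and finally average the results. This effectively reduces the variance of the test-accuracy estimate.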
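The procedure can be written out by hand in a few lines. This is a sketch under stated assumptions: the iris dataset and a 5-nearest-neighbours classifier are stand-ins chosen only to make the example runnable, and the shuffle seed of 0 is arbitrary. The last lines compare the manual loop with `cross_val_score` run on the same splits.

```python
import numpy as np
from sklearn.datasets import load_iris
from sklearn.model_selection import StratifiedKFold, cross_val_score
from sklearn.neighbors import KNeighborsClassifier

X, y = load_iris(return_X_y=True)      # assumed example dataset

splitter = StratifiedKFold(n_splits=10, shuffle=True, random_state=0)
fold_scores = []
for train_idx, test_idx in splitter.split(X, y):
    model = KNeighborsClassifier(n_neighbors=5)     # fresh model for every fold
    model.fit(X[train_idx], y[train_idx])           # train on 9 folds
    fold_scores.append(model.score(X[test_idx], y[test_idx]))  # accuracy on the held-out fold

fold_scores = np.array(fold_scores)
print("per-fold accuracy:", fold_scores)
print("mean: %.3f, std: %.3f" % (fold_scores.mean(), fold_scores.std()))

# cross_val_score performs the same loop internally when given the same splitter.
auto_scores = cross_val_score(KNeighborsClassifier(n_neighbors=5), X, y, cv=splitter)
print("same as cross_val_score:", np.allclose(fold_scores, auto_scores))
```

The per-fold numbers vary from split to split, which is exactly the variation that averaging over the ten folds smooths out.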

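Recommendations for using cross-validation

- K=10 is a common general recommendation.
- For classification problems, use stratified sampling to generate the splits, so that the ratio of positive to negative examples is the same in the training set and the test set.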

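    from sklearn.model_selection import cross_val_score  # formerly sklearn.cross_validation
    from sklearn.neighbors import KNeighborsClassifier

    # X and y are assumed to hold the feature matrix and the class labels.
    knn = KNeighborsClassifier(n_neighbors=5)
    # cross_val_score ties the whole cross-validation procedure together,
    # so the data no longer has to be split by hand.
    # The cv parameter sets how many folds the original data is divided into.
    scores = cross_val_score(knn, X, y, cv=10, scoring='accuracy')
    print(scores)
    print(scores.mean())  # mean of the per-fold scores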
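When `cv` is an integer and the estimator is a classifier with binary or multiclass labels, `cross_val_score` already applies the stratified-sampling recommendation by using an unshuffled StratifiedKFold. The sketch below checks this by passing the splitter explicitly; the iris dataset is an assumption made only so the example runs on its own.

```python
import numpy as np
from sklearn.datasets import load_iris
from sklearn.model_selection import StratifiedKFold, cross_val_score
from sklearn.neighbors import KNeighborsClassifier

X, y = load_iris(return_X_y=True)      # assumed example dataset
knn = KNeighborsClassifier(n_neighbors=5)

# Integer cv: the splitter is chosen by scikit-learn.
scores_int = cross_val_score(knn, X, y, cv=10, scoring='accuracy')

# Explicit splitter: stratified, 10 folds, no shuffling.
scores_explicit = cross_val_score(knn, X, y, cv=StratifiedKFold(n_splits=10), scoring='accuracy')

print("identical scores:", np.allclose(scores_int, scores_explicit))
print("mean accuracy: %.3f" % scores_int.mean())
```

Passing a splitter object is still useful when shuffling or a fixed `random_state` is wanted, as in the Keras example at the top of this article.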

References:

https://blog.csdn.net/u010159842/article/details/54138157

cross_val_score cross-validation and its use for parameter selection, model selection, and feature selection

https://blog.csdn.net/u012735708/article/details/82258615