NTU Hsuan-Tien Lin's "Machine Learning Foundations": Homework 2 in Python

Full code:

https://github.com/xjwhhh/LearningML/tree/master/MLFoundation

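Follows and stars are welcome.

I drew on quite a few other blog posts while studying and writing these notes, and my own knowledge is limited. If you spot a mistake, please point it out so we can learn and improve together.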

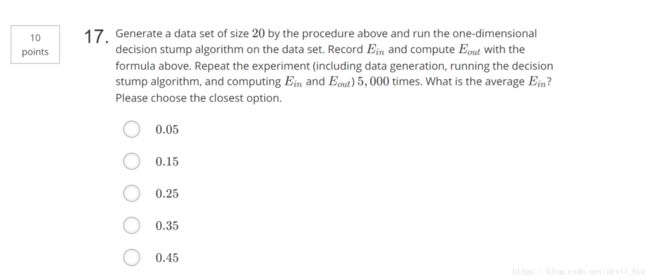

## 17, 18

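The classifier is the "positive and negative rays" model that the instructor covered in lecture.

Question 17 draws 20 points from [-1, 1]. They split the line into 21 regions, and theta only needs to take one value per region. With the two ray directions that gives 42 candidate hypotheses in total. Enumerate all of them, find the hypothesis with the smallest E_in, and record that minimum E_in (the code repeats the experiment 5,000 times and reports the average).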
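To make the counting concrete, here is a minimal sketch that builds the candidate thresholds for 20 random points and counts how many distinct labelings the candidates actually produce. The helper name `candidate_thetas` and the use of `np.random.default_rng` are my own choices for illustration, not part of the original solution.

```python
import numpy as np

def candidate_thetas(x_sorted):
    """-inf, the midpoints between adjacent sorted points, +inf (hypothetical helper)."""
    return np.concatenate(([-np.inf], (x_sorted[:-1] + x_sorted[1:]) / 2, [np.inf]))

rng = np.random.default_rng(0)
x = np.sort(rng.uniform(-1, 1, 20))
thetas = candidate_thetas(x)

# Collect the labeling produced by every (direction, theta) pair.
labelings = {
    tuple(int(v) for v in s * np.where(x > t, 1, -1))
    for t in thetas
    for s in (1, -1)
}

# Number of candidate (s, theta) pairs vs. number of distinct labelings.
print(2 * len(thetas), len(labelings))
```

The "all +1" and "all -1" labelings are each reachable from both directions, so the number of distinct labelings is slightly smaller than the number of candidates. This does not affect the search for the minimum E_in.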

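Question 18 takes the best hypothesis found in Question 17 and computes its E_out with the formula from Question 16.

Points to note:

1. As in Question 16, the labels need 20% noise.

2. Use the answer to Question 16 to compute E_out.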
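For reference, the Question 16 formula can be recovered in a few lines. This is a sketch under the setup as I understand it from the code: x is uniform on [-1, 1], the target is f(x) = sign(x), each label is flipped with probability 0.2, and the hypothesis is h(x) = s · sign(x − θ) with θ in [-1, 1]. The symbol μ (the probability that h disagrees with the noiseless target) is my own notation.

```latex
\begin{aligned}
\mu &= P\big[h_{s,\theta}(x) \neq f(x)\big] =
  \begin{cases}
    |\theta|/2,     & s = +1 \\
    1 - |\theta|/2, & s = -1
  \end{cases} \\
E_{out} &= 0.2\,(1-\mu) + 0.8\,\mu = 0.2 + 0.6\,\mu \\
        &= 0.5 + 0.3\, s\,\big(|\theta| - 1\big)
\end{aligned}
```

The last line is the expression that appears in the code as `0.5 + 0.3 * sign * (abs(theta) - 1)`.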

The code is as follows:

    import numpy as np


    # generate input data with 20% flipping noise
    def generate_input_data(time_seed):
        np.random.seed(time_seed)
        raw_X = np.sort(np.random.uniform(-1, 1, 20))
        # flip each label sign(x) with probability 0.2
        noised_y = np.sign(raw_X) * np.where(np.random.random(raw_X.shape[0]) < 0.2, -1, 1)
        return raw_X, noised_y


    def calculate_Ein(x, y):
        # candidate thresholds: -inf, midpoints between adjacent (sorted) points, +inf
        thetas = np.array([float("-inf")] + [(x[i] + x[i + 1]) / 2 for i in range(0, x.shape[0] - 1)] + [float("inf")])
        Ein = x.shape[0]
        sign = 1
        target_theta = 0.0
        # positive and negative rays
        for theta in thetas:
            y_positive = np.where(x > theta, 1, -1)
            y_negative = np.where(x < theta, 1, -1)
            error_positive = sum(y_positive != y)
            error_negative = sum(y_negative != y)
            if error_positive > error_negative:
                if Ein > error_negative:
                    Ein = error_negative
                    sign = -1
                    target_theta = theta
            else:
                if Ein > error_positive:
                    Ein = error_positive
                    sign = 1
                    target_theta = theta
        # two corner cases: clamp an infinite threshold to the ends of [-1, 1]
        if target_theta == float("inf"):
            target_theta = 1.0
        if target_theta == float("-inf"):
            target_theta = -1.0
        return Ein, target_theta, sign


    if __name__ == '__main__':
        T = 5000
        total_Ein = 0
        sum_Eout = 0
        for i in range(0, T):
            x, y = generate_input_data(i)
            curr_Ein, theta, sign = calculate_Ein(x, y)
            total_Ein = total_Ein + curr_Ein
            # E_out from the Question 16 formula
            sum_Eout = sum_Eout + 0.5 + 0.3 * sign * (abs(theta) - 1)
        # 17: average E_in over T runs
        print((total_Ein * 1.0) / (T * 20))
        # 18: average E_out over T runs
        print((sum_Eout * 1.0) / T)

My results: 0.17014 for Question 17 and 0.2561434158451364 for Question 18.
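As a sanity check on the closed-form E_out used for Question 18, the formula can be compared with a direct simulation on freshly drawn noisy data. This is only a sketch: the function names, the sample size, and the use of `np.random.default_rng` are assumptions of mine and do not come from the original code.

```python
import numpy as np

def eout_formula(sign, theta):
    """Closed-form E_out from Question 16."""
    return 0.5 + 0.3 * sign * (abs(theta) - 1)

def eout_simulated(sign, theta, n=200_000, seed=0):
    """Estimate E_out by drawing n noisy points and measuring the error rate."""
    rng = np.random.default_rng(seed)
    x = rng.uniform(-1, 1, n)
    flip = np.where(rng.random(n) < 0.2, -1, 1)   # 20% label noise
    y = np.sign(x) * flip
    pred = sign * np.where(x > theta, 1, -1)      # h(x) = s * sign(x - theta)
    return float(np.mean(pred != y))

for s, t in [(1, 0.3), (-1, -0.5)]:
    print(s, t, eout_formula(s, t), eout_simulated(s, t))
```

The two numbers in each row should agree up to sampling noise, which shrinks as `n` grows.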

## 19

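Download the training set. For each feature dimension separately, compute the error rate with the method from Questions 16-18 and keep the best hypothesis for that dimension. Then, among the 9 per-dimension winners, pick the best one as the overall hypothesis, and record its parameters, its dimension, and its minimum error rate.

In practice this is no different from Questions 16-18. Just remember to save the best result so far and compare against it.

The code is as follows: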

    import numpy as np


    def read_input_data(path):
        x = []
        y = []
        with open(path) as f:
            for line in f:
                items = line.strip().split(' ')
                tmp_x = []
                # every column except the last is a feature; the last is the label
                for i in range(0, len(items) - 1):
                    tmp_x.append(float(items[i]))
                x.append(tmp_x)
                y.append(float(items[-1]))
        return np.array(x), np.array(y)


    def calculate_Ein(x, y):
        # candidate thresholds: -inf, midpoints between adjacent (sorted) points, +inf
        thetas = np.array([float("-inf")] + [(x[i] + x[i + 1]) / 2 for i in range(0, x.shape[0] - 1)] + [float("inf")])
        Ein = x.shape[0]
        sign = 1
        target_theta = 0.0
        # positive and negative rays
        for theta in thetas:
            y_positive = np.where(x > theta, 1, -1)
            y_negative = np.where(x < theta, 1, -1)
            error_positive = sum(y_positive != y)
            error_negative = sum(y_negative != y)
            if error_positive > error_negative:
                if Ein > error_negative:
                    Ein = error_negative
                    sign = -1
                    target_theta = theta
            else:
                if Ein > error_positive:
                    Ein = error_positive
                    sign = 1
                    target_theta = theta
        return Ein, target_theta, sign


    if __name__ == '__main__':
        # 19
        x, y = read_input_data("hw2_train.dat")
        # record the optimal decision stump parameters
        Ein = x.shape[0]
        theta = 0
        sign = 1
        index = 0
        # search every dimension for the best decision stump
        for i in range(0, x.shape[1]):
            input_x = x[:, i]
            # sort the (feature, label) pairs by feature value
            input_data = np.transpose(np.array([input_x, y]))
            input_data = input_data[np.argsort(input_data[:, 0])]
            curr_Ein, curr_theta, curr_sign = calculate_Ein(input_data[:, 0], input_data[:, 1])
            if Ein > curr_Ein:
                Ein = curr_Ein
                theta = curr_theta
                sign = curr_sign
                index = i
        print((Ein * 1.0) / x.shape[0])

The result is 0.25.
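The per-dimension search can also be tried on a tiny hand-made dataset where the answer is easy to see. The sketch below is a compact re-implementation for illustration only. The names `best_stump_1d` and `best_stump` and the toy data are hypothetical, and its tie-breaking may differ from the code above.

```python
import numpy as np

def best_stump_1d(x, y):
    """Return (errors, theta, sign) of the best stump sign * sign(x - theta) on one feature."""
    xs = np.sort(x)
    thetas = np.concatenate(([-np.inf], (xs[:-1] + xs[1:]) / 2, [np.inf]))
    best = (len(x) + 1, 0.0, 1)
    for theta in thetas:
        for sign in (1, -1):
            pred = sign * np.where(x > theta, 1, -1)
            errors = int(np.sum(pred != y))
            if errors < best[0]:
                best = (errors, float(theta), sign)
    return best

def best_stump(X, y):
    """Return (errors, theta, sign, feature_index) of the best stump over all features."""
    results = [best_stump_1d(X[:, j], y) + (j,) for j in range(X.shape[1])]
    return min(results, key=lambda r: r[0])

# Toy data: feature 1 separates the labels perfectly at 0, feature 0 does not.
X = np.array([[0.9, -2.0], [0.1, -1.0], [0.8, 1.0], [0.2, 2.0]])
y = np.array([-1, -1, 1, 1])
print(best_stump(X, y))  # (0, 0.0, 1, 1): zero errors, theta = 0, positive ray, feature 1
```

Because the predictions are computed on the unsorted feature column, this version does not need to sort the labels together with the features. Only the thresholds come from the sorted values.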

## 20

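Take the best hypothesis from Question 19 and compute E_out on the corresponding dimension of the test set.

Note that E_out here is measured on the test set, not computed from the formula as in Questions 16-18.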

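The code is as follows. Add it directly to the end of the main block from Question 19 (it is indented one level here so that it sits inside `if __name__ == '__main__':`):

        # 20
        # test process: apply the stump found in Question 19 to the test set
        test_x, test_y = read_input_data("hw2_test.dat")
        test_x = test_x[:, index]
        predict_y = np.array([])
        if sign == 1:
            predict_y = np.where(test_x > theta, 1.0, -1.0)
        else:
            predict_y = np.where(test_x < theta, 1.0, -1.0)
        Eout = sum(predict_y != test_y)
        print((Eout * 1.0) / test_x.shape[0])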

The result is 0.355.
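The evaluation step can also be factored into two small helpers, which makes it easy to check on made-up data. This is a minimal sketch: `stump_predict`, `error_rate`, and the toy test set are hypothetical names and values, not part of the original solution.

```python
import numpy as np

def stump_predict(X, index, theta, sign):
    """Predict with h(x) = sign * sign(x[index] - theta)."""
    return sign * np.where(X[:, index] > theta, 1, -1)

def error_rate(pred, y):
    """Fraction of points where the prediction disagrees with the label."""
    return float(np.mean(pred != y))

# Toy test set: only feature 1 is used by the stump.
X_test = np.array([[0.0, -3.0], [0.0, -0.5], [0.0, 0.5], [0.0, 3.0]])
y_test = np.array([-1, 1, 1, 1])

pred = stump_predict(X_test, index=1, theta=0.0, sign=1)
print(error_rate(pred, y_test))  # 0.25: one of the four points is misclassified
```

With the real data, `index`, `theta`, and `sign` would be the values found in Question 19, and `X_test`, `y_test` would come from `hw2_test.dat`.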