前言

收集日志的组件多不胜数,有ELK久负盛名组合中的logstash, 也有EFK组合中的filebeat,更有cncf新贵fluentd,另外还有大数据领域使用比较多的flume。本次主要说另外一种,和fluentd一脉相承的fluent bit。

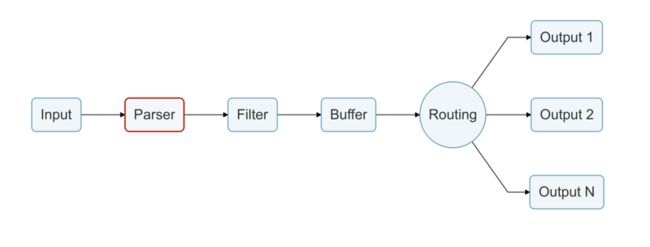

Fluent Bit是一个开源和多平台的Log Processor and Forwarder,它允许您从不同的来源收集数据/日志,统一并将它们发送到多个目的地。它与Docker和Kubernetes环境完全兼容。Fluent Bit用C语言编写,具有可插拔的架构,支持大约30个扩展。它快速轻便,通过TLS为网络运营提供所需的安全性。

之所以选择fluent bit,看重了它的高性能。下面是官方贴出的一张与fluentd对比图:

| Fluentd | Fluent Bit | |

|---|---|---|

| Scope | Containers / Servers | Containers / Servers |

| Language | C & Ruby | C |

| Memory | ~40MB | ~450KB |

| Performance | High Performance | High Performance |

| Dependencies | Built as a Ruby Gem, it requires a certain number of gems. | Zero dependencies, unless some special plugin requires them. |

| Plugins | More than 650 plugins available | Around 35 plugins available |

| License | Apache License v2.0 | Apache License v2.0 |

在已经拥有的插件满足需求和场景的前提下,fluent bit无疑是一个很好的选择。

fluent bit 简介

在使用的这段时间之后,总结以下几点优点:

- 支持routing,适合多output的场景。比如有些业务日志,或写入到es中,供查询。或写入到hdfs中,供大数据进行分析。

- fliter支持lua。对于那些对c语言hold不住的团队,可以用lua写自己的filter。

- output 除了官方已经支持的十几种,还支持用golang写output。例如:fluent-bit-kafka-output-plugin

k8s日志收集

k8s日志分析

主要讲kubeadm部署的k8s集群。日志主要有:

- kubelet和etcd的日志,一般采用systemd部署,自然而然就是要支持systemd格式日志的采集。filebeat并不支持该类型。

- kube-apiserver等组件stderr和stdout日志,这个一般输出的格式取决于docker的日志驱动,一般为json-file。

- 业务落盘的日志。支持tail文件的采集组件都满足。这点不在今天的讨论范围之内。

部署方案

fluent bit 采取DaemonSet部署。 如下图:

部署yaml

---

apiVersion: v1

kind: Service

metadata:

name: elasticsearch-logging

namespace: kube-system

labels:

k8s-app: elasticsearch-logging

kubernetes.io/cluster-service: "true"

addonmanager.kubernetes.io/mode: Reconcile

kubernetes.io/name: "Elasticsearch"

spec:

ports:

- port: 9200

protocol: TCP

targetPort: db

selector:

k8s-app: elasticsearch-logging

---

# RBAC authn and authz

apiVersion: v1

kind: ServiceAccount

metadata:

name: elasticsearch-logging

namespace: kube-system

labels:

k8s-app: elasticsearch-logging

kubernetes.io/cluster-service: "true"

addonmanager.kubernetes.io/mode: Reconcile

---

kind: ClusterRole

apiVersion: rbac.authorization.k8s.io/v1

metadata:

name: elasticsearch-logging

labels:

k8s-app: elasticsearch-logging

kubernetes.io/cluster-service: "true"

addonmanager.kubernetes.io/mode: Reconcile

rules:

- apiGroups:

- ""

resources:

- "services"

- "namespaces"

- "endpoints"

verbs:

- "get"

---

kind: ClusterRoleBinding

apiVersion: rbac.authorization.k8s.io/v1

metadata:

namespace: kube-system

name: elasticsearch-logging

labels:

k8s-app: elasticsearch-logging

kubernetes.io/cluster-service: "true"

addonmanager.kubernetes.io/mode: Reconcile

subjects:

- kind: ServiceAccount

name: elasticsearch-logging

namespace: kube-system

apiGroup: ""

roleRef:

kind: ClusterRole

name: elasticsearch-logging

apiGroup: ""

---

# Elasticsearch deployment itself

apiVersion: apps/v1

kind: StatefulSet

metadata:

name: elasticsearch-logging

namespace: kube-system

labels:

k8s-app: elasticsearch-logging

version: v6.3.0

kubernetes.io/cluster-service: "true"

addonmanager.kubernetes.io/mode: Reconcile

spec:

serviceName: elasticsearch-logging

replicas: 2

selector:

matchLabels:

k8s-app: elasticsearch-logging

version: v6.3.0

template:

metadata:

labels:

k8s-app: elasticsearch-logging

version: v6.3.0

kubernetes.io/cluster-service: "true"

spec:

serviceAccountName: elasticsearch-logging

containers:

- image: k8s.gcr.io/elasticsearch:v6.3.0

name: elasticsearch-logging

resources:

# need more cpu upon initialization, therefore burstable class

limits:

cpu: 1000m

requests:

cpu: 100m

ports:

- containerPort: 9200

name: db

protocol: TCP

- containerPort: 9300

name: transport

protocol: TCP

volumeMounts:

- name: elasticsearch-logging

mountPath: /data

env:

- name: "NAMESPACE"

valueFrom:

fieldRef:

fieldPath: metadata.namespace

# Elasticsearch requires vm.max_map_count to be at least 262144.

# If your OS already sets up this number to a higher value, feel free

# to remove this init container.

initContainers:

- image: alpine:3.6

command: ["/sbin/sysctl", "-w", "vm.max_map_count=262144"]

name: elasticsearch-logging-init

securityContext:

privileged: true

volumeClaimTemplates:

- metadata:

name: elasticsearch-logging

annotations:

volume.beta.kubernetes.io/storage-class: gp2

spec:

accessModes:

- "ReadWriteOnce"

resources:

requests:

storage: 10Gi

---

apiVersion: v1

kind: ConfigMap

metadata:

name: fluent-bit-config

namespace: kube-system

labels:

k8s-app: fluent-bit

data:

# Configuration files: server, input, filters and output

# ======================================================

fluent-bit.conf: |

[SERVICE]

Flush 1

Log_Level info

Daemon off

Parsers_File parsers.conf

HTTP_Server On

HTTP_Listen 0.0.0.0

HTTP_Port 2020

@INCLUDE input-kubernetes.conf

@INCLUDE filter-kubernetes.conf

@INCLUDE output-elasticsearch.conf

input-kubernetes.conf: |

[INPUT]

Name tail

Tag kube.*

Path /var/log/containers/*.log

Parser docker

DB /var/log/flb_kube.db

Mem_Buf_Limit 5MB

Skip_Long_Lines On

Refresh_Interval 10

[INPUT]

Name systemd

Tag host.*

Systemd_Filter _SYSTEMD_UNIT=kubelet.service

Path /var/log/journal

DB /var/log/flb_host.db

filter-kubernetes.conf: |

[FILTER]

Name kubernetes

Match kube.*

Kube_URL https://kubernetes.default.svc.cluster.local:443

Merge_Log On

K8S-Logging.Parser On

K8S-Logging.Exclude On

[FILTER]

Name kubernetes

Match host.*

Kube_URL https://kubernetes.default.svc.cluster.local:443

Merge_Log On

Use_Journal On

output-elasticsearch.conf: |

[OUTPUT]

Name es

Match *

Host ${FLUENT_ELASTICSEARCH_HOST}

Port ${FLUENT_ELASTICSEARCH_PORT}

Logstash_Format On

Retry_Limit False

parsers.conf: |

[PARSER]

Name apache

Format regex

Regex ^(?[^ ]*) [^ ]* (?[^ ]*) \[(? 总结

真实场景的日志收集比较复杂,在日志量大的情况下,一般要引入kafka。

此外关于注意日志的lograte。一般来说,docker是支持该功能的。可以通过下面的配置解决:

cat > /etc/docker/daemon.json <在k8s中运行的业务日志,不仅要考虑清除过时的日志,还要考虑新增pod的日志的收集。这个时候,往往需要在fluent bit上面再包一层逻辑,获取需要收集的日志路径。比如log-pilot。