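Building and Deploying an Enterprise Container Cloud on Kubernetes

Contents

Chapter 1: Kubernetes Introduction and Environment Preparation

1. What Is K8S

2. Main Features

3. Component Overview

3.1 Master Node

3.2 Node

4. Lab Environment Preparation

4.1 Planning

4.2 Network Settings

4.3 Configure a Static IP Address

4.4 Disable SELinux, the Firewall, and NetworkManager

4.5 Configure Hostname Resolution

4.6 Configure the EPEL Repository

4.7 Configure Passwordless SSH Login

Chapter 2: Kubernetes Cluster Initialization

1. Install Docker

2. Prepare the Deployment Directories

3. Prepare the Software Packages

4. Unpack the Software Packages

5. Update the PATH Environment Variable

6. Create the CA Certificate Manually

6.1 Install CFSSL

6.2 Initialize CFSSL

6.3 Create the JSON Configuration File Used to Generate the CA

6.4 Create the JSON Configuration File for the CA Certificate Signing Request (CSR)

6.5 Generate the CA Certificate (ca.pem) and Key (ca-key.pem)

6.6 Distribute the Certificates

7. Deploy the etcd Cluster

7.1 Prepare the etcd Package

7.2 Create the etcd Certificate Signing Request

7.3 Generate the etcd Certificate and Private Key

7.4 Copy the Certificates to /opt/kubernetes/ssl

7.5 Create the etcd Configuration File

7.6 Create the etcd systemd Service

7.7 Reload systemd and Start etcd

7.8 Verify the Cluster

Chapter 3: Deploying the Kubernetes Master Node

1. Deploy the API Server

1.1 Prepare the Binaries

1.2 Create the JSON Configuration File for the CSR

1.3 Generate the kubernetes Certificate and Private Key

1.4 Create the Client Token File Used by kube-apiserver

1.5 Create the Basic Username/Password Authentication File

1.6 Deploy the Kubernetes API Server

1.7 Start the API Server

2. Deploy the Controller Manager

3. Deploy the Kubernetes Scheduler

4. Deploy the kubectl Command-Line Tool

Chapter 4: Deploying the Kubernetes Nodes

4.1 kubelet Deployment

4.2 kube-proxy Deployment

Chapter 5: Flannel Network Deployment

5.1 Deploy Flannel

5.2 Create a K8S Application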
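Chapter 1: Kubernetes Introduction and Environment Preparation

1. What Is K8S

Kubernetes is a leading distributed-architecture solution built on container technology, and an open development platform. It is not tied to any programming language or programming interface, and it is a complete platform for supporting distributed systems. Built on top of Docker, it provides mechanisms for deploying, maintaining, and scaling applications, which makes it easy to manage containerized applications running across multiple machines.

2. Main Features

- Packages and instantiates applications with Docker;

- Runs and manages containers across machines as a cluster;

- Solves the problem of communication between Docker containers on different machines;

- Uses a self-healing mechanism to keep the container cluster in the state the user expects.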

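3. Component Overview

In Kubernetes, Node, Pod, Replication Controller, Service, and so on can all be regarded as "resource objects". Almost every resource object can be created, deleted, updated, and queried with the kubectl tool (through API calls), and is persisted in etcd.

- Master: the cluster control and management node. Every command is handled by the master.

- Node: a workload node in the Kubernetes cluster. The Master assigns work to it, and when a Node goes down the Master automatically moves its workload to other nodes.

- Pod: the most important and most basic concept in Kubernetes. Every Pod contains a "root container" plus one or more tightly coupled application containers.

- ETCD: stores all cluster data and operation records. If it fails, the whole cluster may stop working or lose data.

- Label: a key=value pair whose key and value are chosen by the user. Labels can be attached to any kind of resource object, and a resource object can carry any number of them.

- RC: a Replication Controller declares that the number of replicas of a given Pod must match an expected value at all times. Its definition and use include:

(1) The desired number of Pod replicas (replicas).

(2) The Label Selector used to select the target Pods.

(3) The Pod template (template) used to create new Pods when there are fewer replicas than desired.

(4) Changing the replica count in the RC scales the Pods out or in.

(5) Changing the image version in the RC's Pod template performs a rolling upgrade of the Pods.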
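The three parts of an RC definition (replicas, selector, template) can be made concrete with a small sketch. The function below is hypothetical and not part of the original guide: it only prints a ReplicationController manifest to standard output, and the name `nginx`, the replica count `3`, and the image tag `nginx:1.13` are illustrative assumptions.

```shell
# Print a minimal ReplicationController manifest.
# Arguments: <name> <replicas> <image> (all illustrative values).
rc_manifest() {
    name=$1 replicas=$2 image=$3
    cat <<EOF
apiVersion: v1
kind: ReplicationController
metadata:
  name: $name
spec:
  replicas: $replicas
  selector:
    app: $name
  template:
    metadata:
      labels:
        app: $name
    spec:
      containers:
      - name: $name
        image: $image
EOF
}

# Show the manifest for three replicas of a hypothetical nginx Pod.
rc_manifest nginx 3 nginx:1.13
```

Once the cluster built in the later chapters is running, the output could be piped to `kubectl create -f -`. Note that the selector (`app: <name>`) matches the label in the template, which is how the RC recognizes its own Pods.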

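3.1 Master Node

- API Server

The single entry point for creating, deleting, updating, and querying every resource; all commands handled by the master go through it.

Provides the REST API for cluster management, including authentication and authorization, data validation, and cluster state changes;

Only the API Server talks to etcd directly;

Other modules query or modify data through the API Server;

Acts as the hub for data exchange and communication between the other modules;

- Scheduler

Assigns Pods to nodes in the cluster;

Watches kube-apiserver for Pods that have not yet been assigned a Node;

Assigns nodes to those Pods according to the scheduling policy;

- Controller Manager

Runs all other cluster-level functions and serves as the automation control center for resource objects;

Consists of a set of controllers that monitor the state of the whole cluster through the API Server and keep the cluster in the expected state;

- ETCD

All persistent state is stored in etcd.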

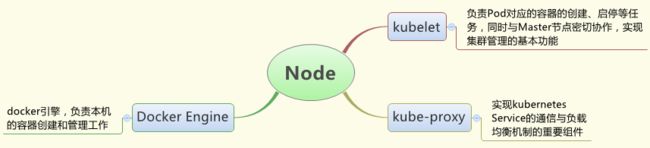

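3.2 Node

By default, Nodes can be added to a Kubernetes cluster dynamically. Once a Node is under cluster management, its kubelet periodically reports the Node's status to the Master, including which Pods are running on it, so the Master knows each Node's resource usage and can schedule resources efficiently and evenly. If a Node fails to report on time, the Master considers it lost, marks it NotReady, and then triggers workload migration.

- Kubelet

Manages Pods and their containers, images, and volumes, which is how the cluster manages the node.

- Kube-proxy

Provides network proxying and load balancing so that Services can be reached.

- Docker Engine

Manages the containers on the node.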

4. Lab Environment Preparation

4.1 Planning

This lab uses 1 master and 2 nodes, and every machine also runs an etcd member. Operating system: CentOS-7.x-x86_64.

| Hostname | IP address (NAT) | CPU | Memory | Role |
| --- | --- | --- | --- | --- |
| k8s-master | eth0: 10.0.0.25 | 1 vCPU | 2 GB | Kubernetes master node / etcd node |
| k8s-node-1 | eth0: 10.0.0.26 | 1 vCPU | 2 GB | Kubernetes node / etcd node |
| k8s-node-2 | eth0: 10.0.0.27 | 1 vCPU | 2 GB | Kubernetes node / etcd node |

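4.2 Network Settings

4.3 Configure a Static IP Address

# Remove the UUID, the MAC address, and any other settings. The three nodes are identical except for IP address and hostname.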

[root@k8s-master ~]# cat /etc/sysconfig/network-scripts/ifcfg-eth0

TYPE=Ethernet

BOOTPROTO=static

NAME=eth0

DEVICE=eth0

ONBOOT=yes

IPADDR=10.0.0.25

NETMASK=255.255.255.0

GATEWAY=10.0.0.254

DNS1=223.5.5.5

# Restart the network service

[root@k8s-master ~]# systemctl restart network

# Configure DNS resolution

[root@k8s-master ~]# vi /etc/resolv.conf

nameserver 223.5.5.5

4.4 Disable SELinux, the Firewall, and NetworkManager

setenforce 0

sed -i 's#SELINUX=enforcing#SELINUX=disabled#' /etc/selinux/config

systemctl disable firewalld.service

systemctl stop firewalld.service

systemctl stop NetworkManager

systemctl disable NetworkManager

4.5 Configure Hostname Resolution

Do this on all three nodes.

# Identical on all three nodes

[root@k8s-master ~]# cat /etc/hosts

127.0.0.1 localhost localhost.localdomain localhost4 localhost4.localdomain4

::1 localhost localhost.localdomain localhost6 localhost6.localdomain6

10.0.0.25 k8s-master

10.0.0.26 k8s-node-1

10.0.0.27 k8s-node-2
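Because every later step addresses the machines by name or IP, it can help to confirm that each node's hosts file really contains all three names. The helper below is a hypothetical sketch, not part of the original guide: `missing_hosts` prints every expected name that has no entry on a non-comment line of the given file.

```shell
# Print each expected host name that is missing from a hosts file.
# Usage: missing_hosts <hosts-file> <name>...
missing_hosts() {
    file=$1; shift
    for name in "$@"; do
        # A name counts as present when it appears as a whole field
        # (after the IP address) on a line that is not a comment.
        awk -v n="$name" '
            !/^[ \t]*#/ { for (i = 2; i <= NF; i++) if ($i == n) found = 1 }
            END { exit !found }
        ' "$file" || echo "$name"
    done
}

# Example (prints nothing when all three entries are present):
#   missing_hosts /etc/hosts k8s-master k8s-node-1 k8s-node-2
```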

4.6 Configure the EPEL Repository

Do this on all three nodes.

rpm -ivh http://mirrors.aliyun.com/epel/epel-release-latest-7.noarch.rpm

# Install commonly used tools

yum install -y net-tools vim lrzsz tree screen lsof tcpdump nc mtr nmap

# Make sure the node can reach the Internet

[root@k8s-master ~]# ping www.baidu.com -c3

PING www.a.shifen.com (61.135.169.121) 56(84) bytes of data.

64 bytes from 61.135.169.121: icmp_seq=1 ttl=128 time=5.41 ms

64 bytes from 61.135.169.121: icmp_seq=2 ttl=128 time=6.55 ms

64 bytes from 61.135.169.121: icmp_seq=3 ttl=128 time=8.97 ms

--- www.a.shifen.com ping statistics ---

3 packets transmitted, 3 received, 0% packet loss, time 2023ms

rtt min/avg/max/mdev = 5.418/6.981/8.974/1.486 ms

4.7 Configure Passwordless SSH Login

Do this on the master node only.

[root@k8s-master ~]# ssh-keygen -t rsa

Generating public/private rsa key pair.

Enter file in which to save the key (/root/.ssh/id_rsa):

Enter passphrase (empty for no passphrase):

Enter same passphrase again:

Your identification has been saved in /root/.ssh/id_rsa.

Your public key has been saved in /root/.ssh/id_rsa.pub.

The key fingerprint is:

b1:a0:5b:02:57:0e:8f:1e:25:bf:46:1f:d1:f3:24:c4 root@k8s-master

The key's randomart image is:

+--[ RSA 2048]----+

| o o .+. |

| X .E . |

| . + * o = |

| + + + + . |

| + + S |

| = |

| . |

| |

| |

+-----------------+

[root@k8s-master ~]# ssh-copy-id k8s-master

The authenticity of host 'k8s-master (10.0.0.25)' can't be established.

ECDSA key fingerprint is 75:5c:83:a1:b4:cc:bf:28:71:a5:d5:d1:94:35:3c:9a.

Are you sure you want to continue connecting (yes/no)? yes

/usr/bin/ssh-copy-id: INFO: attempting to log in with the new key(s), to filter out any that are already installed

/usr/bin/ssh-copy-id: INFO: 1 key(s) remain to be installed -- if you are prompted now it is to install the new keys

root@k8s-master's password:

Number of key(s) added: 1

Now try logging into the machine, with: "ssh 'k8s-master'"

and check to make sure that only the key(s) you wanted were added.

[root@k8s-master ~]# ssh-copy-id k8s-node-1

The authenticity of host 'k8s-node-1 (10.0.0.26)' can't be established.

ECDSA key fingerprint is 75:5c:83:a1:b4:cc:bf:28:71:a5:d5:d1:94:35:3c:9a.

Are you sure you want to continue connecting (yes/no)? yes

/usr/bin/ssh-copy-id: INFO: attempting to log in with the new key(s), to filter out any that are already installed

/usr/bin/ssh-copy-id: INFO: 1 key(s) remain to be installed -- if you are prompted now it is to install the new keys

root@k8s-node-1's password:

Number of key(s) added: 1

Now try logging into the machine, with: "ssh 'k8s-node-1'"

and check to make sure that only the key(s) you wanted were added.

[root@k8s-master ~]# ssh-copy-id k8s-node-2

The authenticity of host 'k8s-node-2 (10.0.0.27)' can't be established.

ECDSA key fingerprint is 75:5c:83:a1:b4:cc:bf:28:71:a5:d5:d1:94:35:3c:9a.

Are you sure you want to continue connecting (yes/no)? yes

/usr/bin/ssh-copy-id: INFO: attempting to log in with the new key(s), to filter out any that are already installed

/usr/bin/ssh-copy-id: INFO: 1 key(s) remain to be installed -- if you are prompted now it is to install the new keys

root@k8s-node-2's password:

Number of key(s) added: 1

Now try logging into the machine, with: "ssh 'k8s-node-2'"

and check to make sure that only the key(s) you wanted were added.

Chapter 2: Kubernetes Cluster Initialization

1. Install Docker

Do this on all three nodes.

# Step 1: add a Docker repository mirror hosted in China

[root@k8s-master ~]# cd /etc/yum.repos.d/

[root@k8s-master yum.repos.d]# wget https://mirrors.aliyun.com/docker-ce/linux/centos/docker-ce.repo

# Step 2: install Docker

[root@k8s-master ~]# yum install -y docker-ce

# Step 3: start the daemon and enable it at boot

[root@k8s-master ~]# systemctl start docker

[root@k8s-master ~]# systemctl enable docker

2. Prepare the Deployment Directories

Do this on all three nodes.

[root@k8s-master ~]# mkdir -p /opt/kubernetes/{cfg,bin,ssl,log}

[root@k8s-master ~]# tree -L 1 /opt/kubernetes/

/opt/kubernetes/

├── bin

├── cfg

├── log

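└── ssl

3. Prepare the Software Packages

Baidu Netdisk download:

Link: https://pan.baidu.com/s/1kUNV7t8SSF_yuP_WDaXj5g

Password: wexh

4. Unpack the Software Packages

Do this on the master node.

# Upload the downloaded package to /usr/local/src/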

[root@k8s-master ~]# ll /usr/local/src/

总用量 579812

-rw-r--r-- 1 root root 593725046 6月 2 13:27 k8s-v1.10.1-manual.zip

# Unpack

cd /usr/local/src/

unzip k8s-v1.10.1-manual.zip

cd /usr/local/src/k8s-v1.10.1-manual/k8s-v1.10.1

tar zxf kubernetes.tar.gz

tar zxf kubernetes-server-linux-amd64.tar.gz

tar zxf kubernetes-client-linux-amd64.tar.gz

tar zxf kubernetes-node-linux-amd64.tar.gz

mv ./* /usr/local/src/

5. Update the PATH Environment Variable

Do this on all three nodes.

[root@k8s-master ~]# sed -i 's#PATH=$PATH:$HOME/bin#PATH=$PATH:$HOME/bin:/opt/kubernetes/bin#g' /root/.bash_profile

[root@k8s-master ~]# source /root/.bash_profile

6. Create the CA Certificate Manually

6.1 Install CFSSL

Do this on the master node.

[root@k8s-master ~]# cd /usr/local/src/

[root@k8s-master src]# wget https://pkg.cfssl.org/R1.2/cfssl_linux-amd64

[root@k8s-master src]# wget https://pkg.cfssl.org/R1.2/cfssljson_linux-amd64

[root@k8s-master src]# wget https://pkg.cfssl.org/R1.2/cfssl-certinfo_linux-amd64

[root@k8s-master src]# chmod +x cfssl*

[root@k8s-master src]# mv cfssl-certinfo_linux-amd64 /opt/kubernetes/bin/cfssl-certinfo

[root@k8s-master src]# mv cfssljson_linux-amd64 /opt/kubernetes/bin/cfssljson

[root@k8s-master src]# mv cfssl_linux-amd64 /opt/kubernetes/bin/cfssl

# Copy the cfssl binaries to k8s-node-1 and k8s-node-2. With more nodes in a real deployment, copy them to every node.

[root@k8s-master src]# scp /opt/kubernetes/bin/cfssl* 10.0.0.26:/opt/kubernetes/bin

[root@k8s-master src]# scp /opt/kubernetes/bin/cfssl* 10.0.0.27:/opt/kubernetes/bin
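The same "copy to 10.0.0.26, then to 10.0.0.27" pattern recurs throughout this guide for binaries, certificates, and kubeconfig files. A small loop keeps the node list in one place. This is a sketch rather than the author's method: `NODES`, `DRY_RUN`, and `distribute` are hypothetical names, and by default the function only prints the `scp` commands it would run.

```shell
# Worker node addresses taken from the planning table.
NODES="10.0.0.26 10.0.0.27"

# Copy files to the same destination directory on every worker node.
# Usage: distribute <dest-dir> <file>...
# With DRY_RUN=1 (the default here) the scp commands are only printed.
distribute() {
    dest=$1; shift
    for node in $NODES; do
        if [ "${DRY_RUN:-1}" = "1" ]; then
            echo "scp $* $node:$dest"
        else
            scp "$@" "$node:$dest"
        fi
    done
}

# Preview the copy of the three cfssl binaries.
distribute /opt/kubernetes/bin \
    /opt/kubernetes/bin/cfssl \
    /opt/kubernetes/bin/cfssljson \
    /opt/kubernetes/bin/cfssl-certinfo
```

Setting `DRY_RUN=0` before calling `distribute` performs the copy, relying on the passwordless SSH login configured in section 4.7.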

6.2 Initialize CFSSL

Do this on the master node.

[root@k8s-master src]# mkdir ssl && cd ssl

[root@k8s-master ssl]# cfssl print-defaults config > config.json

[root@k8s-master ssl]# cfssl print-defaults csr > csr.json

6.3 Create the JSON Configuration File Used to Generate the CA

Do this on the master node.

[root@k8s-master ssl]# cd /usr/local/src/ssl

[root@k8s-master ssl]# vim ca-config.json

{

"signing": {

"default": {

"expiry": "8760h"

},

"profiles": {

"kubernetes": {

"usages": [

"signing",

"key encipherment",

"server auth",

"client auth"

],

"expiry": "8760h"

}

}

}

}
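Both `expiry` values above are given in hours, so it is worth working out what `8760h` means in days: certificates signed with this profile expire after one year and must be reissued before then. The helper name `hours_to_days` is hypothetical and is only used for the arithmetic.

```shell
# Convert a cfssl-style duration such as "8760h" to whole days.
hours_to_days() {
    h=${1%h}            # strip the trailing "h"
    echo $(( h / 24 ))
}

hours_to_days 8760h     # prints 365
```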

6.4 Create the JSON Configuration File for the CA Certificate Signing Request (CSR)

Do this on the master node.

[root@k8s-master ssl]# cd /usr/local/src/ssl

[root@k8s-master ssl]# vim ca-csr.json

{

"CN": "kubernetes",

"key": {

"algo": "rsa",

"size": 2048

},

"names": [

{

"C": "CN",

"ST": "BeiJing",

"L": "BeiJing",

"O": "k8s",

"OU": "System"

}

]

}

6.5 Generate the CA Certificate (ca.pem) and Key (ca-key.pem)

Do this on the master node.

[root@k8s-master ssl]# cd /usr/local/src/ssl

[root@k8s-master ssl]# cfssl gencert -initca ca-csr.json | cfssljson -bare ca

[root@k8s-master ssl]# ls -l ca*

-rw-r--r-- 1 root root 290 6月 2 15:58 ca-config.json

-rw-r--r-- 1 root root 1001 6月 2 15:58 ca.csr

-rw-r--r-- 1 root root 208 6月 2 15:58 ca-csr.json

-rw------- 1 root root 1675 6月 2 15:58 ca-key.pem

-rw-r--r-- 1 root root 1359 6月 2 15:58 ca.pem

6.6 Distribute the Certificates

Do this on the master node.

[root@k8s-master ssl]# cp ca.csr ca.pem ca-key.pem ca-config.json /opt/kubernetes/ssl

[root@k8s-master ssl]# scp ca.csr ca.pem ca-key.pem ca-config.json 10.0.0.26:/opt/kubernetes/ssl

[root@k8s-master ssl]# scp ca.csr ca.pem ca-key.pem ca-config.json 10.0.0.27:/opt/kubernetes/ssl

7. Deploy the etcd Cluster

7.1 Prepare the etcd Package

Do this on the master node.

[root@k8s-master ~]# cd /usr/local/src/

[root@k8s-master src]# wget https://github.com/coreos/etcd/releases/download/v3.2.18/etcd-v3.2.18-linux-amd64.tar.gz

[root@k8s-master src]# tar zxf etcd-v3.2.18-linux-amd64.tar.gz

[root@k8s-master src]# cd etcd-v3.2.18-linux-amd64

[root@k8s-master etcd-v3.2.18-linux-amd64]# cp etcd etcdctl /opt/kubernetes/bin/

[root@k8s-master etcd-v3.2.18-linux-amd64]# scp etcd etcdctl 10.0.0.26:/opt/kubernetes/bin/

[root@k8s-master etcd-v3.2.18-linux-amd64]# scp etcd etcdctl 10.0.0.27:/opt/kubernetes/bin/

7.2 Create the etcd Certificate Signing Request

Do this on the master node.

[root@k8s-master ~]# cd /usr/local/src/ssl/

[root@k8s-master ssl]# vim etcd-csr.json

{

"CN": "etcd",

"hosts": [

"127.0.0.1",

"10.0.0.25",

"10.0.0.26",

"10.0.0.27"

],

"key": {

"algo": "rsa",

"size": 2048

},

"names": [

{

"C": "CN",

"ST": "BeiJing",

"L": "BeiJing",

"O": "k8s",

"OU": "System"

}

]

}

7.3 Generate the etcd Certificate and Private Key

Do this on the master node.

[root@k8s-master ssl]# cd /usr/local/src/ssl/

[root@k8s-master ssl]# cfssl gencert -ca=/opt/kubernetes/ssl/ca.pem \

-ca-key=/opt/kubernetes/ssl/ca-key.pem \

-config=/opt/kubernetes/ssl/ca-config.json \

-profile=kubernetes etcd-csr.json | cfssljson -bare etcd

# The following certificate files are generated

[root@k8s-master ssl]# ls -l etcd*

-rw-r--r-- 1 root root 1062 6月 3 10:48 etcd.csr

-rw-r--r-- 1 root root 275 6月 3 10:45 etcd-csr.json

-rw------- 1 root root 1675 6月 3 10:48 etcd-key.pem

-rw-r--r-- 1 root root 1436 6月 3 10:48 etcd.pem

[root@k8s-master ssl]#

7.4 Copy the Certificates to /opt/kubernetes/ssl

Do this on the master node.

[root@k8s-master ssl]# cp etcd*.pem /opt/kubernetes/ssl

[root@k8s-master ssl]# scp etcd*.pem 10.0.0.26:/opt/kubernetes/ssl

[root@k8s-master ssl]# scp etcd*.pem 10.0.0.27:/opt/kubernetes/ssl

7.5 Create the etcd Configuration File

Configuration file on the master node:

[root@k8s-master ~]# vim /opt/kubernetes/cfg/etcd.conf

#[member]

ETCD_NAME="etcd-master"

ETCD_DATA_DIR="/var/lib/etcd/default.etcd"

#ETCD_SNAPSHOT_COUNTER="10000"

#ETCD_HEARTBEAT_INTERVAL="100"

#ETCD_ELECTION_TIMEOUT="1000"

ETCD_LISTEN_PEER_URLS="https://10.0.0.25:2380"

ETCD_LISTEN_CLIENT_URLS="https://10.0.0.25:2379,https://127.0.0.1:2379"

#ETCD_MAX_SNAPSHOTS="5"

#ETCD_MAX_WALS="5"

#ETCD_CORS=""

#[cluster]

ETCD_INITIAL_ADVERTISE_PEER_URLS="https://10.0.0.25:2380"

# if you use different ETCD_NAME (e.g. test),

# set ETCD_INITIAL_CLUSTER value for this name, i.e. "test=http://..."

ETCD_INITIAL_CLUSTER="etcd-master=https://10.0.0.25:2380,etcd-node1=https://10.0.0.26:2380,etcd-node2=https://10.0.0.27:2380"

ETCD_INITIAL_CLUSTER_STATE="new"

ETCD_INITIAL_CLUSTER_TOKEN="k8s-etcd-cluster"

ETCD_ADVERTISE_CLIENT_URLS="https://10.0.0.25:2379"

#[security]

ETCD_CLIENT_CERT_AUTH="true"

ETCD_CA_FILE="/opt/kubernetes/ssl/ca.pem"

ETCD_CERT_FILE="/opt/kubernetes/ssl/etcd.pem"

ETCD_KEY_FILE="/opt/kubernetes/ssl/etcd-key.pem"

ETCD_PEER_CLIENT_CERT_AUTH="true"

ETCD_PEER_CA_FILE="/opt/kubernetes/ssl/ca.pem"

ETCD_PEER_CERT_FILE="/opt/kubernetes/ssl/etcd.pem"

ETCD_PEER_KEY_FILE="/opt/kubernetes/ssl/etcd-key.pem"

Configuration file on node1:

[root@k8s-node-1 ~]# vim /opt/kubernetes/cfg/etcd.conf

#[member]

ETCD_NAME="etcd-node1"

ETCD_DATA_DIR="/var/lib/etcd/default.etcd"

#ETCD_SNAPSHOT_COUNTER="10000"

#ETCD_HEARTBEAT_INTERVAL="100"

#ETCD_ELECTION_TIMEOUT="1000"

ETCD_LISTEN_PEER_URLS="https://10.0.0.26:2380"

ETCD_LISTEN_CLIENT_URLS="https://10.0.0.26:2379,https://127.0.0.1:2379"

#ETCD_MAX_SNAPSHOTS="5"

#ETCD_MAX_WALS="5"

#ETCD_CORS=""

#[cluster]

ETCD_INITIAL_ADVERTISE_PEER_URLS="https://10.0.0.26:2380"

# if you use different ETCD_NAME (e.g. test),

# set ETCD_INITIAL_CLUSTER value for this name, i.e. "test=http://..."

ETCD_INITIAL_CLUSTER="etcd-master=https://10.0.0.25:2380,etcd-node1=https://10.0.0.26:2380,etcd-node2=https://10.0.0.27:2380"

ETCD_INITIAL_CLUSTER_STATE="new"

ETCD_INITIAL_CLUSTER_TOKEN="k8s-etcd-cluster"

ETCD_ADVERTISE_CLIENT_URLS="https://10.0.0.26:2379"

#[security]

ETCD_CLIENT_CERT_AUTH="true"

ETCD_CA_FILE="/opt/kubernetes/ssl/ca.pem"

ETCD_CERT_FILE="/opt/kubernetes/ssl/etcd.pem"

ETCD_KEY_FILE="/opt/kubernetes/ssl/etcd-key.pem"

ETCD_PEER_CLIENT_CERT_AUTH="true"

ETCD_PEER_CA_FILE="/opt/kubernetes/ssl/ca.pem"

ETCD_PEER_CERT_FILE="/opt/kubernetes/ssl/etcd.pem"

ETCD_PEER_KEY_FILE="/opt/kubernetes/ssl/etcd-key.pem"

Configuration file on node2:

[root@k8s-node-2 ~]# vim /opt/kubernetes/cfg/etcd.conf

#[member]

ETCD_NAME="etcd-node2"

ETCD_DATA_DIR="/var/lib/etcd/default.etcd"

#ETCD_SNAPSHOT_COUNTER="10000"

#ETCD_HEARTBEAT_INTERVAL="100"

#ETCD_ELECTION_TIMEOUT="1000"

ETCD_LISTEN_PEER_URLS="https://10.0.0.27:2380"

ETCD_LISTEN_CLIENT_URLS="https://10.0.0.27:2379,https://127.0.0.1:2379"

#ETCD_MAX_SNAPSHOTS="5"

#ETCD_MAX_WALS="5"

#ETCD_CORS=""

#[cluster]

ETCD_INITIAL_ADVERTISE_PEER_URLS="https://10.0.0.27:2380"

# if you use different ETCD_NAME (e.g. test),

# set ETCD_INITIAL_CLUSTER value for this name, i.e. "test=http://..."

ETCD_INITIAL_CLUSTER="etcd-master=https://10.0.0.25:2380,etcd-node1=https://10.0.0.26:2380,etcd-node2=https://10.0.0.27:2380"

ETCD_INITIAL_CLUSTER_STATE="new"

ETCD_INITIAL_CLUSTER_TOKEN="k8s-etcd-cluster"

ETCD_ADVERTISE_CLIENT_URLS="https://10.0.0.27:2379"

#[security]

ETCD_CLIENT_CERT_AUTH="true"

ETCD_CA_FILE="/opt/kubernetes/ssl/ca.pem"

ETCD_CERT_FILE="/opt/kubernetes/ssl/etcd.pem"

ETCD_KEY_FILE="/opt/kubernetes/ssl/etcd-key.pem"

ETCD_PEER_CLIENT_CERT_AUTH="true"

ETCD_PEER_CA_FILE="/opt/kubernetes/ssl/ca.pem"

ETCD_PEER_CERT_FILE="/opt/kubernetes/ssl/etcd.pem"

ETCD_PEER_KEY_FILE="/opt/kubernetes/ssl/etcd-key.pem"
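The three configuration files differ only in `ETCD_NAME` and in the four URL settings that carry the node's own IP address. Everything else, including `ETCD_INITIAL_CLUSTER`, is identical. The sketch below prints just those node-specific lines, which makes the per-node differences easy to review. `render_etcd_conf` is a hypothetical helper, not part of the original guide.

```shell
# Print the node-specific lines of etcd.conf.
# Usage: render_etcd_conf <etcd-member-name> <node-ip>
render_etcd_conf() {
    name=$1 ip=$2
    cat <<EOF
ETCD_NAME="$name"
ETCD_LISTEN_PEER_URLS="https://$ip:2380"
ETCD_LISTEN_CLIENT_URLS="https://$ip:2379,https://127.0.0.1:2379"
ETCD_INITIAL_ADVERTISE_PEER_URLS="https://$ip:2380"
ETCD_ADVERTISE_CLIENT_URLS="https://$ip:2379"
EOF
}

# Lines that must be adapted on node1.
render_etcd_conf etcd-node1 10.0.0.26
```

The member name passed here has to match the corresponding key in `ETCD_INITIAL_CLUSTER` (`etcd-master`, `etcd-node1`, `etcd-node2`), otherwise the member cannot join the cluster.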

7.6 Create the etcd systemd Service

Do this on the master node.

[root@k8s-master ~]# vim /etc/systemd/system/etcd.service

[Unit]

Description=Etcd Server

After=network.target

[Service]

Type=simple

WorkingDirectory=/var/lib/etcd

EnvironmentFile=-/opt/kubernetes/cfg/etcd.conf

# set GOMAXPROCS to number of processors

ExecStart=/bin/bash -c "GOMAXPROCS=$(nproc) /opt/kubernetes/bin/etcd"

Type=notify

[Install]

WantedBy=multi-user.target

[root@k8s-master ~]# scp /etc/systemd/system/etcd.service 10.0.0.26:/etc/systemd/system/

[root@k8s-master ~]# scp /etc/systemd/system/etcd.service 10.0.0.27:/etc/systemd/system/

7.7 Reload systemd and Start etcd

Do this on all three nodes.

systemctl daemon-reload

mkdir /var/lib/etcd

systemctl enable etcd

systemctl start etcd

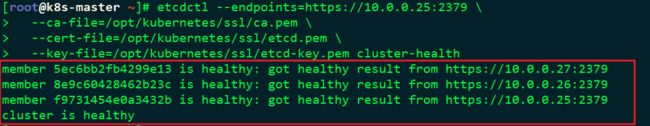

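systemctl status etcd

7.8 Verify the Cluster

Do this on the master node.

[root@k8s-master ~]# etcdctl --endpoints=https://10.0.0.25:2379 \

--ca-file=/opt/kubernetes/ssl/ca.pem \

--cert-file=/opt/kubernetes/ssl/etcd.pem \

--key-file=/opt/kubernetes/ssl/etcd-key.pem cluster-health

If the command reports the cluster as healthy, the etcd cluster has been deployed successfully.

Chapter 3: Deploying the Kubernetes Master Node

1. Deploy the API Server

1.1 Prepare the Binaries

Do this on the master node.

Kubernetes is distributed as prebuilt binaries, so nothing needs to be compiled. Copying the files into place is enough.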

[root@k8s-master ~]# cd /usr/local/src/kubernetes

[root@k8s-master kubernetes]# cp server/bin/kube-apiserver /opt/kubernetes/bin/

[root@k8s-master kubernetes]# cp server/bin/kube-controller-manager /opt/kubernetes/bin/

[root@k8s-master kubernetes]# cp server/bin/kube-scheduler /opt/kubernetes/bin/

1.2 Create the JSON Configuration File for the CSR

Do this on the master node.

[root@k8s-master src]# cd /usr/local/src/ssl/

[root@k8s-master ssl]# vim kubernetes-csr.json

{

"CN": "kubernetes",

"hosts": [

"127.0.0.1",

"10.0.0.25",

"10.1.0.1",

"kubernetes",

"kubernetes.default",

"kubernetes.default.svc",

"kubernetes.default.svc.cluster",

"kubernetes.default.svc.cluster.local"

],

"key": {

"algo": "rsa",

"size": 2048

},

"names": [

{

"C": "CN",

"ST": "BeiJing",

"L": "BeiJing",

"O": "k8s",

"OU": "System"

}

]

}
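The `10.1.0.1` entry in `hosts` is most likely there because it is the first address of the service range `10.1.0.0/16` that this guide passes to kube-apiserver as `--service-cluster-ip-range`. Kubernetes assigns that first address to the built-in `kubernetes` Service, so the API server certificate has to be valid for it. The sketch below derives the address from the CIDR. `first_service_ip` is a hypothetical helper and handles IPv4 only.

```shell
# Print the first usable address (network address + 1) of an IPv4 CIDR.
first_service_ip() {
    cidr=$1
    addr=${cidr%/*}
    prefix=${cidr#*/}
    # Split the dotted quad into four positional parameters.
    old_ifs=$IFS; IFS=.
    set -- $addr
    IFS=$old_ifs
    ip=$(( ($1 << 24) | ($2 << 16) | ($3 << 8) | $4 ))
    mask=$(( (0xFFFFFFFF << (32 - prefix)) & 0xFFFFFFFF ))
    first=$(( (ip & mask) + 1 ))
    printf '%d.%d.%d.%d\n' \
        $(( (first >> 24) & 255 )) $(( (first >> 16) & 255 )) \
        $(( (first >> 8) & 255 ))  $(( first & 255 ))
}

first_service_ip 10.1.0.0/16    # prints 10.1.0.1
```

If a different service range is chosen, the corresponding first address should replace `10.1.0.1` in `kubernetes-csr.json` before the certificate is generated.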

1.3 Generate the kubernetes Certificate and Private Key

Do this on the master node.

[root@k8s-master ssl]# cfssl gencert -ca=/opt/kubernetes/ssl/ca.pem \

-ca-key=/opt/kubernetes/ssl/ca-key.pem \

-config=/opt/kubernetes/ssl/ca-config.json \

-profile=kubernetes kubernetes-csr.json | cfssljson -bare kubernetes

[root@k8s-master ssl]# cp kubernetes*.pem /opt/kubernetes/ssl/

[root@k8s-master ssl]# scp kubernetes*.pem 10.0.0.26:/opt/kubernetes/ssl/

[root@k8s-master ssl]# scp kubernetes*.pem 10.0.0.27:/opt/kubernetes/ssl/

1.4 Create the Client Token File Used by kube-apiserver

Do this on the master node.

[root@k8s-master ~]# head -c 16 /dev/urandom | od -An -t x | tr -d ' '

841276913ac026513f75d267f0fc5212
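The token file is a CSV with four fields: token, user name, user ID, and group. A sketch of producing the line directly from a freshly generated token follows. `new_token` and `bootstrap_csv_line` are hypothetical helper names, and `od -t x1` is used here instead of `-t x` so the bytes are printed one at a time; the result is still 32 hexadecimal characters.

```shell
# Generate a random token: 16 bytes rendered as 32 hex characters.
new_token() {
    head -c 16 /dev/urandom | od -An -t x1 | tr -d ' \n'
}

# Format the CSV line expected in bootstrap-token.csv for a given token.
bootstrap_csv_line() {
    printf '%s,kubelet-bootstrap,10001,"system:kubelet-bootstrap"\n' "$1"
}

# Print a ready-to-use line with a new token.
bootstrap_csv_line "$(new_token)"
```

The same token value must later be given to `kubectl config set-credentials kubelet-bootstrap --token=...` when the bootstrap kubeconfig is created, so it should be recorded.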

# Use the token you generated above.

[root@k8s-master ~]# vim /opt/kubernetes/ssl/bootstrap-token.csv

841276913ac026513f75d267f0fc5212,kubelet-bootstrap,10001,"system:kubelet-bootstrap"

1.5 Create the Basic Username/Password Authentication File

Do this on the master node.

[root@k8s-master ~]# vim /opt/kubernetes/ssl/basic-auth.csv

admin,admin,1

readonly,readonly,2

1.6 Deploy the Kubernetes API Server

Do this on the master node.

[root@k8s-master ~]# vim /usr/lib/systemd/system/kube-apiserver.service

[Unit]

Description=Kubernetes API Server

Documentation=https://github.com/GoogleCloudPlatform/kubernetes

After=network.target

[Service]

ExecStart=/opt/kubernetes/bin/kube-apiserver \

--admission-control=NamespaceLifecycle,LimitRanger,ServiceAccount,DefaultStorageClass,ResourceQuota,NodeRestriction \

--bind-address=10.0.0.25 \

--insecure-bind-address=127.0.0.1 \

--authorization-mode=Node,RBAC \

--runtime-config=rbac.authorization.k8s.io/v1 \

--kubelet-https=true \

--anonymous-auth=false \

--basic-auth-file=/opt/kubernetes/ssl/basic-auth.csv \

--enable-bootstrap-token-auth \

--token-auth-file=/opt/kubernetes/ssl/bootstrap-token.csv \

--service-cluster-ip-range=10.1.0.0/16 \

--service-node-port-range=20000-40000 \

--tls-cert-file=/opt/kubernetes/ssl/kubernetes.pem \

--tls-private-key-file=/opt/kubernetes/ssl/kubernetes-key.pem \

--client-ca-file=/opt/kubernetes/ssl/ca.pem \

--service-account-key-file=/opt/kubernetes/ssl/ca-key.pem \

--etcd-cafile=/opt/kubernetes/ssl/ca.pem \

--etcd-certfile=/opt/kubernetes/ssl/kubernetes.pem \

--etcd-keyfile=/opt/kubernetes/ssl/kubernetes-key.pem \

--etcd-servers=https://10.0.0.25:2379,https://10.0.0.26:2379,https://10.0.0.27:2379 \

--enable-swagger-ui=true \

--allow-privileged=true \

--audit-log-maxage=30 \

--audit-log-maxbackup=3 \

--audit-log-maxsize=100 \

--audit-log-path=/opt/kubernetes/log/api-audit.log \

--event-ttl=1h \

--v=2 \

--logtostderr=false \

--log-dir=/opt/kubernetes/log

Restart=on-failure

RestartSec=5

Type=notify

LimitNOFILE=65536

[Install]

WantedBy=multi-user.target

1.7 Start the API Server

Do this on the master node.

[root@k8s-master ~]# systemctl daemon-reload

[root@k8s-master ~]# systemctl enable kube-apiserver

[root@k8s-master ~]# systemctl start kube-apiserver

[root@k8s-master ~]# systemctl status kube-apiserver

2. Deploy the Controller Manager

Do this on the master node.

[root@k8s-master ~]# vim /usr/lib/systemd/system/kube-controller-manager.service

[Unit]

Description=Kubernetes Controller Manager

Documentation=https://github.com/GoogleCloudPlatform/kubernetes

[Service]

ExecStart=/opt/kubernetes/bin/kube-controller-manager \

--address=127.0.0.1 \

--master=http://127.0.0.1:8080 \

--allocate-node-cidrs=true \

--service-cluster-ip-range=10.1.0.0/16 \

--cluster-cidr=10.2.0.0/16 \

--cluster-name=kubernetes \

--cluster-signing-cert-file=/opt/kubernetes/ssl/ca.pem \

--cluster-signing-key-file=/opt/kubernetes/ssl/ca-key.pem \

--service-account-private-key-file=/opt/kubernetes/ssl/ca-key.pem \

--root-ca-file=/opt/kubernetes/ssl/ca.pem \

--leader-elect=true \

--v=2 \

--logtostderr=false \

--log-dir=/opt/kubernetes/log

Restart=on-failure

RestartSec=5

[Install]

WantedBy=multi-user.target

### Start the Controller Manager

[root@k8s-master ~]# systemctl daemon-reload

[root@k8s-master ~]# systemctl enable kube-controller-manager

[root@k8s-master ~]# systemctl start kube-controller-manager

[root@k8s-master ~]# systemctl status kube-controller-manager

3. Deploy the Kubernetes Scheduler

Do this on the master node.

[root@k8s-master ~]# vim /usr/lib/systemd/system/kube-scheduler.service

[Unit]

Description=Kubernetes Scheduler

Documentation=https://github.com/GoogleCloudPlatform/kubernetes

[Service]

ExecStart=/opt/kubernetes/bin/kube-scheduler \

--address=127.0.0.1 \

--master=http://127.0.0.1:8080 \

--leader-elect=true \

--v=2 \

--logtostderr=false \

--log-dir=/opt/kubernetes/log

Restart=on-failure

RestartSec=5

[Install]

WantedBy=multi-user.target

### Start the scheduler service

[root@k8s-master ~]# systemctl daemon-reload

[root@k8s-master ~]# systemctl enable kube-scheduler

[root@k8s-master ~]# systemctl start kube-scheduler

[root@k8s-master ~]# systemctl status kube-scheduler

4. Deploy the kubectl Command-Line Tool

Do this on the master node.

### Prepare the binary

[root@k8s-master ~]# cd /usr/local/src/kubernetes/client/bin

[root@k8s-master bin]# cp kubectl /opt/kubernetes/bin/

### Create the admin certificate signing request

[root@k8s-master ~]# cd /usr/local/src/ssl/

[root@k8s-master ssl]# vim admin-csr.json

{

"CN": "admin",

"hosts": [],

"key": {

"algo": "rsa",

"size": 2048

},

"names": [

{

"C": "CN",

"ST": "BeiJing",

"L": "BeiJing",

"O": "system:masters",

"OU": "System"

}

]

}

### Generate the admin certificate and private key

[root@k8s-master ssl]# cd /usr/local/src/ssl/

[root@k8s-master ssl]# cfssl gencert -ca=/opt/kubernetes/ssl/ca.pem -ca-key=/opt/kubernetes/ssl/ca-key.pem -config=/opt/kubernetes/ssl/ca-config.json -profile=kubernetes admin-csr.json | cfssljson -bare admin

[root@k8s-master ssl]# mv admin*.pem /opt/kubernetes/ssl/

### Set the cluster parameters

[root@k8s-master ssl]# kubectl config set-cluster kubernetes \

--certificate-authority=/opt/kubernetes/ssl/ca.pem \

--embed-certs=true \

--server=https://10.0.0.25:6443

### Set the client credentials

[root@k8s-master ssl]# kubectl config set-credentials admin \

--client-certificate=/opt/kubernetes/ssl/admin.pem \

--embed-certs=true \

--client-key=/opt/kubernetes/ssl/admin-key.pem

### Set the context parameters

[root@k8s-master ssl]# kubectl config set-context kubernetes \

--cluster=kubernetes \

--user=admin

### Use kubectl

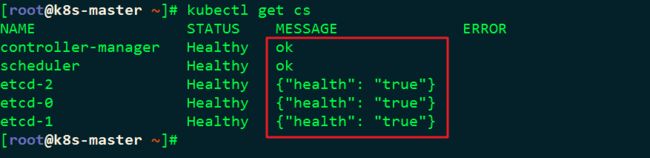

[root@k8s-master ~]# ll /root/.kube/config

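[root@k8s-master ~]# kubectl get cs

If every component is reported as healthy, the master node has been deployed successfully.

Chapter 4: Deploying the Kubernetes Nodes

4.1 kubelet Deployment

Do this on the master node.

### Prepare the binaries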

[root@k8s-master ~]# cd /usr/local/src/kubernetes/server/bin/

[root@k8s-master bin]# cp kubelet kube-proxy /opt/kubernetes/bin/

[root@k8s-master bin]# scp kubelet kube-proxy 10.0.0.26:/opt/kubernetes/bin/

[root@k8s-master bin]# scp kubelet kube-proxy 10.0.0.27:/opt/kubernetes/bin/

### Create the cluster role binding

[root@k8s-master ~]# kubectl create clusterrolebinding kubelet-bootstrap --clusterrole=system:node-bootstrapper --user=kubelet-bootstrap

### Set the cluster parameters

[root@k8s-master ~]# kubectl config set-cluster kubernetes \

--certificate-authority=/opt/kubernetes/ssl/ca.pem \

--embed-certs=true \

--server=https://10.0.0.25:6443 \

--kubeconfig=bootstrap.kubeconfig

### Set the client credentials (the token must match the one in /opt/kubernetes/ssl/bootstrap-token.csv)

[root@k8s-master ~]# kubectl config set-credentials kubelet-bootstrap \

--token=841276913ac026513f75d267f0fc5212 \

--kubeconfig=bootstrap.kubeconfig

### Set the context parameters

[root@k8s-master ~]# kubectl config set-context default \

--cluster=kubernetes \

--user=kubelet-bootstrap \

--kubeconfig=bootstrap.kubeconfig

### Select the default context

[root@k8s-master ~]# kubectl config use-context default --kubeconfig=bootstrap.kubeconfig

[root@k8s-master ~]# cp bootstrap.kubeconfig /opt/kubernetes/cfg

[root@k8s-master ~]# scp bootstrap.kubeconfig 10.0.0.26:/opt/kubernetes/cfg

[root@k8s-master ~]# scp bootstrap.kubeconfig 10.0.0.27:/opt/kubernetes/cfg

### Set up CNI support (create the directory on all three nodes)

mkdir -p /etc/cni/net.d

vim /etc/cni/net.d/10-default.conf

{

"name": "flannel",

"type": "flannel",

"delegate": {

"bridge": "docker0",

"isDefaultGateway": true,

"mtu": 1400

}

}

[root@k8s-master ~]# scp /etc/cni/net.d/10-default.conf 10.0.0.26:/etc/cni/net.d/10-default.conf

[root@k8s-master ~]# scp /etc/cni/net.d/10-default.conf 10.0.0.27:/etc/cni/net.d/10-default.conf

### Create the kubelet directory (on all three nodes)

mkdir /var/lib/kubelet

### kubelet unit file on the master node

[root@k8s-master ~]# vim /usr/lib/systemd/system/kubelet.service

[Unit]

Description=Kubernetes Kubelet

Documentation=https://github.com/GoogleCloudPlatform/kubernetes

After=docker.service

Requires=docker.service

[Service]

WorkingDirectory=/var/lib/kubelet

ExecStart=/opt/kubernetes/bin/kubelet \

--address=10.0.0.25 \

--hostname-override=10.0.0.25 \

--pod-infra-container-image=mirrorgooglecontainers/pause-amd64:3.0 \

--experimental-bootstrap-kubeconfig=/opt/kubernetes/cfg/bootstrap.kubeconfig \

--kubeconfig=/opt/kubernetes/cfg/kubelet.kubeconfig \

--cert-dir=/opt/kubernetes/ssl \

--network-plugin=cni \

--cni-conf-dir=/etc/cni/net.d \

--cni-bin-dir=/opt/kubernetes/bin/cni \

--cluster-dns=10.1.0.2 \

--cluster-domain=cluster.local. \

--hairpin-mode hairpin-veth \

--allow-privileged=true \

--fail-swap-on=false \

--logtostderr=true \

--v=2 \

--logtostderr=false \

--log-dir=/opt/kubernetes/log

Restart=on-failure

RestartSec=5

[Install]

WantedBy=multi-user.target

### kubelet unit file on node1

[root@k8s-node-1 ~]# vim /usr/lib/systemd/system/kubelet.service

[Unit]

Description=Kubernetes Kubelet

Documentation=https://github.com/GoogleCloudPlatform/kubernetes

After=docker.service

Requires=docker.service

[Service]

WorkingDirectory=/var/lib/kubelet

ExecStart=/opt/kubernetes/bin/kubelet \

--address=10.0.0.26 \

--hostname-override=10.0.0.26 \

--pod-infra-container-image=mirrorgooglecontainers/pause-amd64:3.0 \

--experimental-bootstrap-kubeconfig=/opt/kubernetes/cfg/bootstrap.kubeconfig \

--kubeconfig=/opt/kubernetes/cfg/kubelet.kubeconfig \

--cert-dir=/opt/kubernetes/ssl \

--network-plugin=cni \

--cni-conf-dir=/etc/cni/net.d \

--cni-bin-dir=/opt/kubernetes/bin/cni \

--cluster-dns=10.1.0.2 \

--cluster-domain=cluster.local. \

--hairpin-mode hairpin-veth \

--allow-privileged=true \

--fail-swap-on=false \

--logtostderr=true \

--v=2 \

--logtostderr=false \

--log-dir=/opt/kubernetes/log

Restart=on-failure

RestartSec=5

[Install]

WantedBy=multi-user.target

### kubelet unit file on node2

[root@k8s-node-2 ~]# vim /usr/lib/systemd/system/kubelet.service

[Unit]

Description=Kubernetes Kubelet

Documentation=https://github.com/GoogleCloudPlatform/kubernetes

After=docker.service

Requires=docker.service

[Service]

WorkingDirectory=/var/lib/kubelet

ExecStart=/opt/kubernetes/bin/kubelet \

--address=10.0.0.27 \

--hostname-override=10.0.0.27 \

--pod-infra-container-image=mirrorgooglecontainers/pause-amd64:3.0 \

--experimental-bootstrap-kubeconfig=/opt/kubernetes/cfg/bootstrap.kubeconfig \

--kubeconfig=/opt/kubernetes/cfg/kubelet.kubeconfig \

--cert-dir=/opt/kubernetes/ssl \

--network-plugin=cni \

--cni-conf-dir=/etc/cni/net.d \

--cni-bin-dir=/opt/kubernetes/bin/cni \

--cluster-dns=10.1.0.2 \

--cluster-domain=cluster.local. \

--hairpin-mode hairpin-veth \

--allow-privileged=true \

--fail-swap-on=false \

--logtostderr=true \

--v=2 \

--logtostderr=false \

--log-dir=/opt/kubernetes/log

Restart=on-failure

RestartSec=5

[Install]

WantedBy=multi-user.target

### Start kubelet (on all three nodes)

systemctl daemon-reload

systemctl enable kubelet

systemctl start kubelet

systemctl status kubelet

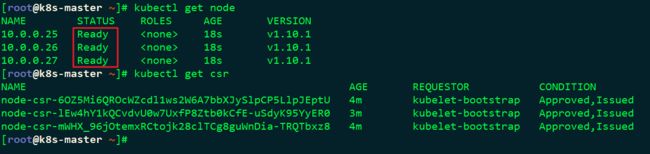

### List the certificate signing requests

kubectl get csr

### Approve the kubelet TLS certificate requests

kubectl get csr|grep 'Pending' | awk 'NR>0{print $1}'| xargs kubectl certificate approve
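The pipeline above selects request names by grepping for `Pending`. A slightly stricter variant, sketched below, skips the header row and tests only the last column, which holds the condition in `kubectl get csr` output. `pending_csr_names` is a hypothetical helper; it only filters text on standard input and does not call `kubectl` itself.

```shell
# Read `kubectl get csr` output on stdin and print the names of
# requests whose last column (CONDITION) is exactly "Pending".
pending_csr_names() {
    awk 'NR > 1 && $NF == "Pending" { print $1 }'
}

# Possible usage on the master node:
#   kubectl get csr | pending_csr_names | xargs kubectl certificate approve
```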

### Check the node status

kubectl get node

4.2 kube-proxy Deployment

### Configure kube-proxy to use LVS (IPVS)

[root@k8s-master ~]# yum install -y ipvsadm ipset conntrack

[root@k8s-node-1 ~]# yum install -y ipvsadm ipset conntrack

[root@k8s-node-2 ~]# yum install -y ipvsadm ipset conntrack

### Create the kube-proxy certificate signing request

[root@k8s-master ~]# cd /usr/local/src/ssl/

[root@k8s-master ssl]# vim kube-proxy-csr.json

{

"CN": "system:kube-proxy",

"hosts": [],

"key": {

"algo": "rsa",

"size": 2048

},

"names": [

{

"C": "CN",

"ST": "BeiJing",

"L": "BeiJing",

"O": "k8s",

"OU": "System"

}

]

}

### Generate the certificate

[root@k8s-master ssl]# cfssl gencert -ca=/opt/kubernetes/ssl/ca.pem \

-ca-key=/opt/kubernetes/ssl/ca-key.pem \

-config=/opt/kubernetes/ssl/ca-config.json \

-profile=kubernetes kube-proxy-csr.json | cfssljson -bare kube-proxy

### Distribute the certificate to all nodes

[root@k8s-master ssl]# cp kube-proxy*.pem /opt/kubernetes/ssl/

[root@k8s-master ssl]# scp kube-proxy*.pem 10.0.0.26:/opt/kubernetes/ssl/

[root@k8s-master ssl]# scp kube-proxy*.pem 10.0.0.27:/opt/kubernetes/ssl/

### Create the kube-proxy kubeconfig file (on the master node)

[root@k8s-master ssl]# kubectl config set-cluster kubernetes \

--certificate-authority=/opt/kubernetes/ssl/ca.pem \

--embed-certs=true \

--server=https://10.0.0.25:6443 \

--kubeconfig=kube-proxy.kubeconfig

[root@k8s-master ssl]# kubectl config set-credentials kube-proxy \

--client-certificate=/opt/kubernetes/ssl/kube-proxy.pem \

--client-key=/opt/kubernetes/ssl/kube-proxy-key.pem \

--embed-certs=true \

--kubeconfig=kube-proxy.kubeconfig

[root@k8s-master ssl]# kubectl config set-context default \

--cluster=kubernetes \

--user=kube-proxy \

--kubeconfig=kube-proxy.kubeconfig

[root@k8s-master ssl]# kubectl config use-context default --kubeconfig=kube-proxy.kubeconfig

[root@k8s-master ssl]# cp kube-proxy.kubeconfig /opt/kubernetes/cfg/

[root@k8s-master ssl]# scp kube-proxy.kubeconfig 10.0.0.26:/opt/kubernetes/cfg/

[root@k8s-master ssl]# scp kube-proxy.kubeconfig 10.0.0.27:/opt/kubernetes/cfg/

### Create the kube-proxy service configuration (on all three nodes)

[root@k8s-master ssl]# mkdir /var/lib/kube-proxy

[root@k8s-node-1 ~]# mkdir /var/lib/kube-proxy

[root@k8s-node-2 ~]# mkdir /var/lib/kube-proxy

## master node

[root@k8s-master ssl]# vim /usr/lib/systemd/system/kube-proxy.service

[Unit]

Description=Kubernetes Kube-Proxy Server

Documentation=https://github.com/GoogleCloudPlatform/kubernetes

After=network.target

[Service]

WorkingDirectory=/var/lib/kube-proxy

ExecStart=/opt/kubernetes/bin/kube-proxy \

--bind-address=10.0.0.25 \

--hostname-override=10.0.0.25 \

--kubeconfig=/opt/kubernetes/cfg/kube-proxy.kubeconfig \

--masquerade-all \

--feature-gates=SupportIPVSProxyMode=true \

--proxy-mode=ipvs \

--ipvs-min-sync-period=5s \

--ipvs-sync-period=5s \

--ipvs-scheduler=rr \

--v=2 \

--logtostderr=false \

--log-dir=/opt/kubernetes/log

Restart=on-failure

RestartSec=5

LimitNOFILE=65536

[Install]

WantedBy=multi-user.target

## node1

[root@k8s-node-1 ~]# vim /usr/lib/systemd/system/kube-proxy.service

[Unit]

Description=Kubernetes Kube-Proxy Server

Documentation=https://github.com/GoogleCloudPlatform/kubernetes

After=network.target

[Service]

WorkingDirectory=/var/lib/kube-proxy

ExecStart=/opt/kubernetes/bin/kube-proxy \

--bind-address=10.0.0.26 \

--hostname-override=10.0.0.26 \

--kubeconfig=/opt/kubernetes/cfg/kube-proxy.kubeconfig \

--masquerade-all \

--feature-gates=SupportIPVSProxyMode=true \

--proxy-mode=ipvs \

--ipvs-min-sync-period=5s \

--ipvs-sync-period=5s \

--ipvs-scheduler=rr \

--v=2 \

--logtostderr=false \

--log-dir=/opt/kubernetes/log

Restart=on-failure

RestartSec=5

LimitNOFILE=65536

[Install]

WantedBy=multi-user.target

## node2

[root@k8s-node-2 ~]# vim /usr/lib/systemd/system/kube-proxy.service

[Unit]

Description=Kubernetes Kube-Proxy Server

Documentation=https://github.com/GoogleCloudPlatform/kubernetes

After=network.target

[Service]

WorkingDirectory=/var/lib/kube-proxy

ExecStart=/opt/kubernetes/bin/kube-proxy \

--bind-address=10.0.0.27 \

--hostname-override=10.0.0.27 \

--kubeconfig=/opt/kubernetes/cfg/kube-proxy.kubeconfig \

--masquerade-all \

--feature-gates=SupportIPVSProxyMode=true \

--proxy-mode=ipvs \

--ipvs-min-sync-period=5s \

--ipvs-sync-period=5s \

--ipvs-scheduler=rr \

--v=2 \

--logtostderr=false \

--log-dir=/opt/kubernetes/log

Restart=on-failure

RestartSec=5

LimitNOFILE=65536

[Install]

WantedBy=multi-user.target
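The three unit files differ only in the node IP passed to `--bind-address` and `--hostname-override`. Instead of editing each one by hand, the file can be rendered from a single template. The following is a minimal sketch, assuming the same paths and flags as above; `render_kube_proxy_unit` is a hypothetical helper name, and the output goes to a temporary directory for review rather than straight into `/usr/lib/systemd/system/`. It sets `--logtostderr=false` once, since logs are written to `--log-dir`.

```shell
#!/bin/sh
# render_kube_proxy_unit NODE_IP
# Prints a kube-proxy systemd unit for the given node on stdout.
# (Hypothetical helper; flags and paths are copied from the units above.)
render_kube_proxy_unit() {
    node_ip=$1
    # Inside an unquoted here-document, "\\" yields a single backslash,
    # which systemd reads as a line continuation.
    cat <<EOF
[Unit]
Description=Kubernetes Kube-Proxy Server
Documentation=https://github.com/GoogleCloudPlatform/kubernetes
After=network.target

[Service]
WorkingDirectory=/var/lib/kube-proxy
ExecStart=/opt/kubernetes/bin/kube-proxy \\
  --bind-address=${node_ip} \\
  --hostname-override=${node_ip} \\
  --kubeconfig=/opt/kubernetes/cfg/kube-proxy.kubeconfig \\
  --masquerade-all \\
  --feature-gates=SupportIPVSProxyMode=true \\
  --proxy-mode=ipvs \\
  --ipvs-min-sync-period=5s \\
  --ipvs-sync-period=5s \\
  --ipvs-scheduler=rr \\
  --v=2 \\
  --logtostderr=false \\
  --log-dir=/opt/kubernetes/log
Restart=on-failure
RestartSec=5
LimitNOFILE=65536

[Install]
WantedBy=multi-user.target
EOF
}

# Render one file per node into a scratch directory for inspection.
outdir=$(mktemp -d)
for ip in 10.0.0.25 10.0.0.26 10.0.0.27; do
    render_kube_proxy_unit "$ip" > "$outdir/kube-proxy.service.$ip"
done
grep bind-address "$outdir"/*
```

Each rendered file can then be copied to its node as `/usr/lib/systemd/system/kube-proxy.service`.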

### Start Kubernetes Proxy (on all 3 nodes)

systemctl daemon-reload

systemctl enable kube-proxy

systemctl start kube-proxy

systemctl status kube-proxy

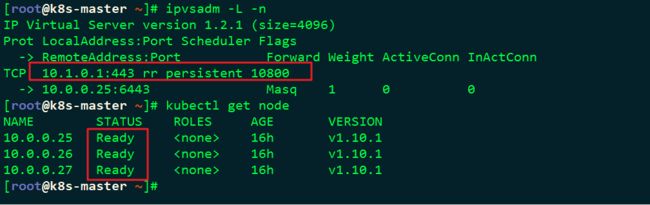

Check the LVS status and confirm that the nodes are registered:

ipvsadm -L -n

kubectl get node

Chapter 5: Flannel Network Deployment

5.1 Deploying Flannel

### Generate a certificate for Flannel

[root@k8s-master ~]# cd /usr/local/src/ssl/

[root@k8s-master ssl]# vim flanneld-csr.json

{

"CN": "flanneld",

"hosts": [],

"key": {

"algo": "rsa",

"size": 2048

},

"names": [

{

"C": "CN",

"ST": "BeiJing",

"L": "BeiJing",

"O": "k8s",

"OU": "System"

}

]

}

### Generate the certificate

[root@k8s-master ssl]# cfssl gencert -ca=/opt/kubernetes/ssl/ca.pem \

-ca-key=/opt/kubernetes/ssl/ca-key.pem \

-config=/opt/kubernetes/ssl/ca-config.json \

-profile=kubernetes flanneld-csr.json | cfssljson -bare flanneld

### Distribute the certificate

[root@k8s-master ssl]# cp flanneld*.pem /opt/kubernetes/ssl/

[root@k8s-master ssl]# scp flanneld*.pem 10.0.0.26:/opt/kubernetes/ssl/

[root@k8s-master ssl]# scp flanneld*.pem 10.0.0.27:/opt/kubernetes/ssl/

### Download the Flannel package

[root@k8s-master ~]# cd /usr/local/src

[root@k8s-master src]# wget https://github.com/coreos/flannel/releases/download/v0.10.0/flannel-v0.10.0-linux-amd64.tar.gz

[root@k8s-master src]# tar zxf flannel-v0.10.0-linux-amd64.tar.gz

[root@k8s-master src]# cp flanneld mk-docker-opts.sh /opt/kubernetes/bin/

[root@k8s-master src]# scp flanneld mk-docker-opts.sh 10.0.0.26:/opt/kubernetes/bin/

[root@k8s-master src]# scp flanneld mk-docker-opts.sh 10.0.0.27:/opt/kubernetes/bin/

### Copy the helper script to /opt/kubernetes/bin

[root@k8s-master ~]# cd /usr/local/src/kubernetes/cluster/centos/node/bin/

[root@k8s-master bin]# cp remove-docker0.sh /opt/kubernetes/bin/

[root@k8s-master bin]# scp remove-docker0.sh 10.0.0.26:/opt/kubernetes/bin/

[root@k8s-master bin]# scp remove-docker0.sh 10.0.0.27:/opt/kubernetes/bin/

### Configure Flannel

[root@k8s-master ~]# vim /opt/kubernetes/cfg/flannel

FLANNEL_ETCD="--etcd-endpoints=https://10.0.0.25:2379,https://10.0.0.26:2379,https://10.0.0.27:2379"

FLANNEL_ETCD_KEY="--etcd-prefix=/kubernetes/network"

FLANNEL_ETCD_CAFILE="--etcd-cafile=/opt/kubernetes/ssl/ca.pem"

FLANNEL_ETCD_CERTFILE="--etcd-certfile=/opt/kubernetes/ssl/flanneld.pem"

FLANNEL_ETCD_KEYFILE="--etcd-keyfile=/opt/kubernetes/ssl/flanneld-key.pem"

## Copy the configuration to the other nodes

[root@k8s-master ~]# scp /opt/kubernetes/cfg/flannel 10.0.0.26:/opt/kubernetes/cfg/

[root@k8s-master ~]# scp /opt/kubernetes/cfg/flannel 10.0.0.27:/opt/kubernetes/cfg/

### Set up the Flannel system service

[root@k8s-master ~]# vim /usr/lib/systemd/system/flannel.service

[Unit]

Description=Flanneld overlay address etcd agent

After=network.target

Before=docker.service

[Service]

EnvironmentFile=-/opt/kubernetes/cfg/flannel

ExecStartPre=/opt/kubernetes/bin/remove-docker0.sh

ExecStart=/opt/kubernetes/bin/flanneld ${FLANNEL_ETCD} ${FLANNEL_ETCD_KEY} ${FLANNEL_ETCD_CAFILE} ${FLANNEL_ETCD_CERTFILE} ${FLANNEL_ETCD_KEYFILE}

ExecStartPost=/opt/kubernetes/bin/mk-docker-opts.sh -d /run/flannel/docker

Type=notify

[Install]

WantedBy=multi-user.target

RequiredBy=docker.service

## Copy the service unit file to the other nodes

[root@k8s-master ~]# scp /usr/lib/systemd/system/flannel.service 10.0.0.26:/usr/lib/systemd/system/

[root@k8s-master ~]# scp /usr/lib/systemd/system/flannel.service 10.0.0.27:/usr/lib/systemd/system/

### Download the CNI plugins used by Flannel

[root@k8s-master ~]# mkdir -p /opt/kubernetes/bin/cni #create this directory on all 3 nodes

[root@k8s-master ~]# cd /usr/local/src/

[root@k8s-master src]# wget https://github.com/containernetworking/plugins/releases/download/v0.7.1/cni-plugins-amd64-v0.7.1.tgz

[root@k8s-master src]# tar zxf cni-plugins-amd64-v0.7.1.tgz -C /opt/kubernetes/bin/cni

[root@k8s-master src]# scp -r /opt/kubernetes/bin/cni/* 10.0.0.26:/opt/kubernetes/bin/cni/

[root@k8s-master src]# scp -r /opt/kubernetes/bin/cni/* 10.0.0.27:/opt/kubernetes/bin/cni/

### Create the etcd key

[root@k8s-master ~]# /opt/kubernetes/bin/etcdctl --ca-file /opt/kubernetes/ssl/ca.pem --cert-file /opt/kubernetes/ssl/flanneld.pem --key-file /opt/kubernetes/ssl/flanneld-key.pem \

--no-sync -C https://10.0.0.25:2379,https://10.0.0.26:2379,https://10.0.0.27:2379 \

mk /kubernetes/network/config '{ "Network": "10.2.0.0/16", "Backend": { "Type": "vxlan", "VNI": 1 }}' >/dev/null 2>&1
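The comma-separated endpoint list appears both in `/opt/kubernetes/cfg/flannel` and in the `etcdctl` call above, so it is easy for the two to drift apart. One way to avoid retyping it is to build the string from the node IPs. This is a small sketch; `etcd_endpoints` is a hypothetical helper name, and port 2379 over HTTPS is taken from the commands above.

```shell
#!/bin/sh
# etcd_endpoints IP...
# Joins the given IPs into the comma-separated HTTPS endpoint list
# that etcdctl (-C) and flanneld (etcd-endpoints) expect.
# (Hypothetical helper; port 2379 is the client port used in this guide.)
etcd_endpoints() (
    out=""
    for ip in "$@"; do
        # ${out:+$out,} adds the separator only after the first entry.
        out="${out:+$out,}https://$ip:2379"
    done
    echo "$out"
)

ENDPOINTS=$(etcd_endpoints 10.0.0.25 10.0.0.26 10.0.0.27)
echo "$ENDPOINTS"
```

Because the `mk` command above discards its output, it is worth reading the key back afterwards with the same TLS options and `get /kubernetes/network/config` in place of `mk ...` to confirm that the network definition was actually stored.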

### Start flannel (on all 3 nodes)

chmod +x /opt/kubernetes/bin/*

systemctl daemon-reload

systemctl enable flannel

systemctl start flannel

systemctl status flannel

### Configure Docker to use Flannel

[root@k8s-master ~]# vim /usr/lib/systemd/system/docker.service

#In the [Unit] section, add flannel.service to After and add a Requires line

[Unit]

After=network-online.target firewalld.service flannel.service

Requires=flannel.service

#In the [Service] section, add EnvironmentFile=-/run/flannel/docker and append $DOCKER_OPTS to ExecStart

[Service]

EnvironmentFile=-/run/flannel/docker

ExecStart=/usr/bin/dockerd $DOCKER_OPTS

### Copy the configuration to the other two nodes

[root@k8s-master ~]# scp /usr/lib/systemd/system/docker.service 10.0.0.26:/usr/lib/systemd/system/

[root@k8s-master ~]# scp /usr/lib/systemd/system/docker.service 10.0.0.27:/usr/lib/systemd/system/

### Restart Docker (on all 3 nodes)

systemctl daemon-reload

systemctl restart docker

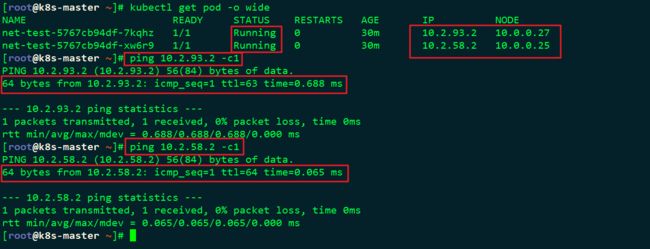

5.2 Creating a Kubernetes Application

### Create a test deployment

[root@k8s-master ~]# kubectl run net-test --image=alpine --replicas=2 sleep 360000

### Check which IP addresses the Pods received

[root@k8s-master ~]# kubectl get pod -o wide

NAME READY STATUS RESTARTS AGE IP NODE

net-test-5767cb94df-7kqhz 1/1 Running 0 30m 10.2.93.2 10.0.0.27

net-test-5767cb94df-xw6r9 1/1 Running 0 30m 10.2.58.2 10.0.0.25
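Both Pod IPs should fall inside the `10.2.0.0/16` network that was written to etcd for Flannel. If a Pod shows an address outside that range (for example a default Docker bridge address), Docker most likely did not pick up the Flannel options. The check can be scripted; the sketch below uses only POSIX shell arithmetic, and `ip_to_int` and `in_cidr` are hypothetical helper names.

```shell
#!/bin/sh
# ip_to_int A.B.C.D
# Prints the IPv4 address as a 32-bit integer.
ip_to_int() {
    IFS=. read -r o1 o2 o3 o4 <<EOF
$1
EOF
    echo $(( ($o1 << 24) + ($o2 << 16) + ($o3 << 8) + $o4 ))
}

# in_cidr IP NETWORK/PREFIX
# Succeeds (exit 0) when IP lies inside the given network.
in_cidr() (
    ip=$(ip_to_int "$1")
    net=$(ip_to_int "${2%/*}")
    bits=${2#*/}
    # Build the netmask: the top $bits bits set, the rest cleared.
    mask=$(( (4294967295 << (32 - $bits)) & 4294967295 ))
    [ $(( $ip & $mask )) -eq $(( $net & $mask )) ]
)

# Pod IPs taken from the `kubectl get pod -o wide` output above.
for pod_ip in 10.2.93.2 10.2.58.2; do
    if in_cidr "$pod_ip" 10.2.0.0/16; then
        echo "$pod_ip is inside the Flannel network"
    else
        echo "$pod_ip is OUTSIDE the Flannel network"
    fi
done
```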

### Test connectivity

[root@k8s-master ~]# ping 10.2.58.2 -c1

A reply from the Pod IP means the Pod network is reachable from the host.

### Create the nginx deployment.yaml file

[root@k8s-master ~]# vim nginx-deployment.yaml

apiVersion: apps/v1

kind: Deployment

metadata:

  name: nginx-deployment

  labels:

    app: nginx

spec:

  replicas: 3

  selector:

    matchLabels:

      app: nginx

  template:

    metadata:

      labels:

        app: nginx

    spec:

      containers:

      - name: nginx

        image: nginx:1.10.3

        ports:

        - containerPort: 80

### Create the deployment

[root@k8s-master ~]# kubectl create -f nginx-deployment.yaml

deployment.apps "nginx-deployment" created

### View the deployment

[root@k8s-master ~]# kubectl get deployment

NAME DESIRED CURRENT UP-TO-DATE AVAILABLE AGE

net-test 2 2 2 2 43m

nginx-deployment 3 3 3 0 53s

[root@k8s-master ~]# kubectl describe deployment nginx-deployment

### View the pods

[root@k8s-master ~]# kubectl get pod

NAME READY STATUS RESTARTS AGE

net-test-5767cb94df-7kqhz 1/1 Running 0 45m

net-test-5767cb94df-xw6r9 1/1 Running 0 45m

nginx-deployment-75d56bb955-8tzh6 0/1 ContainerCreating 0 2m #ContainerCreating means the container is still being created

nginx-deployment-75d56bb955-bjmg7 0/1 ContainerCreating 0 2m #ContainerCreating means the container is still being created

nginx-deployment-75d56bb955-w4dks 0/1 ContainerCreating 0 2m #ContainerCreating means the container is still being created

[root@k8s-master ~]# kubectl describe pod nginx-deployment-75d56bb955-8tzh6|tail -2

Normal SuccessfulMountVolume 5m kubelet, 10.0.0.27 MountVolume.SetUp succeeded for volume "default-token-rpdp6"

Normal Pulling 5m kubelet, 10.0.0.27 pulling image "nginx:1.10.3" #the nginx image is still being pulled

[root@k8s-master ~]# kubectl get pod -o wide

NAME READY STATUS RESTARTS AGE IP NODE

net-test-5767cb94df-7kqhz 1/1 Running 0 49m 10.2.93.2 10.0.0.27

net-test-5767cb94df-xw6r9 1/1 Running 0 49m 10.2.58.2 10.0.0.25

nginx-deployment-75d56bb955-8tzh6 0/1 ContainerCreating 0 6m <none> 10.0.0.27

nginx-deployment-75d56bb955-bjmg7 0/1 ContainerCreating 0 6m <none> 10.0.0.26

nginx-deployment-75d56bb955-w4dks 0/1 ContainerCreating 0 6m <none> 10.0.0.25

[root@k8s-master ~]# kubectl get pod -o wide

NAME READY STATUS RESTARTS AGE IP NODE

net-test-5767cb94df-7kqhz 1/1 Running 0 1h 10.2.93.2 10.0.0.27

net-test-5767cb94df-xw6r9 1/1 Running 0 1h 10.2.58.2 10.0.0.25

nginx-deployment-75d56bb955-8tzh6 1/1 Running 0 21m 10.2.93.3 10.0.0.27

nginx-deployment-75d56bb955-bjmg7 1/1 Running 0 21m 10.2.83.2 10.0.0.26

nginx-deployment-75d56bb955-w4dks 1/1 Running 0 21m 10.2.58.3 10.0.0.25
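Rather than re-running `kubectl get pod` and scanning the STATUS column by eye, the output can be filtered for Pods that are not yet `Running`. The sketch below reads `kubectl get pod` output on stdin; `not_running` is a hypothetical helper name, and the sample input is the earlier listing from this section so that the example runs without a cluster.

```shell
#!/bin/sh
# not_running
# Reads `kubectl get pod` output on stdin and prints the name and status
# of every Pod whose STATUS column is not "Running".
# (Hypothetical helper; assumes the default column order NAME READY STATUS ...)
not_running() {
    awk 'NR > 1 && $3 != "Running" { print $1, $3 }'
}

# Sample input copied from the listing taken two minutes after creation.
not_running <<'EOF'
NAME READY STATUS RESTARTS AGE
net-test-5767cb94df-7kqhz 1/1 Running 0 45m
net-test-5767cb94df-xw6r9 1/1 Running 0 45m
nginx-deployment-75d56bb955-8tzh6 0/1 ContainerCreating 0 2m
nginx-deployment-75d56bb955-bjmg7 0/1 ContainerCreating 0 2m
nginx-deployment-75d56bb955-w4dks 0/1 ContainerCreating 0 2m
EOF
```

On a live cluster the call would be `kubectl get pod | not_running`; an empty result means every Pod is running. `kubectl rollout status deployment/nginx-deployment` is another option, since it waits until the deployment has finished rolling out.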

### Test connectivity

[root@k8s-master ~]# curl -I 10.2.93.3 -s|awk 'NR==1{print$2}'

200

[root@k8s-master ~]# curl -I 10.2.83.2 -s|awk 'NR==1{print$2}'

200

[root@k8s-master ~]# curl -I 10.2.58.3 -s|awk 'NR==1{print$2}'

200

### Update the deployment

#--record stores the command in the revision history, which makes rollbacks easier to trace

[root@k8s-master ~]# kubectl set image deployment/nginx-deployment nginx=nginx:1.12.2 --record

deployment.apps "nginx-deployment" image updated

### View the updated deployment

[root@k8s-master ~]# kubectl get deployment -o wide

NAME DESIRED CURRENT UP-TO-DATE AVAILABLE AGE CONTAINERS IMAGES SELECTOR

net-test 2 2 2 2 2h net-test alpine run=net-test

nginx-deployment 3 3 3 3 1h nginx nginx:1.12.2 app=nginx

### View the rollout history

[root@k8s-master ~]# kubectl rollout history deployment/nginx-deployment

#View the details of one specific revision

[root@k8s-master ~]# kubectl rollout history deployment/nginx-deployment --revision=1

deployments "nginx-deployment" with revision #1

Pod Template:

Labels: app=nginx

pod-template-hash=3181266511

Containers:

nginx:

Image: nginx:1.10.3

Port: 80/TCP

Host Port: 0/TCP

Environment: <none>

Mounts: <none>

Volumes: <none>

### Roll back to the previous revision

[root@k8s-master ~]# kubectl rollout undo deployment/nginx-deployment

deployment.apps "nginx-deployment"

### Create the nginx service.yaml file

[root@k8s-master ~]# vim nginx-service.yaml

kind: Service

apiVersion: v1

metadata:

  name: nginx-service

spec:

  selector:

    app: nginx

  ports:

  - protocol: TCP

    port: 80

    targetPort: 80

### Create the Service

[root@k8s-master ~]# kubectl create -f nginx-service.yaml

service "nginx-service" created

### View the Service

[root@k8s-master ~]# kubectl get service

NAME TYPE CLUSTER-IP EXTERNAL-IP PORT(S) AGE

kubernetes ClusterIP 10.1.0.1 <none> 443/TCP 18h

nginx-service ClusterIP 10.1.172.14 <none> 80/TCP 12s

### Test connectivity through the Service's cluster IP

[root@k8s-master ~]# curl --head http://10.1.172.14

HTTP/1.1 200 OK

Server: nginx/1.10.3

Date: Mon, 04 Jun 2018 04:58:47 GMT

Content-Type: text/html

Content-Length: 612

Last-Modified: Tue, 31 Jan 2017 15:01:11 GMT

Connection: keep-alive

ETag: "5890a6b7-264"

Accept-Ranges: bytes

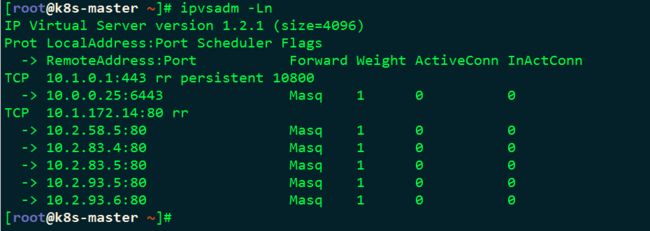

### View the virtual IPs

[root@k8s-master ~]# ipvsadm -Ln

IP Virtual Server version 1.2.1 (size=4096)

Prot LocalAddress:Port Scheduler Flags

-> RemoteAddress:Port Forward Weight ActiveConn InActConn

TCP 10.1.0.1:443 rr persistent 10800

-> 10.0.0.25:6443 Masq 1 0 0

TCP 10.1.172.14:80 rr

-> 10.2.58.5:80 Masq 1 0 0

-> 10.2.83.4:80 Masq 1 0 0

-> 10.2.93.5:80 Masq 1 0 1

### Scale out to 5 replicas

[root@k8s-master ~]# kubectl scale deployment nginx-deployment --replicas 5

deployment.extensions "nginx-deployment" scaled

[root@k8s-master ~]# kubectl get pod -o wide

NAME READY STATUS RESTARTS AGE IP NODE

net-test-5767cb94df-7kqhz 1/1 Running 0 2h 10.2.93.2 10.0.0.27

net-test-5767cb94df-xw6r9 1/1 Running 0 2h 10.2.58.2 10.0.0.25

nginx-deployment-75d56bb955-6dfxr 1/1 Running 0 17s 10.2.83.5 10.0.0.26

nginx-deployment-75d56bb955-9jmch 1/1 Running 0 17s 10.2.93.6 10.0.0.27

nginx-deployment-75d56bb955-gssxl 1/1 Running 0 7m 10.2.58.5 10.0.0.25

nginx-deployment-75d56bb955-hkqdc 1/1 Running 0 7m 10.2.93.5 10.0.0.27

nginx-deployment-75d56bb955-s66c4 1/1 Running 0 7m 10.2.83.4 10.0.0.26

[root@k8s-master ~]# ipvsadm -Ln

IP Virtual Server version 1.2.1 (size=4096)

Prot LocalAddress:Port Scheduler Flags

-> RemoteAddress:Port Forward Weight ActiveConn InActConn

TCP 10.1.0.1:443 rr persistent 10800

-> 10.0.0.25:6443 Masq 1 0 0

TCP 10.1.172.14:80 rr

-> 10.2.58.5:80 Masq 1 0 0

-> 10.2.83.4:80 Masq 1 0 0

-> 10.2.83.5:80 Masq 1 0 0

-> 10.2.93.5:80 Masq 1 0 0

-> 10.2.93.6:80 Masq 1 0 0
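After scaling, the number of real servers behind the Service's virtual IP should match the replica count. Counting the `->` lines by hand gets tedious as the table grows, so the sketch below does it with `awk`. `count_backends` is a hypothetical helper name; it reads `ipvsadm -Ln` output on stdin, and the sample input is the table shown above.

```shell
#!/bin/sh
# count_backends VIP:PORT
# Reads `ipvsadm -Ln` output on stdin and prints how many real servers
# are listed under the given virtual service.
# (Hypothetical helper for this guide.)
count_backends() {
    awk -v vs="$1" '
        # A TCP/UDP line starts a new virtual service block.
        $1 == "TCP" || $1 == "UDP" { current = $2; next }
        # "->" lines are real servers; skip the column header line.
        $1 == "->" && $2 != "RemoteAddress:Port" && current == vs { n++ }
        END { print n + 0 }
    '
}

# Sample input copied from the ipvsadm output after scaling to 5 replicas.
count_backends 10.1.172.14:80 <<'EOF'
IP Virtual Server version 1.2.1 (size=4096)
Prot LocalAddress:Port Scheduler Flags
-> RemoteAddress:Port Forward Weight ActiveConn InActConn
TCP 10.1.0.1:443 rr persistent 10800
-> 10.0.0.25:6443 Masq 1 0 0
TCP 10.1.172.14:80 rr
-> 10.2.58.5:80 Masq 1 0 0
-> 10.2.83.4:80 Masq 1 0 0
-> 10.2.83.5:80 Masq 1 0 0
-> 10.2.93.5:80 Masq 1 0 0
-> 10.2.93.6:80 Masq 1 0 0
EOF
# prints 5
```

On a node, the equivalent call would be `ipvsadm -Ln | count_backends 10.1.172.14:80`.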