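Preface: Suppose we need to analyze a 10,000-character article and find out which words occur most often. The key problem is word segmentation, that is, splitting a Chinese sentence into words. Take “北京是×××的首都”: “北京”, “×××”, “中华”, “华人”, “人民”, “共和国” and “首都” are words and should be extracted, while “京是” and “民共” are not meaningful words and should not be. Writing these segmentation rules yourself is a lot of work. The open-source IK analyzer does the job easily, and its analysis mode controls how fine-grained the segmentation is.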

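ik_max_word: the finest-grained segmentation. For example, “×××国歌” is split into “×××, 中华人民, 中华, 华人, 人民共和国, 人民, 人, 民, 共和国, 共和, 和, 国国, 国歌”, exhausting every possible combination.

ik_smart: the coarsest-grained segmentation. For example, “×××国歌” is split into “×××, 国歌”.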
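To build intuition for the two modes, here is a toy sketch. It is not IK's algorithm. The fine-grained function lists every dictionary word it can find, overlaps included. The coarse one keeps a single non-overlapping split chosen by forward maximum matching, which is only a simple stand-in for whatever IK actually does in smart mode. The mini dictionary, the sentence 南京市长江大桥 and both function names are assumptions made for illustration.

```python
# A made-up mini dictionary; IK's real dictionaries are far larger.
TOY_DICT = {"南京", "南京市", "市长", "长江", "长江大桥", "大桥"}


def max_word(text, dictionary=TOY_DICT):
    """Fine-grained: every dictionary word found at every start position (overlaps allowed)."""
    max_len = max(len(w) for w in dictionary)
    tokens = []
    for start in range(len(text)):
        # Longest candidate first, so 南京市 is listed before 南京.
        for end in range(min(len(text), start + max_len), start, -1):
            if text[start:end] in dictionary:
                tokens.append(text[start:end])
    return tokens


def smart(text, dictionary=TOY_DICT):
    """Coarse-grained: forward maximum matching, so tokens never overlap."""
    max_len = max(len(w) for w in dictionary)
    tokens, i = [], 0
    while i < len(text):
        for end in range(min(len(text), i + max_len), i, -1):
            # Take the longest dictionary word here; fall back to one character.
            if text[i:end] in dictionary or end == i + 1:
                tokens.append(text[i:end])
                i = end
                break
    return tokens


print(max_word("南京市长江大桥"))  # ['南京市', '南京', '市长', '长江大桥', '长江', '大桥']
print(smart("南京市长江大桥"))     # ['南京市', '长江大桥']
```

The fine-grained output gives a search engine more terms to match against. The coarse output is closer to what a human reader would count as "the words" of the sentence.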

I: Prepare the environment

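If you already have an ES environment, you can skip the first two steps. This walkthrough assumes you have only a freshly installed CentOS 6.x system, so that you can run through the whole process.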

(1) Install the JDK.

```shell
$ wget http://download.oracle.com/otn-pub/java/jdk/8u111-b14/jdk-8u111-linux-x64.rpm
$ rpm -ivh jdk-8u111-linux-x64.rpm
```

(2) Install ES

```shell
$ wget https://download.elastic.co/elasticsearch/release/org/elasticsearch/distribution/rpm/elasticsearch/2.4.2/elasticsearch-2.4.2.rpm
$ rpm -iv elasticsearch-2.4.2.rpm
```

(3) Install the IK analyzer

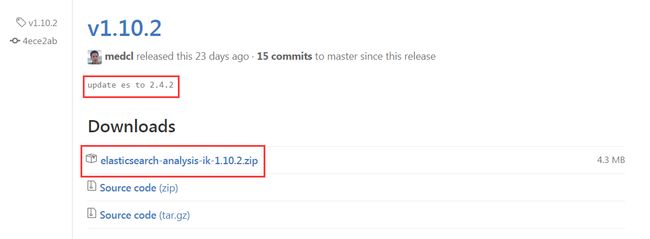

Download IK 1.10.2 from GitHub. Note: the ES version here is 2.4.2, and the compatible IK version is 1.10.2.

```shell
$ mkdir /usr/share/elasticsearch/plugins/ik
$ wget https://github.com/medcl/elasticsearch-analysis-ik/releases/download/v1.10.2/elasticsearch-analysis-ik-1.10.2.zip
$ unzip elasticsearch-analysis-ik-1.10.2.zip -d /usr/share/elasticsearch/plugins/ik
```

(4) Configure ES

```shell
$ vim /etc/elasticsearch/elasticsearch.yml
```

```yaml
###### Cluster ######
cluster.name: test
###### Node ######
node.name: test-10.10.10.10
node.master: true
node.data: true
###### Index ######
index.number_of_shards: 5
index.number_of_replicas: 0
###### Path ######
path.data: /data/elk/es    # must exist and be writable by the elasticsearch user
path.logs: /var/log/elasticsearch
path.plugins: /usr/share/elasticsearch/plugins
###### Refresh ######
index.refresh_interval: 5s    # index-level setting, so it takes the index. prefix
###### Memory ######
bootstrap.mlockall: true
###### Network ######
network.publish_host: 10.10.10.10
network.bind_host: 0.0.0.0
transport.tcp.port: 9300
###### Http ######
http.enabled: true
http.port: 9200
###### IK ######
index.analysis.analyzer.ik.alias: [ik_analyzer]
index.analysis.analyzer.ik.type: ik
index.analysis.analyzer.ik_max_word.type: ik
index.analysis.analyzer.ik_max_word.use_smart: false
index.analysis.analyzer.ik_smart.type: ik
index.analysis.analyzer.ik_smart.use_smart: true
index.analysis.analyzer.default.type: ik
```

(5) Start ES

```shell
$ /etc/init.d/elasticsearch start
```

(6) Check the ES node status

```shell
$ curl 'localhost:9200/_cat/nodes?v'    # one node, up and running
host        ip          heap.percent ram.percent load node.role master name
10.10.10.10 10.10.10.10           16          52 0.00 d         *      test-10.10.10.10
$ curl 'localhost:9200/_cat/health?v'   # cluster status is green
epoch      timestamp cluster status node.total node.data shards pri relo init
1483672233 11:10:33  test    green           1         1      0   0    0    0
```
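If you script the setup, the `_cat` tables above are easy to check programmatically. The minimal sketch below assumes no column value contains a space, which holds for both outputs shown. The function names are hypothetical. You pass it the text printed by `curl 'localhost:9200/_cat/health?v'`.

```python
SAMPLE_HEALTH = """\
epoch      timestamp cluster status node.total node.data shards pri relo init
1483672233 11:10:33  test    green           1         1      0   0    0    0
"""


def parse_cat_table(text):
    """Turn `_cat/...?v` output (a header row plus whitespace-separated rows) into dicts."""
    rows = [line.split() for line in text.strip().splitlines() if line.strip()]
    if not rows:
        return []
    header, body = rows[0], rows[1:]
    return [dict(zip(header, row)) for row in body]


def cluster_is_green(health_text):
    """True when `_cat/health?v` output has at least one row and every row reports green."""
    rows = parse_cat_table(health_text)
    return bool(rows) and all(row.get("status") == "green" for row in rows)


health = parse_cat_table(SAMPLE_HEALTH)[0]
print(health["cluster"], health["status"], health["node.total"])  # test green 1
print(cluster_is_green(SAMPLE_HEALTH))                              # True
```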

II: Test the analyzer

(1) Create a test index

```shell
$ curl -XPUT http://localhost:9200/test
```

(2) Create the mapping

```shell
$ curl -XPOST http://localhost:9200/test/fulltext/_mapping -d'
{
  "fulltext": {
    "_all": {
      "analyzer": "ik"
    },
    "properties": {
      "content": {
        "type": "string",
        "boost": 8.0,
        "term_vector": "with_positions_offsets",
        "analyzer": "ik",
        "include_in_all": true
      }
    }
  }
}'
```
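The same request can be issued from a program. The minimal sketch below uses Python's standard library and assumes an ES 2.x node on localhost:9200. The helper names `ik_string_field`, `mapping_body` and `put_mapping` are hypothetical. Only the body construction runs here; the HTTP call is left commented out.

```python
import json
import urllib.request


def ik_string_field(analyzer="ik", boost=8.0):
    """Field definition mirroring the `content` field above (ES 2.x `string` type)."""
    return {
        "type": "string",
        "boost": boost,
        "term_vector": "with_positions_offsets",
        "analyzer": analyzer,
        "include_in_all": True,
    }


def mapping_body(doc_type, fields, all_analyzer="ik"):
    """Body for POST /<index>/<doc_type>/_mapping."""
    return {doc_type: {"_all": {"analyzer": all_analyzer}, "properties": fields}}


def put_mapping(base_url, index, doc_type, body):
    """Send the mapping; needs a reachable ES 2.x node."""
    req = urllib.request.Request(
        f"{base_url}/{index}/{doc_type}/_mapping",
        data=json.dumps(body).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )
    with urllib.request.urlopen(req) as resp:
        return json.loads(resp.read().decode("utf-8"))


body = mapping_body("fulltext", {"content": ik_string_field()})
print(json.dumps(body, indent=2))
# put_mapping("http://localhost:9200", "test", "fulltext", body)
```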

(3) Analyze a test sentence against the test index created above

```shell
$ curl 'http://localhost:9200/test/_analyze?analyzer=ik&pretty=true' -d '{ "text":"美国留给伊拉克的是个烂摊子吗" }'
```

Response:

```json
{
  "tokens" : [
    { "token" : "美国", "start_offset" : 0, "end_offset" : 2, "type" : "CN_WORD", "position" : 0 },
    { "token" : "留给", "start_offset" : 2, "end_offset" : 4, "type" : "CN_WORD", "position" : 1 },
    { "token" : "伊拉克", "start_offset" : 4, "end_offset" : 7, "type" : "CN_WORD", "position" : 2 },
    { "token" : "伊", "start_offset" : 4, "end_offset" : 5, "type" : "CN_WORD", "position" : 3 },
    { "token" : "拉", "start_offset" : 5, "end_offset" : 6, "type" : "CN_CHAR", "position" : 4 },
    { "token" : "克", "start_offset" : 6, "end_offset" : 7, "type" : "CN_WORD", "position" : 5 },
    { "token" : "个", "start_offset" : 9, "end_offset" : 10, "type" : "CN_CHAR", "position" : 6 },
    { "token" : "烂摊子", "start_offset" : 10, "end_offset" : 13, "type" : "CN_WORD", "position" : 7 },
    { "token" : "摊子", "start_offset" : 11, "end_offset" : 13, "type" : "CN_WORD", "position" : 8 },
    { "token" : "摊", "start_offset" : 11, "end_offset" : 12, "type" : "CN_WORD", "position" : 9 },
    { "token" : "子", "start_offset" : 12, "end_offset" : 13, "type" : "CN_CHAR", "position" : 10 },
    { "token" : "吗", "start_offset" : 13, "end_offset" : 14, "type" : "CN_CHAR", "position" : 11 }
  ]
}
```
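This response shows three things:

- The offsets are character positions in the input, so 伊拉克 spans 4 to 7.
- The ik analyzer used here returns overlapping tokens such as 伊拉克, 伊, 拉 and 克.
- Some characters get no token at all.

The small sketch below checks the offsets and lists the characters that no token covers. It assumes the response has been parsed into a dict, for example with `json.loads`. The helper names are hypothetical.

```python
TEXT = "美国留给伊拉克的是个烂摊子吗"

# (token, start_offset, end_offset) for every token in the response above.
TOKENS = [
    ("美国", 0, 2), ("留给", 2, 4), ("伊拉克", 4, 7), ("伊", 4, 5),
    ("拉", 5, 6), ("克", 6, 7), ("个", 9, 10), ("烂摊子", 10, 13),
    ("摊子", 11, 13), ("摊", 11, 12), ("子", 12, 13), ("吗", 13, 14),
]
# Same shape as the parsed _analyze JSON (other fields omitted).
RESPONSE = {"tokens": [{"token": t, "start_offset": s, "end_offset": e} for t, s, e in TOKENS]}


def mismatched(text, response):
    """Tokens whose [start_offset, end_offset) slice of the text is not the token itself."""
    return [t["token"] for t in response["tokens"]
            if text[t["start_offset"]:t["end_offset"]] != t["token"]]


def uncovered_chars(text, response):
    """Characters of the input that no token covers."""
    covered = set()
    for t in response["tokens"]:
        covered.update(range(t["start_offset"], t["end_offset"]))
    return [ch for i, ch in enumerate(text) if i not in covered]


print(mismatched(TEXT, RESPONSE))       # []
print(uncovered_chars(TEXT, RESPONSE))  # ['的', '是']
```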

III: Import the real data

(1) Upload the Chinese text file to the Linux server.

```shell
$ cat /tmp/zhongwen.txt
京津冀重污染天气持续 督查发现有企业恶意生产
《孤芳不自赏》被指“抠像演戏” 制片人:特效不到位
奥巴马不顾特朗普反对坚持外迁关塔那摩监狱囚犯
. . . .
韩媒:日本叫停韩日货币互换磋商 韩财政部表遗憾
中国百万年薪须交40多万个税 精英无奈出国发展
```

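Note: make sure the text file is UTF-8 encoded. Otherwise the text will be garbled once it reaches ES.

```shell
$ vim /tmp/zhongwen.txt
```

In vim, type `:set fileencoding` to display the file's encoding, for example `fileencoding=utf-8`.

If it shows `fileencoding=utf-16le`, type `:set fileencoding=utf-8` and then save the file with `:wq`.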
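The same check and conversion can be scripted. The minimal Python sketch below rewrites the file as UTF-8 in place using only the standard library. Detecting UTF-16 by its byte-order mark is a heuristic assumption, not what vim does internally. `to_utf8` is a hypothetical helper.

```python
import codecs
import tempfile
from pathlib import Path


def to_utf8(path, source_encoding=None):
    """Rewrite a text file as UTF-8 in place and return the encoding it was read with.

    With source_encoding=None, a UTF-16 byte-order mark selects "utf-16";
    anything else is read as "utf-8-sig" (UTF-8, dropping a UTF-8 BOM if present).
    Pass source_encoding="utf-16-le" explicitly for UTF-16LE without a BOM.
    """
    p = Path(path)
    raw = p.read_bytes()
    if source_encoding is None:
        if raw.startswith((codecs.BOM_UTF16_LE, codecs.BOM_UTF16_BE)):
            source_encoding = "utf-16"  # the BOM tells the decoder the byte order
        else:
            source_encoding = "utf-8-sig"
    text = raw.decode(source_encoding)  # raises UnicodeDecodeError on a wrong guess
    p.write_bytes(text.encode("utf-8"))
    return source_encoding


# Demo on a temporary UTF-16 file (Python's "utf-16" encoder writes a BOM).
with tempfile.TemporaryDirectory() as tmp:
    sample = Path(tmp) / "zhongwen.txt"
    sample.write_bytes("京津冀重污染天气持续\n".encode("utf-16"))
    detected = to_utf8(sample)
    converted = sample.read_text(encoding="utf-8")
print(detected, converted.strip())
```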

(2) Create the index and mapping

Create the index:

```shell
$ curl -XPUT http://localhost:9200/index
```

Create the mapping. The `message` field holds the text to be analyzed, so it gets the IK analyzer and a fielddata setting. The terms aggregations in part IV read this field's fielddata. `paged_bytes` is the default in-memory fielddata format for analyzed `string` fields in ES 2.x.

```shell
$ curl -XPOST http://localhost:9200/index/logs/_mapping -d '
{
  "logs": {
    "_all": {
      "analyzer": "ik"
    },
    "properties": {
      "path": { "type": "string" },
      "@timestamp": {
        "format": "strict_date_optional_time||epoch_millis",
        "type": "date"
      },
      "@version": { "type": "string" },
      "host": { "type": "string" },
      "message": {
        "include_in_all": true,
        "analyzer": "ik",
        "term_vector": "with_positions_offsets",
        "boost": 8,
        "type": "string",
        "fielddata": { "format": "paged_bytes" }
      },
      "tags": { "type": "string" }
    }
  }
}'
```

(3) Use Logstash to write the text file into ES

Install Logstash: download the Logstash 2.1.1 RPM from the past-releases page on elastic.co, then install it:

```shell
$ rpm -ivh logstash-2.1.1.rpm    # adjust to the exact file name you downloaded
```

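Configure Logstash:

```shell
$ vim /etc/logstash/conf.d/logstash.conf
```

```
input {
  file {
    # The file is plain text, not JSON. With codec => 'json' every line fails to
    # parse and is tagged _jsonparsefailure (the raw line still ends up in message).
    codec => plain { charset => "UTF-8" }
    path => "/tmp/zhongwen.txt"
    start_position => "beginning"
  }
}

output {
  elasticsearch {
    hosts => "10.10.10.10:9200"
    index => "index"
    flush_size => 3000
    idle_flush_time => 2
    workers => 4
  }
  stdout { codec => rubydebug }
}
```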
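In essence, this pipeline reads each line, wraps it as a document with a `message` field, and indexes it into `index`/`logs`. Without Logstash, you could build a comparable payload for the `_bulk` API in Python. The sketch below is one way to do that. `bulk_body` is a hypothetical helper. Unlike Logstash, it does not add the `@timestamp`, `host` or `path` fields.

```python
import json


def bulk_body(lines, index="index", doc_type="logs"):
    """Build a _bulk payload: an action line plus a source line per non-empty input line."""
    out = []
    for line in lines:
        line = line.strip()
        if not line:
            continue  # nothing to index for blank lines
        out.append(json.dumps({"index": {"_index": index, "_type": doc_type}}))
        out.append(json.dumps({"message": line}, ensure_ascii=False))
    # _bulk expects newline-delimited JSON that ends with a newline.
    return "\n".join(out) + "\n" if out else ""


payload = bulk_body([
    "京津冀重污染天气持续 督查发现有企业恶意生产",
    "",
    "韩媒:日本叫停韩日货币互换磋商 韩财政部表遗憾",
])
print(payload, end="")
```

Save the payload as a UTF-8 file (the name `bulk.json` is arbitrary) and send it with `curl -XPOST 'http://localhost:9200/_bulk' --data-binary @bulk.json`. Use `--data-binary` because it keeps the newlines that `-d` would strip.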

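Start Logstash:

```shell
$ /etc/init.d/logstash start
```

Watch the stdout log. The rubydebug output prints every event, which shows whether data is being written to ES.

```shell
$ tail -f /var/log/logstash/logstash.stdout
```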

(4) Check that the index contains data

```shell
$ curl 'localhost:9200/_cat/indices/index?v'    # 6007 documents
health status index pri rep docs.count docs.deleted store.size pri.store.size
green  open   index   5   0       6007            0      2.5mb          2.5mb
```

```shell
$ curl -XPOST "http://localhost:9200/index/_search?pretty"
```

```json
{
  "took" : 1,
  "timed_out" : false,
  "_shards" : { "total" : 5, "successful" : 5, "failed" : 0 },
  "hits" : {
    "total" : 5227,
    "max_score" : 1.0,
    "hits" : [ {
      "_index" : "index",
      "_type" : "logs",
      "_id" : "AVluC7Dpbw7ZlXPmUTSG",
      "_score" : 1.0,
      "_source" : {
        "message" : "中国百万年薪须交40多万个税 精英无奈出国发展",
        "tags" : [ "_jsonparsefailure" ],
        "@version" : "1",
        "@timestamp" : "2017-01-05T09:52:56.150Z",
        "host" : "0.0.0.0",
        "path" : "/tmp/333.log"
      }
    }, {
      "_index" : "index",
      "_type" : "logs",
      "_id" : "AVluC7Dpbw7ZlXPmUTSN",
      "_score" : 1.0,
      "_source" : {
        "message" : "奥巴马不顾特朗普反对坚持外迁关塔那摩监狱囚犯",
        "tags" : [ "_jsonparsefailure" ],
        "@version" : "1",
        "@timestamp" : "2017-01-05T09:52:56.222Z",
        "host" : "0.0.0.0",
        "path" : "/tmp/333.log"
      }
    }
```

IV: Count and rank term frequencies

(1) The 10 most frequent terms overall

```shell
$ curl -XGET "http://localhost:9200/index/_search?pretty" -d'
{
  "size" : 0,
  "aggs" : {
    "messages" : {
      "terms" : {
        "size" : 10,
        "field" : "message"
      }
    }
  }
}'
```
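The three queries in this part differ only in the `include` and `exclude` options of the terms aggregation. A small sketch can build those bodies and read the buckets back. The helper names `top_terms_query` and `top_terms` are hypothetical, and sending the body to `/index/_search` is left to curl or any HTTP client.

```python
import json


def top_terms_query(field, size=10, include=None, exclude=None):
    """Body for a size-0 search whose terms aggregation ("messages") returns the top terms of `field`."""
    terms = {"field": field, "size": size}
    if include is not None:
        terms["include"] = include  # regular expression the whole term must match
    if exclude is not None:
        terms["exclude"] = exclude  # terms fully matching this are dropped
    return {"size": 0, "aggs": {"messages": {"terms": terms}}}


def top_terms(response, agg_name="messages"):
    """Pull (term, doc_count) pairs out of a parsed search response."""
    buckets = response["aggregations"][agg_name]["buckets"]
    return [(b["key"], b["doc_count"]) for b in buckets]


# Same body as the curl request above.
print(json.dumps(top_terms_query("message"), indent=2))

# First two buckets of the response shown below, trimmed to what top_terms reads.
sample = {"aggregations": {"messages": {"buckets": [
    {"key": "一", "doc_count": 1582},
    {"key": "后", "doc_count": 560},
]}}}
print(top_terms(sample))  # [('一', 1582), ('后', 560)]
```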

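Response. Each bucket's `doc_count` is the number of documents (here, lines of the file) that contain the term, not the total number of times the term occurs: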

```json
{
  "took" : 3,
  "timed_out" : false,
  "_shards" : { "total" : 5, "successful" : 5, "failed" : 0 },
  "hits" : { "total" : 6007, "max_score" : 0.0, "hits" : [ ] },
  "aggregations" : {
    "messages" : {
      "doc_count_error_upper_bound" : 154,
      "sum_other_doc_count" : 94992,
      "buckets" : [
        { "key" : "一", "doc_count" : 1582 },
        { "key" : "后", "doc_count" : 560 },
        { "key" : "人", "doc_count" : 541 },
        { "key" : "家", "doc_count" : 538 },
        { "key" : "出", "doc_count" : 489 },
        { "key" : "发", "doc_count" : 451 },
        { "key" : "个", "doc_count" : 440 },
        { "key" : "州", "doc_count" : 421 },
        { "key" : "岁", "doc_count" : 405 },
        { "key" : "子", "doc_count" : 402 }
      ]
    }
  }
}
```
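A terms aggregation counts documents, not occurrences. A term that appears twice in one line adds only 1 to its `doc_count`. Because each line of the file is one document here, the ranking approximates word frequency but is not exactly that. The client-side sketch below shows the difference with made-up token lists. `term_stats` is a hypothetical helper.

```python
from collections import Counter


def term_stats(analyzed_docs):
    """Count terms two ways for a list of token lists (one list per document).

    doc_freq: number of documents containing the term (what a terms
    aggregation reports as doc_count); total_freq: every occurrence.
    """
    doc_freq, total_freq = Counter(), Counter()
    for tokens in analyzed_docs:
        total_freq.update(tokens)
        doc_freq.update(set(tokens))  # a document counts once per term
    return doc_freq, total_freq


# Hypothetical token lists for three one-line documents.
docs = [["上海", "男子", "上海"], ["上海", "女子"], ["男子"]]
doc_freq, total_freq = term_stats(docs)
print(doc_freq["上海"], total_freq["上海"])  # 2 3
```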

(2) The 10 most frequent two-character words

```shell
$ curl -XGET "http://localhost:9200/index/_search?pretty" -d'
{
  "size" : 0,
  "aggs" : {
    "messages" : {
      "terms" : {
        "size" : 10,
        "field" : "message",
        "include" : "[\u4E00-\u9FA5][\u4E00-\u9FA5]"
      }
    }
  },
  "highlight": {
    "fields": {
      "message": {}
    }
  }
}'
```
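The `include` pattern must match the whole term. That means `[\u4E00-\u9FA5][\u4E00-\u9FA5]` keeps exactly the terms made of two characters in the range U+4E00 to U+9FA5 (common CJK ideographs). It drops single characters, longer words, digits and Latin tokens. In the sketch below, Python's `re.fullmatch` stands in for Lucene's regex engine, which behaves the same for this simple pattern. The term list is made up.

```python
import re

# Same character class as the "include" pattern above.
TWO_HAN = re.compile("[\u4e00-\u9fa5][\u4e00-\u9fa5]")


def keep_two_char_words(terms):
    """Emulate the include filter: the pattern must match the entire term."""
    return [t for t in terms if TWO_HAN.fullmatch(t)]


print(keep_two_char_words(["一", "女子", "烂摊子", "40", "上海", "ik"]))  # ['女子', '上海']
```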

Response:

```json
{
  "took" : 22,
  "timed_out" : false,
  "_shards" : { "total" : 5, "successful" : 5, "failed" : 0 },
  "hits" : { "total" : 6007, "max_score" : 0.0, "hits" : [ ] },
  "aggregations" : {
    "messages" : {
      "doc_count_error_upper_bound" : 73,
      "sum_other_doc_count" : 42415,
      "buckets" : [
        { "key" : "女子", "doc_count" : 291 },
        { "key" : "男子", "doc_count" : 264 },
        { "key" : "竟然", "doc_count" : 257 },
        { "key" : "上海", "doc_count" : 255 },
        { "key" : "这个", "doc_count" : 238 },
        { "key" : "女孩", "doc_count" : 174 },
        { "key" : "这些", "doc_count" : 167 },
        { "key" : "一个", "doc_count" : 159 },
        { "key" : "注意", "doc_count" : 143 },
        { "key" : "这样", "doc_count" : 142 }
      ]
    }
  }
}
```

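(3) The 10 most frequent two-character words, excluding words that start with “女”. The exclude pattern must match the whole term, so use `".*女.*"` instead to exclude every word that contains 女.

```shell
$ curl -XGET "http://localhost:9200/index/_search?pretty" -d'
{
  "size" : 0,
  "aggs" : {
    "messages" : {
      "terms" : {
        "size" : 10,
        "field" : "message",
        "include" : "[\u4E00-\u9FA5][\u4E00-\u9FA5]",
        "exclude" : "女.*"
      }
    }
  },
  "highlight": {
    "fields": {
      "message": {}
    }
  }
}'
```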
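Because the exclude pattern must also match the whole term, `"女.*"` only removes words that start with 女. A word such as 妇女 would still be counted. The emulation below shows the difference. Python's `re.fullmatch` stands in for Lucene's regex engine, which behaves the same for these simple patterns. The term list and the `apply_filters` helper are hypothetical.

```python
import re


def apply_filters(terms, include, exclude=None):
    """Keep terms that fully match `include` and do not fully match `exclude`."""
    inc = re.compile(include)
    exc = re.compile(exclude) if exclude else None
    return [t for t in terms
            if inc.fullmatch(t) and not (exc and exc.fullmatch(t))]


TWO_HAN = "[\u4e00-\u9fa5][\u4e00-\u9fa5]"
terms = ["女子", "女孩", "妇女", "男子", "上海"]
print(apply_filters(terms, TWO_HAN, "女.*"))    # ['妇女', '男子', '上海']
print(apply_filters(terms, TWO_HAN, ".*女.*"))  # ['男子', '上海']
```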

Response:

```json
{
  "took" : 19,
  "timed_out" : false,
  "_shards" : { "total" : 5, "successful" : 5, "failed" : 0 },
  "hits" : { "total" : 5227, "max_score" : 0.0, "hits" : [ ] },
  "aggregations" : {
    "messages" : {
      "doc_count_error_upper_bound" : 71,
      "sum_other_doc_count" : 41773,
      "buckets" : [
        { "key" : "男子", "doc_count" : 264 },
        { "key" : "竟然", "doc_count" : 257 },
        { "key" : "上海", "doc_count" : 255 },
        { "key" : "这个", "doc_count" : 238 },
        { "key" : "这些", "doc_count" : 167 },
        { "key" : "一个", "doc_count" : 159 },
        { "key" : "注意", "doc_count" : 143 },
        { "key" : "这样", "doc_count" : 142 },
        { "key" : "重庆", "doc_count" : 142 },
        { "key" : "结果", "doc_count" : 137 }
      ]
    }
  }
}
```

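There are more analysis strategies as well. One is synonyms: make “番茄” and “西红柿” (two words for tomato) synonyms, so that a search for “番茄” also returns documents containing “西红柿”. Another is pinyin analysis: a search for “zhonghua” also finds “中华”.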