I. Introduction to replica sets (repl set)

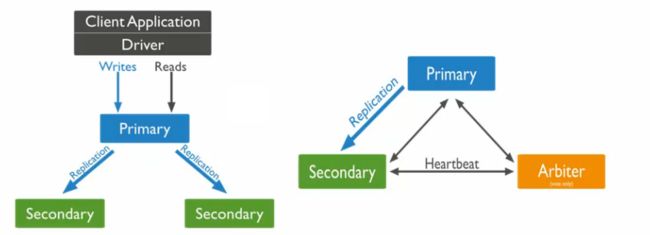

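Early versions of MongoDB used a master-slave mode: one master and one slave, much like MySQL. The slave in that architecture was read-only, and when the master went down the slave could not take over automatically. Master-slave mode has since been retired in favor of replica sets, which have one primary and several read-only secondaries. Each member can be given a priority (weight); when the primary goes down, the eligible secondary with the highest priority is elected as the new primary.

A replica set can also include an arbiter, a member that only votes in elections and stores no data. In this architecture all reads and writes go to the primary. The advantage is that roles switch automatically. The drawback is that a client pointed at the old primary's IP address is not redirected to the new primary by itself; it has to be switched by hand to keep the service available. We can, of course, write a monitoring script that switches the application to the IP of the current primary automatically.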
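To see why an arbiter can be worth adding, it helps to count votes: a primary is only elected when a strict majority of the voting members can reach each other. The following is a minimal sketch in plain JavaScript (Node.js), assuming every member, arbiter included, carries exactly one vote; `votesNeeded` and `canElectPrimary` are names made up for this illustration, not MongoDB functions.

```javascript
// Sketch: how many votes are needed to elect a primary.
// Assumption: every member (data node or arbiter) has exactly one vote.
function votesNeeded(votingMembers) {
  // A strict majority: more than half of all voting members.
  return Math.floor(votingMembers / 2) + 1;
}

// True when the members that can still reach each other form a majority.
function canElectPrimary(votingMembers, reachableMembers) {
  return reachableMembers >= votesNeeded(votingMembers);
}

// Two data nodes, one of them down: no majority, no new primary.
console.log(canElectPrimary(2, 1)); // false
// Two data nodes plus one arbiter, one data node down: majority holds.
console.log(canElectPrimary(3, 2)); // true
```

With only two members, losing either one blocks the election, which is the gap the arbiter fills without storing a third copy of the data.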

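One last point: to use a replica set, the MongoDB instances that will become secondaries must contain no data. Otherwise rs.initiate() fails and reports that a member already has data (has data already, cannot initiate set).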

II. Replica set architecture

1. Main workflow

2. Election (arbitration) mechanism

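III. Building the replica set

1. Preparation

Prepare the following three machines:

Primary: 192.168.0.103

Secondary (secondary1): 192.168.0.109

Secondary (secondary2): 192.168.0.120

Install MongoDB on all three machines. For the detailed installation steps, see my previous article on installing MongoDB: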

http://msiyuetian.blog.51cto.com/8637744/1720559

2. Edit the configuration file

1) Make the following change to the configuration file on all three machines

# vim /etc/mongod.conf

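Find:

#replication:

Change it to:

    replication:
      oplogSizeMB: 20
      replSetName: tpp

Notes: oplogSizeMB is the size of the oplog in MB; replSetName is the name of the replica set. Mind the format: the two settings are indented by two spaces, and each colon is followed by one space.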
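Because the layout matters here, a quick check can catch a block that is still commented out or indented the wrong way before mongod is restarted. The following is a minimal sketch in plain JavaScript (Node.js), not part of MongoDB; `parseReplicationBlock` is a hypothetical helper that only understands the two-space layout described above and returns every value as a string.

```javascript
// Sketch: read the replication settings out of the config file text.
// Assumption: child keys use exactly two leading spaces, as described above.
function parseReplicationBlock(confText) {
  const lines = confText.split("\n");
  const start = lines.indexOf("replication:");
  if (start === -1) {
    // Covers a missing block and a block still commented out as "#replication:".
    throw new Error("no uncommented 'replication:' line found");
  }
  const settings = {};
  for (let i = start + 1; i < lines.length; i++) {
    // "  key: value" with two leading spaces and one space after the colon.
    const match = /^  (\w+): (\S.*)$/.exec(lines[i]);
    if (!match) break; // the first line that is not a child key ends the block
    settings[match[1]] = match[2]; // values are kept as strings
  }
  return settings;
}

const sampleConf = "replication:\n  oplogSizeMB: 20\n  replSetName: tpp\n";
console.log(parseReplicationBlock(sampleConf));
```

An empty result means the header was found but no correctly indented setting followed it, which is the usual symptom of tabs or a wrong indent width.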

2) Then restart MongoDB on all three machines

# /etc/init.d/mongod restart

If the restart fails, start the service with mongod -f /etc/mongod.conf instead.

3) Connect to the primary

[root@primary ~]# mongo

MongoDB shell version: 3.0.7

connecting to: test

> use admin

switched to db admin

> config={_id:"tpp",members:[{_id:0,host:"192.168.0.103:27017"},{_id:1,host:"192.168.0.109:27017"},{_id:2,host:"192.168.0.120:27017"}]}

{ "_id" : "tpp", "members" : [ { "_id" : 0, "host" : "192.168.0.103:27017" }, { "_id" : 1, "host" : "192.168.0.109:27017" }, { "_id" : 2, "host" : "192.168.0.120:27017" } ] }

> rs.initiate(config) // initialize the replica set

{ "ok" : 1 }
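The config document passed to rs.initiate() is an ordinary JavaScript object, so it can be generated from a list of addresses instead of being typed by hand. The following is a minimal sketch in plain JavaScript (Node.js); `buildReplSetConfig` is a name invented for this illustration, and it assumes every member listens on the same port.

```javascript
// Sketch: build the document that rs.initiate() expects.
// Assumption: all members use the same port (27017 unless given).
function buildReplSetConfig(setName, addresses, port = 27017) {
  return {
    _id: setName, // must match replSetName in the configuration file
    members: addresses.map((address, index) => ({
      _id: index, // member ids only need to be unique within the set
      host: address + ":" + port,
    })),
  };
}

const sampleInitConfig = buildReplSetConfig("tpp", [
  "192.168.0.103",
  "192.168.0.109",
  "192.168.0.120",
]);
console.log(JSON.stringify(sampleInitConfig, null, 2));
```

The resulting object has the same shape as the `config` variable typed into the shell above.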

tpp:OTHER> rs.status() // check the status

    {
      "set" : "tpp",
      "date" : ISODate("2015-12-12T23:19:04.253Z"),
      "myState" : 1,
      "members" : [
        { "_id" : 0, "name" : "192.168.0.103:27017", "health" : 1, "state" : 1, "stateStr" : "PRIMARY", "uptime" : 10034, "optime" : Timestamp(1449961495, 1), "optimeDate" : ISODate("2015-12-12T23:04:55Z"), "electionTime" : Timestamp(1449961497, 1), "electionDate" : ISODate("2015-12-12T23:04:57Z"), "configVersion" : 1, "self" : true },
        { "_id" : 1, "name" : "192.168.0.109:27017", "health" : 1, "state" : 2, "stateStr" : "SECONDARY", "uptime" : 848, "optime" : Timestamp(1449961495, 1), "optimeDate" : ISODate("2015-12-12T23:04:55Z"), "lastHeartbeat" : ISODate("2015-12-12T23:19:04.073Z"), "lastHeartbeatRecv" : ISODate("2015-12-12T23:19:03.796Z"), "pingMs" : 0, "configVersion" : 1 },
        { "_id" : 2, "name" : "192.168.0.120:27017", "health" : 1, "state" : 2, "stateStr" : "SECONDARY", "uptime" : 848, "optime" : Timestamp(1449961495, 1), "optimeDate" : ISODate("2015-12-12T23:04:55Z"), "lastHeartbeat" : ISODate("2015-12-12T23:19:04.073Z"), "lastHeartbeatRecv" : ISODate("2015-12-12T23:19:03.831Z"), "pingMs" : 0, "configVersion" : 1 }
      ],
      "ok" : 1
    }

Note: both secondaries should report "stateStr" : "SECONDARY". If they report "STARTUP" instead, run the following:

    > var config={_id:"tpp",members:[{_id:0,host:"192.168.0.103:27017"},{_id:1,host:"192.168.0.109:27017"},{_id:2,host:"192.168.0.120:27017"}]}
    > rs.initiate(config)

Seeing the information above means the configuration succeeded. The prompt on the primary also changes to tpp:PRIMARY

tpp:PRIMARY>

while on both secondaries it changes to tpp:SECONDARY

[root@secondary1 ~]# mongo

MongoDB shell version: 3.0.7

connecting to: test

tpp:SECONDARY>
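Reading the full rs.status() output by eye gets tedious, so the check described above (one PRIMARY, everything else SECONDARY) can be reduced to a few lines. The following is a minimal sketch in plain JavaScript (Node.js) that works on an object shaped like the rs.status() result; `summarizeStatus` and `isFullyUp` are hypothetical helper names, and the sample input keeps only the two fields the helpers read.

```javascript
// Sketch: condense an rs.status()-shaped object into roles.
function summarizeStatus(status) {
  const summary = { primary: null, secondaries: [], others: [] };
  for (const member of status.members) {
    if (member.stateStr === "PRIMARY") {
      summary.primary = member.name;
    } else if (member.stateStr === "SECONDARY") {
      summary.secondaries.push(member.name);
    } else {
      // STARTUP, unreachable members, and so on end up here.
      summary.others.push(member.name + " is " + member.stateStr);
    }
  }
  return summary;
}

// True when there is a primary and no member is in any other state.
function isFullyUp(status) {
  const summary = summarizeStatus(status);
  return summary.primary !== null && summary.others.length === 0;
}

// Trimmed copy of the status shown earlier in this section.
const sampleStatus = {
  members: [
    { name: "192.168.0.103:27017", stateStr: "PRIMARY" },
    { name: "192.168.0.109:27017", stateStr: "SECONDARY" },
    { name: "192.168.0.120:27017", stateStr: "SECONDARY" },
  ],
};
console.log(summarizeStatus(sampleStatus));
console.log(isFullyUp(sampleStatus)); // true
```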

IV. Testing the replica set

Test 1: Data synchronization

1) Create a database and a collection on the primary

tpp:PRIMARY> use mydb

switched to db mydb

tpp:PRIMARY> db.createCollection('test1')

{ "ok" : 1 }

tpp:PRIMARY> show dbs

    local  0.078GB
    mydb   0.078GB

2) Check on a secondary

tpp:SECONDARY> show dbs // fails, because secondary nodes are not readable by default

    2015-12-13T07:44:46.256+0800 E QUERY Error: listDatabases failed:{ "note" : "from execCommand", "ok" : 0, "errmsg" : "not master" }
        at Error (
        at Mongo.getDBs (src/mongo/shell/mongo.js:47:15)
        at shellHelper.show (src/mongo/shell/utils.js:630:33)
        at shellHelper (src/mongo/shell/utils.js:524:36)
        at (shellhelp2):1:1
        at src/mongo/shell/mongo.js:47

tpp:SECONDARY> rs.slaveOk() // after this command, queries are allowed

tpp:SECONDARY> show dbs

    local  0.078GB
    mydb   0.078GB

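Note: the command above only applies to the current shell session. The next time we enter the mongo shell the query fails again, so the command can be put into ~/.mongorc.js, which the mongo shell reads every time it starts:

[root@secondary2 ~]# vi ~/.mongorc.js // add the following line

rs.slaveOk();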

[root@secondary2 ~]# service mongod restart

[root@secondary2 ~]# mongo

MongoDB shell version: 3.0.7

connecting to: test

tpp:SECONDARY> show dbs

    local  0.078GB
    mydb   0.078GB
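rs.slaveOk() is the helper available in the 3.0.7 shell used here. To my knowledge, later mongo shells offer the same switch under the name rs.secondaryOk(), so a startup file shared between versions may want to call whichever one exists. The following is a minimal sketch in plain JavaScript; `allowSecondaryReads` is a hypothetical wrapper, and the object passed to it below is a stand-in for the shell's real `rs` helper so that the snippet also runs under Node.js.

```javascript
// Sketch: enable reads on a secondary with whichever helper the shell has.
// `rsHelper` stands for the shell's global `rs` object.
function allowSecondaryReads(rsHelper) {
  if (typeof rsHelper.secondaryOk === "function") {
    rsHelper.secondaryOk(); // assumed name in newer shells
    return "secondaryOk";
  }
  rsHelper.slaveOk(); // the helper used in this article (3.0.7)
  return "slaveOk";
}

// Stand-in for the 3.0.7 shell, which only has slaveOk().
const recordedCalls = [];
const oldShellRs = {
  slaveOk: function () {
    recordedCalls.push("slaveOk");
  },
};
console.log(allowSecondaryReads(oldShellRs)); // slaveOk
```

In ~/.mongorc.js the call would be `allowSecondaryReads(rs);`, with `rs` being the shell's own object rather than the stand-in.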

Test 2: Simulate a primary failure so that a secondary becomes primary

1) View the priority of every machine from the primary

tpp:PRIMARY> rs.config()

    {
      "_id" : "tpp",
      "version" : 1,
      "members" : [
        { "_id" : 0, "host" : "192.168.0.103:27017", "arbiterOnly" : false, "buildIndexes" : true, "hidden" : false, "priority" : 1, "tags" : { }, "slaveDelay" : 0, "votes" : 1 },
        { "_id" : 1, "host" : "192.168.0.109:27017", "arbiterOnly" : false, "buildIndexes" : true, "hidden" : false, "priority" : 1, "tags" : { }, "slaveDelay" : 0, "votes" : 1 },
        { "_id" : 2, "host" : "192.168.0.120:27017", "arbiterOnly" : false, "buildIndexes" : true, "hidden" : false, "priority" : 1, "tags" : { }, "slaveDelay" : 0, "votes" : 1 }
      ],
      "settings" : { "chainingAllowed" : true, "heartbeatTimeoutSecs" : 10, "getLastErrorModes" : { }, "getLastErrorDefaults" : { "w" : 1, "wtimeout" : 0 } }
    }

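Note: by default every machine has a priority of 1. If any member is given a higher priority than the others, that machine takes over the primary role almost immediately, so we preset the priorities of all three machines.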
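The effect of priorities can be pictured as a simple filter: among the members that are reachable and allowed to become primary, the ones with the highest priority are preferred. The following is a minimal sketch in plain JavaScript (Node.js) and a deliberate simplification: it ignores how up to date each member's data is and whether a majority of votes is available. `topPriorityHosts` and the `reachable` field are made up for this illustration; `host`, `priority`, and `arbiterOnly` mirror the fields in the rs.config() output.

```javascript
// Sketch: which members are preferred as primary, judged by priority alone.
// Simplification: data freshness and vote majority are not modelled.
function topPriorityHosts(members) {
  const eligible = members.filter(
    // Arbiters hold no data and priority-0 members never become primary.
    (m) => m.reachable && !m.arbiterOnly && m.priority > 0
  );
  if (eligible.length === 0) return [];
  const top = Math.max(...eligible.map((m) => m.priority));
  return eligible.filter((m) => m.priority === top).map((m) => m.host);
}

// Illustrative priorities 3 / 2 / 1 with the first member unreachable.
const samplePriorityMembers = [
  { host: "192.168.0.103:27017", priority: 3, arbiterOnly: false, reachable: false },
  { host: "192.168.0.109:27017", priority: 2, arbiterOnly: false, reachable: true },
  { host: "192.168.0.120:27017", priority: 1, arbiterOnly: false, reachable: true },
];
console.log(topPriorityHosts(samplePriorityMembers)); // [ '192.168.0.109:27017' ]
```

When every member keeps the default priority of 1, the function returns all reachable members, which reflects that priority alone does not single out a winner in that case.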

2) Set the priorities

Preset the priority of the primary (0.103) to 3, of the secondary (0.109) to 2, and of the secondary (0.120) to 1 (the default value, so it does not need to be assigned again).

Run the following commands on the primary:

tpp:PRIMARY> cfg=rs.config() // load the current configuration into cfg

tpp:PRIMARY> cfg.members[0].priority = 3

3

tpp:PRIMARY> cfg.members[1].priority = 2

2

tpp:PRIMARY> rs.reconfig(cfg) // apply the new configuration

{ "ok" : 1 }

tpp:PRIMARY> rs.config() // check again: the priorities have changed

    {
      "_id" : "tpp",
      "version" : 2,
      "members" : [
        { "_id" : 0, "host" : "192.168.0.103:27017", "arbiterOnly" : false, "buildIndexes" : true, "hidden" : false, "priority" : 3, "tags" : { }, "slaveDelay" : 0, "votes" : 1 },
        { "_id" : 1, "host" : "192.168.0.109:27017", "arbiterOnly" : false, "buildIndexes" : true, "hidden" : false, "priority" : 2, "tags" : { }, "slaveDelay" : 0, "votes" : 1 },
        { "_id" : 2, "host" : "192.168.0.120:27017", "arbiterOnly" : false, "buildIndexes" : true, "hidden" : false, "priority" : 1, "tags" : { }, "slaveDelay" : 0, "votes" : 1 }
      ],
      "settings" : { "chainingAllowed" : true, "heartbeatTimeoutSecs" : 10, "getLastErrorModes" : { }, "getLastErrorDefaults" : { "w" : 1, "wtimeout" : 0 } }
    }
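One detail in the commands above is easy to miss: `cfg.members[0]` addresses a member by its position in the array, not by its `_id`, and the two only coincide here because the set was created in that order. The following is a minimal sketch in plain JavaScript (Node.js) that assigns priorities by host name instead; `withPriorities` is a hypothetical helper, and it returns a modified copy rather than changing the object it is given.

```javascript
// Sketch: return a copy of a replica set config with new priorities,
// looked up by host so that array order does not matter.
function withPriorities(cfg, priorityByHost) {
  const members = cfg.members.map((member) => {
    const hasOverride = Object.prototype.hasOwnProperty.call(
      priorityByHost,
      member.host
    );
    return hasOverride
      ? Object.assign({}, member, { priority: priorityByHost[member.host] })
      : Object.assign({}, member); // untouched members keep their priority
  });
  return Object.assign({}, cfg, { members: members });
}

// Trimmed copy of the configuration before the change.
const sampleCurrentConfig = {
  _id: "tpp",
  version: 1,
  members: [
    { _id: 0, host: "192.168.0.103:27017", priority: 1 },
    { _id: 1, host: "192.168.0.109:27017", priority: 1 },
    { _id: 2, host: "192.168.0.120:27017", priority: 1 },
  ],
};
const sampleNewConfig = withPriorities(sampleCurrentConfig, {
  "192.168.0.103:27017": 3,
  "192.168.0.109:27017": 2,
});
console.log(sampleNewConfig.members.map((m) => m.priority)); // [ 3, 2, 1 ]
```

The sketch leaves `version` alone; in the session above the value went from 1 to 2 after rs.reconfig(cfg) without being set by hand.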

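3) Block access to the mongod service on the primary to simulate a primary failure

[root@primary ~]# iptables -I INPUT -p tcp --dport 27017 -j DROP

After running the command above, press Enter on the higher-priority secondary (0.109); its role changes from SECONDARY to PRIMARY right away: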

tpp:SECONDARY>

tpp:PRIMARY>

tpp:PRIMARY> rs.status() // check the status

    {
      "set" : "tpp",
      "date" : ISODate("2015-12-13T00:29:11.161Z"),
      "myState" : 1,
      "members" : [
        { "_id" : 0, "name" : "192.168.0.103:27017", "health" : 0, "state" : 8, "stateStr" : "(not reachable/healthy)", "uptime" : 0, "optime" : Timestamp(0, 0), "optimeDate" : ISODate("1970-01-01T00:00:00Z"), "lastHeartbeat" : ISODate("2015-12-13T00:29:07.659Z"), "lastHeartbeatRecv" : ISODate("2015-12-13T00:29:09.708Z"), "pingMs" : 0, "lastHeartbeatMessage" : "Failed attempt to connect to 192.168.0.103:27017; couldn't connect to server 192.168.0.103:27017 (192.168.0.103), connection attempt failed", "configVersion" : -1 },
        { "_id" : 1, "name" : "192.168.0.109:27017", "health" : 1, "state" : 1, "stateStr" : "PRIMARY", "uptime" : 5165, "optime" : Timestamp(1449965758, 1), "optimeDate" : ISODate("2015-12-13T00:15:58Z"), "electionTime" : Timestamp(1449966238, 1), "electionDate" : ISODate("2015-12-13T00:23:58Z"), "configVersion" : 2, "self" : true },
        { "_id" : 2, "name" : "192.168.0.120:27017", "health" : 1, "state" : 2, "stateStr" : "SECONDARY", "uptime" : 5053, "optime" : Timestamp(1449965758, 1), "optimeDate" : ISODate("2015-12-13T00:15:58Z"), "lastHeartbeat" : ISODate("2015-12-13T00:29:09.922Z"), "lastHeartbeatRecv" : ISODate("2015-12-13T00:29:09.980Z"), "pingMs" : 0, "configVersion" : 2 }
      ],
      "ok" : 1
    }

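Note: the output above shows that the original primary (0.103) is now unhealthy (unreachable), the higher-priority secondary (0.109) has become PRIMARY, and the lower-priority secondary keeps its role. For users to reach the new primary, however, a client that targets one fixed server still has to be pointed at the new one by hand.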
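One way to avoid hard-coding a single server is to give the client every member plus the set name in a standard MongoDB connection string; drivers that understand the `replicaSet` option use that list to find whichever member is currently primary. The following is a minimal sketch in plain JavaScript (Node.js) that only builds the string. `replicaSetUri` is a name invented here, and whether this removes the manual step depends on the driver in use, which this article does not cover.

```javascript
// Sketch: build a connection string that lists all members of the set.
// Assumption: no authentication and no extra options are needed.
function replicaSetUri(hosts, setName, database) {
  return (
    "mongodb://" +
    hosts.join(",") +
    "/" +
    database +
    "?replicaSet=" +
    encodeURIComponent(setName)
  );
}

const sampleUri = replicaSetUri(
  ["192.168.0.103:27017", "192.168.0.109:27017", "192.168.0.120:27017"],
  "tpp",
  "mydb"
);
console.log(sampleUri);
// mongodb://192.168.0.103:27017,192.168.0.109:27017,192.168.0.120:27017/mydb?replicaSet=tpp
```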

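Test 3: After the original primary recovers, is the data written to the new primary synchronized?

1) Create a database and a collection on the new primary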

tpp:PRIMARY> use mydb2

switched to db mydb2

tpp:PRIMARY> db.createCollection('test2')

{ "ok" : 1 }

tpp:PRIMARY> show dbs

    local  0.078GB
    mydb   0.078GB
    mydb2  0.078GB

2) Delete the firewall rule to bring the original primary back

[root@primary ~]# iptables -D INPUT -p tcp --dport 27017 -j DROP

Press Enter on the new primary: its role changes from PRIMARY back to SECONDARY, and the following messages are printed:

tpp:PRIMARY>

    2015-12-13T08:58:37.143+0800 I NETWORK DBClientCursor::init call() failed
    2015-12-13T08:58:37.145+0800 I NETWORK trying reconnect to 127.0.0.1:27017 (127.0.0.1) failed
    2015-12-13T08:58:37.146+0800 I NETWORK reconnect 127.0.0.1:27017 (127.0.0.1) ok

tpp:SECONDARY>

3) Log in to the original primary and view its databases

[root@primary ~]# mongo

MongoDB shell version: 3.0.7

connecting to: test

tpp:PRIMARY> show dbs

    local  0.078GB
    mydb   0.078GB
    mydb2  0.078GB

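Summary: as shown above, once the higher-priority original primary is running again it takes back the PRIMARY role, and the data written while the other node was primary has been synchronized to it as well. In other words, the replica set failed over and recovered automatically without losing the new writes.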
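The comparison done by eye in this test, checking that the recovered node lists the same databases as the node that took the writes, can also be scripted. The following is a minimal sketch in plain JavaScript (Node.js) that works on the text printed by `show dbs`; `databaseNames` and `missingDatabases` are hypothetical helper names, and comparing names only says that a database exists on both sides, not that every document has arrived.

```javascript
// Sketch: extract database names from "show dbs" output (one "name size" per line).
function databaseNames(showDbsOutput) {
  return showDbsOutput
    .split("\n")
    .map((line) => line.trim())
    .filter((line) => line.length > 0)
    .map((line) => line.split(/\s+/)[0]) // first column is the name
    .sort();
}

// Names present in the reference listing but absent from the other one.
function missingDatabases(referenceOutput, otherOutput) {
  const other = new Set(databaseNames(otherOutput));
  return databaseNames(referenceOutput).filter((name) => !other.has(name));
}

const listingOnNewPrimary = "local 0.078GB\nmydb 0.078GB\nmydb2 0.078GB";
const listingOnOldPrimary = "local 0.078GB\nmydb 0.078GB\nmydb2 0.078GB";
console.log(missingDatabases(listingOnNewPrimary, listingOnOldPrimary)); // []
```

An empty array corresponds to the result observed above: mydb2, created while 0.109 was primary, is present on 0.103 after it rejoined.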