Preparation:

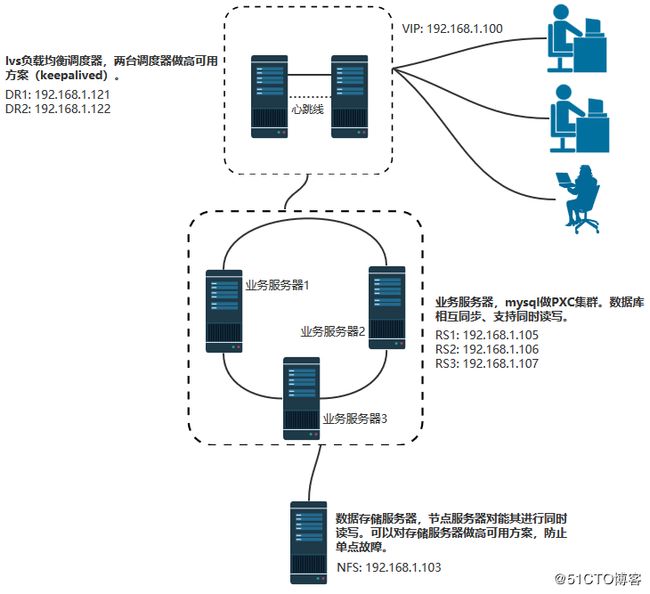

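NFS server: hostname nfsserver, IP 192.168.1.103, stores the business system's data.

node1: hostname PXC01, IP 192.168.1.105, runs PXC and the business system.

node2: hostname PXC02, IP 192.168.1.106, runs PXC and the business system.

node3: hostname PXC03, IP 192.168.1.107, runs PXC and the business system.

LVS server: hostname lvsserver, IP 192.168.1.121, VIP 192.168.1.100, runs LVS for load balancing.

Operating system on all hosts: CentOS 6.9, 64-bit.

Note 1: Chapter V covers two LVS servers made highly available with keepalived.

Note 2: For the principles behind the tools used in this plan, please consult online resources.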

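Architecture diagram:

I. Install the business system and configure MySQL load balancing (PXC approach)

---------------------------------- Preface ----------------------------------

The goals are:

1. Every MySQL node holds exactly the same data.

2. The failure of any single MySQL node does not affect service.

3. Every node serves reads and writes at the same time.

4. The design must allow for later expansion, for example adding a node should be easy.

5. Complex situations are handled fully automatically, with no manual intervention.

After considering and trying many options, PXC was chosen. The other options fell short for these reasons:

(1) Master-slave replication: does not meet the requirements, because anything written on a slave is not replicated back to the master.

(2) Master-master replication: not suitable for production, and adding a third node is cumbersome.

(3) Extensions of master-slave replication (MMM, MHA): do not meet the requirements. Reads are load balanced, but only one server accepts writes.

(4) MySQL on shared storage or DRBD: not feasible. The nodes cannot serve at the same time, so this only provides HA.

(5) MySQL Cluster: in a sense it only supports the NDB storage engine (sharded tables must be converted to NDB, unsharded ones need not be), it does not support foreign keys, and it uses a lot of disk and memory. On restart, loading the data into memory takes a long time. Deployment and management are complex.

(6) MySQL Fabric: offers two main features, MySQL HA and sharding (for example, splitting a multi-TB table so that each server stores part of it). It does not fit the environment needed here.

---------------------------------- Preface ----------------------------------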

1. Environment preparation (node1, node2, node3)

node1 192.168.1.105 PXC01 centos 6.9 mini

node2 192.168.1.106 PXC02 centos 6.9 mini

node3 192.168.1.107 PXC03 centos 6.9 mini

2. Disable the firewall and SELinux (node1, node2, node3)

[root@localhost ~]# /etc/init.d/iptables stop

[root@localhost ~]# chkconfig iptables off

[root@localhost ~]# setenforce 0

[root@localhost ~]# cat /etc/sysconfig/selinux

# This file controls the state of SELinux on the system.

# SELINUX= can take one of these three values:

# enforcing - SELinux security policy is enforced.

# permissive - SELinux prints warnings instead of enforcing.

# disabled - SELinux is fully disabled.

SELINUX=disabled

# SELINUXTYPE= type of policy in use. Possible values are:

# targeted - Only targeted network daemons are protected.

# strict - Full SELinux protection.

SELINUXTYPE=targeted

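3. Install the business system and upgrade it to the latest version (node1, node2, node3)

4. Give every table in the business system a primary key (node1)

Query to list tables without a primary key: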

select t1.table_schema,t1.table_name from information_schema.tables t1

left outer join

information_schema.TABLE_CONSTRAINTS t2

on t1.table_schema = t2.TABLE_SCHEMA and t1.table_name = t2.TABLE_NAME and t2.CONSTRAINT_NAME in

('PRIMARY')

where t2.table_name is null and t1.TABLE_SCHEMA not in ('information_schema','performance_schema','test','mysql', 'sys');

Query to list tables that have a primary key:

select t1.table_schema,t1.table_name from information_schema.tables t1

left outer join

information_schema.TABLE_CONSTRAINTS t2

on t1.table_schema = t2.TABLE_SCHEMA and t1.table_name = t2.TABLE_NAME and t2.CONSTRAINT_NAME in

('PRIMARY')

where t2.table_name is not null and t1.TABLE_SCHEMA not in ('information_schema','performance_schema','test','mysql', 'sys');

Add a primary key using the following template (node1)

ALTER TABLE `table_name` ADD `id` int(11) NOT NULL auto_increment FIRST, ADD primary key(id);
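When many tables lack a primary key, the template above can be expanded mechanically. The following is a minimal sketch, not part of the original procedure: `gen_pk_sql` is a hypothetical helper that reads table names (one per line) and only prints SQL. It assumes none of the tables already has a column named `id`, so review the output before running it.

```shell
#!/bin/bash
# gen_pk_sql (hypothetical helper): read table names on stdin, one per line,
# and print one ALTER TABLE statement per table using the template above.
# It only prints SQL; it never connects to the database.
gen_pk_sql() {
    local table
    while IFS= read -r table || [ -n "$table" ]; do
        # Skip blank lines in the input list
        if [ -z "$table" ]; then
            continue
        fi
        printf 'ALTER TABLE `%s` ADD `id` int(11) NOT NULL auto_increment FIRST, ADD primary key(id);\n' "$table"
    done
}

# Example: print statements for two (made-up) table names
# printf 'orders\nusers\n' | gen_pk_sql
```

Feeding it the table_name column from the "tables without a primary key" query gives a script that can be inspected and then piped into mysql.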

5. Convert the business system's non-InnoDB tables to InnoDB (node1)

List the tables that use the MyISAM engine:

[root@PXC01 ~]# mysql -u oa -pPASSWORD -e "show table status from oa where Engine='MyISAM';"

[root@PXC01 ~]# mysql -u oa -pPASSWORD -e "show table status from oa where Engine='MyISAM';" |awk '{print $1}' |sed 1d>mysqlchange

Stop the other services that use MySQL:

[root@PXC01 ~]# for i in service_name1 service_name2;do /etc/init.d/$i stop;done

Run the script that converts the tables to InnoDB:

[root@PXC01 ~]# cat mysqlchange_innodb.sh

#! /bin/bash

cat mysqlchange | while read LINE

do

tablename=$(echo $LINE |awk '{print $1}')

echo "Converting $tablename to the InnoDB engine"

mysql -u oa -p`密码 | grep -w pass | awk -F"= " '{print $NF}'` oa -e "alter table $tablename engine=innodb;"

done

Verify:

[root@PXC01 ~]# mysql -u oa -p`密码 | grep -w pass | awk -F"= " '{print $NF}'` -e "show table status from oa where Engine='MyISAM';"

[root@PXC01 ~]# mysql -u oa -p`密码 | grep -w pass | awk -F"= " '{print $NF}'` -e "show table status from oa where Engine='innoDB';"

6. Back up the database (node1)

[root@PXC01 ~]# mysqldump -u oa -p`密码 | grep -w pass | awk -F"= " '{print $NF}'` --databases oa |gzip>20180524.sql.gz

[root@PXC01 ~]# ll

total 44

-rw-r--r-- 1 root root 24423 May 22 16:55 20180524.sql.gz

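7. Change the MySQL bundled with the business system to a different port, stop the service, and disable it at boot (node1, node2, node3)

8. Install PXC with yum and configure it

Base installation (node1, node2, node3)

[root@percona1 ~]# yum -y groupinstall "Base" "Compatibility libraries" "Debugging Tools" "Dial-up Networking Support" "Hardware monitoring utilities" "Performance Tools" "Development tools"

Component installation (node1, node2, node3)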

[root@percona1 ~]# yum install http://www.percona.com/downloads/percona-release/redhat/0.1-3/percona-release-0.1-3.noarch.rpm -y

[root@percona1 ~]# yum localinstall http://dl.fedoraproject.org/pub/epel/6/x86_64/epel-release-6-8.noarch.rpm

[root@percona1 ~]# yum install socat libev -y

[root@percona1 ~]# yum install Percona-XtraDB-Cluster-55 -y

node1 configuration:

[root@PXC01 ~]# vi /etc/my.cnf

# My business system requires port 6033

[client]

port=6033

# My business system requires port 6033

[mysqld]

datadir=/var/lib/mysql

user=mysql

port=6033

# Path to Galera library

wsrep_provider=/usr/lib64/libgalera_smm.so

# Cluster connection URL contains the IPs of node#1, node#2 and node#3

wsrep_cluster_address=gcomm://192.168.1.105,192.168.1.106,192.168.1.107

# In order for Galera to work correctly binlog format should be ROW

binlog_format=ROW

# MyISAM storage engine has only experimental support

default_storage_engine=InnoDB

# This changes how InnoDB autoincrement locks are managed and is a requirement for Galera

innodb_autoinc_lock_mode=2

# Node #1 address

wsrep_node_address=192.168.1.105

# SST method

wsrep_sst_method=xtrabackup-v2

# Cluster name

wsrep_cluster_name=my_centos_cluster

# Authentication for SST method

wsrep_sst_auth="sstuser:s3cret"

Start MySQL on node1 with the following command:

[root@PXC01 mysql]# /etc/init.d/mysql bootstrap-pxc

Bootstrapping PXC (Percona XtraDB Cluster) ERROR! MySQL (Percona XtraDB Cluster) is not running, but lock file (/var/lock/subsys/mysql) exists

Starting MySQL (Percona XtraDB Cluster)... SUCCESS!

On CentOS 7 the start command is:

[root@percona1 ~]# systemctl start mysql@bootstrap.service

To restart, kill the process first, delete the pid file, and then run the start command above.

Check the service on node1:

[root@PXC01 ~]# /etc/init.d/mysql status

SUCCESS! MySQL (Percona XtraDB Cluster) running (7712)

Add /etc/init.d/mysql bootstrap-pxc to rc.local.

Open the MySQL console on node1:

[root@PXC01 ~]# mysql

Welcome to the MySQL monitor. Commands end with ; or \g.

Your MySQL connection id is 3

Server version: 5.5.41-37.0-55 Percona XtraDB Cluster (GPL), Release rel37.0, Revision 855, WSREP version 25.12, wsrep_25.12.r4027

Copyright (c) 2009-2014 Percona LLC and/or its affiliates

Copyright (c) 2000, 2014, Oracle and/or its affiliates. All rights reserved.

Oracle is a registered trademark of Oracle Corporation and/or its

affiliates. Other names may be trademarks of their respective

owners.

Type 'help;' or '\h' for help. Type '\c' to clear the current input statement.

mysql> show status like 'wsrep%';

+----------------------------+--------------------------------------+

| Variable_name | Value |

+----------------------------+--------------------------------------+

| wsrep_local_state_uuid | 1ab083fc-5c46-11e8-a7b7-76a002f2b5c8 |

| wsrep_protocol_version | 4 |

| wsrep_last_committed | 0 |

| wsrep_replicated | 0 |

| wsrep_replicated_bytes | 0 |

| wsrep_received | 2 |

| wsrep_received_bytes | 134 |

| wsrep_local_commits | 0 |

| wsrep_local_cert_failures | 0 |

| wsrep_local_replays | 0 |

| wsrep_local_send_queue | 0 |

| wsrep_local_send_queue_avg | 0.000000 |

| wsrep_local_recv_queue | 0 |

| wsrep_local_recv_queue_avg | 0.000000 |

| wsrep_flow_control_paused | 0.000000 |

| wsrep_flow_control_sent | 0 |

| wsrep_flow_control_recv | 0 |

| wsrep_cert_deps_distance | 0.000000 |

| wsrep_apply_oooe | 0.000000 |

| wsrep_apply_oool | 0.000000 |

| wsrep_apply_window | 0.000000 |

| wsrep_commit_oooe | 0.000000 |

| wsrep_commit_oool | 0.000000 |

| wsrep_commit_window | 0.000000 |

| wsrep_local_state | 4 |

| wsrep_local_state_comment | Synced |

| wsrep_cert_index_size | 0 |

| wsrep_causal_reads | 0 |

| wsrep_incoming_addresses | 192.168.1.105:3306 |

| wsrep_cluster_conf_id | 1 |

| wsrep_cluster_size | 1 |

| wsrep_cluster_state_uuid | 1ab083fc-5c46-11e8-a7b7-76a002f2b5c8 |

| wsrep_cluster_status | Primary |

| wsrep_connected | ON |

| wsrep_local_bf_aborts | 0 |

| wsrep_local_index | 0 |

| wsrep_provider_name | Galera |

| wsrep_provider_vendor | Codership Oy |

| wsrep_provider_version | 2.12(r318911d) |

| wsrep_ready | ON |

| wsrep_thread_count | 2 |

+----------------------------+--------------------------------------+

41 rows in set (0.00 sec)

Set the database root password on node1

mysql> UPDATE mysql.user SET password=PASSWORD("Passw0rd") where user='root';

Create and grant privileges to the SST (state transfer) account on node1

mysql> CREATE USER 'sstuser'@'localhost' IDENTIFIED BY 's3cret';

mysql> GRANT RELOAD, LOCK TABLES, REPLICATION CLIENT ON *.* TO 'sstuser'@'localhost';

mysql> FLUSH PRIVILEGES;

Check the configured cluster addresses on node1

mysql> SHOW VARIABLES LIKE 'wsrep_cluster_address';

+-----------------------+---------------------------------------------------+

| Variable_name | Value |

+-----------------------+---------------------------------------------------+

| wsrep_cluster_address | gcomm://192.168.1.105,192.168.1.106,192.168.1.107 |

+-----------------------+---------------------------------------------------+

1 row in set (0.00 sec)

Check on node1 that wsrep is ready

mysql> show status like 'wsrep_ready';

+---------------+-------+

| Variable_name | Value |

+---------------+-------+

| wsrep_ready | ON |

+---------------+-------+

1 row in set (0.00 sec)

Check the number of cluster members on node1

mysql> show status like 'wsrep_cluster_size';

+--------------------+-------+

| Variable_name | Value |

+--------------------+-------+

| wsrep_cluster_size | 1 |

+--------------------+-------+

1 row in set (0.00 sec)

View the wsrep status variables on node1

mysql> show status like 'wsrep%';

+----------------------------+--------------------------------------+

| Variable_name | Value |

+----------------------------+--------------------------------------+

| wsrep_local_state_uuid | 1ab083fc-5c46-11e8-a7b7-76a002f2b5c8 |

| wsrep_protocol_version | 4 |

| wsrep_last_committed | 2 |

| wsrep_replicated | 2 |

| wsrep_replicated_bytes | 405 |

| wsrep_received | 2 |

| wsrep_received_bytes | 134 |

| wsrep_local_commits | 0 |

| wsrep_local_cert_failures | 0 |

| wsrep_local_replays | 0 |

| wsrep_local_send_queue | 0 |

| wsrep_local_send_queue_avg | 0.000000 |

| wsrep_local_recv_queue | 0 |

| wsrep_local_recv_queue_avg | 0.000000 |

| wsrep_flow_control_paused | 0.000000 |

| wsrep_flow_control_sent | 0 |

| wsrep_flow_control_recv | 0 |

| wsrep_cert_deps_distance | 1.000000 |

| wsrep_apply_oooe | 0.000000 |

| wsrep_apply_oool | 0.000000 |

| wsrep_apply_window | 0.000000 |

| wsrep_commit_oooe | 0.000000 |

| wsrep_commit_oool | 0.000000 |

| wsrep_commit_window | 0.000000 |

| wsrep_local_state | 4 |

| wsrep_local_state_comment | Synced |

| wsrep_cert_index_size | 2 |

| wsrep_causal_reads | 0 |

| wsrep_incoming_addresses | 192.168.1.105:3306 |

| wsrep_cluster_conf_id | 1 |

| wsrep_cluster_size | 1 |

| wsrep_cluster_state_uuid | 1ab083fc-5c46-11e8-a7b7-76a002f2b5c8 |

| wsrep_cluster_status | Primary |

| wsrep_connected | ON |

| wsrep_local_bf_aborts | 0 |

| wsrep_local_index | 0 |

| wsrep_provider_name | Galera |

| wsrep_provider_vendor | Codership Oy |

| wsrep_provider_version | 2.12(r318911d) |

| wsrep_ready | ON |

| wsrep_thread_count | 2 |

+----------------------------+--------------------------------------+

41 rows in set (0.00 sec)

node2 configuration:

[root@PXC02 ~]# vi /etc/my.cnf

# My business system requires port 6033

[client]

port=6033

# My business system requires port 6033

[mysqld]

datadir=/var/lib/mysql

user=mysql

port=6033

# Path to Galera library

wsrep_provider=/usr/lib64/libgalera_smm.so

# Cluster connection URL contains the IPs of node#1, node#2 and node#3

wsrep_cluster_address=gcomm://192.168.1.105,192.168.1.106,192.168.1.107

# In order for Galera to work correctly binlog format should be ROW

binlog_format=ROW

# MyISAM storage engine has only experimental support

default_storage_engine=InnoDB

# This changes how InnoDB autoincrement locks are managed and is a requirement for Galera

innodb_autoinc_lock_mode=2

# Node #2 address

wsrep_node_address=192.168.1.106

# SST method

wsrep_sst_method=xtrabackup-v2

# Cluster name

wsrep_cluster_name=my_centos_cluster

# Authentication for SST method

wsrep_sst_auth="sstuser:s3cret"

Start the service on node2:

[root@PXC02 ~]# /etc/init.d/mysql start

ERROR! MySQL (Percona XtraDB Cluster) is not running, but lock file (/var/lock/subsys/mysql) exists

Starting MySQL (Percona XtraDB Cluster).....State transfer in progress, setting sleep higher

... SUCCESS!

Check the service on node2:

[root@PXC02 ~]# /etc/init.d/mysql status

SUCCESS! MySQL (Percona XtraDB Cluster) running (9071)

node3 configuration:

[root@PXC03 ~]# vi /etc/my.cnf

# My business system requires port 6033

[client]

port=6033

# My business system requires port 6033

[mysqld]

datadir=/var/lib/mysql

user=mysql

port=6033

# Path to Galera library

wsrep_provider=/usr/lib64/libgalera_smm.so

# Cluster connection URL contains the IPs of node#1, node#2 and node#3

wsrep_cluster_address=gcomm://192.168.1.105,192.168.1.106,192.168.1.107

# In order for Galera to work correctly binlog format should be ROW

binlog_format=ROW

# MyISAM storage engine has only experimental support

default_storage_engine=InnoDB

# This changes how InnoDB autoincrement locks are managed and is a requirement for Galera

innodb_autoinc_lock_mode=2

# Node #3 address

wsrep_node_address=192.168.1.107

# SST method

wsrep_sst_method=xtrabackup-v2

# Cluster name

wsrep_cluster_name=my_centos_cluster

# Authentication for SST method

wsrep_sst_auth="sstuser:s3cret"
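The three my.cnf files above differ only in wsrep_node_address, so they can be rendered from one template rather than edited by hand. This is a minimal sketch under that assumption: `render_pxc_cnf` is a hypothetical helper that prints the file to stdout, and every setting is copied from the configuration shown above.

```shell
#!/bin/bash
# render_pxc_cnf NODE_IP (hypothetical helper): print a my.cnf for one PXC
# node. Only wsrep_node_address varies; all other values are the ones used
# in this walkthrough.
render_pxc_cnf() {
    local node_ip="$1"
    cat <<EOF
[client]
port=6033

[mysqld]
datadir=/var/lib/mysql
user=mysql
port=6033
wsrep_provider=/usr/lib64/libgalera_smm.so
wsrep_cluster_address=gcomm://192.168.1.105,192.168.1.106,192.168.1.107
binlog_format=ROW
default_storage_engine=InnoDB
innodb_autoinc_lock_mode=2
wsrep_node_address=${node_ip}
wsrep_sst_method=xtrabackup-v2
wsrep_cluster_name=my_centos_cluster
wsrep_sst_auth="sstuser:s3cret"
EOF
}

# Example: preview the file for node3 before writing it to /etc/my.cnf
# render_pxc_cnf 192.168.1.107
```

Printing to stdout keeps the helper harmless; redirect the output to /etc/my.cnf only after checking it.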

Start the service on node3:

[root@PXC03 ~]# /etc/init.d/mysql start

ERROR! MySQL (Percona XtraDB Cluster) is not running, but lock file (/var/lock/subsys/mysql) exists

Starting MySQL (Percona XtraDB Cluster)......State transfer in progress, setting sleep higher

.... SUCCESS!

Check the service on node3:

[root@PXC03 ~]# /etc/init.d/mysql status

SUCCESS! MySQL (Percona XtraDB Cluster) running (9071)

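.................................. Note ................................

-> Apart from the nominal master, the other nodes only need to start mysql normally.

-> Logins on the other nodes use the same usernames and passwords as the master node; they are synchronized automatically, so there is no need to set them again on the other nodes.

In other words, with the setup above, privileges only need to be configured on the nominal master (node1 above). On the other nodes, once /etc/my.cnf is in place, just start mysql and the privileges are replicated over automatically.

node2 and node3 above therefore have the same login privileges as node1 (that is, you log in with the credentials set on node1).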

.....................................................................

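If mysql fails to start on node2 or node3 above, for example with an error like the following in the err log under /var/lib/mysql:

[ERROR] WSREP: gcs/src/gcs_group.cpp:long int gcs_group_handle_join_msg(gcs_

Things to check:

-> Check that the iptables firewall is stopped on the node, and that port 4567 on the nominal master is reachable (telnet).

-> Check that SELinux is disabled.

-> Delete grastate.dat on the nominal master and restart its database; also delete grastate.dat on the current node and restart its database.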

.....................................................................

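9. Finally, test

Inserts, deletes, and updates made on any node are replicated to the other servers, so the cluster now behaves as multi-master. This requires the tables to use the InnoDB engine, because Galera currently supports only InnoDB tables.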

mysql> show status like 'wsrep%';

+----------------------------+----------------------------------------------------------+

| Variable_name | Value |

+----------------------------+----------------------------------------------------------+

| wsrep_local_state_uuid | 1ab083fc-5c46-11e8-a7b7-76a002f2b5c8 |

| wsrep_protocol_version | 4 |

| wsrep_last_committed | 2 |

| wsrep_replicated | 2 |

| wsrep_replicated_bytes | 405 |

| wsrep_received | 10 |

| wsrep_received_bytes | 728 |

| wsrep_local_commits | 0 |

| wsrep_local_cert_failures | 0 |

| wsrep_local_replays | 0 |

| wsrep_local_send_queue | 0 |

| wsrep_local_send_queue_avg | 0.000000 |

| wsrep_local_recv_queue | 0 |

| wsrep_local_recv_queue_avg | 0.000000 |

| wsrep_flow_control_paused | 0.000000 |

| wsrep_flow_control_sent | 0 |

| wsrep_flow_control_recv | 0 |

| wsrep_cert_deps_distance | 0.000000 |

| wsrep_apply_oooe | 0.000000 |

| wsrep_apply_oool | 0.000000 |

| wsrep_apply_window | 0.000000 |

| wsrep_commit_oooe | 0.000000 |

| wsrep_commit_oool | 0.000000 |

| wsrep_commit_window | 0.000000 |

| wsrep_local_state | 4 |

| wsrep_local_state_comment | Synced |

| wsrep_cert_index_size | 0 |

| wsrep_causal_reads | 0 |

| wsrep_incoming_addresses | 192.168.1.105:6033,192.168.1.106:6033,192.168.1.107:6033 |

| wsrep_cluster_conf_id | 3 |

| wsrep_cluster_size | 3 |

| wsrep_cluster_state_uuid | 1ab083fc-5c46-11e8-a7b7-76a002f2b5c8 |

| wsrep_cluster_status | Primary |

| wsrep_connected | ON |

| wsrep_local_bf_aborts | 0 |

| wsrep_local_index | 0 |

| wsrep_provider_name | Galera |

| wsrep_provider_vendor | Codership Oy |

| wsrep_provider_version | 2.12(r318911d) |

| wsrep_ready | ON |

| wsrep_thread_count | 2 |

+----------------------------+----------------------------------------------------------+

41 rows in set (0.00 sec)
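Of the 41 status rows above, four are enough for a quick health check: wsrep_cluster_size, wsrep_cluster_status, wsrep_local_state_comment, and wsrep_ready. The sketch below is an illustration rather than part of the original procedure. `wsrep_healthy` is a hypothetical helper that reads name/value pairs in the tab-separated form that `mysql -N -B -e "show status like 'wsrep%'"` produces, and the expected cluster size is passed as an argument.

```shell
#!/bin/bash
# wsrep_healthy EXPECTED_SIZE (hypothetical helper): read "name<TAB>value"
# lines on stdin and return 0 only if the node reports the expected cluster
# size, a Primary component, the Synced state, and wsrep_ready=ON.
wsrep_healthy() {
    awk -v want="$1" '
        $1 == "wsrep_cluster_size"        { size = $2 }
        $1 == "wsrep_cluster_status"      { status = $2 }
        $1 == "wsrep_local_state_comment" { state = $2 }
        $1 == "wsrep_ready"               { ready = $2 }
        END {
            ok = (size == want && status == "Primary" && state == "Synced" && ready == "ON")
            exit (ok ? 0 : 1)
        }'
}

# Example (run on a node; the port matches this walkthrough):
# mysql -P 6033 -N -B -e "show status like 'wsrep%'" | wsrep_healthy 3 && echo healthy
```

Returning an exit status rather than printing text makes the check easy to call from cron or a monitoring script.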

Create a database on node3

mysql> create database wangshibo;

Query OK, 1 row affected (0.02 sec)

Then check on node1 and node2; the database has been replicated automatically

mysql> show databases;

+--------------------+

| Database |

+--------------------+

| information_schema |

| mysql |

| performance_schema |

| test |

| wangshibo |

+--------------------+

5 rows in set (0.00 sec)

On node1, create a table in the wangshibo database and insert data

mysql> use wangshibo;

Database changed

mysql> create table test(id int(5));

Query OK, 0 rows affected (0.11 sec)

mysql> insert into test values(1);

Query OK, 1 row affected (0.01 sec)

mysql> insert into test values(2);

Query OK, 1 row affected (0.02 sec)

Likewise, check on the other nodes; the data has been replicated automatically

mysql> select * from wangshibo.test;

+------+

| id |

+------+

| 1 |

| 2 |

+------+

2 rows in set (0.00 sec)

10. Restore the database (node1)

mysql> create database oa;

gunzip 20180524.sql.gz

/usr/bin/mysql -uroot -pPASSWORD oa <20180524.sql

for i in service_name1 service_name2;do /etc/init.d/$i start;done

11. Then verify that node2 and node3 have replicated the database and all of its tables from node1

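II. NFS server configuration

---------------------------------- Preface ----------------------------------

This step is about where the data is stored. To make all three nodes use the same data, the usual options are:

(1) NFS: all data lives on one NFS server. NFS can keep file locks on the server side, so simultaneous reads and writes do not corrupt files.

(2) Storage hardware that supports concurrent reads and writes to the same file: the hardware is very expensive.

(3) A distributed file system such as FastDFS or HDFS: not investigated in depth; it may require changes to the business application code.

---------------------------------- Preface ----------------------------------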

1. Install the NFS packages.

[root@nfsserver ~]# yum -y install nfs-utils rpcbind

2. Create the shared directory and a user, and give that user and group ownership of the directory (which user and group to use depends on the business system's requirements).

[root@nfsserver ~]# groupadd -g 9005 oa
[root@nfsserver ~]# useradd -u 9005 -g 9005 oa -d /home/oa -s /sbin/nologin
[root@nfsserver ~]# mkdir /data
[root@nfsserver ~]# chown -hR oa.oa /data
[root@nfsserver ~]# chmod -R 777 /data (or 755)

3. Create the exports file; rw means read-write.

[root@nfsserver /]# cat /etc/exports
/data 192.168.1.105(rw,sync,all_squash,anonuid=9005,anongid=9005)
/data 192.168.1.106(rw,sync,all_squash,anonuid=9005,anongid=9005)
/data 192.168.1.107(rw,sync,all_squash,anonuid=9005,anongid=9005)

4. Start the services and enable them at boot. rpcbind must be started before nfs.

[root@nfsserver /]# /etc/init.d/rpcbind start
Starting rpcbind: [ OK ]
[root@nfsserver /]# rpcinfo -p localhost
[root@nfsserver /]# netstat -lnt
[root@nfsserver /]# chkconfig rpcbind on
[root@nfsserver /]# chkconfig --list | grep rpcbind
[root@nfsserver /]# /etc/init.d/nfs start
Starting NFS services: [ OK ]
Starting NFS mountd: [ OK ]
Starting NFS daemon: [ OK ]
Starting RPC idmapd: [ OK ]
[root@nfsserver /]# rpcinfo -p localhost
(many more ports are now listed)
[root@nfsserver /]# chkconfig nfs on
[root@nfsserver /]# chkconfig --list | grep nfs

5. Disable the firewall and SELinux.

[root@nfsserver /]# service iptables stop
iptables: Setting chains to policy ACCEPT: filter [ OK ]
iptables: Flushing firewall rules: [ OK ]
iptables: Unloading modules: [ OK ]
[root@nfsserver /]# chkconfig iptables off

The steps for disabling SELinux are omitted here.
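The /etc/exports entries in step 3 repeat the same options for each client, so they can be generated from a list of client IPs. A minimal sketch, assuming the same options for every client: `gen_exports` is a hypothetical helper that only prints lines and does not touch /etc/exports.

```shell
#!/bin/bash
# gen_exports DIR CLIENT_IP... (hypothetical helper): print one /etc/exports
# line per client, using the export options from step 3.
gen_exports() {
    local dir="$1"
    shift
    local opts="rw,sync,all_squash,anonuid=9005,anongid=9005"
    local ip
    for ip in "$@"; do
        printf '%s %s(%s)\n' "$dir" "$ip" "$opts"
    done
}

# Example: the three business nodes from this walkthrough
# gen_exports /data 192.168.1.105 192.168.1.106 192.168.1.107
```

Adding a fourth node later then only means appending one more IP to the argument list.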

III. NFS client configuration (node1, node2, node3)

1. Check what the NFS server exports (node1, node2, node3)

[root@oaserver1 ~]# yum -y install nfs-utils rpcbind (the showmount command needs these packages)
[root@oaserver1 /]# /etc/init.d/rpcbind start
[root@oaserver1 /]# rpcinfo -p localhost
[root@oaserver1 /]# netstat -lnt
[root@oaserver1 /]# chkconfig rpcbind on
[root@oaserver1 /]# chkconfig --list | grep rpcbind
[root@PXC01 ~]# showmount -e 192.168.1.103
Export list for 192.168.1.103:
/data 192.168.1.107,192.168.1.106,192.168.1.105

2. Create the mount point and mount the share (node1, node2, node3)

[root@oaserver1 ~]# mkdir /data
[root@oaserver1 ~]# mount -t nfs 192.168.1.103:/data /data
[root@oaserver1 ~]# df -h
Filesystem Size Used Avail Use% Mounted on
/dev/mapper/VolGroup-lv_root 18G 3.6G 13G 22% /
tmpfs 491M 4.0K 491M 1% /dev/shm
/dev/sda1 477M 28M 425M 7% /boot
192.168.1.103:/data 14G 2.1G 11G 16% /data
[root@oaserver1 ~]# cd /
[root@oaserver1 /]# ls -lhd data*
drwxrwxrwx 2 oa oa 4.0K May 24 2018 data

3. Mount automatically at boot (node1, node2, node3)

[root@oaserver1 data]# vi /etc/fstab
192.168.1.103:/data /data nfs defaults 0 0

4. Move the data directory under /data

The main steps are: stop the related services, move the data to /data, and then create a symbolic link from the old location.

5. Reboot the servers and verify

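IV. LVS load balancing configuration (single load balancer)

1. DS (director server) configuration

Install the required dependencies

yum install -y wget make kernel-devel gcc gcc-c++ libnl* libpopt* popt-static

Create a symbolic link so that the ipvsadm build can find the kernel sources

ln -s /usr/src/kernels/2.6.32-696.30.1.el6.x86_64/ /usr/src/linux

Download and install ipvsadm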

wget http://www.linuxvirtualserver.org/software/kernel-2.6/ipvsadm-1.26.tar.gz

tar zxvf ipvsadm-1.26.tar.gz

cd ipvsadm-1.26

make && make install

ipvsadm

Create the file /etc/init.d/lvsdr and make it executable:

#!/bin/sh

VIP=192.168.1.100

RIP1=192.168.1.105

RIP2=192.168.1.106

RIP3=192.168.1.107

. /etc/rc.d/init.d/functions

case "$1" in

start)

echo " start LVS of DirectorServer"

# set the Virtual IP Address

ifconfig eth0:0 $VIP/24

#/sbin/route add -host $VIP dev eth0:0

#Clear IPVS table

/sbin/ipvsadm -C

#set LVS

/sbin/ipvsadm -A -t $VIP:80 -s sh

/sbin/ipvsadm -a -t $VIP:80 -r $RIP1:80 -g

/sbin/ipvsadm -a -t $VIP:80 -r $RIP2:80 -g

/sbin/ipvsadm -a -t $VIP:80 -r $RIP3:80 -g

/sbin/ipvsadm -A -t $VIP:25 -s sh

/sbin/ipvsadm -a -t $VIP:25 -r $RIP1:25 -g

/sbin/ipvsadm -a -t $VIP:25 -r $RIP2:25 -g

/sbin/ipvsadm -a -t $VIP:25 -r $RIP3:25 -g

/sbin/ipvsadm -A -t $VIP:110 -s sh

/sbin/ipvsadm -a -t $VIP:110 -r $RIP1:110 -g

/sbin/ipvsadm -a -t $VIP:110 -r $RIP2:110 -g

/sbin/ipvsadm -a -t $VIP:110 -r $RIP3:110 -g

/sbin/ipvsadm -A -t $VIP:143 -s sh

/sbin/ipvsadm -a -t $VIP:143 -r $RIP1:143 -g

/sbin/ipvsadm -a -t $VIP:143 -r $RIP2:143 -g

/sbin/ipvsadm -a -t $VIP:143 -r $RIP3:143 -g

#/sbin/ipvsadm -a -t $VIP:80 -r $RIP3:80 -g

#Run LVS

/sbin/ipvsadm

#end

;;

stop)

echo "close LVS Directorserver"

/sbin/ipvsadm -C

;;

*)

echo "Usage: $0 {start|stop}"

exit 1

esac
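The sixteen ipvsadm lines in the start) branch follow one pattern: one `-A` line per port, then one `-a` line per real server. A minimal sketch of that pattern as nested loops follows. `lvs_rules` is a hypothetical helper that only prints the commands (a dry run), with the port list 80, 25, 110, 143 and the `sh` scheduler taken from the script above.

```shell
#!/bin/bash
# lvs_rules VIP RIP... (hypothetical helper): print the ipvsadm commands for
# every service port and real server, in DR mode (-g) with the sh scheduler.
# Nothing is executed; pipe the output to sh to apply it.
lvs_rules() {
    local vip="$1"
    shift
    local ports="80 25 110 143"
    local port rip
    for port in $ports; do
        # One virtual service per port
        echo "/sbin/ipvsadm -A -t ${vip}:${port} -s sh"
        for rip in "$@"; do
            # One real server entry per backend
            echo "/sbin/ipvsadm -a -t ${vip}:${port} -r ${rip}:${port} -g"
        done
    done
}

# Example: preview the rules for this walkthrough's addresses
# lvs_rules 192.168.1.100 192.168.1.105 192.168.1.106 192.168.1.107
```

Printing instead of executing lets the rule set be reviewed or diffed before it is applied on the director.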

[root@lvsserver ~]# chmod +x /etc/init.d/lvsdr

[root@lvsserver ~]# /etc/init.d/lvsdr start    # start the service

[root@lvsserver ~]# vi /etc/rc.local    # add the line below so it runs at boot

/etc/init.d/lvsdr start

[root@localhost ~]# ifconfig

eth0 Link encap:Ethernet HWaddr 00:50:56:8D:19:13

inet addr:192.168.1.121 Bcast:192.168.1.255 Mask:255.255.255.0

inet6 addr: fe80::250:56ff:fe8d:1913/64 Scope:Link

UP BROADCAST RUNNING MULTICAST MTU:1500 Metric:1

RX packets:10420500 errors:0 dropped:0 overruns:0 frame:0

TX packets:421628 errors:0 dropped:0 overruns:0 carrier:0

collisions:0 txqueuelen:1000

RX bytes:1046805128 (998.3 MiB) TX bytes:101152496 (96.4 MiB)

eth0:0 Link encap:Ethernet HWaddr 00:50:56:8D:19:13

inet addr:192.168.1.100 Bcast:192.168.1.255 Mask:255.255.255.0

UP BROADCAST RUNNING MULTICAST MTU:1500 Metric:1

lo Link encap:Local Loopback

inet addr:127.0.0.1 Mask:255.0.0.0

inet6 addr: ::1/128 Scope:Host

UP LOOPBACK RUNNING MTU:65536 Metric:1

RX packets:164717347 errors:0 dropped:0 overruns:0 frame:0

TX packets:164717347 errors:0 dropped:0 overruns:0 carrier:0

collisions:0 txqueuelen:0

RX bytes:28297589130 (26.3 GiB) TX bytes:28297589130 (26.3 GiB)

2. RS (real server) configuration (node1, node2, node3)

[root@oaserver1 ~]# vi /etc/init.d/realserver

#!/bin/sh

VIP=192.168.1.100

. /etc/rc.d/init.d/functions

case "$1" in

start)

echo " start LVS of RealServer"

echo 2 > /proc/sys/net/ipv4/conf/all/arp_announce

echo 2 > /proc/sys/net/ipv4/conf/eth0/arp_announce

echo 1 > /proc/sys/net/ipv4/conf/all/arp_ignore

echo 1 > /proc/sys/net/ipv4/conf/eth0/arp_ignore

service network restart

ifconfig lo:0 $VIP netmask 255.255.255.255 broadcast $VIP

route add -host $VIP dev lo:0

#end

;;

stop)

echo "close LVS Realserver"

service network restart

;;

*)

echo "Usage: $0 {start|stop}"

exit 1

esac
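The realserver script sets arp_announce and arp_ignore by writing to /proc each time it starts. The same four kernel settings have equivalent sysctl keys, which could be kept in /etc/sysctl.conf instead. This is a sketch of that alternative, not something the original procedure does: `rs_arp_sysctl` is a hypothetical helper that prints the lines, with the interface name defaulting to eth0 as in the script.

```shell
#!/bin/bash
# rs_arp_sysctl [IFACE] (hypothetical helper): print sysctl.conf lines that
# correspond to the four /proc writes in the realserver script
# (arp_announce=2 and arp_ignore=1 for "all" and for the given interface).
rs_arp_sysctl() {
    local iface="${1:-eth0}"
    local scope
    for scope in all "$iface"; do
        echo "net.ipv4.conf.${scope}.arp_announce = 2"
        echo "net.ipv4.conf.${scope}.arp_ignore = 1"
    done
}

# Example: preview the lines, then append them to /etc/sysctl.conf by hand
# rs_arp_sysctl eth0
```

Each key maps directly to a path under /proc/sys, for example net.ipv4.conf.all.arp_ignore is /proc/sys/net/ipv4/conf/all/arp_ignore.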

[root@oaserver1 ~]# chmod +x /etc/init.d/realserver

[root@oaserver1 ~]# /etc/init.d/realserver start

[root@oaserver1 ~]# vi /etc/rc.local

/etc/init.d/realserver start

[root@oaserver1 ~]# ifconfig

eth0 Link encap:Ethernet HWaddr 00:0C:29:DC:B1:39

inet addr:192.168.1.105 Bcast:192.168.1.255 Mask:255.255.255.0

inet6 addr: fe80::20c:29ff:fedc:b139/64 Scope:Link

UP BROADCAST RUNNING MULTICAST MTU:1500 Metric:1

RX packets:816173 errors:0 dropped:0 overruns:0 frame:0

TX packets:399007 errors:0 dropped:0 overruns:0 carrier:0

collisions:0 txqueuelen:1000

RX bytes:534582215 (509.8 MiB) TX bytes:98167814 (93.6 MiB)

lo Link encap:Local Loopback

inet addr:127.0.0.1 Mask:255.0.0.0

inet6 addr: ::1/128 Scope:Host

UP LOOPBACK RUNNING MTU:65536 Metric:1

RX packets:43283 errors:0 dropped:0 overruns:0 frame:0

TX packets:43283 errors:0 dropped:0 overruns:0 carrier:0

collisions:0 txqueuelen:0

RX bytes:8895319 (8.4 MiB) TX bytes:8895319 (8.4 MiB)

lo:0 Link encap:Local Loopback

inet addr:192.168.1.100 Mask:255.255.255.255

UP LOOPBACK RUNNING MTU:65536 Metric:1

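3. Reboot the servers and test. I replaced the logo on one of the servers and then refreshed the page; if the logo changes, load balancing is working. The current state can be inspected with the ipvsadm command.

V. LVS + keepalived load balancing configuration (two load balancers for high availability)

The two LVS load balancers are made highly available with keepalived. keepalived also performs health checks and automatically removes faulty RS nodes. Without this, if one OA server goes down, LVS keeps forwarding requests to it and those requests fail.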

1. Environment

Keepalived1 + lvs1 (Director1): 192.168.1.121

Keepalived2 + lvs2 (Director2): 192.168.1.122

Real server1: 192.168.1.105

Real server2: 192.168.1.106

Real server3: 192.168.1.107

VIP: 192.168.1.100

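2. Install the required dependencies

yum install -y wget make kernel-devel gcc gcc-c++ libnl* libpopt* popt-static

Create a symbolic link so that the ipvsadm build can find the kernel sources

ln -s /usr/src/kernels/2.6.32-696.30.1.el6.x86_64/ /usr/src/linux

3. Install on both LVS + keepalived nodes

yum install ipvsadm keepalived -y

ipvsadm can also be compiled from source (I have not tried that in this setup and do not recommend it)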

wget http://www.linuxvirtualserver.org/software/kernel-2.6/ipvsadm-1.26.tar.gz

tar zxvf ipvsadm-1.26.tar.gz

cd ipvsadm-1.26

make && make install

ipvsadm

4. Configuration script for the three real servers (node1, node2, node3):

[root@oaserver1 ~]# vi /etc/init.d/realserver

#!/bin/sh

VIP=192.168.1.100

. /etc/rc.d/init.d/functions

case "$1" in

start)

echo " start LVS of RealServer"

echo 2 > /proc/sys/net/ipv4/conf/all/arp_announce

echo 2 > /proc/sys/net/ipv4/conf/eth0/arp_announce

echo 1 > /proc/sys/net/ipv4/conf/all/arp_ignore

echo 1 > /proc/sys/net/ipv4/conf/eth0/arp_ignore

service network restart

ifconfig lo:0 $VIP netmask 255.255.255.255 broadcast $VIP

route add -host $VIP dev lo:0

#end

;;

stop)

echo "close LVS Realserver"

service network restart

;;

*)

echo "Usage: $0 {start|stop}"

exit 1

esac

[root@oaserver1 ~]# chmod +x /etc/init.d/realserver

[root@oaserver1 ~]# /etc/init.d/realserver start

[root@oaserver1 ~]# vi /etc/rc.local

/etc/init.d/realserver start

[root@oaserver1 ~]# ifconfig

eth0 Link encap:Ethernet HWaddr 00:0C:29:07:D5:96

inet addr:192.168.1.105 Bcast:192.168.1.255 Mask:255.255.255.0

inet6 addr: fe80::20c:29ff:fe07:d596/64 Scope:Link

UP BROADCAST RUNNING MULTICAST MTU:1500 Metric:1

RX packets:1390 errors:0 dropped:0 overruns:0 frame:0

TX packets:1459 errors:0 dropped:0 overruns:0 carrier:0

collisions:0 txqueuelen:1000

RX bytes:334419 (326.5 KiB) TX bytes:537109 (524.5 KiB)

lo Link encap:Local Loopback

inet addr:127.0.0.1 Mask:255.0.0.0

inet6 addr: ::1/128 Scope:Host

UP LOOPBACK RUNNING MTU:65536 Metric:1

RX packets:2633 errors:0 dropped:0 overruns:0 frame:0

TX packets:2633 errors:0 dropped:0 overruns:0 carrier:0

collisions:0 txqueuelen:0

RX bytes:539131 (526.4 KiB) TX bytes:539131 (526.4 KiB)

lo:0 Link encap:Local Loopback

inet addr:192.168.1.100 Mask:255.255.255.255

UP LOOPBACK RUNNING MTU:65536 Metric:1

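5. LVS + keepalived node configuration. It appears that email notification can also be configured for when a server has a problem.

Master node (MASTER) configuration file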

vi /etc/keepalived/keepalived.conf

vrrp_instance VI_1 {

state MASTER

interface eth0

virtual_router_id 51

priority 100

advert_int 1

authentication {

auth_type PASS

auth_pass 1111

}

virtual_ipaddress {

192.168.1.100

}

}

virtual_server 192.168.1.100 80 {

delay_loop 6

lb_algo sh

lb_kind DR

persistence_timeout 0

protocol TCP

real_server 192.168.1.105 80 {

weight 1

TCP_CHECK {

connect_timeout 10

nb_get_retry 3

delay_before_retry 3

connect_port 80

}

}

real_server 192.168.1.106 80 {

weight 1

TCP_CHECK {

connect_timeout 10

nb_get_retry 3

delay_before_retry 3

connect_port 80

}

}

real_server 192.168.1.107 80 {

weight 1

TCP_CHECK {

connect_timeout 10

nb_get_retry 3

delay_before_retry 3

connect_port 80

}

}

}

virtual_server 192.168.1.100 25 {

delay_loop 6

lb_algo sh

lb_kind DR

persistence_timeout 0

protocol TCP

real_server 192.168.1.105 25 {

weight 1

TCP_CHECK {

connect_timeout 10

nb_get_retry 3

delay_before_retry 3

connect_port 25

}

}

real_server 192.168.1.106 25 {

weight 1

TCP_CHECK {

connect_timeout 10

nb_get_retry 3

delay_before_retry 3

connect_port 25

}

}

real_server 192.168.1.107 25 {

weight 1

TCP_CHECK {

connect_timeout 10

nb_get_retry 3

delay_before_retry 3

connect_port 25

}

}

}

virtual_server 192.168.1.100 110 {

delay_loop 6

lb_algo sh

lb_kind DR

persistence_timeout 0

protocol TCP

real_server 192.168.1.105 110 {

weight 1

TCP_CHECK {

connect_timeout 10

nb_get_retry 3

delay_before_retry 3

connect_port 110

}

}

real_server 192.168.1.106 110 {

weight 1

TCP_CHECK {

connect_timeout 10

nb_get_retry 3

delay_before_retry 3

connect_port 110

}

}

real_server 192.168.1.107 110 {

weight 1

TCP_CHECK {

connect_timeout 10

nb_get_retry 3

delay_before_retry 3

connect_port 110

}

}

}

virtual_server 192.168.1.100 143 {

delay_loop 6

lb_algo sh

lb_kind DR

persistence_timeout 0

protocol TCP

real_server 192.168.1.105 143 {

weight 1

TCP_CHECK {

connect_timeout 10

nb_get_retry 3

delay_before_retry 3

connect_port 143

}

}

real_server 192.168.1.106 143 {

weight 1

TCP_CHECK {

connect_timeout 10

nb_get_retry 3

delay_before_retry 3

connect_port 143

}

}

real_server 192.168.1.107 143 {

weight 1

TCP_CHECK {

connect_timeout 10

nb_get_retry 3

delay_before_retry 3

connect_port 143

}

}

}
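The four virtual_server blocks above are identical apart from the port number, so they can be produced from one template. A minimal sketch follows: `virtual_server_block` is a hypothetical helper that prints one block to stdout with the same settings as the configuration above (sh scheduler, DR mode, TCP_CHECK on the service port). The vrrp_instance section still has to be written by hand.

```shell
#!/bin/bash
# virtual_server_block VIP PORT RIP... (hypothetical helper): print one
# keepalived virtual_server block in the same shape as the blocks above.
virtual_server_block() {
    local vip="$1" port="$2"
    shift 2
    local rip
    cat <<EOF
virtual_server $vip $port {
    delay_loop 6
    lb_algo sh
    lb_kind DR
    persistence_timeout 0
    protocol TCP
EOF
    for rip in "$@"; do
        cat <<EOF
    real_server $rip $port {
        weight 1
        TCP_CHECK {
            connect_timeout 10
            nb_get_retry 3
            delay_before_retry 3
            connect_port $port
        }
    }
EOF
    done
    echo "}"
}

# Example: print all four services used in this walkthrough
# for p in 80 25 110 143; do
#     virtual_server_block 192.168.1.100 "$p" 192.168.1.105 192.168.1.106 192.168.1.107
# done
```

Generating the blocks keeps the master and backup files identical apart from the state and priority lines.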

Backup node (BACKUP) configuration file

Copy the master node's keepalived.conf and change the following:

state MASTER -> state BACKUP

priority 100 -> priority 90

Run the following command on both keepalived nodes to enable forwarding:

# echo 1 > /proc/sys/net/ipv4/ip_forward

6. Disable the firewall on both nodes

/etc/init.d/iptables stop

chkconfig iptables off

7. Start keepalived on the two nodes in order

Start keepalived on the master first, then on the backup

service keepalived start

chkconfig keepalived on

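8. Test failover

VI. Maintenance notes

1. When powering on or doing maintenance, please follow this boot order (wait about 2-3 minutes between steps):

(1) 103, the NFS server

(2) 105, business server

(3) 106 and 107, business servers

(4) 121, load balancer

(5) 122, load balancer

Note: if 103 is not started first, the OA servers cannot mount the storage. If the OA server 105 is not started first, the mysql service on 106 and 107 will not start.

2. Normal shutdown or reboot order

(1) 121 and 122, load balancers

(2) 106 and 107, business servers

(3) 105, business server

(4) 103, the NFS server

3. If one of the three business servers hangs for a long time during a normal shutdown or reboot, simply power it off for the time being.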

--------------------------------------------------------------------------------

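Appendix:

LVS scheduling algorithms fall into two classes, static and dynamic.

1. Static algorithms (4): these schedule purely by the algorithm, without considering the real servers' actual connections or load.

①. RR, Round Robin: the director hands incoming requests to the real servers in turn. It treats every server equally, regardless of its current connection count or system load.

②. WRR, Weighted Round Robin: the director schedules requests according to each real server's processing capacity, so more capable servers handle more traffic. The director can query the real servers' load automatically and adjust their weights dynamically.

③. DH, Destination Hashing: uses the request's destination IP address as the hash key to look up a server in a statically assigned hash table. If that server is available and not overloaded, the request is sent to it; otherwise nothing is returned.

④. SH, Source Hashing: uses the request's source IP address as the hash key to look up a server in a statically assigned hash table. If that server is available and not overloaded, the request is sent to it; otherwise nothing is returned.

2. Dynamic algorithms (6): the director assigns requests according to the real servers' actual connection state.

①. LC, Least Connections: the director sends each request to the server with the fewest established connections. When the real servers have similar performance, this balances load well.

②. WLC, Weighted Least Connections (the default): when server performance varies widely, the director uses weights to improve balancing, so servers with higher weights carry a larger share of the active connections. The director can query the real servers' load automatically and adjust their weights dynamically.

③. SED, Shortest Expected Delay: an improvement on WLC. Overhead = (ACTIVE+1)*256/weight. Inactive connections are no longer counted; the number of active connections plus one is used, and the server with the smallest overhead takes the next request. The +1 makes the weight count even when a server has no active connections. Drawback: when one weight is much larger than the others, the remaining servers can stay idle with no connections at all.

④. NQ, Never Queue: no queueing. If a real server has zero connections, the request goes straight to it without running the SED calculation, which guarantees that no host sits idle. It builds on SED: whatever the computed overhead, the next request goes to an idle server if one exists. NQ and SED look only at active connections. For UDP services such as DNS, inactive connections do not matter, whereas for services that keep connections open, such as httpd, the load that inactive connections put on the server does need to be considered.

⑤. LBLC, Locality-Based Least Connections: load balancing by destination IP address, currently used mainly for cache clusters. The algorithm finds the server most recently used for the request's destination IP address. If that server is available and not overloaded, the request is sent to it. If no such server exists, or it is overloaded while another server is at half load, an available server is chosen by least connections and the request is sent there.

⑥. LBLCR, Locality-Based Least Connections with Replication: also load balancing by destination IP address, currently used mainly for cache clusters. It differs from LBLC in that it maintains a mapping from a destination IP address to a set of servers, whereas LBLC maps a destination IP address to a single server. The algorithm finds the server set for the request's destination IP address and picks a server from the set by least connections. If that server is not overloaded, the request is sent to it. If it is overloaded, a server is picked from the whole cluster by least connections, added to the set, and the request is sent to it. In addition, when the set has not been modified for some time, the busiest server is removed from it to reduce the degree of replication.

3. Normal keepalived failover entries in /var/log/messages

The following shows the other server going down and the current server taking over automatically:

Aug 4 15:15:47 localhost Keepalived_vrrp[1306]: VRRP_Instance(VI_1) Transition to MASTER STATE
Aug 4 15:15:48 localhost Keepalived_vrrp[1306]: VRRP_Instance(VI_1) Entering MASTER STATE
Aug 4 15:15:48 localhost Keepalived_vrrp[1306]: VRRP_Instance(VI_1) setting protocol VIPs.
Aug 4 15:15:48 localhost Keepalived_healthcheckers[1303]: Netlink reflector reports IP 192.168.1.100 added
Aug 4 15:15:48 localhost Keepalived_vrrp[1306]: VRRP_Instance(VI_1) Sending gratuitous ARPs on eth0 for 192.168.1.100
Aug 4 15:15:53 localhost Keepalived_vrrp[1306]: VRRP_Instance(VI_1) Sending gratuitous ARPs on eth0 for 192.168.1.100

The following shows the other server recovering and the current server releasing the resources automatically:

Aug 4 15:17:25 localhost Keepalived_vrrp[1306]: VRRP_Instance(VI_1) Received higher prio advert
Aug 4 15:17:25 localhost Keepalived_vrrp[1306]: VRRP_Instance(VI_1) Entering BACKUP STATE
Aug 4 15:17:25 localhost Keepalived_vrrp[1306]: VRRP_Instance(VI_1) removing protocol VIPs.
Aug 4 15:17:25 localhost Keepalived_healthcheckers[1303]: Netlink reflector reports IP 192.168.1.100 removed
Aug 4 15:17:34 localhost Keepalived_healthcheckers[1303]: TCP connection to [192.168.1.105]:110 success.
Aug 4 15:17:34 localhost Keepalived_healthcheckers[1303]: Adding service [192.168.1.105]:110 to VS [192.168.1.100]:110
Aug 4 15:17:35 localhost Keepalived_healthcheckers[1303]: TCP connection to [192.168.1.105]:143 success.
Aug 4 15:17:35 localhost Keepalived_healthcheckers[1303]: Adding service [192.168.1.105]:143 to VS [192.168.1.100]:143
Aug 4 15:18:04 localhost Keepalived_healthcheckers[1303]: TCP connection to [192.168.1.107]:110 success.
Aug 4 15:18:04 localhost Keepalived_healthcheckers[1303]: Adding service [192.168.1.107]:110 to VS [192.168.1.100]:110
Aug 4 15:18:05 localhost Keepalived_healthcheckers[1303]: TCP connection to [192.168.1.107]:143 success.
Aug 4 15:18:05 localhost Keepalived_healthcheckers[1303]: Adding service [192.168.1.107]:143 to VS [192.168.1.100]:143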
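To make the SED formula from the scheduling appendix concrete, Overhead = (ACTIVE+1)*256/weight, here is a small worked sketch. It only illustrates the arithmetic and is not how the kernel implements the scheduler. `sed_pick` is a hypothetical helper, the input format (name, active connections, weight per line) is an assumption, weights must be positive, and ties go to the first server listed.

```shell
#!/bin/bash
# sed_pick (hypothetical helper): read "name active_connections weight" lines
# on stdin and print the name of the server with the smallest SED overhead,
# computed as (active + 1) * 256 / weight.
sed_pick() {
    awk '
        {
            overhead = ($2 + 1) * 256 / $3
            # Keep the first server seen with the lowest overhead
            if (NR == 1 || overhead < best) { best = overhead; name = $1 }
        }
        END { if (NR > 0) print name }'
}

# Example: rs1 -> 11*256/1 = 2816, rs2 -> 16*256/2 = 2048, rs3 -> 4*256/1 = 1024
# printf 'rs1 10 1\nrs2 15 2\nrs3 3 1\n' | sed_pick
```

With all servers idle, the overhead reduces to 256/weight, so the highest-weight server is always chosen first, which is the drawback the appendix mentions.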